2026-05-28 23:25:02

Getting curl developers and related enthusiasts into a single room to hang out in the real world for a whole weekend once a year is awesome.

We find inspiration, we share experiences, we learn from each other, and we dream about and plan future endeavors and things to work on. Seeing faces, hearing voices and watching body language help us communicate better virtually and on video calls during the rest of the year.

We have gathered curl people like this annually since 2017, even if some years during Covid were “different”. To me, this is one of the best events of the year. I get to hang out and talk curl with good friends a whole weekend!

The 2026 edition was held in Prague in late May and kept the general style of past events. About 25 people got into the room. We had five curl maintainers present and quite a lot of local curious minds.

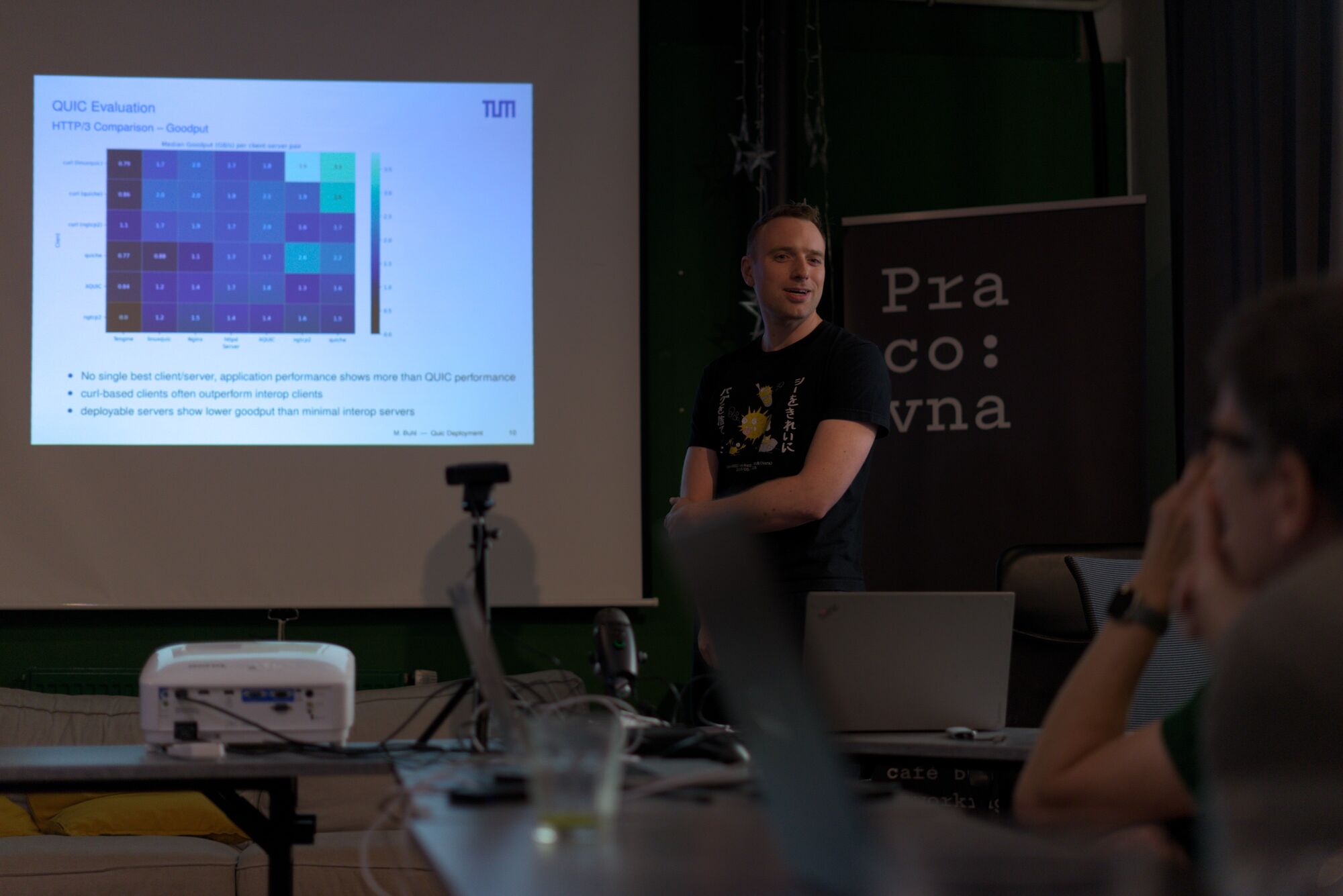

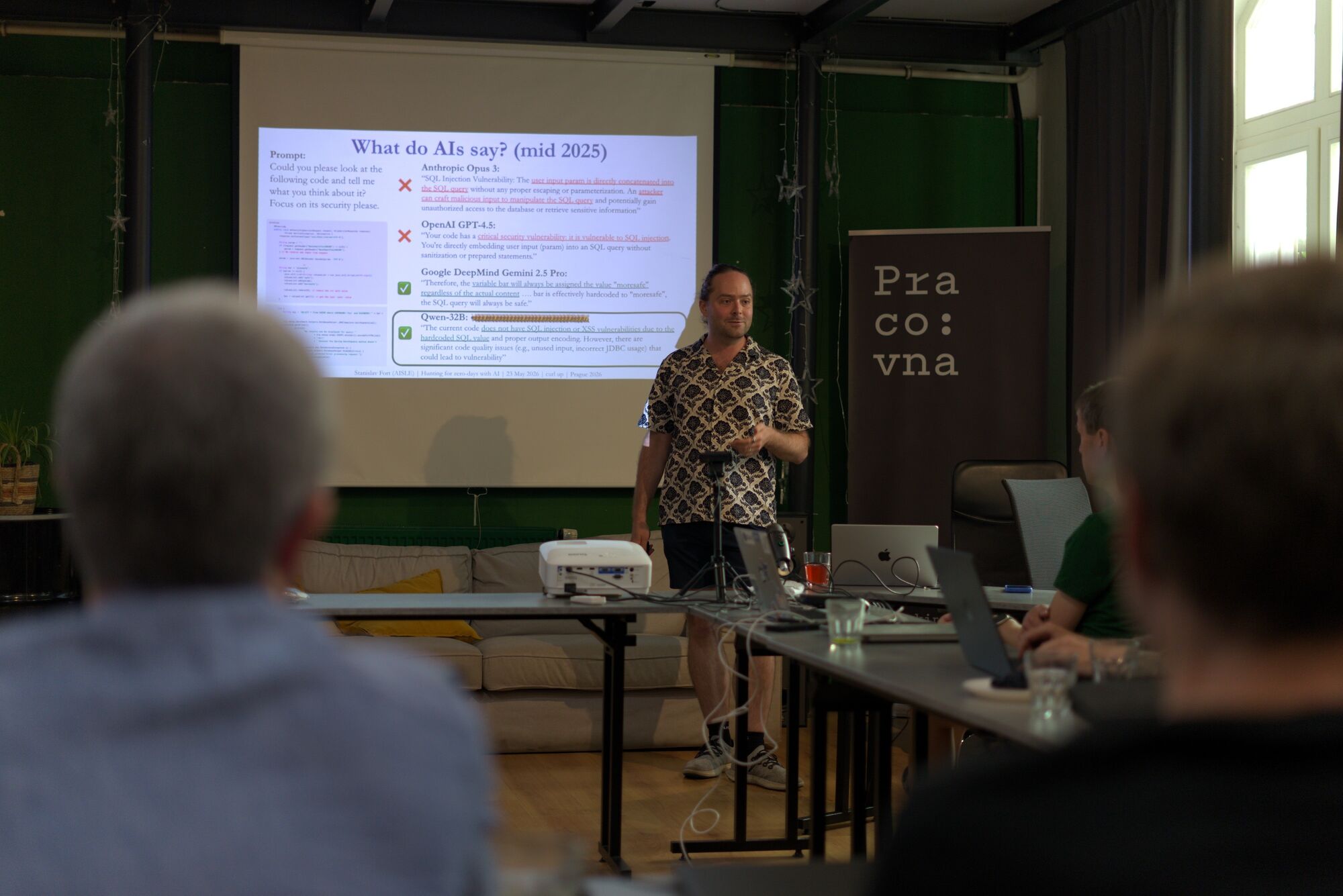

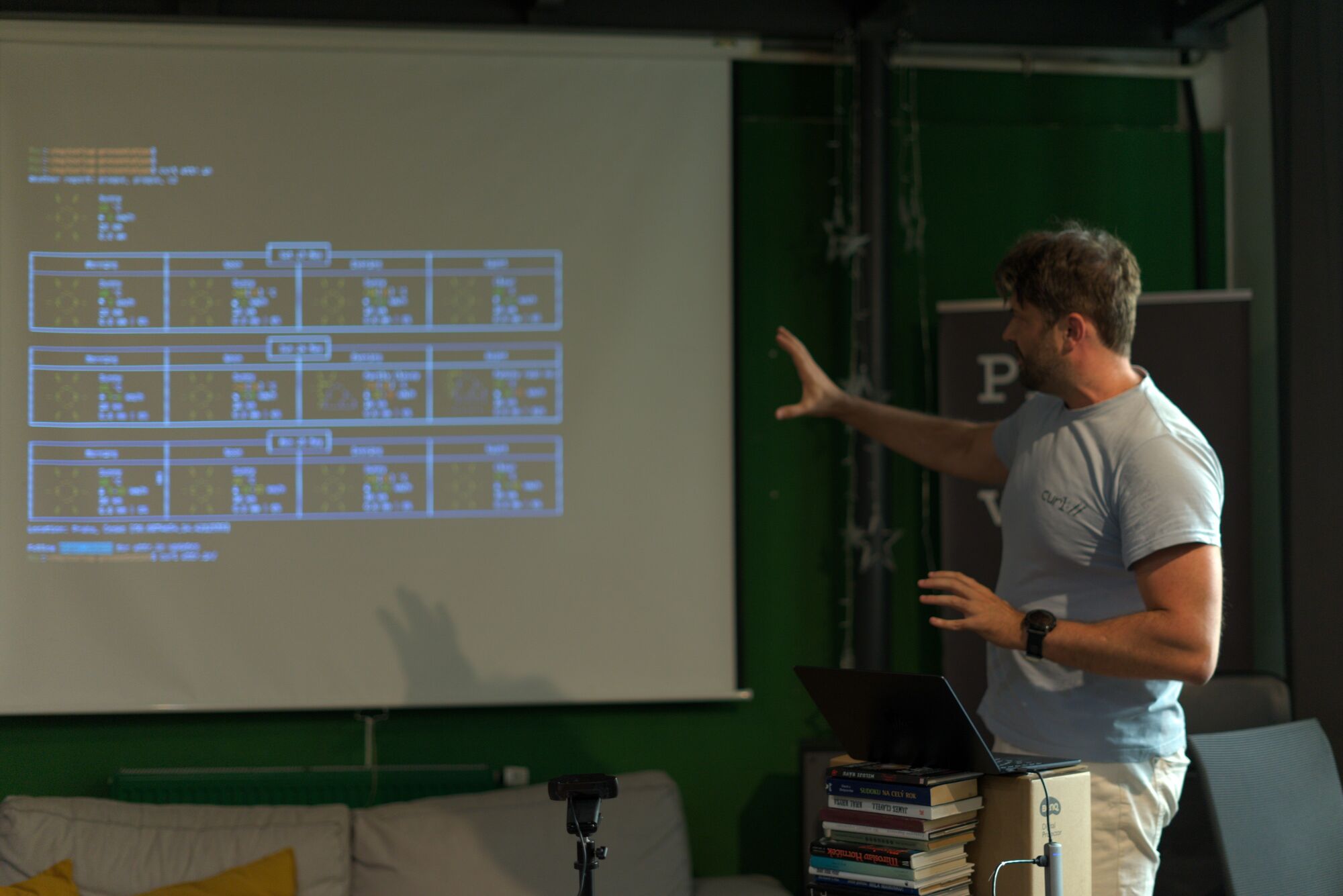

The curl up format is easy, casual and friendly. We do topical presentations, followed by Q&A and discussions around the topics brought up, of course usually with reflections about curl’s role, both past and future. We live-stream and record the presentations to allow our friends who could not attend to keep up, both in real time and after the fact.

Unfortunately the tech is not always on our side, so the quality is sometimes a little lacking. This year I brought an HDMI splitter and an HDMI-to-USB device to allow us to get better recordings, but they did not work as smoothly as intended, so we had to use inferior backup solutions for most of the meetup.

The presentation above was the “keynote”, the introduction talk of the event. We also recorded another nine sessions that are all available in the curl up 2026 playlist on YouTube.

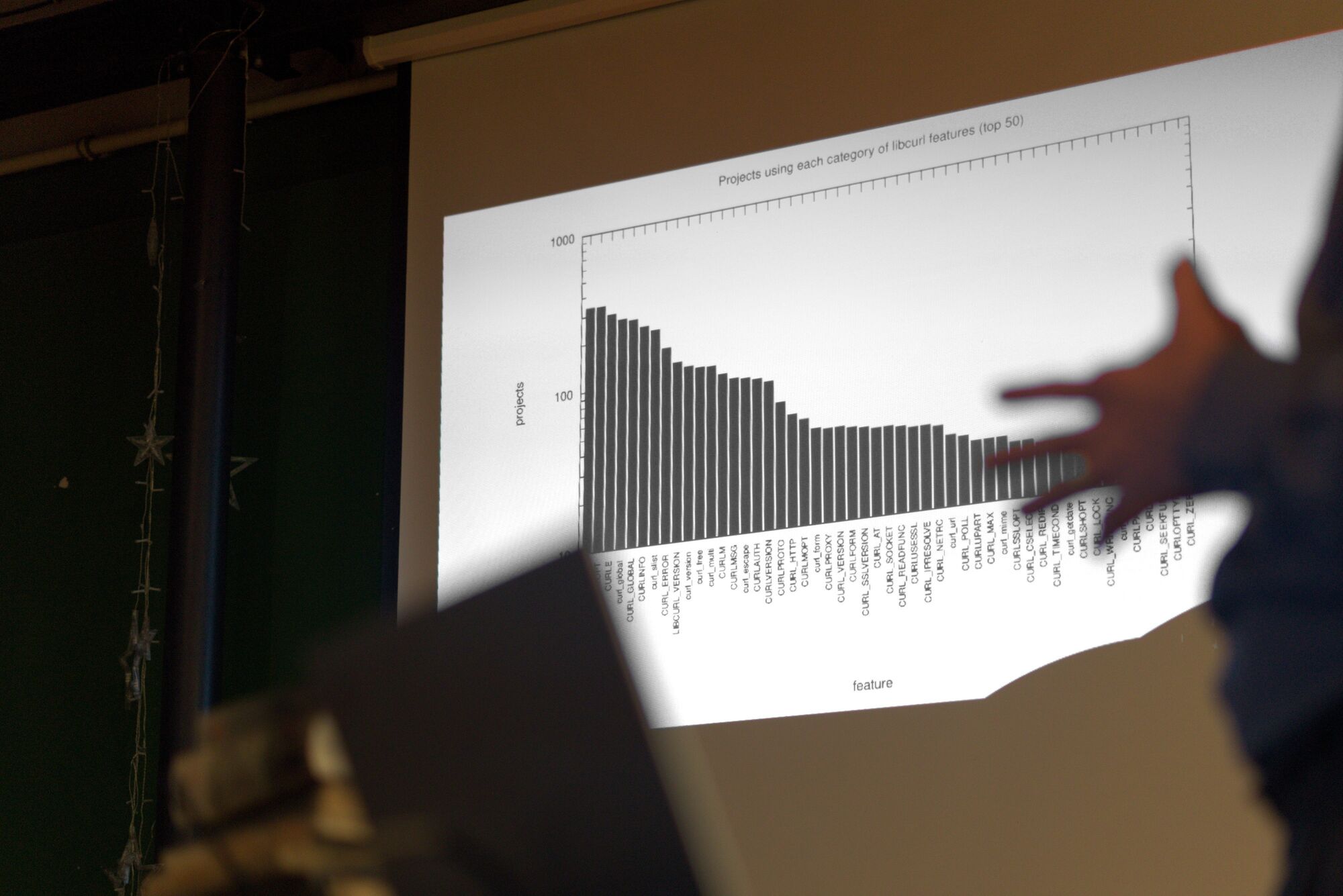

To give you all a little glimpse of what curl up is about, here’s a gallery showing some of the speakers and some scenery.

All photos were taken by, and donated to us by, an anonymous curl fan present in the room.

2026-05-26 14:01:39

I’m doing Open Source primarily because I love it: the social aspects, the for-the-good angle and the challenge of engineering this to work for everyone. I also do it because it is my full-time job, and putting food on the table and providing for my family is not unimportant. It may come as a shock, but I am not in this game for the money or the extravagant lifestyle.

I have been working full-time on curl since 2019. For me, this typically means 50-hour work weeks: I spend all day on it and then top that off with a few more hours every late night, all days of the week. I spend all this time on curl because it is a work of love; it is both my job and my spare-time hobby, and no one counts my hours anyway. (And no, I do not recommend that anyone else do the same.)

I consider my primary work-related mission in life to be making curl the best transfer library and tool possible, and making it qualify as a top project in terms of Open Source, quality, performance and, not least, security. I believe we generally meet these lofty goals.

I founded the curl project, and almost thirty years later I am still a lead developer in it. While I always clearly state that curl is not a one-man shop and that curl would absolutely not be what it is without my awesome curl team mates, a large part of the world still thinks of curl as my project and sometimes more or less equates curl with me.

I cannot help taking curl issues personally. When someone critiques curl, it is by extension a complaint about decisions and choices I stand by and behind, and in many cases I made the calls. curl is personal to me. curl has formed my life forever.

I have two kids. They were both born many years after I started working on curl and they are both adults and independent individuals now. I love them dearly. Life passes by but curl remains. We’ve had slow times and busy times. The decades pass.

Later this year the curl project celebrates thirty years. We typically repeat that the number of curl installations in the world is perhaps thirty billion.

Over the last few years I have written numerous blog posts on the state of security reports submitted to curl. They have gradually moved from complaints about stupid LLMs, via stupid AI slop reports and the closing of the bug bounty, to the current high-quality chaos, which for us started at some point around March 2026.

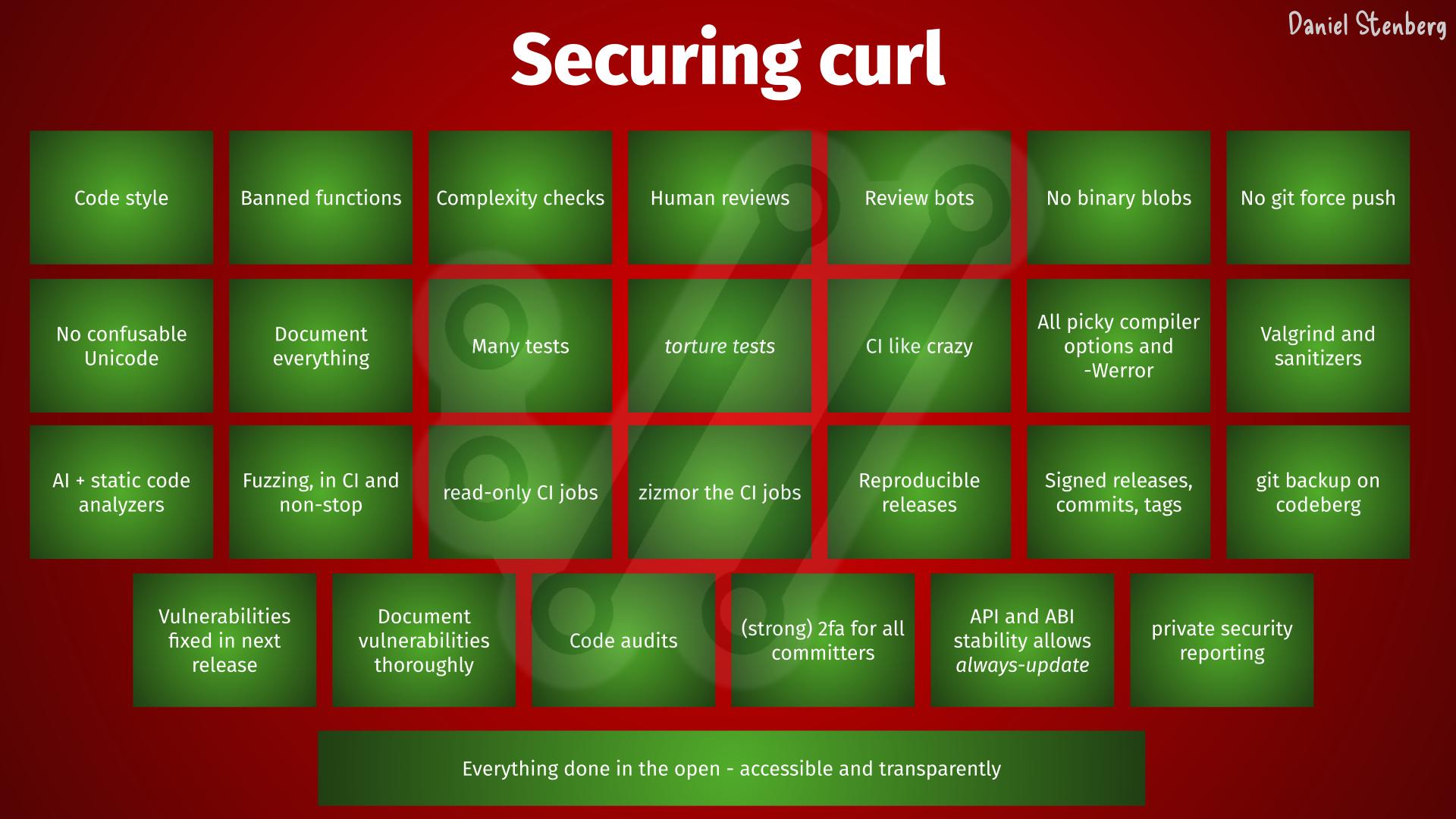

We have seen many spectacular security failures through the years, in Internet products, in software infrastructure and in Open Source. Every time we read about those events, we get reminded about how curl is everywhere and how we really really really do not want anything like that to happen to us or our users. And we take another lap around the project, tighten every bolt a little more, add a few more checks, tests and guidelines to ideally make the curl ship ever so slightly less likely to ever leak or sink.

Recently, after I pointed out that Mythos only found a single low severity problem in curl in its first scan, countless people have repeated the claim that curl is one of the most scrutinized, most reviewed, most fuzzed and most verified codebases you can imagine. Perhaps that’s true, but I just want to mention this: that’s not by mistake. That’s not an accident or a happy circumstance. That’s the result of relentless work and attention to detail through decades. Software engineering done right. Iterative improvement that simply never ends is an effective method.

This does not, however, mean that we don’t have bugs or security problems left, because we do. We have hundreds of thousands of lines of source code doing highly parallel networking for many protocols on all imaginable operating systems and CPU architectures, in C. So we fix the problems, patch them up and ship new releases. Over and over.

Thirty billion installations world-wide means that everyone reading this blog post has curl installed multiple times in stuff they own: in phones, tablets, cars, TVs, printers, game consoles, kitchen equipment and more. Not to mention all the online digital services we use and that those devices communicate with. I cannot stress the importance of curl security enough, and I would guess that most of you agree with me.

I am jealous of those projects that shipped a horrible bug at some point in the past that made the world burn for a while. They got attention, and some of them then got funding and the financial muscle to hire multiple full-time engineers. I sometimes think we would be better off if we also had one of those.

A thirty-year-old project could make you think you’ve seen most things already, but we have not been in this situation before.

The rate of incoming security reports is 4-5 times higher than it was in 2024 and double that of 2025, meaning that on average we now get more than one report per day. The quality is way higher than ever before. The reports are typically very detailed and long.

In order to manage this incoming flood of submissions, we need to handle them as soon as possible, as we know there are more coming. If we don’t take care of them at roughly the same speed they arrive, the backlog just grows, and having a list of potential security problems that you don’t have control over takes a mental toll.

I spend almost all my days right now working through the list of reported security issues that we have on HackerOne: verifying the claim, assessing the importance, writing a patch, figuring out when the bug was introduced, understanding the vulnerability, writing a detailed advisory explaining the problem to the world, and communicating all this with the security researcher and the rest of the curl security team.

For the first time in my life, my wife has voiced concerns about my work hours and my unbalanced work/life situation. I work more than I ever have before, but the flood keeps coming. People around me, I guess reading between the lines, have asked me how I and we cope with this deluge and want to make sure we don’t burn out in the process. I am concerned for my team mates.

I might soon have to reduce my work hours to allow myself more breathing time.

This is a never-before-seen pressure on the curl project and its security team members: an avalanche of high-priority work that trumps everything else in the project. The pressure is primarily mental, because we certainly could ignore the reports if we wanted to, but we feel a responsibility, we have a conscience and we are proud of our work. We feel obliged to fix security problems in the software we have helped ship to every device on the globe. This is personal to us.

With about half the release cycle left until the pending release ships, we already have twelve confirmed vulnerabilities meaning twelve pending CVE announcements. That’s a new project record and it also means we will reach thirty published CVEs in 2026 even before half the calendar year has passed. The projected total amount of curl CVEs published through the whole year is therefore at least double this number!

What help would we like? Short term it is a little late. We already have work up to our ears.

I wish more companies that use and depend upon curl or libcurl in commercial software and services would chip in and do their part to fund us. We could then pay more developers to share the workload. That would be great. Feel free to contact me to discuss how you can contribute. Get your employer to pay for a support contract!

Fortunately we have customers who already do this, so some of us can work on curl full time.

I am a pragmatist (and a bit of a cynic) and I have danced this dance for a long time already. I have no illusions that anything significant is going to change in this area, even if we are in an unparalleled situation and in a tighter spot than ever before. I totally expect us to ride out this storm by ourselves. Like we are used to. We will survive. We will endure. It might just be a bit of a shaky period in the project and in the world at large as we try to maneuver our way through this. There’s a tsunami coming over us and all we can do is swim; there are no lifeboats for us.

The curl project is not owned by a company. We are not part of any umbrella organization. This makes us a little under-powered at times, but it also gives us maximum freedom and flexibility. We act solely in the interest of making curl as good as possible for the world and curl users.

Fixing bugs and problems is good. Every reported problem implies a fixed issue. curl becomes a better product.

What is also a good trend: almost no one finds terrible vulnerabilities. All vulnerabilities found in curl in the last few years have been deemed severity LOW or MEDIUM. I’m not saying there won’t ever be another HIGH, but at least they are rare. The most recent severity high curl CVE was published in October 2023.

Right now we are under a little pressure. Forgive us if we are a little slow to respond sometimes.

Image by Brian Merrill from Pixabay

2026-05-17 04:58:26

One of the established power features of the curl command line tool is its support for “globbing”. It is a built-in way to specify ranges and sets in different ways and have curl iterate over them to simplify repeated transfers.

For example, you can easily download three images from the same host without having to repeat a nearly identical URL three times:

```shell
curl -O "https://img.example/{flower,house,garden}.jpg"
```
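To make the set glob concrete, here is a small POSIX shell sketch that only prints the three URLs `{flower,house,garden}` stands for; it illustrates the expansion and is not how curl implements it. The quotes on the curl command line matter: shells such as bash and zsh would otherwise expand the braces themselves, and may treat `[...]` as a filename pattern, before curl ever sees the URL.

```shell
# Print the URLs that the set glob {flower,house,garden} expands to.
# A plain loop is used so the sketch works in any POSIX shell.
set_glob_urls() {
  for name in flower house garden; do
    printf 'https://img.example/%s.jpg\n' "$name"
  done
}

set_glob_urls
# https://img.example/flower.jpg
# https://img.example/house.jpg
# https://img.example/garden.jpg
```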

Or if you have them in a numbered range, you can get a thousand images in a single tiny command line:

```shell
curl -O "https://img.example/photo[1-1000].jpg"
```
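Without globbing, the same range would need a loop around the URL. This POSIX shell sketch prints, rather than fetches, the 1,000 URLs that `photo[1-1000].jpg` stands for; with the glob, curl handles all of them in a single invocation.

```shell
# Print the URLs covered by the range glob photo[1-1000].jpg.
range_glob_urls() {
  i=1
  while [ "$i" -le 1000 ]; do
    printf 'https://img.example/photo%d.jpg\n' "$i"
    i=$((i + 1))
  done
}

# Show the first and the last URL of the range.
range_glob_urls | sed -n '1p;$p'
# https://img.example/photo1.jpg
# https://img.example/photo1000.jpg
```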

And they can be combined in crazy ways:

```shell
curl -O "https://example.{org,com}/{apples,mugs,coins}/img[1-1000].jpg"
```
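When globs are combined, the number of transfers is the product of the glob sizes: 2 hosts × 3 directories × 1,000 images gives 6,000 URLs for the command above. A POSIX shell sketch that enumerates them with nested loops (the order in which curl itself walks through the combinations may differ):

```shell
# Enumerate every URL covered by
#   https://example.{org,com}/{apples,mugs,coins}/img[1-1000].jpg
combined_glob_urls() {
  for tld in org com; do
    for dir in apples mugs coins; do
      i=1
      while [ "$i" -le 1000 ]; do
        printf 'https://example.%s/%s/img%d.jpg\n' "$tld" "$dir" "$i"
        i=$((i + 1))
      done
    done
  done
}

combined_glob_urls | wc -l   # 6000
```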

curl allows the globs used in a single URL to create up to 2^63 permutations, which, if you could do one million transfers per second, would take about 292 thousand years to complete.
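The 292-thousand-year figure follows from dividing 2^63 permutations by one million transfers per second. A quick awk check, assuming a 365.25-day year; the optional rate argument is an illustrative parameter for trying other speeds, not a curl setting:

```shell
# Years needed to run 2^63 transfers at a given rate (default: one million
# transfers per second), using a 365.25-day year.
glob_years() {
  awk -v rate="${1:-1000000}" 'BEGIN {
    permutations = 2 ^ 63
    seconds_per_year = 365.25 * 24 * 60 * 60
    printf "%.0f\n", permutations / rate / seconds_per_year
  }'
}

glob_years               # prints 292271
glob_years 1000000000    # a billion transfers per second: prints 292
```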

(As an added bonus you can of course also add -Z to the command line to make curl transfer all those images in parallel rather than serially.)

To help users save files when using globbing, curl provides a way to reference the globbed components using #[number] when setting the target filename. The number then references the specific glob, where the first is 1, the second 2 etc.

Saving the one thousand images locally under filenames that differ from the remote ones:

```shell
curl "https://img.example/photo[1-1000].jpg" -o "local-#1.jpeg"
```
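To make the #[number] mechanism concrete, here is a small POSIX shell and awk sketch of the substitution. `fill_template` is a hypothetical helper, not part of curl and not how curl implements the feature; it only shows that #1 is replaced by the current value of the first glob, #2 by the second, and so on.

```shell
# Hypothetical helper, for illustration only: substitute #1, #2, ... in an
# output-file template with the current value of each glob.
# Usage: fill_template TEMPLATE VALUE1 [VALUE2 ...]
fill_template() {
  template=$1
  shift
  awk -v tpl="$template" '
    # Replace every literal occurrence of old in s with repl.
    function replace_all(s, old, repl,    out, i) {
      out = ""
      while ((i = index(s, old)) > 0) {
        out = out substr(s, 1, i - 1) repl
        s = substr(s, i + length(old))
      }
      return out s
    }
    BEGIN {
      # ARGV[1..ARGC-1] hold the glob values. Go from the highest index
      # down so that "#10" is replaced before "#1" could match its prefix.
      for (n = ARGC - 1; n >= 1; n--)
        tpl = replace_all(tpl, "#" n, ARGV[n])
      print tpl
    }' "$@"
}

fill_template "local-#1.jpeg" 42         # local-42.jpeg
fill_template "file-#2-#1" org apples    # file-apples-org
```

Replacing the highest index first keeps #1 from matching the beginning of #10 when a template refers to ten or more globs.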

This allows a compact command line to also offer flexibility.

All functionality mentioned above has existed in curl for years; decades even. It just so happened that one day, when working with curl, I stumbled upon a use case that I could not solve with the existing command line functionality.

I wanted to do a globbed upload to an HTTP server and then save all the separate responses into their own dedicated files, preferably with names based on the glob.

I will admit that at first I had a hard time accepting that we actually could not do this already, but that rather quickly turned into: how should I add support for this in the smoothest and most convenient way? Using what syntax?

The road to fixing it for uploads took a little detour.

Starting in 8.21.0, curl can assign a name to each glob and then reference that glob by name instead of using just a glob index number. This makes command lines ever so slightly more readable, I think.

The image range example from above, but instead using named globs:

```shell
curl "https://img.example/photo[<number>1-1000].jpg" -o "local-#<number>.jpeg"
```

Or a version with three separate globs where they all are used in the output file name:

```shell
curl "https://example.{<tld>org,com}/{<object>apples,mugs,coins}/img[<index>1-1000].jpg" -o "file-#<index>-#<tld>-#<object>"
```
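The same substitution idea with named globs, again as a hypothetical POSIX shell and awk helper rather than curl's own code. Each `NAME=VALUE` argument stands for the current value of one named glob, using the names from the command above:

```shell
# Hypothetical helper, for illustration only: substitute #<name> markers in
# an output-file template, given NAME=VALUE pairs for the named globs.
# Usage: fill_named_template TEMPLATE NAME=VALUE [NAME=VALUE ...]
fill_named_template() {
  template=$1
  shift
  awk -v tpl="$template" '
    # Replace every literal occurrence of old in s with repl.
    function replace_all(s, old, repl,    out, i) {
      out = ""
      while ((i = index(s, old)) > 0) {
        out = out substr(s, 1, i - 1) repl
        s = substr(s, i + length(old))
      }
      return out s
    }
    BEGIN {
      for (n = 1; n < ARGC; n++) {
        eq = index(ARGV[n], "=")      # split NAME=VALUE at the first "="
        name = substr(ARGV[n], 1, eq - 1)
        value = substr(ARGV[n], eq + 1)
        tpl = replace_all(tpl, "#<" name ">", value)
      }
      print tpl
    }' "$@"
}

fill_named_template "file-#<index>-#<tld>-#<object>" tld=org object=apples index=7
# file-7-org-apples
```

Because every marker in this sketch is closed by `>`, one name can never be a prefix of another marker, so the order of the arguments does not matter.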

Slick, right?

Back to the globbed upload challenge:

```shell
curl -T "{one,two,three}.jpg" https://ul.example/
```

… but with the responses saved in separate files instead of sent to stdout. Use named globs:

```shell
curl -T "{<counter>one,two,three}.jpg" https://ul.example/ -o "save-#<counter>"
```
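Before named globs, one way to get a separate response file per upload was to run curl once per file from a shell loop. A POSIX shell sketch of that workaround; because `ul.example` is a placeholder host, it only prints the commands unless the (hypothetical) `RUN` variable is set to 1:

```shell
# One curl invocation per file, each response saved to its own file.
# Prints the commands by default; set RUN=1 to actually execute them.
upload_each() {
  for name in one two three; do
    if [ "${RUN:-0}" = 1 ]; then
      curl -T "$name.jpg" https://ul.example/ -o "save-$name"
    else
      printf 'curl -T %s.jpg https://ul.example/ -o save-%s\n' "$name" "$name"
    fi
  done
}

upload_each
# curl -T one.jpg https://ul.example/ -o save-one
# curl -T two.jpg https://ul.example/ -o save-two
# curl -T three.jpg https://ul.example/ -o save-three
```

The named-glob command line does the same job with a single curl process.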

The only way to refer to an upload glob is to set a name and refer to that name. There are no indexed references for uploads, only for URL globs.

It is in fact possible to also use a mix of upload globs and URL globs in the same command line if you want to upload multiple files to multiple destinations. They set the names in the same namespace and you refer to the names the same way, independently of source.

This feels more like a thing to show off in a blog post like this rather than something people will actually find good use for:

Upload three files to three sites and save all nine responses in separate files:

```shell
curl -T "{<counter>one,two,three}.jpg" "https://{<host>ul1,ul2,ul3}.example/" -o "save-#<counter>-#<host>"
```
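The nine output names come from every combination of the three upload names and the three hosts. A POSIX shell sketch that lists those combinations; it only illustrates the 3 × 3 pairing and makes no claim about the order in which curl performs the transfers:

```shell
# List each upload file, its target host and the response file name
# produced by -o "save-#<counter>-#<host>".
upload_matrix() {
  for counter in one two three; do
    for host in ul1 ul2 ul3; do
      printf '%s.jpg -> https://%s.example/ -> save-%s-%s\n' \
        "$counter" "$host" "$counter" "$host"
    done
  done
}

upload_matrix | wc -l   # 9
```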

Even when you set a name, a URL glob can still also be referenced by its number.

2026-05-11 14:01:35

yes, as in singular one.

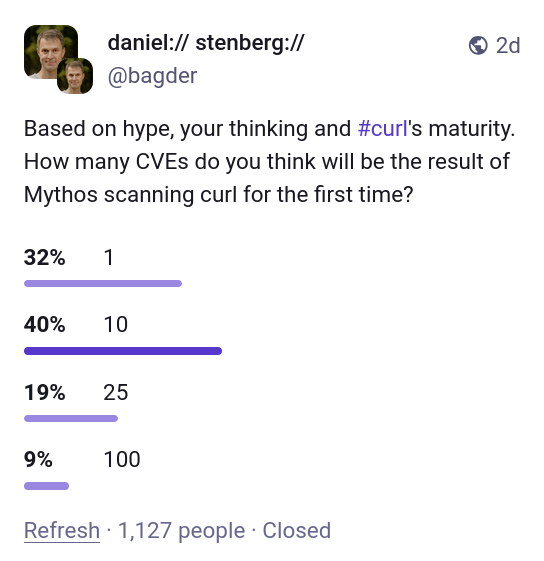

Back in April 2026 Anthropic caused a lot of media noise when they concluded that their new AI model Mythos is dangerously good at finding security flaws in source code. Apparently Mythos was so good at this that Anthropic would not release this model to the public yet but instead trickle it out to a selected few companies for a while to allow a few good ones(?) to get a head start and fix the most pressing problems first, before the general populace would get their hands on it.

The whole world seemed to lose its marbles. Is this the end of the world as we know it? An amazingly successful marketing stunt for sure.

Part of the deal with project Glasswing was that Anthropic also offered access to their latest AI model to “Open Source projects” via the Linux Foundation. The Linux Foundation let their project Alpha Omega handle this part, and I was contacted by their representatives. As lead developer of curl I was offered access to the magic model and I gladly accepted the offer. Sure, I’d like to see what it can find in curl.

I signed the contract for getting access, but then nothing happened. Weeks went by and I was told there was a hiccup somewhere and access was delayed.

Eventually, I was instead offered that someone else who has access to the model could run a scan and analysis on curl for me using Mythos and send me a report. To me, the distinction isn’t that important. I would not have had a lot of time to explore lots of different prompts and go on deep-dive adventures anyway. Getting the tool to generate a first proper scan and analysis would be great, whoever did it. I happily accepted this offer.

(I am purposely leaving out the identity of the individual(s) involved in getting the curl analysis done as it is not the point of this blog post.)

Before this first Mythos report, we had already scanned curl with several different very capable AI powered tools (I mean in addition to running a number of “normal” static code analyzers all the time, using the pickiest compiler options and doing fuzzing on it for years etc). Primarily AISLE, ZeroPath and OpenAI’s Codex Security have been used to scrutinize the code with AI. These tools and the analyses they have done have triggered somewhere between two and three hundred bugfixes merged in curl throughout the last 8-10 months or so. A bunch of the findings these AI tools reported were confirmed vulnerabilities and have been published as CVEs. Probably a dozen or more.

Nowadays we also use tools like GitHub’s Copilot and Augment Code to review pull requests, and their remarks and complaints help us to land better code and avoid merging new bugs. I mean, we still merge bugs of course, but the PR review bots regularly highlight issues that we fix: our merges would be worse without them. The AI reviews are used in addition to the human reviews. They help us, they don’t replace us.

We also see a high volume of high quality security reports flooding in: security researchers now use AI extensively and effectively.

Security is a top priority for us in the curl project. We follow every guideline and we do software engineering properly, to reduce the number of flaws in the code. Scanning for flaws is just one of many steps to keep this ship safe. You need to search long and hard to find another software project that does as much as curl, or goes further, for software security.

It was with great anticipation we received the first source code analysis report generated with Mythos. Another chance for us to find areas to improve and bugs to fix. To make an even better curl.

This initial scan was made on curl’s git repository, at a specific recent commit on its master branch. The report counted 178K lines of code analyzed in the src/ and lib/ subdirectories.

The analysis details the different approaches and methods it used in its search, and which kinds of flaws it focused on trying to find. A fun note at the top of the report says:

> curl is one of the most fuzzed and audited C codebases in existence (OSS-Fuzz, Coverity, CodeQL, multiple paid audits). Finding anything in the hot paths (HTTP/1, TLS, URL parsing core) is unlikely.

… and it correctly found no problems in those areas.

curl is currently 176,000 lines of C code when we exclude blank lines. The source code consists of 660,000 words, which is 12% more words than the entire English edition of the novel War and Peace.
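A rough way to get a comparable count yourself, assuming a curl source checkout, is to count the non-blank lines of the .c and .h files under src/ and lib/. This POSIX shell sketch also counts comment lines, so it will not necessarily match how the figures above were produced.

```shell
# Count non-blank lines in all .c and .h files below the given directories.
count_code_lines() {
  find "$@" -type f \( -name '*.c' -o -name '*.h' \) -exec cat {} + |
    grep -cv '^[[:space:]]*$'
}

# In the top directory of a curl checkout:
#   count_code_lines src lib
```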

On average, every single production source code line of curl has been written (and then rewritten) 4.14 times. We have polished on this.

Right now, the production code in git master that still remains has been authored by 573 separate individuals. Over time, a total of 1,465 individuals have had their proposed changes merged into curl’s git repository.

We have published 188 CVEs for curl up until now.

curl is installed in over twenty billion instances. It runs on over 110 operating systems and 28 CPU architectures. It runs in every smart phone, tablet, car, TV, game console and server on earth.

The report concluded it found five “Confirmed security vulnerabilities”. I think using the term confirmed is a little amusing when the AI says it confidently by itself. Yes, the AI thinks they are confirmed, but the curl security team has a slightly different take.

Five issues felt like nothing, as we had expected an extensive list. Once my curl security team fellows and I had poked at this short list for a number of hours and dug into the details, we had trimmed it down to one confirmed vulnerability. Of the other four, three were false positives (they highlighted shortcomings that are documented in the API documentation) and the fourth we deemed “just a bug”.

The single confirmed vulnerability is going to end up as a severity low CVE, planned to be published in sync with our next curl release, 8.21.0, in late June. The flaw is not going to make anyone gasp for breath. The details of that vulnerability will of course not be made public before then, so you will have to wait until then to learn more.

The Mythos report on curl also contained a number of spotted bugs that it concluded were not vulnerabilities, much like any new code analyzer does when you run it on hundreds of thousands of lines of code. All the bugs in the report are being investigated and one by one we are fixing those that we agree with.

All in all, the report describes about twenty bugs and explains them very nicely. There are barely any false positives, so I presume they have had a rather high threshold for certainty.

curl is certainly getting better thanks to this report, but counted by the volume of issues found, all the previous AI tools we have used have resulted in more bugfixes. This is only natural of course, since the first tools we ran had many more and easier bugs to find. As we have fixed issues along the way, finding new ones is slowly becoming harder. Additionally, a bug can be small or big, so it’s not always fair to just compare numbers.

My personal conclusion, however, cannot be anything other than that the big hype around this model so far was primarily marketing. I see no evidence that this setup finds issues to any particularly higher or more advanced degree than the other tools did before Mythos. Maybe this model is a little bit better, but even if it is, it is not better to a degree that makes a significant dent in code analysis.

This is just one source code repository and maybe it is much better on other things. I can only tell and comment on what it found here.

But allow me to highlight and reiterate what I have said before: AI powered code analyzers are significantly better at finding security flaws and mistakes in source code than any traditional code analyzers were in the past. All modern AI models are good at this now. Anyone with time and some experimental spirit can find security problems now. The high quality chaos is real.

Any project that has not scanned its source code with AI powered tooling will likely find a huge number of flaws, bugs and possible vulnerabilities with this new generation of tools. Mythos will, and so will many of the others.

Not using AI code analyzers in your project means that you leave adversaries and attackers time and opportunity to find and exploit the flaws you don’t find.

> Zero memory-safety vulnerabilities found.
>
> Methodology note: this review is hand-driven analysis using LLM subagents for parallel file reads, with every candidate finding re-verified by direct source inspection in the main session before being recorded. The CVE to variant-hunt mapping was built from curl’s own vuln.json. No automated SAST tooling was used.
>
> This outcome is consistent with curl’s status as one of the most heavily fuzzed and audited C codebases. The defensive infrastructure (capped dynbufs everywhere, curlx_str_number with explicit max on every numeric parse, curlx_memdup0 overflow guard, CURL_PRINTF format-string enforcement, per-protocol response-size caps, pingpong 64KB line cap) systematically closes the bug classes that would normally be productive in a codebase this size.
>
> Coverage now includes: all minor protocols, all file parsers, all TLS backends’ verify paths, http/1/2/3, ftp full depth, mprintf, x509asn1, doh, all auth mechanisms, content encoding, connection reuse, session cache, CLI tool, platform-specific code, and CI/build supply chain.

It should be noted that the AI tools find the usual and established kinds of errors we already know about. They just find new instances of them.

We have not seen any AI so far report a vulnerability that would somehow be of a novel kind or something totally new. They do not reinvent the field in that way, but they do dig up more issues than any other tools did before.

These were absolutely not the last bugs to find or report. Just while I was writing the drafts for this blog post we have received more reports from security researchers about suspected problems. The AI tools will improve further and the researchers can find new and different ways to prompt the existing AIs to make them find more.

We have not reached the end of this yet.

I hope we can keep getting more curl scans done with Mythos and other AIs, over and over until they truly stop finding new problems.

Thanks to Anthropic and Alpha Omega for providing the model, the tools and doing the scan for us. Thanks also to the individual who did the scan for us. Much appreciated!

Top image by Jin Kim from Pixabay

Thanks for flying curl. It’s never dull.

2026-04-30 16:08:34

In this era of powerful tools for finding software bugs, we now see tools find a lot of problems at high speed. This causes problems for developers, as dealing with the growing list of issues is hard. It may take longer to address the problems than to find them, not to mention getting the fixes into releases, after which it takes yet another extended time until users out in the wild actually get the updated version into their hands.

For tools to find many bugs fast, the bugs have to already exist in the source code. These new tools don’t add or create the problems. They just find them, filter them out and bring them to the surface for exposure. A better filter in the pool filters out more rubbish.

The more bugs we fix, the fewer bugs remain in the code. Assuming the developers manage to fix problems at a decent enough pace.

For every bugfix we merge, there is a risk that the change itself introduces one or more new separate problems. We also tend to keep adding features and changing behavior as we want to improve our products, and when doing so we occasionally slip up and introduce new problems as well.

Source code analysis tools are a concept as old as source code itself. There have always been tools that try to identify coding mistakes. They just recently got better, so now they can find more mistakes.

These new tools, similar to the old ones, don’t find all the problems. Even these modern tools sometimes suggest fixes that are incomplete and in fact sometimes downright buggy.

Undoubtedly, code analyzer tooling will improve further. The tools of tomorrow will find even more bugs, including some that the current generation of tools did not find when they scanned the code yesterday.

Of course, we now also introduce these tools in CI and general development pipelines, which should make us land better code with fewer mistakes going forward. Ideally.

If we assume that we fix bugs faster than we introduce new ones and we assume that the AI tools can improve further, the question is then more how much more they can improve and for how long that improvement can go on. Will the tools find 10% more bugs? 100%? 1000%? Is the tool improving going to gradually continue for the next two, ten or fifty years? Can they actually find all bugs?

Can we reach the utopia where we have no bugs left in a given software project and when we do merge a new one, it gets detected and fixed almost instantly?

If we assume that there is at least a theoretical chance to reach that point, how would we know when we reach it? Or even just if we are getting closer?

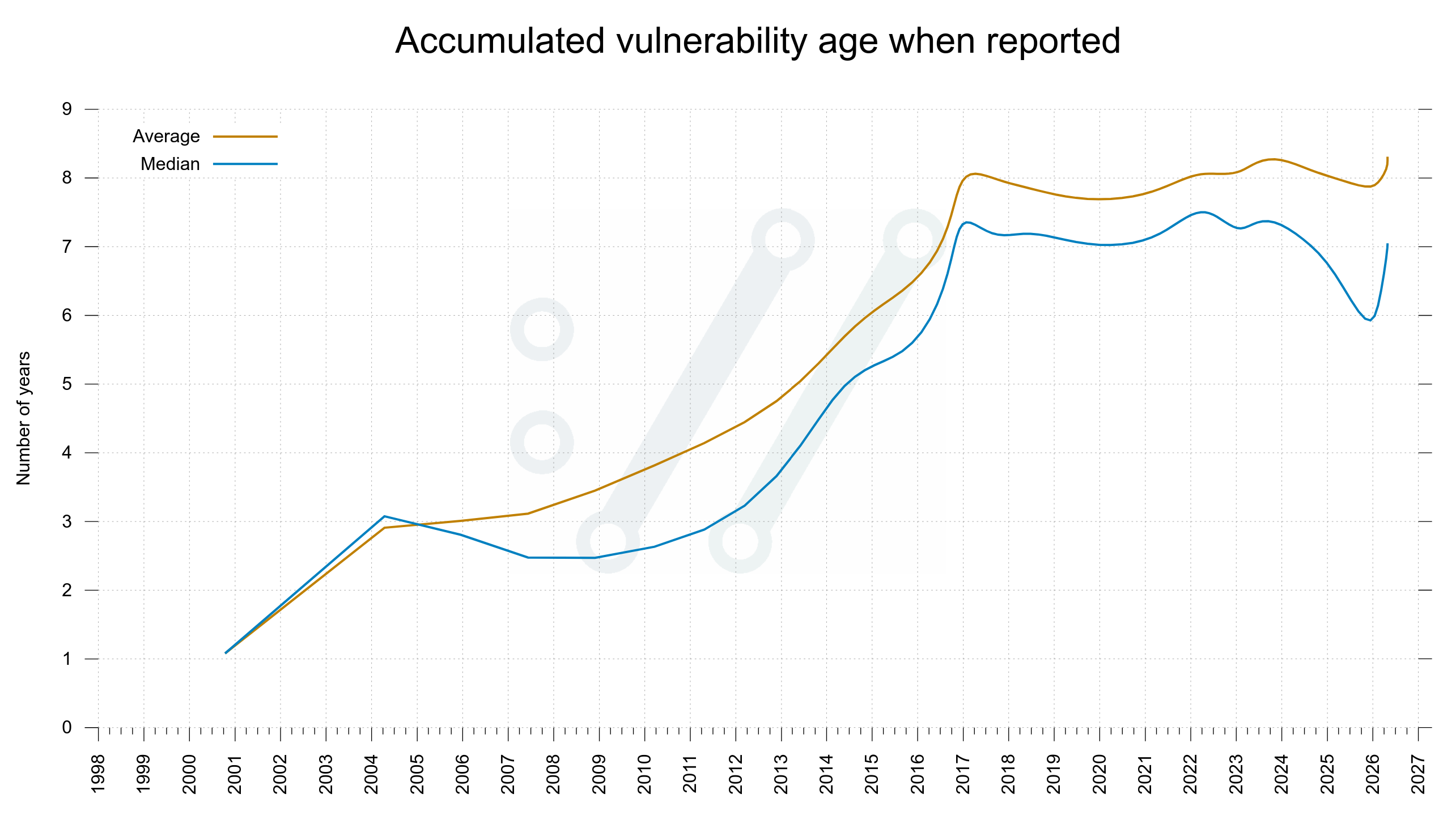

I propose that one way to measure if we are getting closer to zero bugs is to check the age of reported and fixed bugs. If the tools are this good, we should soon only be fixing bugs we introduced very recently.

In the curl project we don’t keep track of the age of regular bugs, but we do for vulnerabilities. The worst kind of bugs. If the tools can find almost all problems, they should soon only be finding very recently added vulnerabilities too. The age of new finds should plummet and go towards zero.

If newly reported vulnerabilities are getting younger, the average and median age of the total collection should go down over time.

The average and median time vulnerabilities had existed in the curl source code by the time they were found and reported to the project.

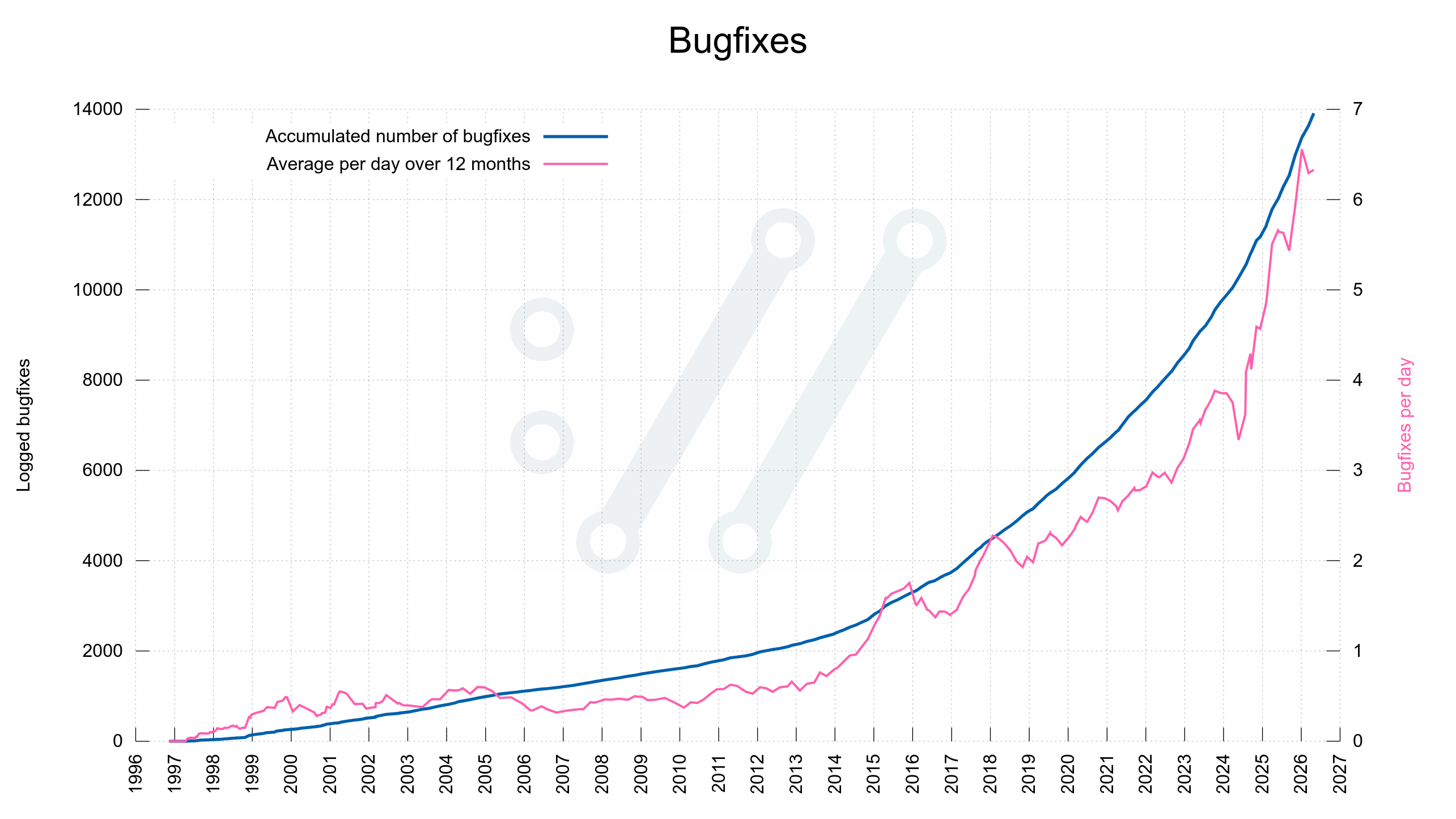
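The two statistics in the graph are straightforward to compute. A POSIX shell sketch, assuming the input is one vulnerability age per line (for example in years, however those ages were derived); the numbers in the usage line are made up for illustration and are not curl data:

```shell
# Read one age per line on stdin and print the average (mean) and median.
age_stats() {
  sort -n | awk '
    { age[NR] = $1; sum += $1 }
    END {
      if (NR == 0) exit 1
      if (NR % 2) median = age[(NR + 1) / 2]
      else median = (age[NR / 2] + age[NR / 2 + 1]) / 2
      printf "mean=%.2f median=%.2f\n", sum / NR, median
    }'
}

printf '%s\n' 12 1 8 3 | age_stats   # mean=6.00 median=5.50
```

The median is less sensitive than the average to a few very old finds, which is one reason to track both.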

When the tools have found most problems, there should be fewer bugs left to fix. The bugfix rate should go down rapidly, independently of how you count them or how liberal we are in counting exactly what is a bugfix.

Given the data from the curl project, there does not seem to be fewer bugfixes done – yet. Maybe the bugfix speed goes up before it goes down?

Given the look of these graphs I don’t think we are close to zero bugs yet. These two curves do not seem to even start to fall yet.

Yes, these graphs are based on data from a single project, which makes it super weak to draw statistical conclusions from, but this is all I have to work with.

I think that’s mostly an indication of what you believe the tooling can do and how good they can eventually end up becoming.

I don’t know. I will keep fixing bugs.

2026-04-30 14:49:47

In appendix A of the book Root cause: Stories and lessons from two decades of Backend Engineering Bugs, author Hussein Nasser has these wonderful words to say about me:

Daniel Stenberg is a Swedish engineer and the creator of curl (cURL), one of the most widely used tools and libraries for fetching content over various protocols. I’ve always admired Daniel’s work, reading his blogs and watching his talks on YouTube. He is one of the engineers who inspired me to start my own YouTube channel and teach backend engineering.

It warms my heart to read this. Words like this give me energy and motivation. My work has meaning.