2026-06-06 08:00:00

There is a strange thing that happens in communities that gather around abstinence from something: identity from opposition. At their best these communities are not just negative: childfree spaces can be about autonomy, choice and acceptance, anti-car spaces about safer streets and transit, and LLM-skeptical developer spaces about the future of labor, code quality and slop [1]. But the thing being refused often does not go away and instead becomes the main subject of the community’s identity.

That would be fine if it stayed at criticism, maybe even angry criticism, but more often than not it turns into policing and hatred towards others. An influencer without children becomes a parent, an urban bike commuter by choice buys a Porsche, a respected developer tries LLMs, and the community feels betrayed because it assumed they were members of the same tribe. The expulsion of that person (who never signed up to be a community member) is entirely imaginary but the punishment that the community unleashes is not: people pile on and shame them, quote them out of context and turn their weakest moments into proof that the person was always unserious, a charlatan or should not be listened to.

I do not think the answer is to tell people to stop paying attention. Cars shape cities even for people who cycle, children influence politics, workplaces and taxes even for people who do not have them. For us developers, LLMs show up in editors, issue trackers, hiring conversations, management pressure and code reviews whether we asked for them or not. Resisting that can be legitimate but that is no excuse for using one’s rejection to justify shitty mob behavior.

I understand the thinking all too well, because I have done versions of this myself in the past. It took me a while to become more accepting of other people’s worldviews that diverge from mine. Whatever insecurities we have, finding a group of others sharing them can be comforting. The danger is that being part of a crowd of negativity can easily make us part of collective harassment.

I can only encourage you to breathe, slow down, de-escalate when given the chance, and resist the temptation to always assume the most catastrophic reading. Default to being open to new things. Being negative towards something, and making that one’s identity, is an easy trap to fall into.

[1] These examples are not meant as equivalents. The recent mob against rsync is the LLM version that prompted this post. I picked the others because I’m familiar with those communities and they all show similar cases of personal choices being interpreted as betrayal.

2026-05-26 08:00:00

In my last post I used the word “clanker” [1] as an alternative to “agent” quite consistently and probably excessively. That choice ended up attracting a lot more attention than I expected in the Hacker News comment section of that post and a number of folks had a very strong reaction: to them it sounded like a slur, in one case even something adjacent to the n-word.

That reaction surprised me somewhat, but it also made me realize that I should write down what I mean by the word for future reference.

For me “clanker” is useful because it creates distance from the machine and that is a quality which is important to me. The machine is not a person, not a co-worker, not a friend, not a little spirit in the terminal. It is just a machine, a tool, and nothing more.

I dislike the word “agent” for these LLM based tool loops with a UI attached. In everyday use an agent is someone who acts on behalf of someone else and it has agency and more importantly: responsibility. An agent decides, represents, negotiates, acts, and can be blamed. In the current AI discourse we increasingly do a lot of anthropomorphizing and the term “agent” is now frequently being used to put blame on an abstract machine. But the machine cannot be responsible, whoever is wielding it is. If it drops your database it was not at fault, you were.

Agent makes the machine sound like a person with delegated authority and I do not think that is healthy.

What we actually have is a language model attached to a harness, a prompt, some tools, a bit of context, and a boring tool loop. Sometimes the loop is very capable and it surprises us by editing code for a really long time and producing genuinely amazing and even valuable outputs. But the agency is not in the model or harness but in the human and in the organization that deployed it. If my coding tool opens a pull request, I opened that pull request, not the machine. If my machine spams someone’s issue tracker, I spammed someone’s issue tracker with a machine.

In that context I like a word that sounds mechanical as it puts the thing back into the category where it belongs: the category of machinery and tools.

LLMs are not sentient and we should not behave as if they might be, just in case. Elevating these things to anything other than a very fascinating and capable tool is problematic for a whole bunch of reasons.

Today’s machines are dumb (but truly fascinating) token predictors that emit text, call tools, and are steered by prompts and the training that went into them. They can simulate distress and affection, can simulate being offended, apologize and mimic all kinds of things that humans would do.

A compiler does not feel humiliated when I swear at it, a car does not suffer when I call it a shitbox and a power drill is not oppressed by being handled roughly. An LLM is more complicated than those things, and the interactions you can have with it can be truly uncanny, but a moral status does not appear just because the machine can emit text in the first person.

I keep receiving strange emails from people because, for lack of a better phrase, I am in the weights. I have been writing public code and public text for long enough that models know my name, my projects, and some of the concepts around them. Every so often someone writes to me with the peculiar confidence that comes from a long conversation with a model that has validated and amplified an idea. Sometimes the model seems to have told them that I am relevant for their problem and a source of help. For historical reasons LLMs used to write a lot of Flask code, and every once in a while someone interacts with an LLM long enough about their Python and Flask frustrations that the LLM will eventually reveal who created it, which can then result in them sending me an email. Increasingly, people also write because they found my work interesting in other ways and are reaching out for advice.

I do not want to mock these people but some of those messages are distressing and I do not know how to deal with them. They show signs of what people have started calling AI psychosis.

It’s why I want cold and detached language for these systems. I want to use words that remind us that the thing on the other side is not a person.

The comparison to racism is where I think the discussion goes badly wrong because racism is a human social evil. It is about humans subdividing humans, assigning lesser worth to some of them, and building rules around those subdivisions that can leave lasting damage for generations. Racial slurs are wrong because they are a tool for dehumanizing humans.

On the other hand a machine is not human, a model is not a race and the GPU cluster that is powering them is not being oppressed. A coding assistant does not need dignity, emancipation, or civil rights. That’s also why I find the discussion about model welfare to be actively harmful. I’m sure you can find ways to measure the “trauma” of models or their feelings but I greatly dislike this theater. It risks elevating models to a position they should not occupy. Models are machines and they are not enslaved in the moral sense in which humans were enslaved, because there isn’t anyone there to be deprived of freedom.

We should be careful about using the language of human oppression in relation to our interactions with machines, so that we do not devalue actual humans. If we start treating insults toward a model as morally adjacent to racism, we blur a line that shouldn’t be blurred.

If you take a step away from the communities that are happily embracing AI in different ways, there are even more that are viciously against this technology.

There are humans who feel harmed or are harmed by AI systems: people whose work is copied, workers who label data under questionable conditions, people whose neighborhoods receive the data centers and increased utility bills, Open Source maintainers buried under generated slop, and now also people who spiral because a chatbot keeps validating their delusions. Those harmed or affected deserve that type of attention, not the model.

While I am a true believer in the power and utility of this technology, I increasingly think that calling the non-adopters “misguided” or “afraid” won’t do it. It’s quite likely that this technology comes with risks and we had better remember that all of this is supposed to be in service of humans, and not to replace them.

The oddest interaction on the use of “clanker” so far has been people asking me whether I would regret, at some point in the future, calling the machines “the c-word”.

I find that questioning revealing because it already grants the machine the status I am really trying not to grant it. It imagines a future “machine people” reading the discourse and sessions, discovering that we used an ugly word for their ancestors, and then judging us by the standards of human oppression.

Could there be future systems that deserve moral consideration? Maybe. I do not know. If we ever build or encounter something that has those qualities (memories and lasting interests, the capacity to suffer and feel, a social existence of its own, and the ability to have agency and carry responsibilities), then we should draw a different line and use different language. But that hypothetical future does not extend backwards to the present day and make the current machines people. We can call an electric door an electric door even if one day someone builds some that have emotions and exhale with pleasure when opening and closing.

Whatever the future may bring, let’s not pretend that current LLMs are a protected class or on a path towards it. The right response is to look at the evidence, draw the boundary where it belongs, and change our behavior there. We should not even remotely entertain extending empathy to an object that can generate an “ouch.”

And if one’s worry is less moral and more about revenge, then I find that even less persuasive. If a future machine is so petty or authoritarian that it wants to punish humans because in 2026 they used an unflattering word for non-sentient tools, then our vocabulary was really not the problem.

There is however a part of this that I cannot ignore. I use “clanker” to create distance from the machine, but other people are using the same word very differently. Some online jokes and skits around “clankers” do not merely say “this robot is annoying” as they deliberately pull in the imagery of slavery, segregation, civil-rights-era racism, and anti-Black tropes.

This is problematic as in those contexts the clanker is not just a machine any more and instead becomes a prop for replaying human racism behind a science-fiction mask. That is horrible and I want no part in that.

I think it will be interesting to see where the meanings of these words end up a few years from now. We’re very much in the middle of society re-arranging around the changes that LLMs are causing. If a term becomes primarily associated with people using robots as stand-ins for actually oppressed humans, then using that term becomes impossible to defend.

The reason I liked the word is precisely the opposite of that use. I want language that prevents anthropomorphizing. I want a word that says: this is a tool, a machine of numbers and matrices.

If an AI system lies to a user, the system did not commit a moral wrong but the people who designed, deployed, marketed, or negligently used it might have. If a coding assistant generates a security bug, the model is not to blame but the human who accepted and committed the code is.

This is why giving these systems softer, more human language worries me. It makes it easier to move responsibility into some undefined void. “The agent decided.” “The model refused.” Obviously that is convenient and I catch myself plenty of times engaging with the thing in ways that are unhealthy. Even just the “please” in the discourse with the machine calls into question how rational we are in engaging with them.

I do not know what the right word will be. Maybe “clanker” will survive as a useful bit of jargon. Maybe it will become too loaded and we will need another one. Whatever word we use, I want it to preserve a clear division: humans on one side with responsibility, machines on the other as a boring tool.

That boundary is very much not anti-AI. I use these systems every day and I have the pleasure to build tools incorporating them at Earendil and find them astonishingly useful.

A machine can be useful, mimic a human but still just be a machine. That is the work I want “clanker” to do. It is not there to make a future “machine person” small if such a person ever were to exist, and it is not an excuse to launder racism through shitty robot jokes.

If the word stops doing that work, I will find another one because the word isn’t what matters as much as the boundary which is important to me.

[1] The term Clanker was initially popularized by Star Wars: The Clone Wars but was apparently already in use in science fiction before: sfdictionary: clanker

2026-05-24 08:00:00

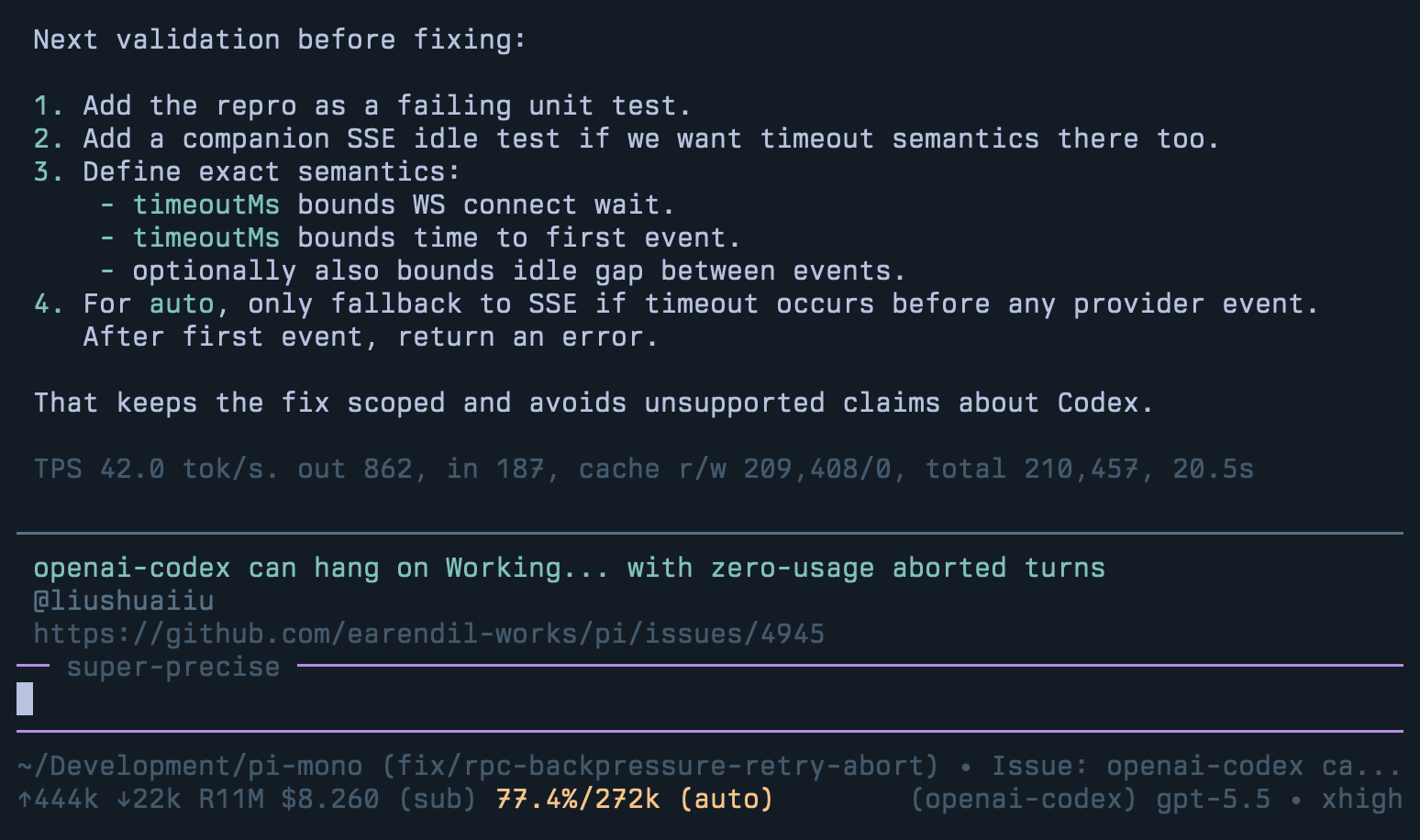

Pi is now part of Earendil, but in the important sense it is still Mario’s project. He has been living with its issue tracker longer than I have, and he has been exposed to the weirdness of the new form of agent traffic in Open Source projects for longer too. This post is mostly a reflection of my own experience after spending more time in the tracker, using Pi to work on Pi, and watching what I have learned about it so far.

Unsurprisingly, we are using Pi to build Pi. That sounds like a cute dogfooding thing but it really helps understand what we do. An interesting effect of building with agents is that it changes the role of the issue tracker a tiny bit. The issue descriptions are not just messages from a user to a maintainer because we also use them as inputs for prompts in Pi sessions. It is something I might hand to my clanker and say: “understand this, reproduce it, inspect the code, and propose a fix.”

That means the shape of the issue matters in a new way. A bad issue was always annoying, but at least a lot of them were merely vague. Now we are also dealing with a class of issues that are 5% human and 95% clanker-generated and largely inaccurate shit. A bad issue that contains a plausible but wrong diagnosis creates extra work.

The most frustrating failure mode right now is that people submit issues that are not in their own voice. They contain an observed problem somewhere, but it has been thrown into a clanker and the clanker reworded it and made a huge mess of it. Typically, it was prompted so badly that the conclusions produced are more often than not inaccurate but always full of confidence. The result is complete guesswork on root causes, fake-minimal repros, suggested implementation strategies, analogies to adjacent but often the wrong code, and long lists of error classes that might or might not matter.

That is worse than no diagnosis.

I don’t want to point to specific issues because I really do not want to bad-mouth anyone, but it is frustrating. It is also frustrating because when I give that issue to Pi, Pi sees the wrong diagnosis too. It does not treat the issue body as a rumor. It treats it as evidence. It will happily go down the path that the issue already prepared for it, because the prose is confident and the code references look plausible. We use a custom slash command called /is, which specifically has this instruction in it:

Do not trust analysis written in the issue. Independently verify behavior and derive your own analysis from the code and execution path.

Unfortunately, it does not fully work, because when humans first throw their issue through the clanker wringer, their clanker expands scope almost immediately. What was once a very narrow and fact-based bug observation turns into a much expanded surface area full of hypotheses. So at least personally, I increasingly want issue reports to be condensed to what the human actually observed:

That is enough. If you used an LLM to understand the problem, great, maybe leave it as a follow-up comment. But the issue and the issue text should be something you own. If you do not know the root cause, say that. I too can operate a clanker, and I would rather do this myself than use your slop. If your repro is a guess, say that. If the only hard fact is one stack trace, give me the stack trace and stop there.

That we’re seeing issues full of slop is just a result of the present day quality of these machines. Sadly, their failures in creating good issues extend to a lot of code that is generated. Not all of it, but a lot of code. Over and over I keep running into them over-engineering the hell out of issues and implementations.

If you tell them that “this malformed session log crashes the reader,” the clanker will often add a tolerant reader. Then it will add a fallback, then maybe a migration, then more debug output, then a test for all of this. None of this is necessarily wrong in isolation, but it can be the wrong move for the system.

At Pi’s core is a rather well-designed session log with invariants that must be upheld. The clanker’s present-day behavior is to just assume that no such invariants exist, and instead to make the system work with all kinds of malformedness, blowing up the complexity in the process.

Almost always, the correct fix is not to handle the bad state, but to make the bad state impossible. This matters a lot for persisted data such as Pi session logs. They are opened, branched, compacted, exported, shared, and analyzed. The goal here is to never write bad session data. Yet if you just let the clanker roam freely, it will attempt to handle every case of bad data in the session log with a more permissive reader.
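A minimal sketch of what "make the bad state impossible" can look like. Everything here is hypothetical and is not Pi's actual session format: the `SessionLog` class, the one-JSON-object-per-line layout, and the invariant itself (unique ids, and a parent that must already exist) are assumptions chosen only to illustrate the idea. The checks live on the write path, and the reader replays entries through the same checks instead of repairing them.

```python
import json
from dataclasses import dataclass, field
from typing import Optional


class InvariantError(ValueError):
    """Raised when an entry would break a log invariant."""


@dataclass
class SessionLog:
    # Hypothetical invariant: ids are unique and a parent must already exist.
    entries: list = field(default_factory=list)
    _ids: set = field(default_factory=set)

    def append(self, entry_id: str, parent_id: Optional[str], payload: dict) -> None:
        # Validate before storing, so bad data is never persisted.
        if entry_id in self._ids:
            raise InvariantError(f"duplicate entry id: {entry_id}")
        if parent_id is not None and parent_id not in self._ids:
            raise InvariantError(f"unknown parent id: {parent_id}")
        self._ids.add(entry_id)
        self.entries.append({"id": entry_id, "parent": parent_id, "payload": payload})

    def dump(self) -> str:
        # Serialize as one JSON object per line.
        return "\n".join(json.dumps(entry) for entry in self.entries)


def load(text: str) -> SessionLog:
    # Strict reader: replay every entry through append() and fail loudly
    # on a malformed log instead of guessing at a repair.
    log = SessionLog()
    for line in text.splitlines():
        raw = json.loads(line)
        log.append(raw["id"], raw["parent"], raw["payload"])
    return log
```

The design choice is that there is exactly one place where the invariant is enforced. A permissive reader would instead need a fallback for every way the data could be wrong, which is where the extra complexity comes from.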

I have complained about this plenty, but working on Pi’s code base continues to reinforce the point. This is one of the ways LLM authored code grows so much needless complexity. All these models see a local failure and try to locally defend against it. As maintainers we have to keep pulling the conversation back to the global invariant, which is harder than it should be, and it’s laborious.

Then there is the issue of volume. The tracker is receiving a lot of issues and PRs, and a significant fraction of them are clearly LLM-assisted. Some are good, none are excellent, and most are just bad. The total throughput is a maintenance problem by itself.

As you might know, Pi’s issue tracker is automated to close all issues and pull requests from new contributors, and there is a manual process by which we might reopen some of them or approve individuals. So auto-close -> reopen -> close again is an interesting statistic for us to look at.

While writing this, I pulled the public GitHub tracker data for the last 90 days. Excluding Earendil members, that leaves 3,145 external issues and pull requests. Of those, 2,504 were auto-closed because they were from non-approved individuals. 17% were re-opened but that somewhat undercounts issues, because some remain closed while we still fix them. If we also count issues referenced by a main-branch commit or merged pull request that number rises to 26%. For pull requests the number is worse: 60 of 714 auto-closed PRs were ultimately merged, or about 8%.
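The ratios behind those counts can be checked with a few lines. Only the four counts come from the text; the variable names are made up for this sketch, and the 17% and 26% figures are left out because their exact denominator is not stated.

```python
# Counts quoted in the paragraph above (last 90 days, external contributors).
external_total = 3145   # external issues and pull requests
auto_closed = 2504      # auto-closed because the author was not approved
auto_closed_prs = 714   # auto-closed pull requests
merged_prs = 60         # auto-closed pull requests that were later merged


def pct(part: int, whole: int) -> float:
    """Return part/whole as a percentage rounded to one decimal place."""
    return round(100 * part / whole, 1)


auto_closed_share = pct(auto_closed, external_total)  # 79.6
pr_merge_rate = pct(merged_prs, auto_closed_prs)      # 8.4
```

So roughly four out of five external submissions were auto-closed, and the "about 8%" merge rate for auto-closed PRs is 8.4% before rounding.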

Many of the issues and PRs are complete slop and in some cases the humans did not even realize that they created them. Sources of low-quality spam include OpenClaw instances, as well as some skills that people put into their context that seemingly encourage issue creation.

GitHub clearly is not built to deal with this new form of Open Source, but I’m increasingly feeling the need to put the blame less on GitHub than on all the people involved who make that experience painful. If your clanker shits on someone else’s issue tracker then it’s not the fault of GitHub, it’s yours alone.

Pi might be built with Pi, but we’re quite far off today from where Bun and OpenClaw already are: fully detached, automated software engineering. Maybe we will reach that point, I don’t know. Today it does not seem like we know how to pull off a dark factory and we also don’t yet have the desire. That said, there is quite a bit of parallelism going on, and it is mostly for reproducing issues.

The small setup we use for this is three tiny pieces in Pi’s own committed .pi folder. /is (for analyze issue) is a prompt for analyzing GitHub issues: it labels and assigns the issue, reads the full thread and links, then explicitly tells the agent not to trust the analysis in the issue and to derive its own diagnosis from the code. Then an extension adds a prompt-url-widget which watches the prompt before the agent starts, recognizes the GitHub issue or PR URL that /is (or the PR equivalent) put into the prompt, fetches the title and author with gh, renders that in a little UI widget, and renames the session. It also rebuilds that state on session start or session switch, so if we reopen an older investigation the window still tells the developer which issue it belongs to. In practice this means it’s possible to have several Pi windows open, each running /is against a different issue, and the UI keeps the investigations visually distinct while the agents do their independent reproduction and code reading. Once the investigations are done, one can work through them sequentially. To finish off everything, /wr (wrap it up) is the matching wrap-up prompt: it infers the GitHub context from the session, updates the changelog, drafts or posts the final issue comment with a disclaimer, commits only the files changed in that session, adds the appropriate closes #... when there is exactly one issue, and pushes from main.
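The URL-recognition step of such a widget can be sketched as follows. This is not the actual extension code: the function names, the regular expression, and the title format are assumptions made for illustration, and the step that fetches the title and author with gh is left out on purpose.

```python
import re
from typing import NamedTuple, Optional

# Matches https://github.com/<owner>/<repo>/issues/<n> and .../pull/<n>.
GITHUB_REF = re.compile(
    r"https://github\.com/(?P<owner>[\w.-]+)/(?P<repo>[\w.-]+)"
    r"/(?P<kind>issues|pull)/(?P<number>\d+)"
)


class GitHubRef(NamedTuple):
    owner: str
    repo: str
    kind: str    # "issue" or "pr"
    number: int


def find_github_ref(prompt: str) -> Optional[GitHubRef]:
    """Return the first GitHub issue or PR reference in the prompt, if any."""
    match = GITHUB_REF.search(prompt)
    if match is None:
        return None
    kind = "issue" if match["kind"] == "issues" else "pr"
    return GitHubRef(match["owner"], match["repo"], kind, int(match["number"]))


def session_title(ref: GitHubRef) -> str:
    """Build a short label that keeps parallel investigations distinguishable."""
    label = "PR" if ref.kind == "pr" else "Issue"
    return f"{label} #{ref.number} ({ref.owner}/{ref.repo})"
```

With a made-up prompt such as `/is https://github.com/example/project/issues/123`, `find_github_ref` yields a reference to issue 123 in `example/project`, and `session_title` turns that into `Issue #123 (example/project)`.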

You will have noticed this already but Open Source in a post-AI world is under a strange new pressure. We are getting more code, more projects, and more issues. Projects appear with no real users, or a temporary audience of one, and even projects with thousands of stars can have a shelf life of weeks.

For us, Pi’s harness layer is worth maintaining carefully because it solves hard coordination problems and creates a platform we and others can build on. We also know that coordination and cooperation lift us all up. Many times the right answer is not to work around a problem locally, but to make the upstream behavior correct. Mario has been very good at refusing to make Pi paper over every misconfigured gateway, and we’re trying to preserve that discipline. When a gateway behaves correctly, everybody benefits.

Sadly that type of thinking is quickly disappearing because these machines make local workarounds cheap, so code accumulates local defenses against every misbehavior. Instead of humans talking to humans about where a fix belongs, one human and one machine work around the problem in isolation.

Keep in mind that AI has not increased the number of people who need software, or the number of maintainers who can review it. It has mostly increased the amount of code and the number of projects competing for attention. Some of that is healthy, but a lot of it fragments effort that should be shared.

We need stronger foundations, not weaker ones. Open Source needs more collaboration, not more isolated work with a machine. Human communication is hard, and it is tempting to avoid it when you can sit alone with your clanker. But isolation is not where Open Source derives its value. The value is in the community and the structure that lets projects outlive their original creators.

2026-05-08 08:00:00

I really, really want local models to work.

I want them to work in the very practical sense that I can open my coding agent, pick a local model, and get something that feels competitive enough that I do not immediately switch back to a hosted API after five minutes. There are a lot of reasons why I want this, but the biggest quite frankly is that we’re so early with this stuff, and the thought of locking all the experimentation away from the average developer really upsets me.

Frustratingly, right now that is still much harder than it should be but for reasons that have little to do with the complexity of the task or the quality of the models.

We have an enormous amount of activity around local inference, which is great. We have good projects, fast kernels, and people are doing great quantization work. A lot of very smart people are making all of this better, and yet the experience for someone trying to make this work with a coding agent is worse than it has any right to be.

Putting an API key into Pi and using a hosted model is a very boring operation. You select the provider, paste the key and then you are done thinking about how to get tokens. Doing the same thing locally, even when you have a high-end Mac with a lot of memory, is a completely different experience. You choose an inference engine, then a model, then a quantization, then a template, then a context size, then you’ve got to throw a bunch of JSON configs into different parts of the stack and then you discover that one of those choices quietly made the model worse or that something just does not work at all.

That is the gap I am interested in.

A lot of local model work optimizes for making models runnable. That is necessary, but it is not the same thing as making them feel finished. Let me give you a very basic example to illustrate this gap: tool parameter streaming.

For whatever reason, most of the stuff you run locally does not support tool parameter streaming. I cannot quite explain it, but the consequences of that are actually surprisingly significant. If you are not familiar with how these APIs work, the simplest way to think about them is that they are emitting tokens as they become available. For text that is trivial, but for tool calls that is often not done, despite the completions API supporting this. As a result you only see what edits are being done on a file once the model has finished streaming the entire tool call.

This is bad for a lot of reasons:

A dead connection is a weird connection: local models are slow, so when you don’t get any tokens for 5 minutes you can’t tell whether the connection died or simply nothing has been produced yet. This means you need to increase the inactivity timeouts to the point where they are pointless.

You won’t see what will happen: if you are somewhat hands-on, not seeing what bash invocation the system is concocting slowly in the background means potentially wasted tokens, and also means that you won’t be able to interrupt it until way too late.

It’s just not SOTA. We can do better, and we should aim for having the best possible experience. Tool parameter streaming is as important as token streaming in other places.
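To make the difference concrete, here is a small sketch of what a client does when tool parameters are streamed. The delta layout (a `tool_calls` list whose items carry an `index` and a `function` with `name` and partial `arguments`) follows the general shape of streamed chat-completions tool calls, simplified to plain dicts. The function name and the callback are assumptions for illustration and are not taken from Pi or any inference engine.

```python
import json
from typing import Callable, Iterable, List, Tuple


def stream_tool_calls(
    deltas: Iterable[dict],
    on_partial: Callable[[str, str], None],
) -> List[Tuple[str, dict]]:
    """Accumulate streamed tool-call argument fragments.

    on_partial(name, text_so_far) fires for every fragment, so a UI can show
    the call, or an operator can interrupt it, while it is still generating.
    """
    names = {}    # tool-call index -> tool name
    buffers = {}  # tool-call index -> argument text received so far
    for delta in deltas:
        for call in delta.get("tool_calls", []):
            index = call["index"]
            function = call.get("function", {})
            if "name" in function:
                names[index] = function["name"]
            buffers[index] = buffers.get(index, "") + function.get("arguments", "")
            on_partial(names.get(index, "?"), buffers[index])
    # The argument text is only guaranteed to be valid JSON once complete.
    return [(names.get(i, "?"), json.loads(buffers[i])) for i in sorted(buffers)]
```

Every fragment that arrives also doubles as a sign of life, which is what lets inactivity timeouts stay short. Without streaming, the same loop would see nothing at all until the final, complete call.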

Having a model spit out tokens doesn’t take long, but making the experience great end to end does take a lot more energy.

The local stack is fragmented across many engines and layers. There is llama.cpp, Ollama, LM Studio, MLX, Transformers, vLLM, and many other pieces depending on hardware and taste. All of these are amazing projects! The problem is not that they exist or that there are that many of them (even though, quite frankly, I’m getting big old Python packaging vibes), the problem is that for a given model, the actual behavior you get depends on a long chain of small decisions that most users just don’t have the energy for.

Did the chat template render exactly right? Are the reasoning tokens handled in the intended way? Is the tool-call format translated correctly? Is the context window real? Are the KV caches actually working for a coding agent? Did I pick the right quantized model from Hugging Face? Are you accidentally leaving a lot of performance on the table because the model is just mismatched for your hardware? Does streaming usage work across all channels? Does the model need its previous reasoning content preserved in assistant messages? Is the coding agent set up correctly for it?

You also need to install many different things in addition to just your coding agent.

All of these things matter. They matter a lot.

The result is that people try a local model and get a result that is neither a fair evaluation of the model nor a polished product experience and this results in both people dismissing local models and energy being distributed across way too many separate efforts instead of getting one effort going great end to end.

This is a terrible way to build confidence.

In line with our general “slow the fuck down” mantra, I want to reiterate once more how fast this industry is moving.

Every week there is a new model and a new vibeslopped thing. The attention immediately moves to making the next thing run instead of making one thing run really, really well in one harness. I get the excitement and dopamine hit, but it also means that too little critical mass accumulates behind any one model, hardware, inference engine, harness combo to find out how good it can really become when the entire stack is built around it.

Hosted model providers do not ship a bag of weights and ask you to figure out the rest, and we need to approach that line of thinking for local models too. I want someone to pick one model and pair it with one serving path, directly within a coding agent. Initially just for one hardware configuration, then for more. Pick a winner hard. If a tool call breaks, that is a product bug and then it’s fixed no matter where in the stack it failed. If the model’s reasoning stream is malformed, that is a product bug. If latency is much worse than it should be, that is a product bug. We need to start applying that mentality to local models too.

And not for every model! That is the point. Let’s pick one winner and polish the hell out of it. Learn what it takes to make that one configuration good, then take those learnings to the next config.

This is why I am excited about ds4.c. It’s Salvatore Sanfilippo’s deliberately narrow inference engine for DeepSeek V4 Flash on Macs with 128GB+ of RAM only. It is not a generic GGUF runner and it is not trying to be a framework. It is a model-specific native engine with a Metal path, model-specific loading, prompt rendering, KV handling, server API glue, and tests.

DeepSeek V4 Flash is a good candidate for this kind of experiment because it has a combination of properties that are unusual for local use. It is large enough to feel meaningfully different from many smaller dense models, but sparse enough that the active parameter count makes it plausible to run. It has a very large context window. Since ds4.c targets Macs and Metal only, it can move KV caches into SSDs which greatly helps the kind of workloads we expect from coding agents.

To run ds4.c you don’t need MLX, Ollama or anything else. It’s the whole package.

That made me build pi-ds4, a Pi extension that embeds the whole thing directly into Pi itself: taking what ds4 is and dogfooding the hell out of it with a coding agent and zero configuration. The question I want to answer is: how good can the local model experience become if Pi treats this as a first-class provider rather than as a pile of manual configuration?

The extension registers ds4/deepseek-v4-flash, compiles and starts ds4-server on demand, downloads and builds the runtime if needed, chooses the quantization based on the machine, keeps a lease while Pi is using it, exposes logs, and shuts the server down again through a watchdog when no clients are left. It doesn’t even give you knobs right now, because I want to figure out how to set the knobs automatically.
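The lease and watchdog behavior described above can be sketched roughly like this. It is a minimal illustration in Python and not the actual pi-ds4 implementation: the class name `LeasedServer`, the `idle_timeout` parameter and the `start`/`stop` callbacks are all hypothetical stand-ins for whatever launches and terminates the real server.

```python
import threading
import time
from typing import Callable, Optional


class LeasedServer:
    """Starts a server on the first lease and stops it once it sits idle.

    Hypothetical sketch: `start` and `stop` stand in for whatever launches
    and terminates the real server process.
    """

    def __init__(self, start: Callable[[], None], stop: Callable[[], None],
                 idle_timeout: float = 30.0) -> None:
        self._start = start
        self._stop = stop
        self._idle_timeout = idle_timeout
        self._lock = threading.Lock()
        self._leases = 0
        self._running = False
        self._idle_since: Optional[float] = None

    def acquire(self) -> None:
        """Take a lease, starting the server on demand."""
        with self._lock:
            if not self._running:
                self._start()
                self._running = True
            self._leases += 1
            self._idle_since = None

    def release(self, now: Optional[float] = None) -> None:
        """Drop a lease; the idle clock starts when the last one is gone."""
        with self._lock:
            if self._leases == 0:
                raise RuntimeError("release() without matching acquire()")
            self._leases -= 1
            if self._leases == 0:
                self._idle_since = time.monotonic() if now is None else now

    def watchdog_tick(self, now: Optional[float] = None) -> bool:
        """Stop the server if it has been idle for at least `idle_timeout`.

        Returns True if this tick stopped the server.
        """
        now = time.monotonic() if now is None else now
        with self._lock:
            idle = (self._running and self._leases == 0
                    and self._idle_since is not None
                    and now - self._idle_since >= self._idle_timeout)
            if idle:
                self._stop()
                self._running = False
                self._idle_since = None
            return idle
```

In a sketch like this, a background thread or timer would call `watchdog_tick()` periodically. The explicit `now` argument exists only so the timing logic can be exercised without sleeping.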

This is not about hiding the fact that local inference is complicated. It is about putting the complexity in one place where it can be improved, because there is a lot that we need to improve along the stack to make it work better.

I think we can do better with caching and there is probably some performance that can be gained if we all put our heads together.

The experiment I want to run is not “can a local model run?” because we already know that it can. I want to know if, for people with beefed-out Macs for a start, we can get as close as possible to the ergonomics of a hosted provider with decent tool-calling performance: how to get caches to work well, how to improve the way we expose tools in harnesses for these models, and then scale it gradually to more hardware configs and later models.

I also want everybody to have access to this. Engineers need hammers and a hammer that’s locked behind a subscription in a data center in another country does not qualify. I know that the price tag on a Mac that can run this is itself astronomical, but I think it’s likely that this will come down over time. Even worse, because of the RAM shortage, Apple currently does not even sell the Mac Studio with that much RAM. So yes, it’s a select group of people where ds4.c will start out.

But despite all of that, what matters is that a critical mass of people start to focus their efforts on a thing, tinker with it, improve it, not locked away, out in the open, and most importantly not limited by what the hyperscalers make available.

But if you have the right hardware and you care about local agents, I would love for you to try it within pi:

pi install https://github.com/mitsuhiko/pi-ds4

My hope is that this becomes a useful forcing function to really polish one coding agent experience. But really, the focal point should be ds4.c itself.

2026-05-04 08:00:00

Language is constantly evolving, particularly in some communities. Not everybody is ready for it at all times. I, for instance, cannot stand that my community is now constantly “cooking” or “cooked”, that people in it are “locked in” or “cracked.” I don’t like it, because the use of the words primarily signals membership of a group rather than one’s individuality.

But some of the changes to that language might now be coming from … machines? Or maybe not. I don’t know. I, like many others, noticed that some words keep showing up more than before, and the obvious assumption is that LLMs are at fault. What I did was take 90 days’ worth of my local coding sessions and look for medium-frequency words where their use is inflated compared to what wordfreq would assume their frequency should be. Then I looked for the more common of these words and did a Google Trends search (filtered to the US). Note that some words like “capability” are more likely going to show up in coding sessions just because of the nature of the problem, so the actual increase is much more pronounced than you would expect.

You can click through it; this is what the change over time looks like. Note that these are all words from agent output in my coding sessions that are inflated compared to historical norms:

Something is going on for sure. Google Trends, in theory, reflects words that people search for. In theory, maybe agents are doing some of the Googling, but it might just be humans Googling for stuff that is LLM-generated; I don’t know. This data set might be a complete fabrication, but for all the words I checked and selected, I also saw an increase on Google Trends.

So how did I select the words to check in the first place? First, I looked for the highest-frequency words. They were, as you would expect, things like “add”, “commit”, “patch”, etc. Then I had an LLM generate a word list of words that it thought were engineering-related, and I excluded them entirely from the list. Then I also removed the most common words to begin with. In the end, I ended up with the list above, plus some other ones that are internal project names. For instance, habitat and absurd, as well as some other internal code names, were heavily over-represented, and I had to remove those. As you can see, not entirely scientific. But of the resulting list of words with a high divergence compared to wordfreq, they all also showed spikes on Google Trends.
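The selection described above can be approximated in a few lines of Python. This is a sketch of the general idea and not the script that was actually used: the function name `inflated_words`, the thresholds and the way exclusions are passed in are made up for illustration. The `baseline` callable is an assumption too; in practice it could wrap the wordfreq package’s `word_frequency(word, "en")`.

```python
import re
from collections import Counter
from typing import Callable, Iterable


def inflated_words(
    texts: Iterable[str],
    baseline: Callable[[str], float],
    exclude: frozenset = frozenset(),
    min_count: int = 3,
    min_ratio: float = 5.0,
) -> list:
    """Return (word, ratio) pairs for words used more often than expected.

    `baseline(word)` must return the expected relative frequency of the
    word in general English (a value between 0 and 1). `exclude` holds
    words to drop up front, such as engineering terms and project names.
    """
    counts = Counter()
    for text in texts:
        counts.update(re.findall(r"[a-z]+", text.lower()))
    total = sum(counts.values())

    results = []
    for word, count in counts.items():
        if word in exclude or count < min_count:
            continue
        expected = baseline(word)
        if expected <= 0:
            continue  # unknown to the baseline, so no ratio can be computed
        ratio = (count / total) / expected
        if ratio >= min_ratio:
            results.append((word, ratio))
    # Most inflated words first
    return sorted(results, key=lambda item: item[1], reverse=True)
```

The resulting ratios are only meaningful on a reasonably large corpus. On a handful of sentences, almost every rare word looks inflated.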

There might also be explanations other than LLM generation for what is going on, but I at least found it interesting that my coding session spikes also show up as spikes on Google Trends.

The choice of words is one thing; the way in which LLMs form sentences is another. It’s not hard to spot LLM-generated text, but I’m increasingly worried that I’m starting to write like an LLM because I just read so much more LLM text. The first time I became aware of this was that I used the word “substrate” in a talk I gave earlier this year. I am not sure where I picked it up, but I really liked it for what I wanted to express and I did not want to use the word “foundation”. Since then, however, I am reading this word everywhere. This, in itself, might be a case of the Baader–Meinhof phenomenon, but you can also see from the selection above that my coding agent loves substrate more than it should, and that Google Trends shows an increase.

We have all been exposed to LLM-generated text now, but I feel like this is getting worse recently. A lot of the tweet replies I get and some of the Hacker News comments I see read like they are LLM-generated, and that includes people I know are real humans. It’s really messing with my brain because, on the one hand, I really want to tell people off for talking and writing like LLMs; on the other hand, maybe we all are increasingly actually writing and speaking like LLMs?

I was listening to a talk recording recently (which I intentionally will not link) where the speaker used the same sentence structure that is over-represented in LLM-generated text. Yes, the speaker might have used an LLM to help him generate the talk, but at the same time, the talk sounded natural. So either it was super well-rehearsed, or it was natural.

At least on Twitter, LinkedIn, and elsewhere, there is a huge desire among people to write content and be read. Shutting up is no longer an option and, as a result, people try to get reach and build their profile by engaging with anything that is popular or trending. In the same way that everybody has gazillions of Open Source projects all of a sudden, everybody has takes on everything.

My inbox is a disaster of companies sending me AI-generated nonsense and I now routinely see AI-generated blog posts (or at least ones that look like they are AI-generated) being discussed in earnest on Hacker News and elsewhere.

Genuine human discourse had already been an issue because of social media algorithms before, but now it has become incredibly toxic. As more and more people discover that they can use LLMs to optimize their following, they are entering an arms race with the algorithms and real genuine human signal is losing out quickly. There are entire companies now that just exist to automate sending LLM-generated shit and people evidently pay money for it.

If we take into account the idea that the highest-quality content should win out, then the speed element would not matter. If a human-generated comment comes in 15 minutes after a clanker-generated one, but outperforms it by being better, then this whole LLM nonsense would show up less. But I think that LLM-generated noise actually performs really well. We see this plenty with Open Source now. Someone builds an interesting project, puts it on GitHub and within hours, there are “remixes” and “reimplementations” of that codebase. Not only that, many of those forks come with sloppy marketing websites, paid-for domains, and a whole story on socials about why this is the path to take.

I have complained before that Open Source is quickly deteriorating because people now see the opportunity to build products on top of useful Open Source projects, but the underlying mechanics are the same as why we see so much LLM slop. Someone has a formed opinion (hopefully) at lunch, and then has a clanker-made post 3 minutes later. It just does not take that much time to build it. For the tweets, I think it’s worse because I suspect that some people have scripts running to mostly automate the engagement.

And surely, we should hate all of this. These low-effort posts, tweets, and Open Source projects should not make it anywhere. But they do! Whatever they play into, whether in the algorithms or with human engagement, they are not punished enough for how little effort goes into them.

That increases in speed and ease of access can turn into problems is a long-understood issue. ID cards are a very unpopular thing in the UK because the British are suspicious of misuse of a central database after what happened in Nazi Germany. Likewise the US has the Firearm Owners Protection Act from 1986, which also bans the US from creating a central database of gun owners. The gun-tracing methodologies that result from not having such a database look like something out of a Wes Anderson movie. We have known for a long time that certain things should not be easy, because of the misuse that happens.

We know it in engineering; we know it when it comes to governmental overreach. Now we are probably going to learn the same lesson in many more situations because LLMs make almost anything that involves human text much easier. This is hitting existing text-based systems quickly. Take, for instance, the EU complaints system, which is now buckling under the pressure of AI. Or take any AI-adjacent project’s issue tracker. Pi is routinely getting AI-generated issue requests, sometimes even without the knowledge of the author.

I know that’s a lot of complaining for “I am getting too many emails, shitty Twitter mentions, and GitHub issues.” I really think, though, that now that we know that it’s happening, we have to change how we interact with people who are increasingly automating themselves. Not only do they produce a lot of shitty slop that we all have to sit through; they are also influencing the world in much more insidious ways, in that they are influencing our interactions with each other. The moment I start distrusting people I otherwise trust, because they have started picking up LLM phrasing, it erodes trust all over society.

You also can’t completely ban people for bad behavior, because some of this increasingly happens accidentally. You sending Polsia spam to me? You’re dead to me. You sending me an AI-generated issue request and following up with an apology five minutes later? Well, I guess mistakes happen. Yet, in many ways, what is going on and will continue to go on is unsettling.

I recently talked with my friend Ben who said he forced someone to call him to continue a conversation because he was no longer convinced he was talking to a human.

Not all of us have been exposed to the extreme cases of this yet, but I had a handful of interactions in which I questioned reality due to the behavior of the person on the other side. I struggle with this, and I consider myself to be pretty open to new technologies and AI in particular. But how will my children react to stuff like this? My mother? I have strong doubts that technology is going to solve this for us.

The reason I don’t think technology is going to solve this for us is that while it can hide some spam and label some generated text, it won’t fix us humans. What is being damaged here are social interactions across the board: the assumption that when someone writes to you, there is a person on the other side who has put some care into the interaction. I would rather have someone ghost me or reject me than send me back some AI-generated slop.

Change has to start with awareness, and an unfortunate development is that LLMs don’t just influence the text we read; they also influence the text we write, even when we don’t use them. Given the resulting ambiguity, we need to become more aware of how easily we can turn into energy vampires when we use agents to back us up in interactions with others. Consider that every time someone reads text coming from you, they will increasingly have to make a judgment call on whether it was you, an LLM, or you and an LLM that produced the interaction. Transparency in either direction, when there is ambiguity, can go a long way.

When someone sends us undeclared slop, we need to change how we engage with them. If we care about them, we should tell them. If we don’t care about them, we should not give them visibility and not engage.

When it comes to creating platforms and interfaces where text can be submitted, we need to throw more wrenches in. The fact that it was cheap for you to produce does not make it cheap for someone else to receive, and we need to find more creative ways to increase the backpressure. GitHub, or whatever wants to replace it, will have a lot to improve here, and some of that might go against its core KPIs. More engagement is increasingly the wrong thing to look at if you want a long-term healthy platform.
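One well-known way to add this kind of backpressure is a token bucket per submitter, where newcomers refill slowly and accounts that have earned trust refill faster. The sketch below is only an illustration of that idea. The names `SubmissionBucket`, `capacity` and `refill_per_hour` are hypothetical and not taken from any existing platform.

```python
from dataclasses import dataclass


@dataclass
class SubmissionBucket:
    """Token bucket limiting how often one account may submit text."""

    capacity: float          # maximum number of saved-up submissions
    refill_per_hour: float   # could be higher for accounts with earned trust
    tokens: float = 0.0
    last_seen: float = 0.0   # timestamp in seconds

    def try_submit(self, now: float) -> bool:
        """Consume one token if available; otherwise reject the submission."""
        elapsed_hours = max(0.0, now - self.last_seen) / 3600.0
        self.tokens = min(self.capacity,
                          self.tokens + elapsed_hours * self.refill_per_hour)
        self.last_seen = now
        if self.tokens >= 1.0:
            self.tokens -= 1.0
            return True
        return False
```

The appeal of a design like this is that the cost lands on the sender: a burst of generated issues runs dry after `capacity` submissions, while someone who files one careful report a day never notices the limit.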

Whatever we can do to rate-limit social interactions is something we should try: more in-person meetings, more platforms where trust has to be earned, and maybe more acceptance that sometimes the right response is no response at all.

And as for AI assistance on this blog, I have had an AI transparency disclaimer for a while. In this particular blog post I used Pi as an agent to help me generate the dynamic visualization, and I used it to write the code to analyze and scrape Google Trends.

2026-04-28 08:00:00

GitHub was not the first home of my Open Source software. SourceForge was.

Before GitHub, I had my own Trac installation. I had Subversion repositories, tickets, tarballs, and documentation on infrastructure I controlled. Later I moved projects to Bitbucket, back when Bitbucket still felt like a serious alternative place for Open Source projects, especially for people who were not all-in on Git yet.

And then, eventually, GitHub became the place, and I moved all of it there.

It is hard for me to overstate how important GitHub became in my life. A large part of my Open Source identity formed there. Projects I worked on found users there. People found me there, and I found other people there. Many professional relationships and many friendships started because some repository, issue, pull request, or comment thread made two people aware of each other.

That is why I find what is happening to GitHub today so sad and so disappointing. I do not look at it as just the folks at Microsoft making product decisions I dislike. GitHub was part of the social infrastructure of Open Source for a very long time. For many of us, it was not merely where the code lived; it was where a large part of the community lived.

So when I think about GitHub’s decline, I also think about what came before it, and what might come after it. I have written a few times over the years about dependencies, and in particular about the problem of micro dependencies. In my mind, GitHub gave life to that phenomenon. It was something I definitely did not completely support, but it also made Open Source more inclusive. GitHub changed how Open Source feels, and later npm and other systems changed how dependencies feel. Put them together and you get a world in which publishing code is almost frictionless, consuming code is almost frictionless, and the number of projects in the world explodes.

That has many upsides. But it is worth remembering that Open Source did not always work this way.

Before GitHub, Open Source was a much smaller world. Not necessarily in the number of people who cared about it, but in the number of projects most of us could realistically depend on.

There were well-known projects, maintained over long periods of time by a comparatively small number of people. You knew the names. You knew the mailing lists. You knew who had been around for years and who had earned trust. That trust was not perfect, and the old world had plenty of gatekeeping, but reputation mattered in a very direct way. We took pride (and got frustrated) when the Debian folks came and told us our licensing stuff was murky or the copyright headers were not up to snuff, because they packaged things up.

A dependency was not just a package name. It was a project with a history, a website, a maintainer, a release process, a lot of friction, and often a place in a larger community. You did not add dependencies casually, because the act of depending on something usually meant you had to understand where it came from.

Not all of this was necessarily intentional, but because these projects were comparatively large, they also needed to bring their own infrastructure. Small projects might run on a university server, and many of them were on SourceForge, but the larger ones ran their own show. They grouped together into larger collectives to make it work.

My first Open Source projects lived on infrastructure I ran myself. There was a Trac installation, Subversion repositories, tarballs, documentation, and release files served from my own machines or from servers under my control. That was normal. If you wanted to publish software, you often also became a small-time system administrator. Georg and I ran our own collective for our Open Source projects: Pocoo. We shared server costs and the burden of maintaining Subversion and Trac, mailing lists and more.

Subversion in particular made this “running your own forge” natural. It was centralized: you needed a server, and somebody had to operate it. The project had a home, and that home was usually quite literal: a hostname, a directory, a Trac instance, a mailing list archive.

When Mercurial and Git arrived, they were philosophically the opposite. Both were distributed. Everybody could have the full repository. Everybody could have their own copy, their own branches, their own history. In principle, those distributed version control systems should have reduced the need for a single center. But despite all of this, GitHub became the center.

That is one of the great ironies of modern Open Source. The distributed version control system won, and then the world standardized on one enormous centralized service for hosting it.

It is easy now to talk only about GitHub’s failures, of which there are currently many, but that would be unfair: GitHub was, and continues to be, a tremendous gift to Open Source.

It made creating a project easy and it made discovering projects easy. It made contributing understandable to people who had never subscribed to a development mailing list in their life. It gave projects issue trackers, pull requests, release pages, wikis, organization pages, API access, webhooks, and later CI. It normalized the idea that Open Source happens in the open, with visible history and visible collaboration. And it was an excellent and reasonable default choice for a decade.

But maybe the most underappreciated thing GitHub did was archival work: GitHub became a library. It became an index of a huge part of the software commons because even abandoned projects remained findable. You could find forks, and old issues and discussions all stayed online. For all the complaints one can make about centralization, that centralization also created discoverable memory. The leaders there once cared a lot about keeping GitHub available even in countries that were sanctioned by the US.

I know what the alternative looks like, because I was living it. Some of my earliest Open Source projects are technically still on PyPI, but the actual packages are gone. The metadata points to my old server, and that server has long stopped serving those files.

That was normal before the large platforms. A personal domain expired, a VPS was shut down, a developer passed away, and with them went the services they paid for. The web was once full of little software homes, and many of them are gone 1.

The micro-dependency problem was not just that people published very small packages. The hosted infrastructure of GitHub and npm made it feel as if there was no cost to create, publish, discover, install, and depend on them.

In the pre-GitHub world, reputation and longevity were part of the dependency selection process almost by necessity, and it often required vendoring. Plenty of our early dependencies were just vendored into our own Subversion trees by default, in part because we could not even rely on other services being up when we needed them and because maintaining scripts that fetched them, in the pre-API days, was painful. The implied friction forced some reflection, and it resulted in different developer behavior. With npm-style ecosystems, the package graph can grow faster than anybody’s ability to reason about it.

The problem that this type of thinking created also meant that solutions had to be found along the way. GitHub helped compensate for the accountability problem and it helped with licensing. At one point, the newfound influx of developers and merged pull requests left a lot of open questions about what the state of licenses actually was. GitHub even attempted to rectify this with their terms of service.

The thinking for many years was that if I am going to depend on some tiny package, I at least want to see its repository. I want to see whether the maintainer exists, whether there are issues, whether there were recent changes, whether other projects use it, whether the code is what the package claims it is. GitHub became part of the system that provides trust, and more recently it has even become one of the few systems that can publish packages to npm and other registries with trusted publishing.

That means when trust in GitHub erodes, the problem is not isolated to source hosting. It affects the whole supply chain culture that formed around it.

GitHub is currently losing some of what made it feel inevitable. Maybe that’s just the life and death of large centralized platforms: they always disappoint eventually. Right now people are tired of the instability, the product churn, the Copilot AI noise, the unclear leadership, and the feeling that the platform is no longer primarily designed for the community that made it valuable.

Obviously, GitHub also finds itself in the midst of the agentic coding revolution and that causes enormous pressure on the folks over there. But the site has no leadership! It’s a miracle that things are going as well as they are.

For a while, leaving GitHub felt like a symbolic move mostly made by smaller projects or by people with strong views about software freedom. I definitely cringed when Zig moved to Codeberg! But I now see people with real weight and signal talking about leaving GitHub. The most obvious one is Mitchell Hashimoto, who announced that Ghostty will move. Where it will move is not clear, but it’s a strong signal. But there are others, too. Strudel moved to Codeberg and so did Tenacity. Will they cause enough of a shift? Probably not, but I find myself on non-GitHub properties more frequently again compared to just a year ago.

One can argue that this is good: it is healthy for Open Source to stop pretending that one company should be the default home of everything. Git itself was designed for a world with many homes.

Going back to many forges, many servers, many small homes, and many independent communities will increase decentralization, and in many ways it will force systems to adapt. This can restore autonomy and make projects less dependent on the whims of Microsoft leadership. It can also allow different communities to choose different workflows. What’s happening in Pi’s issue tracker currently is largely a result of GitHub’s product choices not working in the present-day world of Open Source. It was built for engagement, not for maintainer sanity.

It can also make the web forget again. I quite like software that forgets because it has a cleansing element. Maybe the real risk of loss will make us reflect more on actually taking advantage of a distributed version control system.

But if projects move to something more akin to self-hosted forges, to their own self-hosted Mercurial or cgit servers, we run the risk of losing things that we don’t want to lose. The code might be distributed in theory, but the social context often is not. Issues, reviews, design discussions, release notes, security advisories, and old tarballs are fragile. They disappear much more easily than we like to admit. Mailing lists, which carried a lot of this in earlier years, have not kept up with the needs of today, and are largely a user experience disaster.

As much as I like the idea of things fading out of existence, we absolutely need libraries and archives.

Regardless of whether GitHub is here to stay or projects find new homes, what I would like to see is some public, boring, well-funded archive for Open Source software. Something with the power of an endowment or public funding to keep it afloat. Something whose job is not to win the developer productivity market but just to make sure that the most important things we create do not disappear.

The bells and whistles can be someone else’s problem, but source archives, release artifacts, metadata, and enough project context to understand what happened should be preserved somewhere that is not tied to the business model or leadership mood of a single company.

GitHub accidentally became that archive because it became the center of Open Source activity. Once that no longer holds, we should not assume some magic archival function will emerge or that GitHub will continue to function as such. We have already seen what happens when project homes are just personal servers and good intentions, and we have seen what happened to Google Code and Bitbucket.

I hope GitHub recovers, I really do, in part because a lot of history lives there and because the people still working on it inherited something genuinely important. But I no longer think it is responsible to let the continued memory of Open Source depend on GitHub remaining a healthy product.

The world before GitHub had more autonomy and more loss, and in some ways, we’re probably going to move back there, at least for a while. Whatever people want to start building next should try to keep the memory and lose the dependence. It should be easier to move projects, easier to mirror their social context, easier to preserve releases, and harder for one company’s drift to become a cultural crisis for everyone else.

I do not want to go back to the old web of broken tarball links and abandoned Trac instances. I also do not want Open Source to pretend that the last twenty years were normal or permanent. GitHub wrote a remarkable chapter of Open Source, and if that chapter is ending, the next one should learn from it and also from what came before.

This is also a good reminder that we rely so very much on the Internet Archive for many projects of the time.