2026-05-22 08:00:00

It’s time to get the VMs rolling.

As stated in the intro I’m going to use Terraform to provision VMs and to configure Talos Linux. We’ll end up with this simple interface:

# Create VMs and configure Talos nodes

terraform apply

# Destroy and reset all

terraform destroy

I run these commands manually on my machine. It’s possible to add them to a CI pipeline but I like the faster feedback of running them directly.

There will also be some extra complexity and bootstrap commands as I want to use Cilium for service routing, the Container Network Interface (CNI), and in the future for the Gateway API. (Is it worth it? I don’t know, but I’m too committed to the setup to change it now.)

A quick note about the file structure I’ll use. I’ll split the repository into two folders: one for infrastructure-related files (what we’ll be doing in this post) and one for GitOps (apps and Kubernetes manifests).

├── infrastructure # All Terraform files here

│ ├── variables.auto.tfvars

│ └── talos.tf

└── gitops # GitOps using ArgoCD, setup in the future

You can split up the Terraform files however you like; Terraform automatically loads all .tf files in the directory.

I like to separate the variable assignments into their own file (Terraform loads all *.auto.tfvars files automatically) but that’s not necessary either.

To use Terraform we need to add providers. I used bpg/proxmox and siderolabs/talos for Proxmox and Talos Linux support:

terraform {

required_providers {

proxmox = {

source = "bpg/proxmox"

version = "0.95.0"

}

talos = {

source = "siderolabs/talos"

version = "0.10.1"

}

}

}

We also need to configure the Proxmox provider:

provider "proxmox" {

insecure = true

endpoint = "https://10.1.3.1:8006"

username = "root@pam"

password = "..."

}

You can (and maybe should) use an API token instead of username/password but I didn’t bother.
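For reference, a minimal sketch of the API token variant. It assumes a token has already been created in Proxmox with enough permissions; the variable name proxmox_api_token is hypothetical, and some provider features may still need extra configuration beyond a token.

```hcl
# Hypothetical variable name. The value has the form
# "user@realm!tokenid=secret" and should be kept out of git.
variable "proxmox_api_token" {
  description = "Proxmox API token in the form user@realm!tokenid=secret."
  type        = string
  sensitive   = true
}

# Use this instead of the username/password provider block, not alongside it.
provider "proxmox" {
  endpoint  = "https://10.1.3.1:8006"
  insecure  = true
  api_token = var.proxmox_api_token
}
```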

Either way it’s not good to commit credentials to git, so let’s move them to secret.auto.tfvars (add the file to .gitignore):

username = "root@pam"

password = "..."

This file gets loaded automatically, but we still need to declare the variables and reference them using var:

variable "username" {

type = string

}

variable "password" {

type = string

sensitive = true

}

provider "proxmox" {

username = var.username

password = var.password

}

I’d like to validate that the Proxmox connection works but Terraform seems to recognize that it’s not used yet, so it won’t try to connect yet. Let’s continue.

Talos Linux has an image factory where you can find images to download. It’s a helpful tool as there are many parameters you can tweak to get the image you want.

The settings I chose are:

qemu-guest-agent System extension (important for Proxmox)

(Note that iscsi-tools and util-linux-tools are also required for Longhorn.)

You’ll receive an image schematic ID, for example: ce4c980550dd2ab1b17bbf2b08801c7eb59418eafe8f279833297925d67c7515.

You can download this to Proxmox manually but Terraform can automate that.

To make it easier to update I broke it out to variables:

talos_version = "1.12.6"

talos_image_factory_id = "ce4c980550dd2ab1b17bbf2b08801c7eb59418eafe8f279833297925d67c7515"
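These assignments need matching variable declarations. A minimal sketch of what they could look like; the descriptions and the validation rule are my own assumptions (the schematic ID shown above is 64 lowercase hex characters, so the check simply guards against a bad copy-paste).

```hcl
variable "talos_version" {
  description = "Talos Linux version, without the leading v."
  type        = string
}

variable "talos_image_factory_id" {
  description = "Schematic ID from the Talos image factory."
  type        = string

  # Assumed format: 64 lowercase hexadecimal characters.
  validation {
    condition     = can(regex("^[0-9a-f]{64}$", var.talos_image_factory_id))
    error_message = "The schematic ID should be 64 lowercase hex characters."
  }
}
```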

And then reconstruct the download URL and tell Proxmox to download it like so:

resource "proxmox_virtual_environment_download_file" "talos_image" {

content_type = "iso"

datastore_id = "local"

node_name = "dorne"

url = "https://factory.talos.dev/image/${var.talos_image_factory_id}/v${var.talos_version}/nocloud-amd64.raw.zst"

decompression_algorithm = "zst"

file_name = "talos-v${var.talos_version}-nocloud-amd64.img"

overwrite = false

}

Note that datastore_id should match Proxmox storage that can contain images and node_name should match the name of the Proxmox node (Proxmox can manage multiple machines/nodes, this one is called dorne).

Now we can test that our Proxmox provider is wired correctly:

terraform init

terraform plan

If everything is okay Terraform should tell you that it wants to create a resource. Let’s execute the download:

terraform apply

And it should show up in the Proxmox GUI.

Creating a VM is straightforward but there are a few settings we need to get right. Here’s a Terraform resource that will create a Talos Linux VM:

resource "proxmox_virtual_environment_vm" "talos" {

name = "talos-cp1"

tags = ["terraform", "talos"]

node_name = "dorne"

on_boot = true

stop_on_destroy = true

agent {

enabled = true

}

disk {

datastore_id = "local-lvm"

file_id = proxmox_virtual_environment_download_file.talos_image.id

interface = "virtio0"

iothread = true

discard = "on"

size = 20

}

initialization {

datastore_id = "local-lvm"

ip_config {

ipv4 {

address = "10.1.4.10/8"

gateway = "10.0.0.1"

}

}

}

cpu {

cores = 4

type = "x86-64-v2-AES"

}

memory {

dedicated = 4 * 1024

floating = 4 * 1024

}

network_device {

bridge = "vmbr0"

}

operating_system {

type = "l26"

}

}

The VM in Proxmox will be called talos-cp1 on the dorne Proxmox node (like before).

It’s important to enable the QEMU guest agent (which we also enabled in the image factory).

Using a raw image (instead of an .iso) avoids the installation process as it directly boots from the image.

The raw image also enables cloud-init configuration (the initialization block), which allows us to set a fixed IP (10.1.4.10) and set the gateway (my router, at 10.0.0.1).

I create it with 4 CPU cores, 4GB of memory, and an OS disk size of 20 GB.

This is fine to start with but you may run out of RAM or disk size when you start adding applications (like I did). Bumping up to something like 8 GB RAM and 40 GB storage is probably a good idea.

There are some extra settings there like the cpu and operating system type that I had to have but I can’t explain why.

The above will create a single VM but for fun I wanted more.

Let’s go with 3 VMs and let’s break it out in a variable:

nodes = [

{

hostname = "talos-cp1"

ip = "10.1.4.10"

cores = 4

memory = 4 * 1024,

},

{

hostname = "talos-cp2"

ip = "10.1.4.11"

cores = 4

memory = 4 * 1024,

},

{

hostname = "talos-cp3"

ip = "10.1.4.12"

cores = 4

memory = 4 * 1024,

}

]

The declaration looks like this:

variable "nodes" {

description = "List of nodes and their configurations."

type = list(object({

hostname = string

ip = string

cores = number

memory = number

}))

}
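As a variation on the declaration above, here is a sketch that adds defaults and a couple of sanity checks. It assumes Terraform 1.3 or newer (for optional attributes with defaults); the default values mirror the ones used in this post and the validation rules are my own addition. With it, cores and memory can be left out of the nodes list unless a node should differ.

```hcl
# Alternative to the declaration above, not an addition to it.
variable "nodes" {
  description = "List of nodes and their configurations."
  type = list(object({
    hostname = string
    ip       = string
    cores    = optional(number, 4)    # assumed default: 4 cores
    memory   = optional(number, 4096) # assumed default: 4 GB, in MB
  }))

  # Hostnames become for_each keys later on, so they must be unique.
  validation {
    condition     = length(distinct(var.nodes[*].hostname)) == length(var.nodes)
    error_message = "Each node needs a unique hostname."
  }

  validation {
    condition     = length(distinct(var.nodes[*].ip)) == length(var.nodes)
    error_message = "Each node needs a unique IP address."
  }
}
```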

Looping in Terraform feels a bit weird to me as the syntax doesn’t wrap the whole resource, but it’s just a field that creates an each variable you can reference.

Something like this (leaving out unchanged fields):

resource "proxmox_virtual_environment_vm" "talos" {

for_each = { for node in var.nodes : node.hostname => node }

name = each.key

cpu {

cores = each.value.cores

type = "x86-64-v2-AES"

}

memory {

dedicated = each.value.memory

floating = each.value.memory

}

initialization {

datastore_id = "local-lvm"

ip_config {

ipv4 {

address = "${each.value.ip}/8"

gateway = "10.0.0.1"

}

}

}

# ...

}

This should now create 3 VMs, with different hostnames and IPs.
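With for_each the resource becomes a map keyed by hostname, so a single VM is referenced as proxmox_virtual_environment_vm.talos["talos-cp1"]. A small sketch of an output that lists the Proxmox VM ID for each node; the output name talos_vm_ids is hypothetical.

```hcl
# Hypothetical output: maps each hostname to the VM ID Proxmox assigned.
output "talos_vm_ids" {
  description = "Proxmox VM IDs keyed by hostname."
  value = {
    for hostname, vm in proxmox_virtual_environment_vm.talos :
    hostname => vm.vm_id
  }
}
```

After an apply, terraform output talos_vm_ids should print one entry per node.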

Now we need to configure Talos Linux.

We’ll essentially try to replicate the talosctl apply-config commands from the documentation via Terraform.

First some variables to make life a little easier:

talos_version = "1.12.6"

kubernetes_version = "1.35.2"

cluster_name = "talos-cluster"

Then we’ll need three configurations: machine secrets, client config, and the control machine config. All three nodes will be control nodes. If you want worker nodes you’ll need a separate config for those, but I’ll skip that in this post.

resource "talos_machine_secrets" "machine_secrets" {

talos_version = "v${var.talos_version}"

}

data "talos_client_configuration" "client_config" {

cluster_name = var.cluster_name

client_configuration = talos_machine_secrets.machine_secrets.client_configuration

endpoints = local.node_ips

nodes = local.node_ips

}

The client config references the machine secrets and lists the IP addresses of all nodes as well as the endpoints (the control nodes, which for me is all of them).

I use a local to collect those:

locals {

node_ips = [for node in var.nodes : node.ip]

}

The control machine config follows a similar pattern:

data "talos_machine_configuration" "control_machine_config" {

cluster_name = var.cluster_name

cluster_endpoint = local.cluster_endpoint

machine_type = "controlplane"

machine_secrets = talos_machine_secrets.machine_secrets.machine_secrets

kubernetes_version = "v${var.kubernetes_version}"

talos_version = "v${var.talos_version}"

config_patches = []

}

Of note here is cluster_endpoint, which for now points at the first control node’s IP. We’ll change it later to a Virtual IP (VIP) to avoid a single point of failure, but for now:

locals {

node_ips = [for node in var.nodes : node.ip]

primary_control_node_ip = local.node_ips[0]

cluster_endpoint = "https://${local.primary_control_node_ip}:6443"

}

What about config_patches?

They correspond to the patches you apply with talosctl patch and we need to use them for a few things.

One thing is to specify the install image so Talos pulls from the image factory during upgrades:

locals {

install_image = "factory.talos.dev/installer/${var.talos_image_factory_id}:v${var.talos_version}"

}

data "talos_machine_configuration" "control_machine_config" {

config_patches = [

yamlencode({

machine = {

install = {

disk = "/dev/vda" # virtio0 disk

image = local.install_image

}

}

})

]

}

Talos will boot fine without it but if I understand things correctly during updates it’ll then use the official upstream image and will remove any extensions (such as the QEMU agent we need).

Another thing we need to patch is to allow our control nodes to schedule workloads because we don’t have any worker nodes:

data "talos_machine_configuration" "control_machine_config" {

config_patches = [

yamlencode({

cluster = {

allowSchedulingOnControlPlanes = true

}

})

# Other patches here...

]

}

Then we’ll need to apply the configurations to our nodes:

resource "talos_machine_configuration_apply" "control_machine_config_apply" {

for_each = { for node in var.nodes : node.hostname => node }

depends_on = [proxmox_virtual_environment_vm.talos]

client_configuration = talos_machine_secrets.machine_secrets.client_configuration

machine_configuration_input = data.talos_machine_configuration.control_machine_config.machine_configuration

node = each.value.ip

}

Note how we loop through the nodes and target them individually using their IPs, and that we added a dependency to proxmox_virtual_environment_vm.talos to ensure that the VMs are created before we try to apply the configuration.

If you terraform apply this then the VMs will spin up and the nodes will leave Maintenance mode but get stuck in Booting and will print something like:

etcd is waiting to join the cluster, if this node is the first node of the cluster,

please run `talosctl bootstrap` against one of the following IPs:

[10.1.4.10]

(a bunch of other warnings and errors)

With Terraform, bootstrapping is done like this:

resource "talos_machine_bootstrap" "bootstrap" {

depends_on = [talos_machine_configuration_apply.control_machine_config_apply]

client_configuration = talos_machine_secrets.machine_secrets.client_configuration

node = local.primary_control_node_ip

endpoint = local.primary_control_node_ip

}

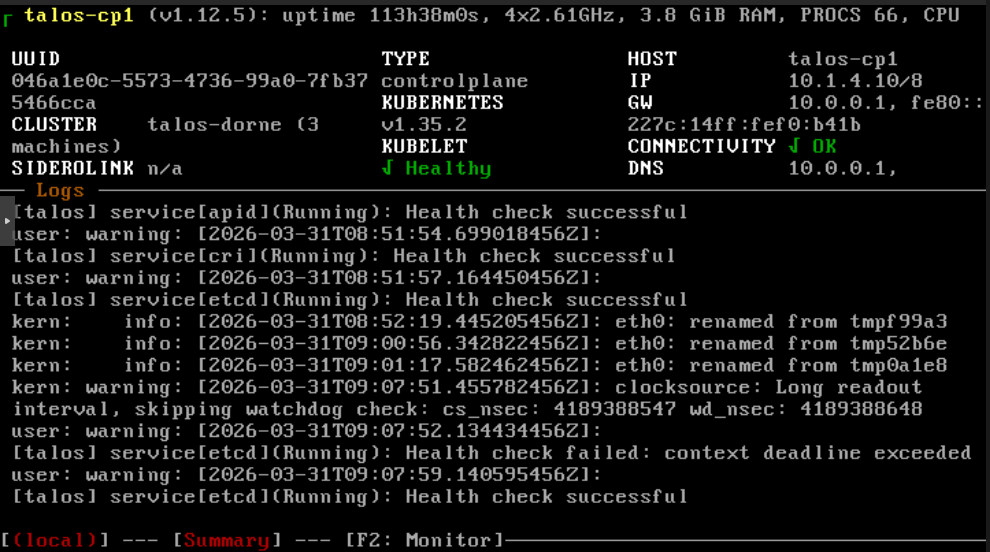

The nodes seem to be running fine and they all signal a Healthy Running state in the Proxmox console. But how do we access them?

We need the Talos and Kubernetes configuration files:

resource "talos_cluster_kubeconfig" "kubeconfig" {

depends_on = [talos_machine_bootstrap.bootstrap]

client_configuration = talos_machine_secrets.machine_secrets.client_configuration

node = local.primary_control_node_ip

}

output "talosconfig" {

value = data.talos_client_configuration.client_config.talos_config

sensitive = true

}

output "kubeconfig" {

value = resource.talos_cluster_kubeconfig.kubeconfig.kubeconfig_raw

sensitive = true

}

And generate them like so:

terraform output -raw talosconfig > talosconfig.yaml

terraform output -raw kubeconfig > kubeconfig.yaml

# Should be ok

talosctl --talosconfig ./talosconfig.yaml health -n 10.1.4.10

# Look at pods

kubectl --kubeconfig ./kubeconfig.yaml get pods -A

You can move them to ~/.talos/config and ~/.kube/config, or set TALOSCONFIG and KUBECONFIG to avoid specifying them all the time.
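If you’d rather have Terraform write the files for you, here’s a sketch using the hashicorp/local provider. This is an alternative I’m assuming would fit here, not something the setup above depends on; the resource names are hypothetical, you need to run terraform init again to install the provider, and both files should be added to .gitignore.

```hcl
# Writes the Talos client config next to the Terraform files.
resource "local_sensitive_file" "talosconfig" {
  content         = data.talos_client_configuration.client_config.talos_config
  filename        = "${path.module}/talosconfig.yaml"
  file_permission = "0600"
}

# Writes the kubeconfig once the cluster has been bootstrapped.
resource "local_sensitive_file" "kubeconfig" {
  content         = talos_cluster_kubeconfig.kubeconfig.kubeconfig_raw
  filename        = "${path.module}/kubeconfig.yaml"
  file_permission = "0600"
}
```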

At this point we have a functional cluster, but there are a few things I want to change first.

Networking, the thing that keeps your average homelabber awake at night. As if that’s not enough, in true homelabber fashion we’ll create some extra problems for ourselves just because.

I wanted to use Cilium for proxying and as the Container Network Interface (CNI), which means we have to disable the defaults on the Talos nodes. New config_patches:

data "talos_machine_configuration" "control_machine_config" {

config_patches = [

# Disables Flannel, the default CNI for Talos

yamlencode({

cluster = {

network = {

cni = {

name = "none"

}

}

}

}),

# Disables kube-proxy, the default proxy service

yamlencode({

cluster = {

proxy = {

disabled = true

}

}

})

# ...

]

}

If we rebuild the nodes we’ll see that talosctl health will stop at not ready:

waiting for all k8s nodes to report ready: some nodes are not ready: [talos-cp1-tmp talos-cp2-tmp talos-cp3-tmp]

This is to be expected as we haven’t installed Cilium yet. First we need to manually install the Gateway API CRDs, as they need to exist before we install Cilium (because we want to use it for Gateway management later as well):

kubectl apply -f https://github.com/kubernetes-sigs/gateway-api/releases/download/v1.2.1/standard-install.yaml

Then we’ll install Cilium using Helm:

helm repo add cilium https://helm.cilium.io/

helm repo update

helm install cilium cilium/cilium \

--namespace kube-system \

--version 1.19.2 \

--set kubeProxyReplacement=true \

--set k8sServiceHost=10.1.4.10 \

--set k8sServicePort=6443 \

--set l2announcements.enabled=true \

--set externalIPs.enabled=true \

--set gatewayAPI.enabled=true \

--set ipam.mode=kubernetes \

--set operator.replicas=1 \

--set securityContext.privileged=true

There are a bunch of options here, the most notable:

kubeProxyReplacement=true use it as a kube-proxy replacement.

k8sServiceHost=10.1.4.10 target the first control node.

l2announcements.enabled=true use L2 announcements to give out IP addresses.

externalIPs.enabled=true allow us to set fixed IPs manually.

gatewayAPI.enabled=true enable the Gateway API that we’ll use in later posts.

securityContext.privileged=true needed to work with Talos.

With this installed talosctl health should after a while return all OK again.

Let’s try out a good old classic to see if it works: the nginx test.

We’ll use LoadBalancer to get an external IP:

kubectl run nginx --image=nginx --port=80

kubectl expose pod nginx --type=LoadBalancer --port=80

Get the IP:

$ kubectl get svc nginx

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

nginx LoadBalancer 10.107.132.202 <pending> 80:30952/TCP 2s

Oh right, we haven’t configured load balancing for Cilium yet. Time for our first Kubernetes manifest!

apiVersion: "cilium.io/v2"

kind: CiliumLoadBalancerIPPool

metadata:

name: first-pool

spec:

blocks:

- start: 10.1.4.101

stop: 10.1.4.255

---

apiVersion: "cilium.io/v2alpha1"

kind: CiliumL2AnnouncementPolicy

metadata:

name: l2-announcement

spec:

interfaces:

- eth0

loadBalancerIPs: true

This tells Cilium to assign load balancing IPs in the range 10.1.4.101–10.1.4.255.

Apply:

kubectl apply -f cilium_config.yaml

After a while (yes, I hate waiting) kubectl get svc nginx should show an external IP that we can visit in the browser to verify that yes, we have a running app!

Mother always nagged me to clean up after myself:

kubectl delete pod nginx

kubectl delete svc nginx

Kubernetes is supposed to be a resilient thing but we’ve introduced a single point of failure by using the first control node as the endpoint. If that one node goes down then the entire cluster becomes unreachable.

We’ll fix that with a Virtual IP, where all control nodes will share a single IP. If one of them goes down then one of the others will take over. (How? Must be magic!)

Anyway, let’s designate a VIP:

cluster_vip = "10.1.4.100"

Use it for the cluster endpoint:

locals {

cluster_endpoint = "https://${var.cluster_vip}:6443"

}

And we’ll also need to patch the nodes to tell them to use the VIP:

data "talos_machine_configuration" "control_machine_config" {

config_patches = [

yamlencode({

machine = {

network = {

interfaces = [{

interface = "eth0"

vip = {

ip = var.cluster_vip

}

}]

}

}

})

]

}

For it to work we also need to specify an interface.

eth0 happened to work for me (verify with talosctl get links).

We also need to update the Cilium install parameters to target the VIP:

--set k8sServiceHost=10.1.4.100

To see that it gets assigned we can use talosctl get addresses; one of the nodes should be assigned the VIP.

If we regenerate kubeconfig it should also contain the VIP and not the node IPs, so if kubectl can reach the cluster then all is good.

One last thing I’d like to mention is how to add your own nameserver.

I’ve got my DNS overrides on my router at 10.0.0.1 that I’d like the nodes to pick up.

Here’s how to patch it, with a fallback to 1.1.1.1:

data "talos_machine_configuration" "control_machine_config" {

config_patches = [

yamlencode({

machine = {

network = {

nameservers = ["10.0.0.1", "1.1.1.1"]

}

}

})

]

}
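The patches in this post were shown one at a time, so here’s a sketch of how they could sit together in a single data block. It only reuses names that appear earlier in the post (local.cluster_endpoint, local.install_image, var.cluster_vip and friends); the grouping into three patches is my own choice, and keeping one patch per concern should work just as well.

```hcl
data "talos_machine_configuration" "control_machine_config" {
  cluster_name       = var.cluster_name
  cluster_endpoint   = local.cluster_endpoint
  machine_type       = "controlplane"
  machine_secrets    = talos_machine_secrets.machine_secrets.machine_secrets
  kubernetes_version = "v${var.kubernetes_version}"
  talos_version      = "v${var.talos_version}"

  config_patches = [
    # Install from the image factory so extensions survive upgrades.
    yamlencode({
      machine = {
        install = {
          disk  = "/dev/vda" # virtio0 disk
          image = local.install_image
        }
      }
    }),
    # Node networking: shared VIP on eth0 and custom nameservers.
    yamlencode({
      machine = {
        network = {
          nameservers = ["10.0.0.1", "1.1.1.1"]
          interfaces = [{
            interface = "eth0"
            vip = {
              ip = var.cluster_vip
            }
          }]
        }
      }
    }),
    # Cluster settings: schedule on control nodes, leave CNI and proxy to Cilium.
    yamlencode({
      cluster = {
        allowSchedulingOnControlPlanes = true
        network = {
          cni = {
            name = "none"
          }
        }
        proxy = {
          disabled = true
        }
      }
    })
  ]
}
```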

And with that we have a functional Kubernetes cluster that we can easily tear down and rebuild.

2026-05-05 08:00:00

If I had to describe my homelab setup via analogy I guess it would be similar to me on a unicycle carrying plates with both of my hands, or maybe a leaking barrel of water that I try to patch up with silver tape.

I’ve also been Kubernetes-curious so I decided to completely redesign my homelab, centered around Kubernetes. It was a bit painful but at least it fulfilled my need for procrastination very well.

I’ve got three goals with the setup:

Declarative, reproducible, and automated

The big goal is to have everything declarative in a single git repository and to easily be able to bootstrap from nothing to a fully working setup.

I want to use Infrastructure as Code to create the Kubernetes cluster and GitOps to populate it with all my services automatically from the repo.

It should be really easy to make a change; I want to move away from having to ssh into the correct repo and manually do stuff.

Backups, backups, backups

While a proper GitOps setup means that infrastructure and configuration files are inherently backed up, a proper backup setup is still crucial.

Ask me how I know.

No, please don’t.

I haven’t had a proper (as in working) backup solution for years and this time I should have it from the start.

Documentation

What if I could document my setup, so future me has a chance to understand what’s happening? Writing documentation is boring, so I’ll write some blog posts instead.

I’m a bit skeptical that I can fulfill all three goals, but if I manage 2/3 or even 1/3 it’s still a big win compared to my old setup.

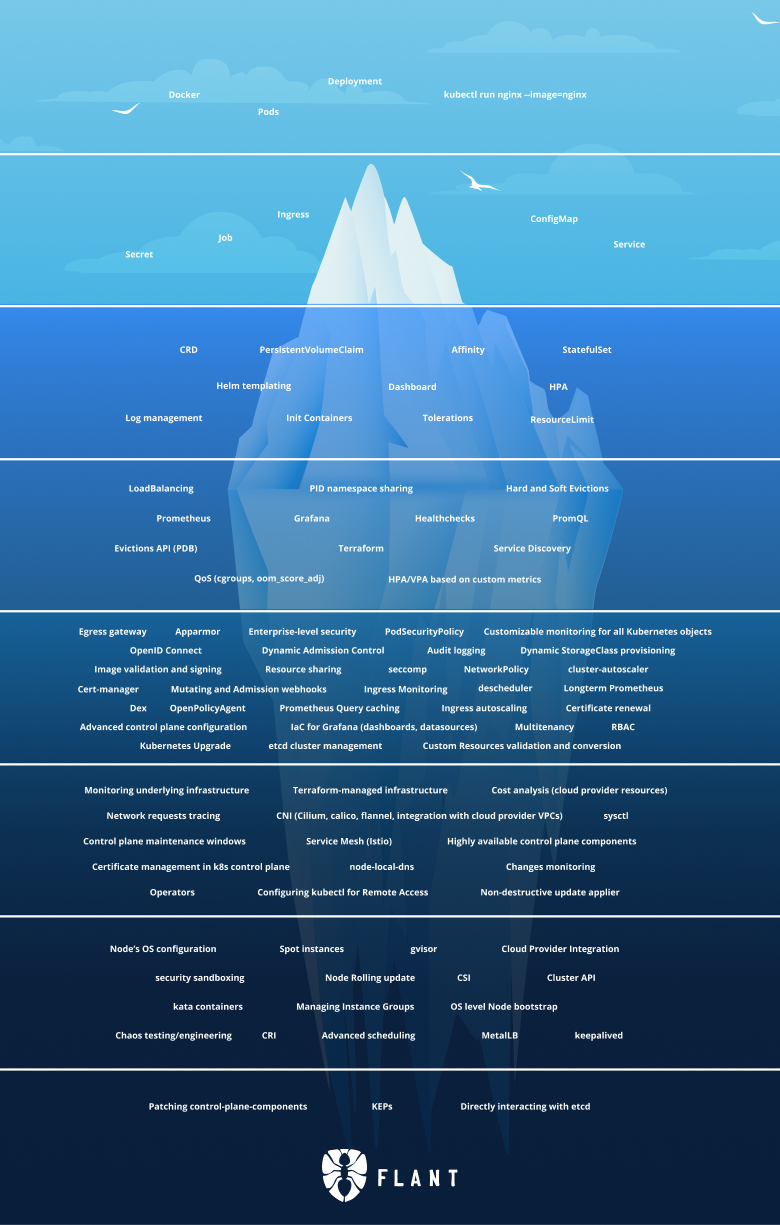

It’s a fair question and the most common critique of Kubernetes is that it’s just too complicated (especially for a homelab). Discussions online are filled with comments such as:

Kubernetes has to be most complex software I’ve ever tried to learn. I eventually gave up and decided to stick with simple single machine docker-compose deployments.

“Let’s use Kubernetes!”

Now you have 8 problems

So why would I choose Kubernetes?

Because, for whatever reason, Kubernetes is very popular and for every comment complaining about complexity you have comments extolling its virtues:

I was skeptical about Kubernetes but I now understand why it’s popular. The alternatives are all based on kludgy shell/Python scripts or proprietary cloud products.

Kubernetes is the biggest quality-of-life improvement I’ve experienced in my career

Having experienced the single machine docker-compose deployments, kludgy shell scripts, and proprietary cloud products, I think I need to use Kubernetes myself to be able to form an opinion on it.

And in some ways, isn’t experimentation a core part of homelabbing?

There are many valid tech choices for this kind of setup and many of them are reasonable. I don’t know if my choices are reasonable—most were chosen because they sounded cool, others because I just picked one.

Here’s a list of some of the choices I made, which we’ll set up in this series:

Talos Linux for Kubernetes nodes.

The coolest way to run Kubernetes. Lightweight and secure, what’s not to like?

Terraform to provision VMs on Proxmox and to initialize Talos Linux.

Cilium for proxying, CNI, load balancer, and Gateway API provider.

I opted for Cilium as it’s one dependency replacing several alternatives (such as kube-proxy, MetalLB, and Traefik, which I was leaning towards at first). Gateway API is the new thing you “should” use instead of Ingress, and I wanted to try it out.

ArgoCD for GitOps.

If it was purely for myself FluxCD might have been the better, simpler, choice but we might use ArgoCD at work and I don’t want to deal with two separate systems at the moment.

Renovate to keep dependencies up-to-date.

CloudNativePG for Postgres on Kubernetes.

I’ll also set up TimescaleDB, although we won’t use it in this series. It’s just to prepare for the future migration of long-term statistics from Home Assistant.

Longhorn on NVMe drives for persistent storage.

Sanoid, Syncoid and Kopia for backup archive management.

Backups are snapshotted and stored in ZFS, then encrypted and shipped off-site to Backblaze for storage in the cloud. Backups from Longhorn and Postgres arrive in ZFS via Garage, a self-hosted S3 service.

Authentik as an identity provider and single-sign-on platform.

It’s nice to not have to log in manually everywhere.

Huh. Displayed like this it looks like a lot, but fear not! It’ll be worth it in the end.

In the next part we’ll start by creating VMs and getting a Kubernetes cluster up and running.

2026-04-28 08:00:00

Respect your users and their confidence in you, “Microsoft” GitHub.

After years of waffling around I finally bit the bullet and migrated away from GitHub onto Codeberg and a private Forgejo instance. If Codeberg is good enough for Gentoo then it’s good enough for me.

One part of my GitHub aversion is me being against the big American tech corporations for ideological reasons. I’d like to reduce my usage of and dependence on Google/Facebook/Apple/Microsoft/Amazon etc. where I can, and moving away from GitHub fits that goal nicely.

The other reason is GitHub’s enshittification. GitHub has been slow and slightly buggy for years and it’s not getting better. They push out badly planned features while shipping this kind of code in the GitHub Actions runner:

#!/bin/bash

SECONDS=0

while [[ $SECONDS != $1 ]]; do

:

done

(This apparently broke Zig and caused them to leave for Codeberg.)

You may not like it but this is what peak vibe coding looks like

I know it’s a snarky comment, but with a CEO that says “embrace AI or get out” then it’s hard to resist.

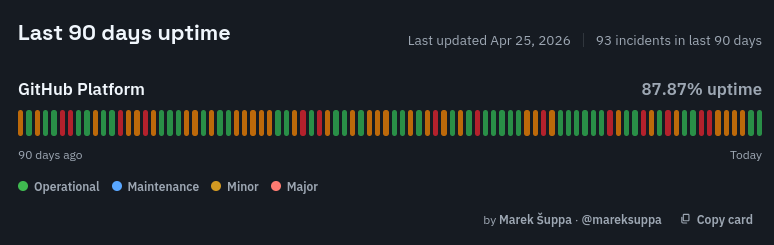

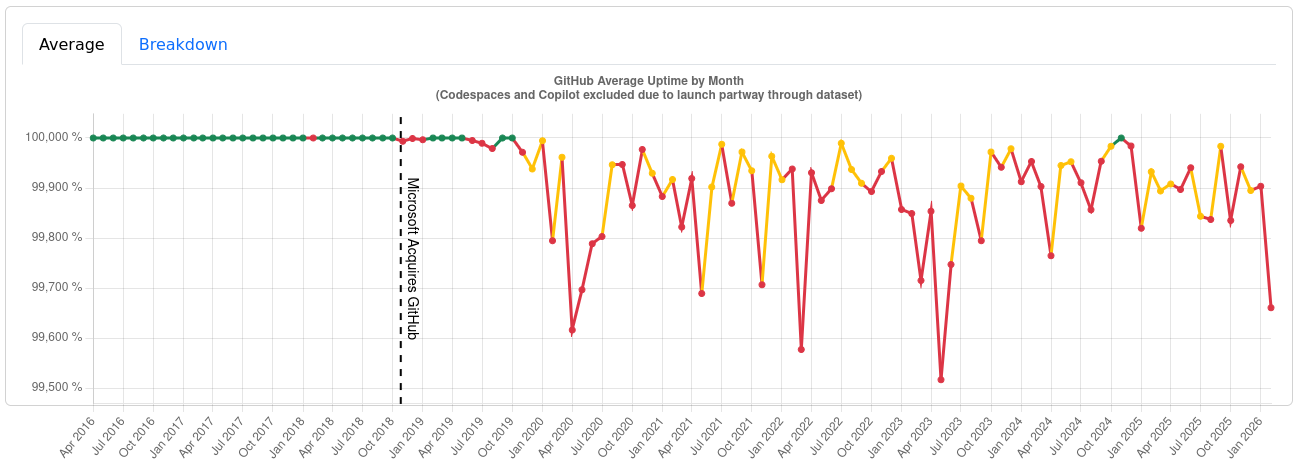

There’s empirical data to back up GitHub’s unreliability; just check out these uptime logs (taken 2026-04-27 from third party sites since the official status page predictably lies):

They don’t call it “Microslop” for nothing.

Codeberg is based on Forgejo, which is great to self-host. I’ve had it running a few weeks when I’ve been playing with my homelab and it feels exceptionally fast. The web UI is super responsive and I frequently have to double-check that I pushed as it finished so quickly.

I would love to have the speed and privacy for all my repositories but I’ve got some that I want to be public (the source for this site for example). I considered a few different setups:

Sync back changes to GitHub via Forgejo’s built-in GitHub sync?

(Keeping GitHub active would defeat the point a little though.)

Sync changes from my Forgejo instance to Codeberg?

(Maybe annoying to manage multiple repos?)

Only use Codeberg?

(I’d lose speed and privacy for my private repos.)

Expose my Forgejo instance running in my homelab?

(The internet is a scary place.)

Set up a public Forgejo on my Hetzner VPS?

(I’d still have to protect it and manage traffic.)

In the end I decided to use Codeberg for my public-facing repositories and Forgejo as my main interface (for both public and private repos).

Some of my public repos are close to read-only (this site’s source for instance) so I’ve set up a mirror where Forgejo will push changes to Codeberg automatically. However, it’s weird to also pull changes from Codeberg to Forgejo. I guess I could set up a script to do it, but pull requests from others are rare enough that I can do it manually. Other repos (such as tree-sitter-djot) are left alone as they’re more collaborative in nature and I can’t be bothered to keep two sources in sync.

Yes, both Codeberg and Forgejo are very good.

They are snappy and speedy and there are no features I miss from either GitHub or GitLab (and plenty I’m glad to avoid—getting AI shoved into every crevice for instance).

(Yes, I used an em-dash on purpose.)

At the moment Codeberg is admittedly having periods with pretty bad performance issues. This is because they’ve been under a DDoS attack for quite some time, which has been frustrating.

The migration wasn’t difficult, just a bit repetitive.

For private repositories I just deleted them from GitHub and pushed them to Forgejo.

Public repositories had a few more steps:

2026-03-10 08:00:00

Life begins at the end of your comfort zone.

I’m lucky that I have a job where I can work remotely as it allows me to live in a small community where there are no tech jobs anywhere close. It does require me to travel to the office a few weeks per year but I don’t mind that much as I appreciate minor doses of socializing occasionally.

I recently spent five nights on a trip with only a single backpack and it was a surprisingly great experience.

I’ve had these work trips for years and I didn’t put too much thought into how to travel. Like most people I simply filled a suitcase that I checked in together with a backpack that I brought on the airplane.

I didn’t quite know how much to pack so I always packed a little more than I needed. For example, if I was away 5 nights then I brought 7 pairs of underwear (in case disaster struck twice). When I returned I always had a bunch of unused clothes, but that felt better than having too little.

Because I had so much space I could bring a lot of things; my own pillow, a handful of books, gigantic boardgames, and I still had space left to bring back lots of gifts.

I’ve had two backpacks that I used to travel with:

Datsusaru Battlepack Core

It’s a really sturdy backpack that’s great to bring to BJJ training, but it’s very heavy and it’s not ideal as a traveling bag.

M-Tac Backpack Urban Line

I bought this bag recently but I wasn’t too impressed. It was too small for a traveling bag and the zipper broke after just a few trips.

I’ve had a few suitcases that broke down and whose brands I can’t remember. Most recently I’ve been using the Samsonite Essens 69cm, which has held up great so far.

I’ve been spending five nights away 4–5 times a year on business travels. It’s not a crazy amount but also not negligible, so I figured it’s worth trying to optimize them a bit.

Enter one bag travel.

While I was reasonably comfortable during my travels there are a few things that intrigued me about one bag travel:

In short, the actual trip would be more convenient and less worrisome…

If I could make it fit.

Water bottle

I later decided not to bring it.

5 pairs of socks and underwear

3 t-shirts, 1 long-sleeve t-shirt

1 slightly thicker long-sleeve shirt

Training gear for Submission Wrestling

Shorts, rashguard, spats, knee pads, and mouth guard.

1 pair of pants

Mobile phone charger

A Framework 13 laptop + charger

Laptop–headphone cable

I also brought the Sony WH-1000X M3 noise canceling headphones that I wore during the trip.

A bag for dirty clothes

Toothbrush & toothpaste

Power bank

Vitamins & medicine

Nail clippers, tape, Whoop body holder

Earbuds & sleep mask

A book—the first two books in the Murderbot Diaries series.

I saw the advice that the clothes you travel in are also very important. They have a point.

Pants with large pockets with zippers.

I could use the pants the whole week if I wanted to.

A Houdini hoodie.

Really warm and cozy, should last the whole week.

A thin jacket.

Paired with the hoodie it provides a decent enough protection against the weather. I’m not going on a hiking trip; I’ll be moving between the office, the hotel, and restaurants.

It’s also thin enough so I can fit it into the fully packed backpack.

A cap and gloves.

I’m traveling in Sweden, it’s still fairly cold here.

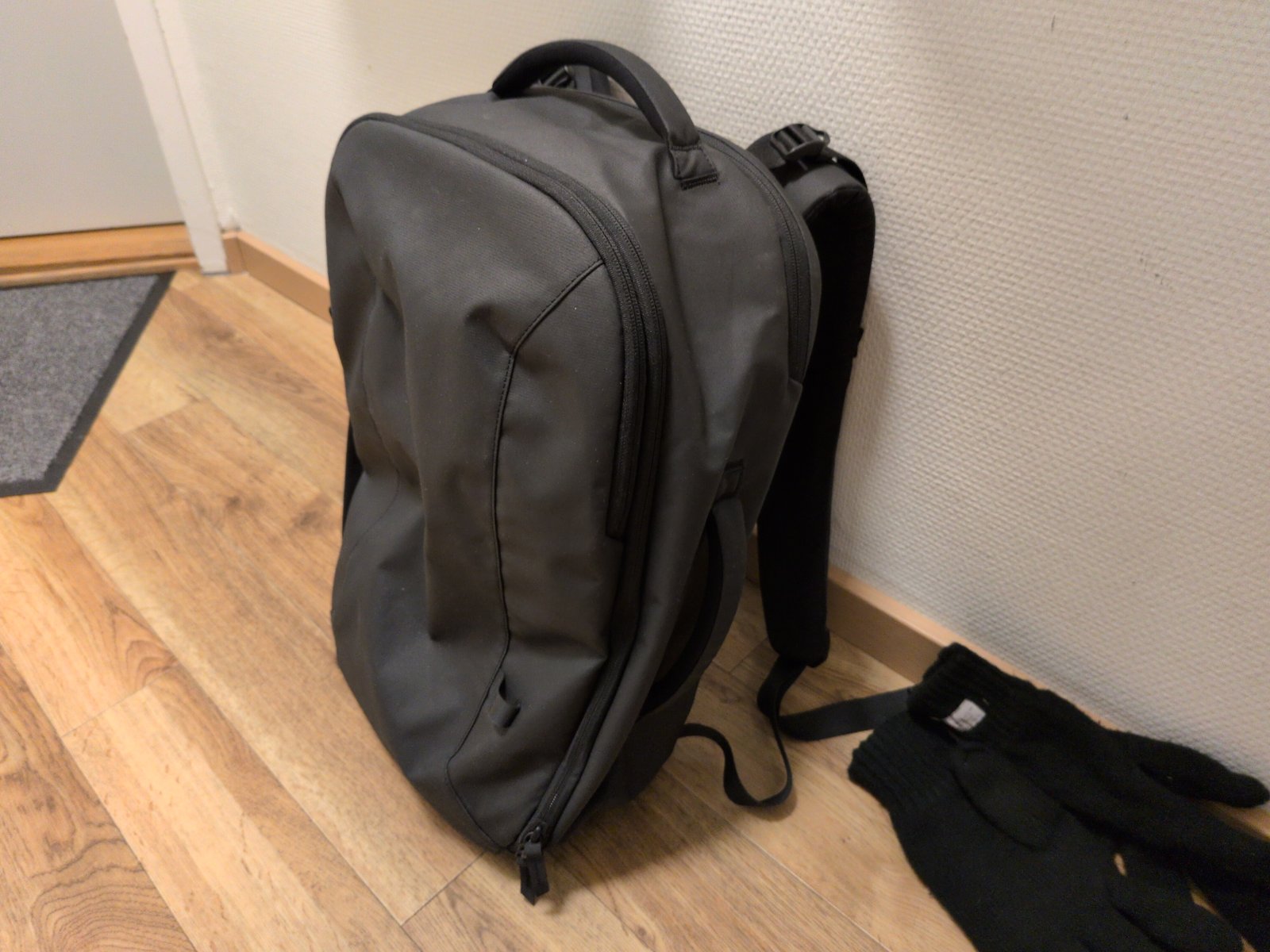

Before I could try out one bag travel I had to get a new backpack. I ended up with the Fyro Levo 30L backpack mostly because the creator had a bunch of cool videos about bags… As shipping was expensive I also got their packing cubes, the retractable key leash, and a 1L sling (that I didn’t use).

This isn’t a review of their stuff; there are probably better options out there but I’m too inexperienced to say. Maybe I should’ve gotten the 36L backpack, and the sling was an unnecessary purchase, but other than that I’ve been very happy with the Fyro products.

Will it fit?

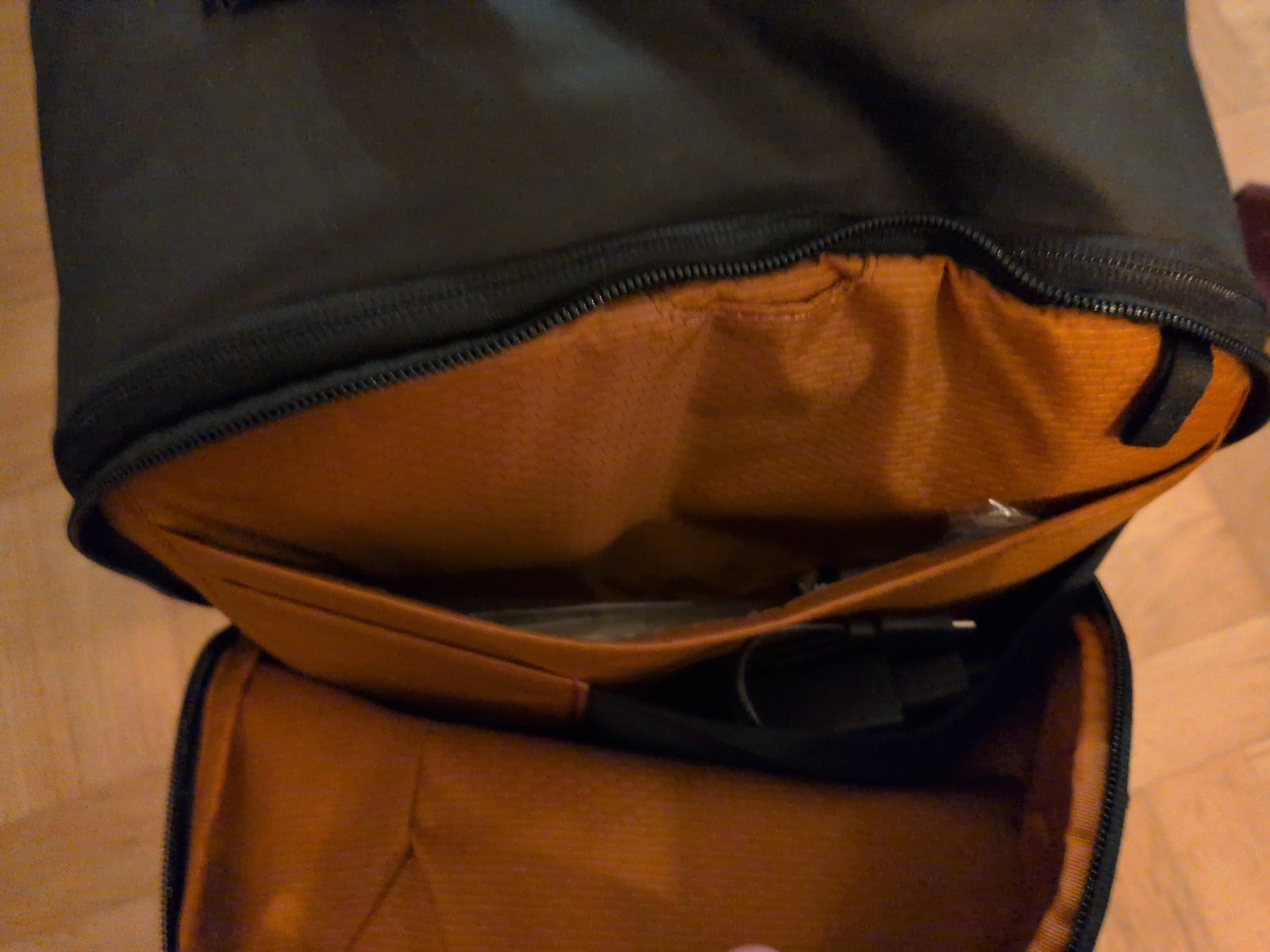

One of the biggest critiques against the bag is that the pockets here are so loose that things might fall out. When the bag is fully packed this has not been my experience; if anything it’s the reverse. Fully packed, it’s a bit too tight. But empty they’re too loose.

I did fit everything I wanted to bring. Although I skipped the water bottle it would’ve fit into the water bottle holder on the side. Maybe I’ll bring it next time.

The fully packed bag weighed in at 7.3 kg.

I do love the large front compartment.

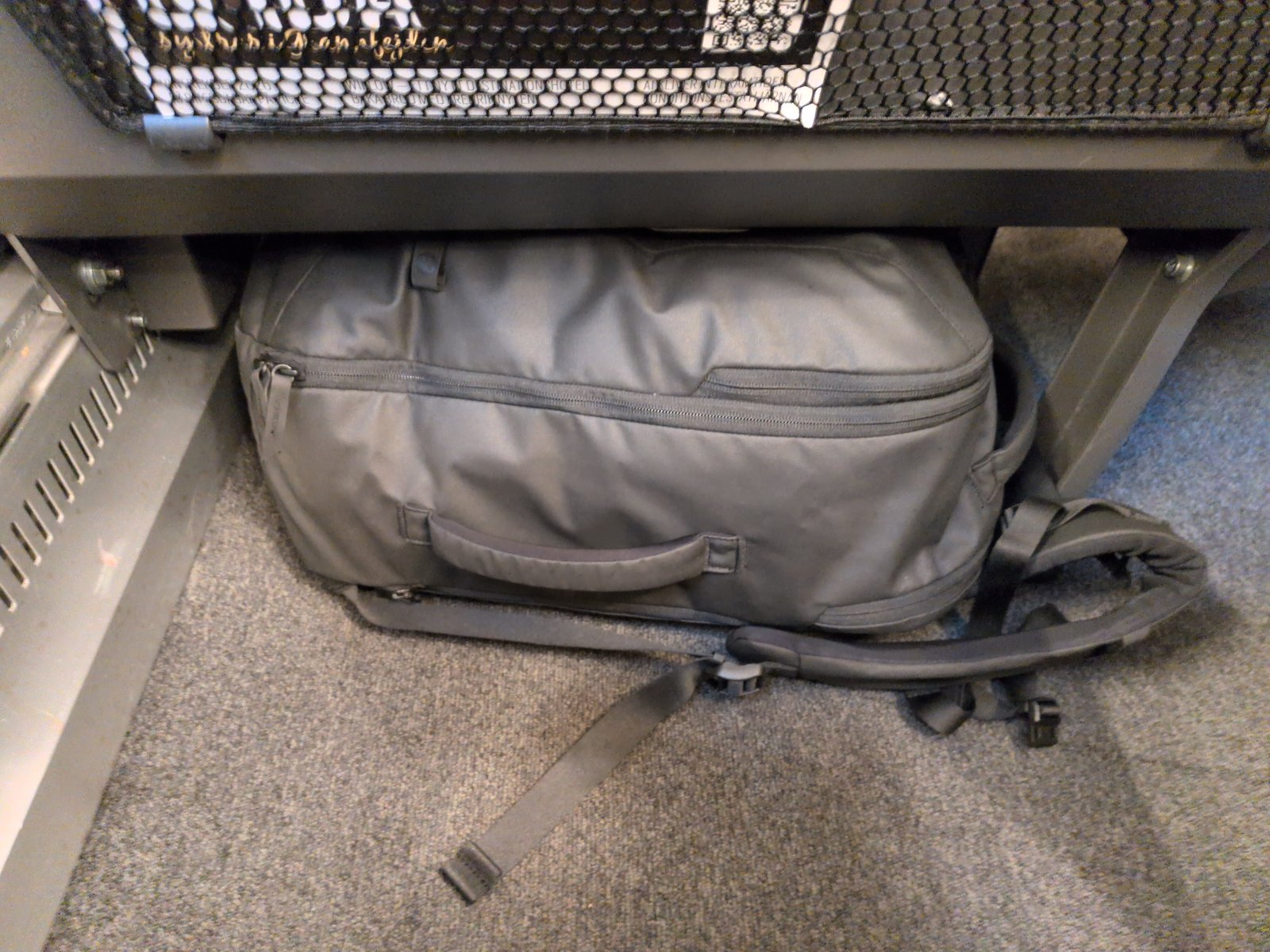

I was a bit worried that the bag wouldn’t fit under the front seat but it worked out well. I didn’t take a picture of it but it fit in the overhead compartment during the flight too.

Although the extra space of the 36L backpack would’ve been greatly appreciated I fear that it might have made the bag too large to fit under the seat. In the end I think I prefer the convenience of having the bag under the seat over the extra 6L of space.

The bag was also used to transport the laptop and other electronics between the hotel and office. I was worried that a huge bag would be a chore to bring but that’s been a non-issue. The only minor problem is that the bag doesn’t stand by itself without the packing cubes.

One’s destination is never a place, but a new way of seeing things.

Overall I’ve really enjoyed my one bag traveling experience. I managed to fit into a single bag and the trip was a lot easier and more streamlined.

There are however some downsides that bother me quite a bit:

I couldn’t bring Luthier, my new favorite boardgame on the trip.

My work trips have been one of the best ways to reduce my boardgame list-of-shame (bought and unplayed boardgames). Or to play my favorite games again.

There’s not enough space to bring back gifts, such as clothes or LEGO.

Then again, maybe I don’t need to always bring back gifts? Or maybe small gifts are good enough?

I can’t go nuts in the local book stores.

Last time I brought back five new fantasy and sci-fi books. This time I bought a small book that I could fit at the back above the laptop.

I haven’t yet decided if I shall continue with the one bag travel, or if I shall start checking in a suitcase again. Either way it’s been eye opening how much you can do with a regular backpack.

2026-01-03 08:00:00

It’s time for a yearly review again. Time flies.

I read a few books this year.

The whole Dungeon Crawler Carl series was absolutely amazing and it quickly jumped up to one of my favorite series of all time.

I can’t be held accountable for everything I’ve ever said to a stripper.

Board games are great.

I still don’t play nearly as much as I’d like to but my kids are quickly growing up and to my eternal joy they’re all interested in board games! Isidor (8) has been loving Radlands, Loke (5) likes Chronicles of Avel, and Freja (3) loves Dragon’s Breath. My personal favorite is Luthier and I think it might be my absolute favorite game right now.

Computer games have made a small comeback.

As my kids have been gaming more I’ve regained a little bit of interest in gaming too. I’ve been playing a little Core Keeper and Beyond All Reason with Isidor and it felt really fun.

Migrated my Neovim config to Fennel.

Eh, it was fun to play with a new language.

I wrote 12 blog posts, not great, not terrible.

I managed to push back my fantasy writing ambitions to the future.

It’s important as I tend to get stuck on things, preventing me from making progress in other parts. I really don’t have the time or energy to try to write a fantasy book right now, but making my brain see that wasn’t easy.

2025 was the first full year running my company.

I’m still consulting for the same company I started out with and I enjoy it. It feels good to say that I haven’t blown it completely just yet.

I took a big step forward in de-Googling and increasing my personal security by moving to GrapheneOS. I’m super happy about it.

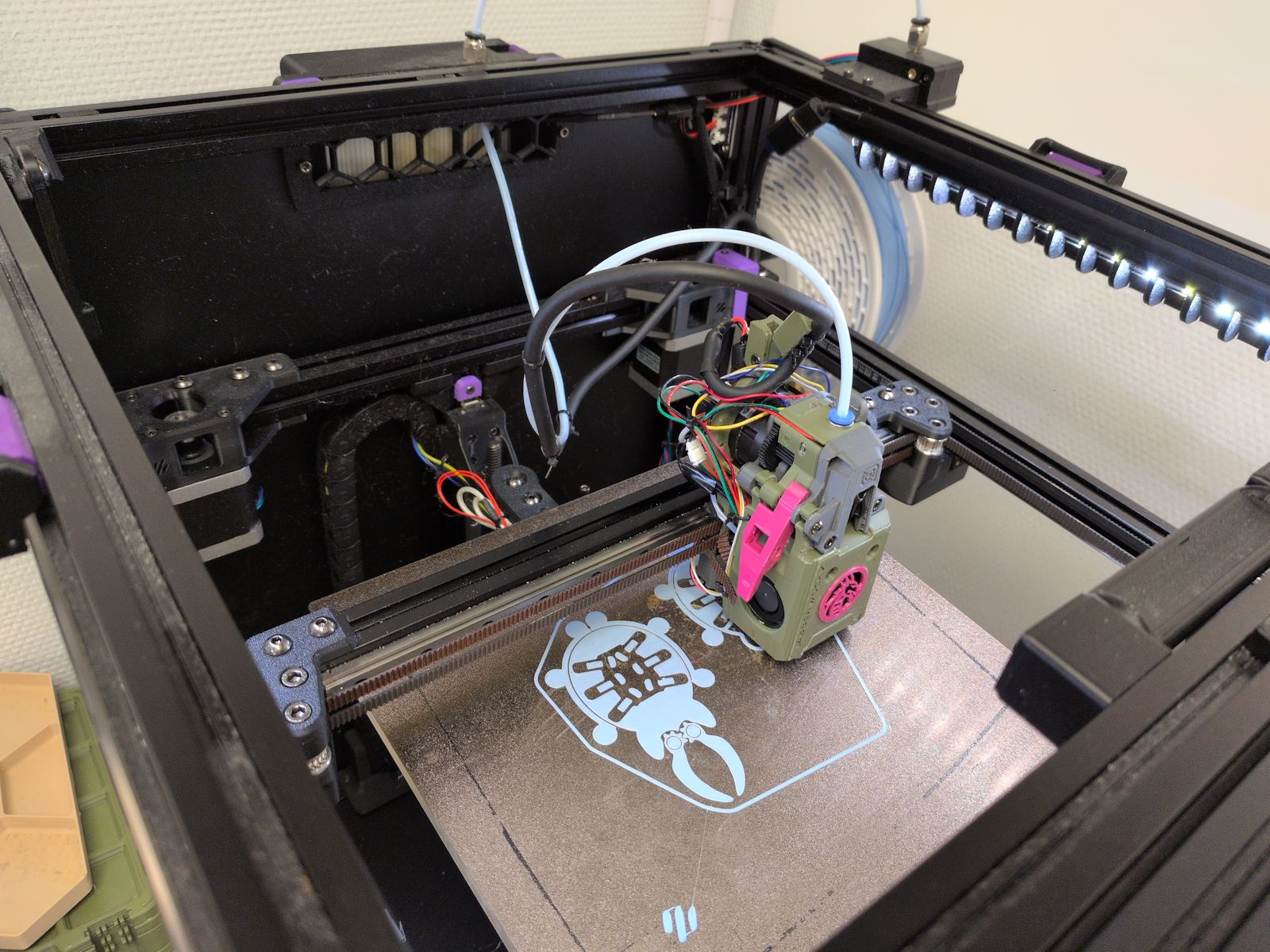

Built my second 3D-printer, a VORON 0.

It’s good! It’s fun! And I’m already planning my next printer…

We migrated away from Sonos in our Kitchen.

We got tired of buggy vendor lock-in and now we use my own Music Assistant based setup. If things break we can now blame me instead of Sonos.

I built my first server rack to host a new homelab server.

I still need to clean up the cables and write a blog post about it.

Go on some roller coasters with the family.

We’re planning to go to Liseberg in Göteborg and I know the kids will love it.

Lose weight and build muscle.

I’ve had a rough year training and health wise. Not being able to get into a consistent strength training routine, inconsistent sleep, and bouts of depression have made me gain too much weight again.

Getting it sorted should (must) be a high priority this year.

Get our own training space for our martial arts club.

I’ve been wanting to get our own place for quite a while and I feel that we need to make it happen sooner rather than later.

Develop a company product MVP.

While I enjoy the consulting work I do at the moment my goal is to one day have a project of my own that can earn me money. I have a couple of ideas I want to pursue and I was going to do it in 2025 but I didn’t get far enough for that.

Migrate my homelab to the recently built server and expand on it.

I have this newly built server that’s just sitting idle. I need to migrate my old services to it and I’ve got ideas for lots of stuff I want to put there.

2025-12-02 08:00:00

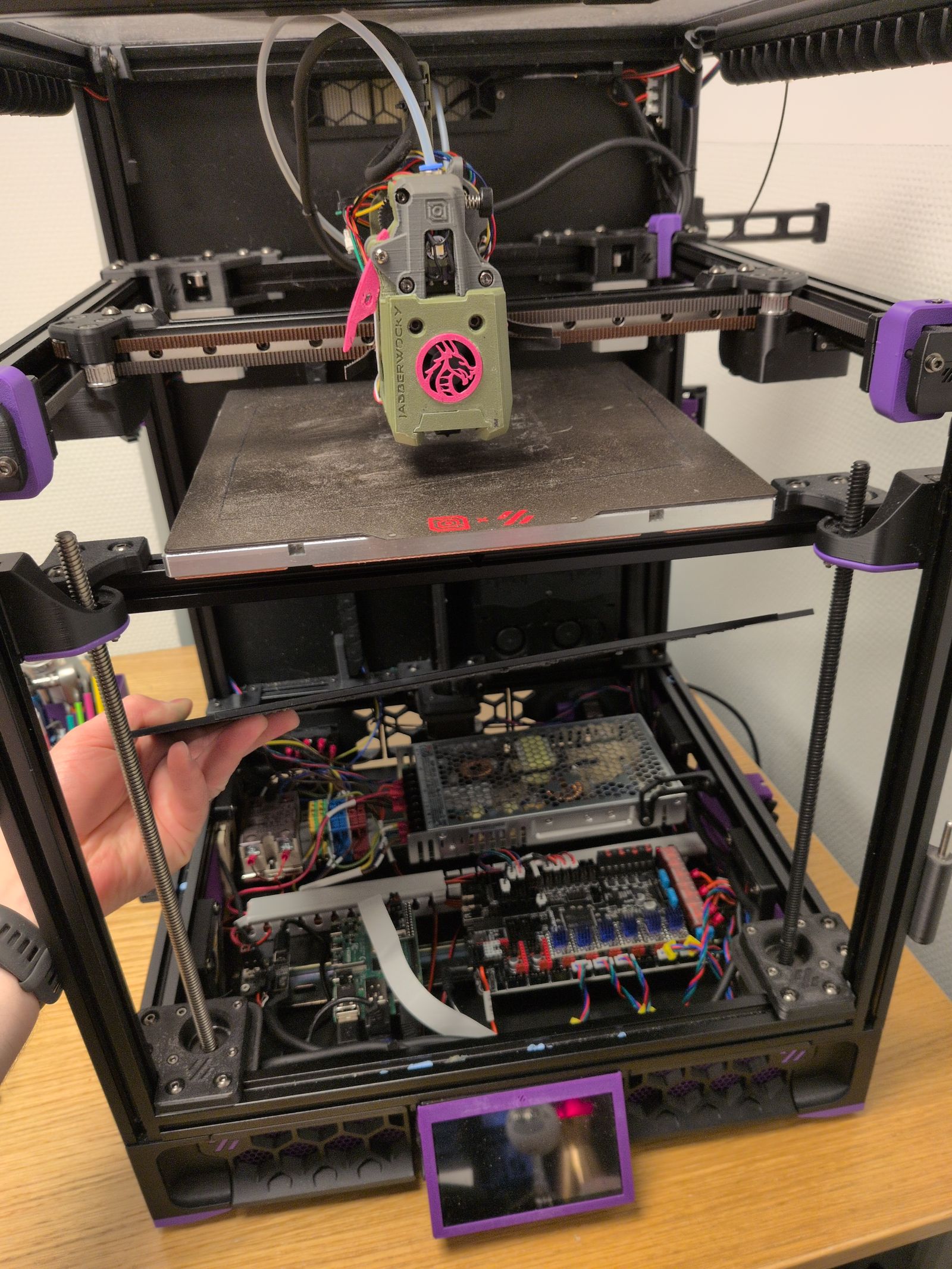

I’ve had my VORON Trident for 2 years and I’ve run it for 2600 hours. Overall I’m happy with the printer but I’ve been itching to make some more mods to it. Having finally finished the VORON 0 (with mods) I now have a backup printer I can use to rescue myself when I screw up.

The printer was starting to crap out: a leadscrew was grinding down again, the chamber thermistor had stopped working, and PLA kept clogging up the Rapido hotend. It was time for a bit of a rebuild.

Besides fixing the printer I also wanted to prepare for a multi-color solution such as the Box Turtle and make some quality of life changes.

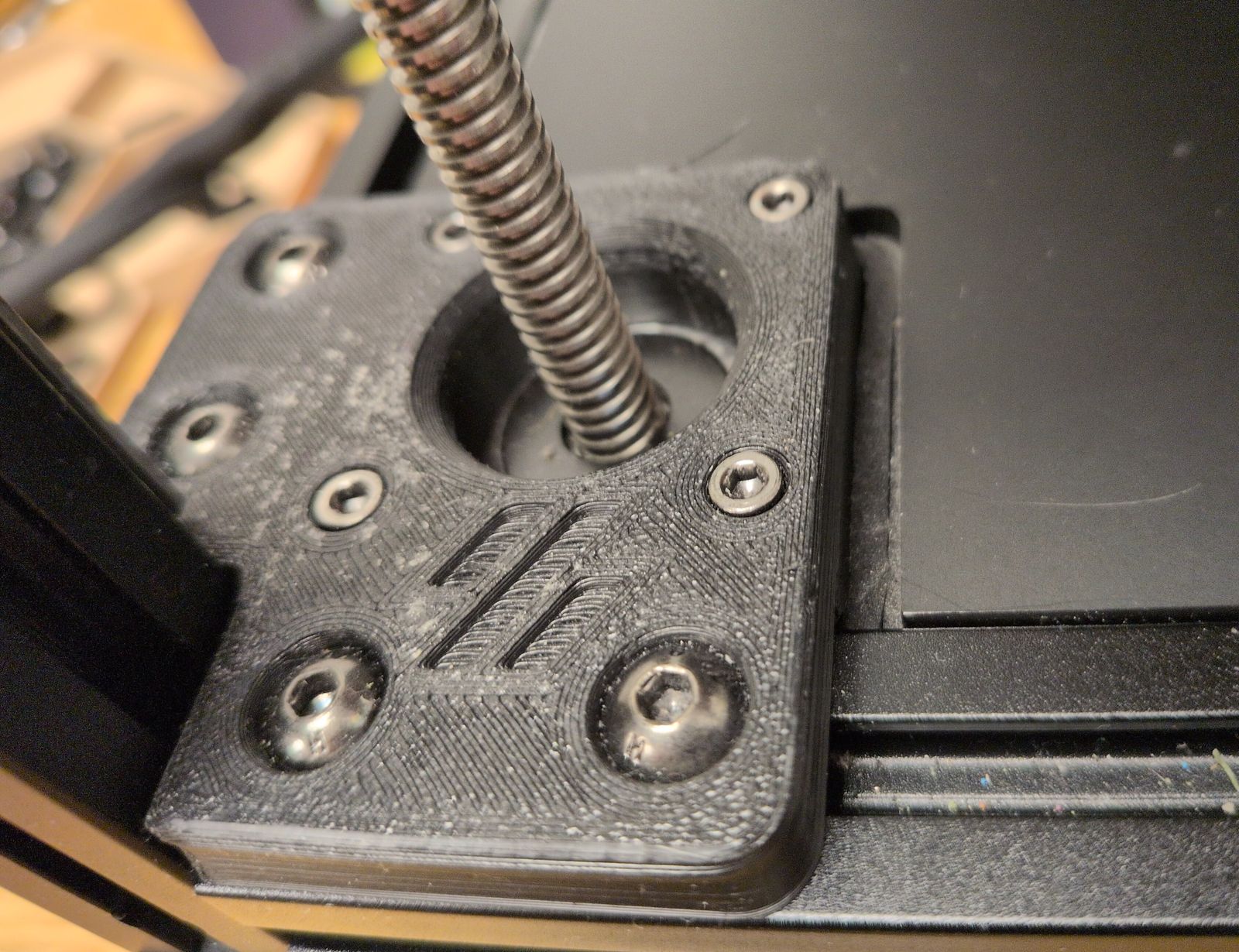

Replace the problematic leadscrew with a replacement part I received from LDO and replace the POM nuts on the other leadscrews.

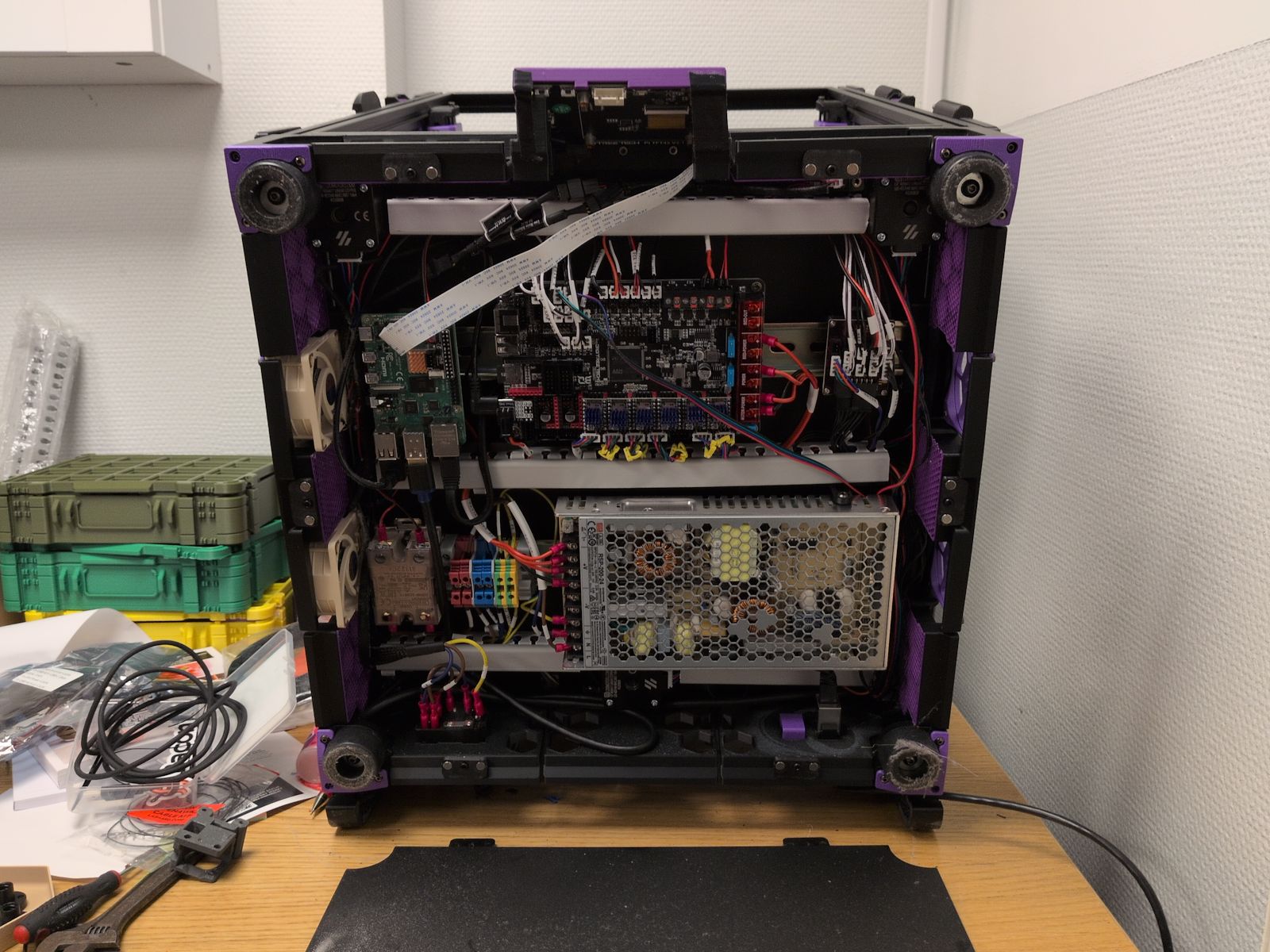

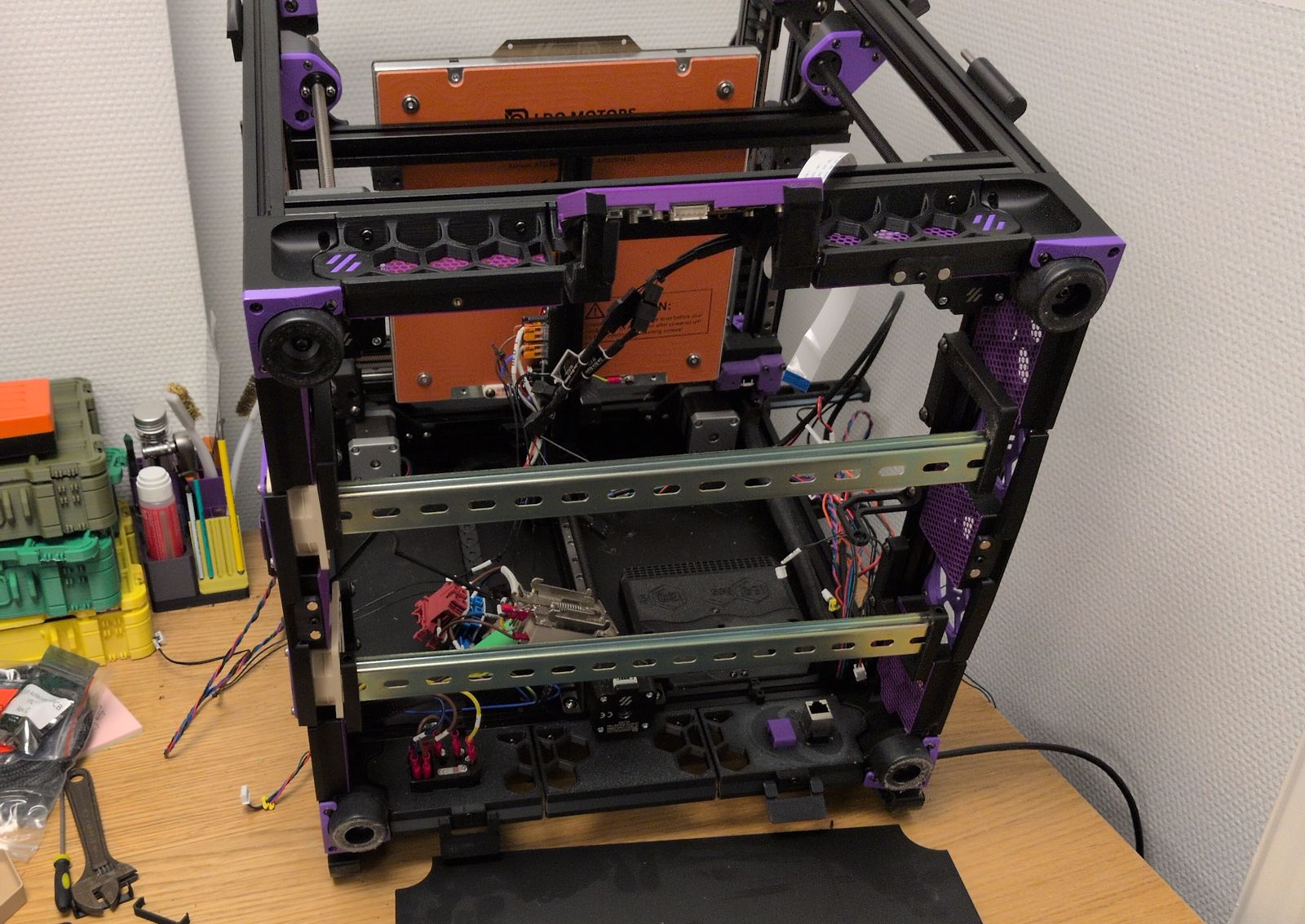

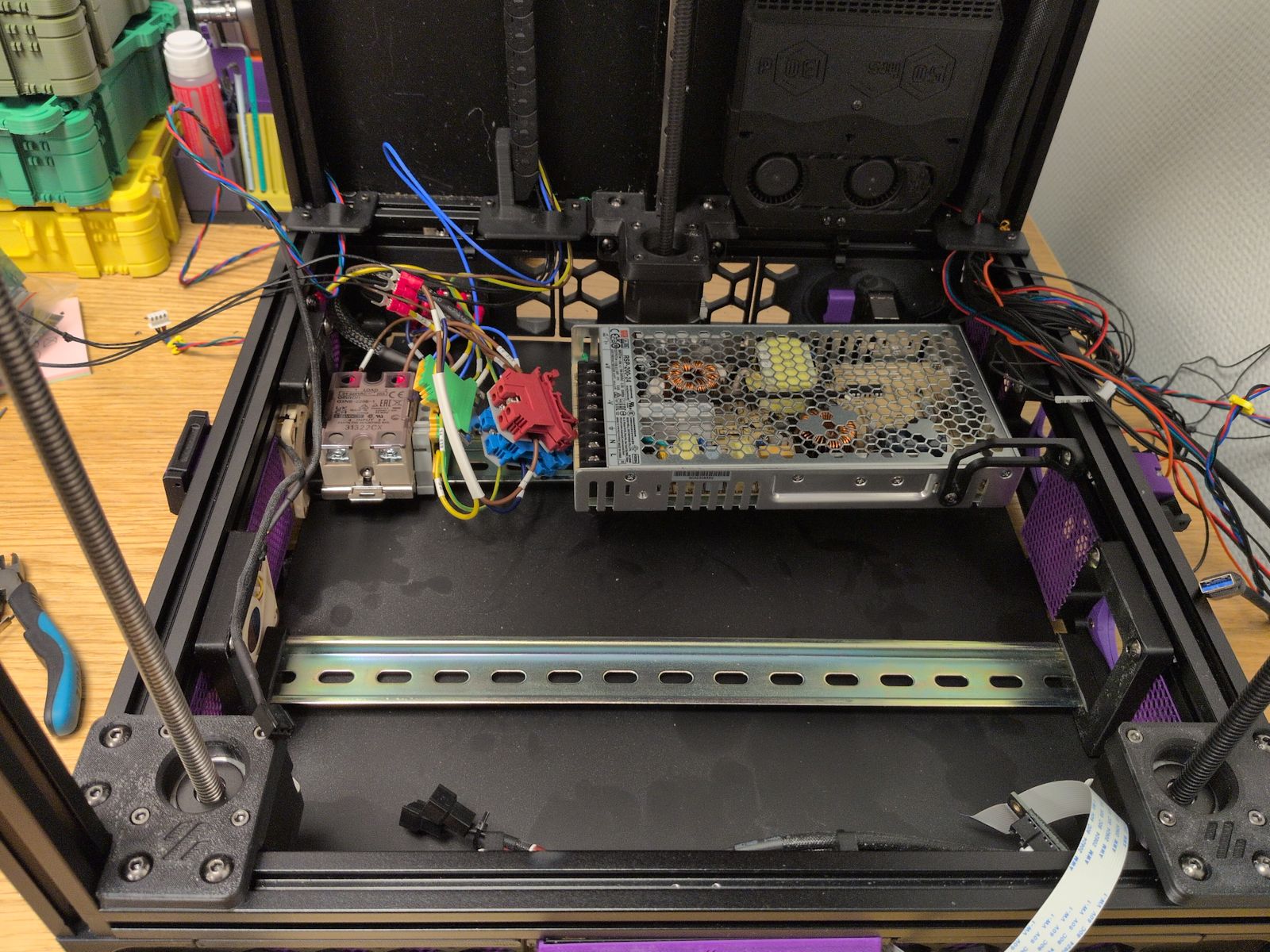

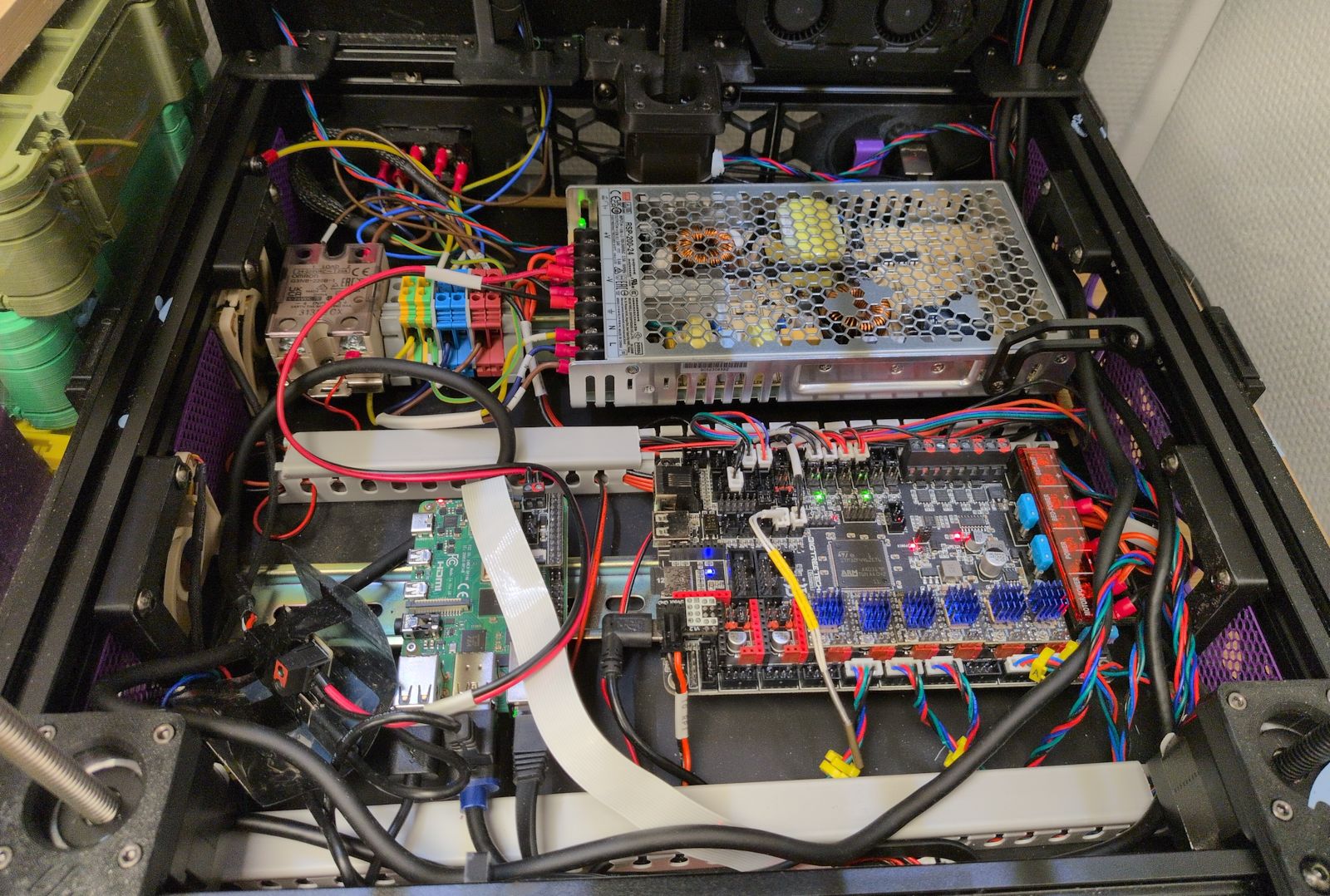

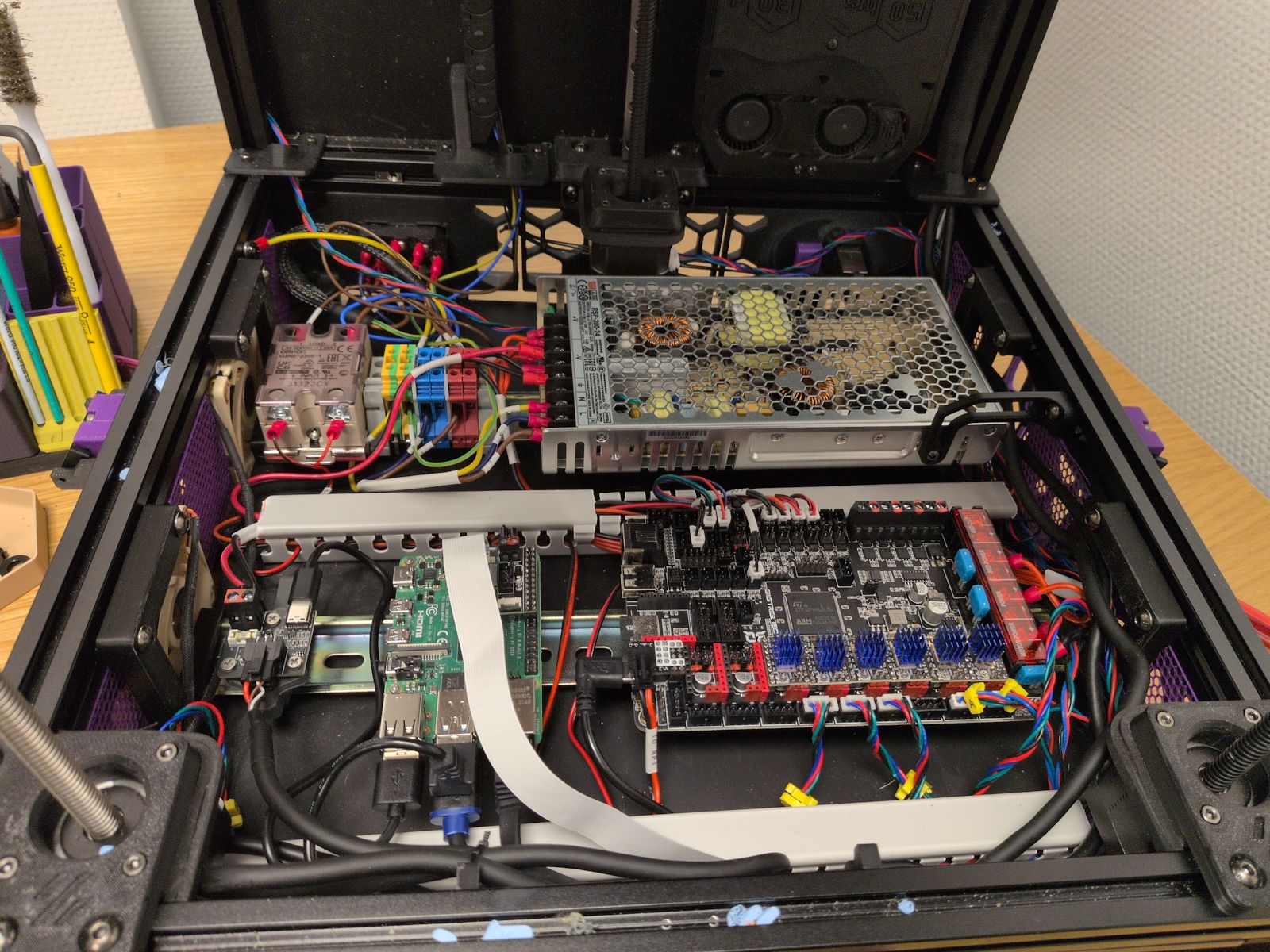

Install the Inverted electronics mod.

I’ve been using the RockNRoll mod to give access to the electronics compartment by tilting the printer backwards. The Inverted electronics mod would instead allow me to lift the bottom plate to access the electronics compartment and I want to do it before installing a Box Turtle on top of the printer.

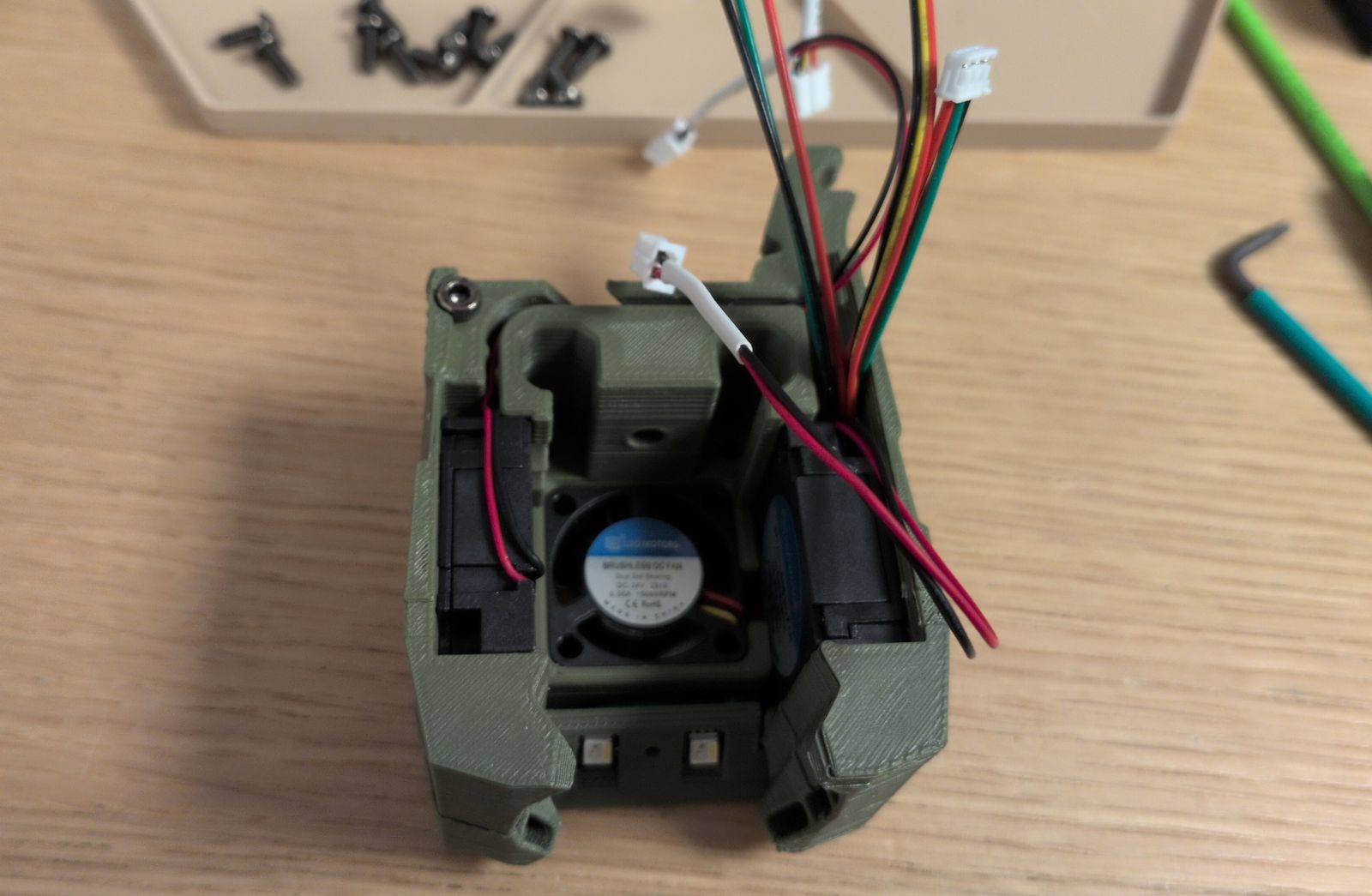

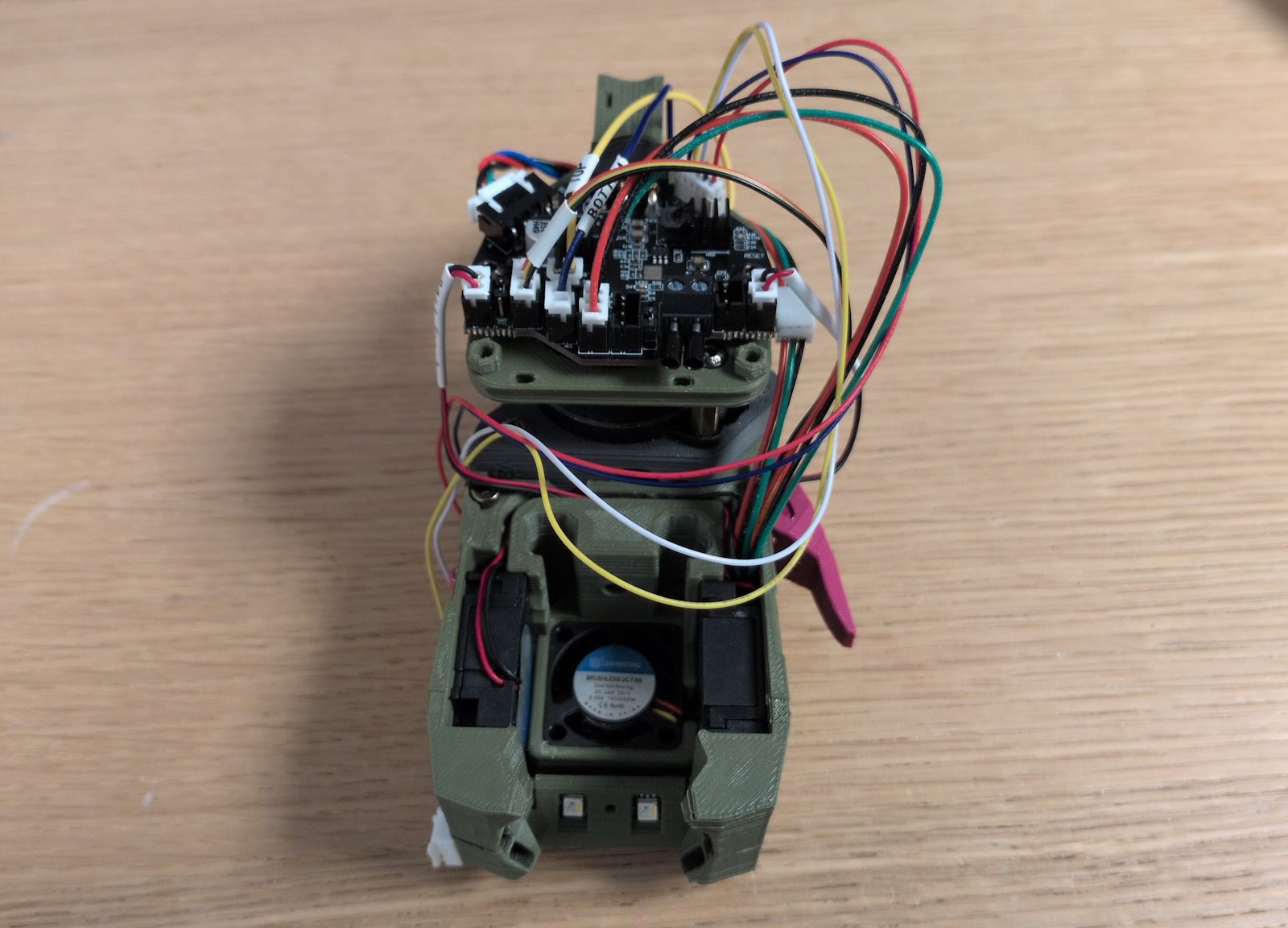

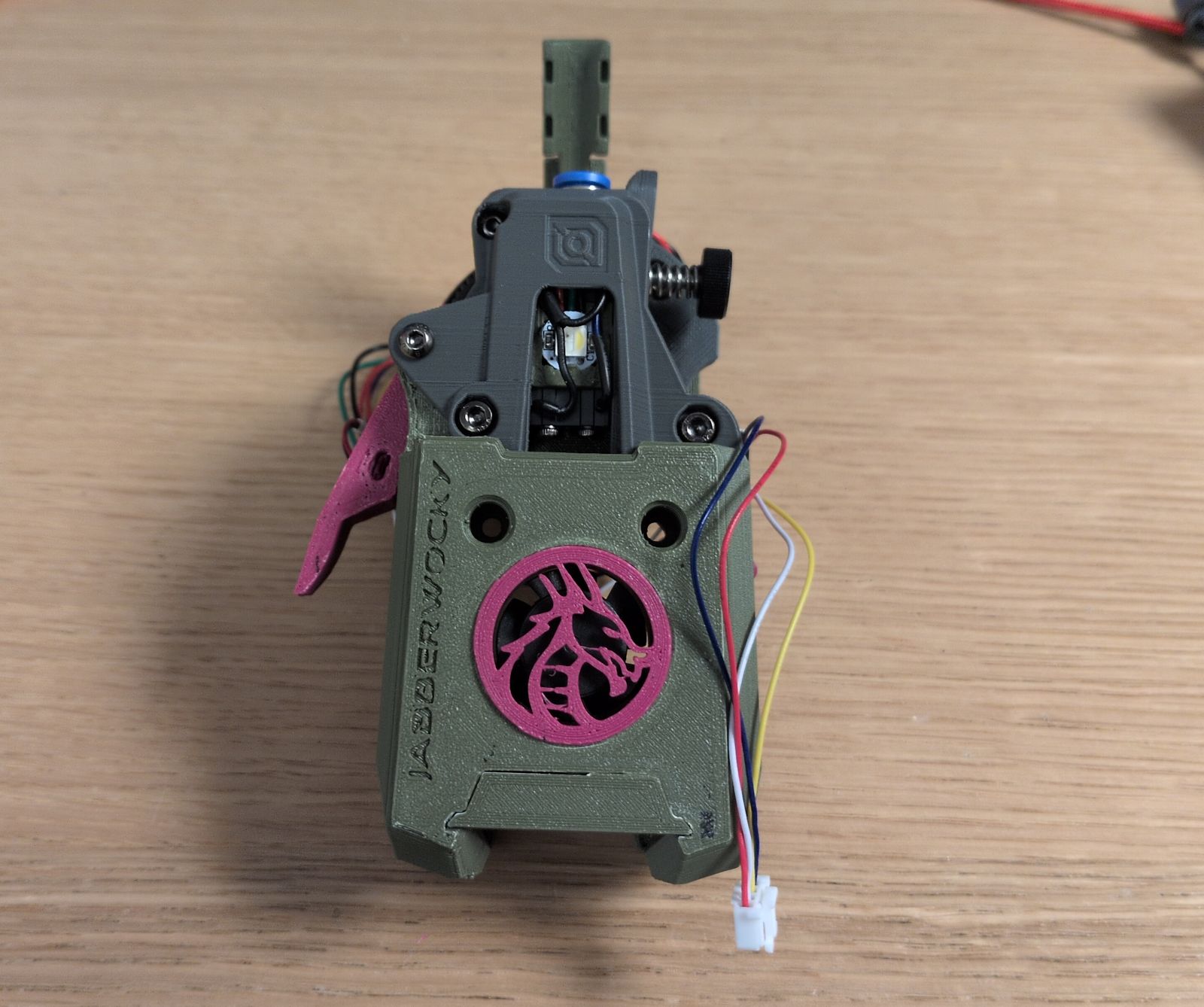

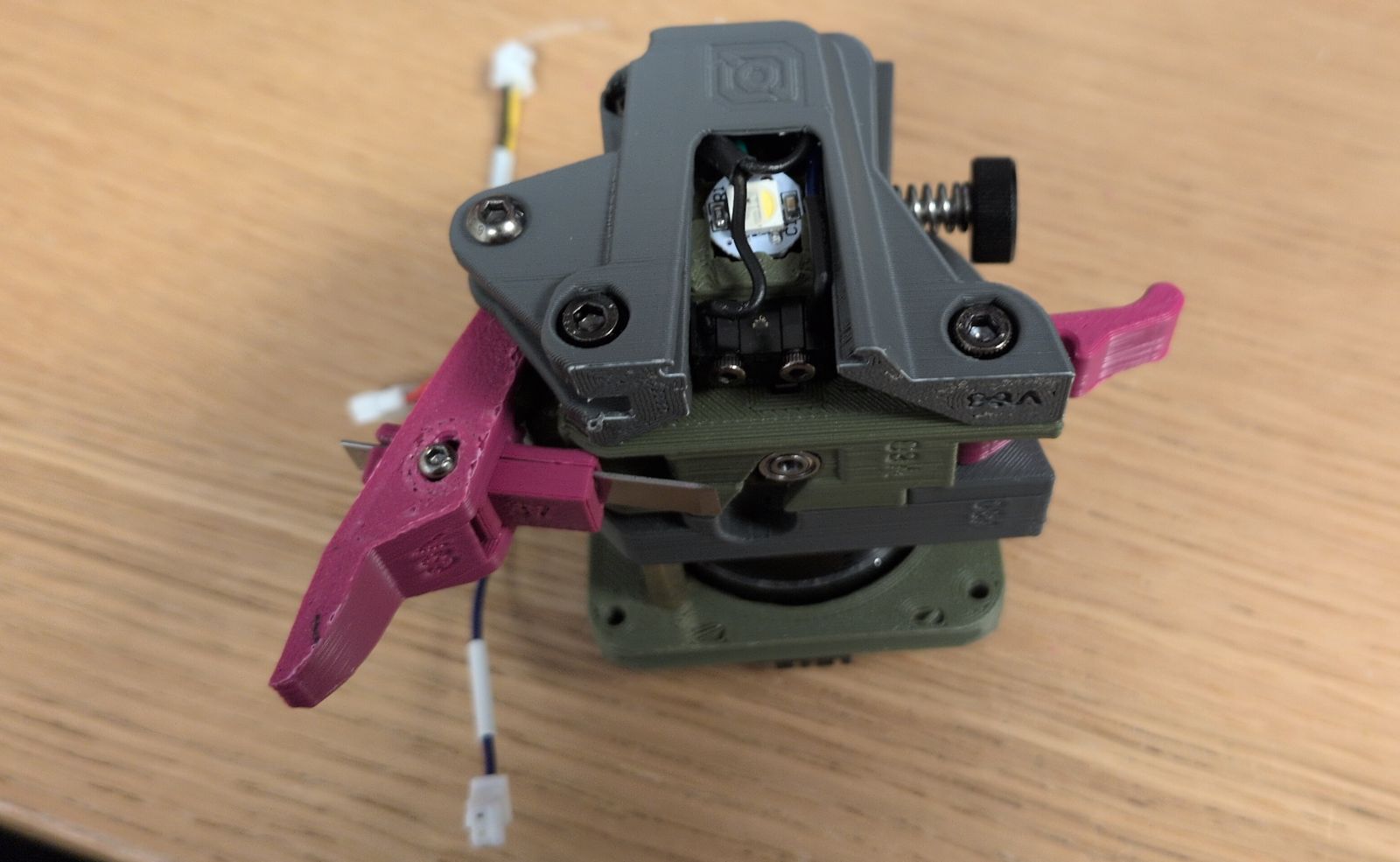

Replace the Stealthburner with the Jabberwocky toolhead.

This introduced a series of changes:

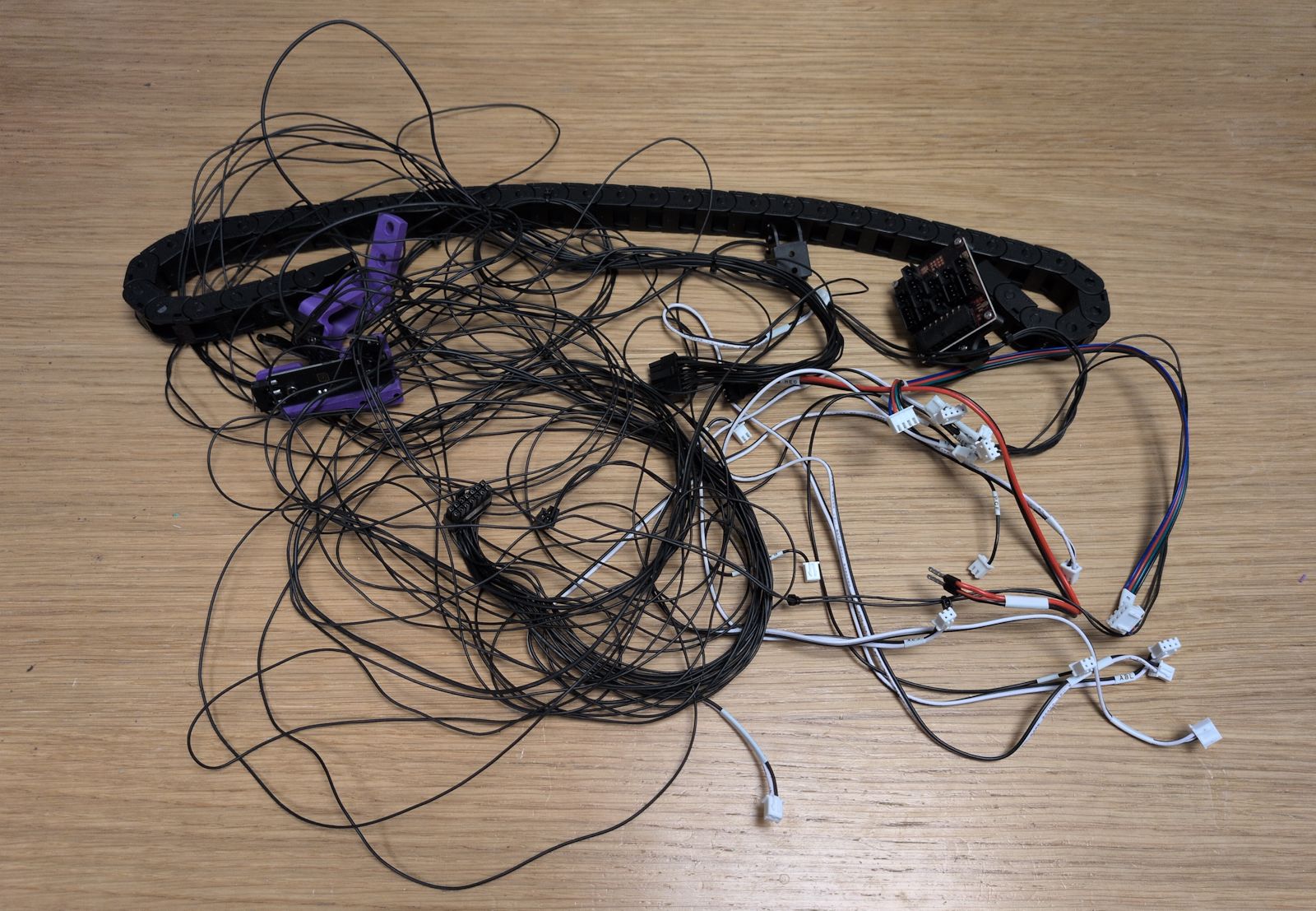

Move to an umbilical setup with the Nitehawk36 toolboard.

Use sensorless homing to get rid of the Y drag chain.

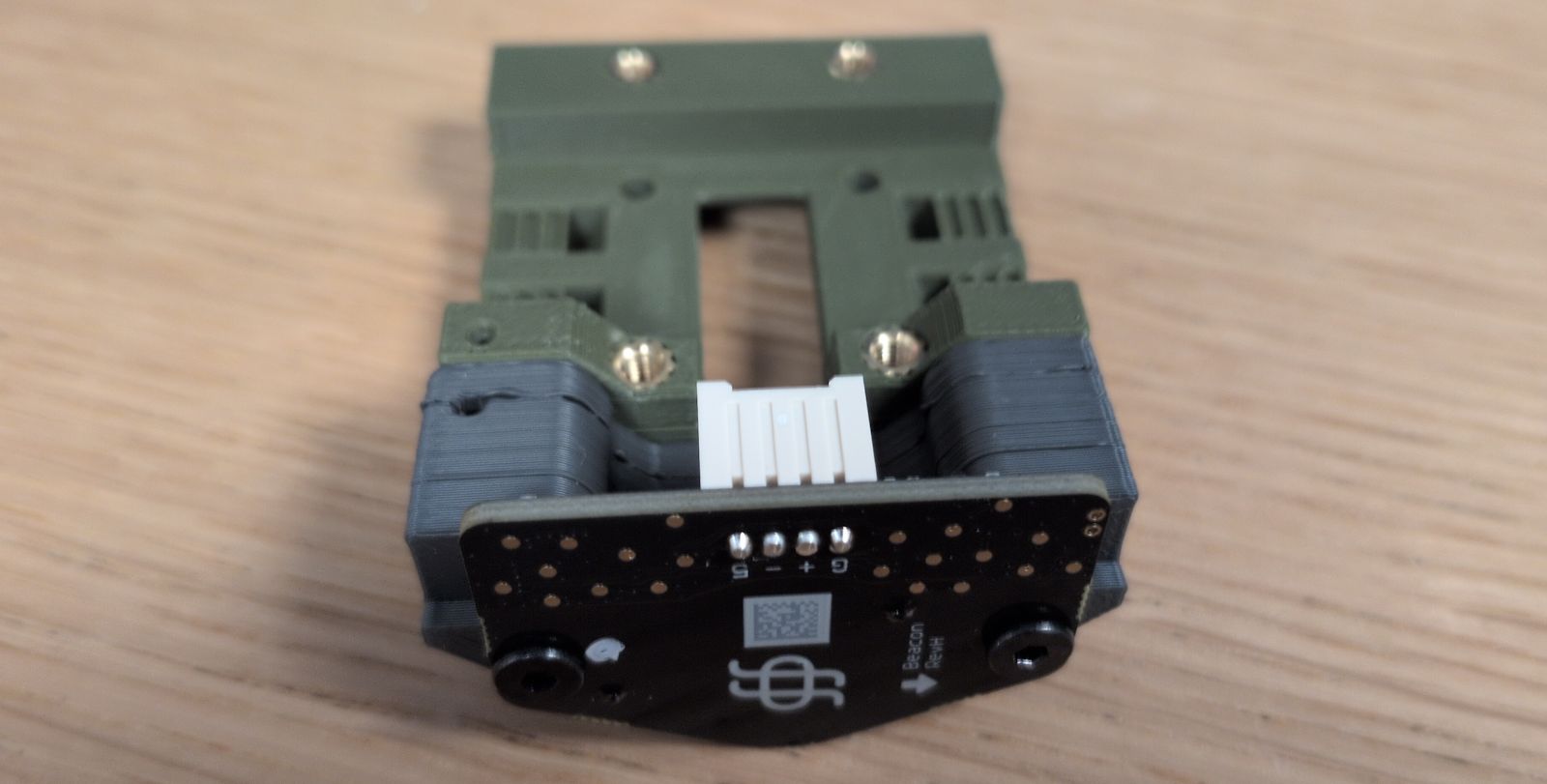

Replace TAP with the Beacon probe.

Finally, install the Jabberwocky.

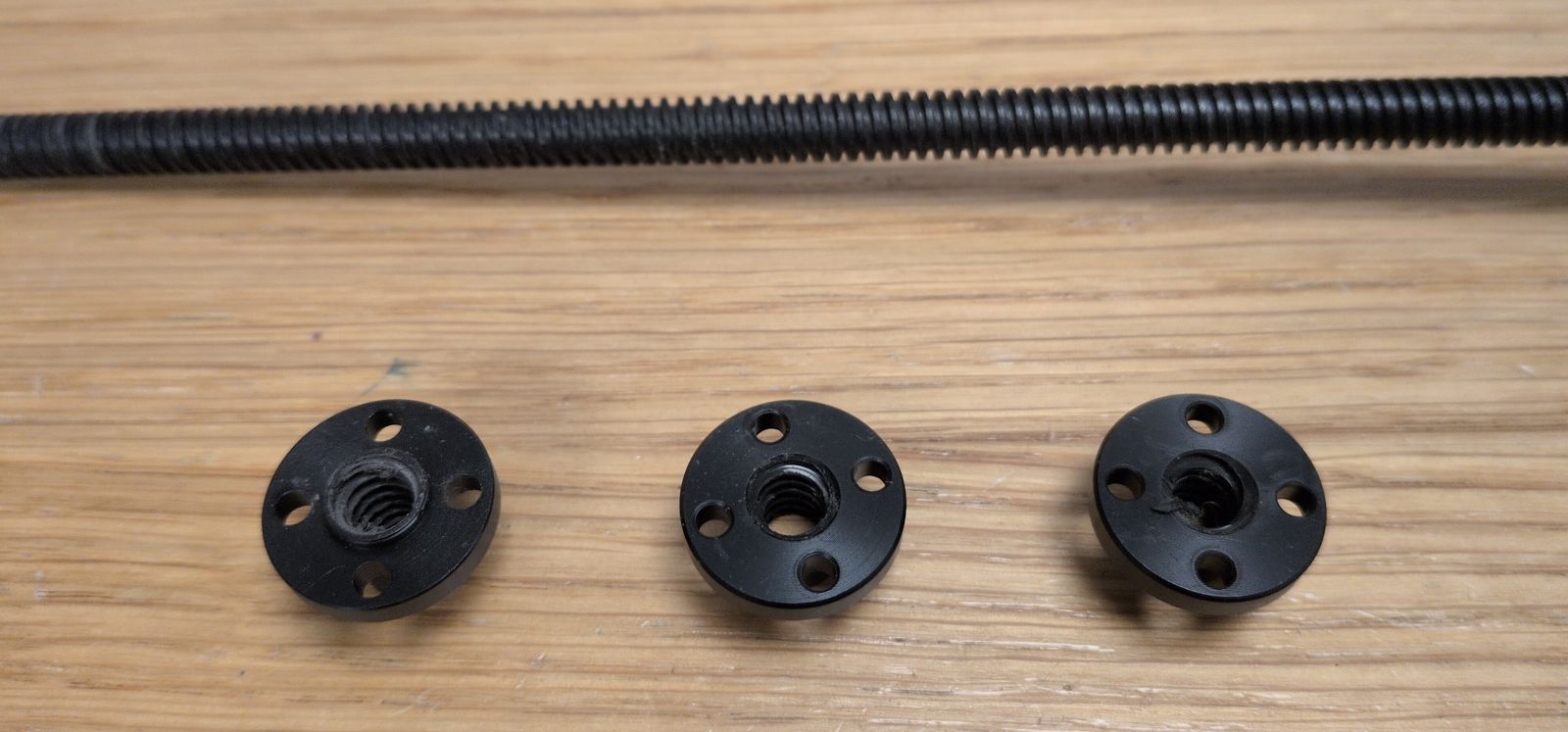

I’ve had issues before where one of the POM nuts was ground down and I felt it was happening again. The printer didn’t completely fail like before but it was sometimes getting really bad first layers in that same corner and the Z probe was occasionally failing to configure Z tilt.

I replaced all three POM nuts together with the whole lead screw (I got a new one sent to me by LDO the first time it failed but I hadn’t installed it yet).

This is apparently a common problem with some LDO kits that have coated lead screws. I still have two of the old ones that I may have to replace in the future.

I’d been looking at the Inverted electronics mod since before I finished my Trident printer. But it wasn’t possible with the Print It Forward service I used to print parts for my first 3D printer, and after the printer was completed I didn’t feel the need to redo the wiring again.

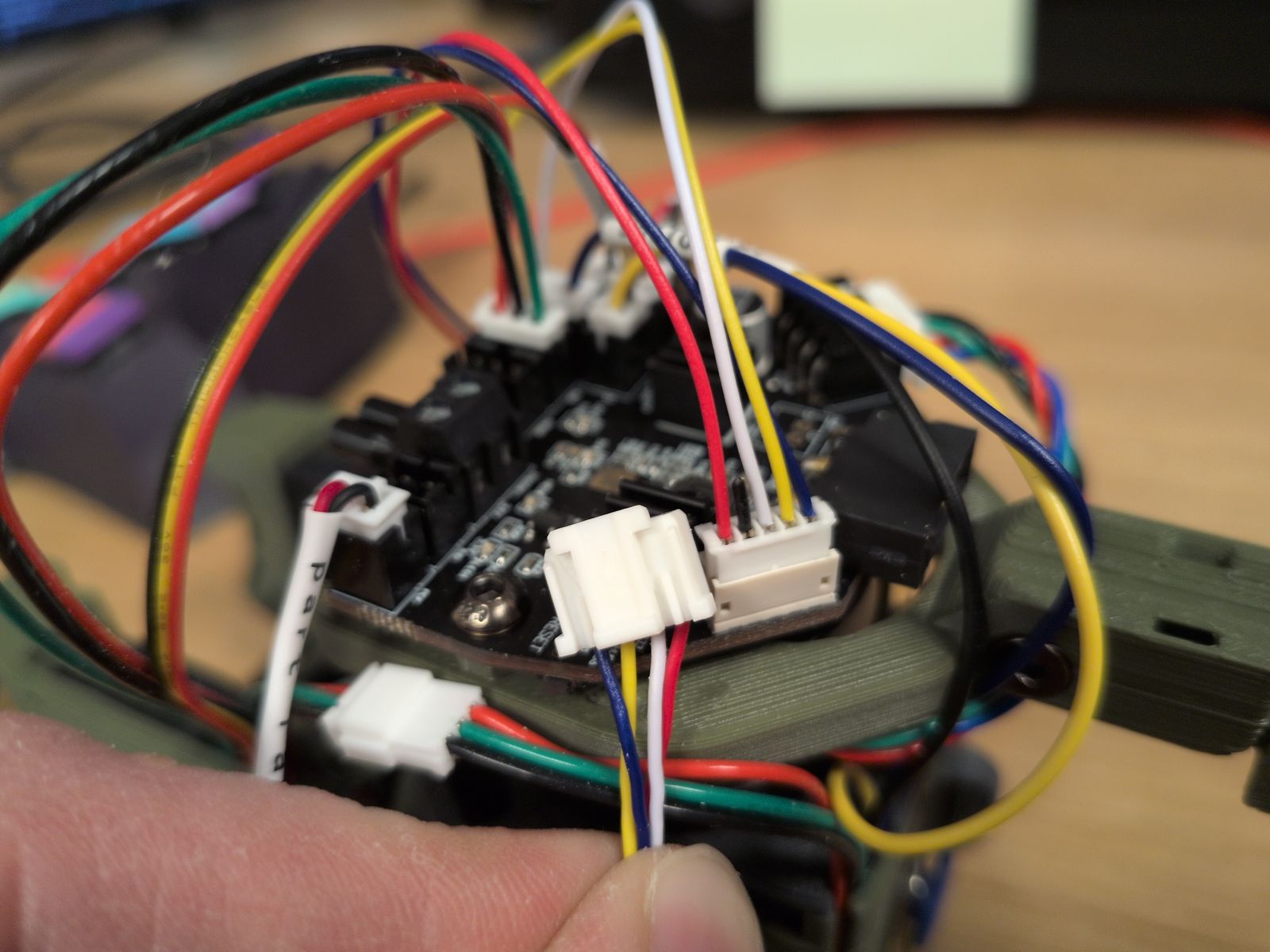

Overall it was surprisingly easy to reinstall all the electronics. It was made easier by the move to umbilical and a single USB cable to the toolhead as it removed quite a bit of wiring:

One issue I had with the mod is that the cutouts for the Z motors were a bit large, with gaps where stray filament or heat can escape through. I tried to cover them up by placing some foam tape around the motors:

I’ve been wanting to replace the Stealthburner toolhead for a long time:

But what to choose? There are quite a few interesting toolheads I considered:

I use the Dragon Burner in my VORON 0 and using the same toolhead is boring.

A pretty fun toolhead and I was considering the Mjölnir version. It does require you to flip your XY joints to hang upside down and I couldn’t find a filament sensor or filament cutter for it, so I ended up skipping it.

XOL seems like a very well regarded and mature option with tons of support. It boasts much better cooling for PLA, which is one of the main reasons I want to migrate away from the Stealthburner.

A4T seems similar to XOL, while having even better cooling and a slightly simpler assembly. It would also make use of the Dragon hotend I’ve got lying here, gathering dust.

An all-in-one toolhead solution with filament sensors and a filament cutter that seems to have some quality of life features I think I’d really enjoy:

Flip up Extruder. Probably an industry first, a tool-less easy to access toolhead design so that one can access the blade or the filament path for servicing and troubleshooting. This allows a user, in the event of hopefully a rare problem during a filament changing print the ability to access the filament path to clear it of issues and continue with a print job.

The A4T-toolhead is interesting but the (supposedly) easier maintenance and multi-color consistency of the Jabberwocky really appealed to me.

I struggled a bit to get the filament to load/unload consistently by hand. I rebuilt the toolhead but in the end I believe I just didn’t have enough grip on the filament to guide it past the gears down into the bottom hole.

Most of the wiring came as-is except for the cable between the Beacon and the Nitehawk36. I got the Nitehawk36 side of the cable pre-made in the Nitehawk36 kit but I had to pin the Beacon side myself.

The colors of the wires in the cable were all over the place but there’s a description on the PCB of both the Nitehawk36 and Beacon so I just had to take care to match them. I also referenced the Nitehawk36 documentation and the Beacon documentation.

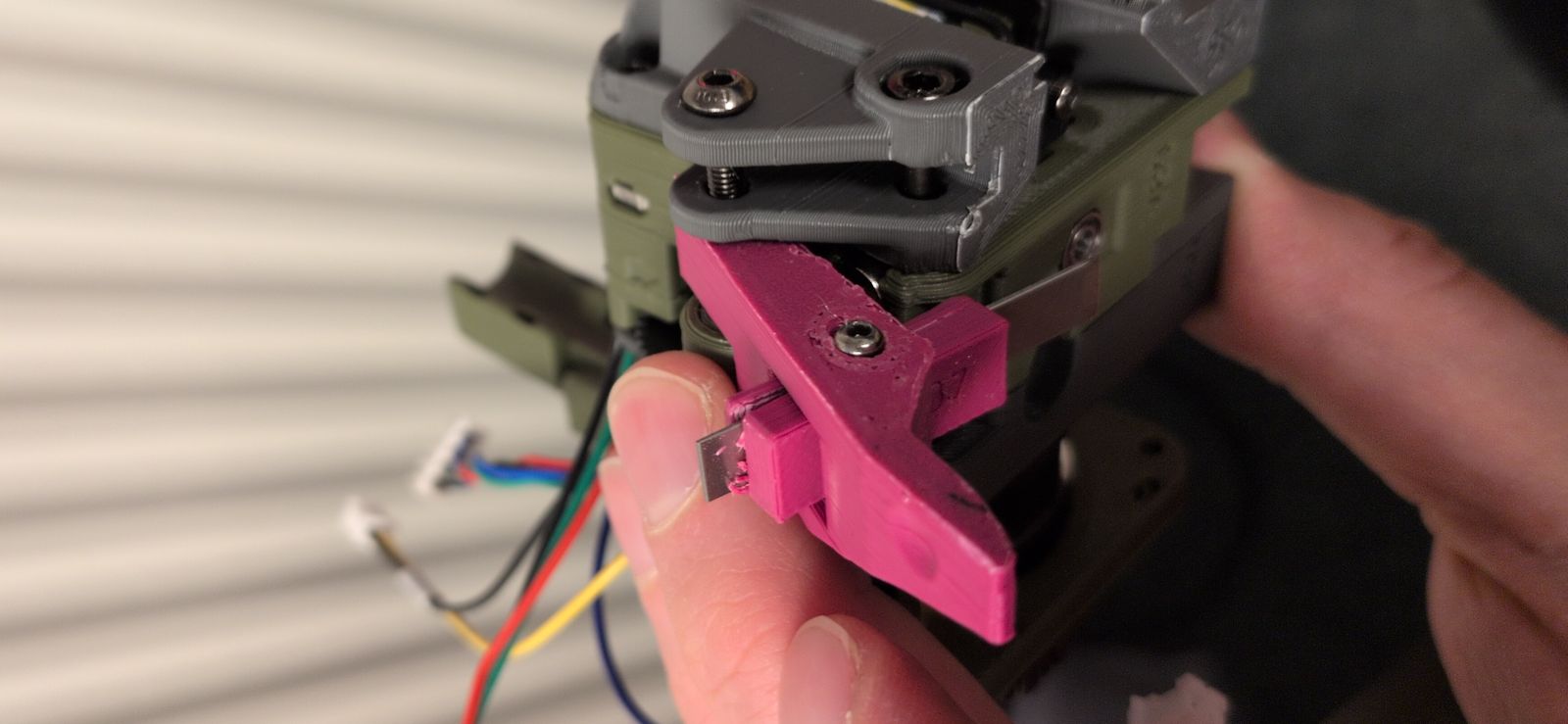

I had real difficulties installing the blade into the blade holder. There was some filament in the hole (likely due to poor print tuning) and I managed to break the holder when I tried to install the blade:

As I didn’t have a working printer when it broke I had to make it work without the filament cutter initially. Luckily I didn’t break anything crucial…

I had to make some software changes but luckily they were quite straightforward:

Use sensorless homing.

I just followed the VORON documentation.

Set up the Nitehawk36 toolboard.

LDO has setup instructions and the Jabberwocky GitHub contains Klipper settings.

Set up Beacon for Z offset and mesh calibration.

Their quickstart documentation was fast and easy. I did not set up Beacon Contact; maybe I’ll get to it one day.
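
For the sensorless homing step above, the Klipper side mostly comes down to pointing each endstop at the stepper driver’s stall detection. Here’s a minimal sketch for the X axis, assuming TMC2209 drivers. The `diag_pin` value is a placeholder for whatever pin your board routes the driver’s DIAG output to, and the sensitivity has to be tuned per printer. It’s not my exact config, and the VORON documentation covers the full procedure including the homing macros.

```ini
# Fragment only: merge these options into the existing sections in printer.cfg.
[stepper_x]
# Home against the driver's stall detection instead of a physical switch.
endstop_pin: tmc2209_stepper_x:virtual_endstop
# Sensorless homing can't do a second, slower homing move, so disable the retract.
homing_retract_dist: 0

[tmc2209 stepper_x]
# Placeholder pin (assumption): use the DIAG pin for this driver slot on your board.
diag_pin: ^PG6
# 255 is the most sensitive stall threshold; tune it until homing is reliable.
driver_SGTHRS: 255
```

The Y axis gets the same treatment with its own `diag_pin` and threshold.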

After months of not having a working 3D printer I’ve gotten renewed energy to play around with the printer again. I’ve got some loose plans for some mods to make on this printer:

… Or maybe something else? Who knows!