2026-05-29 07:04:35

Where is your intelligence located? In your brain?

It is a simplistic answer. A better model is that your intelligence is embodied.

Consider a cook working at an expensive restaurant. He has all his favorite knives and cooking instructions, placed exactly where he wants them. His kitchen is part of his intelligence, of his skills. The same cook working in your kitchen can probably cook better than you do, but he can’t reproduce the same meals he would prepare in his favorite kitchen.

We often assess computer programmers using whiteboard tests. It is an endless source of complaints. Programmers rightly point out that it forces them out of their element. They are just not as good when you take away their laptop. It is not an excuse, it is a real issue: you are cutting them off from part of what makes them so intelligent.

To sum it up, the model of intelligence as a brain in a jar, disconnected from anything else, is ridiculous.

If you accept the idea of embodied intelligence, then many actions that we view as a consequence of our intelligence are actually part of our intelligence. First and foremost, language. Our ability to talk or write to each other means that I am not limited by my own person. Have you ever heard of human beings isolated in small tribes making technological breakthroughs? Nah. Progress requires lots of people communicating together. Up until a few decades ago, progress required cities. Today I am less certain than it does, as I can more and more communicate with anyone in the world from anywhere. But language is still critical, we have not invented anything better. Similarly, having hands and the ability to build sophisticated tools (like laptops) allows us to extend our intelligence.

At the end of 2022, we got a breakthrough technology: ChatGPT. It built on several pre-existing ideas such as (large) language models, neural networks, and so forth. That’s the ‘GPT’ part. But an important, if underappreciated, part of the breakthrough was the ‘Chat’ component. Someone had the idea of connecting a large language model with a chat interface. Maybe this came naturally and obviously to people building this system, but it should not be assumed to be trivial or unimportant.

Language is a key component of our intelligence, and, thus, it makes sense that it would be pivotal for machine intelligence.

We embodied the AI software in a chat box.

The next step was what we call today ‘agentic AI’. We keep the chat box, but we add the ability for the AI software to interact with tools, and to make plans to use them. In effect, we give the AI more agency: it can do stuff and learn from the results as they happen. It is starting to resemble a human being with hands and tools.

I was talking with a colleague this week. My colleague is all in on the AI revolution. He uses his AI to help him write better and faster, and to get his data analysis done faster, without so much help from technical experts.

But my colleague was not aware of the agentic AI approach. I tried to explain on the phone. What does it mean to give the AI access to tools? Is this only about saving the effort of copying and pasting the AI’s response?

I ended up making a video where I start an AI in a shell within something called RStudio. It is an environment people use to program in R, to do data analysis. I don’t use R or RStudio, but thanks to the AI, I was able to build an entire climate research project in a few minutes, complete with the retrieval of the data from the web.

How did the AI do it? I recorded it. It tried a few things, initially struggling to download the data. At some point, it finds out that it needs new R packages, so it installs them, and once they are installed, it can proceed to generate figures, verifying that it works.

Agentic AI greatly extends machine intelligence by improving the embodiment of AI.

I believe that it is not yet understood as it should be.

In Montreal, the most established professor in the field of AI is Yoshua Bengio. He started his own non-trivial enterprise a few years ago (Element AI). His latest venture is Law Zero, which aims to create a Scientist AI. The first goal of this project is to build AI without the agentic component. It should be a disembodied AI that has no goal of its own, no agency.

I fear that Bengio suffers from what Kevin Kelly called Thinkism. Let me quote from Kelly’s 2008 essay.

No intelligence, no matter how super duper, can figure out how human body works simply by reading all the known scientific literature in the world and then contemplating it. No super AI can simply think about all the current and past nuclear fission experiments and then come up with working nuclear fusion in a day. Between not knowing how things work and knowing how they work is a lot more than thinkism. There are tons of experiments in the real world which yields tons and tons of data that will be required to form the correct working hypothesis. Thinking about the potential data will not yield the correct data. Thinking is only part of science; maybe even a small part. (…) Thinkism is not enough. Without conducting experiments, building prototypes, having failures, and engaging in reality, an intelligence can have thoughts but not results. It cannot think its way to solving the world’s problems. (…) The Singularity is an illusion that will be constantly retreating — always “near” but never arriving. We’ll wonder why it never came after we got AI. Then one day in the future, we’ll realize it already happened. The super AI came, and all the things we thought it would bring instantly — personal nanotechnology, brain upgrades, immortality — did not come. Instead other benefits accrued, which we did not anticipate, and took long to appreciate. Since we did not see them coming, we look back and say, yes, that was the Singularity.

I believe that University professors are especially prone to thinkism. They view intelligence as being centered on what is happening in their brain. When you live in an ivory tower, it is easy to dismiss the real world as the core source of intelligence. Further, they are often people who did quite well in school where thinkism is naturally prevalent.

I have been a professor most of my life. However, I tire quickly of talking with other professors. What I most enjoy is working with people who have new tools that they apply in the real world. Unsurprisingly, I spent most of my time working with software that people deploy in the real world.

What Kelly is saying is that a high degree of intelligence is not enough to do much of anything. The real world is not the final stage of your thinking process. It is maybe the most important part of it.

And thus, when you connect your AI with the real world, giving it the ability of running experiments (as virtually all software developers do today), you get impressive results that go much beyond what AI software can do on its own.

Agency is not a feature. Agency is primary.

2026-05-23 10:45:11

Every machine connected to the Internet has an address called an IP address. Originally, these addresses were 32-bit integers (IPv4), giving a theoretical maximum of about four billion distinct addresses. We are all familiar with these addresses (e.g., 192.168.0.0). There was a big fuss about how we would run out of addresses. It never happened because we don’t actually need every device to have its own unique address. Your home router needs an address, but every device in your home does not need a worldwide unique address.

Nevertheless, the range was extended to cover 128 bits (IPv6). An IPv6 address is conventionally written as eight groups of four hexadecimal digits separated by colons. For example:

2001:0db8:85a3:0000:0000:8a2e:0370:7334

Because addresses often contain runs of zeros, the format allows two shortcuts:

2001:db8:85a3:0:0:8a2e:370:7334.::: 2001:db8:85a3::8a2e:370:7334.The double-colon trick can appear only once in an address, and can match one or more zero groups. Hence ::1 is the loopback address (all zeros except the last group), and :: is the unspecified address (all zeros).

IPv6 also accepts an embedded IPv4 address in the last 32 bits, written in the usual dotted-decimal form. This is mostly used for IPv4-mapped IPv6 addresses such as ::ffff:192.168.1.1. The longest possible textual form is 45 characters:

0000:0000:0000:0000:0000:ffff:255.255.255.255

So a parser must accept hexadecimal groups, the compressed form, and an optional IPv4 tail. It is more involved than parsing IPv4.

The standard C function for the job is inet_pton, available on essentially every system.

Can we do better?

A few years ago, I showed that you could parse IPv4 addresses really fast. Can we do the same with IPv6?

The trick is to use data parallelism: we invoke the so-called SIMD instructions that all our processors support. These instructions can process potentially dozens of bytes at once.

Shreesh Adiga gave it a try with AVX-512, the powerful instruction set supported by recent Intel server processors and all new AMD CPUs. The idea is to load the entire string into a 512-bit register, find the colons with a single comparison, compute the spacing between them to drive a byte-level expand, translate hex digits via a permute, and finish with a multiply-accumulate that combines the hex digits into bytes. Almost the whole parser is branch-free, meaning that there are few if clauses.

I put together a small benchmark that generates random IPv6 addresses with inet_ntop (so the addresses are written in their canonical, compressed form) and parses each one with both inet_pton and the AVX-512 routine. The benchmark runs on an Intel Xeon Gold 6548N CPU @ 2.8 GHz (Emerald Rapids) with GCC, compiled with -march=native -O3.

| function | ns/addr | speed (Mv/s) | instr/addr | instr/cycle |

|---|---|---|---|---|

inet_pton |

175.3 | 5.7 | 954 | 1.56 |

| AVX-512 | 14.0 | 71.3 | 120 | 2.45 |

The AVX-512 routine is about 12 times faster than inet_pton, parsing more than 70 million addresses per second on a single core. It uses eight times fewer instructions, and runs them at a higher throughput (2.45 instructions per cycle versus 1.56).

The source code used for this benchmark is available on my blog repository.

2026-05-22 09:16:35

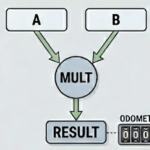

In software programming, the product between two integers is often computed to a fixed number of bits with overflow. Consider 8-bit integers. If you multiply 127 by 127, you get back the number 1 as an 8-bit unsigned integer, with an overflow. The actual full product is 16129. To represent 16129, you typically use 16 bits of precision.

Thus we have the notion of the full product. The full product of two 32-bit integers is typically represented using 64 bits. The question that preoccupied me is what fraction of all 64-bit integers can be written as the product of two 32-bit integers.

You might wonder why you would care?

We often design hash functions: they are special functions that take an input and generate a random-looking output. Several years ago I designed a very fast hash function called clhash. It is a super-fast hash function for strings having a few hundred bytes or more. If you don’t know about clhash, check it out. It is interesting in its own right.

This clhash hash function uses a type of multiplication typical of cryptographic applications. I was trying to argue that our approach had benefits compared with techniques based on standard multiplications. Let me illustrate. A simple hash function for 32-bit integers could take the least significant bits and multiply them with the most significant bits.

// simpleHighLowHash is a simple (and weak) 32-bit hash

// that multiplies the high 16 bits by the low 16 bits.

func simpleHighLowHash(x uint32) uint32 {

high := uint16(x >> 16)

low := uint16(x & 0xFFFF)

return uint32(high) * uint32(low)

}

Maybe you’d want the hash function to be uniform: all possible 32-bit hash values should be equally probable. It is only possible in this instance if the hash function can produce all 32-bit hash values, which is not the case.

The great mathematician Erdös showed that the proportion of all 2n-bit values that can be generated by the product of two n-bit values goes to zero as n becomes large. This means that if you have, say, 10000000-bit integers multiplying 10000000-bit integers, you’d expect relatively few 20000000-bit integers to be produced. But what about practical cases like 32-bit integers or 64-bit integers?

You can just brute-force the problem easily up to the multiplication of 16-bit integers into 32-bit products. At that point, slightly one out of five 32-bit numbers is a product between two 16-bit integers. About 80% of all 32-bit integers are never produced by this hash. However, the running time grows exponentially, and brute force won’t scale all the way to 32 bits.

So what do we do about the 32-bit case? That is, what do you do when you multiply two 32-bit integers to produce a 64-bit product? What fraction of 64-bit values can the following function produce?

func simpleHighLowHash(x uint64) uint64 {

high := uint32(x >> 32)

low := uint32(x & 0xFFFFFFFF)

return uint64(high) * uint64(low)

}

Can we get an exact result?

Yes!!!

Webster and his colleagues built the math to allow us to scale up the exact computation. He was kind enough to publish his code.

There are 3,215,709,724,700,470,902 64-bit (unsigned) integers that can be written as a product of two 32-bit integers. That’s about 17% of all possible values.

What about actually computing a pair of integers given their product? One approach consists of computing its full prime factorization, and then using those factors to build all possible divisors that are strictly less than 2^32, starting with a set of candidates containing only 1 and iteratively multiplying existing candidates by each prime factor (only keeping products that stay below 2^32). We can avoid adding duplicates to our set by processing unique prime factors with their multiplicity. Finally, we select the maximum such candidate m as the largest divisor under 2^32, compute the corresponding leftover n / m, and report whether a valid split into two 32-bit factors exists. In general, the answer (if it exists) is not unique: this returns the pair where one value is maximized. In Python, the code might look as follows.

for p in factor_multiplicities:

new_candidates = []

for c in candidates:

for i in range(factor_multiplicities[p] + 1):

if c * (p ** i) < 2**32:

new_candidates.append(c * (p ** i))

for new_c in new_candidates:

candidates.append(new_c)

m = max(candidates)

print(f"Maximum candidate: {m}")

leftover = n // m

print(f"Leftover: {leftover}")

if leftover >= 2**32:

print("Leftover is too large, cannot find a suitable candidate.")

You might be able to come up with a more efficient algorithm. I find it interesting to consider that if you pick a value at random, it will usually fail! That is, most 64-bit integers cannot be written as the product of two 32-bit integers.

2026-05-19 03:39:49

Converting a 64-bit integer to its decimal string representation is a mundane task that shows up everywhere: logging, JSON serialization, CSV output, debug prints, etc. In C++, you might use std::to_chars, sprintf, or some library routine.

How do these functions work? At a high level, they repeatedly divide by ten. Start with your integer k. Divide it by ten, use the remainder as the last digit (it is between 0 and 9 inclusively). You then add the code point value of the character 0 to get the ASCII digit. To go faster, you can divide by 100 and use a lookup table so that the value between 0 and 99 inclusively is mapped to a string.

So far so good. Unfortunately, even with all these optimizations, this string generation may become a performance bottleneck. Can you do better?

Let us assume that you have a recent AMD processor or an Intel server. Then you have powerful data-parallel instructions (AVX-512) that can multiply eight 64-bit integers at once. We often refer to these instructions as SIMD (single instruction multiple data). My colleague Jaël Champagne Gareau and I recently published a new paper on exactly this problem. The title says it all: Converting an Integer to a Decimal String in Under Two Nanoseconds.

When you write n / 100 in code, an optimizing compiler converts the operation to a multiplication followed by a shift. It is often described as a multiplicative inverse. Generally, you can replace the division of n by d with the division of c * n or c * n + c by m for convenient integers c and m chosen so that they approximate the reciprocal: c/m ~= 1/d. We often call c * n + c a fused multiply-add. Picking m to be a power of two means that the division by m is just a shift. Then you can get the remainder of the division by using the remainder of the division by m, multiplied by d and divided again by m, which is essentially a multiplication followed by a shift (Lemire et al., 2021).

We can put this to good use with the Integer Fused Multiply-Add (IFMA) instructions available on recent Intel and AMD processors. They essentially allow you to compute eight instances of (c * n + c)/m in one instruction. The expression (c * n + c)/m gives you the division, but we need the remainder, so instead we pick (c * n + c)%m which we need to multiply by the divisor.

The fun thing with AVX-512 instructions is that they can use a different c and a different divisor for each of the eight operations. Using Intel intrinsic functions, our core routine which converts a value smaller than 10^8 to eight digits looks as follows:

__m512i to_string_avx512ifma_8digits(uint64_t n) {

__m512i bcstq_l = _mm512_set1_epi64(n);

constexpr uint64_t twoto52 = 0x10000000000000ULL; // 2^52

__m512i ifma_const = _mm512_setr_epi64(

twoto52 / 100000000, twoto52 / 10000000,

twoto52 / 1000000, twoto52 / 100000,

twoto52 / 10000, twoto52 / 1000,

twoto52 / 100, twoto52 / 10

);

__m512i zmmTen = _mm512_set1_epi64(10);

__m512i asciiZero = _mm512_set1_epi64('0');

__m512i lowbits_l = _mm512_madd52lo_epu64(ifma_const,

bcstq_l, ifma_const); // ifma_const * bcstq_l + ifma_const

__m512i highbits_l = _mm512_madd52hi_epu64(asciiZero,

zmmTen, lowbits_l);

return highbits_l;

}

It compiles down to two multiplication-add instructions: vpmadd52huq. That’s it. Two instructions to generate eight digits.

It works by broadcasting n across all eight 64-bit lanes of a __m512i vector (bcstq_l). It then prepares a vector of carefully chosen multiplicative inverses (ifma_const) that represent the reciprocals of 10^8, 10^7, … The magic happens in the single _mm512_madd52lo_epu64 instruction, which simultaneously performs eight fused multiply-adds: each lane computes (ifma_const[i] * n + ifma_const[i]) using 52-bit low-half multiplication, effectively extracting the quotient when dividing by the corresponding power of ten. A second _mm512_madd52hi_epu64 instruction (with a vector of ten and a vector of '0') then isolates the digit values and adds the ASCII '0' offset in the high 52 bits, producing eight packed digit characters in a single 512-bit register.

If all your integers require eight digits, you are done. But in the general case, putting this to good use requires a bit of effort.

Thankfully, even if you, say, need only six digits, you can do the full 8-digit computation and then use a masked store if you want to store only six digits, ignoring the two leftovers. That is, instruction sets like AVX-512 allow you to write only some of the data to memory, which is quite convenient.

We have two variants. One is branch-heavy and does well on homogeneous data (numbers with similar digit lengths). The other is branch-light and better for mixed workloads. A quick profiling step can pick the right one for your dataset.

Our implementation is consistently 1.4–2× faster than the best competitors and 2–4× faster than std::to_chars across a wide range of inputs. What I find interesting is that even if the std::to_chars implementation is not at all naive, you can do significantly better in many ways. James Anhalt’s approach (jeaiii) is also quite fast on modern hardware.

Further reading

– The paper: doi:10.1002/spe.70079

– The benchmarks are on GitHub (fully reproducible)

– Shortly after our paper came online, Barend Erasmus created a software library implementing our proposed approach. I am not certain that Barend includes both the homogeneous and heterogeneous approaches.

2026-05-07 04:15:20

Suppose that x is a variable of an unsigned type. In C/C++, it could be of type size_t for example.

You have an expression like 6 * x and you want to know whether 6 * x overflows. That is, you want to know if 6 * x exceeds the range of values that can be represented by the type. In most cases, a variable of type size_t will be about to represent all values in the range [0, 2^64-1]. Instead of 64, let me use a variable for the number of bits: [0, 2^L-1].

The easiest approach is to compare x with (2^L-1) // 6 where I use the symbol // to denote the integer division (as opposed to /).

But can you do otherwise ?

If the value does not overflow, we know for sure that (6 * x)//6 == x. The interesting question is what happens when it overflows. We can answer this directly for an arbitrary non-zero constant a in the range [1, 2^L-1].

Let k = (a*x)//2^L be the number of times the multiplication wraps around. The effective (wrapped) value computed by the machine is r = a*x - k*2^L, with 0 <= r < 2^L. Overflow happens precisely when k >= 1. We have that k <= a − 1 because x<2^L.

Performing the integer division of r = a*x - k*2^L by a, we get x plus -k*2^L//a. When k is non-zero, this last value (-k*2^L//a) is one of -2^L//a, -2* 2^L//a, …, -(a-1) * 2^L//a.

k = 0 (no overflow): r // a = x.k ≥ 1: r // a = x + (negative integer) ≠ x.Hence we have the following result.

Theorem If x is of an unsigned type and a is a non-zero constant, then a * x overflows if and only if (a * x)//a != x.

In practice, a simple comparison x with (2^L-1) // a is likely more efficient. Optimizing compilers might be able to convert (a * x)//a != x to a simple comparison. Unfortunately, the Go compiler (for example) cannot.

An open question is whether there is a more mathematically elegant check.

2026-05-06 03:01:09

A common pattern in modern software is to map a string key to a small array of floating-point numbers. Word embeddings, feature vectors, lookup tables for physical constants: all variations on the same theme. In Go, the obvious way to write this is a map[string][]float32. But how fast is it, really, and can we do better?

I have been working on constmap, a Go library that builds an immutable map from strings to uint64 values using the binary fuse filter construction. A lookup amounts to one hash, three array reads, and two XORs. There is no comparison, no chaining, no probing. The whole table fits in roughly 9 bytes per key, which often means it fits in cache where a Go map does not.

Go has fast maps, you cannot easily beat them in performance. But if you build a smaller data structure that causes fewer cache misses, you can definitively go faster.

By default, the constmap returns a uint64. But what if your value is an array of eight float32 numbers? You have at least two options:

[][]float32. The constmap returns the index.uint64.The second option requires the unsafe package because we are smuggling a pointer through an integer field. It has some limitations.

unsafe calls converting an integer to a pointer value. Thankfully, you can just put all your arrays of floats in an array and call runtime.KeepAlive(mybigarray) at a strategic location: this will prevent Go from collecting mybigarray. The call to runtime.KeepAlive is not free but also quite cheap so you can possibly use a lot of such calls. benchmarkI built three lookups over 100,000 keys, each mapping to an 8-element []float32. We always access the first element of the array, to make sure that the bencmark is a bit fair. We have a large set of random queries (a query is a string).

We compare map[string][]float32, the standard constmap coupled with an array (so that the constmap constains indexes), and the constmap that contains what is effectively a pointer to the location of the []float32.

Run on an Apple M4 Max with Go’s standard benchmark harness:

| Lookup | Time per op |

|---|---|

map[string][]float32 |

21 ns |

ConstMap → index → [][]float32

|

11 ns |

ConstMap → pointer → *[8]float32

|

8.7 ns |

The constmap with an index is already a twice as fast as the Go map. Replacing the index by a raw pointer shaves another 2 ns by skipping the indirection through the [][]float32 slice header. It is a speedup of about 20% in my case.

The result is interesting on its own: a constmap lookup is fast enough that the next memory load, the slice header read, becomes a measurable fraction of the work.

The benchmark and code are in github.com/lemire/constmap. Run them with:

go test -bench 'FloatArray' -benchtime=1s