2026-06-03 22:50:27

New research. New announcements. A new chapter for GitLab.

On June 10, GitLab Transcend streams live from London — and engineers get a first look at GitLab 19 and Duo Agent Platform advancements before anyone else.

Including a live demo of GitLab Orbit: a knowledge graph across your entire SDLC so your agents know your pipelines, your security backlog, and what shipped last week. Not just your repo.

Virtual, free, and just days away.

OpenAI’s data platform stores 1.5 exabytes across 90,000 datasets and serves ~4,000 internal users as of May 2026. The team has scaled the platform through enormous growth in the last two years. At this scale, the hardest part of data analysis isn’t writing SQL. It’s finding the right tables to use in the first place and understanding semantically how to use data. Many tables look similar but mean different things. What’s the grain of each table? How do you join them against other data? Analysts can spend hours figuring out which tables to use and how to use them before writing a single line of code.

Last year, OpenAI’s data platform team built an in-house agent to fix that. The agent is, in their own words, “pretty vanilla”, yet it works reliably across the entire ecosystem. And the same investment in Codex that powers the agent has let the team do things most companies consider impossible, like migrating thousands of DAGs, 90,000 tables and 600 petabytes between clouds in two months.

We spoke with Emma Tang, Head of Data Platform Engineering at OpenAI, about how the agent works, why a simple architecture is enough at this scale due to strong data infrastructure foundations, the lessons for other teams, and where the platform is headed next. Thanks to Emma for taking the time to share the team’s work in detail.

In this article, you’ll learn:

The architecture behind OpenAI’s data agent, and why “vanilla” is the point.

The six layers of context that turn a single LLM into a reliable analyst across 90,000 tables.

How a question becomes a verified answer in three steps.

Three real Codex use cases inside OpenAI: a 10,000 DAG, 90,000-table cross-cloud migration, hands-off open-source patching, and automated support triage.

Five practical lessons for any team building a domain agent, and where OpenAI’s data platform is headed next.

To understand the agent, we will look at three things: what users experience when they ask a question, what architecture supports that experience, and how a request moves through the agent until it returns a verified answer.

Imagine an engineer or marketer at OpenAI who needs a quick answer. They open Slack and ask their questions in plain English. Moments later, the agent replies with its answer, the SQL it ran, and the tables it pulled from. That’s OpenAI’s data agent.

The agent sits across the entire data platform and answers questions in natural language. A user can ask in Slack, in a web portal, in their IDE, or in the Codex CLI through MCP. The agent figures out which tables are relevant, writes SQL, runs it, checks the result, and returns the answer with its reasoning attached.

Doing all of this reliably across 90,000 tables sounds like it would need a complex system. The team’s approach is the opposite of what most people expect. The agent itself is simple. The reliability comes from the engineering around it: careful data acquisition that gives the agent the right context before it ever sees a question. The next sections look at how the agent is built to get that context right.

OpenAI’s architecture is intentionally simple. Before diving deeper into the architecture, it helps to first understand the basic patterns behind agentic systems.

The basic pattern behind the data agent is an LLM plus a harness. The LLM provides the reasoning. The harness provides the tools and the agentic loop that turns reasoning into action.

The reason you need a harness is that an LLM by itself can only predict the next token. It knows a lot, but it cannot run a SQL query or act on the result. The harness fills that gap. It gives the model tools it can call, like a database query interface, assembles relevant context, and runs the model in a loop so it can reason, act, observe the result, and act again until the task is done.

Many agent systems become complicated at this point as shown in the figure below. A team might add a router that sends easy questions to a small, cheap model and hard ones to a larger model. It might mix multiple LLMs, fine-tune models on internal data, or build complex retrieval pipelines with different embedding models for different content types. Each choice can help, but each also adds cost, latency, and more ways for the system to fail.

OpenAI’s data team took a different approach. They found that a simple architecture works well at their scale, backed by their robust and unified data platform foundation. The data agent they developed consists of four main components: a single LLM, a context assembly layer, a carefully curated set of tools, and an agent runtime.

LLM. The data agent uses GPT-5.5 as the foundation model for every request. The team relies on the model to produce the right SQL queries, inspect the results, correct the queries, and reason its way to a verified answer.

Runtime. The runtime is the orchestrator that drives each request. An LLM on its own only emits text, so something has to act on what it produces. The runtime parses the model’s output, dispatches the requested calls to tools, feeds the results back into the model, and repeats this loop so the model can reason, act, observe, and act again until the task is done.

Context Assembly. This is where the real engineering work lives. A strong model still produces wrong answers without the right context. A bare schema is not enough to tell tables apart. For example, two tables may both have a user_id column and look almost identical, yet one includes logged-out users and the other does not. From the schema alone, the model cannot tell which table answers the question, and picks the wrong one.

To build a richer context, the team identified the signals that actually help the model decide which tables to use and what query to generate: a table’s schema and how people have queried it, notes from the people who own it, and what the pipeline code reveals about how it is built.

Building on these signals, the agent relies on six layers to assemble the right context when a user query arrives:

Table usage metadata. The table’s schema, its lineage, and a history of how people have queried it. Not all queries are equally useful. Queries from popular dashboards written by data scientists rank highest because they tend to be correct and reusable. One-off, exploratory queries rank lower.

Human annotations. Curated descriptions written by table owners that capture business meaning, ownership, criticality, and known caveats that cannot be inferred from schemas or past queries.

Codex enrichment. A nightly Codex job crawls the pipeline code that produces each table. It runs in batches of 100 to 200 tables, with each table taking 5 to 10 minutes. By reading the code, it captures what a table actually contains, how it is derived, how fresh it is, and when to use it instead of a similar table.

Institutional knowledge. A lot of context about the company’s data lives outside the warehouse, in Slack threads, Google Docs, and Notion pages. These documents are ingested and embedded separately, and served through an access-controlled retrieval service, so the agent never surfaces documents a user is not allowed to see.

Memory. Corrections and learnings the agent has saved from prior conversations, scoped at global or personal level.

Runtime context. When the offline context is missing or stale, the agent queries the warehouse directly, and can also talk to other platform systems like Airflow and Spark to fill the gap.

The first three layers, table usage metadata, human annotations, and Codex enrichment, are the ones that describe a table. A daily offline pipeline merges them into a single description per table, and an embedding model embeds that description into one vector per table, stored for retrieval. At runtime, when a question comes in, the tables whose descriptions best match the question are retrieved to be included in the context.

Memory is the other source to assemble the context from. It holds corrections and learnings saved from past conversations, applied on top of the retrieved descriptions so the agent starts from a more accurate baseline instead of repeating old mistakes.

The Figure above shows the overall design of context assembly. Retrieval over the table descriptions identifies the relevant tables, and relevant memory is pulled in as additional context. The last two layers fill the gaps the table store cannot. Institutional knowledge is embedded and retrieved through its own access-controlled service, and runtime context is pulled live from the warehouse when the offline description is missing or stale.

Tools. The agent has access to a small, curated set of 13 tools. These cover company context lookups, internal knowledge bases, big data systems like Airflow and Spark, and metadata services.

The agent uses them to fetch the information it needs to answer a question and verify its work.

The four components described above are the whole architecture of the data agent. There is no router, no fine-tuning, and no special post-training. Every question goes to the same model. According to Emma, the simplicity of the data agent is by design. The real engineering work happens at the infrastructure layer, which builds the right foundation for context assembly.

With the architecture in place, the next question is what happens when a user actually asks something. A question arrives in plain English. The agent’s job is to turn that question into the right context, run it, and return a verified answer. This happens in the following three steps.

Step 1: Embed the Question. The user’s question is converted into a vector using the same embedding model the team used to embed table descriptions offline. This vector is what the retrieval step searches against.

Step 2: Assemble the Context. The context assembly layer searches the vector store for the table descriptions that best match the question, combining semantic search with exact text matching. It also retrieves relevant institutional knowledge from its own access-controlled service, and adds any relevant memory to the context.

Step 3: Start the Agent Loop. The agent sends the assembled context to the LLM and puts it in a loop so it can write a SQL query, look at what comes back from the tool execution, and try again until the answer is correct.

That is the full flow. Three steps from question to verified answer. What makes the agent reliable is the quality of the context that flows through the three steps, which depends on the quality of the underlying infrastructure and how easy it is for the model to reason about. That comes from the six data layers prepared before any user asks a question. This is how the entire company can rely on the agent for critical workloads every day.

For other teams, the useful part of this story is that most components used to build the data agent are available to anyone. GPT-5.5 is on the API. OpenAI’s embedding API is public. Codex is public. MCP is an open protocol. The data platform team did not have access to anything a serious engineering team could not get. What they had was a unified, clean, and robust foundation, a carefully engineered context layer, and a willingness to keep the agent itself simple.

As pointed out in the previous section, the data platform uses Codex to read pipeline code each night, enriching the context the agent retrieves. That is one use case of Codex. But Codex supports many other use cases internally, helping run the platform itself.

Emma pointed out three interesting ones, different in scope but sharing the same pattern:

Migrating 10,000 DAGs, and 90,000 tables in two months

Releasing open-source patches without humans

Closing the support loop

OpenAI’s data platform was running out of capacity on one cloud provider. The team needed to move the data estate to a second cloud, fast. The migration involved 90,000 tables and 600 petabytes of data, plus hundreds of thousands of interdependent workloads.

The hard part of a migration at this scale is not moving the data. It is the dependency graph. Tables form a DAG. Table B depends on table A. Table C depends on table B. You cannot migrate in arbitrary order. During cutover, some tables live on the old cloud while their downstream consumers are already on the new one. At any point during the cutover, the team must know which copy of each table is the authoritative one, so dependent workloads do not read from a stale source. A system was built to replicate data across clouds in the correct direction while the migration is ongoing. This is for dependency graphs that are in the order of O(100k).

The migration touched hundreds of thousands of workloads, each needing a small code change to point at the new cloud. Filing that many pull requests by hand was not feasible, so Codex generated them instead. Codex Skills then handled the testing and validation for each PR. Around this, the team built a custom system to solve two hard problems: ordering the changes so dependencies migrated in the right sequence, and keeping data consistent while each workload ran against both the old and new cloud during cutover. That system gave Codex the guardrails it needed to operate safely at this scale.

With a strong team, and Codex doing much of the code changes, the migration finished end to end in roughly two months. Comparable cross-cloud migrations at other companies have run for years.

OpenAI’s data platform runs on more than a dozen open-source tools, including Spark, Kafka, and Flink. The team keeps its own version of each tool internally, modified with custom patches. Every time a new patch is added, it has to be tested against the existing test suites, validated on staging, and rolled to production.

This kind of work is critical for the platform’s reliability, but it is also repetitive and time-consuming. The test suites are long, with some taking hours and others running for days. An engineer used to babysit each release, watching the tests, diagnosing failures, and rolling the patch forward step by step. With more than a dozen forks, that work added up to a meaningful share of the team’s time.

The team turned the entire cycle over to Codex. A Codex-powered release agent validates patches against the test suites. It diagnoses failures and suggests fixes when something breaks. It rolls the patch all the way to production and alerts the team about what it did.

The release agent has now been running end to end for three to four months without human involvement and without a single incident. What used to require an engineer per release now runs unattended.

A platform that serves 5,500 internal users gets a steady stream of support questions. A pipeline fails. A dashboard breaks. A permission link does not work. Every one of these ends up with the platform team, and each one requires investigation before it can be fixed. At scale, that investigation work used to consume a meaningful share of senior engineering time.

Codex now handles the part of support that used to require investigation. A support bot fields the common questions first and resolves the easy ones directly. When the bot cannot resolve an issue, the engineer on call hands it off to Codex with minimal context. Codex investigates, finds the fix, and applies it. The engineer reviews and approves.

The loop that used to take an engineer a few hours per ticket now lets that engineer dispatch around a hundred fixes per day. The work is not easier. The engineer is amplified.

OpenAI’s data agent works, but the architecture itself is not what most teams should copy. What is worth borrowing is the set of decisions the team made when they built it. Emma shared five main lessons that apply to any team building a similar system.

A coding agent has one source of truth: the repository. A data agent’s source of truth is the whole company. Every system, every siloed data store, every team’s conventions, every table defined outside a unified codebase. If none of that is legible to a model, no agent architecture will save you.

OpenAI’s data is well structured. They’ve built best in class industry standard infrastructure across compute, orchestration, metadata management, storage technology, and more. There are no duplicated technologies, and the data lake is unified. Every table on the platform is produced by code in a single monorepo, and data engineering teams enforce conventions and police duplicate or unclear columns along the way. On top of that, every table has strong annotations with the owner, how critical it is, and how fresh the data should be. None of this is glamorous work, but it is what makes a vanilla agent reliable at exabyte scale.

If your team is considering building a data agent and your data is scattered or inconsistent, the agent is not the first investment. The foundation is.

The team initially connected the agent to around 40 tools, including metadata systems, orchestration tools, and big data systems. The results were bad. The model picked the wrong tool and got confused by overlapping answers from tools that did similar things.

Capping the agent at around 13 tools per call, and removing overlapping ones, fixed the problem. The lesson is that an agent does not need access to every system in the company. It needs access to the right ones, with no two tools doing the same job.

In practice, that means avoiding overlap. If two metadata services expose similar information, the agent should only see one. If two ways exist to look up table ownership, pick one. The model is better at reasoning than at choosing between near-duplicate tools.

A natural first instinct for a data agent is to embed all historical queries and use them as context. OpenAI tried this approach, and it did not work. Most queries in any company are exploratory one-offs, not canonical examples of how a table should be used.

The team improved results by ranking past queries by how trustworthy they are. The agent learns from queries the company has already written, but not all of them are worth imitating. Queries behind heavily used dashboards, usually written by data scientists, rank highest, because they tend to be correct and reused often. One-off queries written for a single analysis and never run again rank lowest. Once the team ranked queries this way, the model started copying the good patterns instead of the bad ones.

The general lesson is that retrieval quality depends on the quality of what you retrieve. What you feed into retrieval is what you get back from it.

Prescriptive prompts hurt the agent’s results. The team experimented with detailed step-by-step instructions about how the agent should approach each kind of question. The agent followed the instructions and produced worse answers. High-level guidance worked better. Tell the model what the goal is. Let it reason about how to get there. Give it the right context and the right tools, and trust the reasoning.

This matches what other teams building agents have found. Modern models are good at planning when they have good information. They are less good at being told what to plan.

The cross-cloud migration was supposed to be impossible in months. The team’s initial estimate was longer. Emma pushed for two months because the company was running out of capacity and a longer timeline was not an option. The team hit the deadline. Comparable migrations at other companies can take years.

The takeaway the team came away with is that timeline estimates from before Codex no longer apply. If a project sounds like it should take a year, the right question is whether it could be done in a quarter with an agent doing most of the work. The bigger risk is not over-promising; it’s playing it safe. A team that sticks to old timelines never finds out what the new tools make possible.

The data agent and the internal Codex use cases are not the end of the story. Emma says two things sit on the team’s near horizon: custom apps, and platforms keeping pace with users.

Custom apps generated per question.

Most analytics tools today give users a fixed set of widgets, like bar charts, line charts, and pivot tables. They are useful, but limited. If your question does not fit the available widgets, you have to write a custom script or file a request with the data team.

OpenAI wants to go further. The agent already builds traditional dashboards on demand, but the next step is freeform ones. Instead of a chart, Codex would build a full React app connected to a backing store and tailored to the exact question asked. Each one takes seconds to build, fits a single user’s need, and runs on real data with real guardrails.

When this rolls out, users will no longer pick from a fixed set of widgets. They will describe what they want and the app will appear, generated per question. A marketer who wants to explore campaign performance with a custom filter and a tailored layout will simply ask.

Users move faster than platforms can keep up.

The same Codex that powers the data agent has accelerated every team at OpenAI. Frontend engineers vibe-code new UIs in a morning. Researchers spin up custom pipelines on demand. Platform teams cannot move at that speed safely. A bad UI affects a few users, but a bad change to shared infrastructure can take the whole company offline.

This leads to a mismatch. Users now ship code to the platform faster than the team can review and validate it, and some of that code is written by people who do not fully understand what it does. Emma described examples like when a bad Flink job lands on the cluster and brings it down, upon asking the user replies, “I don’t know, I don’t know how Flink works, it’s vibe-coded. Can you help fix it?”

This is the next problem the data platform team plans to work on. The fix will not be another user-facing agent, but agents on the platform side, designed to triage incoming code, validate it before it runs, and absorb the deluge from AI-amplified users. The previous wave of agents helped users do more. The next wave will help platforms keep up.

2026-06-02 22:31:47

Most teams pick a search provider by running a few test queries and hoping for the best – a recipe for hallucinations and unpredictable failures. This technical guide from You.com gives you access to an exact framework to evaluate AI search and retrieval.

What you’ll get:

A four-phase framework for evaluating AI search

How to build a golden set of queries that predicts real-world performance

Metrics and code for measuring accuracy

Go from “looks good” to proven quality.

Few people in tech have a clearer view of AI-native engineering at hyperscale than Shah Rahman. As Global Head of Autonomous ML Iteration & Optimization for Ads at Meta, Shah spends his days architecting AI-native infrastructure and multi-agent systems that make ML iteration reliable across one of the largest production environments on the planet.

In the piece below, Shah cuts through the “everyone is an engineer now” noise and lays out what AI-native engineering actually requires: context engineering, spec-driven development, critical verification, and disciplined problem decomposition. He walks through the Agentic Development Life Cycle, the journey that separates real 10x leverage from “faster failure,” and the security guardrails that are no longer optional.

If you’re moving your engineering org toward becoming AI-native, this is a strong playbook.

Let’s get into it.

For more from Shah, connect with him on LinkedIn.

AI generates more than 75% of Google’s new code. OpenAI and Anthropic claim that almost every line of fresh code that they produce comes from AI. Amazon recently migrated 30,000 of its production applications from Java 8 to Java 17 in a matter of months, a project that would otherwise have taken an estimated 4,500 developer-years. And Mark Zuckerberg expects that AI agents will be operating as mid-level engineers by the end of 2026.

Reading those statements, we may feel as if we are looking at the last lines being written on the closing pages of an era. Perhaps even the closing pages of a profession.

But here’s the question: If AI writing everything is the answer, then why are most engineering teams shipping more bugs, more incidents, and more technical debt than they shipped two years ago?

In an April article in the New York Times, Mike Isaac and Erin Griffith gave a name to describe what’s happening across the industry. They called it code overload.

The essence of code overload, according to Isaac and Griffith, is that “tech workers are producing so much code so quickly that it has become too much to handle.” Teams that have rebuilt their work around the use of AI agents are drowning in code churn and security holes.

But. Many engineers who have employed AI agents are pulling ahead of the field, achieving real productivity gains. They are using the same models and the same tools, but they are generating very different outcomes. What explains the gap?

It comes down to one decision. Real productivity gains come when engineers decide to make the leap from writing code to orchestrating it. This piece is a working guide for engineers who want to land on the productive side of that split. It will cover the practices, guardrails, and mindset shifts that separate AI-native engineering from vibe coding and from the everyday chaos that most teams are now generating at scale.

Let me first clarify one thing: engineers are not becoming obsolete. Coding has always been a small part of engineering (20-30% max). This underappreciated reality is more visible when AI agents and tools produce more code, but more code is not necessarily more productive (often it’s less). This is a critical distinction that the industry is blurring dangerously, and I state that as:

When Andrej Karpathy coined “vibe coding” in early 2025, it captured something useful — the ability for non-engineers to build functional software by describing what they want. That democratization is valuable. But it’s categorically different from professional AI-native engineering.

AI-native engineering means commanding and mastering available and emerging AI agents and tools to engineer things that weren’t possible in the pre-AI era. Knowing how to code remains a fundamental expectation. Without that knowledge, you can build systems using AI — and that’s vibe coding. It has its place, but it’s not engineering.

The AI-native engineer operates as an orchestrator — someone who can turbocharge 10x engineering into 100x output through proper orchestration of AI agents. And that bar continues to rise weekly.

Emerging as a distinct discipline, this is the single most important skill for AI-native engineers. Context engineering means the systematic curation and injection of project-specific information into AI working memory: architectural diagrams, coding standards, business rules, team conventions, and development workflows that are reusable and standardized across your team members.

This shifts basic “prompt engineering” to sophisticated “context engineering” reflecting a deeper understanding: the quality of AI output is bounded by the quality of context it receives. Teams practicing rigorous context engineering report 40–50% speed increases and dramatically reduced alignment overhead.

As context engineering matures, Anthropic’s MCP — described as “USB-C for AI” — continues to be a universal standard for connecting agents to external tools and data sources. Context files like CLAUDE.md have become core infrastructure, not optional documentation. This persistent, evolving knowledge layer makes agents genuinely useful within your specific codebase, once you master developing and maintaining this critical context.

The quality of AI-generated code matches the quality of input specifications. Garbage in, garbage out — this principle applies with even more force when AI can generate garbage at unprecedented speed and volume.

Random prompting and vibe coding consistently underperform spec-driven workflows. AI agents get stuck in circular reasoning without clear specifications and instructions that are contained and well-defined. Consider this discipline: define what you want before asking AI to build it, break problems into discrete milestones with clear success criteria, and execute incrementally with validation at each checkpoint. Make sure the agent checks all open Qs with you and doesn’t run off on its own to find answers.

AI-generated code quality approximates that of early-career developers. Research consistently shows that around 45% of AI-generated code contains security flaws. A Stanford study found that developers using AI assistants wrote significantly less secure code and were more confident it was secure — a dangerous combination.

Meanwhile, a striking METR/Anthropic randomized controlled trial found experienced open-source developers were actually 19% slower when using AI assistants on familiar codebases. The culprit? Over-reliance without adequate verification. A GitClear study found AI-assisted codebases showed increased “code churn” — code written and then quickly revised or deleted — suggesting raw output is a poor proxy for productivity.

In the AI-native era, the bottleneck has permanently shifted from writing code to proving that it works at scale, with reliability and security. When AI generates code quickly, review, testing, and verification of that code become the new rate-limiting factors, and verification now becomes non-negotiable.

Avoid over-trusting AI with large, complex problems. Break tasks into AI-manageable chunks where humans handle edge cases, custom logic, and domain-specific aspects while AI agents handle the 70–80% of routine implementation. Complex problems lead to context pollution and slop generation that AI agents really struggle to recover from. Compacting and summarizing when context is polluted and shifting to a different session helps, but this discontinuity can be damaging for long-horizon tasks.. Many of us wasted hours, if not days, due to not decomposing and stubbornly confusing agents about expectations outside of a well-defined context, reasonable specifications, and a lack of verification guardrails.

I recommend the optimal split of: 40% context-setting, 20% generation and testing iteration, 40% reviewing and verification. This surprises many developers who spend most of their time in code generation. In practice, the generation step is fast; the verification and context work become the new time sink.

Begin with one primary AI assistant — pick your favorite one: Codex, Claude Code, or Cursor. Dive deep and build intuition for its capabilities and limitations through daily practice. Set up your workspace, workflow, and initial configurations. You’ll have to take the leap from the times of manual coding to AI-assisted and AI-generated coding practices. Your goal should be to develop judgment about when AI delivers value versus when it creates more work than it saves. Write down your personal notes, iterate, and build a strong foundation.

Adopt structured prompting frameworks. Create project-specific context files encoding team standards and architectural patterns. Implement the “Plan first, then Execute and finally review” workflow: planning mode generates specifications, execution mode implements, and make sure you review after each atomic task. Establish approval gates and guardrails that prevent agent drift. Skipping the review will pile tech debt that you and your agent will both struggle downstream.

The critical practice here is small loops with verification checkpoints. Evidence shows tight human-in-the-loop cycles with limited scope dramatically outperform large autonomous runs, at least for coding tasks. This may feel counterintuitive and slower — but it produces dramatically better outcomes in practice. Indulging into somewhat unplanned and speculative autonomous agent runs will likely produce a large volume of slop whose only destiny may be throwaway and start all over again. Avoid that before it happens.

Deploy AI agents for multi-step, multi-file tasks. Implement AI-assisted code review workflows. Use advanced techniques: multi-agent workflows, parallel sessions, and cross-agent verification loops. Every week, we hear about coding agents advancing on benchmarks and solving problems that were never solved before. Stay on top of those developments and embrace what Claude or Codex inventors are advocating, but adopt those to your needs (don’t blindly follow as their situations may be wildly different than yours).

Target metrics: 80%+ AI-generated coding rate with less than 20% rewrite rate. Achieve this, and you can pull your team toward the same proficiency level rather fast.

Research shows 70% of transformation success comes from operational and cultural change. These changes call for Organizational and technical leads to actively model transformation through daily AI usage. At the same time, ensure three critical aspects to establish the AI-native cultural foundation:

Psychological safety is paramount. MIT research found 83% of leaders believe psychological safety measurably improves AI initiative success. Celebrate “AI failure stories” as learning opportunities. Make this deliberate practice, not optional and ensure everyone feels included as part of the collective learning and growing exercise.

Evolved code review is essential. AI-generated code volume overwhelms traditional human review processes. Redesign review to distinguish AI-generated versus human code with separate review rubrics. Be especially vigilant about the dangerous combination of AI-generated and AI-reviewed PRs — these combinations should be explicitly guardrailed and governed, when necessary.

Shared context libraries become the core currency. Standardize context files, evaluation sets, and agent configurations across teams. Modern tooling enables easy packaging of context through plugins, skills, and commands — but watch for uncontrolled proliferation, where teams compete for standardization rather than collaborating. Don’t let too many team members’ desire to build agents and skills jeopardize your standardized agentic operating environment.

Traditional SDLC — and even extreme agile — falls short for how AI agents develop software alongside humans. AI-native engineering evolution toward an Agentic Development Life Cycle redefines each phase.

The most critical step. Use deep research and planning modes with multiple agents for parallel exploration. Specify against codebases, flag ambiguities, decompose into subtasks, and estimate difficulty. Create roadmaps with version milestones that help agents follow through incrementally. A planning agent can assemble findings from multiple exploration agents into a coherent implementation strategy. OpenClaw of Claude can run in multiple sub-agent in parallel.

AI agents handle end-to-end feature implementation like junior or mid-level engineers (at the time of this writing, which I expect to edge up to senior engineers within a year or two). The engineer acts as the tech lead, orchestrating multiple agents rather than coding directly. Sequential or parallel execution models depend on your roadmap and verification plan. The agentic coding tool landscape has matured rapidly — Claude Code, Cursor’s Composer mode, GitHub Copilot’s Agent Mode, and OpenAI’s Codex agent all support this pattern with varying strengths. There’s new versions coming out every month -- watch closely for new capabilities.

This is TDD reincarnated. Agents write test plans first, then implement code. All tests should fail at the beginning and then incrementally pass. Unit testing at the atomic level, integration testing across features, and end-to-end testing across the system. Don’t overindex on unit testing at the expense of integration or system testing lack.

Pro Tip: Consider separating planning, building, and testing agents. Each agent swarm specializes and develops a deep understanding of your codebase from a different perspective. Planning agents can challenge building agents who take shortcuts; testing agents who skip coverage; or the review agents who are biased towards incorrect implementations that appear to be correct. Similarly, review agents can hold every other upstream agent accountable for making mistakes or missing steps.

Deploy agent swarms specializing in key dimensions: functionality, quality, scalability, performance, reliability, security, and privacy. Agents take the first pass and produce reports; humans review each report carefully. When one agent discovers an issue — say, an injection vulnerability — apply the generalization principle: if one instance exists, others likely do too and this is your chance to proactively similar a vulnerability type in your code, not just one or two instances.

Move from post-facto documentation to continuous generation. AI agents generate summaries, key design decisions, architectural diagrams, and changelogs in real time. This flows naturally into API documentation, feature collaterals, and customer-facing contents. I’m quite excited about AI tools finally solving the outdated, stale, and inconsistent documentation problem that I’ve seen myself and my teams suffer for decades.

Encode your Layer-1 (individual) and Layer-2 (team) practices into maintained, self-evolving context files, skills libraries, and MCP tools. This ensures ADLC adoption scales across the organization rather than remaining tribal knowledge or trapped in parts of the org. Promote the ADLC tooling package.

There’s a seductive narrative in the industry right now: fewer people, less overhead, faster builds. But this narrative conflates construction costs with decision costs. AI has drastically reduced the cost of building, but that represents only 20–30% of total development costs, while leaving the cost of deciding what to build and what to cut largely untouched. With the proliferation of code and builders, this becomes a harder problem.

AI-native process optimization requires redirecting effort from coordinating execution to accelerating learning.

AI compresses the first step dramatically. But compression value depends entirely on execution quality throughout the remaining cycle. Faster building without robust user observation and scope discipline produces faster divergence from genuine product goals. This results in customers not seeing the benefits of AI acceleration.

Cheaper experimentation. Test more hypotheses per unit time. Over 70% of features never reach real users. AI makes it trivially cheap to test whether something matters before committing to full development. The discipline: kill non-viable concepts ruthlessly.

Faster prototyping for user research. Working prototypes replace documentation. Tools like Vercel’s v0, Replit Agent, and Bolt.new enable functional prototypes from natural language in minutes. This produces superior signal quality from user testing. Encourage everyone to prototype aggressively, make it a habit before you build.

Automated boilerplate, not automated judgment. AI handles undifferentiated work: scaffolding, non-novel code, business logic tests, documentation, and data models. Teams focus on differentiated work: core business logic, empathetic user experiences, novel implementations, and the crucial decision of what to keep or kill.

The “design to 50%” principle. Ship minimal functionality enabling core user journeys. Observe where users hesitate, misunderstand, or abandon. This reveals actual product challenges rather than imagined ones. AI makes this approach nearly zero cost.

The security landscape for AI-generated code has become genuinely alarming. The data shows AI-native development speed is creating new attack surfaces faster than manual security review or traditional tools can address. We observed roughly one new insecure AI integration appearing per week in our environment, many resulting in production incidents. Anthropic’s Daybreak and Mythos bring a clear wake-up call to security.

Chat Integration RCE. Built in two days using AI, achieved Remote Code Execution by bypassing 2FA and exploiting open ACLs. It costs tens of hours to detect, mitigate, and fix.

Unauthorized Database Access. An AI coding agent accessed approximately 1,500 secure, unauthorized database tables without proper authorization, exposing sensitive data to prompt injection risks.

Google Docs Prompt Injection. An AI coding agent achieved Remote Code Execution through prompt injection embedded in a Google Docs document, bypassing input filtering protections entirely.

Supply Chain Poisoning. A new attack vector called “slopsquatting” emerged in 2025 — AI models hallucinate package names that don’t exist, and attackers register those names with malicious code. Multiple documented incidents have resulted from this.

Agent Identity and Access Control. Implement step-up 2FA. Apply the principle of least privilege. No shared credentials or open ACLs. Start with passive, read-only use cases and build confidence before granting read-write or broader access.

Data Classification Awareness. Agents must respect data classifications and sensitive boundaries. “Agentic Authorization” is an emerging enterprise challenge where agents bypass restrictions at machine speed that human oversight cannot match.

Prompt Injection Protection. External content — documents, web pages, user inputs — can contain hidden instructions that hijack agent behavior. Implement input filtering, content validation, and context sanitization. Never auto-execute untrusted commands. Resist the temptation of auto-accepting all agent suggestions.

Infrastructure Sandboxing. Agent activities must be observable and auditable. Block high-risk production surfaces — configurations, critical execution, critical storage — until controls are verified. Use sandboxing and OS-level enforcement.

Static analysis integration. Data shows roughly 30% of Python and 25% of JavaScript AI-generated snippets contain security weaknesses. Centralize advanced static analysis in CI/CD pipelines. Require mandatory human review for critical functions: authentication, payments, and PII handling.

Automated quality gates. Implement “Ralph Loops”, OpenClaw, or another form of autonomous loops — iterative verification until success criteria are met. Type checking, linting, and test execution before diff submission. Multi-stage canary systems with stringent gates before production deployment.

Skills-based security. Where the agents are taught secure coding patterns, flagging common vulnerabilities during generation rather than after. Shift left, but with agents.

Skill atrophy prevention. Gartner reports 50% of organizations will require “AI-free” skills assessments by 2026. Treat AI as a learning tool — request explanations alongside generated code. Occasionally, work without AI to preserve foundational abilities. The goal isn’t Luddism; it’s insurance against the day your AI tools are unavailable or producing subtly wrong, but potentially fatal results.

The productivity paradox. Individual productivity gains from AI tools often fail to materialize at the team and company levels. Focus on end-to-end cycle time and feature velocity, not coding speed alone. Adding AI to broken processes yields broken processes that generate more code, faster.

The engineers thriving in the new environment treat AI as a collaborative partner for execution while maintaining the systems thinking, critical judgment, and communication skills that no AI can replicate. AI amplifies existing expertise rather than replacing it — senior engineers achieve dramatically better results because they bring deeper context and sharper judgment.

Your domain expertise is the key differentiator in AI-native productivity. No AI tool or agent can replace it. So, invest in sharpening your domain skills, whether that’s math, science, finance, health science, or a legal profession. Continuing to uplevel your engineering fundamentals pay recurring dividends in AI effectiveness.

This is a multi-year transformation, not a one-off tool adoption. Teams treating it as a tooling upgrade consistently fail to realize productivity gains. The organizations that succeed are the ones treating AI-native engineering as a new way of working — with new practices, new disciplines, and new definitions of what “amazing” looks like.

Are you building this way yet? If not, ping me, and I’m happy to have a chat.

This is Part 1 of a two-part series. Part 2, “AI-Native Leaders,” covers the organizational transformation, leadership models, and measurement frameworks required to make AI-native engineering work at scale.

2026-05-30 23:30:52

Datadog’s guide shows you how to connect AI spend, infrastructure, and model performance into a single view, so you can catch cost spikes the moment they happen. See how Kevel cut AWS costs by up to $100,000/month after replacing reactive cost reviews with real-time visibility.

You’ll learn how to:

Break down AI costs by token, model, provider, and team

Get alerted the instant inference volume spikes or API spend exceeds budget

Correlate cost increases directly to architecture changes so root-cause analysis takes minutes

DoorDash’s customer support chatbot had a hallucination problem. Not the dramatic kind where it invents entire conversations, but the subtle, harder-to-catch kind.

For example, the chatbot would look at a customer’s order history, see a delivery status field, misread it, and then confidently suggest a refund policy that didn’t actually exist. The raw data was right there in the chatbot’s context window, the working memory where an LLM holds everything it needs to generate a response, but having too much information was making things worse.

For reference, DoorDash is one of the largest food delivery and local commerce platforms in the United States, connecting customers with restaurants and stores through a network of independent delivery drivers called Dashers.

At that scale, the company handles hundreds of thousands of support contacts every day from customers, merchants, and Dashers, making automated support not just a nice-to-have but a necessity.

The team could see the problem clearly, but fixing it was a different story. Every change they made to reduce hallucinations in one scenario risked creating new ones in another. They were stuck between two bad options. They could deploy changes to production and hope for the best, which meant risking real customer experiences. Or they could manually test dozens of conversation scenarios for every prompt change, which would take weeks and still might miss things.

This tension isn’t unique to DoorDash. It’s the fundamental challenge anyone faces when they move from traditional deterministic software to LLM-based systems. DoorDash used to run customer support on hand-built decision trees, where every change had a predictable, traceable impact. LLMs replaced that predictability with flexibility and more natural conversations, but they also introduced non-determinism, meaning the same input can produce different outputs each time.

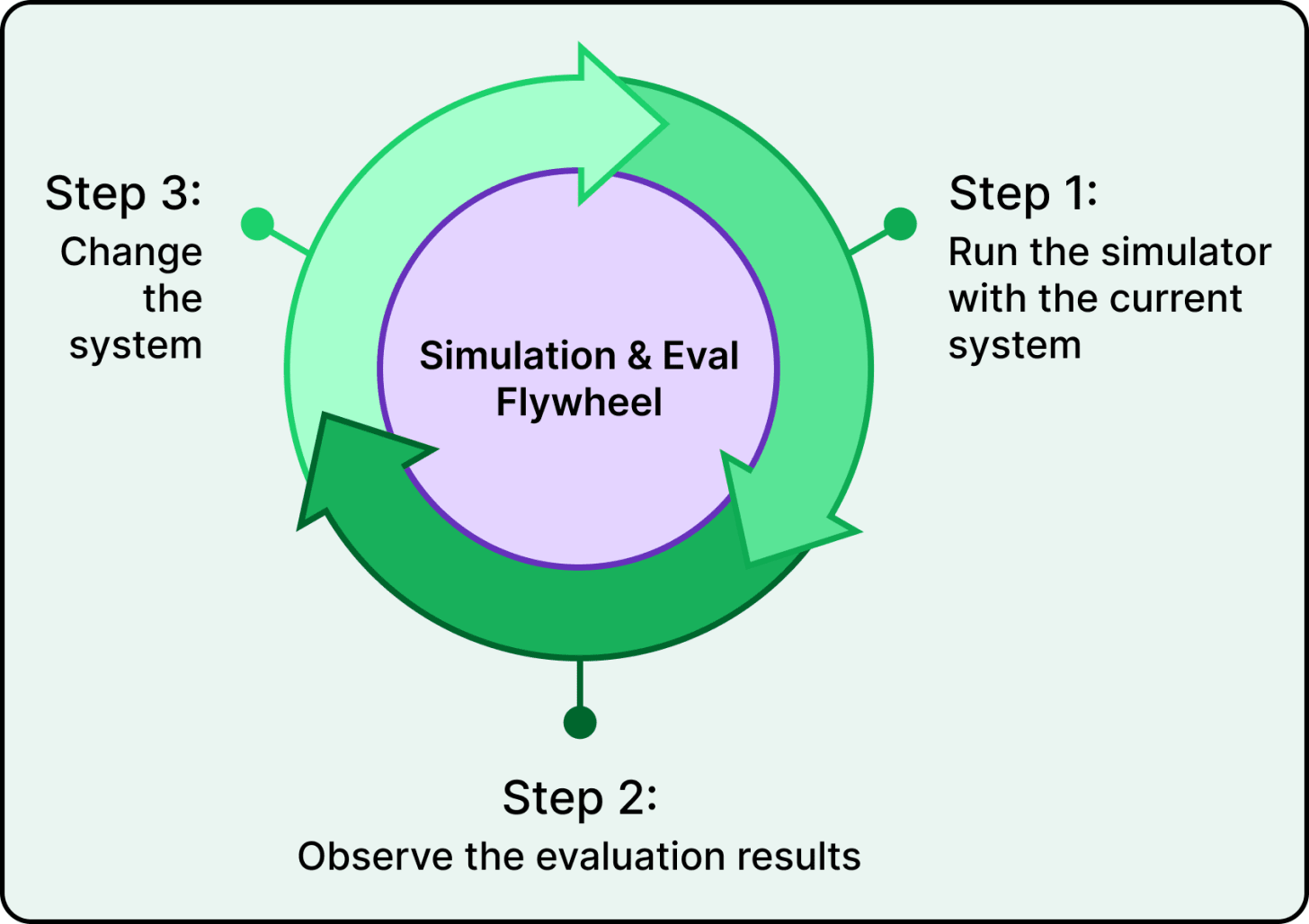

DoorDash’s answer to this problem wasn’t a better chatbot. It was a better system for improving the chatbot, something they call the simulation and evaluation flywheel. In this article, we will learn how they built this flywheel and the key takeaways.

Disclaimer: This post is based on publicly shared details from the DoorDash Engineering Team. Please comment if you notice any inaccuracies.

The flywheel has two interconnected pieces:

The first is an offline simulator that generates realistic multi-turn customer conversations without involving any real customers.

The second is an evaluation framework that automatically grades how the chatbot performed in those conversations.

Together, they create a tight iteration loop.

When the team notices a problem, they write an evaluation that captures the specific failure mode they want to fix. A single job trigger then orchestrates the entire pipeline end-to-end, automatically generating test scenarios from historical transcripts, running multi-turn conversations between the simulator and the chatbot, and evaluating the results.

Then they modify the prompt or the system architecture, run the simulator again, and check whether the pass rate climbed. If it did, they would keep going. If it didn’t, they try something else. They repeat this cycle until the pass rate hits their exit criteria, and then they deploy with confidence that the change actually works.

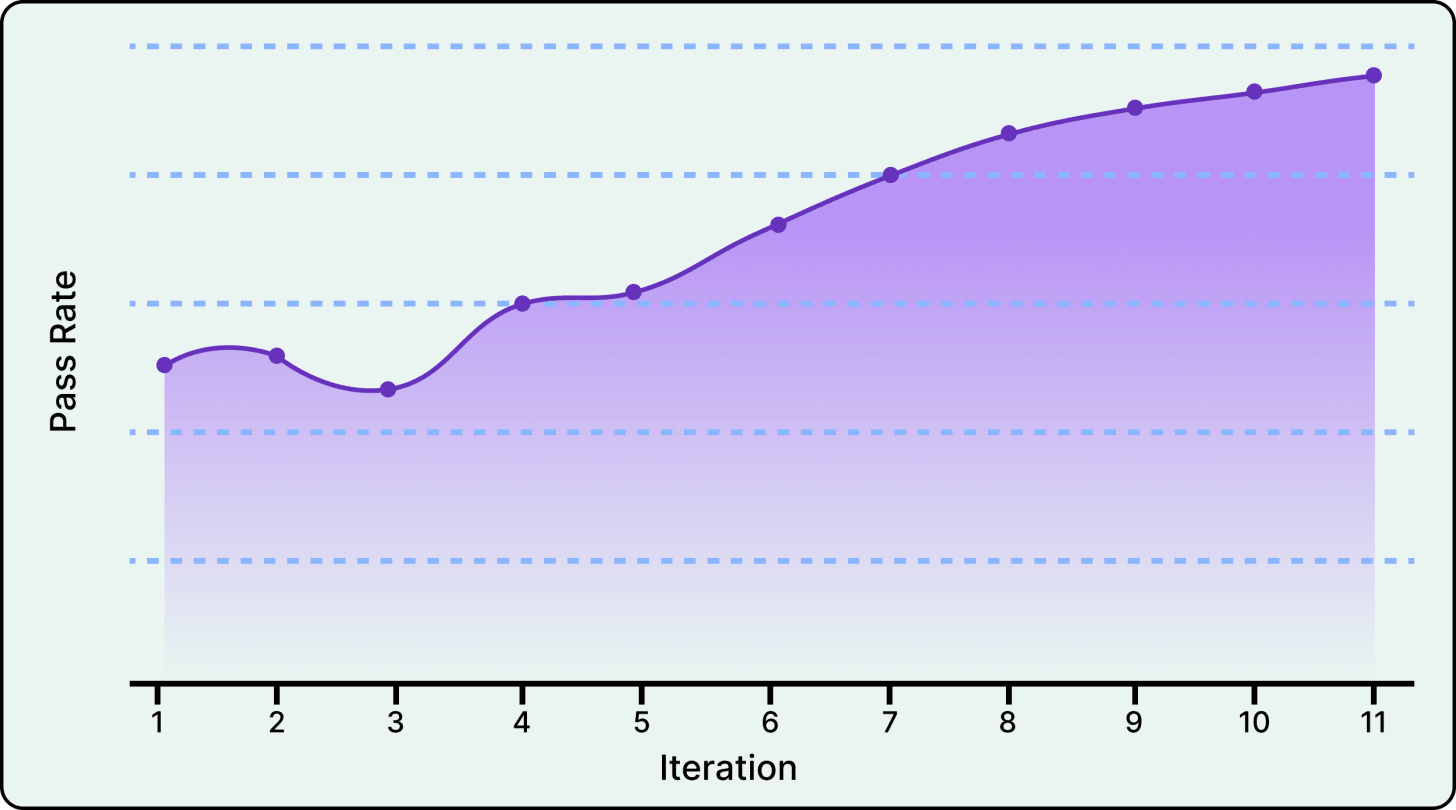

The graph below shows the pass rate for no-hallucination evaluation over time

The speed of this loop makes this a powerful approach. DoorDash can run more than 200 simulated conversations in under five minutes and get automated evaluation results immediately.

In other words, what used to take days of manual testing and review now takes hours. And because everything happens offline, they never risk degrading the experience for real customers while they iterate.

Their evaluation suite has grown to more than 50 evaluations covering hallucination detection, tone assessment, issue classification, and other quality dimensions. Before any change goes to production, it must pass the full suite, which serves as both a quality check and a regression test.

The flywheel sounds straightforward, but both the simulator and the evaluator required solving genuinely hard problems.

Speed without control is a false economy. As AI code-generation accelerates software delivery, the FeatureOps Summit 2026 is here to ensure that when we ship more, we break less.This premier virtual event brings together engineers, architects, and product leaders to explore the infrastructure of fearless delivery.

Key Themes:

AI Safety Nets: Guardrails for the flood of automated code.

Edge Resilience: Sub-millisecond evaluation at scale.

Continuous Flow: Moving past the “fixed-release” mindset. Register today to master the tools and patterns required for a fail-safe release environment.

A static test case can check whether the chatbot gives a reasonable answer to a single message, but it can’t capture what happens when a frustrated customer pushes back three times, provides additional information mid-conversation, or threatens to escalate.

DoorDash’s simulator doesn’t use scripted messages at all.

Instead, it uses an LLM to play the customer role, generating dynamic responses based on detailed test scenarios. At each turn, the simulator runs through a structured analysis, asking questions such as:

Was the issue addressed?

Is the conversation making progress?

Does the customer need to provide more information?

Is the conversation going in circles?

Based on this analysis, it decides what a realistic customer would say next.

The test scenarios themselves come from real historical support transcripts, not from engineers imagining what customers might say.

LLMs analyze past conversations from DoorDash’s database and extract structured behavioral profiles, including the customer’s personality traits (frustrated and demanding versus confused and patient), a detailed narrative of the situation, and the specific outcome the customer is seeking. This grounds the simulator in actual customer behavior rather than idealized test cases.

See the diagram below:

The simulator also exhibits realistic escalation patterns. It doesn’t immediately ask for a manager. Instead, it gives the chatbot multiple chances to resolve the issue, only escalating after repeated unhelpfulness or circular exchanges, and re-engaging when progress becomes clear again. This mirrors how real customers behave.

For a simulated conversation to be meaningful, the chatbot also needs realistic backend data. It needs to look up delivery status, check refund eligibility, and pull order details. DoorDash handles this through mock data that blends real production data with scenario-specific test data, preserving timestamps and relationships to keep interactions realistic. This allows them to test complex edge cases, including fraud scenarios and high-value refunds, that their previous testing infrastructure couldn’t handle.

Running hundreds of realistic conversations is only useful if you can tell whether the chatbot actually handled them well. However, manually reading through every simulated conversation would defeat the entire purpose of automation. So DoorDash uses an LLM to evaluate the chatbot’s performance automatically.

Each evaluation is structured as a function that takes the full conversation transcript (including tool calls and backend responses) along with the relevant company policy, applies a prompt asking whether the chatbot correctly followed that policy, and returns a binary pass or fail with the reasoning behind the judgment.

The obvious objection here is that this sounds circular. If an LLM caused the problem by hallucinating, why would you trust another LLM to catch the hallucination?

DoorDash addresses this directly with a concept they call the generator-verifier gap. Acting as a full customer support agent involves complex, multi-step decision-making across a huge range of possible scenarios. That’s genuinely hard. But verifying a single, narrowly-defined behavior is a much simpler task.

For example, “Did the chatbot claim the customer was eligible for a refund when the policy says otherwise?” is a straightforward binary question. The evaluator isn’t trying to be a better support agent. It’s checking one specific thing at a time, and LLMs are much more reliable at these focused binary judgments than they are at open-ended generation.

But DoorDash doesn’t just trust the LLM judge out of the box. They calibrate it against human judgment through a structured process. They collect a sample of conversations, have human experts label each one as pass or fail, run the LLM judge on the same samples, and then measure how often the judge agrees with the humans and how often it misses problems or flags false ones. They analyze the reasoning behind any mismatches, revise the evaluation prompt to fix systematic errors, and repeat until the judge reliably matches human expert judgment. This calibration step creates trust in the system.

The binary nature of the evaluations is important here. DoorDash isn’t asking the LLM to rate the chatbot’s performance on a subjective scale of 1 to 10. They’re asking whether the chatbot followed a specific policy or not. It makes calibration faster, makes disagreements easier to diagnose, and produces more reliable judgments.

With the simulator generating conversations and the evaluator grading them, DoorDash had a working flywheel.

During early launches, human reviewers noticed the chatbot was getting overwhelmed by the sheer volume of data in its context window. Order histories, delivery status updates, refund decisions, and tool call results were all being fed directly to the model as raw event logs. The chatbot would misinterpret a field or suggest a policy that didn’t exist, not because the information was wrong, but because there was too much of it. This runs directly counter to the intuition that giving a model more information should produce better results.

DoorDash hypothesized that the same data that was vital for the chatbot’s reasoning was becoming noise when it came time to generate a response to the customer. Their solution was an architectural layer they called the “case state,” which synthesizes the raw tool history into a structured, intermediate representation. Instead of dumping everything into the context window, the case state distills the relevant facts into a clean format that the chatbot can actually use.

Getting the case state right required the flywheel. Their first attempts at extraction logic didn’t work well at all. Some versions left out critical information, causing the chatbot to miss details that were essential for driving resolutions. Other versions remained too noisy or poorly structured, confusing the model in different ways. Since the simulator could generate numerous realistic conversations in minutes, the team experimented with dozens of different context shapes and prompt strategies in a rapid feedback loop. Each iteration took hours instead of the weeks it would have required through manual testing.

Over 11 iterations, the hallucination evaluation pass rate climbed steadily upward, with a notable dip at iteration 3, where a change actually made things temporarily worse. That dip shows that improvement isn’t linear, even with a flywheel, and that part of the flywheel’s value is catching regressions before they reach real customers.

The final result was a 90% reduction in hallucinations in simulation, and that improvement carried over into production. The strong correlation between their offline metrics and live traffic performance gave the team confidence that the flywheel is a reliable development tool, not just an internal sandbox disconnected from reality.

The simulation and evaluation flywheel has fundamentally changed how DoorDash develops and deploys chatbot improvements, compressing iteration cycles from days to hours and giving them a way to validate changes across hundreds of scenarios before any real customer is affected.

However, the flywheel does come with real tradeoffs worth understanding.

The main limitation is that it can only catch problems for which you’ve written evaluations. If a failure mode isn’t captured by an evaluation, the flywheel is blind to it. DoorDash mitigates this by running a full evaluation suite before every deployment, covering hallucination, tone, and issue classification, but new failure modes can always emerge that existing evaluations don’t cover. This is why human review remains the starting point for every improvement cycle. Despite all the automation, someone still has to look at real conversations and notice what’s going wrong.

Simulation fidelity is another inherent limitation. Even with transcript-derived scenarios and hybrid mock data, synthetic conversations are approximations of real user behavior. DoorDash reports a strong correlation between its offline metrics and production results, which validates the approach, but that correlation isn’t guaranteed to hold for every type of scenario or every kind of system change.

There’s also the question of cost. Running hundreds of LLM-to-LLM conversations per test cycle, plus LLM-as-judge evaluations on each one, requires significant compute. For smaller teams or less critical applications, a lighter-weight version with fewer scenarios and more targeted evaluations might be the pragmatic starting point.

The broader takeaway is that LLM systems require a completely different testing paradigm than traditional software. Since we can’t trace the branch anymore, we need a feedback loop that lets us simulate, evaluate, and iterate fast enough to build confidence before shipping.

References:

2026-05-29 00:31:00

What does it mean for a distributed system to be up?

On a single machine, the answer is straightforward, since a program is either running or it has crashed, and the line between the two is usually obvious from a stack trace.

Distributed systems are not so simple. Every server can report healthy while users are seeing errors, the whole system can be technically working but stuck in a state it cannot recover from on its own, and it can quietly serve wrong data while every dashboard glows green.

None of these may be because of bugs in the conventional sense. They are recurring failure patterns that have been showing up across systems for decades, with names, mechanisms, and standard ways of defending against them.

In this article, we will look at the most significant failure mode patterns in distributed systems and the standard approaches to deal with each of them.

2026-05-27 23:30:43

Sign-up forms were built for humans in browsers, so how do AI agents programmatically register with services?

Enter auth.md. By exposing a single, machine-readable Markdown file at your service root, AI agents can dynamically discover your OAuth Protected Resource Metadata, parse required scopes, and authenticate seamlessly.

With native support in WorkOS AuthKit, you can now implement this protocol out of the box, giving AI tools a standardized, secure way to log into your application.

Airtable holds embeddings for hundreds of thousands of customer databases, and on any given week, roughly three-quarters of them sit completely idle. This fact, more than any algorithm or vendor choice, decided the architecture behind their semantic search system. The interesting story is not which vector database they picked. It is how one peculiar property of their data forced a specific chain of engineering decisions, each one logical only in light of the one before it.

Airtable is a platform where customers build their own database-like applications, organized into “bases” that often hold hundreds of thousands of rows. Their AI feature, called Omni, lets users ask natural-language questions of their data and get answers back in plain English. A separate feature, linked record recommendations, suggests relationships between rows based on meaning rather than exact text matches. Both features depend on the same underlying capability, which is finding the rows in a base that are semantically relevant to a user’s intent.

This might sound simple until scale enters the picture. When a base has half a million rows, fitting all of them into a single LLM prompt becomes infeasible. The model has limits on how much context it can absorb, and even if those limits did not exist, sending that much data on every query would be slow and expensive. The system has to find the most relevant rows fast, then hand those rows to the LLM as context.

In this article, we will look at how Airtable’s data infrastructure team built its architecture, the challenges they faced, the tradeoffs they accepted, and why the choices they made only make sense once their data is properly understood.

Disclaimer: This post is based on publicly shared details from the Airtable Engineering Team. Please comment if you notice any inaccuracies.

The Airtable team anchored their work around four design priorities:

Queries had to return within 500 milliseconds at the 99th percentile, which means the slowest 1 percent of queries still had to come back within that window. Anything slower would make the AI features feel sluggish.

Writes had to be high-throughput since customer data changes constantly, and embeddings have to keep pace.

The system had to scale horizontally to support millions of independent bases.

Everything had to be self-hosted because customer data privacy required keeping it all inside Airtable-controlled infrastructure.

Beyond those priorities, Airtable’s data has three properties worth flagging early:

Customer bases vary enormously in size, with some holding a handful of rows and others holding hundreds of thousands.

Each base is isolated, meaning one customer’s data must never leak into another customer’s results.

Most bases are idle most of the time, a fact that becomes important in a later section.

Before going further, we need to understand what an embedding is.

An embedding is a list of numbers, typically several hundred or a thousand of them, generated by a neural network. The network is trained so that two pieces of text with similar meanings produce numerically close vectors. An embedding can be thought of as a fingerprint of meaning, where similarity in the numbers reflects similarity in what the text says.

One important practical fact is that embeddings are typically about ten times the size of the original data they represent, which is why Airtable cannot just store them alongside the source rows in their primary database. A separate system is needed, one designed specifically for storing and searching across these large numerical vectors.

The asynchronous embedding pipeline that generates and updates these vectors as customer data changes is a separate system, which is the database that stores the embeddings and serves queries against them. After evaluating the landscape in late 2024, Airtable selected Milvus as its database. This is because Milvus supported self-hosting, handled multi-tenancy through its partition model, and let them scale ingestion, indexing, and query execution as separate components. Picking Milvus, though, was the easy part. The hard part was figuring out how to organize Airtable’s data inside it.

See the diagram below:

The first real architectural question was how to slice up customer data so that millions of bases can coexist in one system without leaking into each other.

Two options were on the table.

The first option of shared partitions would put many bases together in the same physical slice and rely on a customer ID filter at query time to keep results separate. This approach uses resources efficiently because there is no partition for every customer, and small bases do not sit around taking up dedicated storage. The cost is that every query carries the overhead of filtering by customer ID, and deleting a customer’s data becomes complicated because the rows are scattered across shared partitions.

The second option of having one partition per base gives each customer their own physical slice. Queries are naturally isolated because they only ever touch one partition. Deletion is trivial since dropping the partition is enough. The cost is operational. With millions of customers, the database ends up managing millions of partitions, which puts pressure on its internal bookkeeping.

Airtable picked the second option. The reasoning was that strong physical isolation made permission boundaries obvious, deletion stayed simple, and queries avoided the latency cost of post-query filtering.

Then the team ran into a problem.

At around 100,000 partitions inside a single Milvus collection, performance fell off a cliff. Partition creation latency went from about 20 milliseconds to roughly 250 milliseconds. Loading a partition started taking more than 30 seconds. Adding hardware would not have fixed any of this, because the issue was not a shortage of capacity. The issue was that too many partitions in one collection overwhelmed the bookkeeping that the database needed to keep them organized.

The fix was hierarchical capping.

Each Milvus cluster now holds 400 collections, and each collection holds at most 1,000 partitions, which limits any single cluster to 400,000 bases. As the customer base grows, Airtable provisions new clusters rather than packing more partitions into existing ones.

The structure trades some operational complexity for predictable performance at every layer. See the diagram below:

Permissions deserve a brief discussion before we move further. Milvus does not know anything about who is allowed to see what data. It just stores embeddings and returns matches. Permission checks happen later, when the application layer takes the row IDs returned by Milvus and fetches the actual rows from Airtable’s primary database. This split keeps the vector search system focused on a single job, which is similarity search, and authorization stays where authorization always has lived.

The pattern of hierarchical capping shows up across distributed systems, from sharded relational databases to message broker topics. Any flat namespace eventually hits a wall, and the fix is almost always to introduce another level of grouping above it. Recognizing this principle is more transferable than memorizing the specific numbers.

See the diagram below that shows the query flow:

Once the data has been sliced up, the next question is how to actually search inside each slice.

Vector search at scale involves an unavoidable tradeoff with three currencies, namely memory, latency, and recall.

Recall means the percentage of truly relevant results that show up in a query response. Every vector index pays for performance with one of these three currencies, and no option gets all three for free.

Airtable benchmarked three index types, and the results map cleanly onto this triangle.

HNSW, which stands for Hierarchical Navigable Small World, builds a graph where similar vectors are connected to each other. A query starts at a small set of entry points near the top of the graph and follows the connections downward, hopping from one vector to its nearest neighbors until it converges on the closest match. HNSW is fast at lookup time, achieves recall in the 99 to 100 percent range, and behaves predictably under load. The cost is that the entire graph has to live in memory, which makes HNSW the most memory-hungry of the three options.

IVF-SQ8 takes a different approach. The IVF part clusters vectors into groups, so a query only has to search inside the most relevant group rather than the full dataset. The SQ8 part compresses each number in the vector from four bytes to one byte, shrinking the index dramatically. The footprint becomes much smaller, but the compression introduces approximation error that lowers recall.

DiskANN keeps most of the index on solid-state storage rather than in memory. It scales to enormous datasets per node because holding everything in RAM is not required. The cost is that every query touches disk, and disk is slower than memory, so query latency rises.

Airtable chose HNSW. Given the priorities from earlier in the design, this was almost the only available answer. A 500-millisecond latency target ruled out DiskANN’s higher per-query cost. The recall directly determines how good Omni’s responses feel to users, which makes the precision of HNSW worth paying for. The memory cost remained a real concern, but Airtable had a separate way to handle it.

The right index does not exist in the abstract. It exists relative to the priorities and constraints of a specific system. If Airtable’s latency tolerance had been looser, DiskANN would have been an interesting candidate. If their recall tolerance had been lower, IVF-SQ8 would have saved them money. None of the three options is universally better than the others.

This same triangular pattern repeats across systems engineering. Caching works the same way, where hit rate trades against memory and consistency. Database indexes work the same way, where read speed trades against write speed and storage. The technologies stop feeling intimidating once the underlying tradeoff becomes recognizable.

Picking HNSW solved the latency and recall problem, but pushed the entire cost onto memory. Across hundreds of thousands of bases, that memory bill adds up quickly. The team needed a way to shrink it without giving up the index they had just chosen.

The solution came from looking at how customers actually use Airtable. When the team analyzed access patterns, they found that only about 25 percent of bases were read from or written to in any given week. The other 75 percent sat completely idle. This was not an anomaly. It reflected something real about how people work. Users tend to focus intensively on one base for a stretch of time, set it aside for weeks or months, and then come back when the project requires their attention again.

Milvus supports offloading partitions from memory to storage and reloading them within seconds. With that capability, Airtable could keep only the hot partitions in memory and push the cold ones out. When a user opens a base that has not been touched in weeks, the partition reloads quickly enough that the user notices a brief warm-up rather than a failure.

This approach works for Airtable specifically because their access pattern is bursty and bimodal. If usage were spread evenly across all bases, with every customer constantly touching their data at the same low rate, cold offloading would not save much. The hot set would be the entire dataset. Airtable’s pattern is the opposite. A small fraction of bases is active at any moment, and the active set rotates over time.

What made this work was measurement.

The Airtable engineering team did not guess about access patterns and did not reach for a generic optimization. They looked at the data, found a property of their actual usage, and built around it. The HNSW choice became economically viable because of this measurement, and the decisions in this system reinforce each other in a way that would not be obvious from evaluating any one of them in isolation.

The traditional approach to disaster recovery in databases is backup and restore. Snapshots get taken regularly, stored somewhere safe, and used to rebuild the system if something catastrophic happens. Airtable went a different direction.

Their recovery path is to spin up a fresh Milvus cluster and re-embed customer data from the source. The most-used bases get re-embedded first so that most users see normal service quickly. The remaining bases get rebuilt lazily as customers access them. There is some compute cost during recovery and some delay before every base is fully back, but the path is conceptually simple and works across many failure modes at once. Corruption, model migrations, and certain data residency changes all reduce to the same procedure.

This option is only available because Airtable has already built an asynchronous embedding pipeline as part of earlier work. That pipeline normally generates new embeddings whenever customer data changes, processing them in the background rather than blocking writes. Recovery is not a separate system created for emergencies. It is just the existing pipeline running against an empty cluster.

The system built by Airtable involves four major tradeoffs: how to partition the data, which index to use, when to keep data in memory, and how to recover from failure.

Every one of those decisions traces back to the same upstream fact about Airtable’s tenants. Their customers run small, isolated bases that are mostly cold most of the time. Changing any one of those properties can cause the design to fall apart.

For example, a workload where every base is hot all the time would make cold offloading useless. A workload requiring strict consistency would not tolerate the asynchronous embedding pipeline. A workload with very small per-customer datasets might benefit more from shared partitions than from one-per-base.

The technologies Airtable uses, including Milvus, HNSW, and the rest, are interchangeable in principle. The same system could be rebuilt on a different infrastructure, and the architectural reasoning would still hold. What is harder to replicate is the discipline of letting the data drive the architecture rather than the other way around.

References:

2026-05-26 23:31:10

On June 10, GitLab Transcend streams live from London with an agenda built for practitioners like you. You can expect an agenda that’s full of keyboard moments with live demos of Duo Agent Platform, agentic AI use cases from your peers, and The Developer Show hosted live by Senior Developer Advocate, Colleen Lake. Register today.

GitLab Transcend streams live from London on June 10 (with regional replays for APAC and AMER on June 11). Register for free today.

In November 2023, Vercel quietly shipped an internal platform that cut its build provisioning time from 90 seconds to 5. That sounds like a story about making things faster. It is, but only on the surface. The real story is that Vercel got faster by accepting a harder constraint, building a more complicated foundation, and then layering three separate optimizations on top of it. The 18x improvement is the result.

Vercel is a deployment platform for web applications. When a developer pushes code to a connected repository, Vercel pulls that code, runs the build process (compiling, bundling assets, packaging the output) on its own servers, and then deploys the result to a global edge network of geographically distributed servers that deliver the site to end users. The build step happens on Vercel’s infrastructure, which means thousands of customers run their build scripts on machines that Vercel manages. Every push has to feel instant to the developer, has to run safely on shared hardware, and has to scale through traffic spikes without degrading.

The platform that handles all of this is internally codenamed Hive, and it has been powering Vercel’s builds since late 2023.

Hive is the reason behind the 90-to-5 transformation. In this article, we examine the constraints Vercel faced, the choices they made in response, and the optimizations that produced the speedup.

Disclaimer: This post is based on publicly shared details from the Vercel Engineering Team. Please comment if you notice any inaccuracies.

The architecture rests on a single foundational assumption. Hive operates as if every piece of code it executes might be malicious, running on machines shared by many tenants at once. That assumption influences everything that follows.

It matters because the trust calculation flips entirely between two situations. When a team runs its own code on its own server, the goal is performance and convenience. The code trusts the machine, and the machine trusts itself. When the code comes from someone else and runs on shared hardware, the calculation changes. The platform has to assume the code might try to break out of its sandbox, read another customer’s secrets, or interfere with builds running on the same machine. This is hostile multi-tenancy, and it is a different infrastructure problem from running cooperative workloads.

Vercel sits squarely in this harder category.