2026-06-08 15:42:08

Howdy, folks! Today’s roundup is mostly a bunch of follow-ups to posts I wrote before. It’s very hard to decide when to post about a particular topic, and it often happens that some relevant news story or piece of data comes out a little bit later. These roundups are a good way of cleaning up those loose ends.

Today we start with a truly wacky policy proposal by the esteemed Thomas Piketty…

Unlike many people, I never pretended to have read Thomas Piketty’s book, Capital in the Twenty-First Century. I simply didn’t read it. I did read a number of the papers that the book was based on, which is often a better and quicker way of getting the key points of a book like that. I thought those papers were a good and important addition to the economics literature, even if the messy reality of inequality didn’t always fit the simple story Piketty told, and the data he relied on was less reliable than we might want.

Despite the limitations of Piketty’s work, it sparked a long-overdue and generally healthy debate about inequality. And Piketty’s basic policy solution — tax rich people more — was pretty reasonable, even if his proposed numbers were too extreme. I did roll my eyes when Piketty stood on a stage at an academic convention and accused Greg Mankiw of being in the pocket of rich people.[1] But overall his work seemed pretty serious and often reasonable.

However, after years of relative silence, Piketty has burst back onto the scene with some work that seems very unreasonable. He and his team at the World Inequality Lab — which includes his longtime co-authors Emmanuel Saez and Gabriel Zucman — have come out with a grand plan for fixing the world. And for the most part, it’s total nonsense.

Piketty described the new plan in a thread on X. Its main focus, perhaps surprisingly, is not inequality — it’s climate change!

First of all, Piketty’s baseline climate change scenarios appear to be based on a very outdated model — the RCP8.5 scenario, an extreme projection that essentially all serious climate scientists have now rejected. This choice of baseline suggests that Piketty et al. were trying to find ways to justify maximal policy intervention, instead of starting from the science.

Piketty’s preferred solution to climate change is degrowth. He envisions detailed central planning to achieve deliberate impoverishment of large portions of the world’s population — mandated reductions in the consumption of various specific goods, including food.

In addition to the dubious morality of deliberately impoverishing untold millions of human beings based on scientific models that have already been rejected, this kind of scheme is just utterly unworkable. Back in 2021, when I wrote about why degrowth is a political nonstarter, I declared that “implementing the kind of reallocation schemes that degrowthers throw around with abandon would require global economic planning that would put Gosplan to shame.” Piketty knows this, and thinks it’s a good thing.

Even more ridiculously, Piketty envisions a global fiscal authority to carry out this insane plan via global taxation:

Let’s set aside the obvious fact that countries are just not going to agree to give up their spending and taxation power — even the EU refuses to have a fiscal union, and it’s rather insane to imagine Indians and Chinese people agreeing to let themselves be taxed by Tanzania and Nigeria — and just point out how this proposal ignores the basic economics of climate change.

Climate change is a global negative externality — the reason countries don’t all just impose their own local carbon taxes and solve the problem is that there’s an incentive to free ride and let other countries handle it. That exact same free rider problem applies to the global fiscal authority that Piketty envisions. There’s a clear incentive for any country to simply drop out of the fund and let other countries fix climate change for them.
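To make the free-rider logic concrete, here is a minimal sketch of a public-goods game in Python. Every number and name in it (`N_COUNTRIES`, `COST_OF_CONTRIBUTING`, `BENEFIT_PER_CONTRIBUTOR`) is a made-up assumption chosen to illustrate the incentive, not an estimate of anything in the real world.

```python
# Hypothetical public-goods game: all parameters are illustrative assumptions.
N_COUNTRIES = 10
COST_OF_CONTRIBUTING = 8.0     # what a country pays if it stays in the fund
BENEFIT_PER_CONTRIBUTOR = 3.0  # climate benefit EVERY country gets per contributor


def payoff(contributes: bool, others_contributing: int) -> float:
    """Net payoff to one country, given how many OTHER countries contribute."""
    total_contributors = others_contributing + (1 if contributes else 0)
    benefit = BENEFIT_PER_CONTRIBUTOR * total_contributors
    cost = COST_OF_CONTRIBUTING if contributes else 0.0
    return benefit - cost


def gain_from_dropping_out(others_contributing: int) -> float:
    """How much better off a country is if it quits the fund."""
    return payoff(False, others_contributing) - payoff(True, others_contributing)


if __name__ == "__main__":
    for others in range(N_COUNTRIES):
        print(others, payoff(True, others), payoff(False, others),
              gain_from_dropping_out(others))
```

With these made-up numbers, a world where everyone pays in beats a world where nobody does, yet each country still comes out ahead by dropping out, whatever the others do. That is the same incentive problem that stops countries from simply imposing their own carbon taxes.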

It’s obvious that Piketty et al. are just looking for a reason to levy high taxes on the global rich. This is the “World Inequality Lab” we’re talking about here. And it probably made sense to try to ally with other factions of the progressive movement — degrowthers, “decolonial” leftists, and so on — in order to get support for their desired policies.

But the result here is not going to be a good one for Piketty, Saez, Zucman, and their team. No country is actually going to embrace the idea of a global fiscal authority to fight climate change. In calling for this sort of thing, Piketty et al. simply make themselves look less like serious economists and more like opportunistic activists on the fringe of a “green” movement that’s already in steep decline.

In a post this week, I noted that “tokenmaxxing” — simply using as much AI coding output as you can and hoping that it pops out something valuable — is hitting its limits:

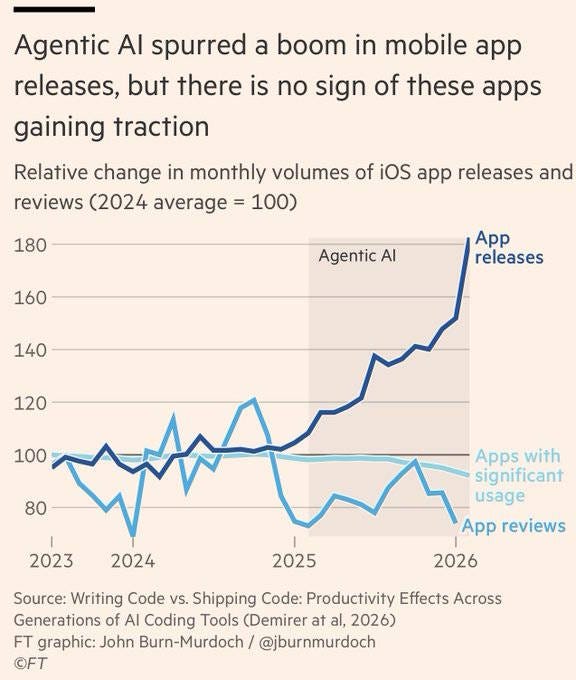

Well, here’s a follow-up. John Burn-Murdoch of the Financial Times recently made this nice chart, using data from the Demirer et al. (2026) paper that I discussed in my post:

The number of apps with significant usage is actually going down in the age of AI, even as people are releasing floods of new apps into the world. Meanwhile, Bob Elliott notes that since generative AI was created, there has been a rapid acceleration in many measures of text output, even though the economy hasn’t accelerated much:

And Sam Altman is now warning of a significant pullback on AI spending — the first such pullback since generative AI appeared.

This doesn’t look like a simple story of “bottlenecks” and “weak links” — if it were that, we wouldn’t see so many new apps and e-books hitting the market. The deeper story here may be that demand for many of the things that generative AI produces is a lot more inelastic than we thought. The things we really want a lot more of may not actually be the things that generative AI is yet equipped to provide. As the AI industry advances, of course, that will probably change.

A couple of weeks ago, I wrote a post about how all militaries not based around large masses of cheap drones are now functionally obsolete:

This includes America’s military, which is based around a few big expensive “platforms” like fighter jets, aircraft carriers, and tanks. I’m not saying those weapons will all be useless in future wars, but if that’s all you have, and you don’t have masses of cheap drones, you will lose wars to countries that do have masses of cheap drones — such as China, if they ever get serious about turning their mighty industrial base toward making billions of weaponized drones.

The Lowy Institute has a good report explaining why Western militaries seem incapable of learning to use the essential weapons of modern warfare. They write:

Western military institutions…are failing to energetically learn from modern wars. Despite four years of unprecedented visibility into Ukrainian battlefield innovations, and the recent war in Iran, Western forces have not institutionalised key lessons into doctrine, force structure, or procurement priorities…The recent war in Iran has confirmed and amplified many of Ukraine’s lessons, particularly on the centrality of drone warfare, the inadequacy of Western counter-drone capabilities, [and] the effectiveness of cheaper long-range strike systems…And yet the response of Western institutions…has been characterised by rigidity, inertia, and what can be called a humility deficit: an unwillingness to genuinely confront the implications of what is being demonstrated in real time on real battlefields.

The U.S. military could, of course, learn from Ukraine — currently the #1 best country in the world in drone warfare. But the unrelenting hostility and disdain toward Ukraine from Donald Trump and the MAGA movement has prevented America from taking advantage of Ukraine’s expertise:

The Trump administration’s hesitancy in signing a major drone deal with Ukraine is slowing the U.S. military down in an area where it’s already trying to play catch-up…[T]he U.S. has so far refused to embrace Kyiv as a partner in its drone development…

[E]ven with senior Pentagon officials — including Defense Secretary Pete Hegseth and Army Secretary Dan Driscoll — lauding Kyiv’s drone abilities, the Trump administration is still biding its time on taking full advantage of the Ukrainian capabilities, a delay that experts say is potentially kneecapping the U.S. military…

“I don’t know what the hang-up would be in denying ourselves the ability to take advantage of that. I don’t think there’s any good reason,” Rebeccah Heinrichs, a senior fellow at the Hudson Institute think tank, said of Ukraine’s drone capabilities…One former official [called] the hold-up “lethargy” on the part of the Trump administration and “a certain amount of hostility towards Ukraine coming from the very top.”

MAGA basically created a fantasy world where Russia is a defender of Western values, Ukraine is somehow an arm of global wokeism, Ukraine is part of Russia’s legitimate “sphere of influence”, and Russia is a mighty superpower with a manly martial culture that would eventually be able to grind the Ukrainians down and inevitably triumph.

The problem with this fantasy was that it was fantasy, and if you believe in fantasy too long, reality tends to intervene. By allowing themselves to believe their own anti-Ukraine mythology, Trump and his followers are cutting themselves — and the U.S. military — off from access to crucial modern military technology.

In my last post, I argued that Europe should put tariffs and other trade barriers on Chinese imports, in order to protect its own strategic defense-related industries. But this is actually a lot harder than it sounds. Even if Europe blocks final goods from China, China can still export intermediate goods to “third countries” that assemble those goods for final export to Europe. In fact, China has done this in response to American tariffs, reducing (though not eliminating) the decoupling effect.

But if that happens, it’ll be very good for the “third countries”! Assembly work isn’t the most valuable part of the supply chain, but it does create value, and it does create lots of jobs, and it does create local companies that then have the potential to climb up the value chain someday and start making their own components. In fact, this is exactly what China did! Back in the 2000s, China did a lot of the low-value assembly work for components made in Japan, Korea, and Taiwan; now, most of that has been onshored, but it was still important for China to go through that initial phase of learning to slap together iPhones and computers and cars.

So if putting tariffs and other trade barriers on Chinese-made goods just ends up shifting assembly to poor countries…well, that’s not the worst outcome in the world. It’ll help counteract Chinese companies’ home bias — their natural tendency to want to build factories in China instead of overseas.

In fact, as the WSJ reports, this is already happening:

“Made in China” is becoming “made by China”—all over the world…Faced with higher Western tariffs and weak demand at home, many Chinese factories are moving abroad, making everything from appliances to automobiles everywhere from North and South America to Eastern Europe…In Mexico, Chinese investment in industries such as the automobile sector generated more than 100,000 jobs from 2020 to 2023, according to one analysis…In 2024, Chery Automobile, China’s top car exporter, helped to rescue a small factory in Barcelona that struggling Japanese automaker Nissan no longer wanted…

Jeep maker Stellantis this month said it planned to build EVs with two separate Chinese companies in Spain and France. Ford and Geely are in discussions about a potentially similar deal in Spain, and have also discussed whether the collaboration might extend to the U.S…Midea, the home appliance maker…opened a roughly $100 million factory in Brazil making refrigerators and washing machines in 2024. Its subsidiary, Welling Auto Parts, opened its first overseas manufacturing facility in Mexico last year.

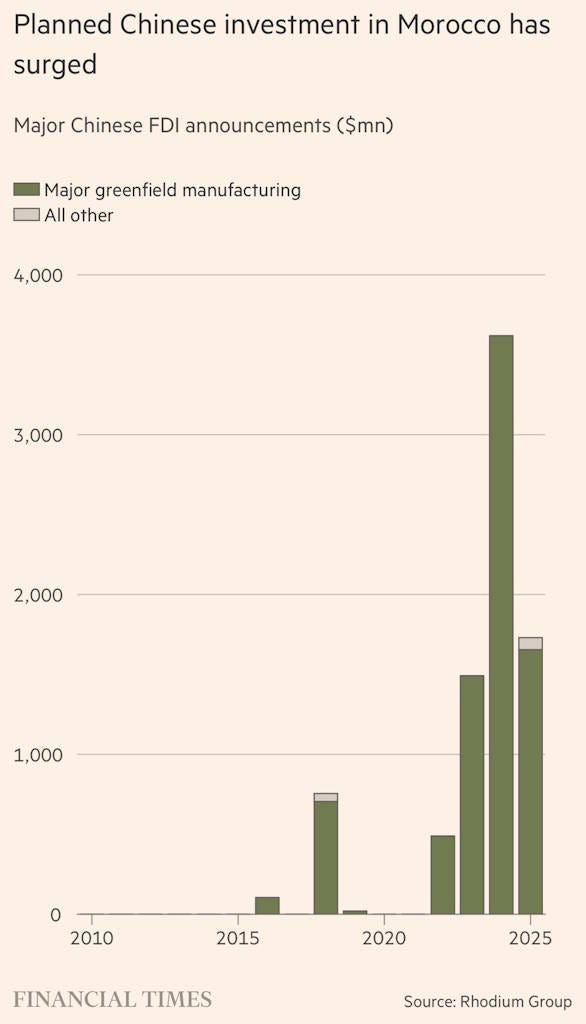

In order to get around EU tariffs, Chinese companies are fueling a Moroccan manufacturing boom:

This has helped accelerate Morocco’s growth to 5%. That’s in the range where growth starts meaningfully transforming a country.

So even in the worst-case scenario where trade barriers don’t reduce dependence on Chinese supply chains, they can help spread the blessings and bounty of industrialization to a bunch of poor countries who need the factories more than China does. The flying geese must fly!

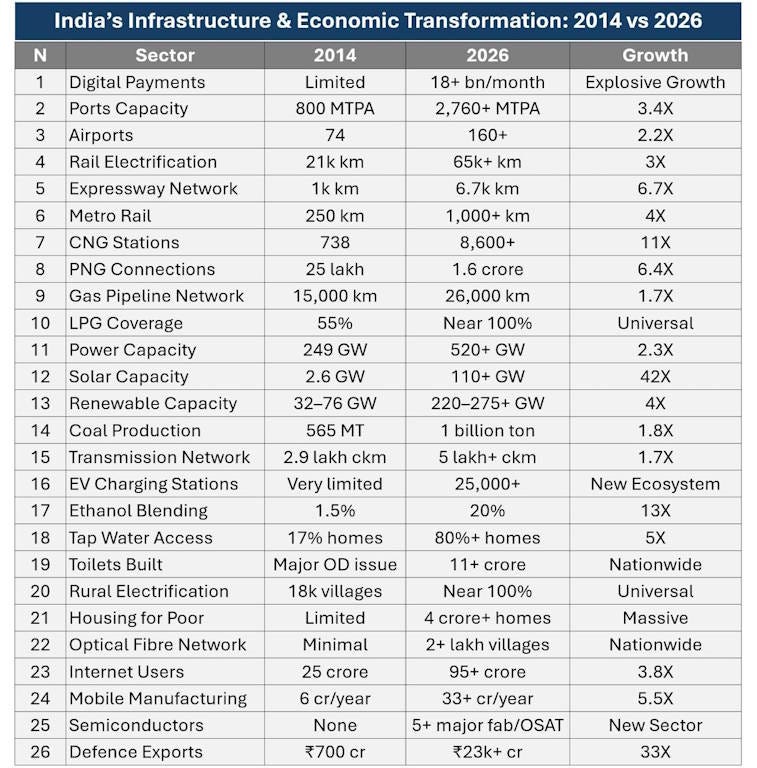

I recently came across this chart, showing various aspects of India’s infrastructure boom:

This is all pretty incredible. India’s poor infrastructure has long been regarded as a bottleneck to urbanization, manufacturing, and economic growth in general. Whatever else you think of the government of Narendra Modi, it has built a lot of infrastructure.

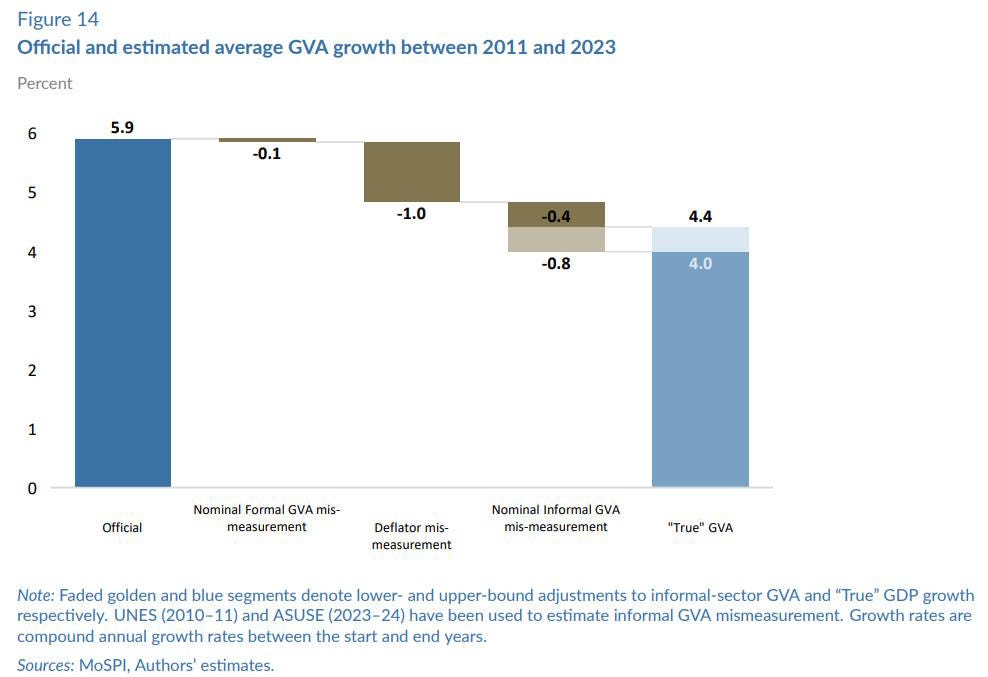

But over that same period, overall growth has been slower than we’d like to see. Anand, Felman, and Subramanian have a recent paper in which they argue that India’s GDP growth rate from 2012 to 2023 was overstated by somewhere between a quarter and a third:

India’s annual economic growth during the boom years between 2005 and 2011 may have been underestimated by about 1–1½ percentage points on average, and subsequent growth between 2012 and 2023 may have been overestimated by about 1½-2 percentage points…The first methodological issue leading to the misestimation is that the economy’s formal sector has been used as a proxy for the vast informal sector, even though the latter was disproportionately hit after 2015 by demonetization, the introduction of the goods and services tax, and the COVID-19 pandemic…The second methodological issue…is that the deflators for many sectors have been based on commodity prices, which have moved sharply relative to others. [emphasis mine]
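As a back-of-the-envelope consistency check, the two ways of stating the overestimate (1½ to 2 percentage points, and a quarter to a third of reported growth) can be combined to see what official growth rate they jointly imply. The sketch below assumes the low ends of the two ranges go together and the high ends go together; that pairing is my assumption, and the resulting figures are implied by the arithmetic rather than reported in the quoted passage.

```python
from fractions import Fraction

# Overestimate in percentage points, from the quoted passage (1.5 to 2).
OVERESTIMATE_PP = (Fraction(3, 2), Fraction(2))
# Overstated share of official growth, from the text (a quarter to a third).
OVERSTATED_SHARE = (Fraction(1, 4), Fraction(1, 3))


def implied_official_growth(overestimate_pp: Fraction, share: Fraction) -> Fraction:
    """If x points is a fraction f of official growth g, then g = x / f."""
    return overestimate_pp / share


# Assumption: pair low end with low end and high end with high end.
implied_official = [
    implied_official_growth(x, f) for x, f in zip(OVERESTIMATE_PP, OVERSTATED_SHARE)
]
# Implied corrected growth = official growth minus the overestimate.
implied_corrected = [g - x for g, x in zip(implied_official, OVERESTIMATE_PP)]

if __name__ == "__main__":
    print([float(g) for g in implied_official])
    print([float(g) for g in implied_corrected])
```

Under that pairing, both ends of the range point to the same official growth rate, which suggests the two descriptions of the overestimate are at least consistent with each other.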

If Anand et al.’s estimates are right — and they marshal a huge amount of supporting evidence — then it suggests that Modi’s tenure in office has been mixed. A couple of big policy missteps — demonetization and a botched tax rollout — hurt the informal sector of the Indian economy, while massive infrastructure investments have helped.

The implication here is that Modi and his successors should lean into what works. They should focus more on marshaling national resources and applying those resources toward rapid growth — two things that China did very well in the 1990s and 2000s.

I’ve been writing over the years about how the right’s favorite immigration economist does shoddy, subpar work. Despite having a job at Harvard, George Borjas — whose analyses miraculously always seem to find that immigration is much worse than all the other economists think it is — consistently uses both poor data and flawed methodology. In another roundup back in February, I pointed out how Jianxin He and Adam Ozimek had found yet another example of Borjas doing subpar economics:

Borjas’s February 2026 working paper attempted to answer whether H-1B workers earn less than comparable native-born workers…[His] findings result from substantial data errors.…The most significant mistake is a…mismatch between his H-1B and native-born samples: the H-1B applications span 2020-2023, while the ACS data covers just 2023…[Accounting for this discrepancy cuts] the wage gap roughly in half…

The second error stems from controlling for geographic wage drivers using each worker’s PUMA (public use microdata area)…The problem is that Dr. Borjas uses the PUMA where visa holders work alongside the PUMA where native workers live. Consider a native-born software developer working at Google in Mountain View who resides in a cheaper area like Fremont. If residential areas have lower average wages than business districts, this mismatch systematically inflates the apparent native wage and negatively biases the H-1B wage gap.

Again and again and again, economists catch Borjas at it. It seems pretty obvious that Borjas simply wants to conclude that immigration is bad, and doesn’t much care about methodological errors as long as his analyses reach his desired conclusion.

In order to fight back against this accusation, Borjas decided to accuse his critics of ideologically-driven research instead. In a paper with Nate Breznau, he wrote:

Our study exploits an opportunity to observe 158 researchers working…during an experiment. After being asked their position on immigration policy, they used the same data to answer the same empirical question: Does immigration affect public support for social welfare programs? The researchers estimated 1253 alternative regression models, and the estimated impacts ranged from strongly negative to strongly positive. We find that teams composed of pro-immigration researchers estimated more positive impacts of immigration on public support for social programs, while anti-immigration teams estimated more negative impacts. The differences arise because different teams adopted different model specifications. The underlying research design decisions are the mechanism through which ideology enters the process of producing parameter estimates.

The idea here seems to be to turn one researcher’s clear pattern of errors into a he-said/she-said sort of situation. If all researchers just engineer results based on their ideology, then why should we selectively get mad at Borjas for doing what everyone else does too?

But — surprise! — it turned out that this Borjas paper also contained critical errors that invalidated the whole result! Katrin Auspurg and Josef Brüderl pointed out in a comment paper that if you fix one simple coding error in Borjas’s analysis, his entire result about ideologically-driven research just vanishes into thin air:

Borjas and Breznau…recently reported that researchers’ ideology influences their empirical findings. Although we were able to reproduce B&B’s numerical results, our reanalysis shows that the reported association is not robust. Specifically, the association hinges on a coding error. Data from four teams that contradict the ideology hypothesis were excluded from the analysis due to idiosyncratic variable coding. Correcting this error renders the ideology effect no longer statistically significant. Also, B&B employed a different outcome variable and weighting scheme to that used in a previous paper based on the same data. These two analytical decisions further contribute to the observed ideology effect. Correcting the coding error or using the same specification as in the previous paper renders the ideology effect indistinguishable from zero. Therefore, we conclude that B&B do not provide robust evidence of ideological bias in this context. Instead, the reported association appears to be a statistical artefact resulting from questionable modelling decisions. [emphasis mine]

How does this just keep happening again and again, and why is it always Borjas?

In any case, I think the implication here is pretty clear: Friends don’t let friends cite George Borjas.

[1] Greg Mankiw makes his money by selling textbooks.

2026-06-06 16:10:50

As regular readers of this blog know, I’m pretty ambivalent about trade barriers as an economic policy. On one hand I think targeted tariffs and other trade barriers can be used to protect strategic industries from surges in underpriced import competition, especially by geopolitical rivals. On the other hand, broad tariffs like the ones Trump has used are generally bad — they hurt domestic manufacturing by making intermediate goods more expensive, they limit scale for domestic companies, etc.

And yet I do think that Europe should erect much higher trade barriers — both tariffs and non-tariff barriers — against Chinese high-tech manufactured export goods. The basic reason is that it’s important to protect Europe’s nascent modern defense industry. But I also think that blocking Chinese exports might nudge China to change its economic model to one that benefits regular Chinese people more.

In other words, China-Europe trade has some unusual characteristics right now that make trade barriers a much smarter idea than usual.

First, let’s talk about what’s going on with the Chinese economy. For the past few years, China’s government has unleashed an unprecedented torrent of subsidies for high-tech manufacturing industries. This — along with structural factors about how the Chinese economy works — has resulted in China making big global market share gains in industries like autos, pharmaceuticals, and shipbuilding. No one knows just how much of China’s market share gains are a result of government support, but as Paul Hannon reports, the OECD estimates that it’s more than half:

Government subsidies have driven most of the increase in the global market share of Chinese businesses over the past two decades as they have received three to eight times more support than their competitors, the Organisation for Economic Cooperation and Development said Monday…The analysis is based on the OECD’s Manufacturing Groups and Industrial Corporations database, which includes subsidy estimates and financial information for 525 of the world’s largest manufacturing groups spread across 15 key industrial sectors…[T]he OECD database tracks the amounts that firms are actually given…

“Industrial firms based in China receive more subsidies than their competitors based everywhere else,” the OECD said…For Chinese businesses, however, the share of [market share] gains explained by subsidies was…60%.

The Rhodium Group has a deeper dive into China’s new industrial policy. Essentially, instead of selecting a few industries to specialize in, China’s leaders just want the country to dominate everything — not just manufacturing, but services as well:

China’s industrial strategy…is becoming more systemic and pervasive, extending across all layers of production, from upstream inputs and industrial equipment to downstream applications, services, and frontier technologies…China’s next-generation industrial policy represents a shift from targeted sectoral intervention to what can be described as an “industrial policy of everything.”…While [Made in China 2025] focused on a defined set of strategic emerging industries, current policy frameworks extend across mature sectors, foundational supply chain nodes, and frontier technologies alike…

Even in mature industries facing overcapacity and severe price pressures, Beijing is providing continued support and pushing firms to upgrade production technologies to gain market share and lower production costs, rather than cutting capacity…Services, relatively neglected in earlier rounds of industrial policy, are getting more attention[.]

Basically, China does not want[1] to exist in a trading system, where goods are traded for other goods. China wants to make all the goods, and have other countries pay for those goods with debt.

There are two basic reasons China is doing this. The first is pure mercantilism; China is trying to export its way out of the economic slump created by its housing bust. The second, as the Rhodium Group report explains, is power. If China controls key segments of other countries’ supply chains, it can use the threat of export controls to bring those countries to heel.

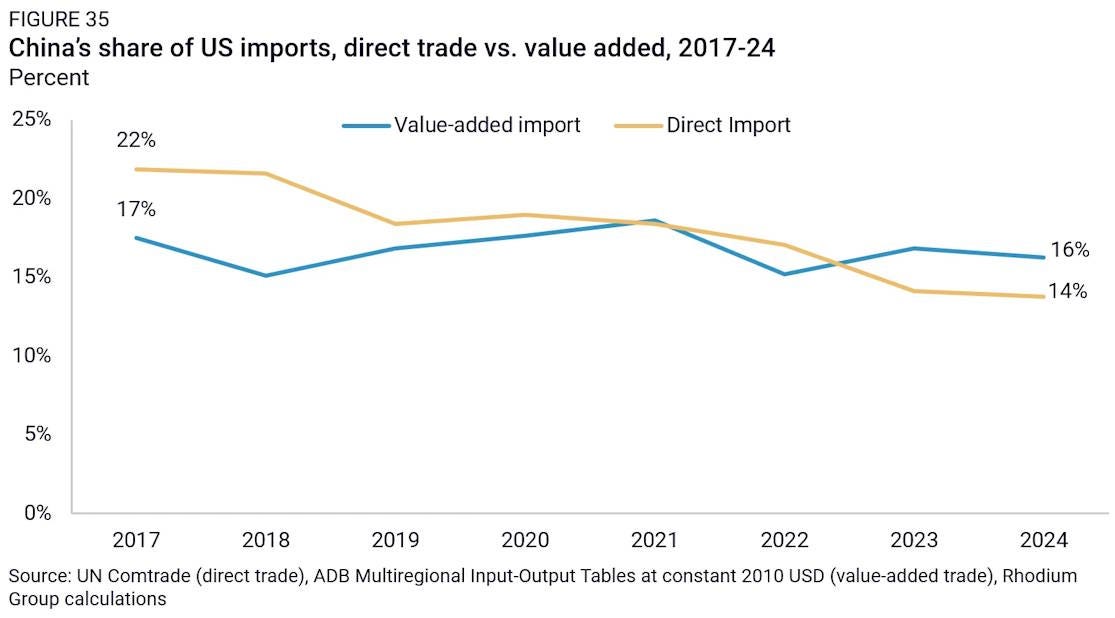

What should other countries do about this? The U.S. has chosen to respond with tariffs. These are of limited effectiveness, but they do appear to be doing something; even when you take into account the intermediate goods that China exports to America via third countries like Vietnam and Mexico, China’s share of America’s imports has fallen slightly from 2021 (or from 2017):

There are almost certainly much more effective tools that the U.S. could use to accelerate the decoupling of the two economies and reduce dependence on China…but since when has U.S. policy been driven by a desire for effectiveness?

The question is now what Europe and other developed countries — who have marginally more rational decision-making processes — are going to do about China’s attempt to dominate all tradable industries. One proposal — which Germany seems to be following so far — is to do nothing, and to simply let China make all the physical objects in the world, while focusing on services instead. This is essentially the proposal of Tej Parikh, who writes that China “has a comparative advantage in industrial policy itself”, and that trying to compete with China in any manufacturing industry is therefore doomed to fail.

This annoys me, because it represents a deep misunderstanding of the entire concept of comparative advantage! The theory of comparative advantage is about traded goods; it’s about which traded goods can be produced relatively more cheaply by which countries. If I’m better at making TVs than cars, and you’re better at making cars than TVs, then I’ll make TVs and you’ll make cars and then we’ll trade. That’s how comparative advantage works. This is why you cannot have a “comparative advantage in industrial policy”. Industrial policy is a production input, not a traded good. No one buys and sells industrial policy!

“OK, Noah,” you’re about to say. “Stop being a pedant. You know what he means. He means China is better at making anything and everything, because they use industrial policy for everything.”

Yes, I know that’s what he means. And yes, this reflects a deep misunderstanding of the concept of comparative advantage.[2] Even if one country is better at making everything, it doesn’t have a comparative advantage in everything. That’s impossible. Every country has a comparative advantage at something!

That’s why in the theory of comparative advantage, trade is balanced. In the real world, China’s massive trade surplus means that trade is not balanced; much of the time, China isn’t trading goods for other goods, it’s trading goods for IOUs. That kind of unbalanced trade is something that just doesn’t happen in the theory of comparative advantage.
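Here is a minimal worked example of why a country can be better at making everything and still not have a comparative advantage in everything. The two countries ("A" and "B") and the labor-hours figures are hypothetical numbers I picked for illustration; only the TVs-and-cars setup comes from the discussion above.

```python
# Hypothetical labor hours needed to make one unit of each good.
# Country A is faster at BOTH goods, so it has the absolute advantage in both.
HOURS_PER_UNIT = {
    "A": {"tv": 1.0, "car": 10.0},
    "B": {"tv": 4.0, "car": 20.0},
}


def opportunity_cost(country: str, good: str, other_good: str) -> float:
    """Units of other_good given up to make one unit of good."""
    hours = HOURS_PER_UNIT[country]
    return hours[good] / hours[other_good]


def absolute_advantage(good: str) -> str:
    """Country that needs the fewest hours to make the good."""
    return min(HOURS_PER_UNIT, key=lambda c: HOURS_PER_UNIT[c][good])


def comparative_advantage(good: str, other_good: str) -> str:
    """Country with the lowest opportunity cost of making the good."""
    return min(HOURS_PER_UNIT, key=lambda c: opportunity_cost(c, good, other_good))


if __name__ == "__main__":
    for good, other in (("tv", "car"), ("car", "tv")):
        print(good, absolute_advantage(good), comparative_advantage(good, other))
```

In this sketch, A makes both goods with fewer hours, but a car costs A ten TVs and costs B only five, so B has the comparative advantage in cars. The opportunity cost of a car in TVs is just the reciprocal of the opportunity cost of a TV in cars, so whichever country is relatively cheaper at one good must be relatively more expensive at the other.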

OK, so that was a bit of a rant. The real point here is that Parikh’s preferred solution — that every country except China should focus on innovation, and leave the making of everything to the Chinese — is simply ridiculous. First of all, it doesn’t deal at all with the issue of supply chain vulnerabilities. Second of all, China has an industrial policy for innovation, too — in fact, it’s China’s most important industrial policy. The idea of “We’ll do the innovation while China makes everything” sounds straight out of 2002 — and it was obviously wrong even back then.

The cold, hard fact is that Europe needs to do something, or risk losing its sovereignty to foreign conquerors. China — the very country that Europe’s free-traders are now suggesting should supply every single manufactured good — is waging a proxy war against Europe even as we speak. China trains Russian soldiers, provides Russia with battlefield intelligence in its war against Ukraine, helps out Russian defense manufacturers, and even does some defense manufacturing for Russia — in addition to buying Russian oil and keeping the Russian economy afloat.

And this is all while Russia is actively threatening to invade the EU. If Russia eventually does invade, Europe will need to make large amounts of drones to resist the invasion. All militaries that are not centered around large masses of drones are now obsolete — when NATO conducts war games against drone-equipped Ukrainian units, the Ukrainians easily triumph.

But neither Europe nor Ukraine can currently make drones from scratch without relying on Chinese industry. Many of the components and materials that go into making a drone are controlled by China — things like radio modules, lithium-ion batteries, electric motors, navigation cameras, and even carbon frames. Europe cannot currently make these — or can’t make many of them, at least.

If Russia were to invade Europe, China could simply decide not to sell Europe the components it needs to make drones. Why wouldn’t it? China has already proven itself perfectly willing to use export controls on rare earths and other upstream technologies to throttle other countries’ defense industries. And a Europe cowed and dominated by China’s most important ally would probably be more useful to Xi Jinping than a free and independent Europe that steers its own destiny.

If Russia invaded Europe and China simultaneously halted the export of drone components, Europe would be a lost cause. Unless Europe could assemble upstream industries for drone components from scratch before Russia’s drone-equipped armies marched across the Baltics and into Poland, the war would quickly be lost for lack of weapons.

Whether Europeans realize it yet or not, Europe’s dependence on China for the manufacture of many key defense inputs puts it at China’s mercy. This is a downside to free trade that the folks who advocate a European retreat from manufacturing simply fail to engage with or acknowledge. It provides a strong rationale for putting up trade barriers against the import of certain intermediate goods — something that harms economic efficiency, but is necessary for defense.

When invading armies are burning your country to the ground, you should worry less about deadweight loss than about being dead.

But those who wring their hands about the economic losses should take heart. Blocking the import of Chinese goods might harm economic efficiency, but it could have some positive knock-on effects in terms of political economy.

For all China’s high-tech wizardry, its big industrial policy push doesn’t seem to be doing much to help the actual people of China.

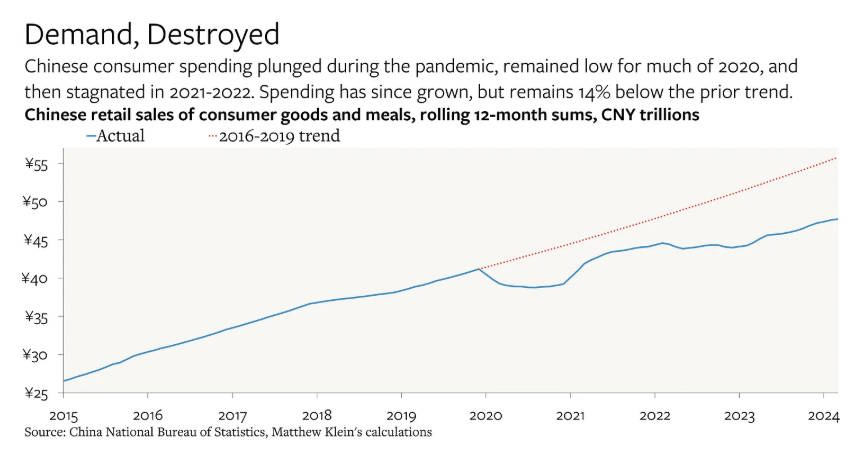

The real estate industry, which previously created plenty of labor demand and broad-based wealth for regular Chinese people, is still in the dumps and may even be getting worse. The continued property bust is weighing on aggregate demand — fixed-asset investment is shrinking, while retail sales have flatlined.

“Industrial policy for everything” was supposed to fill the hole left by real estate, but it isn’t doing a very good job of it. Because the rise in Chinese manufacturing output is going mostly to exports, regular Chinese people aren’t able to share in the bounty the policies are creating. For example, Chinese motor vehicle consumption is below where it was a decade ago, despite surging exports:

In fact, this shift dates back to the pandemic. Matt C. Klein has a good series of charts on China’s anemic consumption. Here’s an example:

This is often framed as China helping producers at the expense of consumers. But often it’s not even that. China’s industrial subsidies pay a bunch of different companies to produce the same goods, competing their profits to zero even as they also undercut the overseas competition. A prime example of this is the solar industry:

China’s solar exports have enjoyed a surge since the bombing [of Iran] began. But that will be small cheer to its companies…Domestic demand for their products is falling for the first time in decades because the country’s power grids—far and away the biggest market for solar panels—have become overloaded with the things. Solar-panel supply, meanwhile, is overabundant because of years of splashy investment in factories…Most companies have been running at a loss since 2024 because of brutal price wars; bankruptcies are mounting.

But it’s not just undifferentiated commodity products like solar that are suffering this fate; China’s vaunted auto industry, which came out of nowhere to leapfrog all other countries with its mastery of EVs, is locked in an endless brutal price war:

China’s efforts to cool its automotive price war are faltering as BYD Co. and rivals expand discounts to avoid ceding ground in the world’s largest car market…The average price reduction for BYD cars accelerated to 10% in March…Discounts by competitors…also edged higher…Regulators’ missives aimed at halting deflationary momentum have fallen on deaf ears so far, and industry observers say it won’t stop the discounting trend anytime soon.

China’s industrial policy is accomplishing its central goal of national greatness. China’s technology level is advancing, its companies are winning global market share, and it’s gaining control over key strategic technological choke points. But China’s workers, its savers, its investors, and even its entrepreneurs are on a treadmill, unable to enjoy the fruits of their country’s industrial dominance.

European trade barriers could potentially nudge China out of this toxic political economy. If Xi Jinping & co. see that they can’t forcibly deindustrialize the West by subsidizing infinite exports, their cost-benefit calculations may shift. Providing growing living standards for Chinese people might once again become the central goal of policy, as it was during the time of Deng Xiaoping, Jiang Zemin, and Hu Jintao.

So Europe should push back against the Chinese import flood, not just for its own security, but also for the sake of regular Chinese people. Fortunately, there are indications that European leaders have had enough of Xi’s little game, and are preparing to take real action. Hopefully this newfound resolve doesn’t get lost in the maze of European bureaucracy and inertia like so many other worthwhile initiatives.

This is a colloquial expression. Countries don’t want things; I’m talking about what the Chinese government, or at least Xi Jinping, wants for China.

Parikh is confusing comparative advantage with something called “competitive advantage”. In the theory of comparative advantage, competitive advantage — who makes which good more cheaply in the absolute sense — doesn’t end up mattering for the patterns of international trade. That’s why the theory is so brilliantly counterintuitive.

2026-06-04 13:52:29

For many years, I was a big proponent of the idea that increased market power was harming the U.S. economy in various ways. In the 2010s, in the economics world, circumstantial evidence began piling up that implicated increased industrial concentration as the culprit in a variety of recent negative trends. Here’s what I wrote in 2017, after reading a bunch of that evidence:

[B]asically I see the case of the Market Power Story - or any big economic story like this - as detective work. We’re collecting circumstantial evidence, and while no piece of evidence is a smoking gun, each adds to the overall picture. IF the economy were being throttled by increased market power, we’d expect to see:

1. Increased market concentration (Check! See Autor et al.)

2. Increased markups (Check! See De Loecker and Eeckhout)

3. Increased profits (Check! See Barkai)

4. Decreased investment (Check! See Gutierrez and Philippon)

5. Decreased wages in concentrated markets (Check! See Azar et al.)

6. Increased prices following mergers (Maybe! See Blonigen and Pierce)

7. Weakened antitrust enforcement (Check! See Kwoka)

8. Decreased output (Maybe not? See Ganapati)

So, as I see it, the evidence is piling up from a number of sides here.

Some of this is micro evidence, demonstrating some of the pieces of the causal chain that some economists think leads from lax antitrust to bad economic outcomes. The Azar et al. (2017) paper shows that labor market concentration hurts wages. The Blonigen and Pierce (2016) paper shows that mergers raise prices.

The rest is macro evidence and macro theory. Economists see some trend in the economy — a lower labor share of national income, or decreased business investment, or fewer new companies being formed — and they think about whether something like monopoly power could explain those trends.

Just because a single story can explain the trends, of course, doesn’t mean it does. Ultimately you need a whole lot of micro evidence — not just a few papers — to prove each link in the chain of causality from weak antitrust enforcement to higher prices, lower output, lower wages, and so on. But in this case, the market power explanation was very tantalizing, because it had the power to explain so many of 21st century America’s dysfunctions at the same time.

This is why at Bloomberg, I wrote consistently in support of the idea that market power was making the American economy both less efficient and more unequal, and that stronger antitrust enforcement was a good solution to try. (However, I did note that antitrust wasn’t guaranteed to be a remedy, and that Big Tech companies were a bad target for antitrust enforcement.)

When Biden was elected, I was optimistic. His appointment of people like Lina Khan showed that antitrust was finally being taken seriously in Democratic Party circles. Finally, it seemed, the growing clamor of economists was going to result in some real efforts at reform:

In that post, I revisited some of the important recent papers about market power, and I also noted that prominent economists were increasingly putting their reputations on the line by writing popular books advancing the thesis that market power was hurting our economy:

[Economists] have also raised the alarm about corporate power in other forums — Thomas Philippon’s book The Great Reversal: How America Gave Up on Free Markets, John Kwoka’s book Mergers, Merger Control, and Remedies and his 2017 report on mergers, and various speeches sounding the alarm at Federal Reserve conferences. (Update: I was remiss in not mentioning Jason Furman’s briefs on market power when he was chair of the Council of Economic Advisers under Obama! They were very influential. I also neglected the interesting and often-overlooked role of sports economists, who have been complaining about market power for quite a while!)

I concluded that the Biden administration’s shift toward antitrust was a healthy example of ideas making their way from academic economics to the halls of power:

Economists have been suspicious of excess profits ever since Adam Smith complained about “the bad effects of high profits” and declared that “people of the same trade seldom meet together…but the conversation ends in a conspiracy against the public, or in some contrivance to raise prices.” The idea that competition should reduce profits to a low level in a well-functioning economy is Econ 101, as is the theory of monopoly. Biden’s tweet about capitalism and competition might sound like bold populist rhetoric, but it also could have come right out of an econ textbook…

What this means is that economists are included in the vanguard of this revolt against American corporate power…Economists make unlikely crusaders, but here they are, taking on the biggest companies in the country.

I wasn’t always happy with the Biden administration’s antitrust actions — the government lost most of its cases against Big Tech, and the vendetta against Meta seemed misplaced. But overall, a lot of action seemed to be happening in the prosaic, boring sectors of the economy where market power has probably been eroding the foundations of capitalism for years. In meat processing (multiple times), in publishing, in insurance brokering, in pharma, in medical care provision, and so on, Biden’s FTC and DOJ notched up real wins — not enough to reverse the U.S. economy’s trend toward greater concentration, but possibly enough to create a “chilling effect” that would restrain the trend toward megacorporations-in-everything.

And yet over the last couple of years, I’ve had increasingly serious doubts about the antimonopoly movement. I’m still concerned about corporate power itself — in fact, in many ways, I’m more concerned than I was a decade ago, because of the advent of AI and the unprecedented corruption of the Trump administration. But I’m increasingly unenthusiastic about the ability of the antimonopoly movement, as it currently exists in the Democratic Party, to make useful headway in curbing or balancing corporate power.

Antimonopoly is simply too important to leave to the antimonopolists.

Speaking about Milton Friedman, Robert Solow once quipped: “Everything reminds Milton of the money supply. Well, everything reminds me of sex, but I keep it out of the paper.” I am starting to feel that way about the antimonopoly folks.

Jonathan Chait has a long and very damning article about the antimonopoly movement, focusing on its crusading founder, the former journalist Barry C. Lynn. Until I read Chait’s article, I had never even heard of Lynn; this demonstrates that I’m very much out of the loop when it comes to D.C. policymaking and thought leadership, but it also shows how Lynn has escaped scrutiny compared to more popular figures like Lina Khan, Elizabeth Warren, and Matt Stoller.

In any case, from Chait’s description of Lynn, he is not the type of person whose movement I would want to follow. First of all, he seems monomaniacally obsessed with monopoly power:

“It is vital to understand,” Lynn wrote in his 2020 book, Liberty from All Masters, “that monopoly is not one of many economics problems but rather the political economic problem of our time,” causing “just about every ill in our society today.”

When he says that he holds corporate consolidation responsible for just about every problem, he means it. A list of social ills Lynn has attributed to monopolists includes not just the cost of goods and services but also: “The vast and growing inequality of wealth, political power, and control. The rise of the radical right. The surge in racism and homophobia. The attacks on reproductive choice and marriage. The collapse of our news media.”…

Anti-monopolization, Lynn argues, is “an all-encompassing framework for seeing and shaping power in every corner of our democratic republic.”…Lynn sees American history as a struggle against monopolization…A profound crisis must have profound causes, and Lynn was offering a totalistic account of social decay.

This monomania is obviously silly. A lot of these links are incredibly tenuous, requiring heroic assumptions about society, politics, culture, and economics. If you want to say that corporate concentration is responsible for racism, for example, you have to believe that:

racism has risen recently (highly doubtful)

the rise in racism, if it exists, is caused by economic factors (doubtful)

those economic factors are primarily — not just slightly — due to corporate concentration (highly doubtful)

Even the economics papers that find measurable effects of corporate concentration on low wages, for example, find that the effect differs enormously by geographic location. If monopsony power is responsible for low wages, then minimum wages should increase employment rather than decreasing it; in some areas, this does seem to happen, while in other areas minimum wages decrease employment, consistent with a greater amount of competition in the latter areas.
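To make that logic concrete, here’s a minimal sketch of the textbook monopsony model in Python. Everything in it is an assumption for illustration: the linear labor supply and labor demand curves, the parameter values, and the function name `employment` are made up, and none of it comes from the papers mentioned above. It just shows how the same minimum wage can raise employment where one employer dominates and lower it where employers compete.

```python
def employment(min_wage=None, monopsony=True, a=5.0, b=1.0, c=25.0, d=1.0):
    """Employment in a toy labor market (all parameters are illustrative).

    Workers supply labor along the inverse supply curve  w = a + b * L.
    Each extra worker is worth  c - d * L  to employers (marginal revenue product).
    """
    if monopsony:
        # A single employer must raise everyone's wage to hire one more worker,
        # so its marginal cost of labor is a + 2*b*L. It hires until that
        # equals the marginal revenue product.
        base_jobs = (c - a) / (2 * b + d)
    else:
        # Competing employers bid the wage up until it equals the marginal
        # revenue product: a + b*L = c - d*L.
        base_jobs = (c - a) / (b + d)
    base_wage = a + b * base_jobs

    # A minimum wage at or below the going wage changes nothing.
    if min_wage is None or min_wage <= base_wage:
        return base_jobs

    # A binding minimum wage: jobs are limited by whichever side is shorter,
    # the workers willing to work at that wage or the jobs worth paying it for.
    willing_workers = (min_wage - a) / b
    worthwhile_jobs = (c - min_wage) / d
    return max(0.0, min(willing_workers, worthwhile_jobs))


for label, is_monopsony in [("monopsony", True), ("competitive", False)]:
    before = employment(None, is_monopsony)
    after = employment(17.0, is_monopsony)
    print(f"{label}: {before:.2f} jobs before, {after:.2f} jobs with a minimum wage of 17")
```

With these made-up numbers, a minimum wage of 17 pushes employment up in the monopsony market and down in the competitive one, which is the pattern you’d expect to see if the amount of competition varies a lot from place to place.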

Furthermore, several credible research teams — Rossi-Hansberg et al. (2021), Rinz (2022), Autor et al. (2023) and others — have found that employer concentration has actually decreased in local markets in recent decades. This means that not just racism, but any social ill that Barry C. Lynn and his followers want to ascribe to labor monopsony, should have decreased over that period.

Another example is inflation. Antimonopoly crusaders like Elizabeth Warren were quick to blame corporate greed for inflation in 2021-22. There was extremely little data to back this up. Here’s what I wrote at the time:

Alvarez et al. (2025) found that markups — i.e., the amount that companies charge for things above and beyond what those things cost to produce — stayed constant during the post-pandemic inflation, meaning that companies weren’t actually able to use the inflation to gouge consumers…Leduc et al. (2024) and Bouras et al. (2023) found the same. And Jose Azar found that industries with higher markups — implying more market power — actually passed on less of their costs to consumers during the post-pandemic inflation…Greedflation, in other words, is not a real thing.

These are just two examples of the shaky chain of reasoning and evidence that backs up expansive claims like Lynn’s. There are many more, if you want to go looking for them. More sober antitrust types absolutely know that monopoly power is not a Grand Theory of Everything Bad in America. From Chait’s article:

Diana Moss of the Progressive Policy Institute [and] a former head of the American Antitrust Institute…told me the neo-Brandeisians’ error is to view antitrust policy “not as law enforcement but as a broad policy tool for fixing a lot of problems—economic, political, and social.” Antitrust enforcement isn’t that powerful, for the simple reason that corporate concentration is not the root cause of every problem.

This is good. But this reasonable, moderate perspective doesn’t seem to be what’s animating the modern antimonopoly movement. Chait details a telling exchange between Ezra Klein and Zephyr Teachout:

Last year, the New York Times columnist Ezra Klein asked Teachout on his podcast if she could think of any issues that cannot be solved by smashing corporate concentration. At first she ventured, “I don’t think that anti-monopoly can solve significant problems of racism in this country,” but quickly retracted even this concession. “Having said that,” she continued, “there’s a reason that Frederick Douglass and [W. E. B.] Du Bois were so concerned about monopoly power.”

Admittedly, these are words, and not actions. Chait may have also cherry-picked them from among antimonopoly movement leaders’ more reasonable statements, in order to make his point.

But when you look at the movement’s actual actions, you can clearly see the obsessive, all-encompassing nature of the belief system. For example, consider the movement’s choice of targets. These include some industries with high profit margins, but also some with very low margins, such as grocery stores, airlines, and health insurers. Grocery stores and health insurers both consistently have much lower profit margins than American corporations in general, often hovering near the zero mark. Airlines are a cyclical industry that sometimes sees some very profitable years, but generally hovers below the average:

The causal chain that runs from weak antitrust to all sorts of social harms necessarily runs through profits. If companies aren’t making profit, they aren’t controlling the market. Yet Elizabeth Warren blamed high food prices on grocery stores’ market power during the post-pandemic inflation, despite the fact that these stores make very little profit, and their margins actually declined as inflation accelerated. You could see that exact same misplaced focus in Lina Khan’s blockage of the Kroger/Albertsons merger.

As for airlines, the Biden administration’s blockage of the Spirit/JetBlue merger resulted in Spirit Airlines simply going out of business entirely. Corporate concentration was achieved after all — but it was achieved with disorder, corporate failure, and 17,000 unemployed workers rather than with an orderly merger that would have preserved some of Spirit’s routes and workers. Not exactly a resounding success for the antimonopoly movement — but that’s what happens when you try to use antitrust tools against companies in low-margin industries.

Then there’s the case of housing. The antimonopoly people have eagerly embraced the idea that corporate landlords buying up rental properties and jacking up the price is a major cause of high rent. Democrats and Republicans have both embraced this piece of “slopulism”, despite the fact that the percentage of homes owned by corporate landlords is tiny and there’s some evidence showing that corporate landlords tend to charge lower rents. Supply constraints (the failure to build more housing) are actually the reason for high rents, so the antimonopoly movement is distracting us from solving the real problem.

The movement’s obsessive monomania — its conviction that corporate concentration is the root of all of America’s problems — is causing it to pick the wrong targets and hurt workers. That doesn’t mean bigger corporations are better, or that there aren’t industries where we need stronger antitrust. But the antimonopolists’ totalizing obsession causes them to ignore the evidence of where and when their ideas are needed, because they assume that their ideas are always the top priority in every situation and should be applied in a blanket way to any target they choose.

Richard Feynman once said of science that “Of all its many values, the greatest must be the freedom to doubt.” Now you can respond that economics and politics aren’t “science”, but that makes the freedom to doubt even more important; the less conclusively that any one data set can answer your questions, the more important it is to look at a wide variety of data sets and consider a variety of explanations and theories.

From Jonathan Chait’s description of Barry C. Lynn, he doesn’t seem like the kind of guy who’s inclined to look at evidence that goes against his ideas:

[Lynn] believes that “most prices are entirely arbitrary and political in nature.”…More expansively, Lynn believes that “market forces”—which he places in scare quotes—do not exist. His indictment of economics is neither mild nor limited. He has compared the discipline to Lysenkoism, a pseudo-scientific fad under Stalin. “The ‘science’ of economics today … ,” he wrote in his 2011 book, Cornered, “has become a form of madness, a dream of human imagination we mistake for a pattern of the world.”

Lina Khan has also written that “There are no such things as market ‘forces’.” Statements like this certainly don’t do much to refute Chait’s allegation that the antimonopoly belief system “is more like a religion than an economic theory”.

First of all, as an aside, we should consider what it would mean for market forces not to exist and prices to be determined by politics. It would mean that grocery stores carefully calculate exactly how much they can charge for a cucumber or a package of napkins without Senators giving them an angry call or the working class rioting, or something like that. That’s kind of preposterous. It would also mean that small businesses would charge lower prices, because they’re less politically powerful than big businesses. But in fact, it’s big businesses that charge lower prices for the same goods. So the “prices are determined by politics” idea is just abjectly ridiculous, except maybe in a few special cases or where explicit regulation is involved.

But more to the point: Market forces obviously do exist. When you include sales taxes on price tags — reminding people that prices are higher than they had thought — they buy less, proving that demand curves exist and slope downward. When there is bad weather at sea, the price of fish goes up. When you charge electricity customers more, they use less electricity.

And so on. Market forces are not easy to observe in all cases, and they’re not always the most important determinant of prices. But their existence has been proven so thoroughly, by so much careful empirical observation, that to deny their existence requires a deep level of mysticism and blind faith.

If you’re not the kind of person to believe in empirical economics, then of course you’re not going to care if economists find evidence against your worldview. But once we move out of the realm of willful faith-based belief and into the real world of evidence and observation, we find that the science on monopoly power is far from settled.

First of all, there is the evidence I cited before about decreasing concentration in local labor markets (even as concentration increases nationwide). The monopsony wage penalty might be very high, but it was probably even higher in the past; this leaves room for antitrust action to help workers, but it should make us question whether monopoly power is at the root of slow wage growth.

But more fundamentally, the entire story about creeping market power being responsible for a bunch of different ills in the modern American economy is under serious dispute.

For example, the whole story about monopoly power increasing in recent decades relies on the idea that price markups have increased — if companies can’t charge higher prices relative to their costs, they must not be very powerful. Economists like De Loecker and Eeckhout find that markups have increased a lot, but there are plenty of economists who disagree with that finding! There are tons of measurement issues involved in trying to estimate markups across the whole economy. Some economists claim that essentially the entire increase in markups is due to the finance sector.

There are plenty of other pieces of the monopoly power story that are also disputed. Shapiro and Yurukoglu (2024) summarize a bunch of these. It’s hard to define what each “market” is over time, because the boundaries of the categories are arbitrary, and the nature of products themselves keeps changing. It’s hard to choose the region over which local concentration should be measured (when is one store in the same “market” as another?). Companies’ costs are hard to measure for many reasons — for example, companies sell lots of different things, and researchers don’t necessarily have the data to determine which costs are for which products. Profits are hard to measure because the cost of risk is hard to assess. And so on. In general, choosing a different set of assumptions can get you wildly different results regarding how much monopoly power has actually risen in America.

The point here is not that De Loecker and Eeckhout, or the other economists who concluded in the 2010s that monopoly power is a big deal, were wrong. Maybe they were, maybe they weren’t. Nor should you conclude that economics is just a game of “he said, she said” where everyone contradicts each other and nobody really knows anything. The correct takeaway here is that these questions are very subtle and difficult, and the most careful, serious researchers will take a long time to hash out the correct answer. In the meantime, we must live with uncertainty.

A big problem with the antimonopoly crusaders is that they don’t just refuse to live with uncertainty — they insist that you don’t live with uncertainty either. If you say “Hey dudes, maybe corporate landlords actually lower rents”, they won’t debate the finer points of causal estimation with you — they’ll simply label you as a corporate shill and dismiss you.

It’s this last bit — the anathematization of anyone who disagrees with them — that really warns me away from the antimonopoly movement. Chait describes in his article how anyone who tries to buck the antimonopoly people gets accused of being a paid corporate hack:

“We’ve largely won the intellectual debate,” [Lynn] told me matter-of-factly, allowing that the only remaining liberals who disagree with him are “those who are paid to do so.”…

When Biden considered appointing Susan Davies, a former deputy White House counsel under President Obama, to the Justice Department’s top antitrust post, a slew of articles savaged her as a corporate shill. Her candidacy died. [emphasis mine]

This tactic was clearly on display when the antimonopoly people leapt to savage Ezra Klein and Derek Thompson’s book Abundance. Matt Stoller wrote a post entitled “An Abundance of Sleaze: How a Beltway Brain Trust Sells Oligarchy to Liberals”. Dylan Gyauch-Lewis1 called the Abundance movement “The new centrist push to regain control of the Democratic Party, with corporate money”. Barry C. Lynn said that Abundance wants “to cozy up to good oligarchs, so they can shelter us until the MAGA storm blows over.”

First of all, claiming that anyone who disagrees with your ideas must be on the payroll of nefarious forces is blatant intellectual dishonesty. It also signals how weak your argument is if you have to accuse every critic of being a bad actor.

But beyond that, the antimonopoly crusaders’ reaction to Abundance shows how utterly factionalist they are. They could have simply said “Yes, we want abundance too. Guess how you get abundance? By breaking up monopolies!” Or something like that. They could have easily tried to co-opt the energy behind Abundance and treated Ezra Klein and Derek Thompson as potential allies. Instead, they leapt instantly to the attack with maximum savagery.

This is the behavior of factionalists, for whom ideas and policy are less important than building power for a clique of favored allies and fellow-travelers within the Democratic Party. Klein and Thompson were a threat not because their ideas contradicted those of the antimonopoly clique, but simply because they were not beholden to the patronage or the intellectual legacy of that clique. They were not on the team, so they were the enemy.

In my view, it is very dangerous for any political party to allow itself to be entered and captured by a clique or faction like this. I’ve spent a long time being very favorable to the ideas being put forward by the antimonopoly people, but their behavior with regard to the Abundance liberals (and the shoddy reasoning, baseless accusations, and backroom arm-twisting that they employ in these debates) has given me what the Zoomers call “the ick”.

A problem with economic policy is that it is very vulnerable to intellectual pseudo-cults. Economics research is very hard to understand, isn’t always useful, and rarely offers clear-cut answers. So policymakers and writers seeking certainty and a reason for decisiveness often fall victim to charismatic gangs of intellectuals who claim that economics is solved and that they have it all figured out. On the GOP side, these include the “supply-siders” in the 1980s and the “national conservatives” today. On the Democratic side, they include the MMT people.

But MMT failed — essentially no one listens to people who say infinite deficits are good. The antimonopoly faction, on the other hand, appears to have succeeded in winning enormous power and prestige within an increasingly epistemically closed progressive movement. Elizabeth Warren was basically a one-woman Organization Department2 for the Biden administration, and is apparently still incredibly influential in terms of furnishing personnel for Democratic hopefuls. Meanwhile, popular Democrats like AOC are going around claiming that “market power” is what produces billionaires, and every major progressive publication now platforms the antimonopoly people’s intellectual output.

Given my writings about the problems of corporate power in the past — and my fear of the overwhelming power that AI companies might achieve — it would be relatively easy for me to join this movement. But I can’t, because monomaniacal obsession, epistemic closure, anti-empiricism, and intense factionalism are the kinds of things I just can’t sign on to.

Corporate power is a real problem in our society. But we need more reasonable programs, and more reasonable people, to fight it effectively. Banning corporate landlords, calling for price controls, attacking the grocery store industry, forcing airlines out of business, and making accusations against anyone who calls for deregulation of housing supply are just signs of an approach that’s going to lead nowhere good.

When I had GPT proofread this post before publication, it flagged the name “Dylan Gyauch-Lewis”, but its only comment was: “This unusual spelling appears to be correct.”

This is quite a compliment!

2026-06-02 17:31:21

So, Anthropic is going to IPO! The company is valued at almost $1 trillion, so this is going to be one of the biggest IPOs in history — the only other competitor being SpaceX, which is also set to go public soon. It’ll be one of the largest wealth creation events in history — the company’s seven founders are each going to be worth almost $20 billion, and regular employees will be worth in the millions to tens of millions. So much for my chances of buying a house in San Francisco!

Whether Anthropic is worth this valuation is not the topic of this post, but I guess it’s interesting to touch on. Anthropic is showing more impressive revenue growth than any company in history, having recently blown past OpenAI to an annualized rate of about $45 billion per year. Worries that the company would be unprofitable have been blown away by this hypergrowth — Anthropic is about to turn its first operating profit.

In fact, I think the price being offered for Anthropic is pretty conservative. A multiple of 20x annualized revenue really isn’t that expensive for a company growing at 130% a quarter. Obviously that’s going to level out at some point soon, but it would take only a little over one more year of that sort of growth for Anthropic to be priced like a value stock. The cautious pricing probably reflects the danger of competition, both from OpenAI and from the cheap Chinese open-source models perpetually nipping at the leaders’ heels.
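Here’s a back-of-the-envelope version of that arithmetic, as a small Python sketch. It assumes the valuation stays fixed at 20x today’s annualized revenue and that revenue keeps compounding at 130% per quarter; the function name `multiple_after` is a made-up label, and where exactly “value stock” territory begins is a judgment call.

```python
def multiple_after(quarters, start_multiple=20.0, quarterly_growth=1.30):
    """Price-to-annualized-revenue multiple after some quarters of growth.

    Assumes the valuation is held fixed while revenue compounds, so the
    multiple shrinks by a factor of (1 + quarterly_growth) each quarter.
    """
    return start_multiple / (1.0 + quarterly_growth) ** quarters


# Print the multiple quarter by quarter under the illustrative assumptions.
for q in range(6):
    print(f"after {q} quarters: {multiple_after(q):.2f}x annualized revenue")
```

Under those assumptions the multiple drops below 1x revenue after four quarters, and a fifth quarter cuts it by more than half again.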

The reason for Anthropic’s meteoric rise, of course, is the success of coding agents. For years, OpenAI had struggled to find a market for its state-of-the-art chatbots; everyone was wowed by the technology, and everyone used it, but people couldn’t figure out how to get it to produce lots of economic value. Anthropic basically solved that problem by being the first to invent usable coding agents — AIs that write software on their own. Claude Code, Anthropic’s agentic software, gained a huge amount of brand value, even though OpenAI’s Codex product is competitive in terms of quality.

This was true product-market fit. AI had already proved that it worked in terms of the underlying technology — probably around 2024, when reasoning models cut down on the hallucination problem. Now it had found its killer app — the equivalent of e-commerce and search for the internet, or spreadsheets and word processing for computers. Suddenly, everyone in the world was “tokenmaxxing” — trying to use coding agents as much as humanly possible.1

I first encountered this trend at a dinner event on the economics of AI (I go to a lot of those dinners these days). An entrepreneur at the dinner breathlessly told me and a couple of other attendees that he ordered his employees to “spend their salary in tokens” — that is, to create so much code with Claude Code and Codex that it cost as much as their entire paycheck. I remember asking him: “What are they using all those tokens to create?” I don’t think I got a straight answer; I’m not sure he knew.

He wasn’t alone, though. Plenty of companies encouraged their employees to use AI coding agents as much as possible. Meta even briefly had a leaderboard for who could use the most tokens. One company reportedly spent half a billion dollars on Claude Code, equal to about one percent of Anthropic’s annualized revenue!

Reading these reports, I just kept wondering: What are all these tokens actually producing? Just like with that guy at dinner, there never seemed to be a clear answer. Were Amazon and Meta and other software companies rolling out new features? Not that I’ve seen. A lot more apps are being submitted to the App Store, but I’ve only heard of one good one (Refine.ink). I’m sure there are more out there, but so far it’s nothing like the early days of the smartphone, where I was hearing about cool new apps every couple of weeks.

Maybe it was all on the back end? I’m not a software guy, so I don’t have a proper grasp of how hard it is to make a website like Instagram run, or optimize the cloud servers at AWS. Sites and apps aren’t loading faster, and they aren’t obviously more reliable. Was advertising getting better? Were click-through rates improving? Were companies fixing their long-standing problems, taking care of “tech debt” so they could avoid paying large costs in the future? Maybe!

I kept quiet about these questions, since it’s not really my area of expertise. But I saw a lot of other people — people who know a lot more than I do about software engineering — asking similar things. John Loeber wrote:

The stuff I’m hearing is just insane. People are spending hundreds of thousands of dollars a month on tokens? Guys, what are you shipping?…I am seeing people fully enraptured by illusions of productivity. They have swarms of agents coordinated by Byzantine Octopus harnesses. They’re munging thousands of tokens a second. They’re doing all this stuff, churning unfinished marginalia faster than ever before. Spinning their wheels and shipping absolutely jack shit for their customers…[W]e’re getting a lot of utility from AI for engineering at our company. I think we would really struggle to burn more than $5K per engineer per month.

Uber COO Andrew Macdonald said it wasn’t yet possible to draw a link between raw AI usage and useful products actually being shipped:

“That link is not there yet, right?” [Macdonald] said. “I think maybe implicitly there is more that is getting shipped, but it’s very hard to draw a line between one of those stats and, ‘Okay, now we’re actually producing 25% more useful consumer features.’”...He said that the trade-off costs from AI are harder to justify because he can’t draw a direct link.

Microsoft, meanwhile, began canceling Claude Code licenses. Salesforce started redesigning their employee targets to measure real output instead of AI input. And people who looked into the matter basically confirmed the suspicion that a lot of this AI coding wasn’t going into actual products being shipped:

For companies using advanced AI coding tools, only 18% of spending on tokens is translating into shipped coding products that reach real users, according to EntelligenceAI, a startup that aggregated data on more than 2,000 companies using advanced AI tools for coding.

Jellyfish, a company that tracks AI usage, found rapidly diminishing returns in terms of converting tokens to actual software.

You should absolutely NOT take this to mean that AI is a bubble, or that the tech doesn’t actually work, or that Anthropic’s IPO is overpriced, etc. A lot of this is perfectly normal. When a very capable new general-purpose technology bursts onto the scene — steam power, electricity, computing, the internet, etc. — a ton of people play around with it to see how it works and experiment with how they might be able to use it. That experimentation is healthy, and we shouldn’t expect it to last forever.

It’s also reasonable for companies to push their software engineers to try something radically new. Most professionals who have written code by hand all their lives will naturally be reluctant to switch over to letting a machine take the first crack at it. Rewarding AI usage for its own sake is silly in the long run — it’s just as subject to Goodhart’s Law as anything else, and it predictably resulted in people checking the weather with AI just to hit their targets. But in the short run, it could be good to shove stodgy old engineers out of their comfort zone.

But I also think there are two more interesting things that are potentially going on here:

Companies are finding out, once again, that turning task-level productivity into economic productivity is a lot harder than it looks. This has implications for the big “AI and jobs” debate, upon which the shape of our future society could hinge.

It’s very possible that the software industry as we know it is a mature industry, like steelmaking or internal combustion. If AI creates major improvements in software, it’s possible — even likely — that it’ll be in new types of software industries instead of just “better Facebook and Amazon”.

2026-05-31 16:38:50

I consider myself a pretty good and decent guy, overall. I don’t commit crimes. I’m nice to the people I meet. I help out my friends. I take good care of my pet rabbit, and I donate lots of money to other people who take care of abandoned and sick rabbits. My politics might not always be correct or wise, but I want things like the end of poverty, the end of war, and so on.

And yet just down the highway from me, there are facilities for the mass torture of animals. In the United States, there are 73 million pigs in “concentrated animal feeding operations”, more commonly known as factory farms.

There are many horrors experienced by chickens and other animals on factory farms, but the way pigs are forced to live is probably the worst. For most of their lives, female pigs (sows) are kept in tiny cages — either “gestation crates” when they’re pregnant, or “farrowing crates” when they’re nursing. A sow will spend most of her life in one of these cages.

In a gestation crate or a farrowing crate, sows don’t have enough room to turn around — all they can do is either stand or lie down in a pile of their own feces. Imagine living your entire life in an airline seat, where you couldn’t even get up to go to the bathroom or take your seatbelt off. That’s how these pigs live.

Pigs are social creatures — they exhibit “emotional contagion”, meaning that when one pig is scared or happy, other pigs start to feel the same, and they give comfort and support to other pigs who are in distress. Research suggests that they’re at least as smart as dogs, and probably smarter. But a pig in one of these crates will never get any social interaction in her entire adult life — she can’t even turn around to look at her babies.

This is torture. The pigs who are confined this way bite the bars of their cages, desperate for a freedom that will never come. They have their tails chopped off as babies (generally without anesthetic), so that they can’t chew each other’s tails in anguish. But no relief ever comes — they live out their entire lives and die in these tiny torture-cages.

I have no other word for this except “sin”. This is a sin. If there is a God,1 and if that God is in any way good and moral, then that God is looking down with disgust on the way my society treats pigs. I go about my daily life — hanging out with my friends, petting my rabbit, going out to eat at nice restaurants — never thinking about the horrible suffering that has engulfed the entire lives of those tens of millions of pigs.

And it’s for my own benefit that those animals are being tortured. When I eat delicious guanciale, sumptuous char-siu, or mouthwatering carnitas, I’m eating the flesh of animals who were tortured for their entire lives so that I could devour their faces and shoulders and bellies for a slightly cheaper price.

OK, so why don’t I just stop whining and become a vegetarian (or a vegan, since milk cows and hens are also treated badly)? Honestly, I should, and the fact that I don’t is monstrous in a way. But simply washing my own hands of this crime feels like a pitifully inadequate response. The vegetarian movement has been around in the West for over 150 years, and very little has changed — meat consumption is probably marginally lower than if there were no vegetarians at all, but abusive factory farming practices have only been refined and expanded. Furthermore, vegetarianism, though morally laudable, has an obvious economic limitation — when one person refuses to eat meat, it lowers the price of meat for everyone else, which raises other people’s meat consumption and partially offsets the vegetarian’s action.

On top of the obvious and demonstrated inability of individual action to solve this problem, it’s insufficient even from a moral stance. Suppose that our society farmed human beings for food. Would simply refusing to eat human flesh be enough to absolve me of culpability? I don’t think so. I would still have a responsibility to try to abolish the evil system.

In fact, “abolish the evil system” is exactly what voters in California and some other states are trying to do. In 2018, by an almost 2-to-1 margin, California voters enacted a law called Proposition 12 that heavily restricted the sale of meat from pigs, hens, and calves that weren’t raised with a minimum amount of space. Crucially, the partial prohibition extended to meat from animals raised inhumanely in other states. This followed on the heels of a similar law in Massachusetts two years earlier.

Courts have upheld the law, but Republicans in Congress are trying to undo it from the federal level. In 2025 they proposed the Save Our Bacon Act, which would ban states from enacting animal welfare laws like the ones voters approved in California and Massachusetts. The Save Our Bacon Act failed on its own, but this year it got incorporated into the Farm Bill, which has passed the House and is now being considered in the Senate:

Companies and industry groups have also worked with members of Congress for over a decade to introduce federal legislation to nullify laws like those in California and Massachusetts. The latest iteration is called the Save Our Bacon Act, originally proposed last year…This effort, which for years went nowhere as standalone legislation in Congress, now has a decent chance at becoming law as part of the new Farm Bill…

In late April, the House of Representatives passed its version of the Farm Bill, which included the language from the Save Our Bacon Act…It’s “really a Save Our Crate Act,” Brent Hershey, a hog farmer who opposes it, told me. “A vote for the farm bill,” he said, “is a vote to cage an animal that can’t walk or turn around.”

Lewis Bollard has a good post explaining what’s at stake. In fact, the current Farm Bill wouldn’t just reverse the recent anti-crate laws in California and Massachusetts — it would roll back much of the progress that has been made in farm animal welfare over the past decade, and it would prevent any future welfare laws along similar lines:

The [Save Our Bacon] Act would stop any state or locality from regulating the sale of meat based on how it’s produced in another state. This would likely invalidate state and local bans on foie gras, crated veal, and more…It would also halt future legislative progress. Congress hasn’t passed a farm animal welfare law in decades. State laws are where reforms actually happen. The SOB Act would gut them by mandating they contain a giant loophole for out-of-state imports.

Why should Congress prevent the voters of California and Massachusetts from taking a stand against the evils of factory farming? First and foremost, it’s a case of a concentrated interest group — the pig farming lobby — making headway against a diffuse interest (voters with a conscience). In fact, if you believe the polls, a majority of the country — even a majority of those who regularly eat pork — would probably support measures like the ones in California and Massachusetts:

Across different incomes, genders, age or race, many regular pork buying Americans (defined as those who purchase pork at least 2-3 times per month) find the use of gestation crates on pregnant pigs (66%) and the practice of [tail] docking on piglets (53%) objectionable. These findings, and other key sentiments, are from a recent survey of over 2,000 US adults conducted by The Harris Poll…According to the survey, gestation crates are seen as unacceptable by two-thirds of Americans (66%), and a strong majority (73%) are more likely to buy pork products from companies committed to ending their use than from one that is not. Tail docking is also seen as unacceptable by just over half (56%) of Americans, and 62% of Americans think retailers and restaurants have a responsibility to ensure the cutting of piglet tails is not done by their pork producers.

A plurality of Americans want laws against animal cruelty strengthened in general, and in 2022 a poll by Data for Progress found that measures like those of California’s Prop 12 enjoy widespread national support.

There is a financial cost to switching to humane farming methods, but in the grand scheme of things it isn’t that high. After California passed Prop 12, the prices of affected products rose by about 20% relative to products that weren’t covered by the law. 20% is a significant increase; it’s possible that the American public, wearied by several years of inflation, is less inclined to care about pig torture than it was when the polls I cited above were taken.

But it would be a one-time bump in cost, and over the years the price would come back down at least somewhat, as farmers found more efficient ways to farm pigs without torturing them. In addition, California implemented the law in its typical inefficient way, forcing producers of legally compliant pork to jump through a massive number of regulatory hoops in order to sell their product in the state. Efforts to make it easier to sell humanely produced meat would make it even cheaper to end these terrible practices.

In fact, I suspect that the American public is still in a mood to support animal welfare laws like this. The Save Our Bacon Act failed on its own, and its supporters ended up having to sneakily bury it within the much bigger Farm Bill; to me, this suggests that even the SOB Act’s proponents knew how bad it would make them look if people started paying attention.

I also suspect — though I can’t prove it — that the proponents of the Save Our Bacon Act care about more than just the support of the farm lobby. I suspect that part of the reason they’re so anxious to preserve abusive farming practices is that doing so affirms their right to abuse animals. The line “The cruelty is the point” probably applies here.

People who feel disempowered tend to take their frustrations out on those with even less power. Conservatives have certainly been feeling disempowered by the progressive drift of elite culture over the past few decades; by rolling back animal rights, perhaps they can demonstrate that at least they still have complete power over the pigs.

This disgusts me. In The Better Angels of Our Nature, Steven Pinker showed how economic development has tended to go hand-in-hand with less tolerance for animal cruelty. By passing a law that expands the scope for animal cruelty, America would be slipping a little bit back down toward developing-country status. It’s moral degeneration, plain and simple.

I would hope that the advent of AI would give us humans a little bit of self-reflection about how we treat animals. Whether or not you believe that today’s AI represents a true superhuman intelligence, the rapidity with which Claude and GPT have rocketed to their current heights of ability should make even the most hardened skeptics realize that humanity is probably not the eternal pinnacle of power and intelligence in this universe.

And in a universe where humanity is neither the most powerful nor the most intelligent entity, we will desperately need a universal moral code where the strong protect the weak. Vernor Vinge, contemplating the advent of superhuman AI back in 1993, wrote: