2026-05-15 15:30:00

They offer a 240Hz refresh rate and a bundled connectivity dock.

2026-05-15 14:08:00

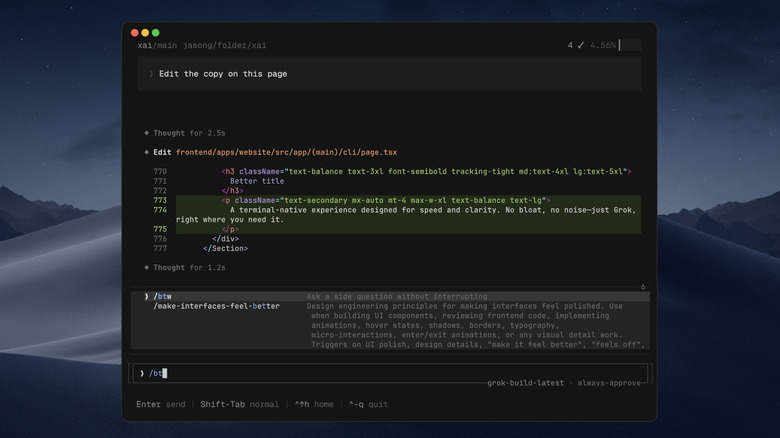

It's in early beta and only available to SuperGrok Heavy subscribers right now.

2026-05-15 05:55:48

It's a tough day to work at Microsoft PR.

2026-05-15 05:43:05

Other new inductees include Beyoncé's 'Single Ladies' and Taylor Swift's '1989.'

2026-05-15 05:17:40

The 2026 model adds the Intel Core Ultra 9 290HX Plus processor.

2026-05-15 04:55:32

The $800 smart glasses could soon be a lot more useful.