2026-05-02 21:00:40

Welcome back to the Abstract! These are the stories this week that dared to dream, slinked through the city, mourned their mothers, and visited ancient graveyards.

First, scientists studied thousands of dream reports and discovered that world events—like the COVID-19 pandemic—can manifest in our vespertine visions. Then: the science of urban snake rescues, the lonely lives of orphaned dolphins, and scientists fiddle with Rome’s ancient DNA.

As always, for more of my work, check out my book First Contact: The Story of Our Obsession with Aliens or subscribe to my personal newsletter the BeX Files.

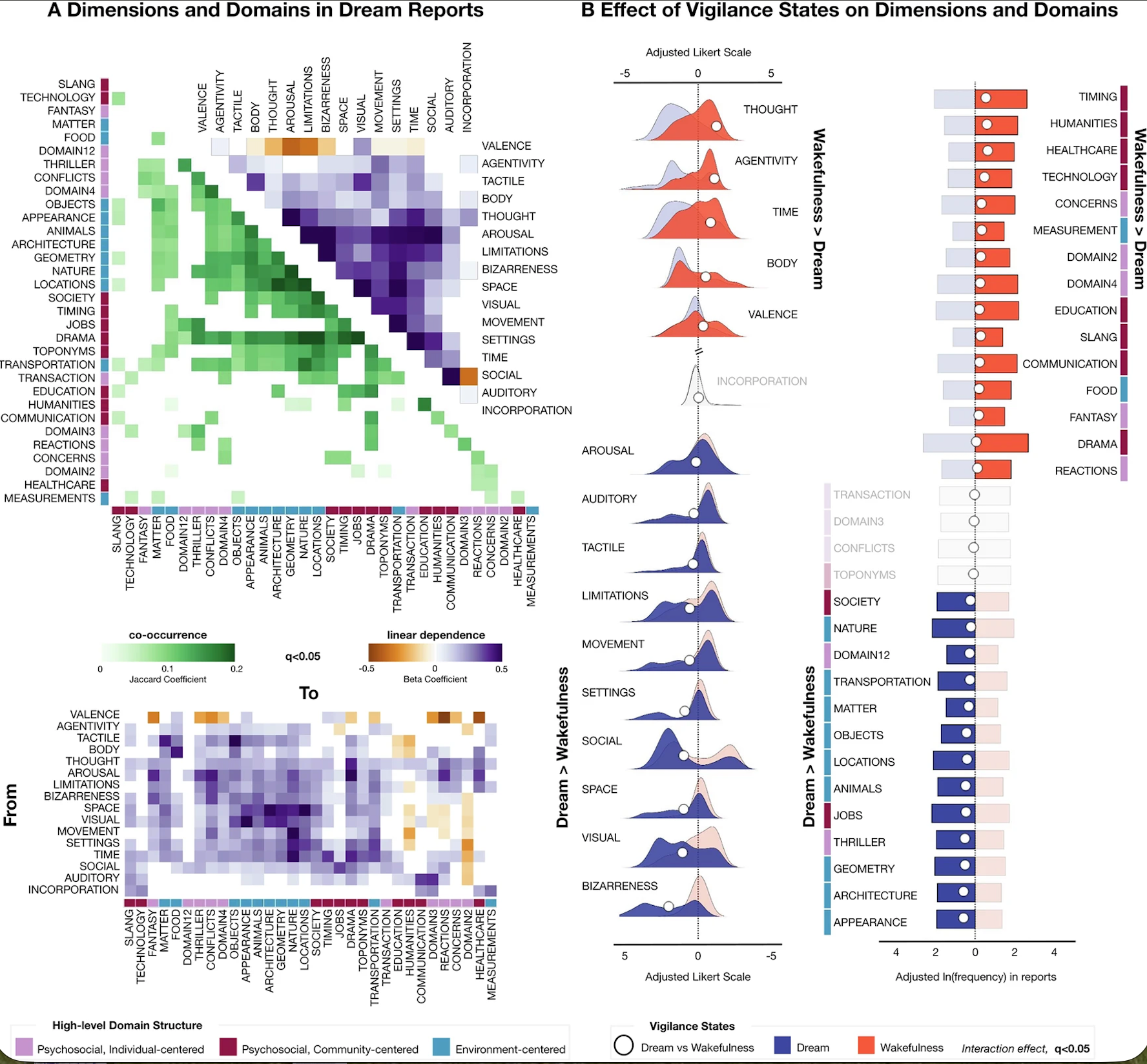

Why do we dream? It’s a question that has kept people up at night for thousands of years. Now, scientists have taken a new crack at the mystery by collecting and analyzing more than 3,700 reports from 207 participants who described both their dreams and waking experiences between 2020 and 2024, as well as 80 participants who reported their dreams during the onset of the COVID-19 pandemic from April to May 2020.

The results revealed possible links between personality traits and dream experiences, and suggested that dreams are influenced by external events such as the pandemic.

“During lockdown, dreams showed increased references to limitations and heightened emotional intensity, effects that gradually normalized over the following years,” said researchers led by Valentina Elce of IMT School for Advanced Studies Lucca. “These findings demonstrate that stable individual traits and incidental experiences jointly shape dream semantics.”

For the main dataset, Elce and her colleagues recruited 207 Italian adults ranging from 18 to 70 years old who were assessed for their psychological and cognitive traits, demographics, and sleep patterns. These participants recorded recollections of their dreams as soon as they woke up using a scale of descriptive elements, such as bizarreness, vividness, valence (emotional tone), and the level of agency they had over events in the dream. This sample of dreamers was also prompted to record their waking experiences throughout the day.

The team used natural language processing models to quantitatively analyze the semantic structure of the dream reports and correlations between individual traits and dream experiences. For example, people who let their mind wander in their waking hours reported having more bizarre dreams.

“Our findings indicate that dream bizarreness is associated with a higher tendency of the individuals to mind-wander, which also drives frequent shifts in narrative settings,” the team said. “This is in line with accounts suggesting that dreaming and mind-wandering may share a common neural and cognitive foundation.”

The lockdown group, meanwhile, was composed of 60 women and 20 men who recorded their dreams in diaries during spring 2020. By comparing the two samples, the researchers suggest that “external emotionally salient events, in this case the COVID-19 pandemic, might affect dream experiences and how such effects develop over long time spans,” according to the study.

“Notably, themes concerning healthcare, which were heavily represented in daily life during the pandemic, showed no significant changes,” the researchers said. “However, in a continuous line with what was happening in the daylight world, the actions of the individuals while they were dreaming were described as limited by physical or metaphorical constraints and the recalled emotional states carried a stronger intensity.”

Godspeed to the oneirologists—the term for scientists who study dreams—for finding new ways to probe these ephemeral experiences that constantly elude explanation.

In other news…

In cities with urban snake populations, such as Hyderabad in India, millions of people live alongside venomous snakes—including deadly Indian cobras and Russell’s vipers—that have been displaced by rapid habitat loss.

To discourage people from just killing these cosmopolitan cobras, an organization called the Friends of Snakes Society performs “snake rescues” with trained handlers who remove snakes and transport them to safer locations. By analyzing 55,467 snake rescue records in Hyderabad from 2013 to 2022, a team found that snake rescues rose nearly 17 percent over the decade, and that about 54 percent (n = 30,189) of rescues involved venomous snakes.

“Snakes have either become locally extinct or have adapted to the city as their habitat, resulting in intensified human–wildlife interactions in Hyderabad and its neighboring areas,” said researchers led by Avinash Visvanathan of the Friends of Snakes Society. “The dataset demonstrates that standardized snake rescue operations not only mitigate immediate risks but also generate valuable ecological information.”

As always, The Simpsons already did it with the 1993 episode “Whacking Day,” though in that case, a mass snake rescue was made possible by the dulcet tones of Barry White rather than a helpline. Perhaps the efficacy of baritone vocals in urban snake management could offer a future avenue of study.

Dolphins, like humans, invest a lot of maternal care into their young, typically nursing calves for two to three years. But scientists have now discovered that months-old orphaned calves can survive the deaths of their mothers, though they are negatively impacted by their losses.

Ali, an Indo-Pacific bottlenose dolphin born in February 2011, suddenly lost her mother Millie in October that same year; Rocket, a member of the same species born in February 2022, was orphaned at seven months old after her mother Ripple disappeared.

Ali is probably still alive and birthed her own calf in 2025, though it sadly died of blunt force trauma at a few weeks old, possibly due to infanticide or a boat strike. Rocket endured for three years, and was sometimes spotted with a mother-calf pair that may have cared for her, before she was killed by a boat strike last year. Both Ali and Rocket displayed maladaptive behavior, especially getting too close to boats.

The study “provides rare empirical evidence that young-of-year calves can persist without maternal care,” said researchers led by Cristina Vicente-Sánchez of Flinders University.

It’s a bittersweet finding, demonstrating that when young calves are forced to sink or swim, some can make it—but they may bear lifelong signs of bereavement.

Oceans of ink have been spilled on the rise and fall of the Roman empire, but scientists have now read the story that is written in the genomes of people who lived in the aftermath.

A new study analyzed ancient DNA from 258 individuals found at grave sites in southern Germany who died between the years 400 and 700. These reconstructed lineages “reveal a major demographic shift coinciding with the late fifth century collapse of Roman state structures, when a founding population of northern European ancestry mixed with genetically diverse Roman provincial groups,” said researchers co-led by Jens Blöcher and Leonardo Vallini of Johannes Gutenberg University Mainz.

These intermarriages eventually formed “a population resembling modern Central Europeans by the early seventh century,” and reflected the rise of “Christian ideals such as lifelong monogamy, with minimal divorce or remarriage after widowhood” and “strict incest avoidance,” according to the study.

While this time of transition “has traditionally been framed as a conflict between northern ‘barbarians’ and a Roman Empire in decline, newer studies reveal a multifaceted transformation,” the team added.

Rome wasn’t built in a day, so the saying goes, and its flamboyant collapse is arguably still in motion, inspiring new interpretations and never-ending material for history podcasters.

Thanks for reading! See you next week.

2026-05-02 02:32:53

The Chinese government pressured Zambia to cancel RightsCon, the world’s largest digital human rights conference, at the last minute, according to the conference’s organizers. Beijing was upset that the speakers list included prominent figures from Taiwanese civil society, Access Now, the group that organizes RightsCon, wrote Friday.

On Wednesday, guests and speakers from across the planet headed to Zambia to attend RightsCon, the largest digital human rights conference in the world. Zambian immigration officials turned away early arrivals, saying the conference had been cancelled. The African country’s government posted a vague message on Facebook saying the conference had been postponed. By the end of the day, event organizer Access Now officially cancelled the conference and told participants not to go to Africa.

RightsCon is a large conference that takes years to plan and hosts thousands of people. It requires a high level of coordination between Access Now and the host country and it’s odd to cancel something this logistically complicated five days before it begins. On Friday, Access Now revealed details about what happened in a blog post. WIRED earlier reported on the Chinese pressure.

“On April 27, one day after a government press release endorsed RightsCon, we received a phone call from [Zambia’s Ministry of Technology] about an urgent issue and were told that diplomats from the People’s Republic of China (PRC) were putting pressure on the Government of Zambia because Taiwanese civil society participants were planning to join us in person,” the post said.

“This development was extremely concerning and we immediately pushed back. Next, we opened up lines of communication with our Taiwanese participants, as is our practice when there is a potential risk for a specific community. While we needed more information, we continued to feel confident this was something we could address with the government,” Access Now added.

Scheduled speakers included Jo-Fan Yu, the CEO of the Taiwan Network Information Center, a non-profit that monitors Taiwan’s internet infrastructure, and E-Ling Chiu, the director of Amnesty International Taiwan. RightsCon was held in Taipei, Taiwan in 2025. China notoriously considers Taiwan to be part of China, and China has exerted pressure on countries and companies around the world to not acknowledge Taiwan’s independence.

After Zambia called Access Now, it posted a letter on Facebook and sent it to the rights group on WhatsApp. “This was our first official, written communication from the Ministry. According to the letter, the postponement was ‘necessitated by the need for comprehensive disclosure of critical information relating to key thematic issues proposed for discussion,’ which would be ‘essential to ensure full alignment with Zambia’s national values and broader public interest considerations,’” Access Now said in its blog.

“It is simply impossible to postpone an event the size and scale of RightsCon a week before it is set to start,” the organization added. “The summit requires more than a year of planning and preparation to host thousands of people and curate a program of more than 500 sessions.”

The language of the public letter was vague, but Access Now said its background conversations with Zambia were clear. “In order for RightsCon to continue, we would have to moderate specific topics and exclude communities at risk, including our Taiwanese participants, from in-person and online participation,” it said.

“This was our red line,” Access Now said. “Not because we were unwilling to engage, but because the conditions set before us were unacceptable and counter to what RightsCon is and what Access Now stands for.”

2026-05-02 00:50:32

This is Behind the Blog, where we share our behind-the-scenes thoughts about how a few of our top stories of the week came together. This week, we discuss a wild message, big questions about consciousness, and a visit to a museum.

JASON: I got an extremely funny Signal message yesterday after I published my most recent article about Flock. Like, very confused Signal message from someone who ostensibly thought maybe they were a source? We do sometimes get article tips that are like “Off the record, not to be quoted, confidential source:” and then the entirety of the message is like “did you see this article in The New York Times?”

2026-04-30 21:50:51

The Compiler is the hardest boss to reach in the extraction shooter Marathon. To even have the chance to fight it, you need to have cleared six vaults, increasingly elaborate puzzle rooms, in the Cryo Archive, Marathon’s end game map. To even get the chance to enter each of those vaults, you need to obtain a key for each. To even get a chance to get one of those keys, you need to kill another set of bosses or find them in dangerous runs of another map. And if you do find a key, or you bring one into Cryo Archive to use, another team of players may simply kill you and take it from you.

Or, you could pay a random guy on eBay to kill the Compiler for you.

2026-04-30 21:25:36

Residents of an Atlanta suburb have been rocked by the revelation that sales employees at Flock have been accessing sensitive cameras in the town to demonstrate the company’s surveillance technology to police departments around the country. The cameras accessed have included surveillance tech in a children’s gymnastics room, a playground, a school, a Jewish community center, and a pool.

Flock has taken issue with the way that residents and activists have characterized the access but confirmed that the camera access did happen as part of its sales demonstrations. A blog post by Jason Hunyar, a Dunwoody, Georgia resident who learned about Flock accessing the city’s cameras by obtaining Flock access logs via a public records request, is called “Why Are Flock Employees Watching Our Children?”

Flock has pushed back against this characterization on social media, in a blog post, at city council meetings, and in a statement to 404 Media: “The city of Dunwoody is one city in our demo partner program,” a Flock spokesperson told 404 Media. “The cities involved in this program have authorized select Flock employees to demonstrate new products and features as we develop them in partnership with the city. Moreover, select engineers can access accounts with customer permission to debug or fix any issues that may arise. No one is spying on children in parks, as the substack incorrectly asserts.”

Flock also argued that it is more transparent than any other surveillance company because it creates these access logs at all, and they can be obtained using public records requests. “Also, I must state the irony of the situation. We're one of the few technology companies in this space dedicated to radical transparency [...] I understand the concern from the resident, but it is unequivocally false to assert that Flock, or the police, or city officials are doing anything other than using technology to stop major crimes in the city.”

The records Hunyar obtained, however, show that some of the cameras that were accessed were in sensitive locations, including the pool at the Marcus Jewish Community Center of Atlanta (in Dunwoody), the children’s gymnastics room at MJCCA, and several fitness centers and studios. The access logs obtained by Hunyar show at the very least how expansive Flock’s surveillance systems can be in a single city, encompassing not just cameras purchased by the city but also cameras purchased by private businesses.

After Hunyar wrote about what he found, Flock agreed to stop using Dunwoody’s cameras to demonstrate its product. Flock’s FAQ page states that “Flock customers own their data” and “Flock will not share, sell, or access your data.” It also states “nobody from Flock Safety is accessing or monitoring your footage.” Flock also published a blog post that notes “one of the benefits communities value most about Flock technology is the ability for law enforcement to directly access privately owned cameras, if and only if the organization allows them to, for crime-solving and security purposes.”

“Fair questions have been asked about conducting demos on cameras in sensitive locations when doing this very critical testing in the real-world. Last week, in the City of Dunwoody, questions were raised about a demo conducted as part of authorized activity approved under the city's demo partner agreement, on cameras at a local Jewish Community Center. Although the camera was only viewed during a routine demo, we understand that this is a sensitive location for many. We have therefore determined that employees will be trained to only conduct demos in more public locations, like retail parking lots,” Flock wrote in the blog. “Accusing someone of spying on children is not a policy disagreement; it is a life-altering allegation. Claims of inappropriate conduct by our employees are false. The employees being named online are well-intentioned employees who accessed a camera network with the city's explicit permission, as part of their job. They are now being called predators for it.”

2026-04-30 20:56:21

Japan’s Minister of Defense Shinjirō Koizumi posed with a cardboard drone on Monday during a meeting with drone manufacturer AirKamuy. The AirKamuy 150 is a cheap pre-fab cardboard drone meant to die on the battlefield and it comes shipped in a flatpack like an IKEA shelf.

According to Koizumi, Japan’s military has already begun to use the cardboard drone. “The Japan Maritime Self-Defense Force is already utilizing them as targets,” he said in a post on X. “In aiming to become the Self-Defense Forces that makes the most extensive use of unmanned assets, including drones, in the world, strengthening collaboration with startups enthusiastic about the defense sector is indispensable.”