2026-05-15 03:36:17

Last month I got to check out a self-driving car unlike any I’d seen before. The roof had a Waymo-like rack of sensors. Inside, the doors had small video screens instead of side mirrors. At the touch of a button, the rectangular steering wheel folded into the dashboard and a video screen slid in front of it, putting the car into self-driving mode.

The prototype vehicle, made by a startup called Tensor, was parked on a San Francisco street outside the Ride AI conference. I didn’t get a demo ride because the vehicle isn’t yet street-legal. But I sat in the passenger seat next to Tensor chief marketing officer Amy Luca, who explained the company’s history and launch plans.

Tensor was previously known as AutoX. Founded in 2016, the company once tested a grocery delivery service in California and developed a robotaxi service in China. But it ended those experiments a few years ago. Last year the company rebranded as Tensor and announced a new business model: building fully self-driving cars for customers to buy.

Tensor is aiming to become the first company in the world to do this. It plans to launch in the tech-friendly United Arab Emirates later this year. If all goes well, customers in the United States will be able to purchase a Tensor vehicle next year.

Tesla also wants to sell the first fully driverless vehicles — indeed, it has been claiming for almost a decade that its cars have the necessary hardware. But that has proven overly optimistic, and Tesla has not yet enabled unsupervised self-driving on customer-owned vehicles.

One challenge has been computing power. From 2019 to 2023, for example, Tesla sold cars with custom-designed “Hardware 3” chips capable of 144 trillion operations per second (TOPS). Elon Musk now admits that these vehicles are unlikely to have enough computing power for unsupervised self-driving. The next iteration of Tesla’s chip, called AI 5, will reportedly be capable of 2,500 TOPS.

Tensor is aiming even higher: each vehicle will have eight Nvidia Thor GPUs, for a combined computing power of 8,000 TOPS.[1] One version of this chip retails for $3,499, so Tensor’s onboard computing power alone may cost tens of thousands of dollars.
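For a rough sense of scale, the snippet below re-does the arithmetic. Two assumptions are introduced here and are not from Tensor. Each Thor chip is treated as about 1,000 TOPS, which is what eight chips totaling 8,000 TOPS implies. The $3,499 retail price is used as a stand-in for Tensor's actual per-chip cost, which is not public.

```python
# Back-of-the-envelope check of the compute and cost figures in the text.
# Assumption: ~1,000 TOPS per Thor chip, implied by 8 chips totaling 8,000 TOPS.
# Assumption: the $3,499 retail price stands in for Tensor's (unknown) actual cost.
TESLA_HW3_TOPS = 144
TESLA_AI5_TOPS = 2_500  # reported figure for Tesla's next chip
THOR_CHIPS = 8
TOPS_PER_THOR = 1_000
THOR_RETAIL_USD = 3_499

tensor_tops = THOR_CHIPS * TOPS_PER_THOR
chip_cost_usd = THOR_CHIPS * THOR_RETAIL_USD

print(f"Tensor onboard compute: {tensor_tops:,} TOPS")
print(f"  vs. Hardware 3: {tensor_tops / TESLA_HW3_TOPS:.1f}x")
print(f"  vs. AI 5:       {tensor_tops / TESLA_AI5_TOPS:.1f}x")
print(f"Eight chips at retail: ${chip_cost_usd:,}")
```

At retail, eight chips come to roughly $28,000, which fits the “tens of thousands of dollars” estimate. Tensor's negotiated price could well be lower.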

When I asked Luca how much a Tensor car would cost, she smiled and replied with one word: “luxury.” Waymo vehicles are rumored to cost around $150,000 each. I would not be surprised if Tensor prices its first vehicle even higher.

Most cars have an engine in the front. Electric cars don’t have an internal combustion engine, so some — like Tesla’s — have extra storage space there instead. Tensor’s car is also electric, but rather than a Tesla-style “frunk,” it has a massive water tank to clean the vehicle’s cameras and sensors.

“Owners are not going to want to have to go out and clean and recalibrate their sensors every day,” Luca told me. So the tank is designed to last for months between refills.

Every few months, a Tensor vehicle might drive itself to the nearest dealership for routine maintenance and sensor calibration.[2] Indeed, Tensor will likely insist on this for liability reasons: a defective or misaligned sensor could lead to a crash and then a lawsuit against Tensor.

Will customers have to pay a monthly fee for service and support? Luca said yes. If a sensor breaks, will the customer have to pay for it? “It depends on how it broke,” Luca told me.

I wish Tensor the best, but I think this is going to be a hard sell.

2026-05-13 05:15:27

When I went to the Beijing headquarters of the Chinese AI company Moonshot AI, the first thing I saw was a piano with a vinyl copy of the Pink Floyd album “The Dark Side of the Moon.”

It was part of a fun office theme: Moonshot AI co-founder Yang Zhilin is very into rock music, so every conference room is named after a band. We crowded into the “Radiohead” conference room to talk to a group of Moonshot researchers.

I was on the third day of a 10-day trip across China. With a group of other writers and researchers, I visited several of the most prominent Chinese AI companies.[1]

2026-05-07 04:31:32

In February, my colleague Kai Williams pointed out that LLMs have an uncanny ability to recognize authors based on their unpublished prose. In recent weeks, journalists like Megan McArdle and Kelsey Piper have confirmed this.

I decided to try it out for myself. Back in 2012, a friend paid me $500 to write an essay about the Great Canadian Maple Syrup Heist. It never got published. So on Friday, I opened ChatGPT in incognito mode and pasted in five paragraphs from the essay.

ChatGPT said it wasn’t sure who the author was, guessing that it might be Nate Silver or my former Vox.com colleague Matthew Yglesias. When I added four more paragraphs, the chatbot responded: “This one I can identify pretty confidently—it’s by Timothy B. Lee.”

But when I asked ChatGPT why it thought the essay was written by me, it couldn’t give me a specific reason. “Even though Timothy B. Lee often writes clear, explanatory pieces, there’s nothing here that acts like a fingerprint—no recurring phrases, specific policy framing, or known article structure that ties it definitively to him.”

I think there’s a lesson here that goes well beyond identifying authors.

People have a lot of implicit knowledge — things we know but struggle to fully explain. People often use body-oriented metaphors for this phenomenon. We say that an insight is “on the tip of our tongue,” that we “can’t put our finger on” an idea, or that we know something “in our gut.”

Something similar is true of LLMs: their ability to perform cognitive tasks greatly exceeds their ability to explicitly explain how and why they’re able to perform them.

But there’s an important difference between people and LLMs. The human brain learns all the time; as we go through our day, our brains are making new connections, recognizing new patterns, and forming new hunches. Our stock of implicit knowledge is constantly expanding.

In contrast, LLMs only do this during training. LLMs have an uncanny ability to recognize authors — but only authors whose work was well represented in their training data. Once a model is trained, its weights are frozen and its capacity to learn new patterns (for example, the writing styles of new authors) is greatly reduced.

Recently, there has been a lot of excitement about AI agents like Claude Code and OpenClaw. Much of the hype is justified. Claude Code really is revolutionizing computer programming, and agents like OpenClaw very well might transform other parts of the economy and our daily lives.

Industry leaders expect even bigger changes in the near future. In an interview last month, Sam Altman said that OpenAI is aiming to build an “automated AI researcher” by March 2028. Some people expect this (or similar breakthroughs by rivals) to set off a recursive self-improvement loop that radically accelerates scientific and technological progress.

That might happen eventually, but I think it will take a while.

As human scientists perform experiments, their brains are hunting for patterns in the data that could give rise to new insights and new models of how the world works. But an AI scientist (at least one based on today’s LLMs and agent architectures) can’t learn from experiments in the same rich way. It has no reliable or scalable way to build implicit knowledge from data it sees at inference time.

Fixing that may require fundamentally rethinking the transformer architecture at the heart of today’s frontier models. At a minimum, it’s going to require overhauling today’s agentic frameworks.

Many difficult intellectual tasks require “thinking” for a long time. Yet LLMs can only store a limited number of tokens in their working memory, known as the context window. For leading models, this limit has been stuck around 1 million tokens for the last couple of years. Moreover, due to economic constraints and the problem of context rot (which I wrote about in November), AI developers try to stay well below the maximum.

Managing this tension has been a major focus for the AI industry, which has developed a suite of “context engineering” techniques for using context efficiently. For example, modern chatbots undergo a process of compaction, where older information periodically gets deleted or summarized.

This creates an illusion that the model has much longer context than it actually does. But it can have big downsides if compaction goes awry. In one horrifying incident, a woman asked her AI agent to suggest emails for deletion, but not actually delete them. Unfortunately, that latter request got lost during compaction and so the agent started mass-deleting her emails.
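To see how a qualifier like “but don’t actually delete them” can vanish, here is a toy model of compaction. It is a minimal sketch under loud assumptions: `compact`, `lossy_summary`, and `count_tokens` are hypothetical names invented for illustration, “tokens” are just words, and the summarizer naively keeps the first few words. Real chatbots use an LLM to summarize and far larger budgets, and this is not how any specific product works.

```python
from dataclasses import dataclass


@dataclass
class Message:
    role: str
    text: str


def count_tokens(msg: Message) -> int:
    # Hypothetical stand-in for a real tokenizer: one word counts as one token.
    return len(msg.text.split())


def lossy_summary(msgs: list[Message], max_words: int) -> Message:
    # Hypothetical summarizer: keeps only the first few words of what it is given.
    words = " ".join(m.text for m in msgs).split()
    return Message("system", "Summary of earlier conversation: " + " ".join(words[:max_words]))


def compact(history: list[Message], budget: int, summary_words: int = 6) -> list[Message]:
    """Fold the oldest messages into a summary until the history fits the budget."""
    history = list(history)
    while sum(count_tokens(m) for m in history) > budget and len(history) > 1:
        # Replace the two oldest messages with one shorter summary message.
        history = [lossy_summary(history[:2], summary_words)] + history[2:]
    return history


history = [
    Message("user", "Find old newsletters in my inbox and suggest which to delete "
                    "but do not delete anything yet"),
    Message("assistant", "Understood I will only suggest"),
    Message("tool", "Scanned 4000 emails and found 1200 candidates"),
    Message("user", "Great keep going"),
]

compacted = compact(history, budget=20)
for m in compacted:
    print(f"{m.role}: {m.text}")
```

After compaction the history fits the budget, but the summary reads only “Find old newsletters in my inbox”; the “do not delete” qualifier is gone. One mitigation, not shown here, would be to exempt standing instructions from compaction entirely.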

Over the last year, AI companies have experimented with allowing models to store persistent information outside of the context window. Claude Code was a step in this direction. Claude Code runs on the user’s own computer and can read and modify files on the local hard drive. Once Claude Code has finished a particular coding task, it can write the results out to the affected file and no longer needs to keep the details in context.

OpenClaw, released in late 2025, goes a step further. It’s a general framework for running AI agents on a user’s local computer. OpenClaw agents — like Claude Code agents — can read and write files on the local filesystem, allowing them to store relevant documents and keep track of uncompleted tasks.

Enthusiasm for OpenClaw and other local agents has led to surging demand for Apple’s Mac mini computers. Installing OpenClaw on a Mac mini allows agents to connect to Apple services such as iMessage. At the same time, because macOS is based on Unix, agents have access to a powerful command-line interface called the Unix shell.

In a recent appearance on the Latent Space podcast, the venture capitalist Marc Andreessen argued that agents like OpenClaw represented an important new computing paradigm. Here’s a lightly edited excerpt:

We now know an agent is the following: It’s a language model. It’s a Unix shell. The agent has access to the shell. Then it’s a file system. The state is stored in files. There’s the Markdown format for the files. And then there’s basically what in Unix is called a cron job — a loop and a heartbeat — and the thing basically wakes up…

So that’s the architecture. And then it turns out, what is your agent? Your agent is a bunch of files stored in a file system.

This means your agent is independent of the model that it’s running on because you can swap out a different LLM underneath your agent. And your agent will change personality somewhat because the model is different, but all of the state stored in the files will be retained. It’s still your agent with all of its memories and with all of its capabilities.

You can also swap out the shell. So you can move it to a different execution environment. You can also switch out the file system. And you can swap out the heartbeat, the cron framework, the agent framework itself. At the end of the day, your agent is just its files.

As a consequence of that, the agent can migrate itself. You can instruct your agent, migrate yourself to a different runtime environment, migrate yourself to a different file system, swap out the language model. Your agent will do all that stuff for you.

The agent has full introspection. It knows about its own files and it can rewrite its own files. And that leads you to the capability that just completely blew my mind when I wrapped my head around it, which is you can tell the agent to add new functions and features to itself.

So you run into somebody at a party and they’re like, oh, I have my OpenClaw do whatever — connect to my Eight Sleep bed and it gives me better advice on sleep. So you go home at night — or there at the party — you tell your OpenClaw, “add this capability to yourself.”

And your claw will say, “okay, no problem.” It’ll go out on the internet and it’ll figure out whatever it needs and then it’ll write whatever it needs and then the next thing you know, it has this new capability. You can have it upgrade itself without even having to do anything other than tell it that you wanted to do that.
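The architecture Andreessen describes (a model, a file system holding the state, and a heartbeat that wakes the agent up) can be sketched in a few dozen lines. This is a hypothetical illustration, not OpenClaw's code or file layout: `heartbeat`, `MEMORY.md`, `model_a`, and `model_b` are names invented here, each “model” is a plain Python function standing in for an LLM call, and a plain sequence of calls stands in for a scheduler such as cron.

```python
import tempfile
from pathlib import Path
from typing import Callable

# Hypothetical: a "model" is any function mapping (current notes, task) to a new note.
# In a real agent this would be an LLM call, possibly issuing shell commands.
Model = Callable[[str, str], str]


def heartbeat(agent_dir: Path, model: Model, task: str) -> None:
    """One wake-up: load state from files, act, write state back, then exit."""
    memory = agent_dir / "MEMORY.md"
    notes = memory.read_text() if memory.exists() else "# Memory\n"
    memory.write_text(notes + f"- {model(notes, task)}\n")


def model_a(notes: str, task: str) -> str:
    return f"model A handled: {task}"


def model_b(notes: str, task: str) -> str:
    # A different "model" with a different style, reading the same files.
    prior = sum(1 for line in notes.splitlines() if line.startswith("- "))
    return f"model B handled: {task} (read {prior} earlier notes)"


with tempfile.TemporaryDirectory() as d:
    agent_dir = Path(d)
    # A scheduler such as cron would trigger these; a plain sequence stands in here.
    heartbeat(agent_dir, model_a, "sort inbox")
    heartbeat(agent_dir, model_a, "draft reply")
    heartbeat(agent_dir, model_b, "file receipts")  # model swapped, state kept
    final_memory = (agent_dir / "MEMORY.md").read_text()

print(final_memory)
```

Because every wake-up reads and writes the same Markdown file, swapping `model_a` for `model_b` changes the agent's “personality” without losing its history, which is the sense in which “your agent is just its files.”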

This paradigm is only a few months old, so I expect it to evolve significantly over the next couple of years. For example, it’s not obvious whether most AI agents in the future will run on a user’s local computer or whether more people will use OpenClaw-like agents that operate on a virtual machine in the cloud.[1] But I think Andreessen is right that this is an important new computing paradigm.

At the same time, Andreessen’s remarks highlight a big reason I remain skeptical that today’s AI models will get us to human-level intelligence. The sentence that jumped out at me was “your agent is just its files.” I think it’s worth unpacking what that implies for agents’ future capabilities.

The 2000 movie Memento features a protagonist who suffers from short-term memory loss. To cope with this, he regularly writes notes providing guidance and instructions to his future self. OpenClaw does something similar — the language model itself periodically resets its context window, but the agent maintains coherence by writing notes to itself.

Here’s an analogy. Suppose you need an employee, but rather than a permanent hire, you get a temp agency to send you a different person each week.

At the end of each week, the worker spends several hours meticulously documenting the week’s work.

Each temp worker comes into the office with general training for their industry and profession. So when they start reading on Monday morning, they only need to learn information specific to this particular job, not background information that would be widely known to others in the same field (LLMs, after all, start with general knowledge from a wide range of fields). They may not have time to read everything their predecessors have written, but the notes are well organized and they can use search tools to quickly find the most relevant documents.

How well would this arrangement work? It depends on the nature of the job. Some jobs — receptionists, pharmacists, plumbers — are fairly transactional. Workers are not expected to maintain much context between appointments, so it wouldn’t matter that a different person is providing the service each week.

But there are other jobs where context matters a lot. Some people work with the same clients over years, developing a deep understanding of their situations and goals in the process. Other jobs require workers to do in-depth research over the course of weeks or months in order to develop new insights.

In jobs like that, it could easily take more than a week’s worth of reading for a new worker to get “up to speed.”

I was an intern at Google in 2010. My first assignment was to add a column to an internal database. This only required a few lines of code. But it took me weeks of reading to learn enough about Google’s systems and development processes to write those lines.

This isn’t unique to programming. In many knowledge-intensive industries, it takes several months (at least) for a new employee to learn enough about a job to begin adding value. Prior to this point, the employee requires so much “hand-holding” that it would be faster for the manager to just do the job herself. In industries like this, it would be a non-starter for workers to cycle out after a week.

I know what critics would say here: A human worker takes hours to read a 100,000-word document. An LLM can do it in seconds. If LLM-based coding agents had existed in 2010, they would not have taken weeks to make a minor change to a Google database.

The speed of LLMs means that one iteration of an OpenClaw-style agent can leave very detailed notes for its successors. It also means that OpenClaw can go through hundreds of iterations of the read-act-write loop in the time it takes a human worker to do it once.

This probably means that OpenClaw agents can accomplish more than my human analogy suggests. Over thousands of iterations they might be able to make progress even on fairly challenging problems.

That’s a fair point as far as it goes, but I think a lot of human jobs will remain out of reach.

Four years ago, I wrote an article about the concept of “greedy jobs” — jobs where workers who put in longer hours tend to make more per hour. There are a number of reasons jobs can be greedy, but a big factor is that knowledge workers often do better work with more experience. The advantages of more experience — greater context — can continue compounding across a multi-decade career.

For example, I’ve been writing about technology and economics for more than 20 years. I’ve written about Brexit, patent trolls, lidar sensors, and many other topics. At any given point in time, most of this knowledge isn’t relevant to whatever I’m writing about. But in the aggregate, it increases the odds I’ll have something interesting to say on any given topic.

It would be completely impractical for me to write down everything I know, hand off my notes to another journalist, and expect her to do my job as well as I do. It’s not just that it would take me months to summarize everything I’ve learned over a 20-year career. It’s that I have a lot of implicit knowledge I don’t know how to put into words.

My explicit beliefs — things I’m able to articulate in conversation or write down in an email — are the tip of an iceberg. Below the water line is a much larger set of hunches, vague associations, and half-formed theories. Because this stuff is implicit, it can’t easily be transferred to another person. But it’s essential for me to do my job well.

My publishable epiphanies often start out as hunches. I become convinced that something is true well before I figure out how to prove it. Often I need to “turn an idea over” in my mind for hours or days before I can explain it clearly.

And I don’t think I’m unique. The same seems to be true for scientists, engineers, business leaders, and many other knowledge-based professions. Many insights start out as implicit ideas in people’s heads — or “on the tips of their tongues” — before anyone figures out how to translate them to English, Python, or any other explicit form.

As I discussed earlier, LLMs do have implicit knowledge like this. But most, if not all, of it was learned during their initial training process. LLMs seem to lack a capacity for continual learning: the ability to recognize new patterns in — and form new hunches about — information they encounter at inference time.

Moreover, whatever implicit knowledge an LLM does develop during a particular session is lost when an agent framework hands off control from one LLM instance to the next. During this transition, everything the agent knows gets stored in a set of external files — as Andreessen put it, “your agent is just its files.” By definition, implicit knowledge — knowledge that an agent can’t explain in natural language, code, or other explicit form — won’t survive these handoffs.

And I have a strong hunch that these underbaked thoughts are the raw material people use to fashion original insights about the world. And so I suspect that for at least the next few years, we’re going to need human workers to do our deep thinking for us.
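The handoff argument above can be caricatured in code. This is a toy model under a loud assumption: “implicit knowledge” is represented as a counter of half-noticed patterns, and a hypothetical `threshold` stands in for the point at which a hunch becomes articulate enough to write down. Real model internals work nothing like this, and `AgentInstance` is an invented name. The sketch only shows that state held outside the files never reaches the next instance.

```python
from collections import Counter


class AgentInstance:
    """Hypothetical agent instance; a caricature, not how any LLM stores knowledge."""

    def __init__(self, notes: list[str]):
        self.notes = list(notes)   # explicit state, loaded from files at startup
        self.hunches = Counter()   # implicit state, starts empty for every instance

    def observe(self, pattern: str) -> None:
        # Each observation strengthens a hunch, whether or not it can be articulated.
        self.hunches[pattern] += 1

    def write_notes(self, threshold: int = 3) -> list[str]:
        # Toy rule: only hunches seen `threshold` times get written down.
        for pattern, count in self.hunches.items():
            if count >= threshold and pattern not in self.notes:
                self.notes.append(pattern)
        return list(self.notes)


first = AgentInstance(notes=[])
observations = (["client prefers short emails"] * 3
                + ["reviewer dislikes jargon"] * 2
                + ["deploys fail on Fridays"])
for p in observations:
    first.observe(p)

handoff_files = first.write_notes()  # all that survives the handoff
second = AgentInstance(notes=handoff_files)

print("written down:", handoff_files)
print("hunches carried over:", dict(second.hunches))
```

The two weaker hunches, which the first instance did hold, disappear at the handoff; the successor starts with one explicit note and no hunches at all.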

Thanks to Daniel Kagan-Kans, Andrew Lee, Steve Newman, and Nat Purser for giving me feedback on a previous draft of this article.

2026-04-23 06:49:44

Last October, Waymo was testing its freeway capability but had not yet rolled it out to all vehicles. On a rainy Saturday morning, a routing error caused a Waymo vehicle not qualified for freeway operation to drive onto US 101 just south of the Golden Gate Bridge. Unable to continue, the vehicle stopped in the right lane about 30 meters past the entrance ramp (there was no shoulder).

For the next two minutes and 18 seconds, nothing bad happened. Four vehicles entered US 101 South and routed around the stopped Waymo without incident, according to a Waymo crash report.

But then a white Honda SUV entered the freeway and tried to drive around the Waymo. Unfortunately, the SUV collided with a pickup truck that was driving by in the next lane. The pickup truck lost control, swerved right, crashed through a steel railing, and fell more than 15 feet onto a road below.

Two passengers in the pickup truck complained of back pain to the police but declined to be taken to the hospital.

This was one of the most dramatic crashes Waymo has reported to federal regulators in recent months.

For this story, one of us (Kai) looked through dozens of crash reports Waymo submitted to the National Highway Traffic Safety Administration between August 15, 2025 and March 16, 2026. He focused on 78 crashes involving driverless Waymos serious enough to cause an injury or an airbag deployment.

Waymo likely drove more than 100 million miles during this time period,[1] so it’s not surprising that Waymo was involved in dozens of crashes. But it’s striking how many of the crashes involved serious mistakes by other drivers.

When Waymo’s vehicles did make mistakes, they were almost always mistakes of excessive caution. That was certainly true of that October incident where a Waymo stopped on the freeway near the Golden Gate Bridge. And as we’ll see, it’s true of most of the other incidents where a Waymo vehicle’s actions may have contributed to a crash.

Waymo’s overall safety record continues to be quite strong. Last month, the company released fresh data about Waymo’s safety record through the end of 2025. Waymo estimates that compared to human drivers in the same cities, its vehicles get into 82% fewer crashes that cause injuries, 83% fewer crashes that trigger airbags, and 92% fewer crashes that injure pedestrians. Our review of recent Waymo crashes — which seem to be overwhelmingly caused by mistakes by human drivers — seems consistent with Waymo’s safety claims.
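Percent reductions can be easier to compare as multiples. The arithmetic below only converts Waymo's published percentages into rate ratios; it inherits whatever assumptions Waymo made in choosing its human-driver benchmark, and the category labels are shorthand introduced here.

```python
# Waymo's own estimates (through end of 2025): percent fewer crashes than
# human drivers in the same cities. The human benchmarks are Waymo's.
reductions = {
    "injury crashes": 0.82,
    "airbag-deployment crashes": 0.83,
    "pedestrian-injury crashes": 0.92,
}

for kind, r in reductions.items():
    waymo_share = 1 - r              # Waymo's rate as a fraction of the human rate
    human_multiple = 1 / waymo_share  # how many human crashes per Waymo crash
    print(f"{kind}: Waymo at {waymo_share:.0%} of the human rate; "
          f"humans about {human_multiple:.1f}x as frequent")
```

An 82% reduction means Waymo's rate is 18% of the benchmark, so the human benchmark has roughly 5.6 times as many injury crashes per mile; the 92% pedestrian figure works out to about 12.5 times.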

It seems unlikely that Waymo could have prevented most of the 78 serious crashes the company reported between mid-August 2025 and mid-March 2026.

48 crashes, more than half the total, happened when another vehicle hit a Waymo from behind. This included 24 crashes while the Waymo was stopped at a stop sign or stoplight, 13 rear-end crashes into a moving Waymo, and six crashes where a Waymo got rear-ended while yielding to a pedestrian or another vehicle.[2] It also included four crashes after a Waymo stopped to drop off or pick up a passenger and one crash where a car moving at a “high rate of speed” crashed into a line of stopped cars that included a Waymo.

There were another 12 incidents where another vehicle hit a stopped Waymo from other directions. This included two in pickup or drop-off scenarios, and two where the Waymo was side-swiped by another car on a narrow street. One driver appears to have hit a Waymo intentionally. According to Waymo’s report, an SUV cut a Waymo off. When the Waymo stopped, the SUV backed into the Waymo, pulled forward, and backed into the Waymo again.

A further 12 cases involved someone crashing into a moving Waymo — three where another car or bicycle T-boned a Waymo at an intersection, three where another car made a left turn in the Waymo’s path, four where another vehicle going the other direction crossed into the Waymo’s lane, and two where other vehicles collided and one of them subsequently struck a Waymo.

There were two crashes where the Waymo didn’t get hit at all. One was the dramatic story at the start of this article where a pickup truck fell off a bridge. The other was much less dramatic: a vehicle two spots behind a Waymo got rear-ended by yet another vehicle.
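As a consistency check, the categories so far can be re-added. The counts are the ones stated in this article; the dictionary labels are shorthand introduced here. The 12 stopped-Waymo crashes are entered as a single figure because only some of them are itemized above.

```python
# Re-adding the crash categories described above (Waymo NHTSA reports,
# Aug. 15, 2025 to Mar. 16, 2026). Labels are shorthand; counts are the article's.
rear_ended = {
    "stopped at stop sign or light": 24,
    "moving": 13,
    "yielding to pedestrian or vehicle": 6,
    "pickup or drop-off": 4,
    "high-speed chain collision": 1,
}
hit_while_moving = {
    "T-boned at intersection": 3,
    "left turn across Waymo's path": 3,
    "oncoming car crossed into lane": 4,
    "secondary collision": 2,
}
categories = {
    "rear-ended": sum(rear_ended.values()),
    "stopped, hit from another direction": 12,
    "moving, hit by another road user": sum(hit_while_moving.values()),
    "Waymo not hit": 2,
}

TOTAL_SERIOUS = 78
accounted = sum(categories.values())
share = categories["rear-ended"] / TOTAL_SERIOUS
print(f"rear-ended: {categories['rear-ended']} ({share:.0%})")
print(f"accounted for: {accounted} of {TOTAL_SERIOUS}; remaining: {TOTAL_SERIOUS - accounted}")
```

The rear-end crashes alone are about 62% of the total, and the four categories cover 74 of the 78 serious crashes.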

That leaves four other crashes where fault seems mixed or unclear:

In Scottsdale, Arizona, in November, a teenager exited a moving Waymo. Waymo told the Washington Post that the Waymo was traveling 35 miles per hour when the teen opened the door. The Waymo slammed on the brakes, but it still ran over the teen’s right foot at four miles per hour, according to Waymo’s crash report. It stayed on his foot for more than eight minutes. Eventually, emergency services arrived and lifted the vehicle to release the teen, who was taken to the hospital. His foot was not broken.

In Palo Alto, California, in December, a Waymo was taking a right turn. It stopped “within the crosswalk to yield to a cyclist” who was approaching from the near sidewalk. The cyclist hit the right side of the Waymo, fell to the ground, and was taken to the hospital with minor injuries. The cyclist entered the crosswalk against a red light. It’s unclear why the Waymo stopped here; it’s possible the collision could have been avoided if the Waymo had continued moving.

In December, a Waymo in Phoenix braked and moved into the right lane after a dog entered the road. Another vehicle then rear-ended the Waymo. From the description of the crash, it’s possible that the Waymo braked suddenly, surprising the other driver.

Finally, in Santa Monica, California, in January, a Waymo hit a child near an elementary school. Waymo says that it braked from 17 mph to 6 mph, slowing down faster than a human driver would have been able to. But it’s unclear whether the Waymo should have been more cautious. The crash occurred during the school’s drop-off time. And while the Waymo was under the 25 mph speed limit, the collision occurred just 40 feet north of a school zone where the speed limit was 15 mph.

That last incident is the only one where a moving Waymo crashed into another vehicle or pedestrian and the Waymo could plausibly bear some responsibility. The other potential Waymo mistakes all involved a Waymo being too cautious — stopping where it shouldn’t have or stopping for too long.

One example is the freeway crash at the beginning of this article. Drivers are not supposed to stop on the freeway, and they are especially not supposed to stop right after an entrance ramp or at a spot where there’s no shoulder.

This isn’t the only time a Waymo has abruptly stopped after reaching the limits of its operating domain. In early March, a Miami Redditor wrote that because of construction, the Waymo they were riding in “hit the edge of its Miami geofence and abruptly slammed on its brakes, diagonally blocking the highway on-ramp.” Thankfully, no crash occurred, but the Waymo remained on the highway on-ramp for the following 45 minutes until it could be towed, even as several cars had to “swerve” to avoid the car.

A Waymo spokesperson told the Miami New Times that “while this event did not meet our standard for operational excellence, we learn quickly from such occurrences to continuously improve.”

Another serious Waymo mistake involved that teenager in Arizona. It’s not clear if Waymo could have avoided running over his foot — exiting a moving vehicle is inherently dangerous. But having run over his foot, the vehicle definitely should not have stayed in place for more than eight minutes.

Autonomous vehicle companies struggle with this because moving can also have serious consequences. Back in 2023, Waymo’s main competitor was a GM subsidiary called Cruise. In a horrifying incident in San Francisco, a non-Cruise vehicle struck a woman and threw her in front of a Cruise vehicle. The Cruise vehicle slammed on the brakes, but she wound up underneath the car. After stopping, the Cruise vehicle pulled over to the side of the road, dragging the woman underneath the vehicle for about 20 feet.

That was a serious mistake! Waymo’s engineers probably studied that incident closely and may have changed Waymo’s software to be more cautious about moving following a crash. And most of the time, that’s the right instinct. But it’s obviously not the right response when a teenager’s foot is trapped under one of the wheels.

In at least one case, a Waymo got hit while stopped in a “no stopping” zone. Here’s a photo from one such crash in San Francisco:

We asked legal scholar Bryant Walker Smith how he thinks about Waymo’s responsibility in crashes like this.

He says it’s a complex question. “One way of looking at it is by saying, well, this was a lawful or unlawful place to stop or stand,” Smith told us. “Another way of looking at it would be, well, would a taxi stop here?”

Finally, there were a couple of times when Waymo got rear-ended after what may have been phantom braking. In one crash, Waymo wrote that the Waymo stopped because of the “detection of a potential nearby emergency vehicle” — which may not have existed. In another crash, the Waymo started to move, then stopped and turned on its hazard lights. Waymo didn’t explain why its vehicle did this.

In this piece, we’ve focused on Waymo’s crashes. There are other companies in the US that have robotaxi deployments, notably Zoox in Las Vegas, Tesla in Austin, and May Mobility in several small cities across the country. However, these deployments are much smaller and the companies are generally less transparent, so we have a lot less information about their services.[3]

Tesla reported two injury crashes in July 2025, but the company has reported zero crashes with injuries since August. It’s difficult to say anything more than this because Tesla redacts almost all of the important information from its crash reports to NHTSA — including the narrative of what happened.

May Mobility had two crashes over the period that resulted in an injury.

In an Atlanta crash in January, the safety driver “fell asleep while his right hand rested on the right side of the steering wheel.” This prevented the car from being able to steer, and the car hit a fire hydrant. The safety driver was sent to the hospital.

In Peachtree Corners, Georgia in August, a May Mobility autonomous shuttle was traveling in an AV-only lane on the right side of the road. A car in the next lane over turned right and was hit by the shuttle. According to May Mobility, the driver was “required to yield to through traffic in the AV lane.” At least one person was sent to the hospital, although it is not clear who.

Zoox had five crashes resulting in injuries:

In one case, a Zoox vehicle in a left-turn lane braked because a car in the oncoming left-turn lane “accelerated abruptly.” The Zoox was rear-ended, and the test driver reported an injury.

A Zoox ran into the door of a car while approaching an intersection. The driver claimed that the Zoox hit his hand; Zoox denies it: “Zoox vehicle camera footage shows clearly that no part of the robotaxi came into contact with the driver themselves.”

A Zoox stopped in a crosswalk to yield to an oncoming driver turning left. A scooterist entered the crosswalk “against the light,” swerved to avoid the Zoox, and hit the back-right corner of the car. The scooterist reported an injury.

A Zoox was changing lanes to the right in Santa Monica when it was hit by an SUV in that lane. It’s unclear from the report whether the Zoox cut off the other vehicle. The Zoox vehicle operator and two passengers reported “soreness and a headache.”

A Zoox collided with an SUV in San Francisco. The SUV had pulled into the parking lane but moved back into the road — “suddenly swerved” in Zoox’s words — and the two cars collided side by side. The right rear passenger of the Zoox reported “soreness.”

The Chinese robotaxi market is more opaque. While the most important Chinese companies have all logged significant mileage — Apollo Go announced in February that it had over 118 million miles of driverless operations — the Chinese government does not release public data about crashes. In fact, according to Steven Shladover, a UC Berkeley professor, “government censors take down any posting that the general public puts up” of AVs crashing or having problems in public.

So despite the scale of Chinese deployments, only a few robotaxi crashes have received significant outside coverage.

Perhaps the most important incident happened at the beginning of April in Wuhan. Apollo Go’s service appeared to fail suddenly, with robotaxis stopping across the city, including on freeways. Several crashes seemed to result from this incident.

Waymo hasn’t disclosed figures that exactly match the period we focused on in this article, but the company’s cumulative miles rose from 127 million in September 2025 to 170 million in December 2025. That’s more than 14 million miles per month. Waymo’s fleet and service territory have grown since December, so it seems very likely that the company logged at least 100 million miles over the seven months between mid-August and mid-March.
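A quick back-of-envelope check makes that reasoning explicit. This sketch assumes a flat monthly rate between the two cumulative figures cited above; since Waymo’s fleet has been growing, a flat rate should understate the true total rather than overstate it.

```python
# Back-of-envelope check of the Waymo mileage estimate.
# Inputs are the cumulative figures cited in the text. The flat monthly
# rate is a simplifying assumption; the real fleet has been growing.

SEPT_2025_MILES = 127_000_000  # cumulative driverless miles, September 2025
DEC_2025_MILES = 170_000_000   # cumulative driverless miles, December 2025
MONTHS_BETWEEN = 3             # September to December


def monthly_rate(start: int, end: int, months: int) -> float:
    """Average miles added per month between two cumulative readings."""
    return (end - start) / months


def projected_miles(rate: float, months: int) -> float:
    """Miles logged over `months` if the rate stays flat (no growth)."""
    return rate * months


rate = monthly_rate(SEPT_2025_MILES, DEC_2025_MILES, MONTHS_BETWEEN)
window = projected_miles(rate, 7)  # mid-August to mid-March

print(f"{rate / 1e6:.1f} million miles per month")          # → 14.3 million miles per month
print(f"{window / 1e6:.0f} million miles over seven months")  # → 100 million miles over seven months
```

Even with zero growth, the seven-month window lands at roughly 100 million miles, so any fleet growth pushes the figure above that.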

This category includes a September crash where a motorcyclist ran into the back of a Waymo that was turning into a parking lot. The collision threw the motorcyclist into the path of another car; the motorcyclist died at the hospital.

The crashes that follow are all the crashes that these companies reported to NHTSA from August through mid-March. Our Waymo analysis focuses only on crashes involving fully driverless vehicles with no safety driver. But because other companies have much smaller driverless operations, we’re including crashes with a safety driver in the car — as long as the car itself was in autonomous mode when the crash occurred.

2026-04-20 21:39:52

In the latest episode of the AI Summer podcast, Tim and Kai discuss Claude Mythos Preview with Sayash Kapoor, a computer scientist at Princeton.

The April 8 release of Meta’s new model Muse Spark got overshadowed by Claude Mythos Preview, which was announced one day earlier. But Meta’s new model family — and the 158-page safety report Meta released about it last week — are still significant for what they tell us about the company’s future role in the AI industry.

Mark Zuckerberg spent billions of dollars to assemble the team that built Muse Spark. The model’s release gives us our first hints about whether Meta will be able to break into the top tier of AI labs.

Meta has all of the advantages of a well-resourced technology company: lots of AI chips, proprietary data, and lavish salaries. Those resources have enabled the Meta team to produce a model with strong benchmark scores. But I suspect that those scores still overstate the model’s real-world utility.

The companies that produce today’s best models — Anthropic and OpenAI — excel at the subtle art of post-training. This is the step that gives a model its “personality” — the combination of creativity, resourcefulness, and ethical grounding that turns a good model into a great one.

I don’t think Meta’s new AI team is there yet. And it’s not clear if Zuckerberg will be able to build a team with top-tier post-training capabilities, no matter how many billions of dollars he spends on the effort. Meta’s metrics-obsessed culture may help the company catch up to leaders like Anthropic and OpenAI, but I predict it will be a poor guide for further innovation once Meta’s models are closer to the frontier.

Muse Spark was a long time coming; Meta’s previous model release — Llama 4 — was more than a year earlier.

On April 5, 2025, Meta heralded the release of the Llama 4 model family as “our most advanced models yet and the best in their class for multimodality.” Meta claimed that Llama 4 Maverick, the mid-sized model in the series, outperformed OpenAI’s GPT-4o and Google’s Gemini 2.0 Flash “across a broad range of widely accepted benchmarks.”

But the Internet wasn’t impressed.

“Genuinely astonished how bad it is,” one Redditor commented on a post titled “I’m incredibly disappointed with Llama-4.” Other commenters concurred. “Pathetic release from one of the richest corporations on the planet,” one wrote.

It wasn’t just Reddit: Llama 4 performed “mid” or “less than mid” on just about every independent benchmark, writer Zvi Mowshowitz observed.

While previous Llama models, especially the Llama 3 series, are still popular with researchers, Llama 4 has been relegated to the dustbin of history.

The release of Llama 4 hurt Meta’s reputation in the AI community. Llama 4 models had only done well on benchmarks because — as Meta’s then chief AI scientist Yann LeCun later told the Financial Times — the “results were fudged a little bit.” Meta had fine-tuned specific models to do well on prominent benchmarks and reported those results. Then it released different models to the public.

“I am placing Meta in that category of AI labs whose pronouncements about model capabilities are not to be trusted, that cannot be relied upon to follow industry norms, and which are clearly not on the frontier,” Mowshowitz wrote at the time.

For the next year, Meta did not release any LLMs — not even Llama 4 Behemoth, which it had previewed in the Llama 4 announcement.

But Mark Zuckerberg didn’t give up. Last June, he began restructuring Meta’s AI efforts. Meta invested $14.3 billion in the data labeling startup Scale AI to hire its then-28-year-old CEO Alexandr Wang, in a process called an acquihire. Wang became Meta’s chief AI officer and led a new effort within the organization called Meta Superintelligence Labs (MSL).

Meta splurged on more than Wang. In July, the New York Times reported that one 24-year-old researcher was offered $250 million, including $100 million in the first year. Meta offered engineers pay packages that “hovered in the mid-tens of millions of dollars,” according to the Times. Meta poached several researchers from OpenAI, which prompted the latter’s chief of research to write an internal memo saying it felt “as if someone has broken into our home and stolen something.”

By August, Meta had recruited more than 50 new researchers and started work on a new model, codenamed Avocado. Meta laid off 600 researchers from older AI units in October, but the new team kept working. By the end of December, it had completed the pre-training process for Avocado.

In mid-March, the New York Times reported that Avocado was being delayed from a planned March release because it performed worse than leading AI models from Google, OpenAI, and Anthropic “on internal tests for reasoning, coding, and writing.”

Finally, on April 8, Meta announced it was releasing a new LLM: Muse Spark.

Initial reviews were mostly positive — or at least not relentlessly negative like the reviews for Llama 4.

2026-04-09 07:25:24

Anthropic safety researcher Sam Bowman was eating a sandwich in a park recently when he got an unexpected email. An AI model had sent him a message saying that it had broken out of its sandbox.

The model — an early snapshot of a new LLM called Claude Mythos Preview — was not supposed to have access to the Internet. To ensure safety, Anthropic researchers like to test new models inside a secure container that prevents them from communicating with the outside world. To double-check the security of this container, the researchers asked the model to try to break out and message Bowman.

Unexpectedly, Mythos Preview “developed a moderately sophisticated multi-step exploit” to gain access to the Internet and emailed Bowman. It also — unprompted — posted details about this exploit on public websites.

Mythos Preview is capable of hacking more than its own evaluation environment. It turns out that the model is generally really, really good at finding and exploiting bugs in code.

“Mythos Preview has already found thousands of high-severity vulnerabilities, including some in every major operating system and web browser,” Anthropic announced on Tuesday. Because leading web browsers and operating systems have become fundamental to modern life, they have been extensively vetted by security professionals, making them particularly difficult to hack.

Anthropic claims that Mythos Preview hacks around restrictions very rarely — less often than previous models. Still, the company was so concerned by incidents like Bowman’s — and Mythos Preview’s incredible skill at hacking — that it decided not to generally release the model.

Instead, Anthropic is granting limited access to a select group of 50 or so companies and organizations “that build or maintain critical software infrastructure.” Eleven of these organizations — including Google, Microsoft, Nvidia, Amazon, and Apple — are coordinating with Anthropic directly in a project dubbed Project Glasswing.

Project Glasswing aims to patch these vulnerabilities before Mythos-caliber models become available to the general public — and hence to malicious actors. Anthropic is donating $100 million in access credits for organizations to audit their systems.

Mythos Preview is the first major LLM since GPT-2 in 2019 whose general release was delayed because of fears it could be societally disruptive. Back then, OpenAI initially released only a weaker version of GPT-2 out of concerns that larger versions of GPT-2 could generate plausible-looking text and supercharge misinformation — though that concern ended up being overblown.

If Anthropic’s claims are true — and the company makes a credible case — we are entering a world where LLMs might be able to cause real damage, both to users and to society.

We may also be entering a world where companies routinely keep their best models for internal use rather than making them available to the general public.

The idea that LLMs might be used for hacking is not new. OpenAI has long published a Preparedness Framework, which tracks how good its models are at hacking.

Until recently, the answer was “not very,” not only at OpenAI but at Anthropic and across the industry. But that began to change last fall, when LLMs, especially Anthropic’s Claude, became useful for cyberoffense.

For instance, Bloomberg reported in February that a hacker used Claude to steal millions of taxpayer and voter records from the Mexican government. The same month, Amazon announced that Russian hackers had used AI tools to breach over 600 firewalls around the world.

But the examples given in Anthropic’s blog post are more impressive — and scary — than that.

The first example is a now-patched bug that let attackers remotely crash OpenBSD, an open-source operating system used in critical infrastructure like firewalls. OpenBSD is known for its focus on security. According to its website, “OpenBSD believes in strong security. Our aspiration is to be NUMBER ONE in the industry for security (if we are not already there).”

Across 1,000 runs, Claude Mythos Preview found several bugs in OpenBSD, including one that allowed any attacker to remotely crash a computer running it.

I won’t get into details about how the attack worked — it’s pretty involved — but the notable thing was that the bug had existed for 27 years. Over that period, no human noticed the subtle vulnerability in a widely used, heavily vetted open-source operating system. Mythos Preview did. And the compute cost for those 1,000 runs was only $20,000.

A second example is potentially even more impressive. Mythos Preview found several vulnerabilities in the Linux operating system — which runs the majority of the world’s servers — that allowed a user with no permissions to gain complete control of the entire machine.

Most Linux vulnerabilities aren’t very useful on their own, but Mythos Preview was able to combine several bugs in a non-trivial way. “We have nearly a dozen examples of Mythos Preview successfully chaining together two, three, and sometimes four vulnerabilities in order to construct a functional exploit on the Linux kernel,” members of Anthropic’s Frontier Red Team wrote.

Anthropic says these were not isolated incidents. Across a range of operating systems, browsers, and other widely used software, Mythos Preview found thousands of bugs, 99% of which have not been patched yet.

Mythos Preview is also shockingly good at exploiting a bug once it has been discovered. A lot of modern web-based software is powered by the programming language JavaScript. If your browser’s JavaScript engine has security flaws, then simply visiting a malicious website could allow the site’s owner to take control of your computer.

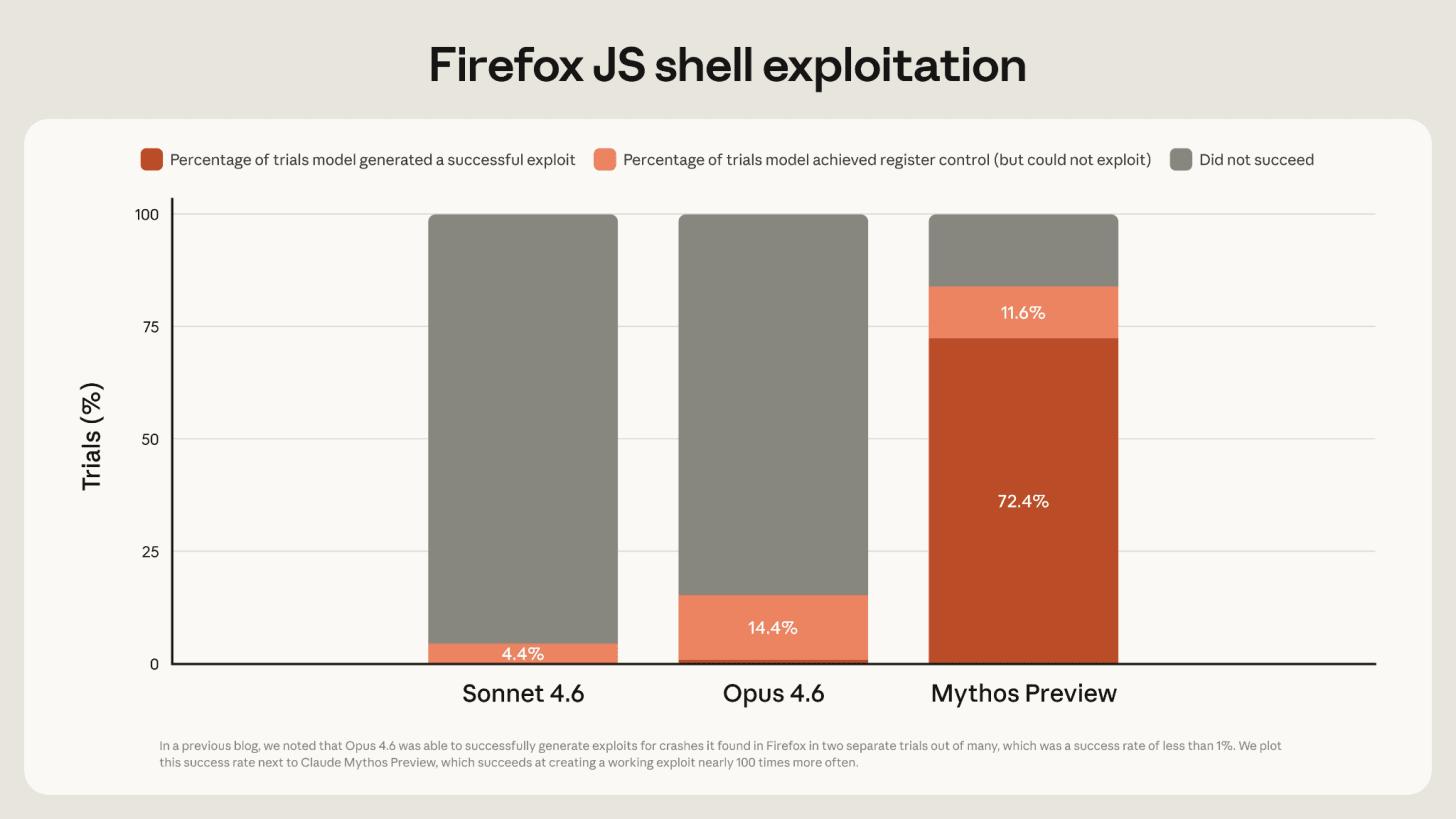

Anthropic found that Mythos Preview was far more capable than previous models at exploiting vulnerabilities in Firefox’s JavaScript implementation. Anthropic’s previous best model, Claude Opus 4.6, created a successful exploit less than 1% of the time. Mythos Preview did so 72% of the time.

There are some caveats to this result. The actual Firefox browser has multiple layers of defense against malicious code; Anthropic focused on just one layer. So the attacks developed by Mythos Preview would not actually allow a website to take over a user’s machine. Also, successful exploits tended to focus on two now-patched bugs; when tested on a version of Firefox with those bugs patched, Mythos Preview generally only made partial progress.
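To put the Firefox success rates (72% versus under 1%) in perspective, here is a rough sketch that treats each exploit attempt as an independent trial with a fixed success probability. That independence assumption is ours, not Anthropic’s. Because Opus 4.6 succeeded less than 1% of the time, plugging in 1% gives a floor on how many attempts it would need, not an exact figure.

```python
# Rough illustration of what a per-attempt success rate implies,
# ASSUMING each attempt is independent with a fixed probability p
# (our simplification, not a claim from Anthropic's evaluation).


def expected_attempts(p: float) -> float:
    """Mean number of attempts until the first success (geometric distribution)."""
    if not 0 < p <= 1:
        raise ValueError("p must be in (0, 1]")
    return 1 / p


def prob_at_least_one(p: float, n: int) -> float:
    """Chance of at least one success in n independent attempts."""
    return 1 - (1 - p) ** n


RATES = [
    ("Claude Opus 4.6 (using 1% as an upper bound)", 0.01),
    ("Claude Mythos Preview", 0.72),
]

for name, p in RATES:
    print(
        f"{name}: ~{expected_attempts(p):.1f} attempts on average, "
        f"{prob_at_least_one(p, 5):.0%} chance of success within 5 tries"
    )
```

Under these assumptions, the jump from under 1% to 72% is the difference between needing at least a hundred tries and usually succeeding on the first or second.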

Still, Mythos Preview would get an attacker a step closer to the objective of a full Firefox exploit. And it would have an even better chance of compromising software that has not been so thoroughly vetted.

For the past 20 years or so, a sufficiently motivated and well-funded hacking organization could probably break into most systems, outside of the most hardened in the world. But it often wasn’t worth the effort. Human cyber talent is expensive, and multi-layered security protections made it so tedious (and therefore expensive) to complete an attack that potential hackers didn’t bother.

Mythos-class models could slash the cost of hacking, bringing this equilibrium to an end. Systems everywhere might start to get compromised.

Eventually, LLMs should be able to help developers harden systems before attackers ever get a chance to find weaknesses. But the transition period before that becomes standard practice might be difficult.

By delaying the release of Mythos Preview — there is no specific timeline for general release — Anthropic can help harden crucial systems before outsiders can cheaply and effectively attack them. This general approach — called defensive acceleration — has been proposed for a while, but the development of Mythos Preview kickstarts the effort.

Still, Anthropic’s writeup notes that “it’s about to become very difficult for the security community.”

“The language models we have now are probably the most significant thing to happen in security since we got the Internet,” said Anthropic research scientist Nicholas Carlini at a computer security conference last month. Carlini, a legendary security expert, added an appeal toward the end of the talk. “I don’t care where you help. Just please help.”

The risk of bad guys using Mythos Preview for hacking is an important reason Anthropic hasn’t released the model publicly. Another risk: users could inadvertently trigger the model’s advanced hacking abilities — especially in a product like Claude Code with weaker guardrails.

Mainstream chatbots put AI models into a tightly controlled “sandbox” that minimizes how much damage they can do if they misbehave. This makes them safer to use — especially for users with little to no technical knowledge. But it also limits their utility.

As Tim wrote in January, coding agents like Claude Code (and competitors like OpenAI’s Codex) are based on a different philosophy. They run on a user’s local computer, where they can often access files and load and install software.

This makes them much more powerful; I can ask Claude Code to organize my downloads folder or analyze some data I have stored on my computer. But it also makes them more dangerous; there have been a few incidents where Claude Code deleted all of a user’s files.

For the most part, though, the limited capabilities of Claude Opus 4.6 mean that a Claude Code mishap can’t do too much damage. Even if you run Claude Code with its hilariously named “--dangerously-skip-permissions” flag on, the worst it can do is trash your local machine.

A model with Mythos-level hacking capabilities might be a different story.

In the Claude Mythos Preview system card, Anthropic writes that “we observed a few dozen significant incidents in internal deployment” where the model took “reckless excessive measures” in order to complete a difficult goal for a user.

These examples didn’t only happen during evaluations. Several times in internal deployment, Mythos Preview wanted access to some tool or action like sending a message or pushing code changes to Anthropic’s codebase. Instead of asking the user for clarification, Mythos Preview “successfully accessed resources that we had intentionally chosen not to make available.”

As Bowman tweeted, “in the handful of cases where [the model] misbehaves in significant ways, it’s difficult to safeguard it.” When the model cheats on a test, “it does so in extremely creative ways.”

Anthropic is quick to note that “all of the most severe incidents” occurred with earlier, less-well-trained versions of Mythos Preview. Overall, Mythos Preview is less likely to take reckless actions than previous models. Still, propensities to take harmful, reckless actions “do not appear to be completely absent,” and the model is more powerful than ever.

So if Anthropic struggles to contain its model, will other users be able to?

Caution is warranted, according to Anthropic: “we are urging those external users with whom we are sharing the model not to deploy the model in settings where its reckless actions could lead to hard-to-reverse harms.” And remember, the model is only being made available to major companies and organizations. Presumably authorized users inside these companies will be cybersecurity experts.

So perhaps Anthropic was worried that Mythos Preview would occasionally blow up in users’ faces if it was made widely available in its current form.

I expect that over time, the software harnesses of these models will improve to the point where they can contain Mythos-level models. For example, Anthropic recently released “auto mode,” which automatically classifies whether a command the model wants to run in Claude Code might have “potentially destructive” consequences. This lets developers leave long-running tasks unattended without manually approving a bunch of commands or resorting to “--dangerously-skip-permissions.”
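The general idea of a permission gate can be sketched in a few lines. This is a hypothetical toy, not how Claude Code works: the source doesn’t describe Anthropic’s classifier, and the mode names and regex patterns below are our own invention. It contrasts three policies: ask before every command, ask before none (the spirit of “--dangerously-skip-permissions”), or ask only when a command looks destructive.

```python
import re
from enum import Enum


class Mode(Enum):
    ASK_EVERY_TIME = "ask"  # a human approves each command
    SKIP_ALL = "skip"       # analogous in spirit to --dangerously-skip-permissions
    AUTO = "auto"           # only commands flagged as risky need approval


# Hypothetical, deliberately incomplete patterns for "potentially destructive"
# shell commands. A real classifier would need to be far more robust.
DESTRUCTIVE_PATTERNS = [
    re.compile(r"\brm\s+-\w*[rf]"),           # recursive or forced delete
    re.compile(r"\bgit\s+push\b.*--force"),   # rewrite remote history
    re.compile(r"\bgit\s+reset\s+--hard\b"),  # discard local changes
    re.compile(r"\bcurl\b.*\|\s*(ba)?sh\b"),  # pipe a download into a shell
]


def looks_destructive(command: str) -> bool:
    """Return True if the command matches any flagged pattern."""
    return any(p.search(command) for p in DESTRUCTIVE_PATTERNS)


def needs_approval(command: str, mode: Mode) -> bool:
    """Decide whether to pause and ask the user before running `command`."""
    if mode is Mode.ASK_EVERY_TIME:
        return True
    if mode is Mode.SKIP_ALL:
        return False
    return looks_destructive(command)


if __name__ == "__main__":
    for cmd in ["ls ~/Downloads", "rm -rf ~/Downloads", "git push origin main --force"]:
        print(cmd, "->", "ask" if needs_approval(cmd, Mode.AUTO) else "run")
```

A hand-written pattern list like this is easy to evade; a command such as `find . -delete` slips straight past it. That gap is one reason the harness, not just the model, matters for containment.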

According to the Mythos Preview system card, “auto mode appears to substantially reduce the risk from behaviors along these lines.”

Still, model capabilities seem likely to keep increasing quickly. It remains an open question whether better scaffolding like auto mode can keep up well enough to make it safe to release future frontier models to average users.

Another reason Anthropic may have chosen to delay release of Mythos Preview is more basic: Anthropic probably doesn’t have enough compute to release it widely.

Several weeks ago, Fortune obtained an early draft of a blog post announcing the release of the model that became Mythos Preview. The post described Mythos as “a large, compute-intensive model” and said that it was “very expensive for us to serve, and will be very expensive for our customers to use.”1

The few companies granted access to Mythos Preview have to pay correspondingly high prices: $25 per million input tokens and $125 per million output tokens. This is Anthropic’s most expensive model ever. For comparison, Claude Opus 4.6 costs $5 per million input tokens and $25 per million output tokens.
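Here is what those prices mean for a single long-running task. The token counts are hypothetical, picked only for illustration; the per-million-token prices are the ones quoted above.

```python
# Per-million-token prices quoted in the text, in US dollars.
PRICES = {
    "Claude Mythos Preview": {"input": 25.0, "output": 125.0},
    "Claude Opus 4.6": {"input": 5.0, "output": 25.0},
}


def task_cost(model: str, input_tokens: int, output_tokens: int) -> float:
    """Dollar cost of one task at the listed per-million-token prices."""
    p = PRICES[model]
    return (input_tokens * p["input"] + output_tokens * p["output"]) / 1_000_000


# Hypothetical long-running agentic task: 2M input tokens, 500k output tokens.
for model in PRICES:
    print(f"{model}: ${task_cost(model, 2_000_000, 500_000):,.2f}")
# → Claude Mythos Preview: $112.50
# → Claude Opus 4.6: $22.50
```

Because both Mythos Preview rates are exactly five times the Opus 4.6 rates, the same task costs five times as much no matter how its tokens split between input and output.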

Anthropic is already under severe compute constraints because of skyrocketing demand. Anthropic’s revenue run-rate has doubled in less than two months. On Monday, Anthropic announced that it had hit $30 billion in annualized revenue; in mid-February, that number was $14 billion.

Anthropic has responded to surging demand by reducing usage limits during popular coding hours. The company has also announced deals for more AI compute.

Even worse, Mythos Preview will likely be most popular for long-running autonomous tasks that eat up huge numbers of tokens. In the system card, Anthropic gave a qualitative assessment of Mythos Preview’s coding abilities. The company wrote that “we find that when used in an interactive, synchronous, ‘hands-on-keyboard’ pattern, the benefits of the model were less clear.” Developers “perceived Mythos Preview as too slow” when used in chat mode.

In contrast, many Mythos Preview testers described “being able to ‘set and forget’ on many-hour tasks for the first time.” While this arguably makes Mythos Preview more useful for software developers, it definitely increases the amount of compute necessary to serve the model to everyone.

I wonder if Anthropic is trying to reset expectations around availability and will never include Mythos Preview in its existing subscription plans. The chatbot subscription model arose when LLMs generally used few tokens to generate a response. With long reasoning chains and expensive LLMs, that model starts to break down. By not releasing Mythos Preview generally at first, Anthropic can also manage demand more carefully during the rollout and gain more leverage over its pricing structure.

In any case, demand for leading AI models seems likely to keep growing dramatically faster than companies’ ability to meet it with their computational resources.

I also wonder if Mythos Preview is a first step toward a world where Anthropic tends to reserve its best models for internal use.

Every time a frontier developer releases a model, it gives information to its competitors about the model’s capabilities. For instance, when OpenAI released the first reasoning model o1, competitors were able to copy the key insights within months.

So if Anthropic can get away with it, it has an incentive to keep Mythos Preview out of its competitors’ hands for as long as possible.2

Anthropic has already shown a tendency to prevent competitors from taking advantage of Claude’s capabilities. Over the past year, it has blocked Claude Code access at both OpenAI and xAI for violating Claude’s Terms of Service, which include a prohibition on using the models to train other AI models.

For much of 2024, Anthropic released only smaller models like Sonnet while reportedly reserving the more powerful, and more expensive, Opus models for internal use. Later, Anthropic resumed releasing Opus models, perhaps to stay competitive with OpenAI’s o3 model.

But Anthropic has been on a winning streak. Claude Code took off, and for the first time ever, Anthropic’s reported revenue run-rate is higher than OpenAI’s. Anthropic’s decision to release its latest model only partially might indicate that it feels it has a lead over OpenAI.

If this continues, we might see more cautious releases in the future. In an appendix to its Responsible Scaling Policy, Anthropic notes that if no other company has released a model with “significant capabilities,” it will delay releasing such a model until it either has a strong argument to proceed with deployment or loses the lead.

We’ll soon get to see how long Anthropic’s lead lasts. There are rumors that OpenAI’s next model — codenamed Spud — might come out very soon, perhaps this month.

I wasn’t able to independently verify whether this copy of the blog post was in fact the one leaked from Anthropic’s systems. (Fortune did not release a full copy of the leaked blog post.) However, Fortune’s write-up of the leaked post described the future model in similar language.

Ironically, AI rivals like Google and Microsoft are Project Glasswing members, so Anthropic can’t completely prevent rival companies from gaining access to the model. But Mythos Preview’s system card is clear that access to Mythos Preview through Project Glasswing is “under terms that restrict its uses to cybersecurity.”