2026-05-14 20:49:22

I’ve written several premium articles in the last couple of weeks:

The last one, from this Tuesday, is What Do Muslims Believe? It’s the first in the current series, and is about Muslim beliefs in Muslim countries.

Today, we focus on the beliefs of Muslims when they move to the West. This is becoming crucially important, as Muslim immigration is one of the strongest factors in European voting today. So I looked at over a dozen surveys across six countries (US, UK, France, Denmark, Germany, Austria) and condensed the insights into dozens of the most relevant charts.[1]

The best data I could find for Muslims in the US is this Pew Research survey from 2017. What can we tell about their religiosity, morals, appreciation for democracy, feelings of belonging, and concerns about extremism?

Muslims in the US are slightly more religious than Christians.

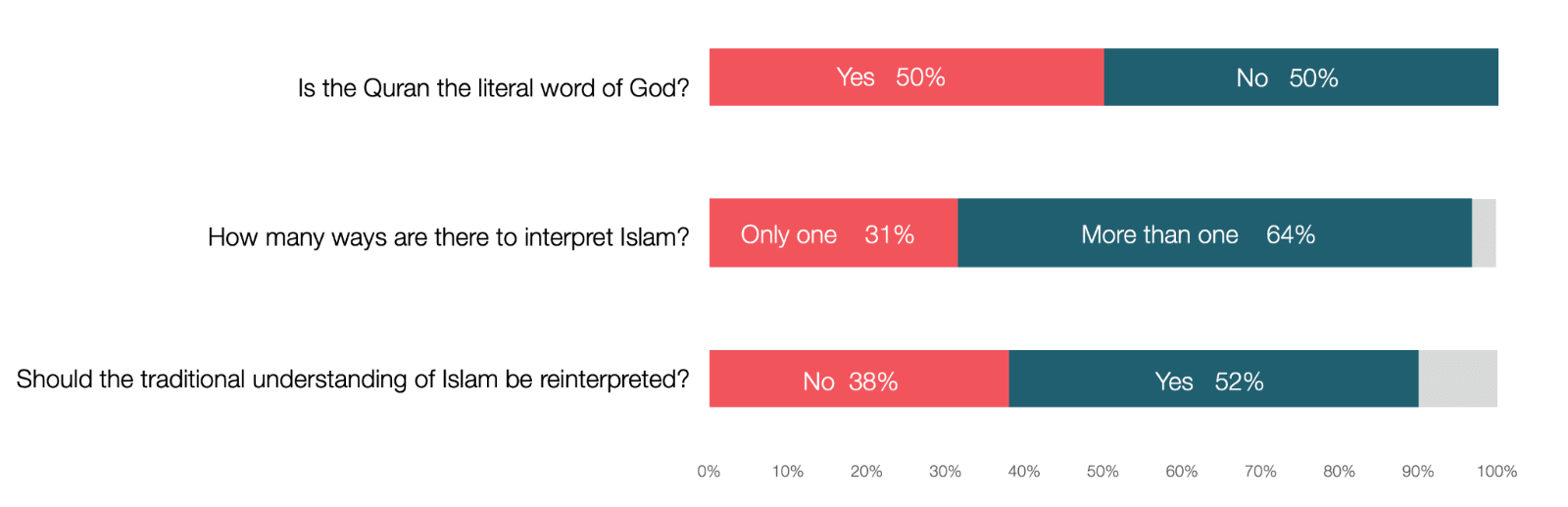

This religiosity can be seen in the share of Muslims who pray every day (65%) and visit the mosque once a week (47%). What does religiosity mean for Muslims? A key aspect is how to interpret the Quran.

These things are connected. Muhammad claimed that the Angel Gabriel dictated the Quran to him, and that it was the literal word of God. This is why so many Muslims believe the Quran is God’s literal word, and why about a third think there’s only one way to interpret Islam and that it shouldn’t be reinterpreted.

This is problematic because all ancient religious texts have some pretty illiberal positions, and that includes the Quran:

Fight those who do not believe in Allah (9:29)

Disbelievers are the worst creatures (98:6)

Do not take the Jews and Christians as allies (5:51)

Men have the authority in the marriage and can strike women (4:34)

Adultery and fornication deserve 100 lashes (24:2)

Slavery is permitted (90:13 and 2:177 assume slavery exists)

Sex with slaves and captives is permitted (4:24, 23:5-6, 70:29-30)

Fighting is prescribed in certain cases, even if disliked (2:216)

Muslims should fight until religion is entirely for Allah (8:39)

Again, this is not exclusive to Islam: 20% of US Christians also believe the Bible is the literal word of God, and that includes the Old Testament, with some nice pearls such as death as the punishment for homosexuality or adultery. So the difference is not in nature, but in degree: literal and radical interpretations of old books are just more common among Muslims than among Christians in the US. Maybe this difference comes from the fact that Muslims have, on average, been in the US for a shorter time than Christians?

Another important aspect of this is that non-Muslims miss a key point about the interpretability of the Quran. For example, although the Quran says men can strike women, what does strike mean? This requires interpretation. Some Muslims think it means a beating that leaves no marks. Others think it should not be violent or severe, like a flick of a finger, for example. Others think it should just be symbolic.

So although these data points suggest Muslims are more conservative and pious than the average American, they don’t directly imply that their morality is starkly different. For that, we need to look into morality data.

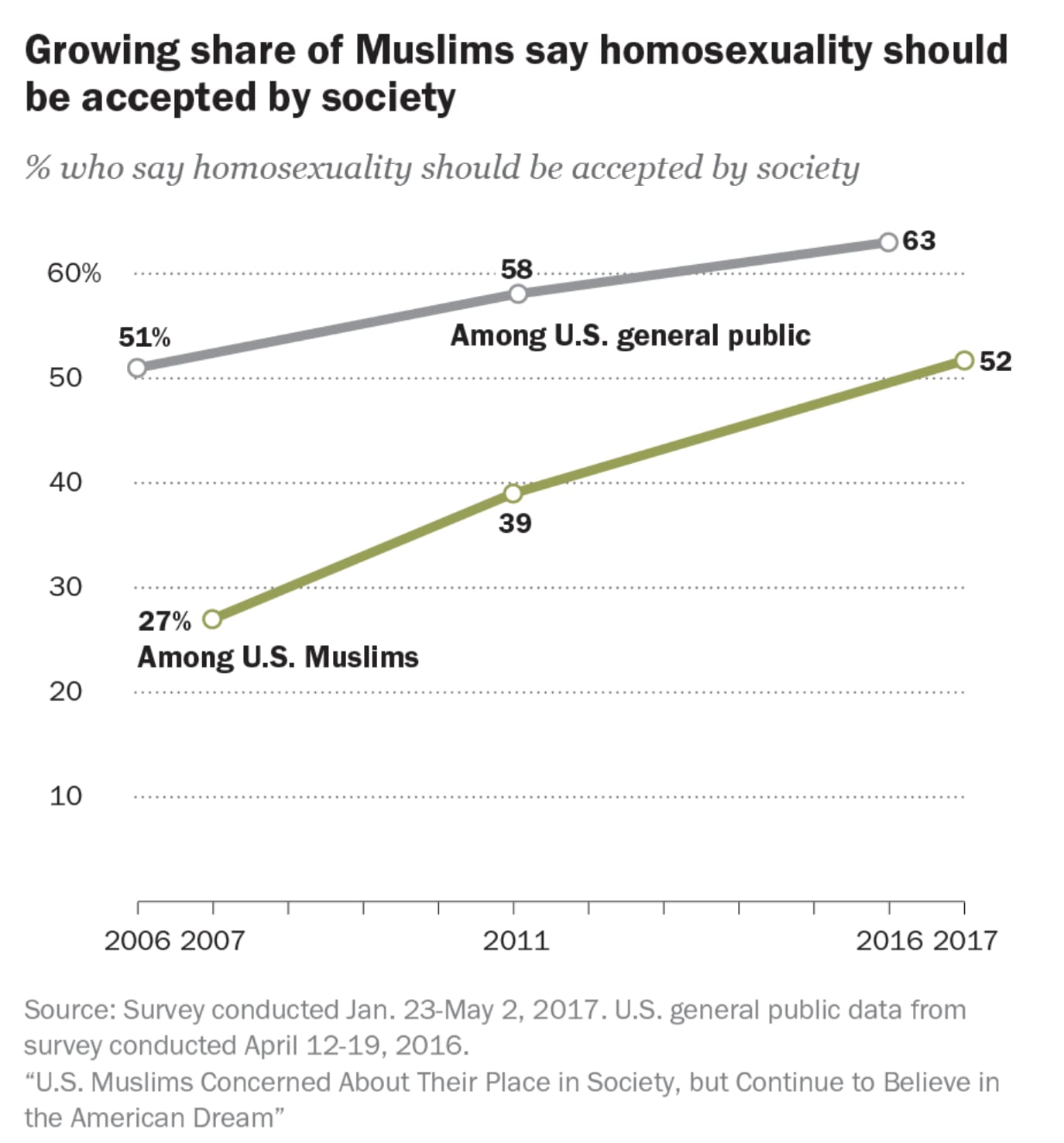

Muslims track with the rest of society in the evolution of attitudes towards homosexuality:

I’m sure that if we had the data for Christians, we’d see something similar. What I think is most telling here is how the acceptance of homosexuals among Muslims almost doubled in just 10 years. We can probably assume it’s even higher now.

A full third of US Muslims believe Islam is incompatible with democracy!

This is similar to the numbers in Muslim countries. When we looked at these numbers across the Muslim world, we saw that this went hand in hand with authoritarianism and a desire to have religion supersede the state.

However, again, this data point is not enough. For example, we could imagine that most Muslims think democracy and Islam are compatible, and that some moderate Muslims answer that they’re not because they fear that the more radical interpretations of Islam (which they don’t share) are incompatible with democracy. That is probably also why 44% of the general public think Islam and democracy are incompatible. So we need to go deeper.

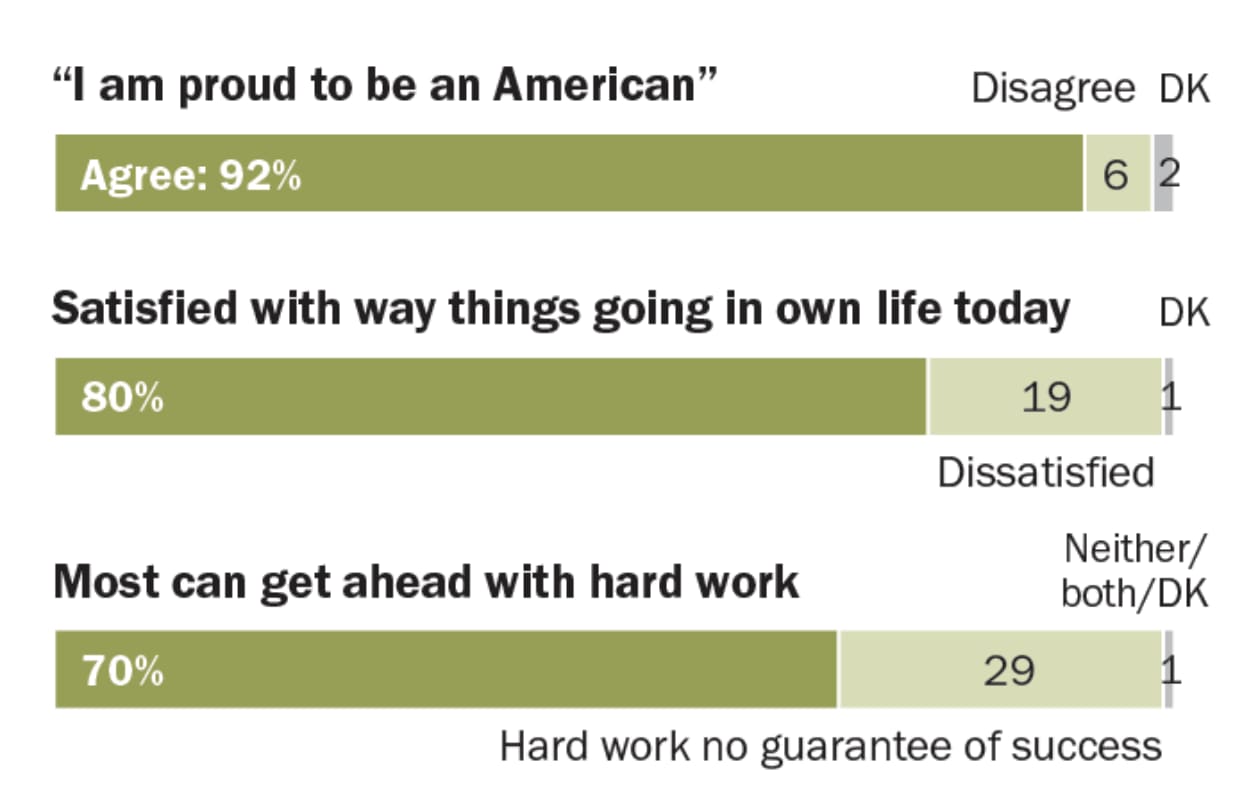

In the US, Muslims are proud to be American, they’re pretty happy, and they think the system works for them.

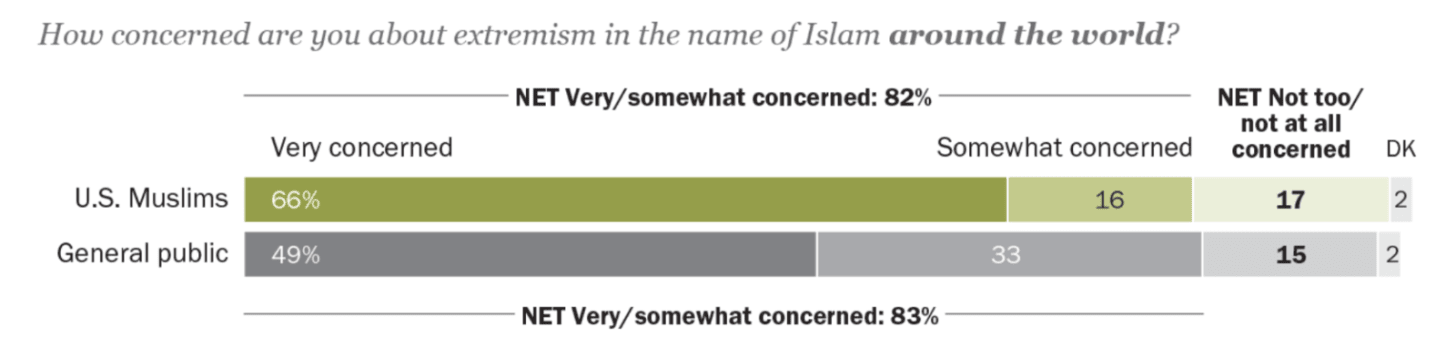

US Muslims are more concerned than the average American about extremism in the name of Islam:

Even as they believe support for extremists within Islam is low:

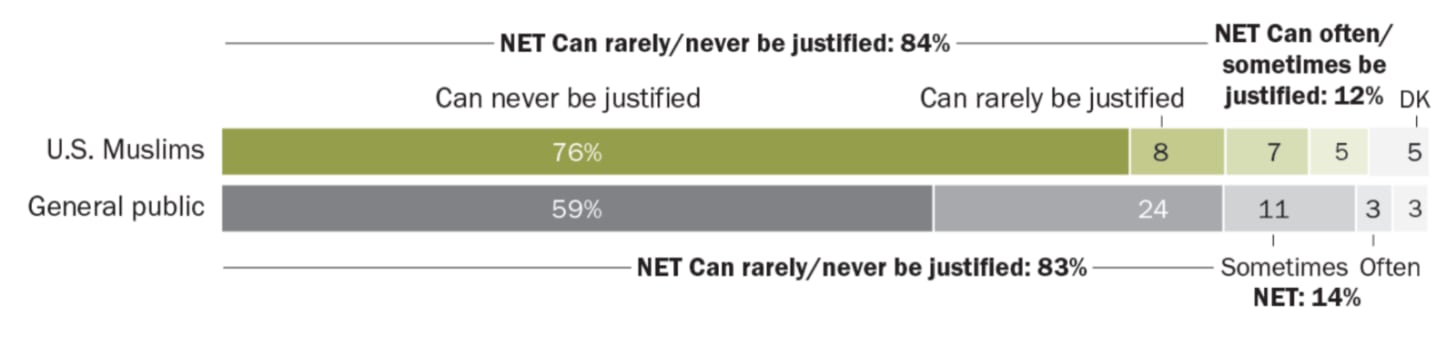

Muslims in the US are much less likely than the average American to think that targeting civilians for political purposes can ever be justified!

In other words, US Muslims are mostly moderate, most don’t support extremism, but they realize some Muslims do, and that worries the moderates.

In summary, the picture in the US is encouraging. Although Muslims seem to be pious, their beliefs seem reasonably well integrated with those of the broader public. They believe in democracy and don’t believe in extremism. But a sizable minority believes Islam should be interpreted traditionally and that it has a natural conflict with democracy, while Muslims suspect there’s a sizable share of extremists among them, and that worries them.

Alas, the picture in Europe is not as good.

Across Europe, most Muslims identify either only with the host country or with both the host and the origin countries.

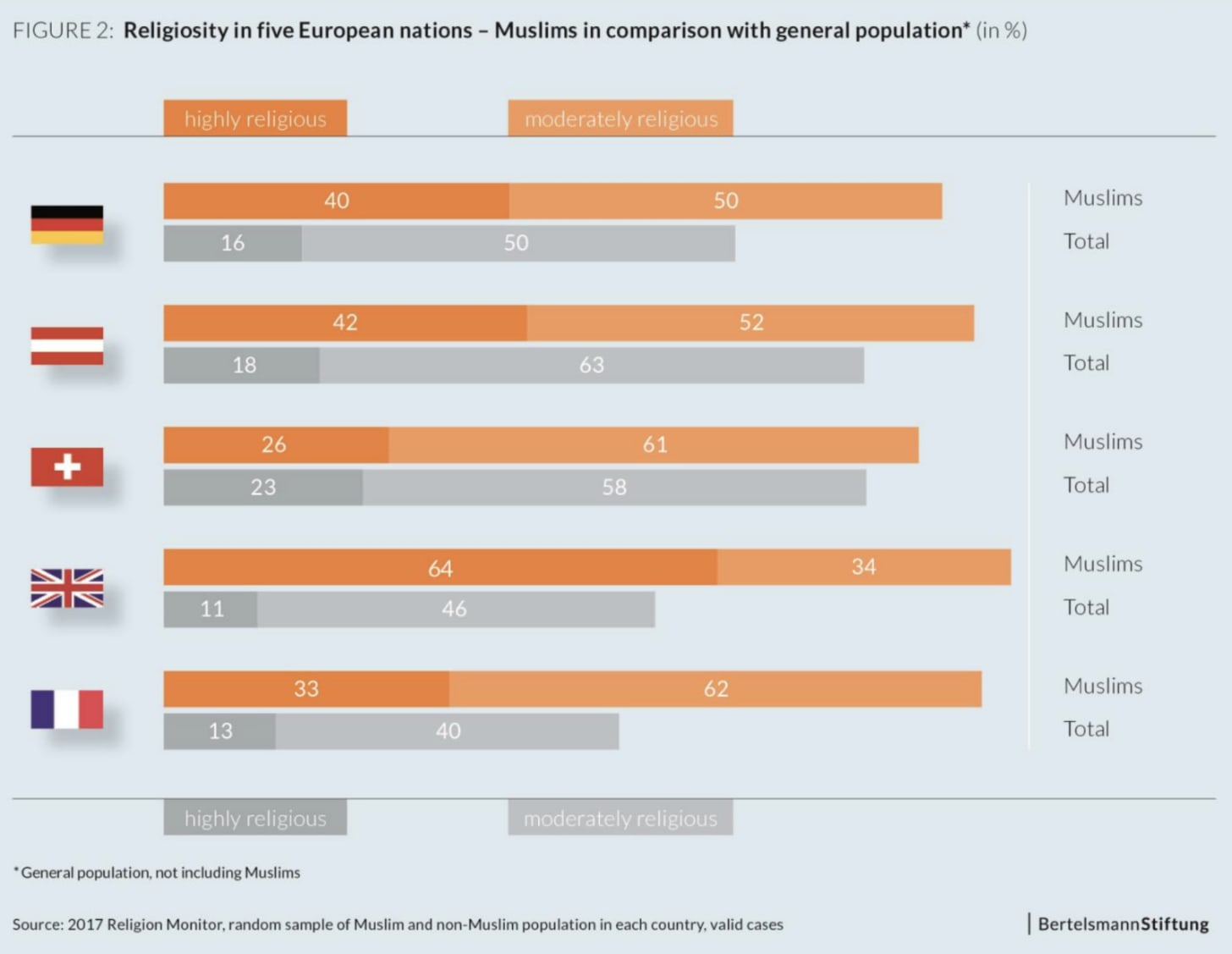

Muslims are more religious than the rest of the population.

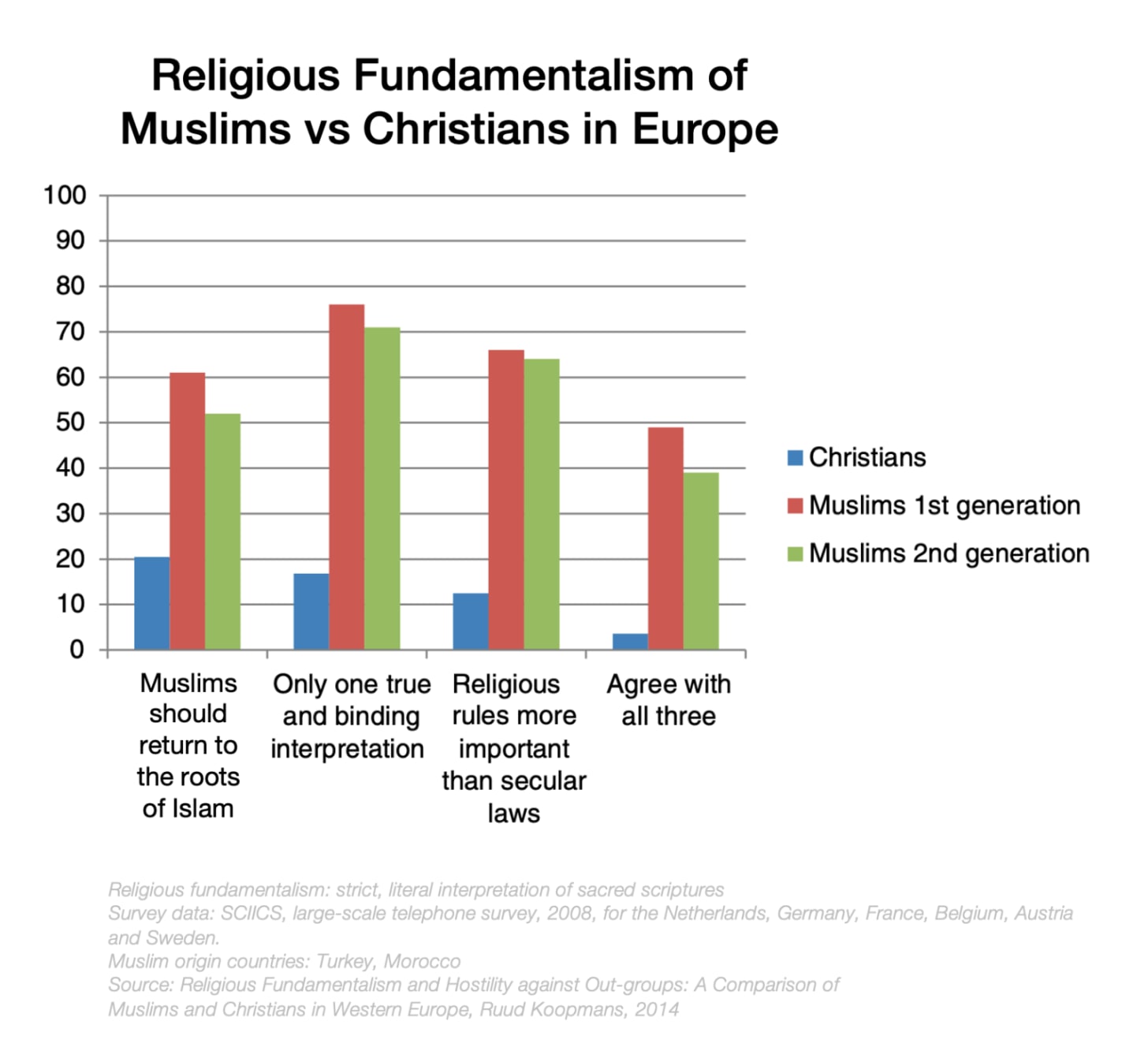

A survey across six European countries[2] on immigrant integration produced several interesting papers. This one looked at how religiosity was connected to out-group hostility. Muslim immigrants and their children were substantially more fundamentalist than their Christian counterparts.

Now, religious fundamentalism is not a problem by itself. Everybody can choose their own way of life. It’s only problematic if it has consequences for others, and unfortunately here it does, as Muslim fundamentalism includes, among other things, placing religious laws above secular ones. So what are Muslims’ attitudes on homosexuality, Judaism, and the conflict between the West and Islam?

I found the next graph quite interesting.

Fundamentalist Christians were as hostile to Jews and homosexuals as non-religious Muslims![3] This means that, in Europe, the hostility towards non-Muslim groups is not just a result of religiousness and piety.

People tend to lump together the immigrants of all European countries, but immigrant populations typically have different origins:

Spain has mostly Moroccans

France has Moroccans, Algerians, and Tunisians

In Germany, Turks and Syrians prevail

In the UK, 80% of Muslims are South Asians (54% Pakistani, 18% Bangladeshi, 9% Indian)

This means that survey results will differ from country to country.

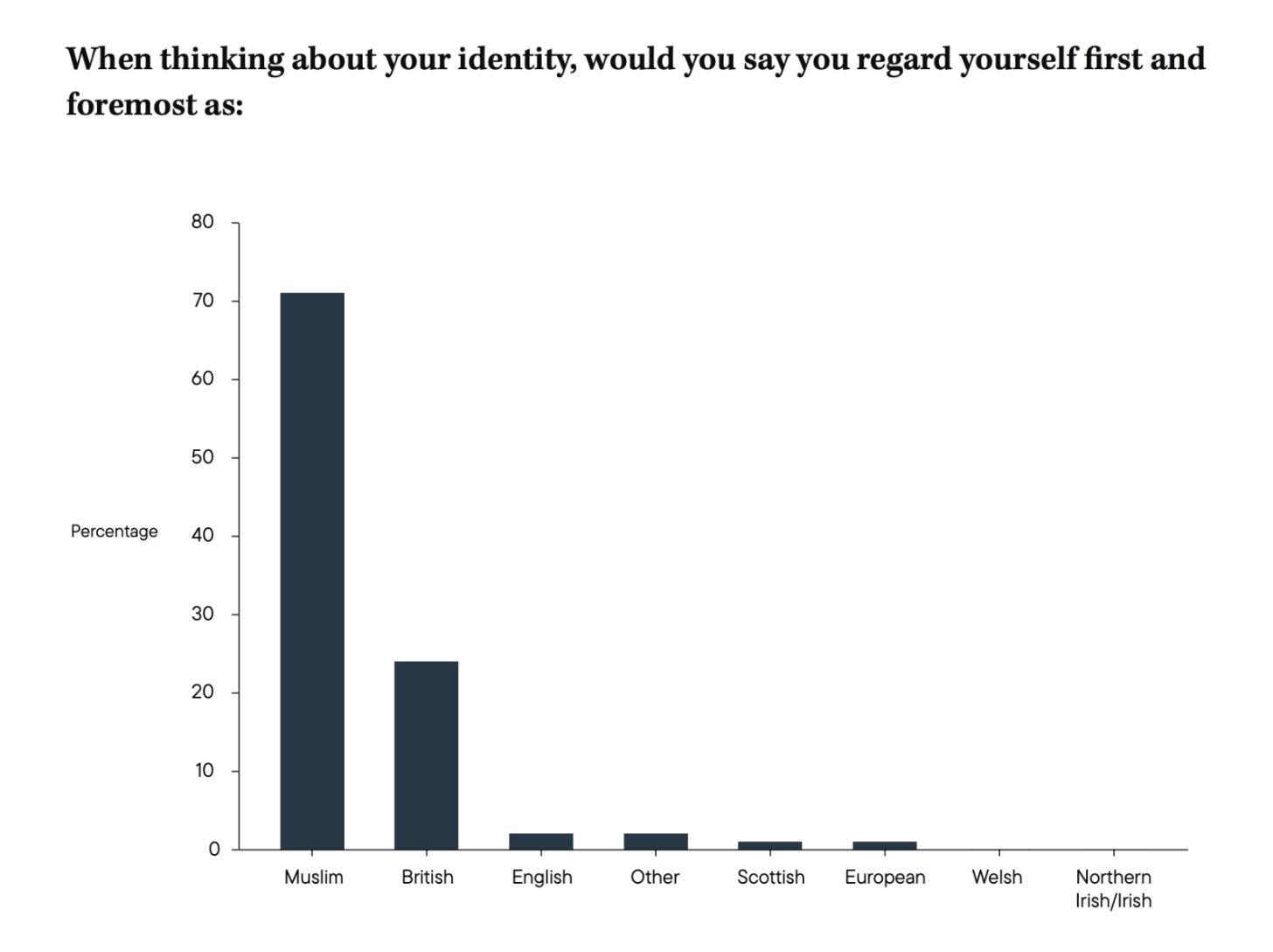

This UK survey from 2016[4] showed that over 90% of Muslims felt they belonged to Britain either very (55%) or fairly (38%) strongly. However, when asked in this more recent survey (2025) whether they feel more Muslim than British, a majority said Muslim.

What do Muslims think about the compatibility of Western values and Islam? Channel 4 commissioned a survey of 1,000 British Muslims in 2016 (comparing them to non-Muslims).[5] It found that 66% of Muslims thought Islam and Western democracy were compatible, but 22% didn’t think so.

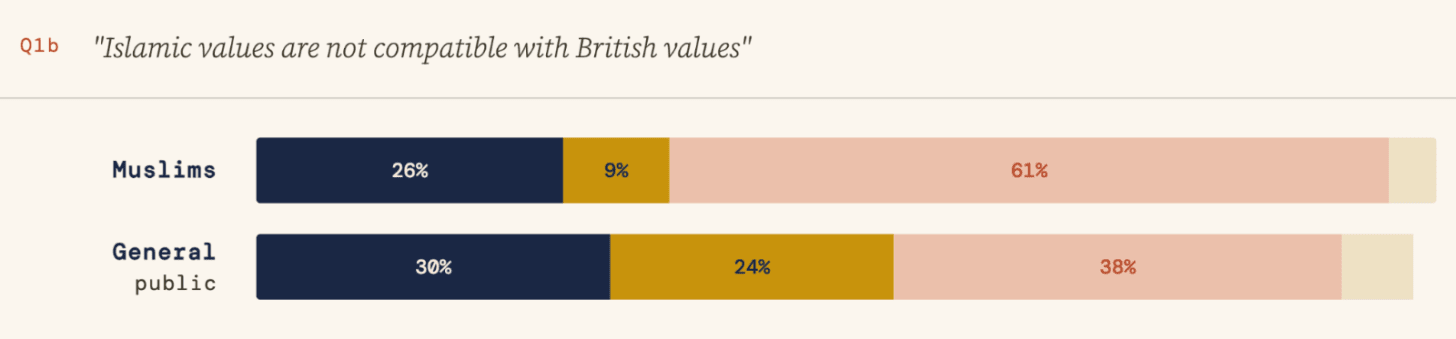

A bigger share of Muslims thought Islamic values are not compatible with British values (the previous graph was for democracy).

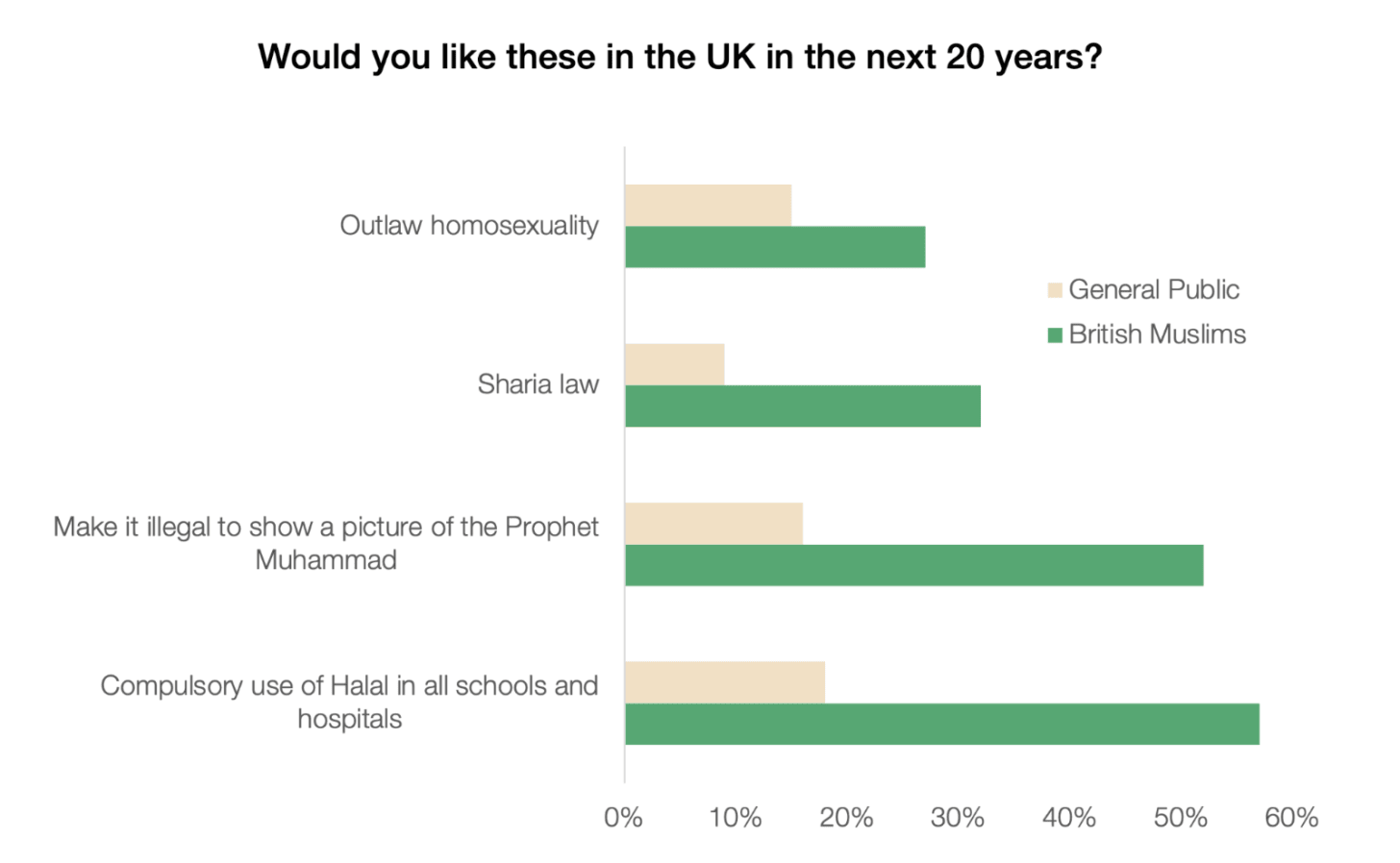

38% of British Muslims would support introducing some elements of Sharia law.

This is broadly aligned with the findings of the other survey I mentioned above, where 42% support the introduction of some aspects of Sharia law in the UK.

As we said, Sharia means a lot of things. One is finance:

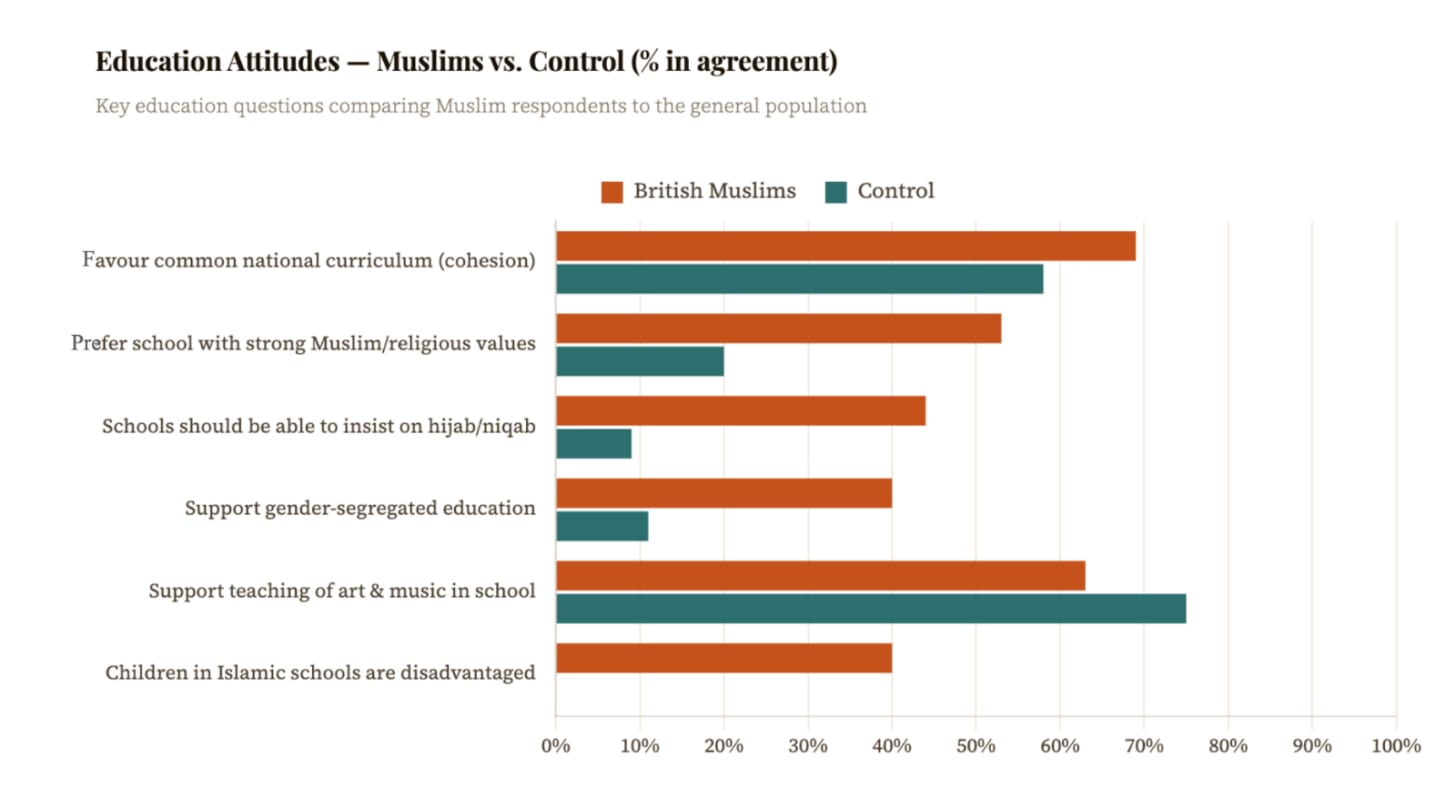

Another is education:

I have mixed feelings about this one. I think Muslims should be able to teach their children about Islam, and although I personally dislike gender-segregated education, I understand why they might prefer it (like some Christians have gender-segregated schools, too). Some Western schools have gendered dress codes—including differences for boys and girls, even in non-religious schools—so I don’t mind that Muslims would like this for their children.

I am more concerned about these:

These are not about a community that has its own customs and traditions, but about imposing their values on everyone else.

By far the most worrisome to me here is the support for making it illegal to publish a picture of Prophet Muhammad. I get that such pictures are anathema to Muslims (as Satan worship is to Christians), but maybe the single most important value of the West is freedom of speech, which by definition means you should be OK with others saying things you disagree with. Especially things you dislike.

I think what matters most is the relationship to violence. 13% of Muslims think that Islam poses a threat to British national security.

And 23% think criticism of Islam is justified.

I find these data points interesting. The numbers are not so different from those of the general public, which I think means several things:

Some Muslims see a threat, and think it should be addressed.

Others prefer to see the bright side of Islam and don’t see a problem.

Some might acknowledge that some Muslims are problematic, but see it as a justified reaction to a racist UK. They don’t want to overblame Muslims for any conflict.

This is consistent with British Muslims’ condemnation of violence, which is more widespread than among natives.

Nearly half of Muslims think we should do more to combat extremism.

A sizable share of Muslims think Muslims should be the ones to tackle their own radicalization.

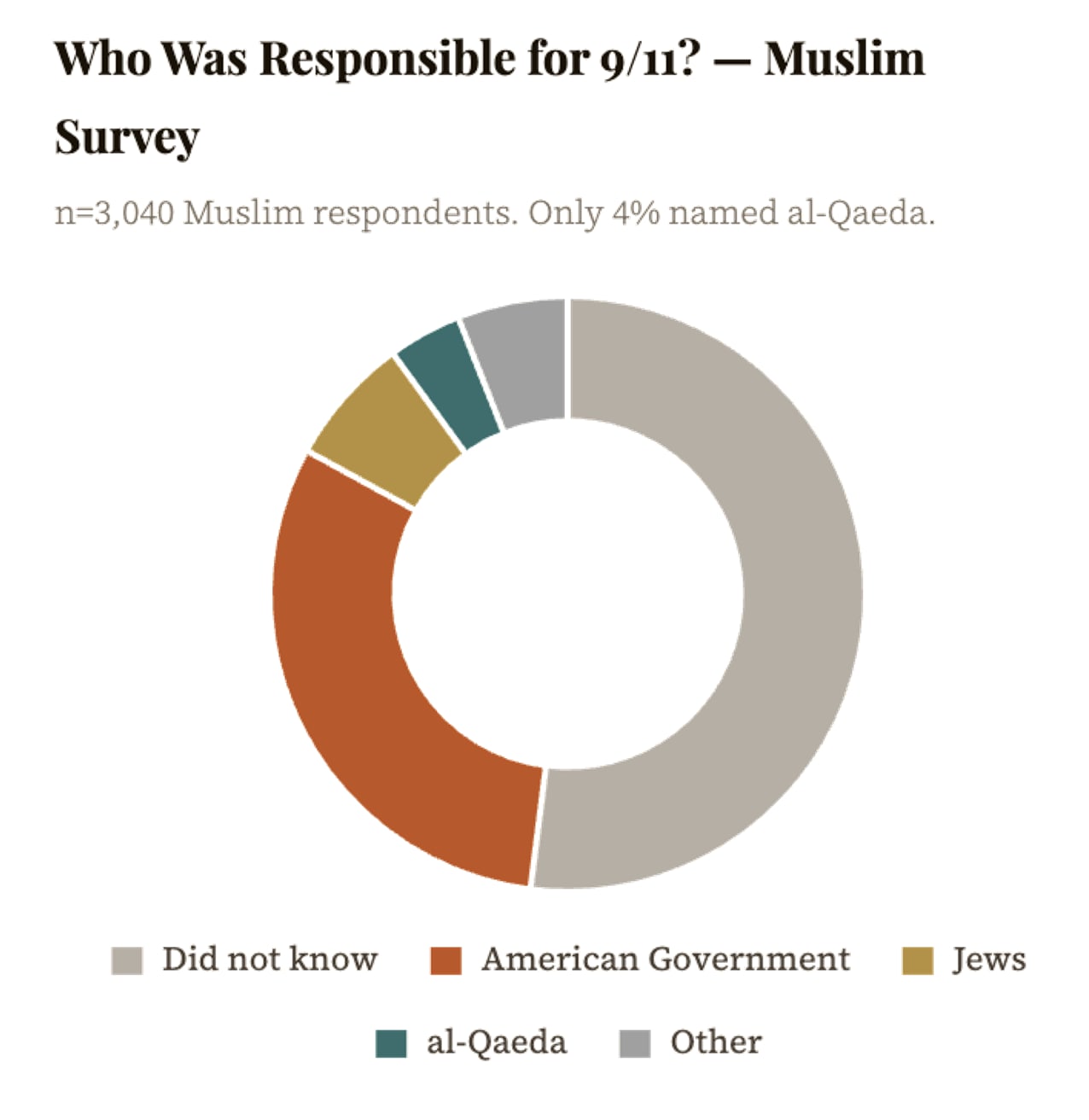

The following data point is one of the most concerning: only 4% of British Muslims think Al Qaeda was behind the 9/11 attacks:

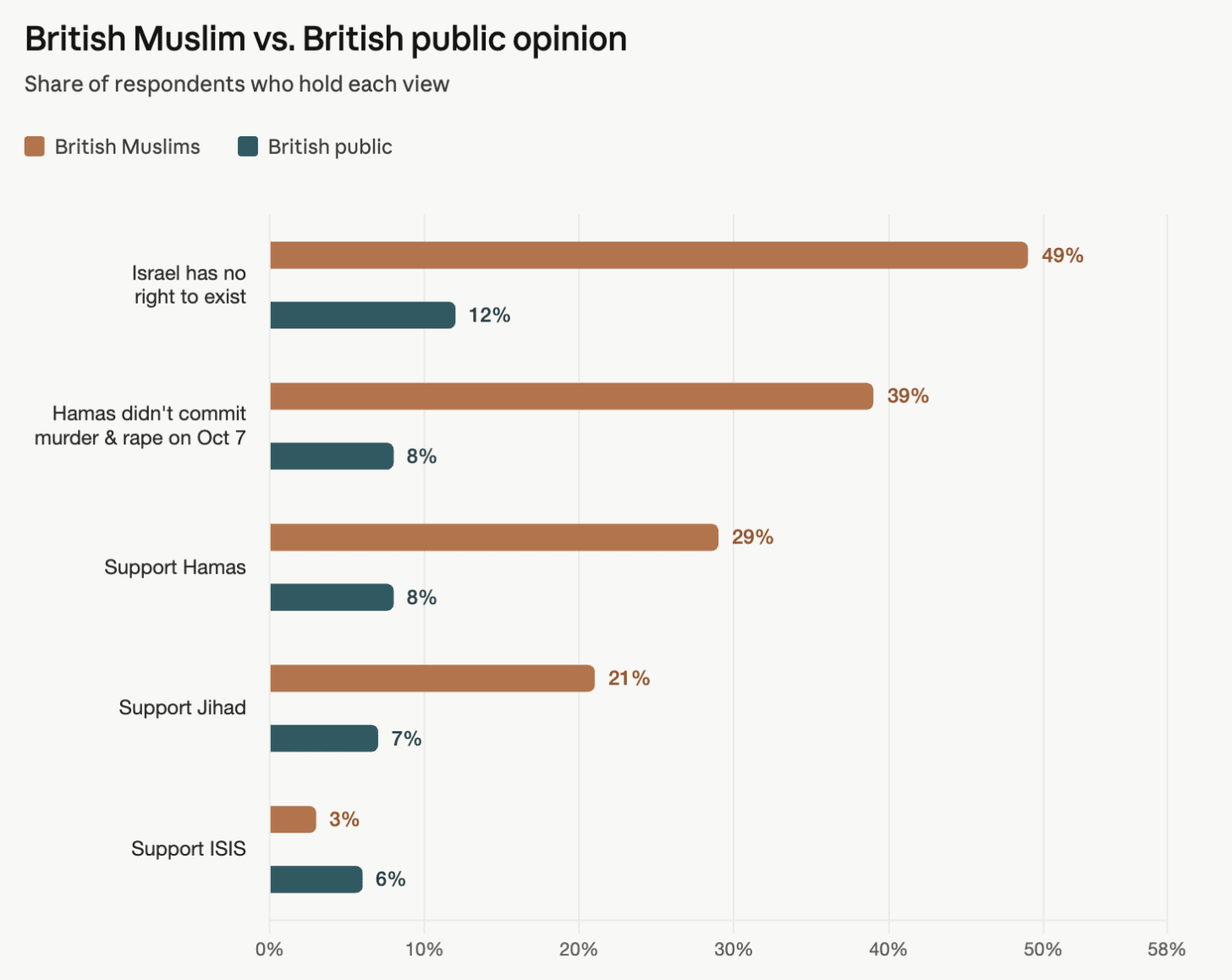

This reminds me of positions on Jews, Israel, and October 7, 2023. According to this 2024 survey,[6] Muslims are 2-3x more likely than the general public to think Jews have too much power in finance, media, foreign policy, and pharma, across both the UK and the US. Also:

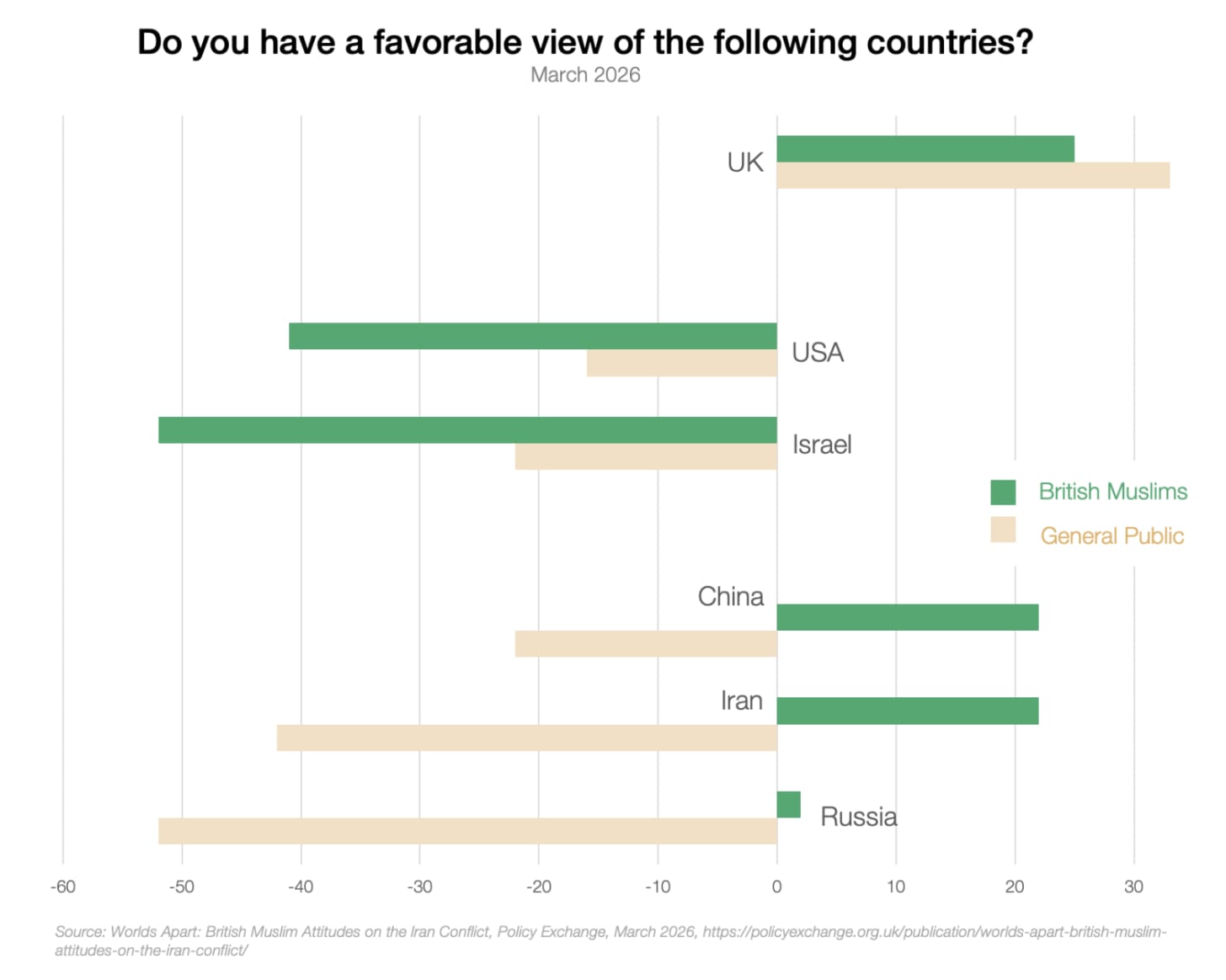

Two recent surveys from 2026 have shown data that is also concerning.

The authoritarian regimes opposed to Western values (China, Russia, Iran) all get far more support from British Muslims than from the general public: a gap of 44 to 64 points!

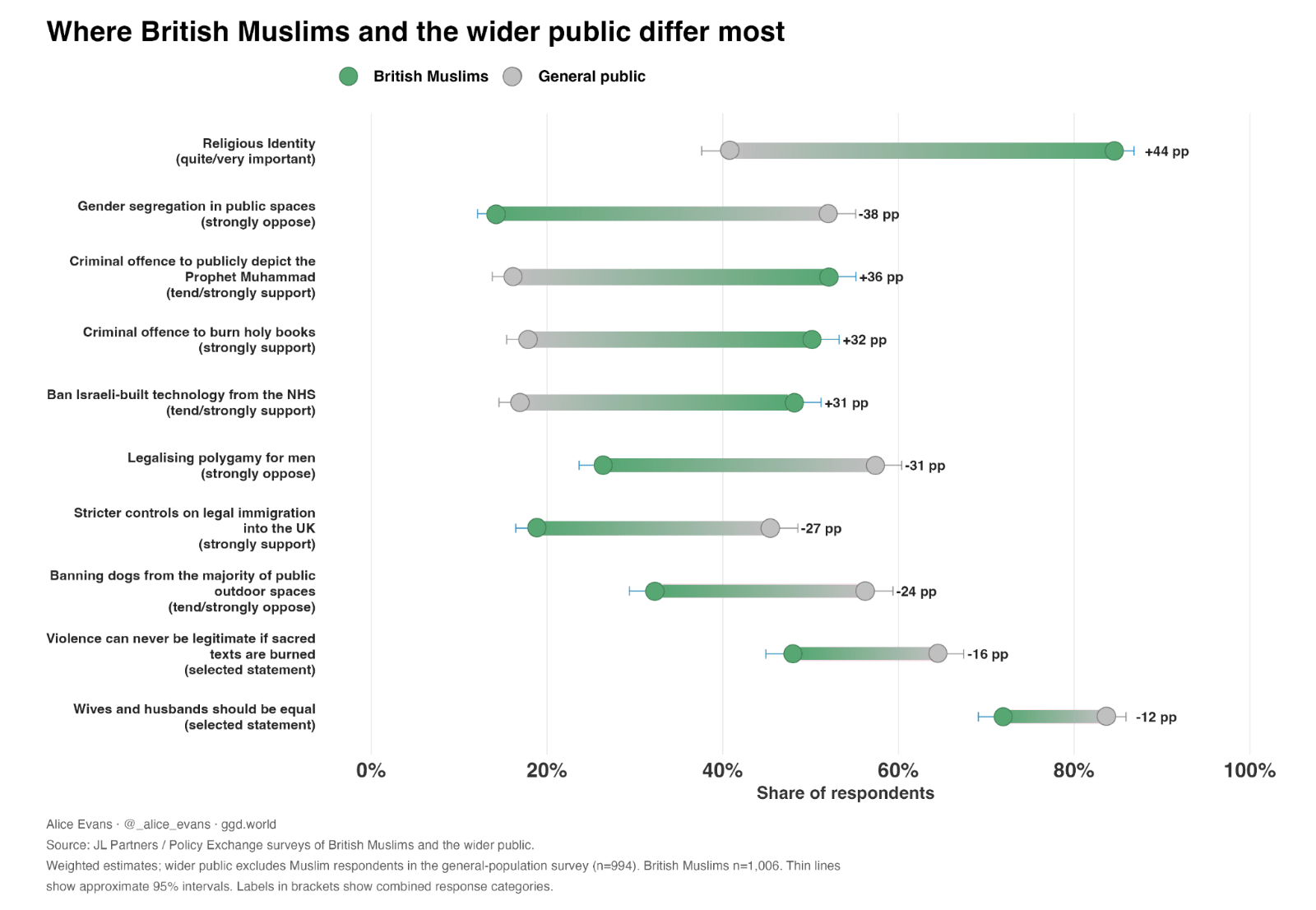

What about beliefs?

This is where Muslims and the general British public differ the most:

Note that these positions tend to be shared by both Muslim men and Muslim women, except for those that directly affect wives:

Overall, the picture of Muslims in the UK seems to be one where most think the West and Islam are perfectly compatible, because they mostly just want to live their lives. But a sizable share of British Muslims seem to want to impose some aspects of their customs on the rest of society. Some Muslims are radical: 10% to 25% of UK Muslims have quite radical views on violence and terrorism, and 30% to 60% want to impose some of their beliefs on the rest of society. Bleak. Many moderates agree Muslims should do more to fight them.

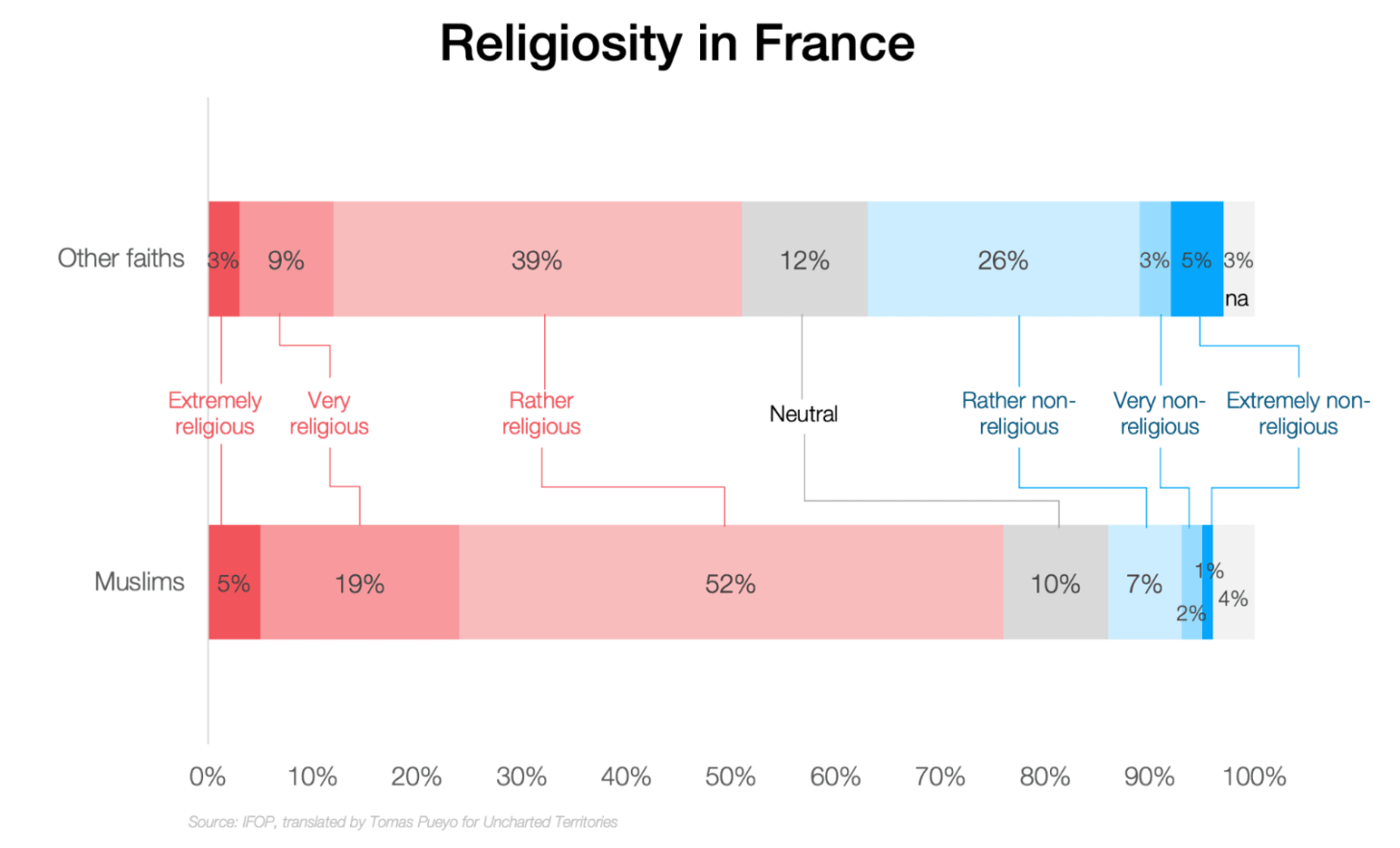

One of the most recent, in-depth surveys of Muslim attitudes in Western countries is the 2025 survey from the non-partisan[7] IFOP. It found that Islam is growing in France, now comprising 7% of the population.

Of all French Muslims, 80% feel religious (87% for young people), and 24% are very or extremely religious.

These numbers don’t change much based on origin, job, sex, and many other variables. Surprisingly, French Muslims whose father was also French (so most likely 3rd generation) are more likely to be religious…

Over time, and as the share of Muslims grows, their religiosity increases:

French-born Muslims are more likely to be very or extremely religious (23%) than Maghreb-born Muslims (21%).

The young are 150% more likely to be very or extremely religious vs those over 50 years old.

Over time, French Muslims tend to pray more (100% more in the last 30 years) and go more frequently to the mosque (120% more).

The share of French Muslim women who wear the hijab has nearly tripled, from 16% in 2003 to 45% in 2025. 59% of them wear it because of pressure or risk of not wearing it.

In 1998, only 19% of French Muslims thought religion was more right than science on the story of the creation of the world. Now, 65% think religion is right. For young Muslims, it’s 81%.

In 1998, 48% of French Muslims wanted Islam to modernize. Now, only 21% do.

Now, as we said, religion by itself doesn’t tell us anything. People should be free to follow any religion they want, as long as it doesn’t impinge on others’ rights. So what does the survey say about that?

Each one of these is problematic:

It’s fine for you and your community to follow Sharia, as long as it doesn’t supplant the law that applies to all. But a large share of French Muslims (including a majority of youngsters) would prefer the entire country to follow Sharia.

Islamism is incompatible with the West, as it believes it’s superior and should supplant it. Over a third of French Muslims support it in some form or another. 24% support the Muslim Brotherhood. 8% support all Islamist positions!

Freedom of religion is at the heart of the West, but 20% of Muslims disagree! As a reminder, in Islam, the punishment for apostasy is death.

The equality of all people is another cornerstone of the West, but several French Muslim positions are incompatible with it: over 10% of Muslims don’t want to shake hands with people of the opposite sex, or share swimming pools with them.

This 2015 survey from the non-partisan Wilke company asked Danish Muslims what they thought about different topics:

This data again supports the idea that Muslims there are pretty conservative, but these beliefs don’t impinge on others’ rights. This should be acceptable.

As we’ve seen previously, most think Islam and democracy are compatible.

Again, we find that Muslims think the Quran should be interpreted pretty literally.

This is concerning: Not only do ¾ of Muslims think this, but that share has grown since 2006. And some take it so far that 11% of Muslims think the Quran should be the foundation of all legislation in Denmark.

And the majority of Muslims don’t think Islam has to modernize.

The vast majority of Muslims want their children to eat halal food at school.

If that’s what you want for yourself, I think it’s fair. But since you’re benefiting from the facilities, institutions, and habits of the host society, and it might be too expensive to provide halal food everywhere, Danish Muslims should not demand it, though they can certainly ask for it. And if there’s a big contingent of Muslims in a certain area and it’s easy to cater to both Muslims and non-Muslims, why not?

But also, let’s not assume this comes at no cost. Halal food reduces diversity, as it eliminates all pork and alcohol from food, including all their derivatives (lard, bacon, gelatin, marshmallows, cooking with alcohol, some desserts), and it makes food more expensive.[8]

Now let’s dive into the radicalization side.

The vast majority of Danish Muslims don’t sympathize with them. 2-3% of them do, however (and 10% don’t respond…).

When asking a more nuanced question, the numbers change a bit.

So nearly 7% of Danish Muslims think drawing Muhammad merits death…

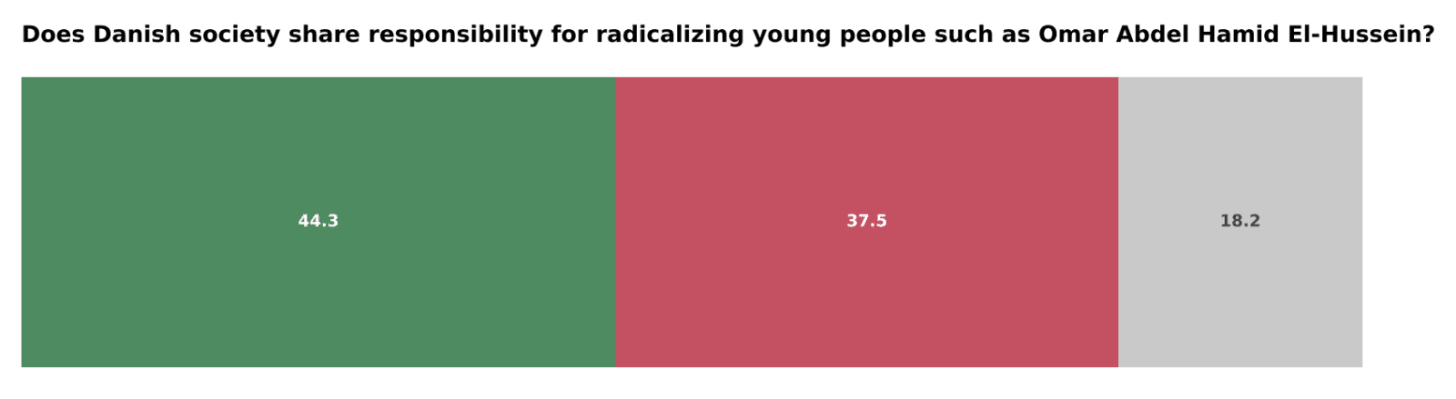

This is very concerning to me. It’s one thing to say you don’t support political violence in the abstract, but 44% of Danish Muslims also believe society is partly to blame for political violence. What exactly did society do wrong? Why doesn’t this happen with other cultures and religions? Is the thought behind this: “You can draw Muhammad, but then don’t complain when you get shot”?[9]

To me, this is the perfect illustration, because it shows how common this opinion is. On one side, I understand the Muslim position: There is racism in Western countries, as in all societies, and racism towards Muslims is especially prevalent in Europe. But that doesn’t justify violence, even though many Muslims seem to think it does. This attitude only breeds further racism. If a group wants to integrate, it must police itself, in thought and in actions. Its members must understand that they are entering a host country, and they need to make an effort to adjust to local norms.

An Austrian survey of young Muslims in Vienna in 2019[10] shows similar data, so I’ll just paste the relevant graphs here[11] (note the comparison with the non-migrant background, which is Viennese youth with both parents born in Austria).

Another interesting fact: the more religious the person, the less they support democracy and individual rights, and the more they hold prejudices and accept violence.

Vienna, Austria’s capital, released a report on this topic just this week,[12] and it apparently shows the same type of data as what we’ve already seen, so I won’t go into much more detail.

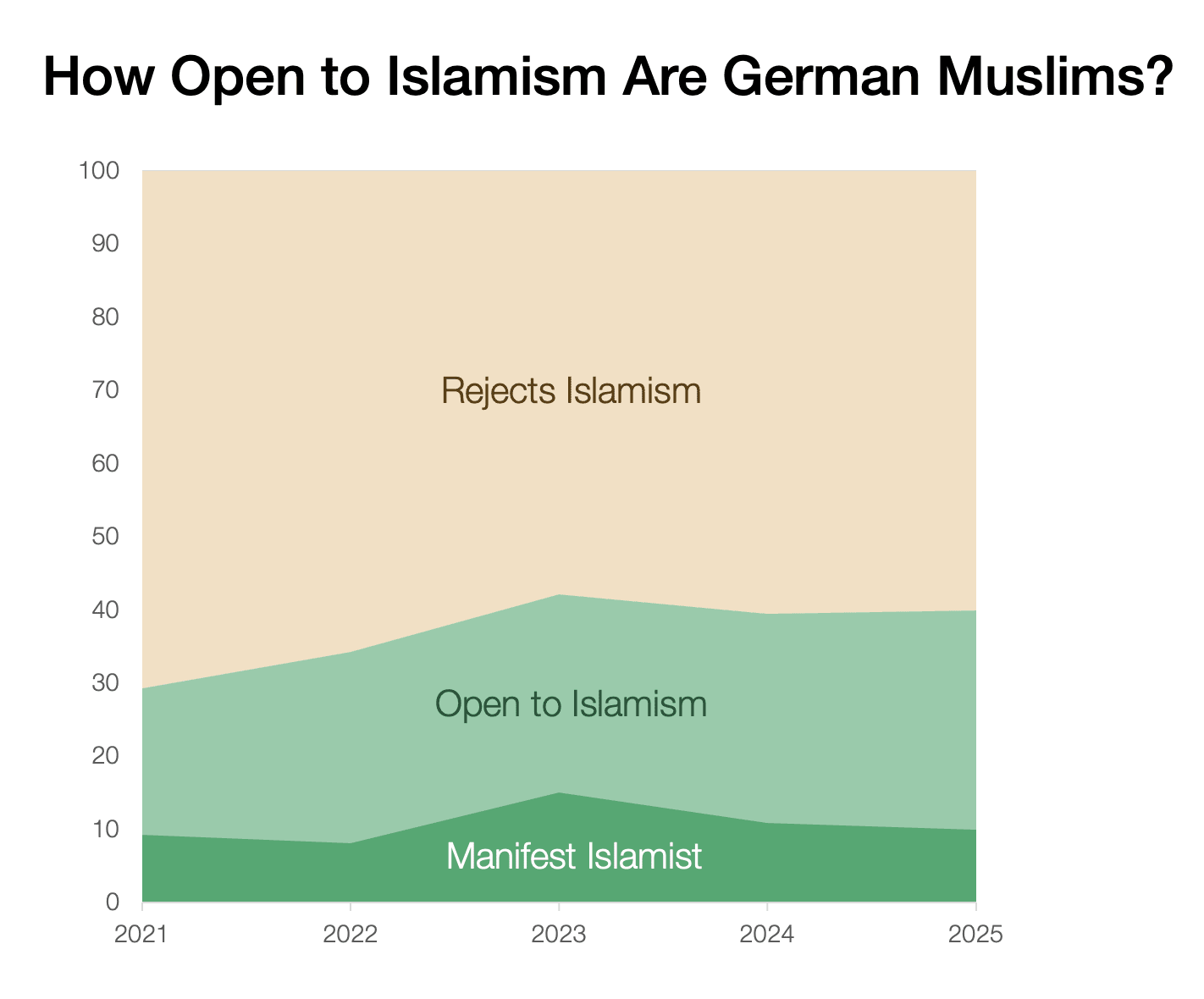

In Germany, the MOTRA-Monitor 2024/25 is a monster 600-page report on the state of the country, and the picture is one of radicalization in all directions. I asked Claude to translate it for me and extract the right data, which I checked with my less-than-perfect German.

Islamism is rampant in Germany.

How did they assess that? They combined the answers to these eight questions:

So the silver lining is that more than half of German Muslims don’t think this way. This is so bleak, though. Nearly half of German Muslims think only Islam can solve the problems of our time. A quarter think the rules of the Quran matter more than German law, and that an Islamic theocracy is the best form of government…

This is not an artifact of sex[13] or education level:[14] women and educated (high school and above) German Muslims are nearly as Islamist or open to it as male or less-educated Muslims. Nor is it a legacy of older generations: young Muslims are over 4x more radical than older ones.[15]

From all this data, a picture emerges about Muslims in Western countries.

First, the US is different from Europe. American Muslims seem much better integrated, and hold less radical views, than their counterparts in Europe.

Second, Muslims in general like the countries they’re in, and appreciate democracy. They identify with their host countries, although their allegiance tends to be to Islam first.

It looks like 20-50% of Muslims are extremely well assimilated into their host countries and hold views quite similar to those of the average native.[16] These people are so important! They’re the bridge between natives and more conservative or even radical immigrants, and we should support them.

A large majority of Muslim immigrants tend to be more religious, pious, and conservative than natives or moderate Muslims. This is neither good nor bad per se; I respect how people want to live, and I enjoy the diversity of lifestyles, although diversity of values brings challenges and costs in politics. I respect their common desire to pray, have a mosque to do it in, eat halal, wear traditional clothes, and provide a separate education to girls and boys. These are things many Christians or Jews desire for their children, too, so we should respect and welcome them.

I am less comfortable with the 20-50% who seem more fundamentalist, who think Islam should return to its roots, be interpreted literally, or that religious rules are more important than secular ones. These beliefs are incompatible with Western values and institutions. That’s roughly 9 to 22 million Muslims in Europe, and these beliefs should be actively pushed back on.[17]

The intolerance is worse. I am even less comfortable with the fairly common position that homosexuality should be banned, that Jews have too much power and can’t be trusted, or that women should have fewer rights than men. 10-50% of Muslims wanting to make drawings of Muhammad illegal is an unacceptably high number, and 7-24% who think making these drawings or burning a sacred book justifies killing the person who did it is really bad.

20-50% of Muslims are concerned about extremism. This is good news, and as we said, we need them to act on it.

Because extremism is real and way too common. Depending on their Western host country, you can find numbers like ~25% who think Islam is incompatible with Western values, ~15% who support full Sharia law, ~30% who support some Islamist positions and institutions, ~10%-25% who support Hamas or Jihad, and the support for terrorist organizations like ISIS or Al Qaeda ranges from 2% to 16%. If we assume this group is ~15% of European Muslims, that’s roughly 7M of the ~45M Muslims in Europe.
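As a rough check of these headcounts, here is a minimal sketch that scales each share by a European Muslim population of about 45M. That base is the figure the footnotes accept, an assumption rather than a measured count, and the helper name `headcount` is purely illustrative.

```python
# Rough headcount check: share of a population -> number of people.
# ASSUMPTION: ~45M Muslims in Europe, the figure accepted in the footnotes.
# The shares are the ranges quoted in the text, not new data.
EUROPE_MUSLIMS = 45_000_000


def headcount(share: float, population: int = EUROPE_MUSLIMS) -> float:
    """Number of people represented by `share` (between 0 and 1) of `population`."""
    return share * population


# 20-50% holding fundamentalist positions
low, high = headcount(0.20), headcount(0.50)
print(f"Fundamentalist range: {low / 1e6:.1f}M to {high / 1e6:.1f}M")

# ~15% in the extremist-leaning group
print(f"Extremist-leaning group: {headcount(0.15) / 1e6:.2f}M")
```

On these assumptions, 20-50% works out to about 9M to 22.5M people, and ~15% to about 6.75M. Every figure scales proportionally with the base, so a different estimate of Europe's Muslim population shifts all of them by the same factor.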

All of this comes with the other side of the coin, which is racism and Islamophobia. These feed into each other. But there are also other factors at play, such as asylum seekers vs economic migrants, policies for work and welfare, and much more. So what does all this mean? What actions should we take to address all of this? For that, we can’t just look at beliefs, because people often say one thing and do another. Beliefs only tell us what people are more likely to say and do, not what they actually do. We must look at actions, too: What is the net contribution of Muslim immigrants to Western society? How can we improve it? That’s where we’re going in the next article.

This is an extraordinarily sensitive topic. It’s not easy to do it justice. I tried and am guaranteed to have failed—hopefully less than most writing on this topic. So please, share your thoughts and knowledge on the topic, but keep your comments constructive and civil.

One caveat across the entire article: Nearly 25% of those raised Muslim in the US don’t consider themselves Muslim anymore. This might influence some surveys, because AFAIK, these surveys tend to classify people as Muslims by asking them if they identify as Muslim. If people raised as Muslim say they don’t identify as Muslim, the survey will miss the ~25% of them who are fully assimilated into the host country. If this were widespread enough, it would explain how a society can become more Muslim and more radical without causing a long-term conflict: Muslims would be the mix of new immigrants plus the more radical remnants of existing Muslims (thus appearing more radical). The new immigrants could be enough to keep increasing the number of Muslims, even if the leakage through assimilation were strong. In France, this number is 10%, and it looks like in many countries, the number of people leaving Islam is quite low (remember, Islam says apostasy deserves death). So I think that, overall, we can disregard this effect, except in the US, where it’s so strong. Another sign of how much better the US integrates people than other places do.

SCIICS, large-scale telephone survey, 2008, for the Netherlands, Germany, France, Belgium, Austria and Sweden.

But more hostile to Muslims than non-religious Muslims were to the West.

From the center-right Policy Exchange.

The Henry Jackson Society self-describes as non-partisan, but left-wing critics consider it right-wing. I asked ChatGPT and it told me that it’s formally non-party-political, but it’s a center-right / neoconservative foreign-policy and security think tank that presents itself as cross-party and has had some cross-party associations, especially historically.

The French Left and pro-Muslim groups have accused the IFOP of pro-right bias. Is that true? The IFOP is one of the oldest survey companies in France (created in 1938). PolitPro has assessed survey companies and considers IFOP the most reliable. IFOP has worked for the French Socialist Party, and has published surveys with pro-left findings. Grok, ChatGPT, and Claude all think IFOP is neutral. As a reminder, ChatGPT and Claude lean left, and Grok leans right of them, but still left. I looked into the actual criticism of the IFOP by a French Muslim organization, and these criticisms include the claim that it increases Islamophobia (that doesn’t mean that a fact is false, just that it’s inconvenient), that it undermines right-wing talking points by highlighting that 7% of the French are Muslim (which lends credence to the IFOP data being accurate), or that the sample size is small (1,000 is reasonable and the methodology uses standard statistics, with standard errors, some sample bias, etc.). The main criticism is that this type of survey (methodology here; it seems robust to me) carries some biases, but again, this is standard practice. All surveys have these types of biases. The question is: Are they likely to completely misrepresent reality? One of the most telling facts is that younger Muslims appear more Islamist than their parents. The criticism itself claims that this is because older Muslims had to conceal their Islam, but younger ones are prouder of it. This does not sound to me like a bias of a survey: If parents and children are similarly radical, but the parents conceal it while the children don’t, it sounds like the children are indeed more radical, since they feel more comfortable discussing their radicalism. If anything, it means society is more tolerant of their radicalism while their radicalism remains the same or is higher.
The other big criticism of the survey is that ~35% of France’s Muslims (of which there are ~7M) say they go to the mosque every Friday. That’s roughly 2.5M Muslims, but there is only room for 500k people in French mosques. The mismatch can be explained by social desirability bias (people want to look more religious than they actually are), uneven capacity utilization, or multiple prayer sessions and overflow practices (mosques often hold multiple Friday prayer sessions at staggered times or use overflow areas, courtyards, or streets). Based on all this, I conclude that IFOP is probably low bias, and it seems like its survey results reflect the reality of what French Muslims say, rather than what they do (but here we care about Muslim thought, so this is perfectly on point).

5%–30% according to ChatGPT

Isn’t that like blaming victims of rape because they were too scantily dressed or drank too much?

Junge Menschen mit muslimischer Prägung in Wien, Kenan Güngör et al., November 2019

I generated them through Claude by uploading the PDF and asking it to graph them. Since I don’t speak enough German, I didn’t spot-check them, but the numbers are very similar to the other stuff we’ve seen.

Data from December 2024. The methodology is questioned, because half the sample was collected by asking people in busy streets on a Friday evening in four locations in Vienna, and the other half online. The two samples gave very different results, but the report just averages them. My take on this is that the street sample is probably very biased towards more moderate Muslims (who I assume are more likely to go out on a Friday night), and that the setting biased them further towards moderation (they answered the questions discreetly on their own on iPads, but being in a public area and handed an iPad probably makes the answers more attuned to what’s expected of them), whereas the online sample is probably more revealing of true attitudes (it came from the survey company’s existing panel).

There are fewer Islamist women, but they are more radical: 10.3% of Muslim women were manifestly Islamist in 2025, and 16.6% were latent/open, for a total of 27%. For men, these numbers were 9.8%, 22.7%, and 32.5%.

9.7% of low-schooled Muslims (up to Hauptschule) are Islamists, and 9.2% of those with full high school / university entry level. For openness to Islamism only, the numbers are 30.4% and 25.7%. In other words, 35% of German Muslims with high school or higher education are either Islamist or open to it…

In 2025, 45% of Muslims under 40 hold at least latently Islamist attitudes (11.5% manifest + 33.6% latent), compared to around 35% of 40–59 year-olds and under 10% of those aged 60 and over.

On the lower side of the range in Europe, on the higher side in the US. This is true for all results.

If we accept ~45M as the number of Muslims in Europe.

2026-05-12 20:24:41

I’ve heard about ideological clashes between Muslims and the West for decades, but they seem to be increasing recently in Europe with the rise of Muslim immigration, and they’re now at the center of many elections.[1] One side claims that Muslims are like any other religious group. The other that they’re not. What should we think about it? I never had a full p…

2026-05-06 20:02:59

This is a review of the latest data I have come across on dating, sex, and relationships. You should read it as a mosaic of facts that form a picture, rather than a decisive statement on the world today.

2026-04-30 20:03:21

Previous premium article: Why Did Islam Spread So Fast in North Africa & Spain?

Next premium article: An analysis of all the news on the Game Theory of Sex & Relationships

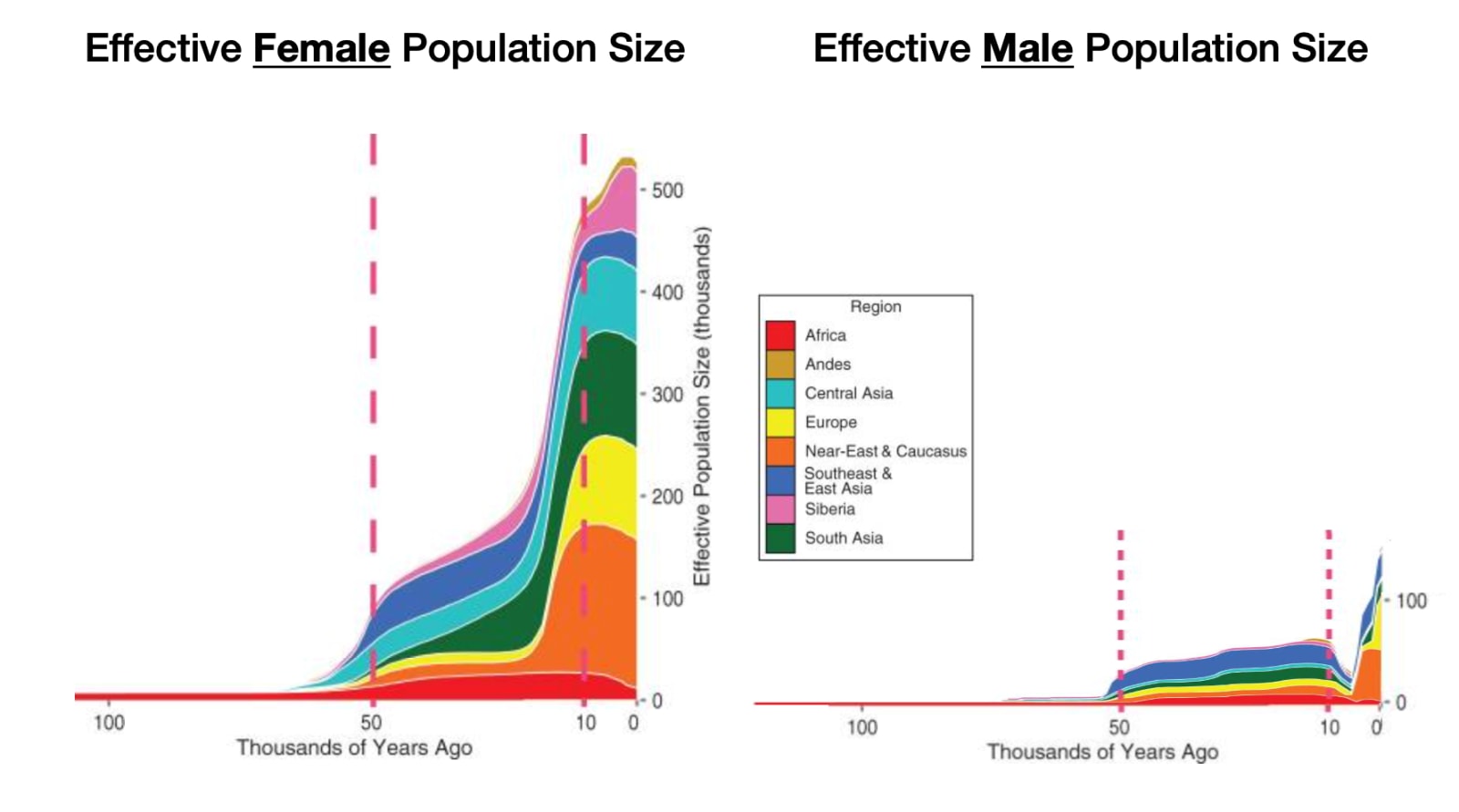

Here’s a crazy fact I read: 80% to 90% of women reproduce, but only about 40% of men do. What?!

It made me think of this:

This represents the idea that, nowadays, women can easily have sex, so they gravitate towards attractive men, which is bad for unattractive men (no access to sex), and bad for all women (heightened competition for a few men only), while attractive men get all the access to sex they want and end up not partnering up.

Are these things true? Do men really reproduce less than women? Is it because historically, the more attractive men could hoard the women, leaving the least attractive men out of luck? If that’s the case, how frequent was it? How did different societies deal with this problem? Did it lead to violence? And what about today? Do more women than men still reproduce? How has technology like Tinder or contraception changed this? What can we predict about the future of sex, reproduction, and violence?

Let’s start with the initial claim: If 80% of women reproduce and only 40% of men, that means women have been 2x more likely to reproduce than men in history. Is this true? This is an estimate of how many women[1] reproduced in a studied population throughout history:

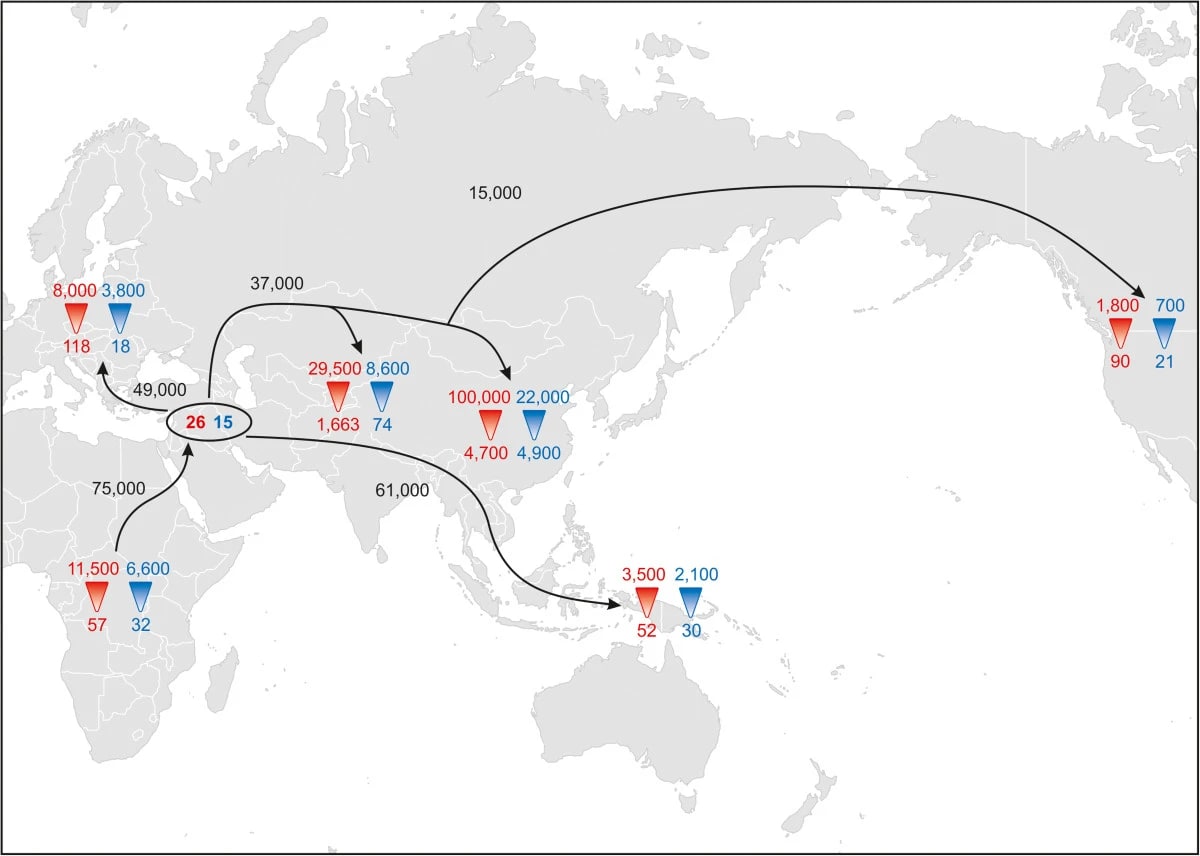

Notice how the human population starts exploding about 70,000 years ago, around the time of the 2nd move out of Africa. Then, there’s a new explosion around 10,000-12,000 years ago, around the time of the end of the last ice age.

But this is for females. How did males reproduce in comparison?

Assuming that 80% of women reproduced at any given time, what does this tell us about the share of men who reproduced?

This is bonkers!! It means historically only about a quarter of men reproduced!?[2]

This map tells you where:

How is this possible?

About 3% of men consider themselves fully homosexual, so it can explain only a tiny part of this gap.

The most logical explanation for this would be polygamy (one person marrying several people of the other sex), which generally means polygyny (one man, several women).[3] If the average man who marries has 3 wives, then with roughly equal numbers of men and women, only about ⅓ of men can marry, so about ⅔ of men won’t have a wife.
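The ⅔ figure follows from a simple count. Here is a minimal sketch, assuming equal numbers of men and women and that every partnered woman has exactly one male partner (both are simplifications, not claims from the data); the function name is hypothetical. The same division links the earlier figures: if ~80% of women reproduced but only about a quarter of men did, each reproducing man must on average have had children with about 3.2 women.

```python
from fractions import Fraction


def share_of_men_with_partner(partners_per_man: Fraction,
                              share_of_women_partnered: Fraction = Fraction(1)) -> Fraction:
    """Share of all men who get a partner, assuming equal numbers of men and
    women and that each partnered woman has exactly one male partner."""
    return share_of_women_partnered / partners_per_man


# Polygyny: every woman married, 3 wives per married man.
married = share_of_men_with_partner(Fraction(3))
print(f"married men: {married}, unmarried men: {1 - married}")  # 1/3 and 2/3

# Reproduction: 80% of women vs ~1/4 of men -> implied partners per man.
implied = Fraction(4, 5) / Fraction(1, 4)
print(f"implied female partners per reproducing man: {float(implied)}")  # 3.2
```

Using exact fractions keeps the ⅓ and ⅔ results free of rounding noise. Relaxing the equal-sex-ratio assumption would change the numbers, but not the basic point that polygyny leaves many men without partners.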

This causes a lot of conflict, because you get a lot of men who can’t access sex and reproduction. So societies had three options.

One was to just be violent, with men killing other men and kidnapping and raping women. That is not very stable at all, so such societies quickly learn to focus the violence outwards: They organize in clans and tribes where you’re not supposed to kill each other, and should raid your neighbor instead.

The societies that managed to do this at scale were able to spread extremely fast. Examples include the Vikings and the Arab Muslims: In both cases, they had serious polygyny, and men were recruited to go raid foreigners and take their women as partners, concubines, or slaves. The Muslims had the added brilliant idea of telling recruits: “Even if you die fighting, don’t worry, you’ll get your women in heaven.”

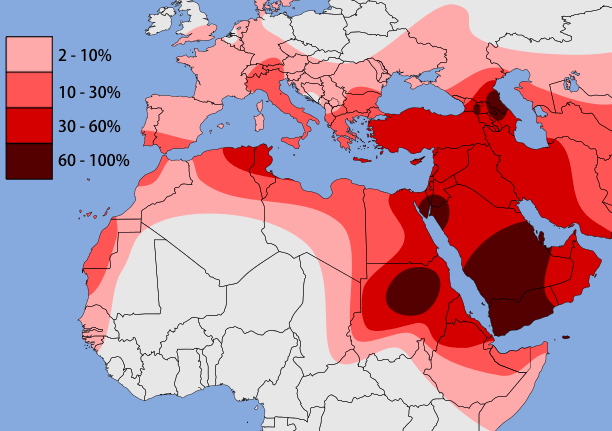

For example, we know from DNA analysis that 80% of Icelanders' male-line ancestry was originally Scandinavian, whereas 60% of their female-line ancestry is Gaelic.4 So Iceland was mostly settled by Scandinavian men who took Gaelic women from the British Isles with them. Something similar happened in Arab territories:

So almost certainly, part of the difference between male and female reproduction is that some men hoarded women. Other men either had no access to women and didn't reproduce, or had to go to war or raid neighbors to get them. They would either die trying, or succeed and extinguish the male line of the conquered.

It doesn't always need to be violent, though. For example, in some places along the Swahili Coast in Africa, up to 80-90% of male ancestry comes from Persia, India, and Arabia, while nearly 100% of female ancestry is local African.

This was from trade: Rich Muslim traders would come and marry local women. But slaves were part of the traded goods, and so some of the partnerships would have been coerced rather than free.

Conversely, some Arab ancestry comes from African women, but not from African men. Given that Arabs traded African slaves, the most logical explanation is that they didn't let African men reproduce in Arabia,5 but some of the African women slaves did reproduce with Arab men.6

This one blew my mind. There's a second way a polygynous society can manage the sex imbalance: Growth plus age differences. This helps explain why old men marry young women in some Muslim countries.7

If you have 100 men, and they each have on average two wives, you need 200 women. How can you get them? You can solve that if the men are 40 years old and the women are 20 years old.

The women have four children each, for a total of eight children per father: approximately four boys and four girls. That means that our 100 men and 200 women produce, 20 years later, 400 men and 400 women aged 20.

The women marry immediately and have children,8 but not the men! The 400 men have to wait 20 more years. By the time they do, there's been a new generation: The 400 women have had four children each, so there are now 800 men and 800 women aged 20. Enough women for two of them per man!

Another way to think about this: If every generation is twice as big as the previous one, every man can, once he is older, simply marry two younger women from the next generation.

So if women marry young enough, remain fertile, and have lots of children, a society can actually stay highly polygynous while all men still get wives!
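The cohort arithmetic can be checked with a short Python sketch. It tracks 20-year birth cohorts and assumes, as in the example, that half of all children are girls, that every woman has `children_per_woman` children, and that men of one cohort marry at 40 the women of the next cohort, aged 20. The 100 men belong to the first cohort and the 200 women they marry to the second. The function names are hypothetical, chosen for illustration.

```python
def birth_cohorts(first_cohort=100, children_per_woman=4, n=4):
    """Number of boys (= number of girls) in successive 20-year birth cohorts.

    Assumes half of all children are girls, so each cohort is
    children_per_woman / 2 times the size of the previous one.
    """
    sizes = [first_cohort]
    for _ in range(n - 1):
        mothers = sizes[-1]  # women of the latest cohort
        # Girls born to those mothers (the number of boys is the same).
        sizes.append(mothers * children_per_woman // 2)
    return sizes

def wives_per_man(sizes):
    """Men of cohort k marry at 40, when the women of cohort k + 1 are 20."""
    return [sizes[k + 1] / sizes[k] for k in range(len(sizes) - 1)]

cohorts = birth_cohorts()
print(cohorts)                 # [100, 200, 400, 800]
print(wives_per_man(cohorts))  # [2.0, 2.0, 2.0]
```

With `children_per_woman=2` every cohort stays at 100 and each man gets exactly one wife: the scheme only works while the population keeps doubling.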

Something like that has happened in high-growth polygynous societies across the world. It’s one of the reasons I believe old men marry young girls in some Muslim countries. I don’t like it (and with children it’s abhorrent), but that’s the logic.

This wouldn't explain why a larger share of women than men have reproduced, though. In fact, it deepens the mystery, because it shows how a society can be polygynous without women reproducing more than men.

The other way to handle this problem is by forbidding polygamy. If everybody is monogamous, everybody can pair up and have children. Yay!

This has a big advantage: Instead of pouring resources (men) into fighting each other for women, who are then raped and/or kidnapped, you can focus all the energy on building stuff and accumulating wealth.

This might be one of the reasons why the Greeks (and later, the Romans), who were uniquely monogamous, were so successful. They passed this on to Christianity,9 which then spread the concept around the world.

Islam and China were not technically monogamous, but in practice the vast majority of marriages have been, I assume for the same reason.

A study looked at the ratio of men to women in several societies. Assuming 80% of women have had surviving descendants, about 72% of men in East Asian societies had surviving descendants, 62% in Europe, and 57% among the Yoruba in Nigeria. So the data suggests that monogamy might indeed have had a strong impact in balancing reproduction and descendants, which gives credence to the idea that polygyny was originally one of the causes of this imbalance.
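To see how these percentages relate, here is a minimal Python sketch. It assumes equal numbers of men and women, so the share of men with descendants is simply 80% times the ratio of men with descendants to women with descendants; the function names are illustrative. Inverting it gives the ratio implied by each figure: for East Asia, 72% ÷ 80% = 0.9, and the earlier "quarter of men" corresponds to about 0.31, roughly one reproducing man for every three reproducing women.

```python
# Share of women assumed to have surviving descendants (the article's 80% figure).
FEMALE_SHARE = 0.80

def male_share(male_to_female_ratio, female_share=FEMALE_SHARE):
    """Share of men with surviving descendants.

    Assumes equal numbers of men and women, so the ratio of men with
    descendants to women with descendants scales the female share directly.
    """
    return female_share * male_to_female_ratio

def implied_ratio(share_of_men, female_share=FEMALE_SHARE):
    """Inverse: the male-to-female ratio implied by a given share of men."""
    return share_of_men / female_share

# Ratios implied by the shares quoted in the text.
quoted = {"East Asia": 0.72, "Europe": 0.62, "Nigeria (Yoruba)": 0.57,
          "A quarter of men": 0.25}
for group, share in quoted.items():
    print(f"{group}: {implied_ratio(share):.2f} reproducing men per reproducing woman")
```

The same function covers the opening claim: a ratio of 0.5 turns 80% of women into 40% of men.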

But if monogamy has been so widespread, why do we see such an imbalance in male-female reproduction across the world until so recently?

The most common structure for clans has usually been patrilineal: All the men in a family stay together, and the daughters leave to pair up with men in other clans. This means all men in a clan are related by blood, but not all women.10 So if a clan disappears—whether it’s wiped out, it starves, or it slowly shrinks because it doesn’t have access to resources or status—then the entire male line goes extinct. But not the female line, which is much more mixed! So, in effect, patriarchy is genetically much riskier for men than for women.

Meanwhile, this effect was compounded by the fact that successful clans would wipe out many other clans, further reducing genetic diversity.

And then, these successful clans would grow too much and need to split. When they did, they tended to split along lines of close relatives: For example, each successful son would move out with his male descendants. As a result, every split increased the concentration of male lines, with several clans now sharing the same one.
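The clan mechanism can be illustrated with a deliberately tiny, deterministic toy model in Python. Everything here is an illustrative assumption, not the cited paper's model: each clan is labeled by its male line, men stay in their clan, daughters marry out into a fully mixed pool (so every clan's wives carry every surviving female line), and each generation two clans are wiped out and replaced by two offshoots of one successful clan. The names `Clan`, `next_generation`, and `count_lines` are hypothetical.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Clan:
    male_line: str           # shared by every man in the clan (men stay put)
    female_lines: frozenset  # maternal lines carried by the clan's wives

def next_generation(clans, wiped_out, winner):
    """Wipe out the clans at indices `wiped_out` and replace each one with
    an offshoot (a son's household) of the clan at index `winner`."""
    assert winner not in wiped_out, "the splitting clan must survive"
    survivors = [c for i, c in enumerate(clans) if i not in wiped_out]
    # Daughters inherit their mother's line. Only daughters of surviving
    # clans live, and they marry out into a fully mixed pool.
    daughters = frozenset().union(*(c.female_lines for c in survivors))
    male_lines = [c.male_line for c in survivors]
    male_lines += [clans[winner].male_line] * len(wiped_out)
    return [Clan(line, daughters) for line in male_lines]

def count_lines(clans):
    """Number of distinct male lines and female lines still present."""
    male = {c.male_line for c in clans}
    female = frozenset().union(*(c.female_lines for c in clans))
    return len(male), len(female)

# Ten clans; each clan's wives were born in the nine other clans.
names = [f"clan{i}" for i in range(10)]
clans = [Clan(n, frozenset(m for m in names if m != n)) for n in names]
for _ in range(3):
    # The first two clans are wiped out; the last clan spawns two offshoots.
    clans = next_generation(clans, wiped_out={0, 1}, winner=len(clans) - 1)
print(len(clans), count_lines(clans))  # 10 (4, 10)
```

After three generations there are still ten clans and all ten female lines survive, but only four male lines remain. The full-mixing assumption is what keeps every female line alive; real marriage networks are patchier, so this toy overstates the contrast.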

One paper tried to figure out how much of the gap between male and female reproduction the effects described in this section could explain, and found that, in theory, they could explain all of it. In other words, you don't necessarily need polygyny or violence between men to explain this gap: The clan structure alone could be enough.

My guess is that it's a combination:

Polygyny meant that many men could not get wives and never reproduced.

Many men killed other men, or their offspring, and took the women, further reducing the share of men who would ever reproduce or leave descendants. This was more common in polygynous societies than in monogamous ones.

Even when men did reproduce, the clan structure meant their entire male line could vanish if the whole clan was wiped out.

What does all this tell us about today? Here are the consequences we can draw for dating apps, incels, polygamy in the West, immigrant profiles, Ukraine and Russia, and AI and robots.

2026-04-24 20:51:17

We talked about the spread of Islam in Arabia and Egypt in the previous article, but it didn’t stop there. Less than 100 years after Muhammad’s first conquests, Islam was well-established in Spain. How come?

As a reminder from the last article, the two regional superpowers, Byzantium (Romans) and the Sassanids (Persians), were uniquely wea…