2026-05-25 03:00:00

A person watching over my shoulder asked “How are you switching around so fast?” and I realized that while most readers here know this trick, some may not, and it’s awfully useful.

[Update: I published an earlier version of this in 2012 but have got that “How do you” question a couple times recently, so maybe it’ll still be new news to a few people.]

In all the browsers I use, Command-1 takes you to your leftmost tab, Command-2 to the next one over, and so on up to Command-8. Command-9 selects the rightmost tab. Also, you can right-click on a tab and “pin” it, which shrinks it down to just the favicon and moves it as far left as it can go.
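For anyone who likes to see a rule written down, here is a minimal sketch of the shortcut-to-tab mapping described above. The function name `tab_for_shortcut` is made up for illustration, and the behavior when there are fewer tabs than the digit pressed (nothing happens) is my assumption, not something any browser documents here.

```python
from typing import Optional


def tab_for_shortcut(digit: int, tab_count: int) -> Optional[int]:
    """Return the 0-based index of the tab that Command-<digit> would select.

    Hypothetical helper: models the rule in the text, not any browser's code.
    """
    if not 1 <= digit <= 9:
        raise ValueError("digit must be between 1 and 9")
    if tab_count <= 0:
        return None  # no tabs open, nothing to select
    if digit == 9:
        return tab_count - 1  # Command-9 jumps to the rightmost tab
    # Command-1 through Command-8 select by position from the left.
    # Assumption: pressing a digit past the last tab does nothing.
    return digit - 1 if digit <= tab_count else None
```

With seven pinned tabs and a few more open, `tab_for_shortcut(4, 12)` picks the fourth tab from the left, which is why keeping pinned tabs in a fixed order makes the shortcuts reliable.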

So the trick is, pin the same heavily-used tabs in the same place, and leave them there forever. In my main browser (currently Safari) it’s like this:

SMS/RCS texts, linked to my Pixel. This is a Google thing, not sure if you need to be on Android for it to work. But for those serious conversations that remain in text-land, it’s awfully nice when you can resort to an actual keyboard.

Calendar.

The local staging version of this blog, where I review and edit articles.

What you are now reading.

Blog comment review/approval.

Quamina (probably moving to Codeberg soon).

Bluesky; but it seems I never go there any more unless I’m following links from elsewhere. To be honest, not sure what I’d replace it with.

Have fun!

2026-05-20 03:00:00

Recently I got an invitation from an organization I respect, to a gathering of senior people, unconference format. Yes, it’s mostly about AI. No, it doesn’t reek of boosterism. My guess is that the discussions would be relatively intelligent and unbeliever contributions would be welcome. I declined, because it’s in the USA.

Here’s the text; maybe someone in a similar situation might find it useful.

Thanks to whoever thought of me for the kind invitation, which I must regretfully decline.

I’m Canadian and as a matter of principle feeling negative about visiting a neighboring country whose leader has repeatedly threatened our sovereignty and shown massive disrespect for our nationhood. Particularly when that leader has followed up similar statements about other nations with military action.

I could probably work around that. But there’s also the issue of entering the US; if I roll up at the border and am asked to disclose my social media output, there’s a significant risk of an extremely negative outcome. I have a family to support and really can’t afford that risk.

I still consider myself a friend of your organization, and one with strong opinions about the subjects scheduled for discussion; my regrets about having to decline are entirely sincere.

—Regards, Tim

2026-05-04 03:00:00

War is bad. Don’t start one. But we’re already in a class war and we’re losing. Where by “we” I mean most people; the winning side comprises, roughly, the richest 0.1% of the population, who are morphing into a hereditary aristocracy. [I mean that, see below.] So, what to do in a war one didn’t choose?

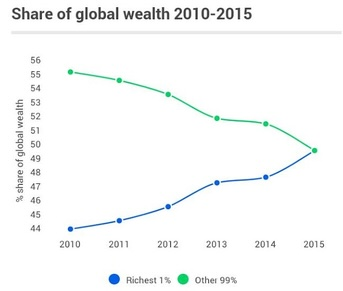

It’s really bad, and getting worse fast. I recommend cruising through Wikipedia’s excellent article on Distribution of wealth; maybe jump straight to the Wealth inequality section. I’ve pulled one helpful graph, sourced from Oxfam, into the margin. The article has loads of other statements of the form “The richest X compared to the poorest Y have Z times as much.” The values of X, Y, and Z are uniformly saddening.

As a resident of a wealthy West-Coast New-World city, the effects of pathological inequality are in my face every day: Bentleys gleaming on the road, ragged people huddled in the rain cadging cash outside the drugstores, thousands homeless.

It’s not only sinful by any sane definition of sin, but stupid, inefficient, and damaging. I turn once again to Wikipedia: Effects of economic inequality. I’ll add one pointer to an effect that is less obvious: It exacerbates the unaffordability crisis.

One effect of the increasing imbalance between the ultra-wealthy and everyone else is the emergence of, effectively, a hereditary aristocracy. “Wait!” you exclaim, “How about high income-tax rates for the wealthy, and inheritance taxes?” You might well ask. It turns out those are no longer operative. I’ll get into details about that, but first…

I am, as previously related (see Southsiders and Fútbol Joy) a fan of the Vancouver Whitecaps Football Club (VWFC) who play in Major League Soccer. It’s affordable, light-hearted, high-drama, high-quality entertainment and has lifted my spirits notably in the recent dark years.

Vancouver Whitecaps fans bring the love

However, it appears that Vancouver’s about to lose the Whitecaps, at the whim of a Vegas-based purchaser, of whom The Athletic writes:

The potential buyer is Grant Gustavson, the son of Kentucky billionaire Tamara Gustavson and grandson of B. Wayne Hughes, founder of Public Storage, according to multiple sources with knowledge of the discussions. Forbes estimates Tamara Gustavson’s net worth at $8.5 billion.

Gustavson, 30, lives in Las Vegas. A graduate of the University of Southern California, he has been involved with the athletic department at his alma mater and helped to develop the Name, Image and Likeness (NIL) program there. He continues to work with the USC basketball program and is also involved in the management of his family’s farm, “the country’s premier thoroughbred farm with decades of storied champions throughout the stables.”

So this fucking youngster, who has life experience working at the gym at USC (where his Mom’s on the Board of Trustees) and helping out at the family farm, can reach out his mighty hand and snatch away a popular pleasure from another nation. Droit du seigneur in action.

I highly, highly recommend Our Tax System Should Make You Furious from the NYT. By “our” they mean America’s. First, it addresses the canard that the tax system is actually progressive; people who like things the way they are like to say “Forty percent of people pay no federal income taxes, and then the top 1 percent pay 40 percent of the income taxes.” (Tl;dr: Somewhere between highly misleading and a big fat lie.)

Second, it explains the mechanisms by which generational wealth is accumulated and preserved, effectively in perpetuity. People like Bezos and Musk pay basically no income tax, and the way they do it isn’t complicated or hard to understand.

There is actually a family of financial products called Dynasty Trusts. The first ad that popped up in response to my Web search had the marketing copy “Dynasty trusts: preserving family assets for future generations”. Or, put another way, “Dynasty trusts: Starving beggars in your neighborhood.”

So what can we do about it? Tax expert Ray Madoff, the interviewee in the “Should Make You Furious” piece, has smart things to say. Then there’s Thomas Piketty: “Opponents of the tax on the ultra-wealthy lack historical perspective”.

The common thread is taxation of wealth not income, because the arcane abstractions of accounting make income too easy to hide. The argument is that a wealth tax of say 2%/year, starting at a threshold of a few tens of millions, won’t impair the lifestyles of the seriously wealthy, but will still yield systemically important public-sector revenue.
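To make the arithmetic concrete, here is a rough sketch of such a tax. Everything specific in it is an assumption of mine: the $30 million threshold stands in for “a few tens of millions”, the tax is applied only to wealth above the threshold, and the function name is invented. Actual proposals differ in the details.

```python
def annual_wealth_tax(
    net_worth: float,
    threshold: float = 30_000_000,  # assumed stand-in for "a few tens of millions"
    rate: float = 0.02,             # the 2%/year figure from the text
) -> float:
    """Yearly tax owed, charging `rate` only on wealth above `threshold`."""
    taxable = max(0.0, net_worth - threshold)
    return taxable * rate


# Someone with $10M is below the assumed threshold and owes nothing.
print(annual_wealth_tax(10_000_000))
# An $8.5 billion fortune, the Forbes estimate quoted earlier.
print(annual_wealth_tax(8_500_000_000))
```

Under these assumptions the $8.5 billion fortune would owe roughly $169 million a year, leaving well over $8.3 billion behind.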

Also worth reading, from the International Monetary Fund: Game-Changers and Whistle-Blowers: Taxing Wealth. Among other things, it reports that the proportion of wealth that is hidden in one offshore tax shelter or another is pretty small, ranging from 8% in the developed countries up to 30% in poor nations. Apparently it’s harder to hide wealth than income.

If wealth taxation won’t touch wealthy lifestyles and will help build a safer, calmer, happier society, it feels sort of irrational to oppose it. And some of the wealthy don’t. My favorite example of this is Avi Bryant. Check out I’m a Millionaire. Tax Me More, Please and Meet a millionaire who wants Canada to tax the rich. [Disclosure: I made a nice little chunk of money when my tiny investment in Avi’s startup turned into pre-IPO Twitter shares.] I’m also interested in Patriotic Millionaires, which Avi founded.

Also worth checking out: Jeff Atwood’s Stay Gold, America and Launching The Rural Guaranteed Minimum Income Initiative. So, not all of the 0.1% are The Enemy.

Around the world, governments are running up huge debts and cutting back social programs because taxation revenue doesn’t come near the amount required to provide a livable society. So the choice is stark: Cut and slash deeper (read: starving beggars) or find more money. There’s lots of money out there basically just playing financial games; it needs to be put to work doing something useful.

This package of ideas should be easy to sell to voters. Of course, resistance will be ferocious and extremely well-funded. But the currently-winning side in our class war is actually a soft target. Target for what weapon, you ask? Democracy. No need for tumbrils and guillotines. Yet.

2026-04-27 03:00:00

That’s James S.A. Corey, which is to say Daniel Abraham and Ty Franck, and their new series The Captive’s War, an in-progress work comprising 2¼ or so novels. The Coreys are of course best-known for their deservedly wildly popular The Expanse series and the subsequent success of the streaming-video version. The new series is… different. If you’re wondering whether or not you should wade in, the following is for you.

Don’t worry, you can go on reading this even if you plan to read the books. Here’s a spoiler that has appeared in every public mention of the book, which I’ll give away with a quote from page 102: “I think some important scientific questions have finally been answered. Alien life exists, and they are assholes.” Which is to say, it doesn’t go well for the humans.

Now for the meta-spoilers. The novels are The Mercy of Gods and The Faith of Beasts, then there’s a novella, Livesuit. I found The Mercy of Gods a bit of a grind, and if that’s all I’d read I would have been pretty negative about this project. There is a major, major reveal partway into The Faith of Beasts that changed my whole outlook on the series; it makes the storytelling velocity really pick up. It’s a little annoying that Livesuit was published between the two full novels because it only really makes sense if you’ve finished both of them. So do like I did, and read the novella last.

I have to ask why the Coreys couldn’t have pulled the curtain aside a little earlier on. And while I’m griping, let me add that the sped-up storytelling runs into a big honking cliffhanger ending at high velocity. Harumph.

It could go off the rails but if they can maintain their Expanse form, I suspect this series is going to be pretty great. The characters are fun to know and the narrative revolves around the great mother of all trolley-problem ethical challenges, which was not nearly resolved at cliffhanger-ending time.

Those of us who are fussy about the plausibility of future technologies (hey, Charlie Stross) should avert our eyes from the Coreys’ fairly low-effort attempts to explain how the aliens and humans in this story accomplish the things they do. Doesn’t bother me much, though.

Having said all that, this series is not cheerful stuff; I do not recommend it to those who, like many in these troubled times, are having trouble seeing the bright side of, well, anything.

Yep, no hesitation. And they’ve already started working on a streaming version. Unfortunately it’s going to be on Amazon Prime, which I haven’t missed since unsubscribing a couple years ago. Getting this thing on the screen is going to be an extended, difficult task. There are multiple species of aliens that the show is going to have to absolutely nail, with emotional credibility, for the story to work. Don’t hold your breath.

2026-04-14 03:00:00

On impulse, Lauren and I went out for a short walk — around just a few blocks — as the grey Spring afternoon shaded to dusk. On a second impulse, I grabbed the camera on the way out the door.

In our local community garden, here’s (I think) a chard.

That was in Vancouver’s Mount Pleasant park, small but nice and apparently never not used. Also this old blackened fruit tree, we’re a bit past the fruit-blossom peak for this year. Nice to see I’m not the only old citizen trying to brighten things up.

Now we’re walking up a locally-main street called Main Street or “The Main” if you’re trying to sound hip. Someone put work into that window! I’ve bought my daughter a couple of cool birthday presents from the store behind it. My thanks to the building across the street for providing a dark background reflection.

Back to the next block over from ours. A while ago a bunch of people were building little fairy/elf/hobbit villages at the bottoms of the big old trees. This isn’t that. What is it?

The colors of the natural surfaces are real.

When I shot these, it was getting dark but I didn’t think much, mostly just pointed and shot. (Fiddled with the aperture dial a bit.) Then I came home and pulled them into Lightroom and didn’t need to do really anything about colors. A bit of contrast and highlights here and there. Oh, and fairly brutal cropping, especially on that fruit-tree-flowers pic. Because like I said, I didn’t think very much when I was shooting and I didn’t have to because on a Twenties camera you don’t.

I could take that heavily-cropped fruit-tree picture and print it big enough to occupy any domestic wall in your place and yeah, there’d be grain but it wouldn’t bother your eyes.

Anyhow, modern cameras are pretty great. The lowest ISO in today’s set is 2500 and the highest is 6400; the apertures range from 2.8 to 5.6. Bet you can’t tell the differences. My camera is a reasonably modern Fujifilm but not remotely bleeding-edge in camera tech. (Note: 35mm F1.4, now all the Fuji fanfolk are smiling and nodding.)
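For the numerically inclined, here is a small sketch of how wide those ranges are when measured in photographic stops. The helper names are mine. The formulas are the standard ones: each doubling of ISO is one stop, and each factor of √2 in f-number is one stop.

```python
import math


def iso_stops(low_iso: float, high_iso: float) -> float:
    """Stops between two ISO values (one stop per doubling)."""
    return math.log2(high_iso / low_iso)


def aperture_stops(wide_f: float, narrow_f: float) -> float:
    """Stops between two f-numbers (light scales with 1 / f-number squared)."""
    return 2 * math.log2(narrow_f / wide_f)


print(round(iso_stops(2500, 6400), 2))     # ISO range in today's set
print(round(aperture_stops(2.8, 5.6), 2))  # aperture range in today's set
```

So the set spans a bit over one stop of ISO and two stops of aperture.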

Anyhow, there are very very few photographers for whom the camera they carry is the limiting factor in the goodness of their pictures. Certainly not me.

Consider getting a camera. Used is fine, anything built in the last five years, maybe more, will effortlessly take brilliant pictures in almost any conditions. Sure, your phone can take great shots too, but the feeling of walking along with something that fits your hand and you only have to press one physical button once, that feeling, it helps you see the good pictures when they happen.

Then go out after and take a walk in the Spring dusk.

2026-04-10 03:00:00

Our family has used 1Password for many years. Most recently 1Password 7, now at least three years out of date. We didn’t want to upgrade to the latest version, went looking for alternatives, and have been exploring Bitwarden. The best choice isn’t obvious; here’s the story thus far.

Important note: I suspect that most-to-all of the people reading this already are using a password manager. If you’re not, please, PLEASE start now. Your browser probably has an OK one built-in, which is much better than nothing. Here is a good write-up on the basics.

They’re not fancy. The house contains Macs and Androids and Windows and an iPad. We have hundreds of accounts (some require an authenticator) and a basketful of secure notes: Government-ID numbers, recovery codes, and so on.

1Password had this nice feature where you could sync between devices without involving any 1Password servers, in a variety of ways. We used one of those and liked it. 1Password 8 insists on storing your data on its own servers (encrypted, more on that later). That always bothered me because, obviously, that repository is a top-priority juicy target for all the bad guys, who range from employees of the Chinese government to geeky narcos.

So we’ve been ignoring 1Password’s increasingly plaintive reminders that we were using years-out-of-date software and chugging along with version 7. But, early this year, they broke our sync mode on the Android app and were pretty blunt that the only way to get it back was to go to 1P8.

There are plenty of password managers (let’s just say “PMs”) out there, but as a regular scanner of the landscape, I think 1Password (hereinafter “1P”) and Bitwarden (“Bw”) stand out as leaders. The rest of this piece will focus on those two. If you think I’m wrong, say so below but also please say why.

Note that Bw comes in two flavors: That offered as a subscription service by the company of the same name, or as an open-source software suite you can build and run yourself.

This is not to say that the PMs that are starting to appear built-in to browsers and OSes are worthless or unimportant, just that some of us need a little more.

Two are obvious. The first is incompetence, like for example LastPass, who apparently left the doors more or less wide open to those bad guys I mentioned a few paragraphs ago. Complete horror-show.

The second is legal compulsion, where a government applies pressure to a PM provider to cough up our secrets. Anybody who thinks governments won’t try is fooling themselves, because they’ve repeatedly said they want to, and are eager to pass ill-considered legislation such as the CLOUD Act. So we care about that aspect a lot.

I think they both have acceptably-good security postures; check out Bitwarden Security Whitepaper and About the 1Password security model.

Both of them offer to host your data outside of the US, specifically in Canada or the EU.

But it doesn’t matter that much if a bad guy or bad government gets their hands on your password store; what matters is whether or not they can decrypt it. I’m not an infosec professional but I know some and listen to them, and both those security postures give me a good feeling. It’s not an accident that they’re pretty similar.

The actual threat isn’t so much that an adversary cracks the crypto; that’s very unlikely. It’s that they find a way to force a PM vendor to build a back door into their software to get access to keys and passwords. For that reason, it would warm my heart if either or both of Bw and 1P were to post a Warrant Canary.
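To illustrate why a stolen password store isn’t immediately useful, here is a minimal sketch of deriving an encryption key from a master password with a deliberately slow function. This is not a description of how Bw or 1P actually do it; the function name, the iteration count, and the choice of PBKDF2 are assumptions for illustration. See the vendors’ own security documents for the real designs.

```python
import hashlib
import os


def derive_vault_key(master_password: str, salt: bytes, iterations: int = 600_000) -> bytes:
    """Stretch a master password into a 32-byte key (illustrative only).

    The iteration count is an assumed value; a higher count makes each
    guess by an attacker proportionally more expensive.
    """
    return hashlib.pbkdf2_hmac(
        "sha256",
        master_password.encode("utf-8"),
        salt,
        iterations,
        dklen=32,
    )


salt = os.urandom(16)  # stored alongside the encrypted vault; not secret
key = derive_vault_key("correct horse battery staple", salt)
print(len(key))  # key length in bytes
```

The point of the sketch: whoever holds the encrypted store still has to guess the master password, and every guess costs them a full run of the slow derivation. A back door in the client software sidesteps all of that, which is why it’s the scarier threat.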

But I’m going to give Bw a very slight edge. First, because of the fact that you can build and run it yourself, if you’re willing to take responsibility for operating a server with strong security requirements. (I’m not.)

The source being open potentially offers a second, and more important I think, advantage: If they were able to get a Reproducible build working, you’d have assurance that the code you can download is the one their service is running. Which reduces the attack surface. (Mind you, not to zero.) Reproducible builds are hard, but if they did that, it would make a difference to me.
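Here is a rough sketch of the check a reproducible build would make possible: build the software yourself from the published source, hash the result, and compare against the digest the vendor publishes. The function names are hypothetical, and neither vendor’s actual release process is being described.

```python
import hashlib
import hmac


def sha256_hex(data: bytes) -> str:
    """Hex SHA-256 digest of an artifact's bytes."""
    return hashlib.sha256(data).hexdigest()


def builds_match(local_artifact: bytes, published_digest: str) -> bool:
    """True if a locally built artifact hashes to the published digest.

    Only meaningful when the build is reproducible, meaning the same
    source always yields byte-identical output.
    """
    expected = published_digest.strip().lower()
    return hmac.compare_digest(sha256_hex(local_artifact), expected)
```

In practice you would read the artifact from disk and get the digest from the vendor’s release notes. The comparison itself is the easy part; getting byte-identical builds is where the hard work lies.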

On the other hand, Bw’s software development process embraces GenAI generally and Claude specifically. At this stage in the growth of those technologies, this sends a chill up my spine. To be fair, 1P’s website shouts that it’s just the thing for agentic security, whatever that means. And we don’t know anything about 1P’s internal software-dev process.

1P wins this one. The problem is, do they always pop up when needed and never when they’re not? Can they fill every login field that needs filling? Does the popup show you just what you need and nothing extraneous? I’ve used both and 1P is just better.

This one is also pretty well a saw-off. Both of them have taken substantial chunks of VC money and thus are going to come under relentless pressure to enshittify. I worry a little less about this because from what I read, there’s not much lock-in.

Personal experience too: I recently did an export of everything out of 1P and into Bw and it all Just Worked, albeit putting all my stuff into a folder named “No Folder” that I can’t figure out how to rename.

Both Bw and 1P are subscription-only, at prices that seem fair to me.

As I was reading up on this stuff, the issue of recovering access to your PM after it had been lost came up a couple of times. Here’s a scenario where that could be really important: I die. And then my wife needs to get access to bank accounts and business emails and so on.

Somebody (I’ve lost the link) was horrified that one of the PMs suggested writing the password down on a piece of paper as a last-resort measure, but I’m here to tell you that they’re wrong. My wife has an envelope containing a piece of paper on which appear the passwords for my PM and Mac, my mobile-phone PIN, and a very small number of other secret things she might really need if I’m suddenly gone. I have no idea where she put it, but she’s really smart so I don’t worry.

You should probably do something like this too.

We’ve paid for a year’s worth of both Bw and 1P. At the moment, we’re leaning to 1P because it’s a little more polished. Which matters because my PM is something I use many times every day. Also they’re somewhat Canadian.

If you think we’ve missed something, please do let us know.