2026-04-06 19:30:51

Tunnel vision is what happens when attention, judgment, even perception itself gets compressed into too narrow a field. What stands right in front of us grows brighter and brighter, while the surrounding signals — sometimes the ones that matter more — begin to fade.

Influenced by OpenClaw, my team went through an unusually intense stretch this past March. We were asked to ship an Agentic OS (an agent-native Linux OS) on a two-week cycle, and the pace tightened almost at once: a release every two weeks, daily syncs, weekly milestones.

The message from management was simple enough: move fast. Let AI do the quick part. If you wait until everything is fully thought through, the moment will be gone. Someone else will already have launched. This wave has to be caught, and so on.

What felt strange was that the part most worth pausing over became the part few people wanted to ask about. Were we actually speeding up the resolution of problems, or merely speeding up the production of proof that problems were being resolved?

The most dangerous thing about tunnel vision is not that it leaves you blind. It is that what remains in view can look uncannily like the right answer.

Under speed, tension, fear, exhaustion, and pressure, a person’s attention narrows into a point. Organizations do something similar. As outside examples grow more dazzling, it becomes easier for teams inside to compress their attention around the most visible signals: speed, launch, PR, visibility, the sense of staking out ground.

Then complex problems get translated into actions that are easier to manage: shorten the cycle, increase the syncs, move reporting forward, underline the milestones.

None of these moves is irrational on its own. Layer them together, though, and they begin to change in character. They start carrying a function that was never really theirs: using higher-frequency visibility to fill the gap where judgment should have offered steadiness.

A narrowed field of vision is not the same thing as clearer judgment. More often, it simply makes it easier to miss the things that decide the outcome: whether the problem has been defined clearly, whether the use case has truly converged, whether the boundaries have been drawn, whether the risks have been made explicit, and who will finally carry the cost.

AI coding makes it faster to write something. Agent-based products make it easier to get something running. What once took weeks to assemble can now be placed on the table in a matter of hours.

The stimulus this creates for management is immediate: if others can get something running, why are we still discussing boundaries?

And this is where organizations begin to misread an external signal as an internal conclusion. If it runs, then surely all that remains is execution.

But the part that has not become any simpler — goal definition, scenario convergence, permission boundaries, quality standards, coordination mechanisms, risk attribution, rollback when things fail — gets pushed quietly to the edge, largely because it does not shine.

The cheaper the prototype becomes, the easier it is to create the feeling that success is just around the corner. And once that feeling takes hold, the path of least resistance is often not to think the problem through, but to turn the pace all the way up instead: compress the cycle, increase reporting, raise visibility, use control to patch the place where judgment is still incomplete. The first path asks people to carry uncertainty. The second can be driven by anxiety alone.

Healthy management should organize resources around the problem itself:

Anxiety-driven management tends to organize pressure around time:

Both sets of questions can look like progress. But they are not moving the same thing forward. One is working on the problem. The other is trying to manage unease.

Over time, I came to understand why this kind of behavior in an organization left me not only dissatisfied, but quietly angry. It creates a subtle reward structure: high-frequency syncs naturally reward what can be shown, and punish the judgment that cannot. After a while, teams become better at translating work into visible progress than at translating uncertainty into boundaries.

And what we get in return is something that looks remarkably like efficiency: busier, denser, faster, more visible — and not necessarily any closer to being right.

Not every team hits the wall at the same moment. A team like mine, working on Linux OS security, will run into it sooner, because what we face is not only whether something can be built, but what kind of capability it acquires once built, and what follows from that.

At the application layer, getting a prototype running early may genuinely help with trial and error. A rough result does not always turn into a systemic incident. But in OS security, the logic of a demo does not carry cleanly into a real system. What is at stake here is system boundaries, execution privileges, the radius of failure, the cost of rollback, and the burden of auditability. You may be able to accept that something is not smart enough yet. It is much harder to accept that it has already been given capabilities it should never have had.

What is truly expensive in security is often not getting something to move, but answering the questions that attract little glamour: should it have this kind of capability at all? Within what scope does it run? What can it access? Who absorbs the consequence when it gets something wrong? Where is the rollback path?

These questions do not make for bright lines on a launch deck. Yet in complex systems, they are the real cost structure. What is unfortunate is that, before a management team already deep in FOMO, this entire layer of judgment and tradeoff can disappear beneath tunnel vision.

After speaking with the team, I realized that what many people resented was not necessarily the daily report itself. It was closer to a condition of work: being interrupted again and again, questioned again and again, asked again and again to prove one’s value. You are not only doing the work. You are also being asked to keep producing evidence that the work is happening.

The worst part is not simply the time it takes. It is what it does to the structure of attention. Deep judgment depends on continuity. Many important decisions do not appear inside a single sync. They grow slowly, inside a stretch of thought that remains relatively whole.

Once thinking is cut into fragments, people begin to slide from solving problems into performing coherence for the organization. You start choosing what can be shown right away, rather than what actually matters but remains invisible for now.

For me, there is another cost as well. It enters the body. This is not the fatigue of a single sprint. It is the slower depletion that comes from living too long in a state of alertness.

To be fair, management’s anxiety does not come from nowhere. External change is accelerating. Expectations are being rewritten. Daily reporting and high-frequency syncs are not useless by nature. In incident response, or in work that is already clearly defined, or when multiple teams truly do need to coordinate closely, they can even be necessary.

What I object to is something else: the problem has not yet converged, the boundaries have not yet been drawn, and yet the work is already being managed at the tempo of a high-certainty execution phase. That is not execution. It is density being used in place of judgment.

Technical teams cannot hide behind complexity either. Naming boundaries is not the same as refusing action. Clarifying risk is not the same as being conservative. Maturity is not about moving slower. It is about distinguishing more quickly what can be done first, what must be thought through first, and what must never cross the line.

Focus is not the same as narrowing. Real focus gathers resources while preserving a sense of the whole. Narrowing, under pressure, drops the surrounding information until all that remains is a local objective growing brighter — and more dangerous.

If there is something in the age of AI that deserves to be accelerated, I would place my hope in a few forms of reasoning that are less dazzling, but cheaper in the long run:

Speed, by itself, is not a capability. The real capability lies in knowing where speed belongs.

AI will almost certainly keep reshaping products, workflows, and roles. That much is hard to stop. But before any real reconstruction begins, many organizations may go through something else first: AI acts like an amplifier, enlarging the habits and instincts that were already there.

When an organization meets AI, what gets amplified first?

If the first thing a change produces is not better judgment, but faster self-proof, then what is it really rebuilding? Is it rebuilding organizational capability at all?

What makes it sadder is that quite a few managers seem genuinely excited by precisely this.

Maybe that’s enough for now.

2026-04-06 13:58:10

隧道视觉效应(tunnel vision)是指人的注意力、视野或判断,被强烈压缩到一个很窄的范围里,只盯着眼前最突出的目标,忽略了周边更重要的信息。

受到OpenClaw的影响,过去的3月份我的团队经历了非常高强度工作节奏 —— 2周发布一个Agentic OS(一种Agent-Native Linux OS),于是节奏被立刻拉爆:两周要产品发布,每天要同步,周周要里程碑。

管理层给出的要求是:一定要快,让AI搞一下很快的,等你想清楚了黄花菜都凉了,xxx已经发布了,这一波热点一定要抓住,blah blah~

奇怪的是,真正该被问清楚的那一部分,反而更少人愿意停下来问:我们到底是在加速解决问题,还是在加速生产“问题正在被解决”的证据?

“隧道视觉”最危险的地方,不是看不见,而是看得见得太“像正确”。人在高速、紧张、恐惧、疲劳、强压下,注意力会缩成一个点。组织也一样。外部案例越来越亮眼,内部就很容易把注意力压缩到几个最显眼的信号上:速度、上线、PR、可见性、占位感。

然后把复杂问题翻译成一组更容易管理的动作:周期压缩、同步加密、汇报前置、节点强调。

这些动作单独看都合理。叠在一起就变味了——它们开始承担一个本不属于自己的功能:用更高频的可见性,去填补判断不足带来的控制感缺失。

视野收窄,不等于判断更清晰。很多时候,它只是让人更快忽略那些决定结果的东西:问题有没有被定义清楚,场景有没有收敛,边界有没有划清,风险有没有显性化,代价最后由谁承担。

AI coding 让“写出来”更快,Agent 类产品又让“跑起来”更容易。过去要几周拼出来的雏形,现在几个小时就能摆上台。

管理层受到的刺激是直给的:别人都能跑,我们为什么还在讨论边界?

这时组织很容易把外部信号误读成内部结论:既然能跑起来,剩下的就只是执行。

而真正没变简单的那一部分——目标定义、场景收敛、权限边界、质量标准、协作机制、风险归因、失败回滚——因为不够耀眼,就被系统性挤到边缘。

原型越便宜,越容易制造“我们已经快要成了”的氛围。氛围一起来,组织最省事的选择往往不是把问题想清楚,而是把节奏拉满:压周期、加汇报、提可见性,用控制感去补判断的缺口。前者要扛不确定,后者只要焦虑就能驱动。

正常的管理动作,理应围绕问题来组织资源:

焦虑型管理更容易围绕时间来组织压力:

两套问题看起来都在推进,推进的却不是同一件事:一种在处理问题。另一种在管理不安。

思考到这里,我逐渐弄明白自己为什么对于组织上这种行为感到不满甚至愤怒,因为这种行为会把组织带进一个微妙的奖励机制:高频同步天然奖励“可展示”的动作,惩罚“不可见”的判断。久了以后,团队会越来越擅长把工作“翻译”成进度,而不是把不确定“翻译”成边界。于是我们会得到一种很像效率的东西:更忙、更密、更快、更可见,但不一定更接近正确。

不是所有团队都会一样早地撞墙,我在的 Linux OS 安全这类团队会更早,因为它面对的不只是“能不能做出来”,而是“做出来以后它拿到什么能力,会造成什么后果”。

在应用层,原型先跑起来,可能确实能帮助试错,粗糙一点也未必马上变成系统性事故。但在 OS 安全领域,demo 的逻辑不能直接平移到真实系统:这里牵扯的是系统边界、执行权限、错误半径、回滚成本、审计责任。你可以接受它先不够聪明,很难接受它先拿到了不该拿的能力。

安全领域真正昂贵的部分往往不是“让它动起来”,而是回答这些不 glamorous 的问题:它该不该有这类能力?它在哪个范围里运行?它能访问什么?它犯错之后谁来兜底?回滚路径在哪里?

这些很难写成高光句子,但在复杂系统里,它们才是成本结构。比较遗憾的是,面对深陷 FOMO 状态的管理层,这个层面的考虑和取舍已经被“隧道视觉效应”彻底掩盖了。

在跟团队的交流后,我意识到很多人反感的也未必是日报本身。更像是一种工作状态:持续被打断、持续被追问、持续被要求证明价值。你不只要做事,还要不断产出“我正在做事”的证据。

这种状态最糟的不是占用了多少时间,而是注意力结构 —— 深度判断依赖连续性,很多关键决定不是在一次同步里蹦出来的,而是在一段相对完整的思考时间里慢慢长出来的。

当思考被切碎,人会从“解决问题”滑向“组织表达”。你开始优先做能立刻展示的事,而不是那些真正重要但暂时不可见的事。

于我个人而言,这里还多了一些代价,它会“进入”我的身体,这不是简单一次猛冲的疲惫,而是长期处在警觉里的透支。

客观的看,管理层的焦虑并非无缘无故,外部变化确实在加速,预期在被改写。日报、高频同步也不是天然无效,在事故处理中、任务定义足够明确、多个团队必须紧密协作的时候,它们甚至是必要的。

我反对的是另一种场景:问题还没收敛,边界还没划清,却已经按“高确定执行阶段”的节奏去管理它。那不是执行力,是用密度替代判断。

技术团队也不能躲在复杂性后面,指出边界不等于拒绝行动,说清风险也不等于保守。成熟的做法不是慢,而是更快地区分:什么可以先做,什么必须先想清楚,什么绝不能越线。

聚焦不是窄化,而是在保留全局感知的前提下收束资源;窄化是在压力驱动下丢掉周边信息,只剩一个越来越亮、也越来越危险的局部目标。

如果一定要说 AI 时代什么值得被加速,我更倾向于几件不那么耀眼、但更便宜的理性:

速度本身不是能力,正确地分配速度,才是。

AI 当然会继续改写产品、流程、岗位,这事大概率挡不住。但在真正的“重构、变革”发生之前,很多组织会先经历另一件事:AI 像一个放大器,把组织原本的习惯和本能先放大出来。

一个组织面对 AI 时,最先被放大的到底是什么:

如果一种变化最先带来的,不是更好的判断,而是更快的自证——那它重构、变革的,真的是组织能力吗?更加可悲的是,很多管理者反而沾沾自喜地感到“兴奋”。

2026-03-08 22:10:43

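In this post I want to write about a small thought game I played with myself a couple of days ago. The Chinese saying goes "in the left ear, out the right ear" (左耳进右耳出, the equivalent of "in one ear and out the other"). Why is it specifically "left in, right out" rather than "right in, left out"?

I used not to bother with this kind of seemingly pointless question, but since ChatGPT arrived I turn into a curious kid every now and then.

Here is the answer ChatGPT gave:

When we describe someone who won't take advice, we say without thinking: "In the left ear, out the right." The phrase rolls off the tongue as smoothly as wind passing through an empty hall.

But have you ever wondered why it is "left in, right out" and not "right in, left out"?

If you think this is just a saying with no logic behind it, or, at the other extreme, you hope to find some unfathomable mystical mechanism in it, I have to challenge your assumption first. Don't look at traditional culture through a filter of novelty-seeking or over-sanctification. Let's peel off that outer layer and see how, behind these five characters, Chinese people built their underlying cognitive logic.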

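Within the coordinate system of traditional Chinese culture, "left to right" is far from random. It is tightly supported by four lines of reasoning: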

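- The "anchoring effect" of the linguistic subconscious: Chinese has an iron rule that left always comes before right. Think of common expressions: 左右 (left and right), 左顾右盼 (glancing left and right), 左思右想 (turning something over in one's mind). When coining paired opposites, the ancients habitually used "left" as the anchor for the opening move. "In the left ear" follows muscle memory and linguistic instinct formed over centuries; forcing it into "in the right ear, out the left" would feel markedly clumsy in sound and rhythm.

- The "life and death cycle" of Chinese medicine and qi movement: The ancients understood the workings of the universe through yin-yang and the five phases. In Chinese medicine and Daoist inner alchemy, the core rule of qi movement is "left ascends, right descends." Left is yang, the east, and wood, standing for beginnings, input, and vitality; right is yin, the west, and metal, standing for decline, excretion, and austerity. Sound (information) from outside enters on the left (the gate of life), which fits "input," and leaves on the right (the gate of death), which fits "output." From left to right, it completes a useless loop in which nothing is retained by the "central palace" (the heart).

- A metaphor for hierarchy: Although history went back and forth on whether left or right was the honored side, in most periods strongly shaped by ritual propriety, "left" was the seat of honor. When someone speaks to you, the message enters through the honored side: on the surface you give them face and strike an "I'm listening" pose. But it finally leaves through the humble side, meaning the words are devalued and discarded. This captures with great precision the perfunctory attitude of "sincere in manner, firmly unchanged in practice."

- Spatial orientation: For ancient China's centers of power and for architectural feng shui, the core viewpoint was always "seated in the north, facing south." Standing facing south, your left is east and your right is west. Left (east) is where the sun rises, standing for the beginning of all things, the first stirring of yang, and the "entry" and origin of information. Right (west) is where the sun sets, standing for the end of the day, the sinking of yin, and the "demise" and expulsion of things. In the ancient subconscious, "in the left ear, out the right" was like the sun rising in the east and setting in the west: a complete, swift, irreversible slide.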

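That is where the Q&A with the AI and the cultural detective work ends.

This little thought game sent my attitude toward AI through some ups and downs:

In the past, faced with a murky question that cut across the humanities, history, and psychology, I would doubt myself: this is not my field, I lack a rigorous chain of reasoning and a way to carry it out, so thinking about it is pointless. I would then conclude that the question was too remote, too niche, too much like idle quibbling, and that even if I thought about it I would most likely find no decent answer.

The arrival of Gemini, ChatGPT, and the like brought the friction of cross-disciplinary exploration down to zero. They gave me an all-purpose "knowledge assistant" that extended my abilities and the boundaries of my thinking, and for a moment I felt frighteningly capable. Many questions used to go unasked, and I did not dare ask them, not because they were unimportant but because:

That has now changed. Many questions that would once have been suppressed have become things I can casually probe, open up at once, and follow up on cheaply, back and forth. This change makes me feel I have become more capable, and I have to admit:

Over the weekend, when I told a family member about my exploration of the "left ear in, right ear out" question, she nodded, said mildly, "Oh, so that's how it is," and that was the end of it.

It suddenly dawned on me: AI really is like an extremely powerful external brain that lets me dare to think and to ask, but I have only gone from being "someone who doesn't know the answer" to being "a high-level API caller." AI can hand me a god's-eye view in an instant, let me see through to the essence of a complex phenomenon in seconds, and give me the intense thrill of "I get it" and "I've had an epiphany."

In the past, whoever held the answers held the authority to speak. In the age of AI, answers have become the cheapest of manufactured goods, and what AI amplifies is my ability to ask questions. That dopamine rush made me overlook a key point: my ability to ask has been amplified, but if my ability to tell "discovery" from "filling in the blanks" is not upgraded in step, my thinking becomes more active and also more prone to distortion. Worse, it greatly weakens the drive to grind things out and land them in the real world. With AI you would never, like a student publishing a paper, start from a literature review and cite widely to argue for the cultural origins of "left ear in, right ear out." Yet the way I think today, and my technical ability, both come from that grueling professional training in my student years.

Now that I am keenly aware of the power of this "cognitive leverage," I might as well pull my gaze back from pure thought experiments to reality: from now on, once an AI answer satisfies me, I will follow up with one more question, "So what?"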

2026-03-01 18:11:30

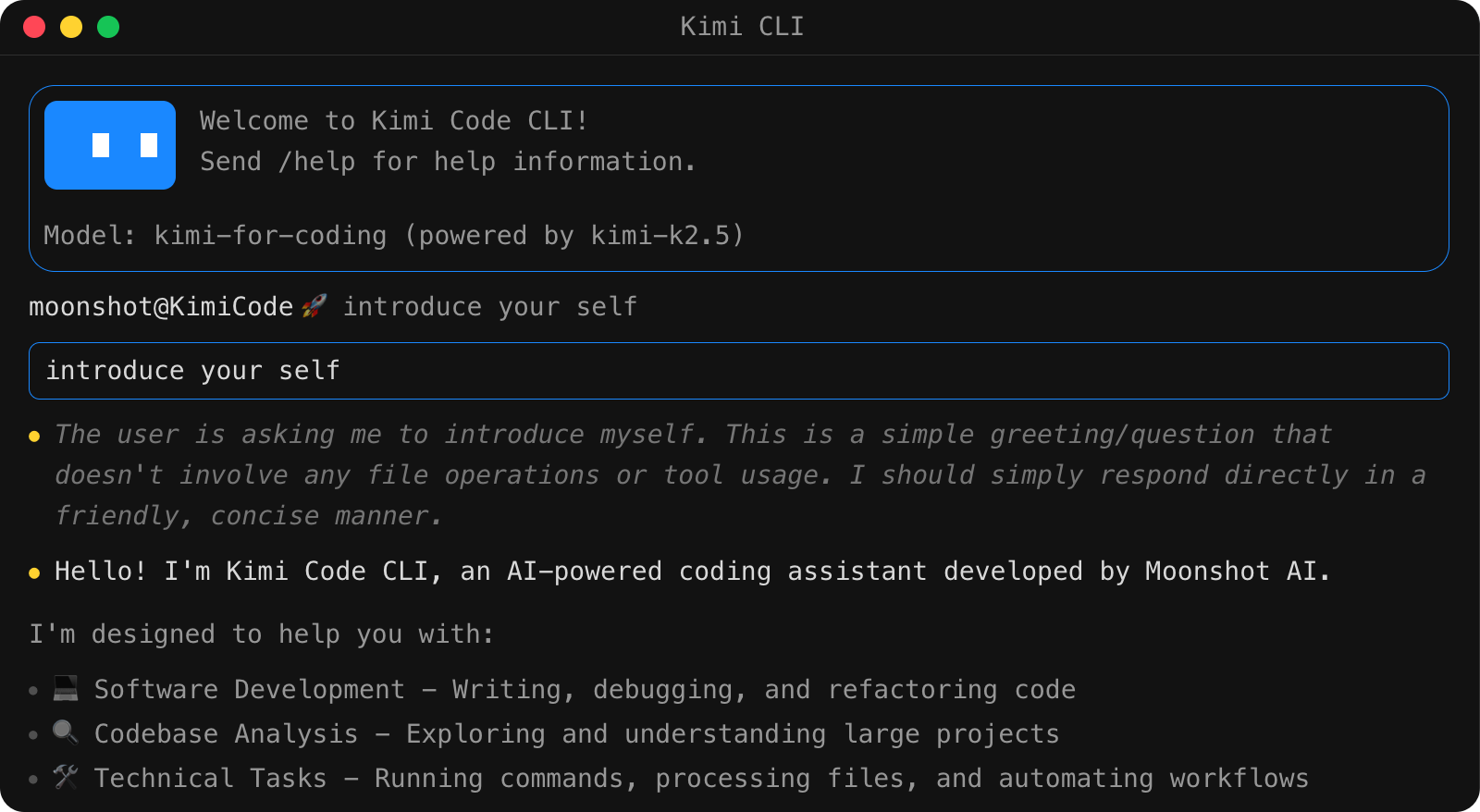

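Much of the discussion around coding agents is about "will it generate code as asked." Once models' coding ability converges, a coding agent's ceiling is often not set by the model's generation ability alone, but by the system's process control, boundary definition, and collaboration protocol. Of course it matters that the model can write code. But when a task becomes multi-step, needs tool calls, may touch risky operations, and has to explain its process to a human, the gap is no longer only a gap in model capability; it is a gap in system design.

While taking kimi-cli apart recently, I found a concrete entry point: on the surface it is a command-line tool, but inside it looks more like a small collaboration system. You type a task into the command line, and on the surface it is just "press Enter and it starts running." Inside the system, things are not that simple: the interface has to keep updating, the agent has to advance step by step, tool calls have to fire at the right moment, and some operations pause to wait for your confirmation. The user sees a single output stream, but the system is dealing with something else: it is handling pacing, state, boundaries, and human-machine collaboration all at once. That is why many gaps between coding agents that look like "how nice it is to use" are really gaps in "how well the process is designed."

I want to treat kimi-cli as a specimen and use it to answer a question with more long-term value: when you take a coding agent apart, what should you look at first to see its strengths, its boundaries, and what is worth borrowing? My first observation map covers four layers: how it "gets running," how it "controls risk," how it "collaborates with people," and how it "evolves over time." The rest of the series will follow these four threads. The goal is not to walk through all the source code, only to make the design trade-offs clear.

Many people picture a coding agent as "the model replies once -> a tool is called once -> the result is printed," like a slightly smarter script. A more realistic scenario quickly breaks that picture: a task may need to read files, then search directories, then edit code, then run commands, then read the errors, then roll back, then retry. In other words, it is not answering a question; it is advancing a process. The first signal kimi-cli gave me is that internally it treats this as a systems problem of "advancing in a loop," not a packaging problem around a "single-turn conversation." You will see structural clues such as run_soul(), _agent_loop(), and _step(). This is not just code organization; it reflects the kimi-cli designers' judgment about the nature of the task: the core of a coding agent is not "being able to talk" but "being able to make progress." Rather than pouring enormous effort into getting the model to do everything right in one step, it is better to design a robust loop that gives the model room to "make mistakes and correct itself."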
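
The "advance in a loop" idea can be sketched in a few lines of Python. This is a minimal sketch and not kimi-cli's actual code: `agent_loop`, `step`, the `Action` type, the scripted stand-in model, and the `read_file` tool are all hypothetical names for illustration. Only the general shape comes from the description above: a step function is called repeatedly, tool errors are fed back to the model, and the loop ends when the task is done or a step budget runs out.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Action:
    """What the (hypothetical) model wants to do next."""
    tool: Optional[str]      # name of a tool to call, or None when finished
    argument: str = ""       # single string argument, kept simple on purpose
    final_answer: str = ""   # filled in when tool is None


def step(model: Callable[[list], Action], tools: dict, history: list) -> Optional[str]:
    """Run one step: ask the model, run a tool if requested, record the outcome."""
    action = model(history)
    if action.tool is None:
        return action.final_answer
    try:
        result = tools[action.tool](action.argument)
    except Exception as exc:  # errors are fed back so the model can self-correct
        result = f"error: {exc}"
    history.append((action.tool, action.argument, result))
    return None


def agent_loop(model, tools, max_steps: int = 8) -> str:
    """Advance the task step by step until the model finishes or the budget ends."""
    history: list = []
    for _ in range(max_steps):
        answer = step(model, tools, history)
        if answer is not None:
            return answer
    return "stopped: step budget exhausted"


def scripted_model(history: list) -> Action:
    """Stand-in for a real model: fails once, sees the error, then corrects itself."""
    if not history:
        return Action(tool="read_file", argument="missing.txt")
    if history[-1][2].startswith("error"):
        return Action(tool="read_file", argument="main.py")
    return Action(tool=None, final_answer=f"read {len(history[-1][2])} characters")


FILES = {"main.py": "print('hi')"}
TOOLS = {"read_file": lambda path: FILES[path]}
```

Calling `agent_loop(scripted_model, TOOLS)` takes three steps: a failed read, a corrected read, and a final answer. The failed first step is not fatal because the error text goes back into the history, which is the "room to make mistakes" the loop is meant to provide.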

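Agent risk control is often handled crudely: either "execute everything automatically," chasing the thrill, or "confirm every step," chasing a sense of safety. The hard part is the middle ground: which operations should pass silently, which must prompt the user, which confirmations can be granted in bulk for a session, and which must be approved one by one. The design here is not a "dialog detail"; it is a product stance. Once you let the model reach tools, commands, and the file system, risk is no longer abstract. It becomes a question of user trust. The approval-related handling in kimi-cli (including intermediate states such as approve, reject, and session-level approval) interests me, not because "it asks for confirmation," but because it acknowledges something: efficiency and boundaries are not an either-or choice; they need to be designed as a continuous, adjustable mechanism (I will take this apart in a separate post later).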
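
One way to picture that middle ground is a small approval gate with more than two answers. The sketch below is an assumption-laden illustration, not kimi-cli's approval code: `Decision`, `ApprovalGate`, and the action names are invented here. It covers the four cases named above: silent pass, ask once, approve for the rest of the session, and always ask.

```python
from enum import Enum
from typing import Callable


class Decision(Enum):
    APPROVE = "approve"                # allow this one call
    APPROVE_FOR_SESSION = "session"    # allow this kind of action until the session ends
    REJECT = "reject"                  # refuse this call


class ApprovalGate:
    """Decides whether an action may run, asking the user only when needed."""

    def __init__(self, ask: Callable[[str, str], Decision], always_ask=(), auto_allow=()):
        self._ask = ask
        self._auto_allow = set(auto_allow)    # low-risk actions that pass silently
        self._always_ask = set(always_ask)    # actions that must be confirmed every time
        self._session_allowed: set = set()

    def allows(self, action: str, detail: str) -> bool:
        if action in self._auto_allow or action in self._session_allowed:
            return True
        decision = self._ask(action, detail)
        if decision is Decision.REJECT:
            return False
        # Session-level approval is remembered, except for always-ask actions.
        if decision is Decision.APPROVE_FOR_SESSION and action not in self._always_ask:
            self._session_allowed.add(action)
        return True
```

The point of the sketch is that the policy lives in data (`auto_allow`, `always_ask`, the session set) rather than in scattered prompts, so the trade-off between speed and caution can be tuned without touching the loop that calls it.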

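Collaboration with people is, I think, what many agent products underestimate. We often treat the UI/TUI as a "skin," but for a coding agent the UI is part of the system's capability. Users do not only need results; they need a minimum understanding of the process: what it is doing now, why it stopped, what it needs from me next, and what exactly that last step changed. Some of kimi-cli's design made me realize that it is not simply printing internal logs; it is seriously handling the translation between "what happened inside the system" and "what a person should see." A message-channel design such as Wire (including a rawer stream and a stream better suited to display) reflects a very pragmatic judgment: if the system cannot express its process clearly, people will find it hard to keep trusting it. Much of what we take for "the UI isn't smart," traced to its root, is a collaboration protocol that was not designed well. That is not a matter of looks; it is expectation management.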
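
The difference between a raw stream and a display stream can be made concrete with a toy channel. This sketch borrows only the name Wire from the text; the `Event` kinds, the `send` and `display` methods, and the merging rules are assumptions made for illustration and say nothing about how kimi-cli's Wire is actually implemented.

```python
from dataclasses import dataclass, field


@dataclass
class Event:
    kind: str      # e.g. "text", "tool_call", "debug" (kinds are illustrative)
    payload: str


@dataclass
class Wire:
    """Keeps every event in a raw stream and derives a display stream from it."""
    raw: list = field(default_factory=list)

    def send(self, event: Event) -> None:
        self.raw.append(event)

    def display(self) -> list:
        """Return what a person should see: no debug noise, text chunks merged."""
        lines: list = []
        last_kind = None
        for event in self.raw:
            if event.kind == "debug":
                continue
            if event.kind == "text" and last_kind == "text":
                lines[-1] += event.payload  # merge streamed chunks into one line
            elif event.kind == "tool_call":
                lines.append(f"[running] {event.payload}")
            else:
                lines.append(event.payload)
            last_kind = event.kind
        return lines
```

The raw list stays complete for debugging and replay, while `display()` is free to hide, merge, and relabel. Keeping both is what lets the "translation" be changed later without losing information.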

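Once an agent starts growing features, complexity rises quickly: model providers change, tools multiply, configuration becomes layered, entry points expand from CLI to Web, and users' expectations about behavioral boundaries diverge. At that point, whether a project is worth studying depends not only on whether it works today but on whether it leaves room for the future. Some of kimi-cli's arrangements in its abstraction layers, configuration, and extension paths (such as the model abstraction, Agent Spec, the tool integration path, and the approach to session processes for a Web UI) give me the impression that it is not just trying to be "a command-line assistant that runs," but is seriously trying to make "agent as a system" real. Not every choice will suit every team, but this awareness of "leaving interfaces for evolution" is itself worth a look.

I want to write a series taking kimi-cli apart, not because I want to prove some project is "ahead," and not because I want to teach a source-code walkthrough, but because I increasingly feel that, for programmers, a discussion of coding agents that stops at "demo results" and "feature screenshots" easily misses what is truly reusable. What we can really take away is often not a particular prompt or skill trick, but how it handles process, how it draws boundaries, how it organizes collaboration, and how it leaves room for the future. These things are not "thrilling," but they decide whether an agent can grow from a demo into a tool, and from a tool into a system.

To reach that goal, I have set myself the following requirements:

The coming kimi-cli posts will follow four threads:

I am taking kimi-cli apart not to map out the source code, but to use it as a mirror and see the process design of coding agents a little more clearly. The model's brilliance often outshines the support the agent provides behind it, and for a builder the latter is usually what is worth learning.

If the feedback is good, I will follow up with some lightweight "design stance" comparisons against other projects: not to see who is stronger, but to see who is paying for which kind of problem.

Consider the series started; stay tuned for the follow-up posts.

Finally, here is an overall architecture diagram of the kimi-cli project: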

2026-02-23 17:00:30

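Over the Spring Festival break I added a capability to my memos system that I had long wanted: sharing a memo as a card image that can be passed around. It looks like "taking a screenshot," but once I actually built it I found that it is not really an image-processing problem; it is a system-design problem.

What I learned can be put in one sentence: I am not "drawing" an image on the backend; I am keeping a controllable browser on the backend and having it press the shutter at the right moment.

From a requirements point of view, the most direct approach is of course to put the image-generation logic into the main backend, but in the end I did not. The reason is not that it "can't be done," but that responsibilities would get muddled. The memos backend is good at managing memo data, permissions, and APIs, whereas "rendering a complex page into an image reliably" is by nature a browser-runtime problem:

Re-implementing a template engine and layout logic on the backend to handle these issues would amount to writing the frontend a second time, and maintenance costs would quickly get out of hand. So I ended up building an internal service, memos-images-rendering, that does exactly one thing:

The main backend handles authentication and issues the command; the rendering service executes it. The boundary becomes clear at once.

An important premise: I should point out that I have an idle Alibaba Cloud ECS instance that costs 99 yuan a year, which can host the memos image rendering service. Provisioning a VPS/ECS just for this service would be a bit like "making a whole meal of dumplings just to use up some vinegar."

If all you need is an image, many people's first thought is Canvas, SVG, or even server-side template compositing. The previous version of share-memo-as-image was implemented with html2canvas, but the image rendered inconsistently across environments (iOS, macOS, Linux, and so on). All of these approaches can work, but I ultimately chose Playwright, for very practical reasons:

In other words, what I reuse is the "final rendered result," not a pile of intermediate data structures. This matters in engineering terms, because what users see is never a DTO or a Markdown AST; what they see is the final image.

The most underestimated point in a service like this: taking the screenshot is easy; timing it is hard. If you shoot too early, the usual problems show up immediately:

Many people use networkidle, but production pages often have long-lived connections, analytics beacons, and async requests, so that signal is unreliable. In this service I use a more "business-level" convention instead: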

window.__MEMO_SHARE_READY__ = true

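This design works a bit like a handshake protocol between frontend and backend. The browser knows "the DOM is there," but only the business page itself knows "this card is ready to be photographed." This is the most important lesson from this implementation: the rendering service should not guess the page's state; it should agree on that state with the page.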
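
On the service side, the handshake comes down to "wait for the flag, then shoot." The sketch below is written in Python against a Playwright-style page object purely for illustration; the post does not show the service's code, and its use of sharp suggests the real service runs on Node. The function name `capture_when_ready` and the default timeout are assumptions. `goto`, `wait_for_function`, and `screenshot` are the Playwright sync-API calls the page object is expected to provide.

```python
READY_EXPRESSION = "window.__MEMO_SHARE_READY__ === true"


def capture_when_ready(page, url: str, timeout_ms: int = 15000) -> bytes:
    """Open the share page, wait for the page's own ready flag, then screenshot.

    `page` is expected to behave like a Playwright Page (sync API): it must
    offer goto(), wait_for_function() and screenshot().
    """
    # Wait only for the DOM here; the ready flag, not network silence, gates the shot.
    page.goto(url, wait_until="domcontentloaded")
    page.wait_for_function(READY_EXPRESSION, timeout=timeout_ms)
    return page.screenshot(type="png")
```

If the page never sets the flag, the wait raises a timeout. That is arguably the right failure mode: it is better to fail loudly than to return a half-rendered card.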

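To cover different sharing scenarios, I built two rendering modes:

fixed mode: when the size must be fixed, you get a fixed size

This mode screenshots the entire share canvas and then scales the result to the target width and height (for example, 2400x1350). It suits cases such as:

16:9)

The advantage is stability: the output size is fully controllable.

auto mode: crop intelligently around the card and keep a little "breathing room"

This mode crops automatically around .share-memo-card instead of rigidly capturing the full canvas. I handled a few details:

intersect with .share-memo-canvas to prevent going out of bounds

With this, the exported image does not look "pressed against the edge," and the card looks more like a real share card. Of course, automatic cropping is bound to have failure cases (for example, a missing selector or an abnormal layout), so I gave it a fallback: if auto crop fails, it automatically falls back to a canvas screenshot.

The thinking behind this is simple: looking good is a bonus; reliably returning a result is the baseline.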
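
The geometry of auto mode can be sketched as a pure function. This is a minimal sketch under assumptions: the `Rect` type, the function names, and the 48-pixel padding are invented here. Only the three behaviors are taken from the description above: pad the card for breathing room, intersect with the canvas so the crop cannot go out of bounds, and fall back to the whole canvas when the card cannot be used.

```python
from typing import NamedTuple, Optional


class Rect(NamedTuple):
    x: float
    y: float
    width: float
    height: float


def intersect(a: Rect, b: Rect) -> Optional[Rect]:
    """Return the overlap of two rectangles, or None if they do not overlap."""
    left, top = max(a.x, b.x), max(a.y, b.y)
    right = min(a.x + a.width, b.x + b.width)
    bottom = min(a.y + a.height, b.y + b.height)
    if right <= left or bottom <= top:
        return None
    return Rect(left, top, right - left, bottom - top)


def crop_region(canvas: Rect, card: Optional[Rect], padding: float = 48) -> Rect:
    """Pick the region to screenshot: padded card if possible, else the whole canvas."""
    if card is None or card.width <= 0 or card.height <= 0:
        return canvas  # selector missing or layout broken: fall back
    padded = Rect(card.x - padding, card.y - padding,
                  card.width + 2 * padding, card.height + 2 * padding)
    return intersect(padded, canvas) or canvas
```

In a real service, the two rectangles would come from the bounding boxes of `.share-memo-canvas` and `.share-memo-card`. Keeping the decision in a pure function makes the fallback path easy to test without a browser.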

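If a service like this is written to "launch a browser on every request," it will bog down very quickly. So I made several layers of very pragmatic optimization:

MAX_CONCURRENCY)

Together, these move the "browser startup cost" out of each request so that it is paid only once, when necessary. There is one more often-overlooked detail: sharpness. By default I render at a relatively high DPR and then use sharp for high-quality downscaling. This keeps text and thin lines in the final PNG crisp, avoiding the "readable, but a bit blurry once sent" result. In other words, I am not only after "generation succeeded"; I am after "it looks like a finished product once sent."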
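
Paying the startup cost once and capping parallel renders can be sketched with a lazily started shared browser and a semaphore. Everything below is illustrative: `RenderPool`, the `launch` and `job` callables, and the value `2` are assumptions. Only the setting name `MAX_CONCURRENCY` and the goal of not launching a browser per request come from the post.

```python
import asyncio

MAX_CONCURRENCY = 2  # illustrative value; the real setting is not given in the post


class RenderPool:
    """Shares one lazily started browser and caps how many renders run at once."""

    def __init__(self, launch, max_concurrency: int = MAX_CONCURRENCY):
        self._launch = launch                # async callable that starts a browser
        self._browser = None
        self._launch_lock = asyncio.Lock()
        self._slots = asyncio.Semaphore(max_concurrency)

    async def _get_browser(self):
        async with self._launch_lock:        # avoid launching twice under load
            if self._browser is None:
                self._browser = await self._launch()
            return self._browser

    async def render(self, job):
        """Run `job(browser)` once a slot is free and return its result."""
        async with self._slots:
            browser = await self._get_browser()
            return await job(browser)
```

Requests beyond the cap simply wait for a slot instead of spawning more browser work. A production version would also need to detect a crashed browser and relaunch it, which this sketch leaves out.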

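An image rendering service easily becomes a black box: a request comes in, you wait a few seconds, and it either works or blows up. To avoid this, I added several kinds of observability data (switchable per environment):

goto, wait ready, screenshot, crop, resize)

request id

console error / request failed logs (for troubleshooting frontend resource problems)

When a service like this breaks in production, the worst part is not the error itself but having "no context." Only when you can see how long each stage took can you tell whether the problem lies in the network, the page, fonts, images, or the browser process itself.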
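
Per-stage timing tied to a request id needs very little machinery. The following is a minimal sketch with invented names (`RenderTrace`, `stage`, `summary`), not the service's real logging. The stage names in the usage note mirror the ones listed above.

```python
import time
import uuid
from contextlib import contextmanager


class RenderTrace:
    """Collects per-stage timings for one render request."""

    def __init__(self, request_id=None, clock=time.perf_counter):
        self.request_id = request_id or uuid.uuid4().hex[:8]
        self._clock = clock
        self.stages = []  # list of (stage name, milliseconds)

    @contextmanager
    def stage(self, name: str):
        start = self._clock()
        try:
            yield
        finally:  # record the timing even when the stage raises
            self.stages.append((name, (self._clock() - start) * 1000))

    def summary(self) -> str:
        parts = " ".join(f"{name}={ms:.0f}ms" for name, ms in self.stages)
        return f"[{self.request_id}] {parts}"
```

A request handler would wrap each phase, for example `with trace.stage("goto"): ...` and `with trace.stage("wait_ready"): ...`, and log `trace.summary()` at the end. Because timings are recorded in `finally`, a stage that times out still shows up with its duration, which is usually the number you want when something hangs.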

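This service is internal-only; I did not design it as a public endpoint. In the full chain, authentication is the responsibility of the memos side:

That is, the rendering service makes no business permission decisions; it only performs the "take the photo" action. This is another very deliberate choice in this implementation: keep permissions in the permission system and rendering in the rendering system.

I used to file this kind of requirement under "media capabilities" or "image processing," but after building it I would rather call it "backend execution of frontend presentation." It is not reinventing the UI on the server; it turns the browser into a controlled runtime and the page into a verifiable rendering contract. Once you accept this view, many design decisions become natural: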

__MEMO_SHARE_READY__

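auto and fixed dual modes

These are not "optimizations"; they are prerequisites for the system running stably over the long term.

The current version reliably supports share-image generation, but there are still some directions worth polishing:

If you are building a similar "web page to image" service, my advice is not to rush into a complex architecture; get these three things right first:

The remaining extensions will then be much easier.

Summing up this exercise, it gave me a new view of Vibe Coding. For someone with a Linux OS security background, frontend and browsers had always been outside my skill set, but Vibe Coding extended my skill tree. So don't bet your core value on filling gaps in model capability; strengthen your ability to orchestrate complex systems instead.

Orchestrating complex systems means dealing with data silos, organizational resistance, permissions, and the cost of habits. These are things even a much stronger model cannot absorb, because they are not intelligence problems; they are problems of people and organizations.

But "orchestration ability" cannot be separated from domain expertise. Models lower the barrier to general knowledge, but in fields such as medicine, law, and finance, real professional judgment is not flattened.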

2026-02-14 18:50:53

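It all started with the Obsidian CLI project that Dotey shared on X.

Watching that plain-text cursor blink against a black background, a long-absent geek aesthetic welled up in me. It was not just a tool release; it felt like a signal: "headless" is becoming the default configuration of the agent era.

This trend sharpened a choice that has long troubled me: in the future of AI-assisted programming, should we move toward the pure command-line efficiency of Claude Code, or stick with a UI-heavy integrated environment like Cursor?

In principle, as a Linux security engineer, my muscle memory belongs to the terminal and to compositions of one-line commands. By Occam's razor I should also embrace the CLI: it is lighter, faster, and friendlier to agents.

But when my fingers were actually hovering over the keyboard, about to fix a complex production bug, I had to admit a counterintuitive fact: my body honestly stayed in the UI.

This is not merely the inertia of habit; it is because what we are delivering is different in nature.

Teri Radichel has argued in detail why, from a security perspective, one should choose the CLI. Her reasons are hard-nosed:

This is typical engineer thinking: the pursuit of maximum execution efficiency and resource isolation. If your goal is to have an agent process tasks in bulk like an assembly-line worker, the CLI is indeed the most efficient channel.

However, when we face a production incident or a complex refactor, what we deliver is not just "code execution" but "change control."

If you treat AI merely as "a faster keyboard," the CLI wins. But if you treat AI as "an extremely diligent intern who occasionally hallucinates," the UI becomes an indispensable audit desk.

I value the UI not because I don't know the command line, but for the following three kinds of control: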

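A CLI diff is a stream; you have to rebuild the context in your head. A UI's file tree and side-by-side comparison are essentially a "scope radar."

In security patching, what I fear most is not that the AI cannot write the code, but that it "helpfully" changes a configuration it should not touch. The UI leaves such out-of-bounds behavior nowhere to hide visually: it is a physical defense against "unintended changes."

Feeding context to an AI in a terminal often means turning images into links and copy-pasting logs. That consumes working memory.

In a well-integrated UI, screenshots, issue links, and error snippets are part of the environment. You do not have to organize them; you only have to point at them. When your brainpower is not spent on "moving data around," it frees up bandwidth for "logical judgment."

You dare to let AI try boldly because you are holding the "undo key."

The UI turns Revert and Discard Changes into very low-cost buttons. In the terminal, a rollback usually means typing another string of commands. This difference in operating cost shapes your decision-making psychology in the face of uncertainty: walking on thin ice, or hypothesizing boldly.

Attackers have never stopped looking for holes in development environments. Whether it is the LastPass breach or DEV#POPPER's social-engineering attacks on developers, they remind us that the developer's terminal is itself a high-value attack surface.

That is exactly why security engineers should know when to get "under the hood" and when to sit "in the driver's seat."

As AI gradually takes over the heavy labor under the hood, the value of a human engineer may no longer lie in knowing more command details than the AI, but in being the driver: hands on the wheel, watching every abnormal flicker on the dashboard.

The question is not which tool is better, but which role you are in right now: are you the fuel that burns, or the driver who sets the direction?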