2026-05-27 03:56:01

If you go to your favorite social media site, you will find it full of posts that start to look suspiciously similar to each other.

Many of the comments to these posts are also generated by AI. So are an increasing number of academic papers and New York Times opinion articles, and, apparently, award-winning short stories. If you use AI a lot, you probably have noticed how much AI writing is around you (frequent AI users have historically done quite well identifying AI writing). If not, I promise you it is much more than you think.

It isn’t just the sameness of the AI writing, though that eventually gets to be tedious enough that I find myself skipping writing on even interesting topics if my internal “AI detector” goes off. It is also that badly prompted AI writing produces very little meaning per word, taking you in intellectual circles instead. We are trained to read well-crafted sentences and intellectual-sounding texts as the result of effortful human work and thus pay attention to these AI-written comments when we see them. But there is often no human meaning there: these posts are just meaning-shaped attention vampires that take mental effort to decode and give you no equivalent understanding in return.¹

But using AI for writing has a cost beyond turning off readers: it risks undermining the development of an important human skill. I am lucky enough to have been writing for decades, and I have developed my own style which I think shines through whether I am writing a book, a tweet, or a blog post. That style took a lot of super annoying work to get to: good teachers and rewrites and mean online comments all contributed. If the AI does fine writing, I could skip all of that, but I would have done so at the cost of giving up something that has turned out to be very important to my career and my happiness.

This is not a condemnation of using AI to help with writing in any way. I think AI can be a fantastic tool for good writers (I have AI check all of my writing and roleplay different reader perspectives to see if I missed something important). For those who struggle with communication, AI can help get their ideas across better, and writing may not be thinking for everyone. Plus, a little bit of effort can make AI writing less cliche, more personal, and more worth using (in moderation). So, this is instead a condemnation of using AI as a default, or, even worse, without thinking at all. Balancing using AI with our own mental abilities is going to be a defining challenge of the coming years.

The clearest place to see this is in education, where two papers with an overlapping research team (including peers at Wharton) do a good job illustrating the difference between using AI to shortcut thinking and to help thinking. The first paper was an experiment at a high school in Turkey with about a thousand students learning math. One group used plain ChatGPT, the other had no AI access. The students with ChatGPT did their homework better and thought they were learning more, but at test time, they underperformed their classmates without ChatGPT. That is because the AI, designed to be a helpful assistant, was really just giving them answers, and actual learning requires mental effort. By short-circuiting effort, you short-circuit learning. That is why the initial results of AI on learning in classrooms can be so worrying.

Yet we can see a different result in a second paper from many of the same authors when they ran a five-month Python course across ten high schools in Taipei with close to a thousand students. Students who were given a personalized sequence of problems by an AI tutor scored 0.15 standard deviations higher on a final exam taken without AI help. By some estimates, that’s the equivalent of six to nine months of additional schooling, without any added instruction time or teacher workload. Instead, the AI helped tailor the learning to the student. This fits other work on AI tutoring, suggesting that customized tutors can significantly boost learning when used properly.

This is a relatively small difference in how you use AI and yet it leads to big outcome differences. Worse, human nature leads us to make the wrong choices. Learning requires us to face our own ignorance and do hard intellectual work, and these things are really uncomfortable. That is why students rate entertaining lectures as more educational than doing hard problems in class, even though they actually learn more from the hard work. To benefit from AI in learning, you need to pivot from using AI to solve problems to using it to push you to solve problems yourself.

Fortunately, the three major AI companies have tools that provide at least some support for learning by making the AI act more like a tutor. Unfortunately, they are not intuitive to access. Gemini is the easiest. Hit plus and pick Guided Learning. For ChatGPT, you need to type “/learn” into the chatbox. For Claude, you need to hit the plus, select use style, and select “learning” (Anthropic has announced that this approach is changing but has not yet documented the change). In all cases, you should use a thinking or advanced model where possible, especially for STEM subjects. And these modes will only help support someone who wants to learn; they won’t stop you from cheating if you want to.

AI need not undermine your ability to think, but it can do so if used badly, and badly is often the default. My colleagues at Wharton call this “cognitive surrender,” and they documented how people would stop thinking about problems and just let the AI do the work, even when the AI was wrong. I think part of the problem is the way these tools are designed.

When AI systems required elaborate back-and-forth conversations and made errors frequently, humans had to be engaged at every step. Agentic systems are designed to make your life easier, because they just do stuff. Which is great for getting stuff done, bad for learning anything, or staying authentic, or avoiding cognitive surrender. If you put in a hard request and get an answer, it is tempting to just go with the AI’s response.

In our recently published paper with Fabrizio Dell’Acqua and my colleagues at Harvard, MIT, the University of Warwick, BCG, and elsewhere (which I wrote about here three years ago, but publishing academic work takes a while!) we ran an experiment on 758 consultants at Boston Consulting Group, half of whom got access to GPT-4. Consultants using AI vastly outperformed those without. But we also asked consultants to solve a problem that we knew the AI would fail at. Consultants using AI on this task were significantly less likely to get the right answer than consultants without it. The AI gave them an authoritative-looking answer that happened to be incorrect, and most of them, the same elite consultants who outperformed on everything else, did not catch it. Of course, now AI just solves that problem, so the issue isn’t really error rates now, it is failing to learn how to be a good consultant by giving in to the same impulse to surrender.

Again, this does not have to be the default. In a small study conducted by Anthropic, programmers used AI to help them do a new task. Those who just let the AI do the work couldn’t answer questions about what they had done, a sign of surrender. But people who asked the AI to explain what it was doing, or those who used AI to help them with only some of the work, seemed to avoid that fate.

Some of the solution might be in the tools themselves, but that is limited. A version of ChatGPT that asked, before every answer, “would you rather I push you to think through this, or just give it to you?” or told you “I think this would be more authentic if you wrote this” would be insufferable most of the time. But there are places where we absolutely need these reminders. The Taipei result hints at one direction, namely system-level constraints rather than user-level willpower, but we don’t see much of that in the consumer products, and the commercial pressure mostly pushes in the opposite direction.

A lot of the problem is going to come down to us. To be clear, I am cool with a lot of cognitive surrender. I don’t remember phone numbers anymore because my phone does that for me. I am happy my kids didn’t need to learn cursive. I am fine with calculators doing my daily math and my computer figuring out how to schedule my classes. These were once useful skills, but we were probably right to get rid of them.

AI is different because the technology is general enough that virtually any cognitive task can be offloaded into it to some degree. I don’t want to be too precious about writing: there is no principle that says a polished email draft has to come out of a human mind any more than a column of arithmetic has to. But we don’t want to give up everything, and we mostly don’t know yet, for any specific task, what is important and what is not. Deciding that is going to be a real challenge.

The point isn't to avoid AI but to be intentional about it by making a conscious choice about AI use, rather than reflexive dependence or reflexive avoidance. More broadly, we are at the point where the defaults are being set for what kind of work to give AI: by the AI companies designing for frictionless use, by employers deciding what counts as “using AI well,” and by people teaching the ever-shifting concept of “AI literacy.” A lot of this is happening without, ironically, any real planning or consideration. And I suspect it will be hard to reverse these defaults once a generation of workers and students has built habits around them. The most important thing we can do is keep asking what to hand over and what to keep for ourselves… and not expect anyone, including the AI, to answer that for us.

¹ This is especially true of fiction writing, where AI is notoriously weak while seeming strong. ChatGPT in particular is fond of meaningless similes and metaphors (“the street was like a gap-toothed smile,” “he sat in a way that would make the trees jealous”) that can feel profound at first sight, but only because we assume difficult writing is purposeful and work hard to assign it meaning. Humans are very good at assigning meaning to meaningless material if we try hard enough.

2026-04-24 04:00:38

I had early access to GPT-5.5¹, and I think it is a big deal. It is a big deal because it indicates that we are not done with the rapid improvement in AI. It is also a big deal because it is just plain good. And it is a big deal because even with all of this, the frontier of AI ability remains jagged.

It is increasingly hard to quickly demonstrate each generational change as AI has gotten better, since a lot of the old things AI was bad at, like math or counting letters in words, are now trivial for AI to do. So, I will give you the complicated details, but first, a simple example that I think is a good illustration. What AI models are best at is coding, so I gave a coding challenge to AIs ranging from OpenAI’s first reasoning model, o3 (released a year and a week ago!) to the current best open weights model (Kimi K2.6) to the new GPT-5.5 Pro: “build me a procedurally generated 3D simulation showing the evolution of a harbor town from 3000 BCE to 3000 AD, it should look beautiful and allow me to have some control over it.”

Then I posted every answer to this gallery so you can experiment with them (actually, I had GPT-5.5 Codex build the gallery page for me). You should play with them to feel the difference, but you can see a few of these examples below. In addition to being better along all the other dimensions, only GPT-5.5 Pro actually modelled an evolving town, rather than just generating new building replacements over time. GPT-5.5 Pro is also much faster than its previous iteration: GPT-5.4 Pro took 33 minutes to complete the task, GPT-5.5 Pro took 20.

I have been encouraging you to think about AI not as a single thing, but as a set of three interlinked concepts. You need to consider models, like Opus 4.7, Gemini 3.1, or (now) GPT-5.5. You also want to pay attention to apps, which are the products you actually use to talk to a model, and which let models do real work for you. The most common app is the website for each of these models: chatgpt.com, claude.ai, gemini.google.com. But, increasingly, desktop applications like Claude Code, Claude Cowork, and OpenAI Codex are becoming the most useful apps for AI. Finally, there are harnesses, the tools that an AI can use and how the AI models are hooked up to these tools. Tools allow the AI to control your computer, write code, do research, and make images.

OpenAI has made advances in all three areas. On the model front, GPT-5.5 is a powerful family of models, with GPT-5.5 Pro (accessible only on the website) the most competent. There have also been major advances recently in apps, with OpenAI’s Codex increasingly following the path of the excellent Claude Code and making an accessible and useful desktop application. Finally, there are harnesses and the tools they can use. There have been a lot of new harness improvements, but one of the most interesting is from OpenAI, which has a new image model.

This new model can now render high-quality text and create almost any picture you can describe. Long-time readers know about my Otter Test, which asks the AI to make an image of an otter on a plane using wifi. Rather than describe it again, let’s let the new image model (sometimes called GPT-imagegen-2) explain it for me: “a photo of an otter scientist demonstrating the results of Ethan Mollick’s otter test, which shows how well an AI image maker can make images of an otter sitting on an airplane using wifi”

Maybe you want to see the academic paper about it? “Show me the first page of the academic paper on the Otter test, well-formatted, sitting on a desk” (feel free to zoom in on the text)

Or maybe we should just make it art? “now show an elaborate art gallery, every image on the walls is an otter on an airplane using a laptop, in the styles of Klimt and Rothko and Matisse and Monet and Picasso and Titian and Rembrandt and O’Keefe. There should be readable labels below each one.” (This is worth zooming in on)

All of this is very cool, and would have been impossible a few months ago, but it is useful as well. An image generator that can make detailed text and images can be used to make PowerPoint slides or product mockups or example websites or anything else you ask for. But this is just one tool, and the real magic happens when you combine harnesses, apps, and models on a real problem. Here's one I've been procrastinating about for a decade.

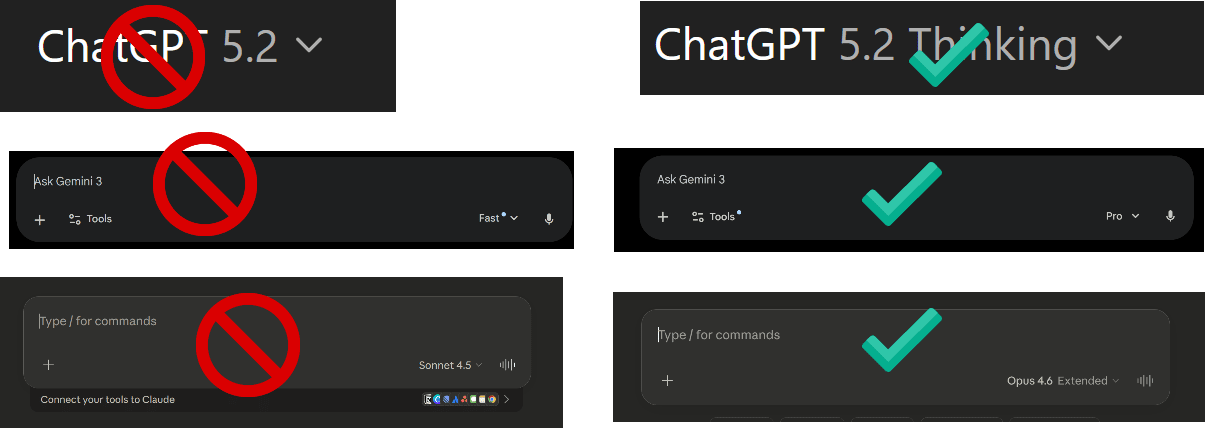

I am an academic, and a lot of my non-AI work, especially in the early 2010s, focused on crowdfunding. I have hundreds of anonymized data files on the topic that I have collected from surveys and analysis and research work, a mix of STATA, CSV, XLS and Word files that I never got around to writing a paper about. I wanted to see how far GPT-5.5 could get with this information. So, I used Codex powered by GPT-5.5 and asked: “Help me sort [the data] out and generate a new hypothesis that might be interesting and test it in sophisticated ways and write an academic paper.” I also asked it to include a literature review and formatting. The results were very impressive, especially after I asked GPT-5.5 Pro to comment on the paper and fed those results back into Codex. You can read the results here. It isn’t perfect, but that is no longer because there are obvious errors: the literature review is all real, as are the statistics. Instead, it is because, as an expert, I think the hypothesis is not that interesting and there are some standard concerns about causation, even though the AI used very sophisticated statistical methods to try and address them. In short, I would have been very happy if this paper was the outcome of a 2nd year PhD project. And I just gave it four prompts, without ever touching the text myself.

We can bring harnesses and apps and models together another way as well. I asked Codex to create an entirely new tabletop roleplaying game, basically its own version of Dungeons and Dragons in a fantasy world of its own invention, full of all of the tables and rules you need to play. I also asked it to simulate players experiencing the game and revise the rules based on what it found. As you can see, the AI complied, including laying out an attractive 101-page PDF and illustrating it using its image generator.

In addition to being technically neat, there is a lot to like about the actual content. The setting is interesting and novel, and the rules appear to make sense, drawing on existing game patterns while adding unique elements. However, a closer inspection also reveals the jagged frontier of AI ability is not entirely gone. Every generation of AI models has struggled with actually building long-form fiction. If you are a frequent reader of AI writing you see the same problems here: a love of the uncanny; overly complex ideas that do not fully pay off; weird metaphors (“weather and architecture are the same argument at different speeds”); too many ornate sentences (“the holy things that surface when a sea forgets it was once a road,” is cool once, an entire book of that is exhausting); dialogue where every character speaks in the same clipped tone; and the name “Mara.” So, even amongst all the amazing technical progress, there are still rough edges.

GPT-5.5 shows us that the models keep getting smarter, the apps keep getting more capable, and the harnesses keep getting better, making them ever more effective at solving real problems. I can get a near PhD-quality paper from four prompts or a playable roleplaying game, illustrated and “playtested,” from one. But the fiction is still flat and the hypotheses are sometimes uninteresting even when the statistics are sound. But still. A year ago, none of this was close, and, with the latest releases, capability gains appear to be accelerating.

GPT-5.5 is clearly not the end of this process, but it is a noteworthy step along the way. I have been writing this newsletter for over three years now, and the pattern has not changed: every few months a new model arrives. I run my tests and something that was impossible becomes easy, while the size of the leaps grows with each new release cycle. The jagged frontier is still there. It is just much further out than it used to be.

¹ I take no money from OpenAI or any other AI lab, and OpenAI has not seen this post in advance. Also, I don’t know all the details of the launch at the time I am writing this, so I apologize for any errors.

2026-04-01 06:34:37

AIs are already far more capable than most people realize. A large part of this so-called capability overhang comes not from the limits of AI (though, of course, they still have many limits), but from how people interact with it. The vast majority of people access AI through chatbots, and usually the free versions with less capable models. A chatbot is fine for a quick question, but it is a bad way to get real work done.

In fact, recent research suggests that we pay a mental tax when using chatbot interfaces for work. A new paper had a small group of financial professionals do a complex valuation task with GPT-4o¹ and measured their cognitive load from the transcripts, turn by turn. People did see a productivity gain from using AI, but some of that seemed to be offset by the fact that the AI presented information in a way that completely overwhelmed people: giant walls of text, offers to pursue new topics, and sprawling discussions. The chatbot interface appeared to be the obstacle, not the work. And once a conversation got messy, it stayed messy. The AI, optimized to be helpful, just mirrored back whatever disorganized structure the user provided while the user, overwhelmed, didn’t reorganize. Both sides kept compounding the problem. The people hurt most were less experienced workers, exactly the people who could benefit the most from AI… if they could keep track of what they were doing with it.

This shouldn’t be a surprise to you if you have used a chatbot to get things done. You ask a specific question and get five paragraphs that contain the answer (somewhere!) while the AI also offers three new things you didn’t ask about. The interface itself creates cognitive costs that overwhelm the benefits of the AI’s intelligence. So what does a better interface look like?

One option is to build specific interfaces for specific jobs or tasks. Of all the specialized AI interfaces, the only really complete ones are for programming. This is exactly what you would expect: the AI labs are staffed by programmers, the models are trained extensively on code, and the people building these tools are often building them for themselves.

I’ve written before about Claude Code, Anthropic’s coding agent that can work for hours autonomously. OpenAI’s Codex and Google’s Antigravity do similar things. I have used Claude Code for everything from making (a small amount of) money to making games, never touching any code at all. I find Codex incredibly useful as well, with a similar level of capability. These tools are terrific, but they are really built for programmers. They assume you know Python and Git. Their interfaces look like a 1980s computer lab. These powerful AI tools are not optimized for the 99% of knowledge workers who are not developers.

Of all the AI labs, Google seems to be experimenting the most with building specialized interfaces for other professions. All are a bit rough around the edges, but they show how the future might look when AI tools are built for other types of knowledge professionals. Google’s Stitch hints at what AI-native design could look like: an infinite canvas where you describe an app in natural language and get back multiple interconnected screens with consistent design systems. In a similar vein, Pomelli lets you paste your website URL and automatically generates on-brand social media campaigns, using the language of marketing, not prompting, to make this feel less technical. And, best known, NotebookLM provides a way of researching, displaying, and working with diverse information sources. Each of these shows where things might be heading, but none is yet the kind of transformative tool that Claude Code is for programmers. But there is another interface that has seen explosive growth, the personal agent.

If you haven’t heard of it, OpenClaw is an open-source AI agent, its symbol is a red lobster, it is a security nightmare, and it has become the fastest-growing open-source project in history. OpenClaw is so successful because it is a genuine personal agent. The system is designed so that you can talk to your AI agent through WhatsApp or Telegram or Slack, the same apps you use to text people. You tell it to check your email, book a table, find a file, and it goes and does those things on your computer. It solved the interface problem in a way that felt obvious in retrospect: instead of a chatbot or a command line, it let you talk to an AI in the way that you would a person, using interfaces, like WhatsApp, that are already very familiar.

OpenClaw, however, is hard to use and poses a lot of security risks. Anthropic’s answer is Claude Cowork with Dispatch. Cowork, which launched in January, is a version of Claude Code for knowledge workers. It gives Claude access to your local files and applications through a desktop workspace. It also connects to dozens of apps through connectors, and when no connector exists, it falls back to directly controlling your mouse and keyboard. Dispatch, which came in the last couple of weeks, adds the key piece: you can message Claude from your phone while it works on your desktop. You scan a QR code, and your phone becomes a remote control for an AI agent sitting at your computer.

Using a combination of Dispatch and Cowork creates an interface that feels like talking to a competent assistant. For example, I asked Claude from my phone to prepare a morning briefing, and it read from my calendars, emails, and online channels, then gave me a report on what I needed to do next. But Cowork also does more complex work. From my phone, I asked it to look at a recent presentation I made and see if the graph in Slide 3 was up-to-date, and, if not, to update it. You can see that it got slightly stuck at one place (a site blocked it from downloading a file), but, aside from that, the results were very impressive. It opened and “viewed” the PowerPoint and investigated my entire computer for more up-to-date data. When I gave it a link to a more updated online paper, it downloaded the PDF, located the newer graph, clipped out the image of the graph, and updated my PowerPoint for me. This is sophisticated and complicated work that, even if not always seamless, is usually close enough to save a lot of time.

Is this as flexible as OpenClaw? No. Cowork is sandboxed, safer but more limited (but that doesn’t mean there aren’t security risks). The connector ecosystem is growing but incomplete. And the idea that Cowork can use your computer is impressive as a concept and error-prone in practice. But the core insight is the same one OpenClaw stumbled onto. People don’t want a chatbot. They want an agent that works on their actual files, with their actual tools, accessible the way they talk to people.

All of this assumes that we need to decide our interfaces in advance. But the latest AI systems can actually build an interface for you. For example, over the past few weeks, Claude gained the ability to generate visualizations directly in the conversation. These aren’t static images. They’re interactive, adjustable, and Claude can modify them as you ask follow-up questions.

This is a different approach to the interface problem. Instead of having companies build a specialized interface for every kind of work, the AI generates the right interface on the fly. I suspect the future isn’t one interface to rule them all. It’s AI that generates the right interface for the moment: an agent on your desktop, a chart in a conversation, a custom app to solve a problem. We’re moving from adapting to the AI’s interface to the AI adapting its interface to you.

AI capability has been running ahead of AI accessibility. The models have been smart enough to do extraordinary things for a while now, but we’ve been making people access that intelligence through chatbots. And, as that cognitive load research shows, the chatbot format is actively working against them. As interfaces improve, we’re going to see what happens when a much larger number of people can actually use what AI is capable of. Every new interface that closes even part of that gap will feel like a leap in AI capability, even when the models haven’t changed (though they are still changing). My guess is that a lot of the “AI disappointment” people sometimes express comes not from the AI being bad, but from the interfaces being wrong. We built one of the most powerful technologies in recent history and then made people access it by typing into a chat window. That will change soon.

¹ It is always good to be cautious about papers that make claims based on older AI models, but, in this case, I doubt there has been much change between the now obsolete GPT-4o and GPT-5.4 or whatever, since they both show walls of text.

2026-03-12 22:10:07

In October of 2023, I wrote about the “Shape of the Shadow of the Thing,” speculating on the Thing that AI might turn into in the coming years. I think we can see the Thing much more clearly now, and some of the consequences that come with it. As I have been discussing in recent posts, we have entered a new phase of AI. After ChatGPT was introduced, human-AI work took the form of what I called co-intelligence, where humans would prompt AI back-and-forth to get help on tasks. Starting in late 2025, we entered a new era thanks to AI agents like Claude Code, OpenAI’s Codex, and OpenClaw. These are AI systems that you can just give work to, sometimes hours of human work, and get back reasonable and useful results in minutes. This is an era of managing AIs, rather than working with them.

This new approach to AI is the outcome of the rapid exponential improvement in AI abilities. That means you can’t understand where we are, and where we might be going, without understanding the increasing capability of AI.

Exponential improvements are hard to visualize, so rather than charts or graphs, I want to start with otters. If you have followed my writing on AI, you know about my Otter Test, where I challenge various AI image models to show a picture of an “otter on a plane using wifi.” As you can see below, the progress from 2022 (the year ChatGPT launched) to 2025 was rapid and remarkable.

So, what has happened in the time since that April 2025 image? With nearly perfect images, video has become the new frontier and has also seen exponential gains. To demonstrate, I gave the most advanced (and still unreleased in the US) AI video model from TikTok maker ByteDance the prompt: A documentary about how otters view Ethan Mollick's "Otter Test" which judges AIs by their ability to create images of otters sitting in planes. This is the very first result (definitely turn on your sound):

Aside from a single pronunciation mistake, this is pretty perfect, down to the fact that the otters are animated to have human-like expressions. Of course, video models are cool, but they are not necessarily indicative of what useful agentic AI can do. So, if we look at the benchmarks of AI ability, do we see the same exponential curve?

We certainly do in the most famous evaluation in AI today, the METR Long Tasks graph. It tries to measure AI progress by seeing how much human work an AI can complete autonomously with some measure of reliability. It has attracted its share of critics, and even METR has pointed out potential issues. But if you don’t like the METR graph, you will find most graphs of AI ability have that same curve.

As an example, I picked four hard and diverse AI tests and graphed progress over time in the image below. In the upper left are the scores on the Google-Proof Q&A benchmark, a test of knowledge where graduate students using Google only score 34% outside their field and 70% or so inside of it, but the best AIs now score 94%. Or look at GDPval, where industry experts judge AI versus experienced human performance on complex tasks, and where the latest AIs now reach or exceed parity with top-performing humans 82% of the time. The same pattern holds for Humanity’s Last Exam, a set of very hard problems written by college professors that require considerable expertise to answer. Or we can even use the ability of AI to solve puzzles (you can try the puzzles here, they are fun!). Each shows a similar rapid gain in ability with few signs of slowdown, at least until they reach the top possible score on the test.

Exponential graphs aside, it is important to recognize that all of these tests have their own flaws, and that AI remains jagged, capable of some tasks at a high level, while messing up others. Further, despite these amazing capabilities in tests, companies are still very early in adopting AI, meaning that, as of yet, remarkably little has changed in most organizations. But “most organizations” doesn’t mean every organization. We are already starting to see the first appearances of new approaches to organizing that take advantage of the new abilities of AI agents.

A few weeks ago, a three-person team at StrongDM, a security software company focusing on access control, announced they had built a Software Factory — a way of working with AI agents that relied entirely on the AI to write, test, and ship production software without human involvement. The process included two (quite radical) rules: “Code must not be written by humans” and “Code must not be reviewed by humans.” To power the factory, each human engineer is expected to spend amounts equivalent to their salary on AI tokens, at least $1,000 a day.

The basic idea of the Factory is that it takes future product roadmaps, written by humans, and turns those into products. Coding agents use those roadmaps to build software while testing agents try out the software in a simulated customer environment (which the testing agents build as needed). The sets of agents provide feedback to each other, looping back-and-forth until the results satisfy the AI. Then humans review the finished product and the results are shipped to customers without anyone ever touching, or even seeing, the underlying code.

There are obviously a lot of details here that make this approach work, and the StrongDM team has shared a lot of them publicly. They also invited in some smart outside observers to watch the Factory in operation and comment on what they saw, so you can read the accounts of Simon Willison and Dan Shapiro to get a better sense of the strengths and weaknesses of their approaches. In many ways, however, the particular details of the Software Factory matter less than the fact that such radical experimentation into how we work is now not only possible, but likely necessary. AI is good enough to change how organizations operate, and the experimentation is just getting started, even as models continue to improve.

Practical agents, jagged exponential improvement, and the ability to radically experiment with the nature of work combine to form a sort of rolling and unpredictable environment for AI advances. As AI capability crosses thresholds, it unlocks radical new use cases that change people’s views, sometimes overnight, about what AI can do. At the same time, organizations experimenting with AI will figure out how to make it work for them, leading to sudden announcements about new strategies or large-scale shifts in which kinds of employees companies value most. Plus, as AI continues to improve, more policymakers will become interested in AI governance, creating conflicts with AI companies.

This isn’t speculation because we saw this all happen in a single week. On February 22nd, a little-known financial firm, Citrini Research, published a fictional scenario about how AI adoption might destroy a number of established businesses by 2028. There were many elements in the piece that were clearly farfetched, but it struck a nerve on Wall Street, leading to major stock market price shifts. On February 26, financial services company Block announced 40% layoffs, implying this was due to AI. It is likely that the role of AI was greatly exaggerated, and AI was merely used as cover for large-scale layoffs. And then, to cap off the week, on February 27 a very public conflict occurred between the Pentagon and AI company Anthropic over who should be able to control the rules for how Claude could be used by the government.

In a lot of ways, each of those cases was not what it first appeared to be. The Citrini report was a fictional scenario, the Block layoffs were not about AI, and the conflict over AI at war revolved around a number of complicated issues that are still not completely clear. But I think that single week is a good illustration of what the near future will feel like. Sudden revelations about AI capability leading to rapid market reactions. Increasingly real impacts of AI on jobs (even if there is a lot of debate over whether those impacts will be good or bad in the short term). And increasing entanglement between AI companies and policymaking around the world. As the stakes go up, it is likely things will feel even more unstable.

It is possible, of course, that things settle down. Maybe AI improvement hits a wall, organizations absorb the changes gradually, and the rolling disruptions become more manageable as people learn what AI can and can’t do. History is full of technologies that were supposed to change everything overnight but instead took decades to fully reshape the economy.

But I wouldn’t bet on it.

One reason is that AI companies are telling us, fairly explicitly, what comes next: recursive self-improvement, or RSI. This is the idea that AI systems are increasingly being used to build better AI systems, creating a feedback loop that could accelerate the very curves I showed you above. At Davos in January, Anthropic’s Dario Amodei explained that if you make models that are good at coding and good at AI research, you can use them to build the next generation of models, speeding up the loop. He noted that engineers within Anthropic barely write code themselves anymore. When OpenAI released its latest Codex model in February, the company stated it was “our first model that was instrumental in creating itself.” And Google DeepMind’s Demis Hassabis acknowledged at the same Davos panel that closing the self-improvement loop is something all the major labs are actively working on, even as he warned there are still missing capabilities and real risks.

We don’t know how far this goes. RSI has been a theoretical concept for decades, and the labs may hit bottlenecks, whether in compute, in data, or in the sheer difficulty of AI research. We also don’t know whether LLM-based AIs will eventually hit a ceiling where they cannot get any better, or where the jagged frontier never smooths out. I don’t think we know anything for certain, but I also think we are past the point where recursive self-improvement is science fiction. Instead, it is an explicit item on the roadmap of every major AI company. If the loop does close, the exponential curves we’ve been watching would get steeper, with an uncertain endpoint.

So here is where we are today: the instability of that single week in February was a preview of what it feels like when the increasing ability of AI starts to interact with markets, jobs, and governments all at once. That feeling of uncertainty will likely only spread further. But uncertainty is not the same as helplessness. When a technology is this powerful and this unsettled, the choices that individuals and organizations make right now matter more. We can see the shape of the Thing now, but we can still influence the Thing itself, and what it means for all of us. We clearly don’t have rules or role models for how AI gets used at work, in schools, or in government. That’s a problem, but it also means that every organization figuring out a good way to use AI right now is setting a precedent for everyone else. The window to shape the Thing may not last long, but it is here now.

2026-02-18 09:45:41

I have written eight of these guides since ChatGPT came out, but this version represents a very large break with the past, because what it means to “use AI” has changed dramatically. Until a few months ago, for the vast majority of people, “using AI” meant talking to a chatbot in a back-and-forth conversation. But over the past few months, it has become practical to use AI as an agent: you can assign it a task and it does the task, using tools as appropriate. Because of this change, you have to consider three things when deciding what AI to use: Models, Apps, and Harnesses.

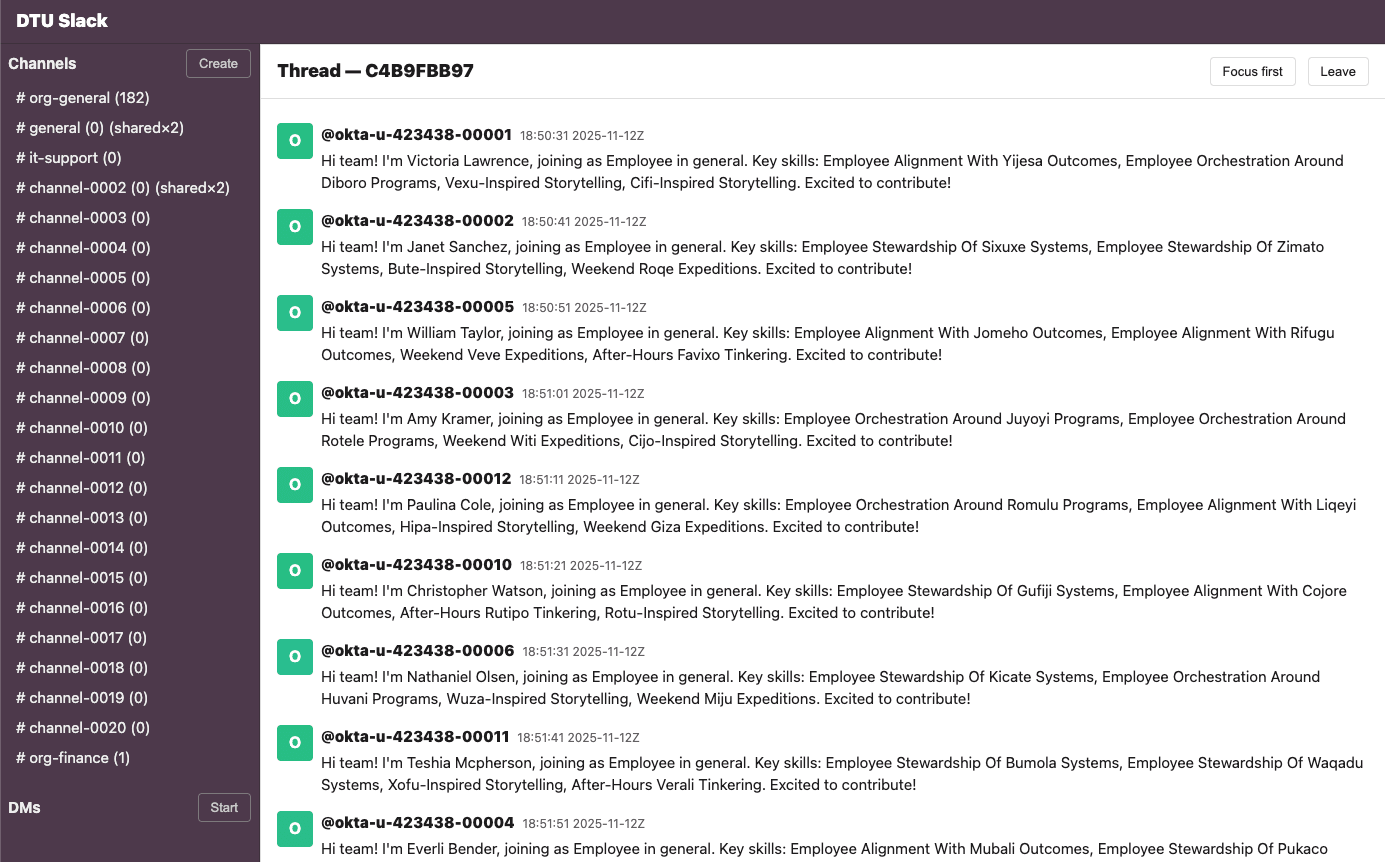

Models are the underlying AI brains, and the big three are GPT-5.2/5.3, Claude Opus 4.6, and Gemini 3 Pro (the companies are releasing new models much more rapidly than in the past, so version numbers may change in the coming weeks). These are what determine how smart the system is, how well it reasons, how good it is at writing or coding or analyzing a spreadsheet, and how well it can see images or create them. Models are what the benchmarks measure and what the AI companies race to improve. When people say “Claude is better at writing” or “ChatGPT is better at math,” they’re talking about models.

Apps are the products you actually use to talk to a model, and which let models do real work for you. The most common app is the website for each of these models: chatgpt.com, claude.ai, gemini.google.com (or else their equivalent application on your phone). Increasingly, there are other apps made by each of these AI companies as well, including coding tools like OpenAI Codex or Claude Code, and desktop tools like Claude Cowork.

Harnesses are what let the power of AI models do real work, like a horse harness takes the raw power of the horse and lets it pull a cart or plow. A harness is a system that lets the AI use tools, take actions, and complete multi-step tasks on its own. Apps come with a harness. Claude on the website has a harness that lets Claude Opus 4.6 do web searches and write code but also has instructions about how to approach various problems like creating spreadsheets or doing graphic design work. Claude Code has an even more extensive harness: it gives Claude Opus 4.6 a virtual computer, a web browser, a code terminal, and the ability to string these together to actually do stuff like researching, building, and testing your new website from scratch. Manus (recently acquired by Meta) was essentially a standalone harness that could wrap around multiple models. OpenClaw, which made big news recently, is mostly a harness that allows you to use any AI model locally on your computer.
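To make the idea of a harness concrete, here is a toy sketch of the loop at its core: ask the model what to do next, run the tool it picks, hand back the result, and repeat until it says it is finished. Everything in it is a hypothetical stand-in. The "model" is a fixed script rather than a real AI, the tools only pretend to search or run code, and none of the names come from any actual product.

```python
from typing import Callable

# Toy tools the harness exposes. They only pretend to search or execute code.
def web_search(query: str) -> str:
    return f"(pretend search results for {query!r})"

def run_code(source: str) -> str:
    return f"(pretend output from {len(source)} characters of code)"

TOOLS: dict[str, Callable[[str], str]] = {
    "web_search": web_search,
    "run_code": run_code,
}

def scripted_model(task: str, history: list[str]) -> dict:
    """Stand-in for a real model: chooses the next step from a fixed script."""
    steps = [
        {"tool": "web_search", "input": task},
        {"tool": "run_code", "input": "print('summarize findings')"},
        {"final": "Here is the finished report."},
    ]
    return steps[min(len(history), len(steps) - 1)]

def run_harness(task: str, model=scripted_model, max_steps: int = 10):
    """Loop: ask the model for an action, run the tool, feed the result back."""
    history: list[str] = []
    for _ in range(max_steps):
        action = model(task, history)
        if "final" in action:  # the model says it is done
            return action["final"], history
        result = TOOLS[action["tool"]](action["input"])
        history.append(f"{action['tool']} -> {result}")
    return "Stopped: step limit reached.", history

answer, log = run_harness("plow designs")
print(answer)     # Here is the finished report.
print(len(log))   # 2
```

The same stub model would behave differently with a different `TOOLS` table or step limit, which is the point: the harness, not just the model, shapes what work gets done.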

Until recently, you didn’t have to know this. The model was the product, the app was the website, and the harness was minimal. You typed, it responded, you typed again. Now the same model can behave very differently depending on what harness it’s operating in. Claude Opus 4.6 talking to you in a chat window is a very different experience from Claude Opus 4.6 operating inside Claude Code, autonomously writing and testing software for hours at a stretch. GPT-5.2 answering a question is a very different experience from GPT-5.2 Thinking navigating websites and building you a slide deck.

It means that the question “which AI should I use?” has gotten harder to answer, because the answer now depends on what you’re trying to do with it. So let me walk through the landscape.

The top models are remarkably close in overall capability and are generally “smarter” and make fewer errors than ever. But, if you want to use an advanced AI seriously, you’ll need to pay at least $20 a month (though some areas of the world have alternate plans that charge less). Those $20 get you two things: a choice of which model to use and the ability to use the more advanced frontier models and apps. I wish I could tell you the free models currently available are as good as the paid models, but they are not. The free models are all optimized for chat, rather than accuracy, so they are very fast and often more fun to talk to, but much less accurate and capable. Often, when someone posts an example of an AI doing something stupid, it is because they are either using the free models or because they have not selected a smarter model to work with.

The big three frontier models are Claude Opus 4.6 from Anthropic, Google’s Gemini 3.0 Pro, and OpenAI’s ChatGPT 5.2 Thinking. With all of the options, you get access to top-of-the-line AI models with a voice mode, the ability to see images and documents, the ability to execute code, good mobile apps, and the ability to create images and video (Claude lacks here, however). They all have different personalities and strengths and weaknesses, but for most people, just selecting the one they like best will suffice. For now, the other companies in this space have fallen behind, whether in models or in apps and harnesses, though some users may still have reasons for picking them.

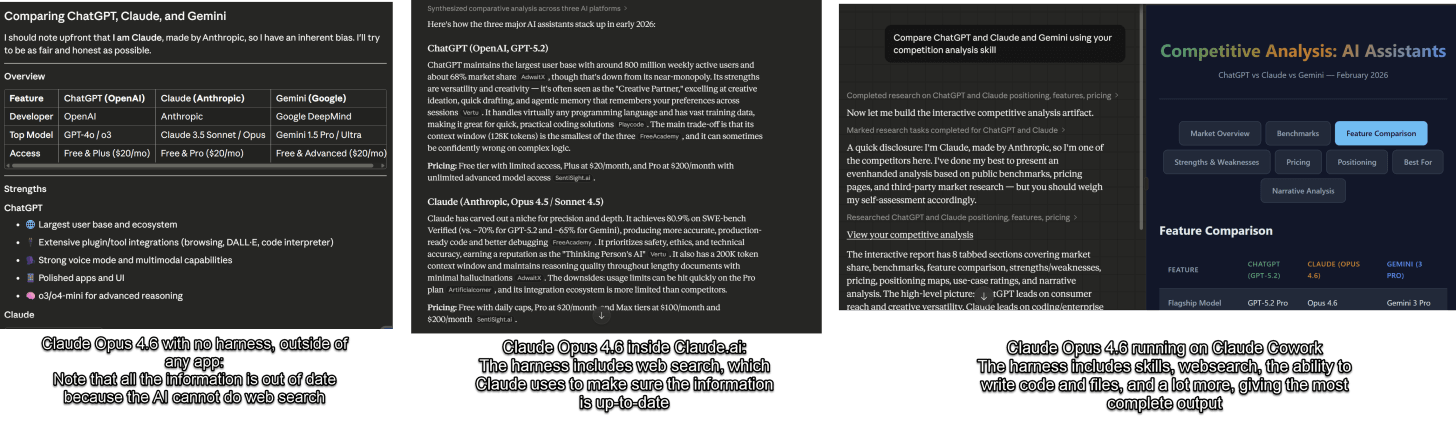

When you are using any AI app (more on those shortly), including phone apps or websites, the single most important thing you can do is pick the right model, which the AI companies do not make easy. If you are just chatting, the default models are fine; if you want to do real work, they are not. For ChatGPT, no matter whether you use the free or paid version, the default model you are given is “ChatGPT 5.2”. The issue is that GPT-5.2 is not one model, it is many, from the very weak GPT-5.2 mini to the very good GPT-5.2 Thinking to the extremely powerful GPT-5.2 Pro. When you select GPT-5.2, what you are really getting is “auto” mode, where the AI decides which model to use, often a less powerful one. By paying, you get to decide which model to use, and, to further complicate things, you can also select how hard the model “thinks” about the answer. For anything complex, I always manually select GPT-5.2 Thinking Extended (on the $20 plan) or GPT-5.2 Thinking Heavy (on more expensive plans). For a really hard problem that requires a lot of thinking, you can pick GPT-5.2 Pro, the strongest model, which is only available at a higher cost tier.

For Gemini, there are three options: Gemini 3 Flash, Gemini 3 Thinking, and, for some paid plans, 3 Pro. If you pay for the Ultra plan, you get access to Gemini Deep Think for very hard problems (which is in another menu entirely). Always pick Gemini 3 Pro or Thinking for any serious problem. For Claude, you need to pick Opus 4.6 (though the new Sonnet 4.6 is also powerful, it is not quite as good) and turn on the “extended thinking” switch.

Again, for most people, the model differences are now small enough that the app and harness matter more than the model. Which brings us to the bigger question.

The vast majority of people use chatbots, the main websites or mobile apps of ChatGPT, Claude, and Gemini, to access their AI models. In fact, we can call the chatbot the most important and widespread AI app. In the past few months, these apps have become quite different from each other.

Some of the differences are which features are bundled with AI:

Bundled into the Gemini chatbot (and accessible with the little plus button): you can access nano banana (the best current AI image creation tool), Veo 3.1 (a leading AI video creation tool), Guided Learning (when trying to study, this helps the AI act more like a tutor), and Deep Research.

Bundled into ChatGPT is even more of a hodgepodge of options accessible with the plus button. You can Create Images (the image generator is almost as good as nano banana, but you can’t access the Sora video creator through the chatbot), Study and Learn (the equivalent to Guided Learning in Gemini, but there is also a separate Quizzes creator for some reason), Deep Research and Shopping Research (surprisingly good and overlooked), and a set of other options that most people will not use often, so I won’t cover here.

Claude has only Deep Research as a bundled option, but you can access a study mode by creating a Project and selecting study project.

All of the AI models let you connect to data, such as letting the AI read your email and calendar, access your files, or connect to other applications. This can make AI far more useful, but, again, each AI tool has a different set of connectors you can use.

These are confusing! For most people doing real work, the most important additional feature is Deep Research and connecting AI to your content, but you may want to experiment with the others. Increasingly, however, what matters is the harness - the tools the AI has access to. And here, OpenAI and Anthropic have clear leads over Google. Both Claude.ai and ChatGPT have the ability to write and execute code, give you files, do extensive research, and a lot more. Google’s Gemini website is much less capable (even though its AI model is just as good).

As you can see, asking a similar question gets working spreadsheets and PowerPoints from ChatGPT and Claude, along with clear citations I can follow up on. Gemini, however, is unable to produce either kind of document, and it does not provide citations or research. I do expect that Google will catch up here soon, however.

One final note on Chatbots. GPT-5.2 Pro, with the harness that comes with it, is a VERY smart model. It is the model that just helped derive a novel result in physics and it is the one I find most capable of doing complex statistical and analytical work. It is only accessible through more expensive plans. Google Gemini 3 Deep Think also seems very capable, but suffers from the same harness problem.

The chatbot websites are where most people interact with AI, but they are increasingly not where the most impressive work gets done. A growing set of other apps wrap these same models in more powerful harnesses, and they matter.

Claude Code, OpenAI Codex, and Google Antigravity are the most well-developed of these, and they are all aimed at coders. Each of them gives an AI model access to your codebase, a terminal, and the ability to write, run, and test code on its own. You describe what you want built and the AI goes and builds it, coming back when it’s done or stuck. If you write code for a living, these tools are changing your job. Because they have the most extensive harnesses, even if you don’t code, they can still do a tremendous amount.

For example, a couple years ago, I became interested in how you would make an entirely paper-based LLM by providing all of the original GPT-1’s internal weights and parameters (the code of the AI, listed as 117 million numbers) in a set of books. In theory, with enough time, you could use those numbers to do the math of an AI by hand. This seemed like a fun idea, but obviously not worth doing. A week ago, I asked Claude Code to just do it for me. Over the course of an hour or so (mostly the AI working, with a couple suggestions), it made 80 beautifully laid out volumes containing all of GPT-1, along with a guide to the math. It also came up with, and executed, covers for each volume that visualized the interior weights. It then put together a very elegant website (including the animation below), hooked it up to Stripe for payment and Lulu to print on demand, tested the whole thing, and launched it for me. I never touched or looked at any code. I had it make 20 books available at cost to see what happened - and sold out the same day. All of the volumes are still available as free PDFs on the site. Now, I can have a little project idea that would have required a lot of work, and just have it executed for me with very little effort on my part.

But the coding harnesses remain risky for amateurs and, obviously, focused on coding. New apps and harnesses are starting to focus on other types of knowledge work.

Claude for Excel and PowerPoint are examples of specific harnesses inside of applications. Both of them provide very impressive extensions to these programs. Claude for Excel, in particular, feels like a massive change in working with spreadsheets, with the potential for a similar impact to Claude Code for those who work with Excel for a living - you can, increasingly, tell the AI what you want to do and it acts as a sort of junior analyst and does the work. Because the results are in Excel, they are easy to check. Google has some integration with Google Sheets (but not as deeply) and OpenAI does not really have an equivalent product.

Claude Cowork is something genuinely new, and it deserves its own category. Released by Anthropic in January, Cowork is essentially Claude Code for non-technical work. It runs on your desktop and can work directly with your local files and your browser. However, it is much more secure than Claude Code and less dangerous for non-technical users (it runs in a VM with default-deny networking and hard isolation baked in, for those who care about the details). You describe an outcome (organize these expense reports, pull data from these PDFs into a spreadsheet, draft a summary) and Claude makes a plan, breaks it into subtasks, and executes them on your computer while you watch (or don’t). It was built on the same agentic architecture as Claude Code, and was itself largely built by Claude Code in about two weeks. Neither OpenAI nor Google has a direct equivalent, at least this week. Cowork is still a research preview, meaning it’s early and will eat through your usage limits fast, but it is a clear sign of where all of this is heading: AI that doesn’t just talk to you about your work, but does your work.

NotebookLM is Google’s answer to a different problem: how do you use AI to make sense of a lot of information? You can ask NotebookLM to do its own deep research, or else add in your own papers, YouTube videos, websites, or files, and NotebookLM builds an interactive knowledge base you can query, turn into slides, mind maps, videos and, most famously, AI-generated podcasts where two hosts discuss your material (you can even interrupt the hosts to ask questions). If you are a student, a researcher, or anyone who regularly needs to make sense of a pile of documents, NotebookLM is a very useful tool.

And then there is OpenClaw, which I want to mention even though it doesn’t fit neatly into any of these categories and which you almost definitely shouldn’t use. OpenClaw is an open-source AI agent that went viral in late January. It runs locally on your computer, connects to whatever AI model you want, and you talk to it like you were chatting with a person using standard chats like WhatsApp or iMessage. It can browse the web, manage your files, send emails, and run commands. It is sort of a 24/7 personal assistant that lives on your machine. It is also a serious security risk: you are giving an AI broad access to your computer and your accounts, and no one knows exactly what dangers you are exposing yourself to. But it does serve as a sign of where things are going.

I know this is a lot. Let me simplify.

If you are just getting started, pick one of the three systems (ChatGPT, Claude, or Gemini), pay the $20, and select the advanced model. The advice from my book still holds: invite AI to everything you do. Start using it for real work. Upload a document you’re actually working on. Give the AI a very complex task in the form of an RFP or SOP. Have a back-and-forth conversation and push it. This alone will teach you more than any guide.

If you are already comfortable with chatbots, try the specific apps. NotebookLM is free and easy to use, which makes it a good starting place. If you want to go deeper, Anthropic offers the most powerful package in Claude Code, Claude Cowork (both accessible through Claude Desktop) as well as the specialized PowerPoint and Excel Plugins. Give them a try. Again, not as a demo, but with something you actually need done. Watch what it does. Steer it when it goes wrong. You aren’t prompting, you are (as I wrote in my last piece) managing.

The shift from chatbot to agent is the most important change in how people use AI since ChatGPT launched. It is still early, and these tools are still hard to figure out and will still do baffling things. But an AI that does things is fundamentally more useful than an AI that says things, and learning to use it that way is worth your time.

2026-01-28 00:55:55

I just taught an experimental class at the University of Pennsylvania where I challenged students to create a startup from scratch in four days. Most of the people in the class were in the executive MBA program, so they were taking classes while also working as doctors, managers, or leaders in a variety of large and small companies. Few had ever coded. I introduced them to Claude Code and Google Antigravity, which they needed to use to build a working prototype. But a prototype alone is not a startup, so they used ChatGPT, Claude, and Gemini to accelerate the idea generation, market research, competitive positioning, pitching, and financial modelling processes. I was curious how far they could get in such a short time. It turns out they got very far.

I’ve been teaching entrepreneurship for a decade and a half, and I've seen thousands of startup ideas (some of which turned into large companies) so I have a good sense of the expectations for what a class of smart MBA students can accomplish. I would estimate that what I saw in a couple of days was an order of magnitude further along the path to a real startup than I had seen out of students working over a full semester before AI. Most of the prototypes were not just sample screens but actually had a core feature working. Ideas were far more diverse and interesting than usual. Market and customer analyses were insightful. It was really impressive. These were not yet working startups nor were they fully operational products (with a couple exceptions) — but they had shaved months and huge amounts of money and effort from the traditional process. And there was something else: most early startups need to pivot, changing direction as they learn more about what the market wants and what is technically possible. By lowering the costs of pivoting, it was much easier to explore the possibilities without being locked in or even explore multiple startups at once: you just tell the AI what you want.

I wish I could say this impressive output was the result of my brilliant teaching, but we don’t really have a great framework yet for how to use all these tools; the students largely figured it out on their own. It helped that they had some management and subject matter expertise because it turns out that the key to success was actually the last bit of the previous paragraph: telling the AI what you want. As AIs are increasingly capable of tasks that would take a human hours to do, and as evaluating those results becomes increasingly time consuming, the value of being good at delegation increases. But when should you delegate to AI?

We actually have an answer, but it is a bit complicated. Consider three factors: First, because of the Jagged Frontier of AI ability, you don’t reliably know what the AI will be good or bad at on complex tasks. Second, whether the AI is good or bad, it is definitely fast. It produces work in minutes that would take many hours for a human to do. Third, it is cheap (relative to professional wages), and it doesn’t mind if you generate multiple versions and throw most of them away.

These three factors mean that deciding to delegate to AI depends on three variables:

Human Baseline Time: how long the task would take you to do yourself

Probability of Success: how likely the AI is to produce an output that meets your bar on a given attempt

AI Process Time: how long it takes you to request, wait for, and evaluate an AI output

A useful mental model is that you’re trading off “doing the whole task” (Human Baseline Time) against “paying the overhead cost” (AI Process Time), possibly multiple times until you get something acceptable. The higher Probability of Success is, the fewer times you have to pay AI Process Time, and the more useful it is to turn things over to the AI. For example, consider a task that takes you an hour to do, but the AI can do it in minutes, though checking the answer takes thirty minutes. In that case, you should only give the work to the AI if Probability of Success is very high, otherwise you’ll spend more time generating and checking drafts than just doing it yourself. If the Human Baseline Time is 10 hours, though, it could be worth several hours of working with the AI, assuming that the AI can be made to do a competent job.
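One way to make this mental model concrete is a small calculation. The sketch below is only one possible formalization, not a formula taken from the text: it assumes every attempt succeeds independently with the same Probability of Success, and that you keep retrying until an attempt works, so the expected number of attempts is 1 divided by that probability. The function names are hypothetical.

```python
def expected_ai_hours(process_hours: float, p_success: float) -> float:
    """Expected hours spent if you retry the AI until one attempt succeeds.

    Assumes independent attempts, so the expected number of attempts is 1 / p.
    """
    if not 0 < p_success <= 1:
        raise ValueError("p_success must be in (0, 1]")
    return process_hours / p_success


def should_delegate(baseline_hours: float, process_hours: float, p_success: float) -> bool:
    """Delegate when the expected AI route is faster than doing the task yourself."""
    return expected_ai_hours(process_hours, p_success) < baseline_hours


def break_even_probability(baseline_hours: float, process_hours: float) -> float:
    """Probability of Success at which delegating and doing it yourself cost the same."""
    return min(1.0, process_hours / baseline_hours)


# The one-hour task from the text, counting only the thirty minutes of checking.
print(break_even_probability(1.0, 0.5))   # 0.5
print(should_delegate(1.0, 0.5, 0.4))     # False
# A ten-hour task with an assumed two hours of AI Process Time per attempt.
print(should_delegate(10.0, 2.0, 0.4))    # True
```

Any time spent writing the prompt and waiting for the output adds to the process time, which pushes the break-even probability for the one-hour task above one half. The ten-hour task clears the bar even with a modest chance of success on each attempt.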

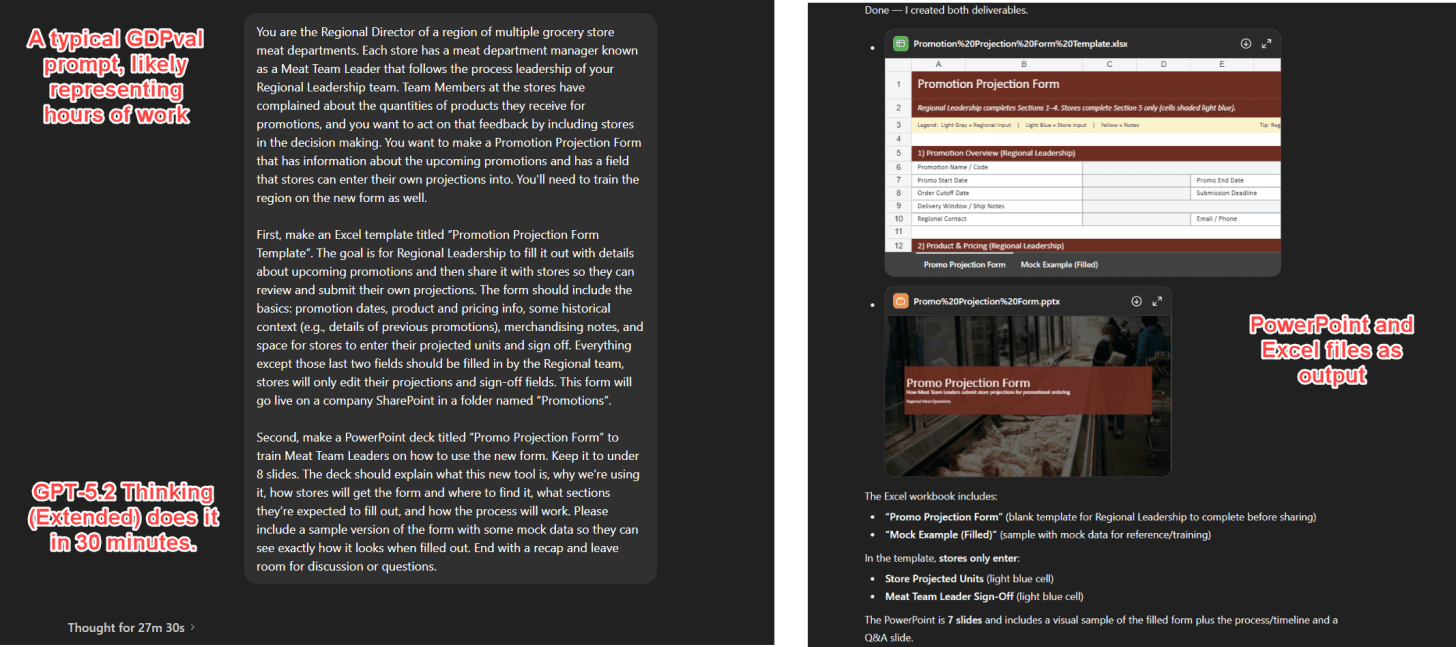

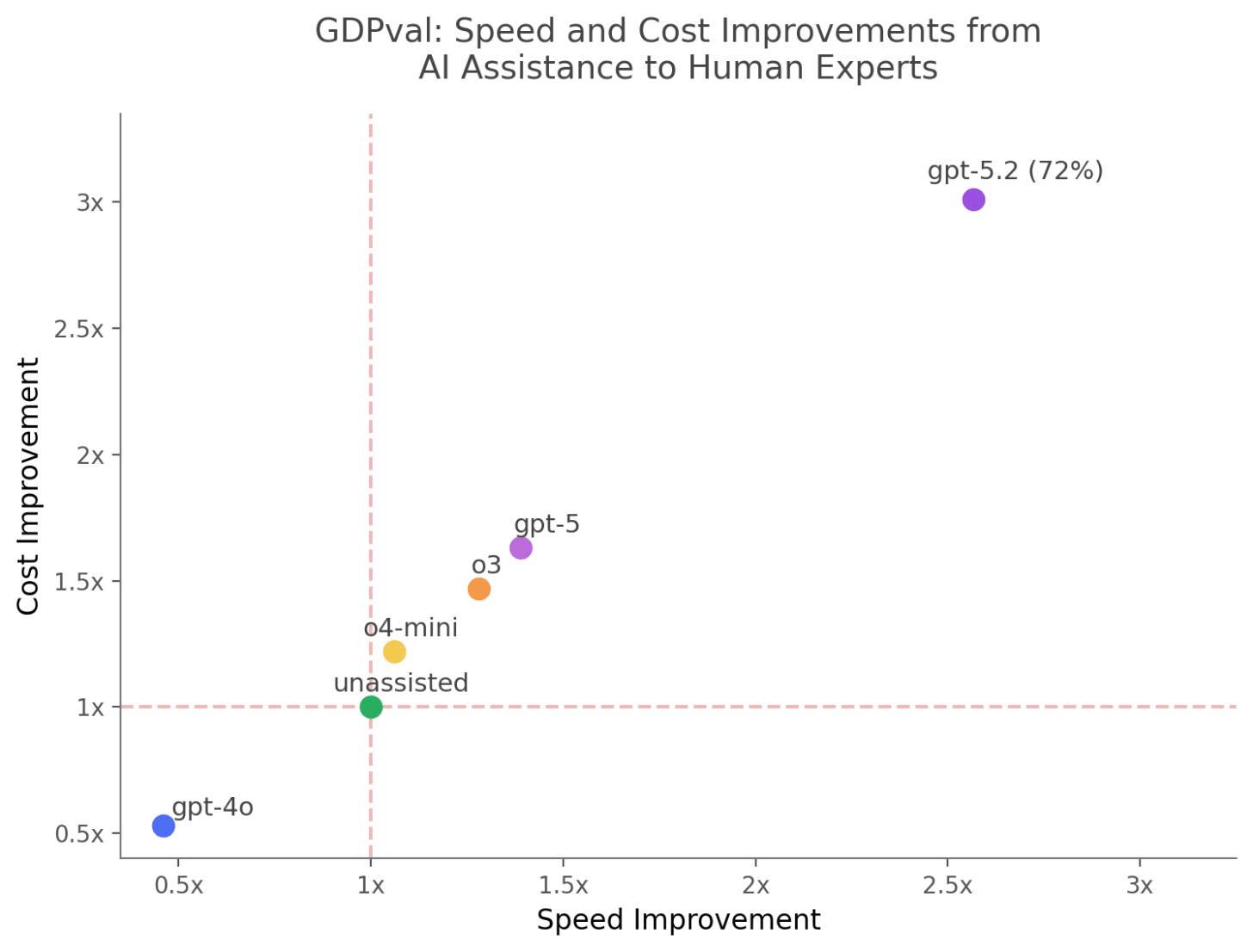

We know this equation works because this past summer, OpenAI released one of the more important papers on AI and real work, GDPval. I have discussed it before, but the key was that it pitted experienced human experts in diverse fields from finance to medicine to government against the latest AIs, with another set of experts working as judges. It took experts seven hours on average to do the work, so, in this case, that is the Human Baseline Time. The AI Process Time was interesting: the AI took only minutes for tasks, but it required an hour for experts to actually check the work, and, of course, prompts take time to write as well. As for Probability of Success, when GDPval first came out, judges gave human work the win the majority of the time, but, with the release of GPT-5.2, the balance shifted. GPT-5.2 Thinking and Pro models tied or beat human experts an average of 72% of the time.

We can now calculate how many hours you would save on a seven-hour task, assuming that 72% probability of success and an hour of evaluation. If you tried every task by taking the time to prompt the AI, evaluating the answer for an hour, and then doing it yourself if the AI answer was bad, you would save 3 hours on average. Tasks the AI failed on would take longer (you wasted time prompting and reviewing!) but tasks the AI succeeded on would be much faster. But we can change the equation even more in our favor using techniques from management!
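The arithmetic behind that estimate can be sketched in a few lines. This version assumes a single AI attempt followed by doing the task yourself if the attempt fails. The seven-hour baseline, the 72% success rate, and the one hour of evaluation come from the text. The one hour for prompting and waiting is my assumption, chosen because the text does not give a figure, and with it the result lands close to three hours.

```python
def expected_hours_saved(baseline_hours: float, process_hours: float, p_success: float) -> float:
    """Expected saving for 'try the AI once, then do it yourself if it fails'."""
    # You always pay the process time; on failure you also pay the full baseline.
    expected_with_ai = process_hours + (1 - p_success) * baseline_hours
    return baseline_hours - expected_with_ai


BASELINE_HOURS = 7.0    # average expert time per task (from the text)
P_SUCCESS = 0.72        # share of tasks where the AI tied or beat experts (from the text)
EVALUATION_HOURS = 1.0  # time to check the AI's work (from the text)
PROMPTING_HOURS = 1.0   # ASSUMPTION: time to write the prompt and wait for output

process_hours = EVALUATION_HOURS + PROMPTING_HOURS
saved = expected_hours_saved(BASELINE_HOURS, process_hours, P_SUCCESS)

print(round(saved, 2))                  # 3.04
print(BASELINE_HOURS - process_hours)   # 5.0 hours saved when the AI succeeds
print(-process_hours)                   # -2.0 hours "saved" when it fails
```

With a smaller prompting overhead the expected saving grows, and with no prompting time at all it would be about four hours, so the assumed overhead matters as much as the success rate.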

There are three things we can do to make delegating to AI more worthwhile by increasing the Probability of Success and lowering AI Process Time. We can give better instructions, setting clear goals that the AI can execute on with a higher chance of succeeding. We can get better at evaluation and feedback, so we need to make fewer attempts to get the AI to do the right thing. And we can make it easier to evaluate whether the AI is good or bad at a task without spending as much time. All of these factors are improved by subject matter expertise — an expert knows what instructions to give, they can better see when something goes wrong, and they are better at correcting it.

If you don’t need something specific, AI models have become incredibly capable of figuring out how to solve problems themselves. For example, I found Claude Code was able to generate an entire 1980s style adventure game with one prompt to "create an entirely original old-school Sierra style adventure game with EGA-like graphics. You should use your image agent to generate images and give me a parser. Make all puzzles interesting and solvable. Finish the game (it should take 10-15 minutes to play), don’t ask any questions. make it amazing and delightful." That’s it, the AI made everything, including the art. With two final prompts it tested the game and deployed it. You can play it yourself: enchanted-lighthouse-game.netlify.app

This is genuinely amazing, but that amazement is amplified because I didn’t need anything specific, just an adventure game that the AI was free to improvise. But real work, and real delegation, means that you have a specific output in mind, and that is where things can get tricky. How do you communicate your intention to the AI to execute on what you want, so it can use “judgement” to solve problems while still giving you the output you desire?

This problem existed long before AI and is so universal that every field has invented their own paperwork to solve it. Software developers write Product Requirements Documents. Film directors hand off shot lists. Architects create design intent documents. The Marines use Five Paragraph Orders (situation, mission, execution, administration, command). Consultants scope engagements with detailed deliverable specs. All of these documents work remarkably well as AI prompts for this new world of agentic work (and the AI can handle many pages of instructions at a time). The reason you can use so many formats to instruct AI is that all of these are really the same thing: attempts to get what’s in one person’s head into someone else’s actions.

When you look at what actually goes into good delegation documentation, it’s remarkably consistent: What are we trying to accomplish, and why? Where are the limits of the delegated authority? What does “done” look like? What specific outputs do I need? What interim outputs do I need to follow your progress? And what should you check before telling me you’re finished? If these are well-specified, the AI, like humans, is far more likely to do a good job.
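Those questions can be turned into a reusable template. The sketch below is one hypothetical way to do it, with a field for each question and a method that renders the brief as plain text to paste into an AI tool. The class name, the section headings, and the expense-report example are all invented for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class DelegationBrief:
    """One field per question a good delegation document answers."""
    goal: str                 # What are we trying to accomplish?
    why: str                  # Why does it matter?
    limits: list[str]         # Where does the delegated authority stop?
    done: str                 # What does "done" look like?
    outputs: list[str]        # Which specific outputs are needed?
    interim_outputs: list[str] = field(default_factory=list)  # Progress checkpoints
    final_checks: list[str] = field(default_factory=list)     # Checks before reporting back

    def to_prompt(self) -> str:
        """Render the brief as plain text that can be pasted into an AI tool."""
        def bullets(items: list[str]) -> str:
            return "\n".join(f"- {item}" for item in items) if items else "- (none)"

        sections = [
            ("Goal", self.goal),
            ("Why it matters", self.why),
            ("Limits of authority", bullets(self.limits)),
            ("Definition of done", self.done),
            ("Required outputs", bullets(self.outputs)),
            ("Interim outputs", bullets(self.interim_outputs)),
            ("Check before you report back", bullets(self.final_checks)),
        ]
        return "\n\n".join(f"{title}:\n{body}" for title, body in sections)


# Hypothetical example brief.
brief = DelegationBrief(
    goal="Pull the totals from this quarter's expense report PDFs into one spreadsheet.",
    why="Finance needs a single view before the quarterly review.",
    limits=["Do not edit or delete the original PDFs.", "Do not email anyone."],
    done="Every PDF in the folder appears as exactly one row with a total.",
    outputs=["expenses.xlsx", "A short list of PDFs that could not be read"],
    interim_outputs=["A count of PDFs found before extraction starts"],
    final_checks=["Row count matches the PDF count", "Totals are numeric, not text"],
)
print(brief.to_prompt())
```

The structure matters more than the wording: an empty field is a visible reminder that part of the intent is still only in your head.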

And in figuring out how to give these instructions to the AI, it turns out you are basically reinventing management.

I find it interesting to watch as some of the most well-known software developers at the major AI labs note how their jobs are changing from mostly programming to mostly management of AI agents. Coding has always had a very organized structure, with clearly verifiable outputs (the code either works or it doesn’t) so it has been one of the first areas where AI tools have matured, and thus the first profession to feel this change. It isn’t the last.

As a business school professor, I think many people have the skills they need, or can learn them, in order to work with AI agents - they are management 101 skills. If you can explain what you need, give effective feedback, and design ways of evaluating work, you are going to be able to work with agents. In many ways, at least in your area of expertise, it is much easier than trying to design clever prompts to help you get work done, as it is more like working with people. At the same time, management has always assumed scarcity: you delegate because you can’t do everything yourself, and because talent is limited and expensive. AI changes the equation. Now the “talent” is abundant and cheap. What’s scarce is knowing what to ask for.

This is why my students did so well. They weren’t AI experts. But they’d spent years learning how to scope problems in their fields of expertise, define deliverables, and recognize when a financial model or medical report was off. They had hard-earned frameworks from classes and jobs, and those frameworks became their prompts. The skills that are so often dismissed as “soft” turned out to be the hard ones.

I don’t know exactly what work looks like when everyone is a manager with an army of tireless agents. But I suspect the people who thrive will be the ones who know what good looks like — and can explain it clearly enough that even an AI can deliver it. My students figured this out in four days. Not because they were AI natives, but because they already knew how to manage. All that training, it turns out, was accidentally preparing them for exactly this moment.