2026-05-22 03:00:00

I’ve been slowly listening to Poor Charlie’s Almanack: The Essential Wit and Wisdom of Charles T. Munger.

I like his practicality. He’s never trying to be overly academic, as if he needs to prove how smart he is.

He says Berkshire’s success doesn’t come from them solving hard problems, but from spending their time knowing what a simple solution looks like — and acting on it when they see it!

We’ve succeeded by making the world easy for us, not by solving the world’s hard problems.

Munger likens their approach to investing to jumping a fence. They don’t spend all their time trying to figure out how to jump a seven-foot-tall fence. Instead, they find a spot where the fence is only a foot tall, jump it, and take the reward on the other side.

The approach he articulates for investing, in fact, seems broadly applicable to any kind of problem solving.

Whenever people ask him for advice (as if somehow he could bestow upon them some kind of knowledge that will save them the pain and hardship of experience), the idea that you can live life without making lots of mistakes seems anathema to him.

To paraphrase Charlie: “I don’t want you to think that we have a method of learning that will prevent you from making mistakes. The best you can do is learn to make fewer mistakes than others. And then, when you inevitably do make mistakes, learn to acknowledge them and fix them quickly.”

Straightforward. Practical. No bullshit. No ego. (Basically the opposite of everything I see on social platforms.)

I quite enjoyed his perspective.

2026-05-19 03:00:00

This is an iconic observation:

If you put the Apple icons in reverse it looks like the portfolio of someone getting really really good at icon design

This isn’t, however, just the story of Apple’s Creator Studio icons. It’s the unfolding story of icon design across the entire macOS platform.

For example, take a look at some of Apple’s other apps like iMovie:

![]()

Or Remote Desktop:

![]()

Apple sets the standard (and the rules) for how icons look on the Mac. Wherever they go, so goes the ecosystem, and they’re taking the entire ecosystem down with them.

It’s fast becoming the case that if you put any Mac app’s icons in reverse, it looks like the portfolio of someone getting really, really good at icon design.

Even Microsoft — not exactly a bastion of design — starts to look pretty decent with their icons the further back you go. For example, with OneNote, the app icon’s progression looks like it went something like this:

![]()

Some 3rd-party apps continue to fight a good fight, even as Apple’s definition of what an icon should be — or what’s even possible — shrinks all around them.

Apps like Capo (remember, these are reverse chronological):

![]()

Or BBEdit:

![]()

Or Fantastical:

![]()

Or CotEditor:

![]()

Everyone’s being put in a ~~box~~ squircle. The imposition is real.

I don’t blame any of the 3rd-party app makers. Their designs have to play by Apple’s rules (or end up in icon jail). World-class designers like Matthew Skiles or The Iconfactory are still out there striving for excellence, even as they’re hamstrung by the Mac’s latest rules.

When it comes to icon design on the Mac, the sky is no longer the limit: Apple’s icon design sensibilities are. They set the examples of what world-class icon design should look like, but what do you do when the examples are no longer exemplary?

2026-05-13 03:00:00

Here’s Scott Jenson in his insightful piece “The Ma of a New Machine”:

the chatbot interface [makes us] feel like deep cognitive work is happening. But the interface is fundamentally reactive. It spits complex text at you, you skim it quickly, and you immediately type a reaction to keep the momentum going.

My hypothesis is that the very structure of the chatbot interface (type, read, type again) actively discourages reflection. When you are moving too fast, you get stuck in a groove. You literally need to take a break, step back, and basically step out of this groove so you can view the problem from a new angle. We’ve all walked away from a tough problem only to have the solution arrive unbidden into our thoughts later in the day.

In my decades of experience designing and developing software, I can’t count the number of times I’ve stepped away from a problem at the computer only to return and find it magically resolved in my brain.

But the human-computer interaction of prompting doesn’t encourage the use of that skill in our subconscious.

In fact, I think it actively discourages it (our tools shape us).

Scott talks about this Japanese concept called “Ma,” which is about deliberately creating pauses between things. He quotes Studio Ghibli director Hayao Miyazaki, who says “if you just have non-stop action with no breathing space at all, it’s just busyness.” Here’s Scott (emphasis mine):

Ma provides a framework for understanding that a pause is not a lack of work

As humans we need pauses. We need space to breathe. We need time to digest.

Pausing, breathing, synthesizing, digesting — these are all necessary work.

“Digestion” is an interesting word here.

Putting food in your body is merely the beginning of feeding yourself. Our bodies must digest that food, break it down, absorb it, and get rid of the waste.

But that’s all happening mostly without our attentive oversight, so I guess it’s not “real” work — right?

Wrong.

Building good, healthy software requires digestion.

2026-05-11 03:00:00

I’ve been posting about how you can make lots of HTML pages and leverage navigations over in-page, JS-dependent interactions.

Now I’m gonna post another example.

On my icon sites, I have a little widget that allows you to resize the icons you’re looking at.

![]()

Previously, I implemented this functionality as a web component that looked something like this:

```html
<icon-list size="md">
  <a href=""><img src="" width="128" height="128" /></a>
  <a href=""><img src="" width="128" height="128" /></a>
  <!-- more -->
</icon-list>
```

The `size` attribute corresponded to an enumeration like `sm | md | lg | xl`, which mapped to actual pixel dimensions like 64×64 or 512×512.

When the little widget was clicked to render icons at a different size, JavaScript changed the `size` attribute on the `<icon-list>` custom element. From there, the web component’s JS took over, changing the dimensions of the child `<img>` elements, their `src` attributes, etc.

It all worked pretty well. However, because it was a client-side solution within my otherwise entirely pre-rendered static site, it required some templating logic and data to be duplicated and sent over the wire to every client.

I didn’t love that for various reasons — like “Crap, I updated this one small part of how my icon list renders on the server, but forgot to tweak it on the client, so things are slightly broken now.”

Then one day the thought hit me: instead of relying on JS to make that interaction work (click, execute JS, modify in-page DOM to a new list), what if I just made that interaction a navigation? Click, navigate to a new list.

Instead of “every list of icons ships with some JS that allows them to re-render at four different sizes” I could do “every list of icons ships in four different sizes”.

- Before: `/colors/red/`, with JS to re-render the icon list based on user interactions.
- After: `/colors/red/{sm|md|lg|xl}`, each a different icon list size.

So I tried it. And guess what? Once I added a bit of code to support CSS view transitions, I got a cool effect amongst the icons, essentially for free. That’s right: a better experience by removing code!

Works nice on mobile too!
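The “four pages per list” idea can be made concrete with a minimal build-step sketch in JavaScript. This is my own illustration, not the actual code behind the icon sites. The helper names (`buildIconPages`, `renderIconList`, `renderSizeLinks`), the `lg` pixel size, the trailing-slash URLs like `/colors/red/sm/`, and the per-icon `view-transition-name` scheme are all assumptions. The parts that are real web platform features are the `@view-transition { navigation: auto; }` CSS rule, which opts same-origin navigations into cross-document view transitions, and the `view-transition-name` property, which pairs up matching elements on the old and new page so the browser can animate between them.

```javascript
// Hypothetical build-time sketch: pre-render one static page per icon size,
// replacing the client-side <icon-list> re-rendering. Names are illustrative.

// Assumed size -> pixel mapping. md = 128 matches the markup above; sm = 64 and
// xl = 512 follow the examples in the post; lg = 256 is a guess.
const ICON_SIZES = { sm: 64, md: 128, lg: 256, xl: 512 };

// Escape characters that would break out of a double-quoted HTML attribute.
function escapeAttr(value) {
  return String(value)
    .replace(/&/g, "&amp;")
    .replace(/"/g, "&quot;")
    .replace(/</g, "&lt;")
    .replace(/>/g, "&gt;");
}

// Render a list of icons at one fixed size. Each <img> gets a unique
// view-transition-name so the browser can morph it between size pages.
function renderIconList(icons, size) {
  const px = ICON_SIZES[size];
  if (px === undefined) throw new Error(`Unknown size: ${size}`);
  return icons
    .map((icon) => {
      // Assumes ids are simple tokens, so "icon-<id>" is a valid CSS identifier.
      if (!/^[A-Za-z0-9_-]+$/.test(icon.id)) throw new Error(`Bad id: ${icon.id}`);
      return (
        `<a href="${escapeAttr(icon.href)}">` +
        `<img src="${escapeAttr(icon.src)}" width="${px}" height="${px}" ` +
        `style="view-transition-name: icon-${icon.id}" /></a>`
      );
    })
    .join("\n");
}

// The size "widget" becomes ordinary links to the sibling pages.
function renderSizeLinks(basePath, currentSize) {
  return Object.keys(ICON_SIZES)
    .map((size) =>
      size === currentSize
        ? `<span aria-current="page">${size}</span>`
        : `<a href="${basePath}${size}/">${size}</a>`
    )
    .join(" ");
}

// Build a Map of output path -> page HTML, one entry per size.
function buildIconPages(basePath, icons) {
  const pages = new Map();
  for (const size of Object.keys(ICON_SIZES)) {
    const html = [
      // Both the old and the new page must opt in for a cross-document transition.
      "<style>@view-transition { navigation: auto; }</style>",
      `<nav>${renderSizeLinks(basePath, size)}</nav>`,
      renderIconList(icons, size),
    ].join("\n");
    pages.set(`${basePath}${size}/`, html);
  }
  return pages;
}

// Usage with made-up data:
const pages = buildIconPages("/colors/red/", [
  { id: "a1", href: "/icons/a1/", src: "/img/a1.png" },
]);
console.log([...pages.keys()].join(" "));
// prints: /colors/red/sm/ /colors/red/md/ /colors/red/lg/ /colors/red/xl/
```

The trade-off is four times as many HTML files at build time in exchange for no client-side templating logic. Each size page is just a static document, so following a size link is an ordinary navigation.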

I know I’m not doing anything particularly novel here, but as we continue to get new, powerful primitives on the web — like CSS view transitions — I find it really interesting to revisit basic patterns and explore what’s possible now that wasn’t previously.

It’s fun to ask yourself: “Could I remove some client-side JS and get a better overall experience?” If the answer is yes, I’ll bet you the development experience (and maintenance burden) is much improved too!

2026-05-04 03:00:00

I wrote about building websites with LLMs — (L)ots of (L)ittle ht(M)l page(s) — and I think it’s time for a post-mortem on that approach:

I like it.

I’ve tweaked a few things from that original post, but the underlying idea is still the same, which I would describe as:

Avoid in-page interactions that require JavaScript in favor of multi-page navigations that rely on HTML and are enhanced with CSS view transitions (and a dash of JS if/where prudent).

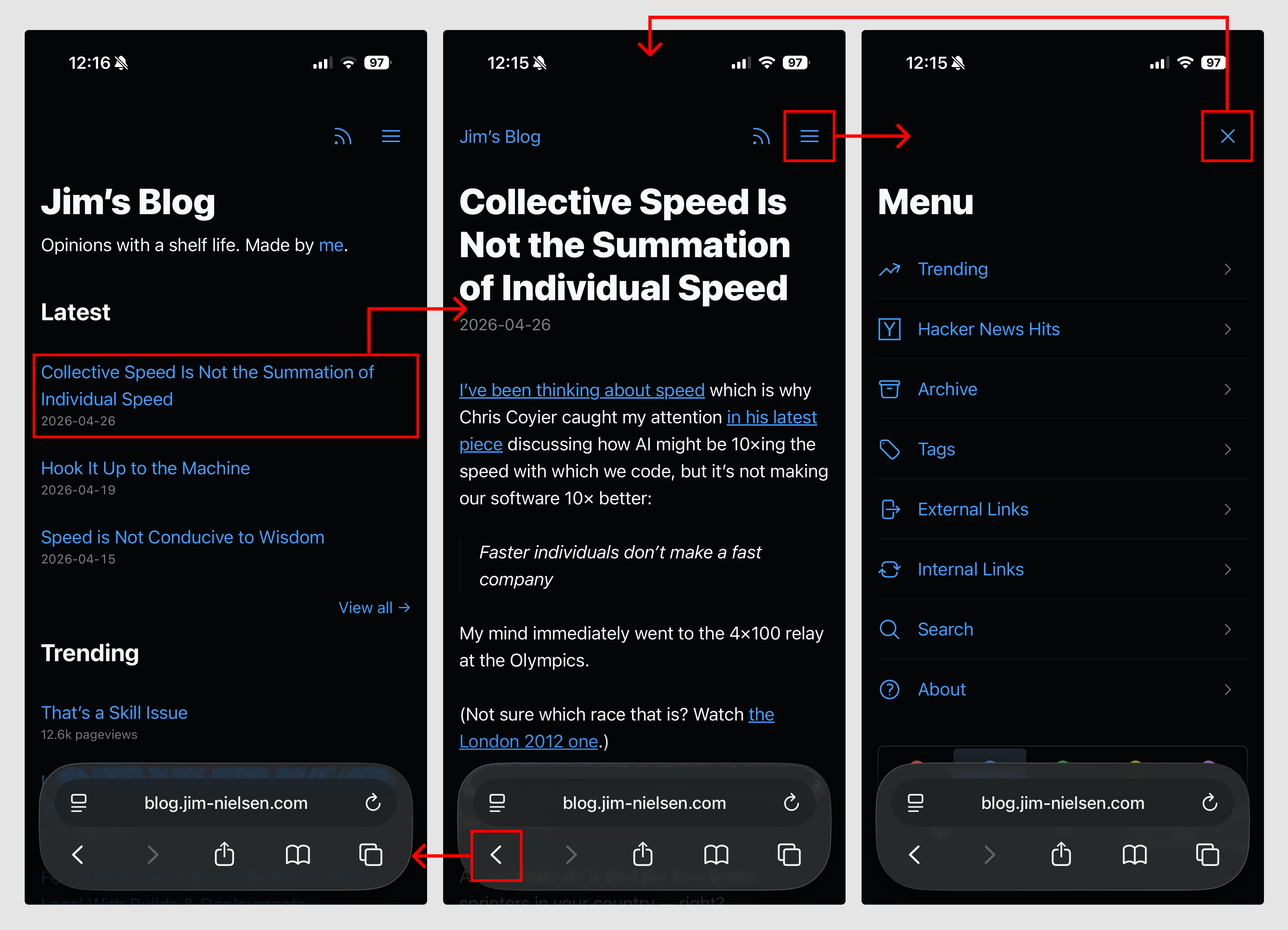

As an example, on my blog I have a “Menu”. It doesn’t “expand” or “slide out” or “pop in” or whatever else you can do with JS. Instead, it navigates to an entirely-new page that is focused on just the menu options of my site.

I say “navigates” because it’s just a link (`<a href="/menu/">`) and it functions like a link, but the navigation interaction is enhanced by CSS view transitions.

Have a newer device with a modern browser? Great, you get a nicer effect.

Have an older device, an older browser, JS disabled, etc.? It’ll still work.

If you can follow a link — which is the most fundamental thing a browser can do — it will work.

So how’s it all work under the hood? In essence, all the pages have a link to the menu (except the menu page). When you navigate to the menu, that link is changed to an “X” which “closes” the menu. The closing is still just a link (back to `/`), but it’s enhanced with JS to actually do a “back” in the browser history. That way, opening and then closing the menu doesn’t leave an extra entry in your browser history.

As a simplified example, the code looks like this:

```html
<!-- Normal page -->
<nav>
  <a href="/menu/">
    <svg>...</svg>
  </a>
</nav>

<!-- Menu page -->
<nav>
  <a href="/" onclick="document.referrer ? history.back() : window.location.href = '/'; return false;">
    <svg>...</svg>
  </a>
</nav>
```

The `document.referrer` check tells me whether we arrived at this page via a navigation (most likely from within the blog itself) or via a direct visit (i.e. somebody typed the URL into the address bar: unlikely, but possible). That’s how I suss out whether running `history.back()` is meaningful or not.
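The `onclick` logic can also be pulled out into a small function, which makes it easier to test outside a browser. This is a hedged sketch, not what the post ships. The function name `closeMenu` and the idea of passing `document`, `history`, and `location` in as arguments are my assumptions. It also adds two refinements the original one-liner doesn’t have:

- It only calls `history.back()` when the referrer is same-origin, so a visitor who arrived from another site isn’t bounced off-site.
- It only calls `history.back()` when there’s a previous history entry to return to. A menu page opened in a new tab has a referrer, but `history.length` is 1.

```javascript
// Hypothetical helper: the menu's "close" behavior as a testable function.
// In a page you'd call it as closeMenu(document, history, location).
function closeMenu(doc, hist, loc, fallbackPath = "/") {
  // Refinement (not in the original): only trust a same-origin referrer.
  let sameOriginReferrer = false;
  if (doc.referrer) {
    try {
      sameOriginReferrer = new URL(doc.referrer).origin === loc.origin;
    } catch {
      // A malformed referrer is treated like a direct visit.
      sameOriginReferrer = false;
    }
  }
  // Refinement (not in the original): a menu page opened in a new tab has a
  // referrer but history.length === 1, so there's nothing to go back to.
  const hasPreviousEntry = hist.length > 1;

  if (sameOriginReferrer && hasPreviousEntry) {
    hist.back(); // "close" without adding a new history entry
  } else {
    loc.href = fallbackPath; // plain navigation, same as following the link
  }
  return false; // like `return false` in the onclick: cancel the link's default action
}

// Usage with stand-in objects instead of the real browser globals:
const visited = [];
const fakeHistory = { length: 3, back: () => visited.push("back") };
const fakeLocation = { origin: "https://example.com", href: "https://example.com/menu/" };

closeMenu({ referrer: "https://example.com/2026/some-post/" }, fakeHistory, fakeLocation);
closeMenu({ referrer: "https://search.example.org/" }, fakeHistory, fakeLocation);
console.log(visited.length, fakeLocation.href); // prints: 1 /
```

In the page, the link’s handler would become something like `onclick="return closeMenu(document, history, location);"`, with the function loaded from a small script. That costs a few more lines of JS than the inline version. If neither edge case matters for your site, the one-liner is simpler.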

Here’s a video of how it all works, if that’s your thing:

While this solution seems simplistic, it was not a simple thing to arrive at. It required me to spend time thinking about what was essential to navigation, how that interaction could work across multiple pages, and how I could ensure page size stayed small so the interaction was both fast and robust while remaining intuitive to use.

In other words, the approach shaped the design.

Turns out, if you have a website and you think of the browser as a way to navigate documents — rather than a runtime to execute arbitrary code and fetch, compile, and present them — things can be a lot simpler than our tools often prime us to make them.



2026-04-27 03:00:00

I’ve been thinking about speed, which is why Chris Coyier caught my attention in his latest piece discussing how AI might be 10✕ing the speed with which we code, but it’s not making our software 10✕ better:

Faster individuals don’t make a fast company

My mind immediately went to the 4✕100 relay at the Olympics.

(Not sure which race that is? Watch the London 2012 one.)

Imagine you were put in charge of winning the 4✕100 relay.

All you gotta do is find the four fastest sprinters in your country, right?

I’m no track and field expert, but I doubt it’s that simple.

In a relay race, the baton is arguably the most critical element. Passing it cleanly is vital because, if you fumble it, you’re easily behind a few meters or maybe even disqualified.

So, one could argue, a sprinter’s ability to pass and receive the baton is more important than speed because all the speed in the world won’t help you overcome a dropped baton.

(There are other considerations too, like which leg each runner takes, which sequence works best given individual pairings and rapport, and whether a slower veteran might perform better in the heat of the moment.)

Faster runners won’t guarantee a faster team.

And faster coders won’t guarantee a faster company.

Like a relay race, it might be worth giving some thought to the relationships and interfaces between people.