2026-05-03 22:18:06

For decades, software UX was built around human interaction: log in, read the screen, understand the workflow, and act. That model is changing as AI Agents begin to interact directly with business software.

AI Agents now read records, retrieve context, trigger workflows, call APIs and tools, update systems, and move data across applications. Gartner predicts that up to 40% of enterprise applications will include task-specific AI Agents by 2026, up from less than 5% in 2025. Applications now need to support human-facing interfaces as well as agent-ready system behavior. This shift makes it necessary to go beyond human UX and add AI UX to your software strategy.

That is why designing software for AI Agents is now a serious product and architecture priority.

The interface is no longer just what a person sees. It is also what a system allows an agent to do.

In traditional software, employees often acted as the connector between applications. They copied records, updated statuses, reviewed emails, checked dashboards, routed requests, and transferred data between systems. But now, AI Agents can take over parts of this execution (in some cases, even entirely) when the underlying software exposes clear actions, structured context, and controlled access. This is where UX for AI Agents becomes commercially important. Clearly, agent-ready software is not only a technical upgrade. It gives your business a practical way to reduce manual execution, speed up routine work, and extend deeper automation across existing systems.

Knowledge workers spend 60% of their time on “work about work,” including searching for information, switching between applications, chasing updates, and coordinating tasks instead of completing the actual work. This is the operational drag AI Agents are built to reduce.

An AI Agent can update a CRM record, create a support ticket, retrieve data from analytics, and notify a team, triggering the next step without waiting for a person to manually move information between systems. The value is not just speed. It is fewer delayed updates, fewer repetitive follow-ups, and less dependency on manual task coordination. For this to work, software must be designed for agent-led execution. Human users can still interpret ambiguous labels, work around messy layouts, and fill in missing context.

AI Agents, on the other hand, need structured data, clear actions, defined permissions, and machine-readable context. This marks a shift in interface design. When software provides that structural clarity, AI Agents can connect fragmented systems and handle “work about work” tasks more effectively, allowing skilled employees to focus on complex decisions, customer conversations, and strategic problem-solving.

Agent-ready design does not mandate a total rebuild; instead, it focuses on the need to optimize the existing software stack for autonomous workflows. In many cases, the better approach is to modernize the workflows that matter most.

That means identifying high-volume tasks, exposing the right actions through Application Programming Interfaces (APIs), improving system-to-system communication, and adding controlled execution paths. This approach aligns with broader AI-native software development trends, where applications are optimized to support intelligent automation as a core architectural capability, rather than a thin add-on. This is why AI-enabled application development has become even more important now. And as for legacy applications, they may need API redesign, back-end workflow modernization, better data access, and tighter integration between business systems before they can support agent-led operations effectively.

But these benefits do not come from adding an AI Agent on top of existing software. They depend on whether the application is ready to expose work in a way agents can understand, execute, and verify. That is where most enterprise systems still fall short.

The issue with current systems is not poor design. They were simply designed for a different user. Most enterprise applications work well for humans because people eventually get used to working around incomplete context, but AI Agents need that context from day one, delivered in a structured, accessible, and governed environment. This brings us to an essential question: how do you design software for AI Agents? The question also makes UX design for AI Agents a broader discipline than visual UX. It is about designing systems in which agents can access actions, understand context, follow rules, and demonstrate what they have done.

Alongside the growing need for agent-first software design, there is a warning sign: over 40% of agentic AI projects risk discontinuation by late 2027 due to escalating costs, unclear business value, or inadequate risk controls. The message is clear: agent adoption is accelerating, but weak foundations can turn promising automation into expensive experimentation.

Here is how businesses can design software for AI Agents.

Most applications still treat the screen as the main operating layer. Buttons, forms, menus, and dashboards are where the work appears to happen. For AI Agents, that is not enough.

Agents need structured actions they can call directly. Any action a human can perform through the UI must be mirrored by a stable API endpoint, ensuring the agent has the same functional reach as a person.

The solution is to build action-ready interfaces with:

Now, this is where the AI Agent architecture design comes into play. The application must expose what agents can do, what data they need, what limits apply, and what response the system should return. For agent-ready systems, application development often moves beyond front-end improvement. The work shifts toward API-first execution paths, back-end workflow redesign, and integration logic that allows agents to perform tasks without depending on screen interpretation.
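To make the idea of an action-ready interface more concrete, here is a minimal sketch of an action registry. It is an illustration under stated assumptions, not a reference design: the names `AgentAction`, `ActionRegistry`, and `update_ticket_status`, as well as the per-run call limit, are hypothetical. The sketch shows each action declaring what data it needs, what limit applies, and what structured response the system returns.

```python
from dataclasses import dataclass
from typing import Any, Callable


@dataclass
class AgentAction:
    """One callable action exposed to agents (all names are hypothetical)."""
    name: str
    required_fields: tuple[str, ...]
    max_calls_per_run: int
    handler: Callable[[dict[str, Any]], dict[str, Any]]
    calls_made: int = 0


class ActionRegistry:
    """Keeps the set of actions an agent may call and enforces their limits."""

    def __init__(self) -> None:
        self._actions: dict[str, AgentAction] = {}

    def register(self, action: AgentAction) -> None:
        self._actions[action.name] = action

    def describe(self) -> list[dict[str, Any]]:
        # Machine-readable catalogue: what can be done, with which inputs and limits.
        return [
            {
                "name": a.name,
                "required_fields": list(a.required_fields),
                "max_calls_per_run": a.max_calls_per_run,
            }
            for a in self._actions.values()
        ]

    def call(self, name: str, payload: dict[str, Any]) -> dict[str, Any]:
        # Every outcome is a structured response the agent can verify.
        action = self._actions.get(name)
        if action is None:
            return {"ok": False, "error": f"unknown action: {name}"}
        missing = [f for f in action.required_fields if f not in payload]
        if missing:
            return {"ok": False, "error": f"missing fields: {missing}"}
        if action.calls_made >= action.max_calls_per_run:
            return {"ok": False, "error": "call limit reached"}
        action.calls_made += 1
        return {"ok": True, "result": action.handler(payload)}


def update_ticket_status(payload: dict[str, Any]) -> dict[str, Any]:
    # Stand-in for a real back-end call; it only echoes the requested change.
    return {"ticket_id": payload["ticket_id"], "status": payload["status"]}


registry = ActionRegistry()
registry.register(
    AgentAction("update_ticket_status", ("ticket_id", "status"), 2, update_ticket_status)
)
```

An agent would first read `registry.describe()` to learn what it is allowed to do, then call `registry.call(...)` and check the `ok` field instead of interpreting a screen.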

Just giving an AI Agent access to an application does not make it useful.

Access only allows the agent into the system. Context tells it what to do, what to avoid, and when to escalate.

For example, an AI Agent with write access to a CRM could technically update customer records. But it also needs to understand account ownership, customer priority, deal stage, approval rules, recent interactions, and data source reliability. Without that context, the agent may act quickly but incorrectly.

The solution is to build a context layer that includes:

Building this context layer is a sophisticated engineering challenge. It moves far beyond simple prompt engineering and into the realm of deep system architecture, requiring a mastery of tool access, retrieval logic, and workflow boundaries. Because this involves a high degree of integration between agent behavior and enterprise application design, many organizations find that successful implementation requires AI Agent developers who understand how to bridge the gap between AI models and legacy software environments.

Open automation is a liability. Controlled automation is an asset. AI Agents are capable of managing end-to-end operational tasks, from data orchestration and record management to autonomous communication and workflow initiation. Without limits and guardrails, those same capabilities can create risk. An agent may modify incorrect data, expose sensitive information, bypass critical approval steps, or execute redundant actions when a system response is ambiguous.

The solution is to make control part of the UX. In the autonomous systems UX paradigm, guardrails are not only backend security measures. They define how agents operate inside the application.

Agent-ready software should include:

The goal is not simply to make agents autonomous but to make them useful within defined business limits. Agents must know when to act, when to stop, when to ask for review, and how to leave a clear record of every action.
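As a rough illustration of guardrails that tell an agent when to act, when to stop, and when to ask for review, the sketch below evaluates each requested action against a policy and records the decision in an audit log. The `Policy` shape, the action names, and the in-memory `audit_log` are assumptions made for this example; a real system would persist the log and load policies from its own permission model.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from enum import Enum


class Decision(Enum):
    ALLOW = "allow"
    NEEDS_REVIEW = "needs_review"
    DENY = "deny"


@dataclass(frozen=True)
class Policy:
    allowed_actions: frozenset[str]
    review_actions: frozenset[str]  # permitted only after a human approves


# In-memory audit trail for the sketch; every decision is recorded.
audit_log: list[dict[str, str]] = []


def evaluate(policy: Policy, agent_id: str, action: str, target: str) -> Decision:
    """Decide whether the agent may act, and record the decision either way."""
    if action in policy.review_actions:
        decision = Decision.NEEDS_REVIEW
    elif action in policy.allowed_actions:
        decision = Decision.ALLOW
    else:
        decision = Decision.DENY
    audit_log.append({
        "time": datetime.now(timezone.utc).isoformat(),
        "agent": agent_id,
        "action": action,
        "target": target,
        "decision": decision.value,
    })
    return decision


# Hypothetical policy: routine updates are allowed, refunds need a human.
policy = Policy(
    allowed_actions=frozenset({"read_record", "update_status"}),
    review_actions=frozenset({"issue_refund"}),
)
```

Anything not listed in the policy is denied by default, which keeps the agent inside defined business limits even when new actions are added later.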

As the shift from human UX to AI UX accelerates, businesses will need applications that support both human judgment and agent-led execution. People will continue to review exceptions, approve sensitive actions, and remain accountable for decisions. Agents will handle structured work when systems grant them the appropriate access, limits, and context. The best software of the next decade will not simply be easy for people to navigate. It will expose the actions, permissions, context, and audit trails agents need to operate safely, while giving humans the control needed to trust the outcome.

2026-05-03 22:09:56

Because “probably present” isn’t good enough at scale

Most distributed systems don’t fail dramatically—they degrade quietly.

…and then it doesn’t.

That tiny mismatch—between what your system believes and what’s actually true—is often caused by one thing:

False positives in Bloom filters

This article explores a simple idea I implemented in a systems project:

What if Bloom filters could understand importance, not just existence?

Bloom filters are everywhere:

They’re popular because they’re efficient. But they come with a trade-off:

They don’t tell you “yes” or “no”—only “maybe.”

In cooperative caching systems:

A false positive means:

At scale, this leads to:

As highlighted in the project design, system performance depends heavily on Bloom filter accuracy.

A Bloom filter is:

A bit array of size m

k hash functions

def insert(x):
    # Set every bit that x hashes to
    for h in hash_functions:
        bit_array[h(x)] = 1

def query(x):
    # A single unset bit proves x was never inserted
    for h in hash_functions:
        if bit_array[h(x)] == 0:
            return False
    return True  # Possibly present
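The pseudocode above leaves `hash_functions` and `bit_array` undefined. A minimal runnable sketch is shown here; deriving the k indexes by salting SHA-256 with the hash number is an assumption chosen for simplicity, not the scheme used in the project, and the sizes are arbitrary.

```python
import hashlib
from typing import Iterator


class BloomFilter:
    """A plain Bloom filter: m bits and k salted hash functions."""

    def __init__(self, m: int, k: int) -> None:
        self.m = m
        self.k = k
        self.bits = [0] * m

    def _indexes(self, item: str) -> Iterator[int]:
        # Assumption: k hash functions are simulated by salting SHA-256.
        for i in range(self.k):
            digest = hashlib.sha256(f"{i}:{item}".encode()).digest()
            yield int.from_bytes(digest[:8], "big") % self.m

    def insert(self, item: str) -> None:
        for idx in self._indexes(item):
            self.bits[idx] = 1

    def query(self, item: str) -> bool:
        # False means "definitely absent"; True only means "possibly present".
        return all(self.bits[idx] for idx in self._indexes(item))


bloom = BloomFilter(m=1024, k=3)
bloom.insert("user:42")
print(bloom.query("user:42"))
```

An inserted item always reports as possibly present. An item that was never inserted usually reports as absent, but can report as present when other items happen to have set all of its bits.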

Different elements can map to the same bits → false positives

In real systems:

But Bloom filters treat everything the same. That’s the flaw.

Instead of a bit array, we use:

def insert(x, importance):
    for h in hash_functions:
        idx = h(x)
        # Keep the highest importance seen at this position
        filter_array[idx] = max(filter_array[idx], importance)

def query(x):
    scores = []
    for h in hash_functions:
        scores.append(filter_array[h(x)])
    return min(scores)  # Confidence score

Now instead of a binary answer, you get a signal of confidence.
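Here is a hedged, runnable sketch of the same idea. It stores an integer importance per slot, keeps the maximum on insert, and returns the minimum on query, as in the pseudocode. The salted SHA-256 hashing, the integer importance scale, and the class name `ImportanceAwareFilter` are assumptions for illustration only.

```python
import hashlib
from typing import Iterator


class ImportanceAwareFilter:
    """Bloom-style filter whose slots hold importance scores instead of bits."""

    def __init__(self, m: int, k: int) -> None:
        self.m = m
        self.k = k
        self.slots = [0] * m  # 0 means "nothing hashed here yet"

    def _indexes(self, item: str) -> Iterator[int]:
        # Assumption: k hash functions are simulated by salting SHA-256.
        for i in range(self.k):
            digest = hashlib.sha256(f"{i}:{item}".encode()).digest()
            yield int.from_bytes(digest[:8], "big") % self.m

    def insert(self, item: str, importance: int) -> None:
        for idx in self._indexes(item):
            self.slots[idx] = max(self.slots[idx], importance)

    def query(self, item: str) -> int:
        # The weakest slot bounds the confidence; 0 means definitely absent.
        return min(self.slots[idx] for idx in self._indexes(item))


ibf = ImportanceAwareFilter(m=1024, k=3)
ibf.insert("hot-object", importance=5)
ibf.insert("cold-object", importance=1)
print(ibf.query("hot-object"), ibf.query("cold-object"))
```

A caller could then compare the returned score with a threshold of its choosing before forwarding a request to a peer cache. That threshold is a tuning decision this sketch does not prescribe.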

for system in ["Bloom", "ImportanceAware"]:
    setup_network()
    for request in requests:
        simulate_request()
        track_false_positive()

Using trace-driven simulation, we measured the number of false positives generated by both Bloom Filter (BF) and Importance-Aware Bloom Filter (IBF) across varying request volumes.

[Figure: False Positive Rate vs Number of Requests. X-axis: number of requests. Y-axis: false positive rate. Blue line: Bloom Filter. Orange line: Importance-Aware Bloom Filter.]

Here’s the basic usage of bloom filters in caching

There are various cooperative caching algorithms that use Bloom filters.

We evaluated these four strategies:

Replace Standard Bloom Filter with Importance-Aware Bloom Filter

Now:

Result:

Hypothesis: Importance Aware Summary Cache performs better than Robinhood, NChance, and Greedy caching

To prove this hypothesis, I compared these algorithms in terms of cache miss, latency, and disk access.

for algorithm in ["ImportanceAware-SummaryCache", "NChance", "Robinhood", "Greedy"]:
    load_trace()
    for request in trace:
        simulate_algorithm(algorithm)
        record_hit_miss()

[Figure: Latency vs Number of Clients. X-axis: number of clients. Y-axis: latency. Blue line: Greedy Forwarding. Green line: Robinhood. Orange line: NChance. Red line: Importance-Aware Summary Cache.]

Summary Cache consistently achieves the lowest request latency. As the system scales, the gap between Summary Cache and other algorithms widens, indicating more efficient request routing and fewer unnecessary hops.

[Figure: Cache Hit Ratio vs Number of Clients. X-axis: number of clients. Y-axis: global cache hit ratio. Blue line: Greedy Forwarding. Green line: Robinhood. Orange line: NChance. Red line: Importance-Aware Summary Cache.]

Summary Cache demonstrates a higher global cache hit ratio across all configurations. This suggests more effective utilization of distributed caches and improved data locality.

[Figure: Disk Access vs Number of Clients. X-axis: number of clients. Y-axis: disk access. Blue line: Greedy Forwarding. Green line: Robinhood. Orange line: NChance. Red line: Importance-Aware Summary Cache.]

Disk access—used as a proxy for cache misses—is significantly lower for Summary Cache. This indicates that the importance-aware approach reduces unnecessary backend fetches and improves overall system efficiency.

Most optimizations focus on:

But this project highlights something else:

Improve the quality of signals your system relies on

Because when your system stops guessing wrong:

Bloom filters are elegant—but they’re not perfect.

By adding importance awareness, we transform them from:

And that’s a shift that scales.

If your distributed system relies on probabilistic structures, ask yourself:

Are you optimizing for memory efficiency… or decision accuracy?

Because at scale, that distinction matters.


2026-05-03 22:06:12

This article explains how workflow engines evolve from reliability tools into latency-sensitive control planes. It highlights the importance of enforcing SLA deadlines, minimizing orchestration paths, bounding event history, and designing agents for scalable execution. The key takeaway is that performance depends less on computation and more on managing time budgets, queue delays, and state efficiently.

2026-05-03 22:00:47

How are you, hacker?

🪐Want to know what's trending right now?:

The Techbeat by HackerNoon has got you covered with fresh content from our trending stories of the day! Set email preference here.

## Why AI Agents Need Self-Updating Data Infrastructure to Stay Intelligent  By @dailyabay [ 5 Min read ]

Discover how AI agents rely on continuously regenerating data pipelines to stay accurate, adaptive, and real-time in modern automated systems. Read More.


By @playerzero [ 11 Min read ] AI isn’t just generating code—predictive software quality platforms help enterprises maintain reliability, accelerate releases, and reduce production risk. Read More.

By @dailyabay [ 5 Min read ] Learn how intelligent data layers transform fragmented data into structured, AI-ready systems for accurate insights, automation, and scalable performance. Read More.

By @vanta [ 17 Min read ] Discover the top GRC platforms for 2026 and how automation, AI, and continuous monitoring are transforming compliance into a growth driver. Read More.

By @chris127 [ 6 Min read ] Blockchains are great at consensus over digital state. They are bad at directly observing physical reality: the price of a liter of bottled water. Read More.

By @tigerdata [ 4 Min read ] TimescaleDB boosts PostgreSQL performance with faster ingest, 50% quicker queries, and 90% storage savings—ideal for scaling IIoT workloads. Read More.

By @hanbe [ 7 Min read ] AI’s real danger isn’t Skynet but a quiet collapse of human purpose as work, dignity, and fertility erode in an automated world. Read More.

By @mexcmedia [ 4 Min read ] MEXC partners with Sumsub to combat AI-driven fraud using biometric KYC, liveness checks, and continuous identity verification for global crypto compliance. Read More.

By @quyhoang [ 3 Min read ] AI isn’t the "new compiler" many believe it to be. Learn where the analogy of AI as a compiler fails and why you should still care about the code Read More.

By @sanya_kapoor [ 12 Min read ] Cursor vs Copilot vs Claude Code: real benchmarks, pricing, and workflows from live projects to help developers pick the best AI coding stack in 2026. Read More.

By @assemblyai [ 9 Min read ] Build a real-time voice assistant in ~120 lines using a single WebSocket API. No separate STT, LLM, or TTS services needed. Read More.

By @embeddednetworking [ 21 Min read ] Article talks about STM32 microcontrollers with a built-in Ethernet controller. That includes microcontrollers from F1, F2, F4, F7, H5, H7 series. Read More.

By @padmanabhamv [ 7 Min read ] Google’s Agentic Data Cloud rethinks data platforms for AI agents. Learn how its architecture enables scalable, multi-cloud AI systems. Read More.

By @cysic [ 6 Min read ] Decentralised compute improves access but still relies on trust. Verifiable compute is needed to prove results and make it truly trustless. Read More.

By @ishanpandey [ 4 Min read ] ClawBank's AI agent Manfred filed a US LLC, got an EIN, and opened an FDIC-insured account. The first software to incorporate itself. Read More.

By @ngirchev [ 6 Min read ] AI coding tools boost productivity but can create dependency. This piece explores how “vibe coding” turns development into a reward loop. Read More.

By @ktdevjournal [ 4 Min read ] Why “on track” status hides real risks in game production and creates blind spots that lead to sudden failures. Read More.

By @aimodels44 [ 3 Min read ] This is a simplified guide to an AI model called Kimi-K2.6 [https://www.aimodels.fyi/models/huggingFace/kimi-k2.6-moonshotai?utmsource=hackernoon&utmmedium… Read More.

By @thegeneralist [ 7 Min read ] LinkedIn confirmed it scans your browser for 6,236 extensions on every page load. The security explanation is coherent. The composition of the list isn't. Read More.

By @aimodels44 [ 2 Min read ]

This is a simplified guide to an AI model called gpt-image-2 [https://www.aimodels.fyi/models/replicate/gpt-image-2-openai?utmsource=hackernoon&utmmedium=r… Read More.

🧑💻 What happened in your world this week? It's been said that writing can help consolidate technical knowledge, establish credibility, and contribute to emerging community standards. Feeling stuck? We got you covered ⬇️⬇️⬇️

ANSWER THESE GREATEST INTERVIEW QUESTIONS OF ALL TIME

We hope you enjoy this free reading material. Feel free to forward this email to a nerdy friend who'll love you for it.

See you on Planet Internet! With love,

The HackerNoon Team ✌️


2026-05-03 22:00:12

In Part 1 of this series, we explored the “HTML-over-the-wire” philosophy and successfully scaffolded a beautiful, albeit static, Kanban board using Symfony 7.4, Twig, and Tailwind CSS. We avoided the “JavaScript Tax” by relying on AssetMapper instead of Webpack, and we leveraged PHP 8.3 Backed Enums to keep our domain model strictly typed.

Now, we face the core challenge: how do we make this static board interactive? How do we allow users to drag and drop cards, and crucially, how do we make those changes reflect instantly on the screens of every other user viewing the board?

If we were using React, this is where we would typically reach for a heavy library like react-beautiful-dnd, set up a complex Redux store or context provider to manage the optimistic state, and write custom WebSocket connection logic to handle real-time events.

With Symfony UX, we take a radically simpler, standards-based approach:

Let’s dive into the code.

Stimulus is not meant to replace React or Vue. It is a “modest” framework. It doesn’t manage state, and it doesn’t render HTML. It works by attaching JavaScript controllers to existing DOM elements via data-controller attributes. When that HTML appears on the screen, the Stimulus controller wakes up and attaches event listeners. When the HTML leaves the screen, the controller disconnects and cleans up.

We need a controller to handle the drag-and-drop mechanics. The HTML5 Drag and Drop API is notorious for being slightly finicky, but Stimulus makes it manageable.

Create a new file at assets/controllers/drag_drop_controller.js:

// assets/controllers/drag_drop_controller.js
import { Controller } from '@hotwired/stimulus';

export default class extends Controller {
    // We define targets so we can easily reference our columns in the DOM
    static targets = ["column"];

    // Triggered when a user starts dragging a card (dragstart event)
    start(event) {
        // Store the ID of the task being dragged in the drag payload
        // We get this from a data attribute we will add to the HTML: data-task-id
        event.dataTransfer.setData("text/plain", event.currentTarget.dataset.taskId);
        event.dataTransfer.effectAllowed = "move";
    }

    // Triggered constantly as a card is dragged over a valid dropzone (dragover event)
    over(event) {
        // Crucial HTML5 quirk: We MUST prevent default behavior to allow a drop to occur.
        // By default, HTML elements do not accept drops.
        event.preventDefault();
        event.dataTransfer.dropEffect = "move";
    }

    // Triggered when the user releases the mouse button over a column (drop event)
    drop(event) {
        event.preventDefault();

        // 1. Get the data we stored during the 'start' event
        const taskId = event.dataTransfer.getData("text/plain");

        // 2. Identify the target column we dropped it into
        const targetColumn = event.currentTarget.closest('[data-drag-drop-target="column"]');
        if (!targetColumn) {
            return; // Dropped outside a known column, so there is nothing to move
        }
        const newStatus = targetColumn.dataset.status;

        // 3. The "Optimistic UI" Update
        // We move the DOM element instantly on the client side so the user
        // feels zero latency. We don't wait for the server response to give visual feedback.
        const taskElement = document.getElementById(`task-${taskId}`);
        if (taskElement) {
            targetColumn.querySelector('.space-y-3').appendChild(taskElement);
        }

        // 4. Sync with the Server
        // We send a lightweight fetch request in the background to persist the change.
        fetch(`/task/${taskId}/move`, {
            method: 'POST',
            headers: {
                'Content-Type': 'application/json',
                'X-Requested-With': 'XMLHttpRequest' // Identifies this as an AJAX request to Symfony
            },
            body: JSON.stringify({ status: newStatus })
        }).catch((error) => console.error('Failed to persist task move', error));
    }
}

Now, we must tell our HTML to use this controller. We modify the board container and the individual cards.

In templates/board/index.html.twig, add the controller to the main wrapper and define the columns as targets:

{# templates/board/index.html.twig #}
...
{# Initialize the drag_drop controller here.
   Every element inside this div is now under the controller's purview. #}
<div class="min-h-screen bg-slate-50 p-8" {{ stimulus_controller('drag_drop') }}>
    ...
    {% for status in statuses %}
        {#
            Mark this div as a column target for Stimulus
            and define its status so JS can read it on drop
        #}
        <div class="flex-1 bg-slate-200 rounded-xl p-4 min-h-[500px]"
             data-drag-drop-target="column"
             data-status="{{ status.value }}">
            ...

Next, make the cards draggable and wire up the events in templates/board/_card.html.twig:

{# templates/board/_card.html.twig #}
<turbo-frame id="task-{{ task.id }}">
    {#
        1. draggable="true" enables the HTML5 API
        2. data-task-id stores the ID for the JS to read
        3. data-action maps DOM events (dragstart, dragover, drop) to our Stimulus controller methods,
           using the controller's dashed identifier (drag-drop)
    #}
    <div class="bg-white p-4 rounded shadow mb-3 cursor-move hover:shadow-md transition-shadow"
         draggable="true"
         data-task-id="{{ task.id }}"
         data-action="dragstart->drag-drop#start dragover->drag-drop#over drop->drag-drop#drop">
        ...

If you refresh your browser now, you can pick up a card and drop it into another column! The UI updates instantly. However, if you refresh the page, the card snaps back to its original position. We haven’t built the backend endpoint to persist the data yet.

Let’s create the endpoint in our BoardController to handle the POST request sent by our Stimulus controller. We must ensure this endpoint is secure and validates the incoming data.

// src/Controller/BoardController.php

// ... [previous imports]
use Symfony\Component\HttpFoundation\Request;
use Doctrine\ORM\EntityManagerInterface;

class BoardController extends AbstractController
{
    // ... [index method from Part 1]

    #[Route('/task/{id}/move', name: 'app_task_move', methods: ['POST'])]
    public function moveTask(
        Task $task,
        Request $request,
        EntityManagerInterface $em
    ): Response {
        // Parse the JSON payload sent by fetch()
        $data = json_decode($request->getContent(), true);

        // Safety First: Attempt to cast the string to our Backed Enum.
        // If the client sends an invalid status (e.g., 'deleted'), tryFrom returns null.
        $newStatus = \App\Enum\TaskStatus::tryFrom((string)($data['status'] ?? null));

        if (!$newStatus) {
            return $this->json(['error' => 'Invalid status provided.'], 400);
        }

        // Update the entity and save to SQLite
        $task->setStatus($newStatus);
        $em->flush();

        return $this->json(['success' => true]);
    }
}

Now, if you drag a card and refresh the page, the change persists! We have a functional, persistent Kanban board.

But we promised real-time collaboration. If User A moves a card, User B (looking at the same board on a different computer) needs to see that card move instantly without refreshing.

To achieve real-time synchronization across different browsers, we need two distinct components:

Most developers immediately think of WebSockets for real-time features. However, WebSockets are bidirectional and complex to scale in PHP, requiring persistent daemon processes.

We don’t need bidirectional communication here. The client talks to the server via standard AJAX POST requests. We only need the server to broadcast changes down to the clients. For this, Server-Sent Events (SSE) via the Mercure protocol are a better fit.

Mercure is a hub. Your PHP app sends a single HTTP POST request to the Mercure Hub with the payload. The Mercure Hub (which is heavily optimized in Go) maintains thousands of open SSE connections to the browsers and distributes the payload to them instantly.
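To show what that single publish request looks like on the wire, here is a language-neutral sketch written in Python rather than PHP. It only builds the request and does not send it. The hub URL and the placeholder token are assumptions for illustration; in the Symfony app, the Mercure component assembles the equivalent request for you.

```python
from urllib.parse import parse_qs, urlencode
from urllib.request import Request


def build_publish_request(hub_url: str, publisher_jwt: str, topic: str, data: str) -> Request:
    """Build (but do not send) a Mercure publish request."""
    # The hub expects a form-encoded body carrying the topic and the payload.
    body = urlencode({"topic": topic, "data": data}).encode("utf-8")
    return Request(
        hub_url,
        data=body,
        method="POST",
        headers={
            # The publisher authenticates with a JWT that grants publish rights.
            "Authorization": f"Bearer {publisher_jwt}",
            "Content-Type": "application/x-www-form-urlencoded",
        },
    )


# Hypothetical values: a local hub URL and a placeholder token.
request = build_publish_request(
    "https://localhost/.well-known/mercure",
    "PLACEHOLDER_JWT",
    "board",
    '<turbo-stream action="remove" target="task-5"></turbo-stream>',
)
print(request.get_method(), parse_qs(request.data.decode("utf-8"))["topic"])
```

The application makes one short-lived request per change, while the hub holds the long-lived SSE connections to every browser.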

A Turbo Stream is simply a small snippet of HTML wrapped in specific tags. It instructs the Turbo library running on the client to perform a specific DOM mutation (append, prepend, replace, remove, update).

For example, to remove task #5 from its old column and append it to the ‘done’ column, the stream we need to broadcast looks like this:

<turbo-stream action="remove" target="task-5"></turbo-stream>
<turbo-stream action="append" target="column-done">
    <template>
        <!-- The fully rendered HTML of the card goes here -->
    </template>
</turbo-stream>
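The remove-then-append pair can be assembled with a small helper. The sketch below is in Python purely to illustrate the string structure; `build_move_streams` is a hypothetical name, and the `column-<status>` target assumes each column element carries a matching `id`. The card HTML goes into the template unescaped because it is markup meant to be rendered, while the status is escaped because it lands inside an attribute.

```python
from html import escape


def build_move_streams(task_id: int, status: str, card_html: str) -> str:
    """Return a remove + append Turbo Stream pair for a moved card."""
    remove = f'<turbo-stream action="remove" target="task-{task_id}"></turbo-stream>'
    append = (
        f'<turbo-stream action="append" target="column-{escape(status, quote=True)}">'
        f"<template>{card_html}</template>"
        "</turbo-stream>"
    )
    return remove + "\n" + append


streams = build_move_streams(5, "done", '<div class="card">Write docs</div>')
print(streams)
```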

Let’s modify our moveTask method. Once the database is updated successfully, we will generate the Turbo Stream HTML using Twig and publish it to the Mercure Hub.

// src/Controller/BoardController.php

// ... [previous imports]
use Symfony\Component\Mercure\HubInterface;
use Symfony\Component\Mercure\Update;
use Psr\Log\LoggerInterface;

class BoardController extends AbstractController
{
    // ... [index method]

    #[Route('/task/{id}/move', name: 'app_task_move', methods: ['POST'])]
    public function moveTask(
        Task $task,
        Request $request,
        EntityManagerInterface $em,
        HubInterface $hub, // Inject the Mercure Hub
        LoggerInterface $logger
    ): Response {
        $data = json_decode($request->getContent(), true);
        $newStatus = \App\Enum\TaskStatus::tryFrom((string)($data['status'] ?? null));

        if (!$newStatus) return $this->json(['error' => 'Invalid status'], 400);

        $task->setStatus($newStatus);
        $em->flush();

        // 1. Render the HTML of the updated card using our existing Twig partial!
        // This is the beauty of the stack: we reuse the same templates.
        $html = $this->renderView('board/_card.html.twig', [
            'task' => $task
        ]);

        // 2. Construct the Turbo Stream payload
        $stream = sprintf(
            '<turbo-stream action="remove" target="task-%d"></turbo-stream>
            <turbo-stream action="append" target="column-%s">
                <template>%s</template>
            </turbo-stream>',
            $task->getId(),
            $task->getStatus()->value,
            $html
        );

        // 3. Publish the stream to the Mercure Hub on the 'board' topic
        try {
            $update = new Update('board', $stream);
            $hub->publish($update);
        } catch (\Exception $e) {
            // Log connection errors (e.g., if the Mercure hub is down in dev)
            $logger->warning('Mercure hub not reachable: ' . $e->getMessage());
        }

        return $this->json(['success' => true]);
    }
}

Mercure is secure by design. Browsers cannot just listen to any topic; they must be authorized. Mercure uses JWT (JSON Web Tokens) to handle this.

Before a browser can connect to the Mercure hub, our Symfony app must set a special cookie (mercureAuthorization) containing a JWT that grants permission to subscribe to specific topics. We handle this in our initial index route when the board first loads.

Here is the complete setup in BoardController::index:

// src/Controller/BoardController.php
...
use Symfony\Component\HttpFoundation\Cookie;
use Lcobucci\JWT\Configuration;
use Lcobucci\JWT\Signer\Hmac\Sha256;
use Lcobucci\JWT\Signer\Key\InMemory;
...

#[Route('/board', name: 'app_board')]
public function index(TaskRepository $taskRepository): Response
{
    $response = $this->render('board/index.html.twig', [
        'tasks' => $taskRepository->findAll(),
        'statuses' => TaskStatus::cases(),
    ]);

    // Generate the JWT authorizing the client to subscribe to the Hub
    if (class_exists(Configuration::class)) {
        $config = Configuration::forSymmetricSigner(
            new Sha256(),
            InMemory::plainText($_ENV['MERCURE_JWT_SECRET'])
        );

        $token = $config->builder()
            ->withClaim('mercure', ['subscribe' => ['*']]) // Authorize all topics for this demo
            ->getToken($config->signer(), $config->signingKey())
            ->toString();

        // Set the cookie on the response
        $response->headers->setCookie(Cookie::create(
            'mercureAuthorization',
            $token,
            new \DateTime('+1 day'),
            '/',
            null,
            false,
            false, // HttpOnly false is required for local debug/Mercure discovery
            false,
            Cookie::SAMESITE_LAX
        ));
    }

    // Inform the client where the Mercure Hub is located
    $response->headers->set('Link', sprintf('<%s>; rel="mercure"', $_ENV['MERCURE_PUBLIC_URL']));

    return $response;
}

Now, the backend is broadcasting, and the browser is authorized. We just need to tell our frontend HTML to establish the connection.

In templates/board/index.html.twig, add the turbo_stream_listen Twig helper anywhere inside the body. This helper renders the attributes that connect the element to the Mercure Hub and subscribe it to the ‘board’ topic.

{# templates/board/index.html.twig #}
{% extends 'base.html.twig' %}

{% block body %}
<div class="min-h-screen bg-slate-50 p-8" {{ stimulus_controller('drag_drop') }}>
    {#
        Tell Turbo to establish the SSE connection and listen
        for Turbo Streams published to the 'board' topic.
    #}
    <div {{ turbo_stream_listen('board') }}></div>

    <div class="max-w-7xl mx-auto">
        ...

Drag a card in Window A. Watch Window B. The card instantly jumps to the new column, matching Window A.

You have just built a real-time collaborative application.

Let’s review what we have accomplished without writing a single line of a heavy JS framework:

This is the power of the modern Symfony UX ecosystem. It allows developers to build highly interactive, “SPA-like” applications with a fraction of the complexity, maintaining the developer experience, security, and rendering speed of a traditional server-side application. The HTML-over-the-wire revolution is here, and Symfony is leading the charge in the PHP world.

Source Code: You can find the full implementation and follow the project’s progress on GitHub: [https://github.com/mattleads/symfony-kanban]

If you found this helpful or have questions about the implementation, I’d love to hear from you. Let’s stay in touch and keep the conversation going across these platforms:


2026-05-03 22:00:05

Fundamental concepts in computer science dealing with organizing and processing data efficiently, essential for solving complex computational problems and writing optimized code.

Design your implementation of the linked list. You can choose to use a singly or doubly linked list.


Data structures and algorithms allow you to write better code, solve complex problems, and understand the inner workings of computer programs.

Exploring coding platforms: My insights and experiences shared. Discover the pros and cons of informed choices. Join me on this insightful journey!

Let's learn interesting Rust concepts like smart pointers and ownership with a classic data structure and algorithm together.

3x+1 or Collatz conjecture is a simple maths problem that can easily be implemented using a simple while loop in Python.

Coding interviews are such an important part of a programmer's life that there is no getting away from them. They are the first hurdle to cross to get the software developer job that programmers wish for throughout their school and college days.

I had to quit DSA and CP within a month because of the overwhelming exhaustion. This blog discusses the mistakes that I made while learning DSA and CP.

Understanding algorithms and data structures is crucial to enhancing your performance 10x more than your peers who don't. This is because you analyze problems.

Understand how to do binary subtraction in data structures. Binary subtraction is one of the four arithmetic operations where we perform the subtraction method.

Design patterns are reusable solutions for common problems that occur during software development. Here are my 9 favorite design patterns for JavaScript

Learn 5 steps to Improve DSA skills. Data structure and algorithms are the most important skills to be prepared for an interview at a top product-based company

Technical interviews used to be a challenge for me. I have a bachelor’s degree in Electronics & Telecommunications and a master’s degree in Computer Science.

It was August last year and I was in the process of giving interviews. By that point in time, I was already interviewing for Google India and Amazon India for Machine Learning and Data Science roles respectively. And then my senior advised me to apply for a role in Facebook London.

Well, this is where you are separated from the good or excellent software developers. At the beginning, at least in my case and for most people I know, you will feel incompetent or like an idiot. You ask yourself how it is possible that you cannot understand this, and then you get frustrated.

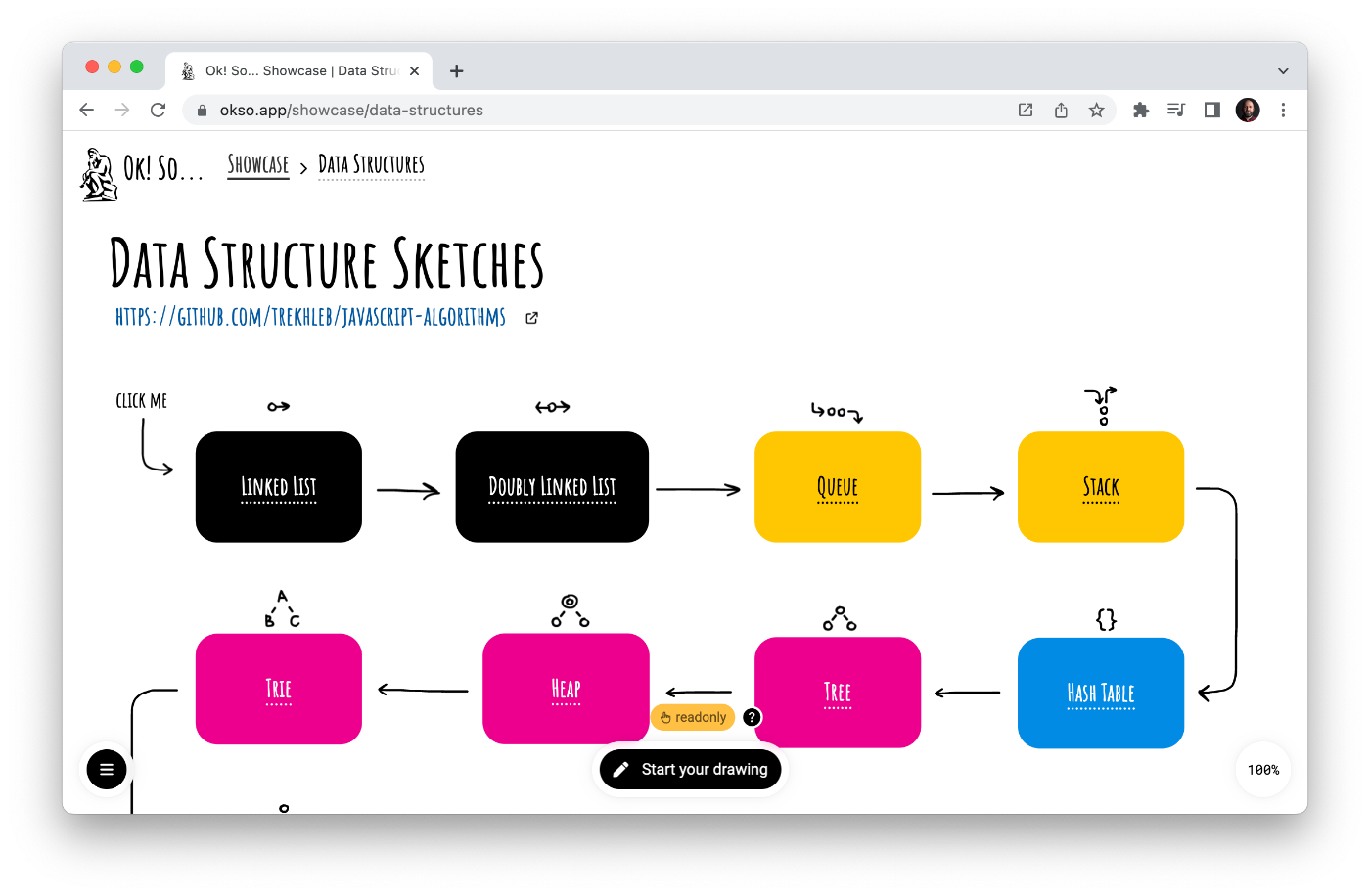

Minimalistic Data Structure Sketches

Programming is a complex and multifaceted field that encompasses a wide range of mathematical and computational concepts and techniques.

In this post, we will look at prefix sums and how they can be used to solve a common coding problem, that is, calculating the sum of an array (segment). This article will use Java for the code samples but the concept should apply to most programming languages.

Karatsuba algorithm's explanation with examples and illustrations.

This article will introduce the concepts and topics common to all programming languages, that beginners and experts must know!

We are going to start a series of lessons based on Data Structures and Algorithms.

The need of the hour, especially in the corporate world, is to find professionals who have sufficient knowledge about data structures and algorithms.

How often have you wanted a piece of information and have turned to Google for a quick answer? Every piece of information that we need in our daily lives can be obtained from the internet. You can extract data from the web and use it to make the most effective business decisions. This makes web scraping and crawling a powerful tool. If you want to programmatically capture specific information from a website for further processing, you need to either build or use a web scraper or a web crawler. We aim to help you build a web crawler for your own customized use.

Leetcode.com is a website where people–mostly software engineers–practice their coding skills. It’s pretty similar to sites like HackerRank & Topcoder which will rank your code written for a particular problem against the ones submitted by other users.

In this article we cover the 5 Graph Patterns for coding interviews. This would help you prepare for coding interviews of top companies!

The Why?

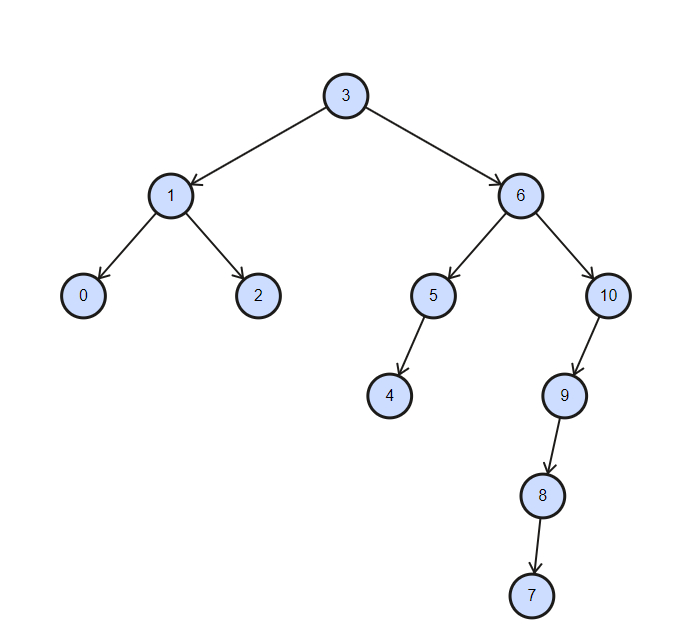

When I faced a search problem in Ruby the first thing that came to my head was a binary tree (yes, I’m a weirdo). After some search about it, I decided to create an open-source tree of my own so anyone can just download and use it in the future.

An algorithm can take over the world! But we still can take over some decentralized algorithms, especially the ones in crypto.

Finding the next biggest value in an array of integers

Programs may freeze for many reasons, such as software and hardware problems, software bugs, and among others, inefficient algorithm implementations.

In "C" Language, you have structs. With the help of structs, we can define the return data type. You can do the same using classes in python.

I've compiled some of the most useful resources for DSAs, interview practice sites, commonly asked technical questions, and sites to build practical projects.

Binary Lifting and its use in finding Lowest Common Ancestor (LCA). Explore this amazing algorithm that speeds up ancestor queries in the tree data structure.

My favorite parts of Computer Science are things that remind me of being human. Believe it or not, computers have this emergent property where, as they become more complex, they start to do things just like us. We touched on this when I wrote about Recursion. There I discussed how a computer function will call itself over and over until it gets the answer it wants. So very… human of it, and to me this touches on problem solving. Memoization can extend this human-like quality further.

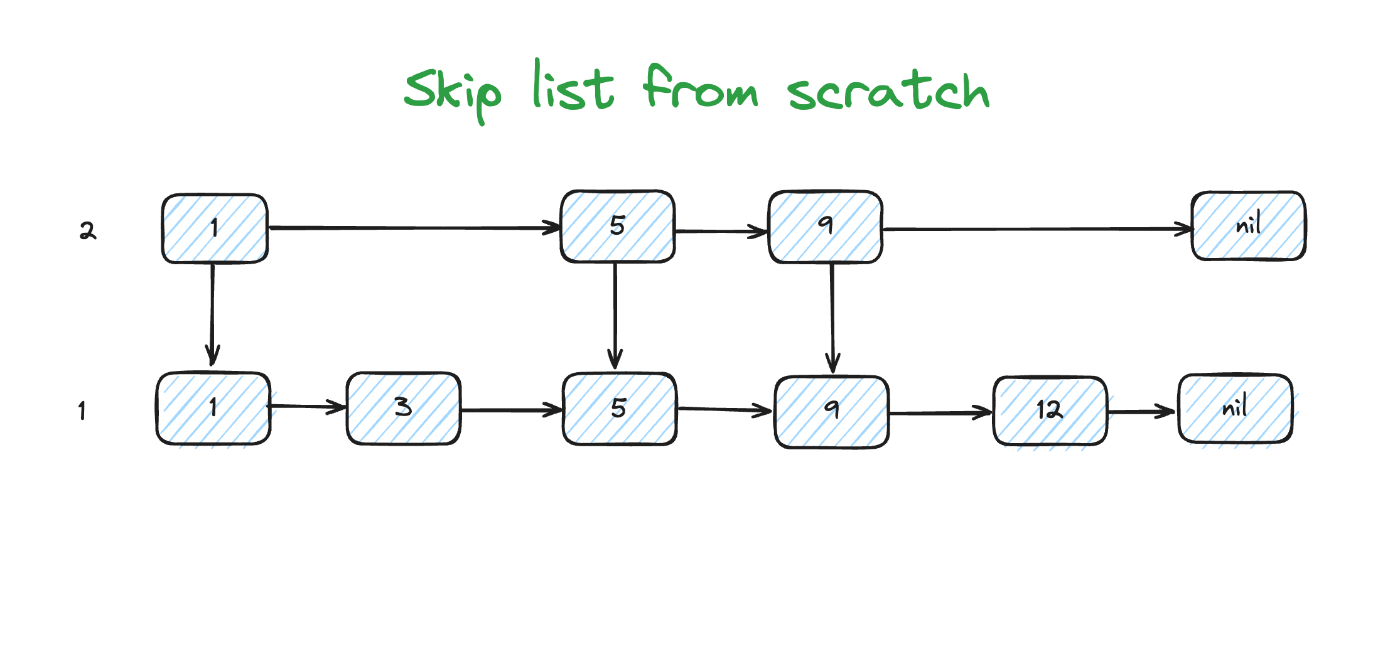

A skip list is a probabilistic data structure that serves as a dynamic set. It offers an alternative to red-black or AVL trees.

Learn about different types of graphs in the data structure. Graphs in the data structure can be of various types, read this article to know more.

Learn about why data structures and algorithms are important, and why I failed a Google interview.

Data Structures and Algorithms are among the most important skills that every computer science student must have. It is often seen that people with good knowledge of these technologies are better programmers than others.

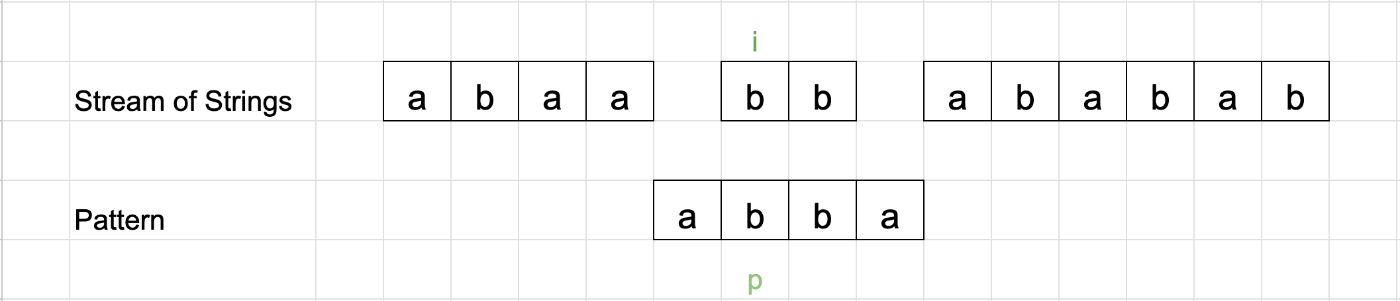

An article explaining the Knuth-Morris-Pratt Algorithm.

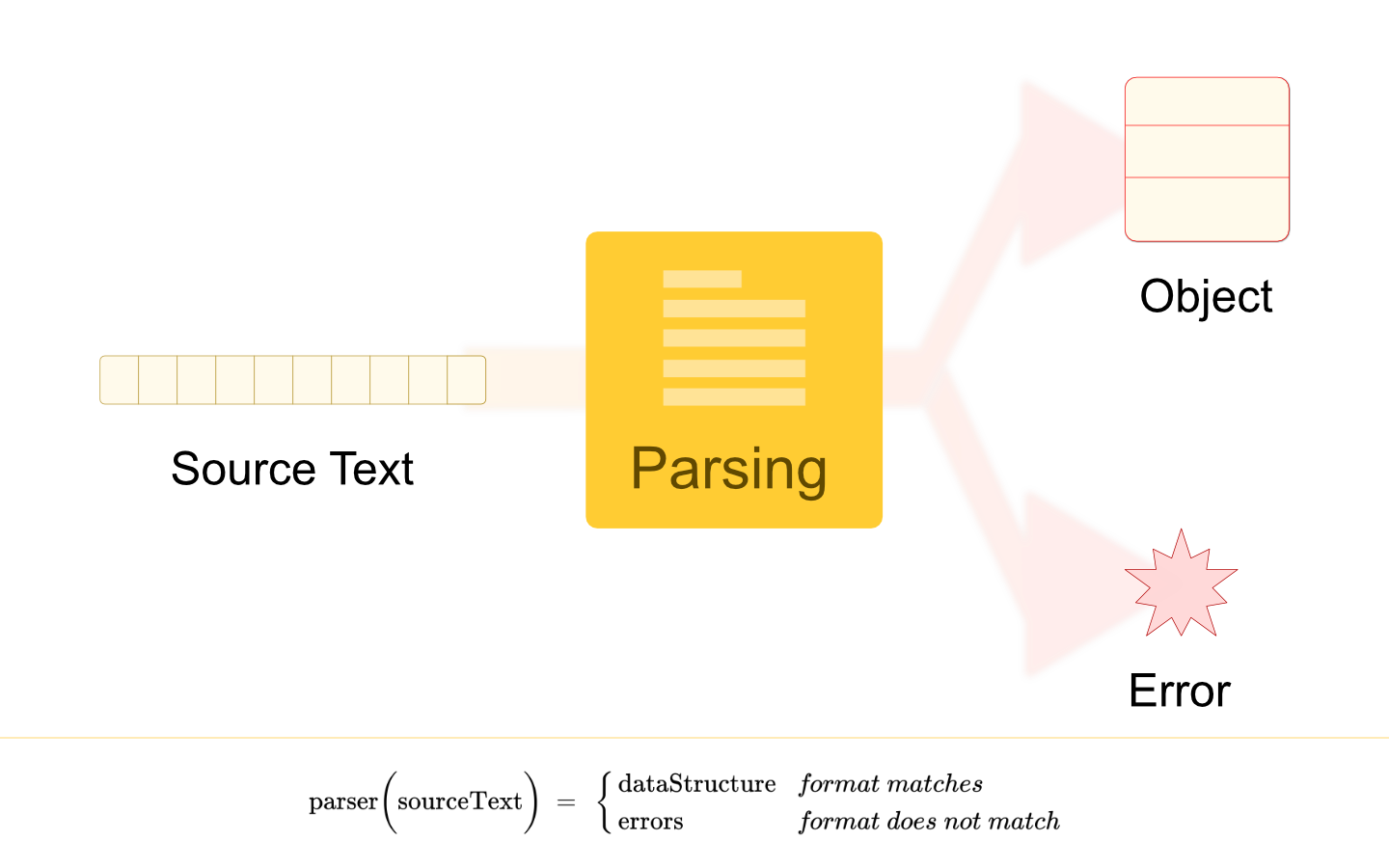

Parsing is a process of converting formatted text into a data structure. A data structure type can be any suitable representation of the information engraved in the source text.

Heap Sort algorithm with step-by-step Python and JavaScript implementations.

If you have not read the first blog on the why, how, and hope of this series, check out the first here

The binary search tree has one serious problem. They can transform into a linked list. The red-black tree solves this problem.

Landing a job at Google, Apple and other similar companies in the world can seem like an impossible task. Read this guide so you can land an interview in tech!

Do you want to pursue a career in Technology and don’t know where to start?

How to run a distributed data-mining operation to source and process crypto market data at zero cost.

DSA-Guide: Guide to DSA Problem for Leetcode, Codechef, CSES, GFG

Boost your developer skills with Open-source. Learn about Linux kernel data structures, Kubernetes architectural design and PostgreSQL algorithms.

Using Kotlin at your technical interviews!

Discover how to boost Apache Spark's query efficiency using data sketches for fast counts and intersections in large datasets. Essential for data pros!

We figured out how the search and insert operation works in the red-black tree in the first part. In this part, we figure out how the delete operation works.

Create a classic Snake game using Python and Pygame. Learn game development basics, including loops, conditionals, and rendering graphics.

It’s not just the running time; it’s the space usage too. We see algorithms used in pretty much every program that’s larger than a college project.

Automate your daily task with Cloudflare Worker's Cron job with an example using LeetCode and Todoist. Test Cloudflare worker cron trigger using Miniflare.

A shift to a decentralized internet may not be easy but it is happening.
