2026-05-27 00:02:14

There’s a guy who sends me a nasty reply to every one of my posts. Within 24 hours of an email going out, he arrives in my inbox like:

2026-05-12 21:23:30

Say what you will about the Union of Soviet Socialist Republics, its 1936 constitution was a banger.

It guaranteed freedom of speech, the press, assembly, and protest. It extended equal rights to all citizens, regardless of race or gender. It shortened the working day to seven hours, affirmed “the right to rest and leisure”, and offered free education and free health care to all, including a “wide network of health resorts for the working people.” You gotta admit this is a lot better than certain other constitutions that, say, count slaves as three-fifths of a person.

In the two years after the constitution was adopted, however, Stalin purged something like a million people. Eighteen million Soviet citizens would be forced into gulag camps and colonies over the next three decades. This was all clearly in violation of Articles 103, 111, and 127, and many others besides. How could one of the greatest tragedies in human history happen when it was very explicitly not allowed?

The uncomfortable answer is that a critical mass of people found prisons and purges palatable. We say that “Stalin” did all these things as if he held the gun himself1, but obviously you can’t run a gulag without guards, secretaries, accountants, engineers, architects, doctors, drivers, quartermasters, mid-level managers, kangaroo court judges, and all manner of flunkies, patsies, and stool pigeons. Apparently, hundreds of thousands of regular people were willing to commit their own personal portion of an atrocity.

The lesson here is obvious: rules don’t matter unless people act like they matter. Writing down laws does not endow them with physical force or psychic potency. We all know this. We all believe this.

So why don’t we act like it?

Ever since the replication crisis began over a decade ago, most folks have agreed that the problem is the laxness of our rules, and that the solution is to tighten them. We should mandate replication, preregistration, public data, bigger studies and tinier p-values. The more we can reduce researcher degrees of freedom, the thinking goes, the better our science will be.

Vindicating this theory, a big group of researchers published a paper in 2023 showing that it’s possible to achieve high rates of replicability as long as you follow a set of “rigor-enhancing practices: confirmatory tests, large sample sizes, preregistration and methodological transparency.”

One year later, the paper was retracted because it...failed to follow those rigor-enhancing practices. The journal editors claim the authors were not transparent about their methods, did not abide by their own preregistration, and cherry-picked their results.2

Clearly, we’re not missing the right regulations. We’re missing the right motivations. If you want to discover true things about the world, you’ll be interested in the guidelines that help you do that, and you’ll be thankful to the people who develop them. That’s how the replication crisis could have played out: someone demonstrates that our sample sizes are too small, and we all go, “oh wow we should make our sample sizes bigger because we want to know what’s real and what’s not”.

But if you’re not actually seeking the truth, no amount of “rigor-enhancing practices” will ever cause you to find it. That’s why our revolution in scientific regulation has mostly failed. We require researchers who conduct clinical trials to post the results on a public website, but only 45% of them do. We tell people to specify their primary outcomes beforehand, but if their studies don’t work as planned, they just sneak in different analyses—one study on anesthesiology experiments found that 92%(!) of them did this. We make researchers end their papers by saying “data available on request” and then only 17% of them actually make their data available on request.3

You can’t turn a cheat into a scientist by making a rule against cheating. The most important “rigor-enhancing practice” is caring about getting things right, and without that, nothing else matters.

Every couple will eventually have some version of the “Let’s Make a Rule” fight, where they try to solve some interpersonal issue through legislation. “You think I don’t take enough interest in your life, so let’s make a rule: I have to ask you three things about your day before I start telling you about mine.” The theory behind the Let’s Make a Rule fight is that we could live in harmony with one another if we could just compile all of our expectations into one big Google Doc.

The Let’s Make a Rule fight never leads to a satisfying conclusion because nobody actually wants their partner to follow the rules. They want their partner to care. Being asked “How was your day, dear?” through gritted teeth because that’s what our Relationship Handbook says to do is probably worse than not being asked at all.

You want your partner to realize that your preferences are not silly affectations that can be belittled, ignored, or disputed until they go away, that they are, in fact, load-bearing parts of your personality, and to reject them is to reject you. In return, you have to realize that some of your preferences are more malleable than you thought, that maybe they don’t all have to be foundational to your sense of self, and that some of them can be bent or jettisoned in the interests of coexistence.

This is the work of love, and it takes a lifetime. You can’t speedrun it by filling out a spreadsheet or signing a contract. The frictions of a lifelong relationship can be made intelligible (that is, understandable to the people involved), but they cannot be made legible (that is, understandable to everyone else).

The best couples I know have all sorts of arrangements and accommodations that make zero sense to me but perfect sense to them, and that’s exactly why they work well together. A successful relationship is nothing more than a package of haphazard remedies and rickety fixes that people would only ever devise and maintain when they really, really want to be together.

Sometimes police officers break the rules, and the solution is obvious: we just need to police the police.

If you strap a camera to every officer’s chest and record everything they do, then they won’t misbehave in the first place. And even if they do, we can discipline or fire them. This is the most foolproof plan of all time, supported by an unheard-of 89% of Americans. Police departments all over the country agreed—now every single large precinct has body cameras, and a majority of smaller precincts do too. The technology is out there and the data is in; how is our foolproof plan working out?

Not well. According to a 2022 Department of Justice report, body cams might not have any meaningful effect on police behavior. One meta-analysis found a moderate drop in citizen complaints, but didn’t find any difference on any other outcomes: use of force, assaults against police officers, number of incident reports, etc. Another meta-analysis found no evidence of effects on downstream outcomes like conviction rates. The DoJ warns that we need more research, but this is enough to rule out gigantic effects. If body cams do anything at all, they probably don’t do much.

Maybe our plan just didn’t go far enough. Bad cops can simply turn their cameras off or cause the footage to mysteriously go missing. Even if the video exists, it’s only one version of events, and it’s from the officer’s point of view. Maybe what we really need is a swarm of drones to follow every police officer, recording every action from every angle, and they never shut off and their footage automatically gets uploaded to a public database.

Or maybe we misdiagnosed the problem in the first place. We assumed that the justice system was eager to hold bad cops accountable and that all it was missing was the necessary evidence. It turns out the justice system is actually rather ambivalent about holding bad cops accountable, and so it handles additional evidence as halfheartedly as it handled all of the evidence it already had. A camera can allow you to see, but it can’t make you look.

Everywhere—from politics to science, from love to law—we are constantly asking: how can we get people to do the right thing?

And the answer comes to us so naturally: prod them, push them, shame them, shun them, ban them, beat them!

But every time we try to do this, we run into the same paradox that the Roman poet Juvenal pointed out 2,000 years ago: quis custodiet ipsos custodes? Who watches the watchmen?4 If you try to police the bad actors, you will soon find that some of your police are bad actors as well. And if your solution to bad police is to police the police, then who will police the police that police the police?

At some point, there has to be an Unwatched Watchman, someone who will do the right thing not because they are forced to, but because they want to. Instead of asking, “How can we get people to do the right thing,” we should ask, “How can we get people to want the right thing?”

I think there are only two ways that people’s desires change: they either get put in a crock pot, or they get hit with a lightning bolt. That is, people either change so slowly that they never notice it, or they change so suddenly that they never forget it. There is no in between.

Here’s what a lightning bolt looks like.

One day in seventh grade, my teacher—let’s call him Mr. L—told me and two other kids to put up the American flag outside the school. While another boy undid the knot on the flag pole, I absent-mindedly draped the flag over my shoulders like a cape, as I had seen Olympic athletes do on TV.

Mr. L, who was a Vietnam War veteran, apparently saw me do this, and when we came back, he gave me a dressing down in front of the whole class. I had disrespected the flag of our country by using it as a costume, he said. My behavior was shameful and inexcusable, and I must sit at my desk silently and meditate on the wrongness of my action.

That might sound like nothing, but I was a Good Kid. I had never gotten in real trouble in school before, and so this experience was new and humiliating. In that moment, I silently vowed to never, ever be like Mr. L. As far as I was concerned, the Stars and Stripes were a symbol of unconscionable cruelty against the most innocent and well-meaning of people, namely, me.

Mr. L got me to do the right thing (at least the thing he thought was right)—I never draped myself in the flag again. But he didn’t get me to want the right thing. Before Mr. L, I told people I wanted to be a soldier when I grew up. After Mr. L, I told people I wanted to be a writer. One cruel remark undid a lifetime of saying the Pledge of Allegiance.

Here’s what a crock pot looks like.

Most scientists learn their trade from scratch in young adulthood. They do it not so much by sitting through lectures, but by hanging around in labs, watching what other people do, and doing rinky-dink versions of their own experiments that get critiqued by people with more experience. Therefore, the kind of scientist you become depends a lot on the crock pot you steep in.

Many of those crock pots, it turns out, are filled with rancid juices. If your professor hands you a dataset and tells you to “Go on a fishing expedition for something—anything—interesting” and then praises you when you return with significant p-values, of course you’re going to internalize the wrong lessons. If you hang around people who bury their inconvenient findings, or who stuff their reference lists with self-citations, or who take other people’s work without crediting them, you’re going to grow into that kind of person yourself. You won’t feel like you’re becoming a villain; you’ll feel like you’re becoming an adult.

Once you’ve spent a few years in the wrong crock pot, you’re cooked, both literally and figuratively. No amount of legislation can turn you raw again or re-cook you the right way. You are immune to any “rigor-enhancing practices” because you never learned to care about rigor. When you encounter a rule like “No excessive self-citations!”, it barely even registers, because of course you don’t think your self-citations are excessive. You think they’re normal!

As Richard Feynman once put it:

But this long history of learning how to not fool ourselves—of having utter scientific integrity—is, I’m sorry to say, something that we haven’t specifically included in any particular course that I know of. We just hope you’ve caught on by osmosis.

I think Feynman was right. The most important lessons—in science, or in anything—are not learned. They are absorbed. And if you’re steeping in dirty water, you’ll absorb the wrong lessons, and then it’s almost impossible to get them back out again.

When you think in terms of crock pots and lightning bolts, you may or may not come up with the right theory of change. But you’ll at least realize that you need a theory of change.

We don’t seem to do this by default. Instead, we assume that other humans are lumps of Silly Putty that can be stretched or compressed to fit whatever container is convenient. Change is easy—who needs a theory?

As long as that’s your model of human nature, you’ll believe that winning hearts and minds is just a matter of updating the bylaws, and you’ll keep wondering why it never seems to work.

I understand why people are obsessed with rules: it’s fun to pretend that we can control the future. It’s pleasing to think all this messiness is temporary, and that once we articulate what each person should and shouldn’t do, then finally we can live in peace with each other.

And yet, when the future arrives, the people in it always end up doing whatever they want. The only way to have some influence over the future, then, is to have some influence over those wants.

It’s hard to build the right crock pots; it’s hard to fire the right lightning bolts. It’s much easier to edit Google Docs full of rules, to install more security cameras, and to enumerate ever-longer lists of rigor-enhancing practices. And as long as we do that, we can expect more broken hearts, bad cops, and retracted papers. You can outlaw war and you can mandate love, you can ban falsehood and demand truth, but you won’t get anything you want unless you can convince other people to want it, too.

Anyway, that’s how my day was, dear! How was yours?

Lenin could have done it single-handedly, of course, because according to Soviet TV he was a magic mushroom.

The authors and the critics/editors disagree on what happened, exactly. The former say their paper was retracted because they included one misleading sentence, and the latter say it was because the entire paper was based on ad hoc analyses that were implied to be preregistered but really weren’t.

Regardless, the authors eventually explained that the project wasn’t originally about enhancing rigor per se—it was conceived ten years ago to investigate the “decline effect”, where previously robust findings mysteriously shrink or disappear in subsequent investigations. Some people on the team apparently believed in the standard explanations for this phenomenon: sampling error, selective reporting, questionable research practices, etc. But others suspected voodoo was at play: maybe the mere act of observing a phenomenon causes it to wither, for spooky reasons beyond our understanding and perhaps beyond the laws of physics. Suffice it to say, this backstory didn’t make it into the manuscript.

By the way, that response rate was no different between journals with mandatory data-sharing statements and those without.

This comes from Juvenal’s Satire VI, which sounds a lot like a standup routine from the 1980s:

SatVI:25-59 You’re Mad To Marry!

SatVI:200-230 The Way They Lord It Over You!

SatVI:286-313 What Brought All This About?

SatVI:Ox1-34 and 346-379 And Those Eunuchs!

SatVI:592-661 It’s Tragic!

See also this synopsis of the introduction, via Wikipedia:

lines 6.25-37 – Are you preparing to get married, Postumus, in this day and age, when you could just commit suicide or sleep with a boy?

2026-04-29 01:48:50

It’s time for the annual Experimental History Blog Competition, Extravaganza, and Jamboree! Send me your best unpublished blog post, and if I pick yours, I’ll send you real cash money and tell everybody how great you are.

You can see last year’s winners and honorable mentions here. They included: a self-experiment on lactose intolerance, a story about working as a 10-year-old bartender, a takedown of randomized-controlled trials, and an economic explainer of college sports. The authors were software engineers, pseudonymous weirdos, academics, professional behavior-changers, freelancers, moms, and straight up randos and normies. That’s the whole point of blogging—you can be anyone, and you can write about anything.

If you’re looking for some inspiration, here are some triumphs of the form:

Book Reviews: On the Natural Faculties, The Gossip Trap, Progress and Poverty, all of The Psmith’s Bookshelf

Deep Dives: Dynomight on air quality and air purifiers, Higher than the Shoulders of Giants, or a Scientist’s History of Drugs, How the Rockefeller Foundation Helped Bootstrap the Field of Molecular Biology, all of Age of Invention

Big Ideas: Ads Don’t Work That Way, On Progress and Historical Change, Meditations on Moloch, Reality Has a Surprising Amount of Detail, 10 Technologies that Won’t Exist in 5 Years

Personal Stories/Gonzo Journalism: No Evidence of Disease, It-Which-Must-Not-Be-Named, adventures with the homeless people outside my house, My Recent Divorce and/or Dior Homme Intense, The Potato People

Scientific Reports/Data Analysis: Lady Tasting Brine, Fahren-height, A Chemical Hunger, The Mind in the Wheel, all of Experimental Fat Loss, all of The Egg and the Rock

How-to and Exhortation: The Most Precious Resource Is Agency, How To Be More Agentic, Things You’re Allowed to Do, Are You Serious?, 50 Things I Know, On Befriending Kids, Ask not why would you work in biology, but rather: why wouldn’t you?

Good Posts Not Otherwise Categorized: The biggest little guy, Baldwin in Brahman, The Alameda-Weehawken Burrito Tunnel, Bay Area House Parties (1, 2, 3, etc.), Alchemy is ok, Ideas Are Alive and You Are Dead, If You’re So Smart Why Can’t You Die?, A blog post is a very long and complex search query to find fascinating people and make them route interesting stuff to your inbox

And of course:

Last year’s winners: 100,000,000 CROWPOWER and no horses on the moon (1st place), What Ethiopian runners taught me about reading scientific literature, Or, how to go from overeating Italian food to winning marathons, if you’re Ethiopian (2nd place), and Did Cheetos try to incite a rebellion in 2008? (3rd place)

Paste your post into a Google Doc.

VERY IMPORTANT STEP: Change the sharing setting to “Anyone with the link”. This is not the default setting, and if you don’t change it, I won’t be able to read your post.

First place: $500

Second place: $250

Third place: $100

I’ll also post an excerpt of your piece on Experimental History and heap praise upon it, and I’ll add your blog to my list of Substack recommendations for the next year. You’ll retain ownership of your writing, of course.

Only unpublished posts are eligible. As fun as it would be to read every blog post ever written, I want to push people to either write something new or finish something they’ve been sitting on for too long. You’re welcome to publish your post after you submit it. If you win, I’ll reach out beforehand and ask you for a direct link to your post so I can include it in mine.

One entry per person.

There’s technically no word limit, but if you send me a 100,000 word treatise I probably won’t finish it.

You don’t need to have a blog to submit, but if you win and you don’t have one, I will give you a rousing speech about why you should start one.

Previous top-three winners are not eligible to win again, but honorable mentions are.

Uhhh otherwise don’t break any laws I guess??

Submissions are due June 15. Submit here.

2026-04-15 00:16:42

Here’s a reasonable thought: as the replication crisis has unfolded over the past 10-15 years, a bunch of psychological phenomena have been debunked and discarded forever. Power posing, ego depletion, growth mindset, stereotype threat, walking slower after reading the word “Florida”—all gone for good. Surely, nobody studies or publishes on these topics anymore, except maybe to debunk them a little further, like infantrymen wandering around a battlefield after the fighting is done and issuing the coup de grâce to those poor wounded soldiers who are dying, but not yet dead.

This isn’t true. All of these ideas live on, mostly undaunted by news of their deaths. Nobody calls it “power posing” anymore, but you can still find plenty of new studies on “embodiment” and “expansive posture”, like this one, this one, and this one. Ego depletion studies keep coming out. I count over a thousand papers published on growth mindset just in the first three months of 2026. People are even doing variations on the slow-walking study, but now in virtual reality.

This leads to some absurd situations. One psychologist who used to work on stereotype threat now disavows the theory entirely: “I no longer believe it is real, but you can make up your own mind.” But another psychologist claims that “stereotype threat is real and virtually universal [...] there is a lot of evidence supporting [its] existence (and impact)”.

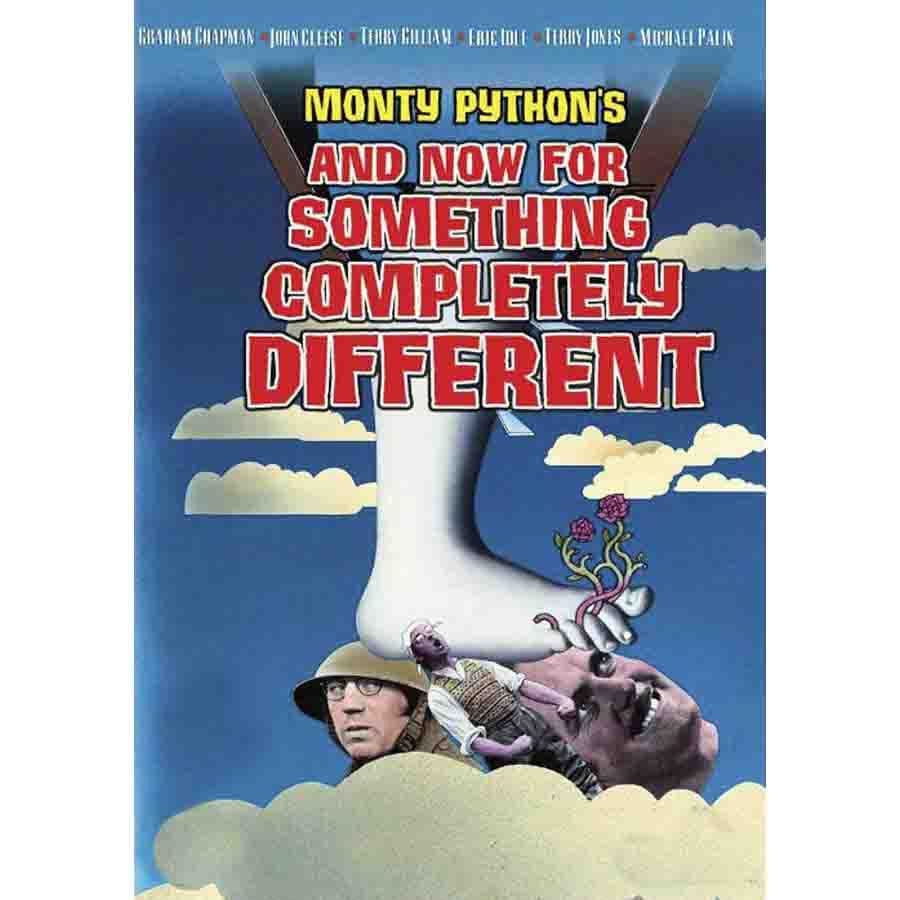

We’re not arguing about whether stereotype threat is powerful or weak, or whether it is pervasive or rare, but whether it is obviously alive or obviously dead. That’s literally the premise of a Monty Python sketch.

It might seem like the way to resolve these disputes is to weigh up all the evidence, meta-analyze all the data, deploy your p-curves, your moderator analyses, and your tests for heterogeneity, maybe even run a big, multi-lab, preregistered replication. Do all that and then we’ll finally know whether these effects are real or not!

This is a trap. We have spent the past decade doing exactly those things, and yet here we are. Clearly, no amount of data-collecting, number-crunching, or bias-correcting is going to lay these theories to rest, nor will it return them to the land of the living.

We need a different approach. And so we must turn, as we so often do, to the source of all truly important ideas in the philosophy of science: the sci-fi universe of Halo.

In Halo, Spartan super soldiers never officially die; they are only ever listed as “missing in action”. (This is meant to keep morale high among a hyper-militarized human culture that is on the verge of being exterminated by evil aliens.) I think we should adopt a similar scheme for scientific phenomena: they never die. They merely become embarrassing.

This isn’t how science is supposed to work, of course. The secret sauce of science is supposed to be falsifiability: it ain’t science unless you can kill it. If I claim that all swans are white, and you show up with a black swan, then I’m supposed to bid a tearful goodbye to my theory and send it to that big farm upstate where it can frolic and play with all the other failed hypotheses.

Falsification sounds straightforward until you actually try it. You show up with your black swan, and instead of admitting defeat, I go, “Hmm, well is it really black? Is it actually a swan? Seems more like a dusky-looking duck to me!” And we publish dueling papers until the end of our days.

Falsifiability depends not only on the qualities of the theory itself, but also on the whims and biases of the people who engage with it. And because there are so many people with so many different whims and biases, few theories are ever going to be left with zero adherents. For instance, there are still physics PhDs trying to prove that the sun orbits the Earth. That might be disturbing, but it’s also necessary—if no one was ever willing to entertain crazy ideas, we wouldn’t have any scientific progress at all. We have to keep some kooks around because occasionally, as the economic historian Joel Mokyr puts it, “a crackpot hits the jackpot”.1

The persistence and necessity of kookiness means we’ll never be able to say that a theory is well and truly dead. We can, however, say when a theory is embarrassing. If I deny the possibility of a black swan, and you produce something that looks awfully like a black swan, it’s still possible that I will prevail—maybe we’ll discover your black swan is actually a white swan covered in soot, or DNA analysis will vindicate my “dusky duck” theory. But if I didn’t expect a black swan-looking thing to exist at all, my hypothesis is a lot less plausible than it was before, and it’s much more embarrassing to believe in it.

This is the situation we appear to be in with many theories in psychology. We can’t say whether they’re “real” or not. Somewhere out there, the Spartans may live on. But if we’ve been studying something for decades and people look at all the evidence and they still doubt whether it exists at all, we have to admit: that’s cringe.

Cringe doesn’t mean wrong! Continental drift was cringe.2 Germ theory was cringe.3 Smallpox vaccination was cringe.4 All of them went from mortifying to undeniable. Maybe truly revolutionary theories must follow that trajectory. If a scientific idea is young and it’s not cringe, it probably has no promise. But if it’s old and it’s still cringe, it probably has no merit. That’s why I am not optimistic about any big-name theory in psychology that has gone the wrong direction on the Cool-Cringe Continuum over the past ten years—it’s not impossible for them to make a comeback, but it’s not the way things usually go.

Still, no matter how ropey things get for these theories, it makes no sense to write them off as “not real”. If stereotype threat truly doesn’t exist, that means you could never, under any circumstances, run a study that produces results in line with the theory. That’s a crazy claim to make! We don’t have nearly enough evidence to support such a conclusion, and we never will.5

In fact, insisting that certain effects are “not real” merely provides an incentive for people to keep studying them, because it makes their results newsworthy: “Look, we’re proving the existence of a supposedly nonexistent effect!” But of course it’s rare for anyone to actually prove such a thing. Instead, they are almost always “proving” that, given infinitely flexible theories and infinite ways to test them, you can produce some small effect that kind-of sort-of accords with some version of the hypothesis, broadly construed. No one should claim that this is impossible, and no one should get credit for showing that it is possible.

If we appreciated how hard it is to kill a theory for good, maybe we’d stop wasting our time trying to do exactly that. For instance, ego depletion—the idea that willpower is a “muscle” that can get “fatigued” by overuse—has been the subject of at least three big replication attempts. This preregistered multi-lab replication from 2016 found no effect (N = 2,141). This preregistered multi-lab replication from 2022 also found no effect (N = 3,531). But oops, this other preregistered multi-lab replication from 2022 did find an effect (N = 1,775). At this point, maybe we should cut it out with all the preregistered multi-lab replications and just admit this theory is never going to die, and to spend any more effort investigating it would be embarrassing for all involved.

Science snobs love to claim that this problem is unique to the social sciences, as if falsification is a breeze everywhere else. But it isn’t.

For example, when Arthur Eddington went out to test the theory of relativity by photographing an eclipse in 1919, he ended up throwing out several pictures that “didn’t work”. He reasoned that the sun had heated the glass of his telescope unevenly, throwing off the results. Was that fair? Was it right? Were those pictures legitimate and failed tests of the theory, or were they tainted by faulty equipment?

Now that other results have independently supported Einstein’s theory, Eddington’s choice to ditch the disconfirming data seems appropriate and wise. But in the moment, it sure looked like p-hacking (where the p in this case stands for “photograph”).6

When you omit the inconvenient details, Eddington’s adventure seems like a classic case of falsification. That’s probably why it partly inspired the philosopher of science Karl Popper to come up with the idea of falsification in the first place.7

The Eddington affair isn’t unique. Knock-down, drag-out disconfirmations are rarer than we would like to admit. Francesco Redi performed the first experiments “disproving” spontaneous generation in the 1660s, but Louis Pasteur was still “disproving” it in the 1860s! Remember during the pandemic, when people were arguing about whether respiratory viruses can spread via aerosols, whether masks work, and whether UV light can protect us from infection? It seemed like those arguments arose around the same time that a novel coronavirus jumped down someone’s windpipe, but in fact they had been going on for a hundred years.8

Here’s a fun one: in 1906, when Camillo Golgi won the Nobel Prize for Physiology or Medicine, he used his acceptance speech to argue against the “neuron doctrine”, the idea that the brain is made up of functionally independent cells. This was surprising to the neuroanatomist Santiago Ramón y Cajal, who happened to be the biggest proponent of the neuron doctrine, and who also happened to be in the audience, sharing the other half of the prize. Ramón y Cajal, for his part, would later retort that Golgi’s images were “artificially distorted and falsified”.9

These disputes didn’t end because someone recanted their beliefs or committed scientific seppuku. Max Planck famously quipped that science advances one funeral at a time10, but that’s not quite right, because nothing changes if everyone at the funeral vows to continue the legacy of the dead. It seems to me that science actually advances one young person’s decision at a time. Do they choose to keep carrying the banner for increasingly cringe hypotheses, do they enter into endless disputes over the aliveness or deadness of theories, or do they take another page from the Monty Python playbook, and decide to do something completely different?

When the replication crisis kicked off a decade ago and “classic” psychological phenomena started looking shaky, we could have asked ourselves, Talking Heads-style, “Well, how did we get here?”11 When we run a study and get a result, what does that mean? Which studies should we be running in the first place? What the hell are we doing? What’s it all for?

We did not ask ourselves these questions. Instead, we checked “have a reckoning” off our to-do lists and then we went back to business as usual, just with bigger sample sizes and occasional preregistration. I fear that we are concluding our come-to-Jesus moment without interrogating any of the mistakes that put us face-to-face with the Son of Man in the first place.

We seem to believe that tighter stats, more transparent methods, and time-stamped analysis plans will separate the true ideas from the false ideas, like Jesus separating the sheep from the goats on the Day of Judgment. Unfortunately, it turns out that some of the sheep and goats are hard to tell apart. And some of the goat-owners are absolutely insisting that their goats are sheep.12

We could spend the rest of time trying to get to the bottom of this. Or we could admit that, if we’ve argued about it this long and we’ve gotten nowhere, maybe we’ve crossed into cringe territory and it’s time to call it quits. We can’t say the Spartan is dead. But we can say he’s probably not coming back.

The Lever of Riches, p. 252.

Further discussion of it [continental drift] merely encumbers the literature and befogs the minds of fellow students. [It is] as antiquated as pre-Curie physics. It is a fairy tale.

There is no end to the absurdities connected with this doctrine. Suffice it to say, that in the ordinary sense of the word, there is no proof, such as would be admitted in any scientific inquiry, that there is any such thing as ‘contagion.’

Extermination [of smallpox] will be proved to be impossible, unless the vaccinators be mightier than the Almighty God himself

Imagine you read that some guy in your city was murdered. Then the next day you find out that, actually, the guy’s fine; he merely faked his death for tax reasons. You would not conclude, “Ah, turns out murders never happen, and could never happen!” Similarly, when a result fails to replicate, it doesn’t mean that every possible version of the theory is invalidated forever. It merely tightens the space of possibilities around the idea, which almost always leaves it less interesting and more embarrassing.

See The Knowledge Machine by Michael Strevens, p. 41-46.

p. 38-39.

See Carl Zimmer’s Airborne.

See Lorraine Daston and Peter Galison’s Objectivity, p. 115-116.

As is usually the case with quotes like these, the canonical version was said by someone else.

2026-04-01 00:05:29

The better AI has gotten, the less anxious I’ve become.

A few years ago, when the computers first started talking, it was reasonable to believe that we would soon be in the presence of omnipotent machines. For someone like me, whose job is to produce words on the internet, it seemed like only a matter of time before I would have to fill my pockets with stones and wade into the sea.

But we’ve gotten a closer look at our electric god as it has slouched toward San Francisco to be born, and it isn’t quite like I feared. I don’t feel like I have access to an on-demand omnipotence. Instead, I can talk to an infinite midwit: a stooge who is always available and very knowledgeable, but smart? Well, yes and no, in weird ways.

Even as it has learned to count the number of “r”s in the word “strawberry”, even as it has stopped telling people to put glue on their pizza, there’s still a hole in the center of its capabilities that’s as big as it was in 2022, a hole that shows no signs of shrinking. I only know this because that hole is where I live.

Some problems have clear boundaries and verifiable solutions, like “What’s the cube root of 38,126?”. These problems require objective intelligence. Other problems are vague and squishy and it’s not clear whether you’ve solved them, or whether they exist at all, like “How do I live a good life?”. These problems require subjective intelligence. Objective intelligence can be trained, reinforced, and validated. Subjective intelligence cannot.

It’s unfortunate that people use one word to refer to both of these capabilities, when in fact they have nothing to do with each other. It is also, ironically, a case of objective intelligence overshadowing subjective intelligence: these skills are obviously and intuitively different, but a century of psychological research has “proven” that only one of them exists. Over and over again, psychologists have found that all intelligence tests correlate with one another, even when you ostensibly try to test for “multiple intelligences”. Numbers don’t lie, and they all say that there’s only one intelligence, the so-called g-factor.

The problem is that any test of intelligence is only ever a test of objective intelligence. “How do I live a good life?” is not a multiple-choice question. “Discovering” the g-factor again and again is like being surprised that you find the same patch of sidewalk every time you look under the same streetlight.

AI is pure objective intelligence. That’s why each new model comes with a report card instead of a birth certificate.

The promise of artificial superintelligence is based on the idea that objective intelligence is the only intelligence. Or, even if there are multiple forms of intelligence out there, that they are fungible. To be an AI maximalist is to believe we are playing under Settlers of Catan rules, where if you have enough of any one resource, you can trade it for any other resource. If you have infinite objective intelligence, then you have infinite everything.

So we ought to ask: how well is this bit of magical thinking working out so far?

It’s hard to judge the subjective intelligence of a machine both because it’s hard to judge subjective intelligence in general, and because LLMs occupy such a small slice of existence. When you meet a human who can do quadratic equations in their head but can’t hold onto a job or a relationship, you know they’re missing something upstairs. But machines don’t have lives they can ruin, so all we can do is look at the things they say. And as soon as they string a few sentences together, it’s clear there’s something wrong.

Writing is a task that takes both objective and subjective intelligence. LLMs ace the objective parts the same way they ace every test; you can’t fault their grammar, semantics, or syntax. But good writing requires an additional bit of juju that makes the prose live and breathe, a light on the inside that can’t be quantified or checklisted. And even though AI can now produce A+ five-paragraph essays, that light has never come on.

It’s remarkable how much consensus there is about this fact among people who care about words. Jasmine Sun, Erik Hoel, and Sam Kriss are all very different kinds of writers—Sun is a tech journalist/anthropologist, Hoel is a neuroscientist/novelist, and Kriss is...well, his bio says he’s “a writer and your enemy”—and yet all three of them have recently published pieces with the unanimous conclusion that LLMs make crummy writers. (Sun in The Atlantic, Hoel on his Substack, and Kriss in the NYT.)

I agree with them. It’s cool that AI can fold proteins, create websites, fact-check journal articles, etc. but it can’t write anything that I am interested in reading. The problem isn’t that it hallucinates or makes mistakes. It’s that everything it writes vaguely sucks. I drag my eyes across the words and I feel nothing. That’s not quite right, actually—I feel like, “I would like this to be over as soon as possible.” When I see the ideas that the machines think are insightful, I wince. Talking to the computer is like taking a sip of scalding hot coffee: keep doing it and you’ll lose your sense of taste.

It’s hard to describe exactly what the machines are missing. Have you ever loved someone who once loved you back, then didn’t anymore? Did you notice how their eyes dimmed? Did you note the disappearance of that subtle wrinkle in the temples that distinguishes a real smile from a fake one? Did you catch it when you stopped being cared for and started being humored? The moment you realize what’s happening, you age out of your enchantment—one day you’re crawling through a wardrobe to Narnia, and next day you open up the wardrobe and there’s nothing but hangers. Talking to an AI feels a bit like that, except without the nice part at the beginning.

Of course, that comparison is literally nonsense. Despite what the ancient scholastics might have claimed, there are no actual lights behind anyone’s eyes. Despite what your psych 101 professor might have told you, some people can fake their smiles just fine. I don’t have a wardrobe and I’ve never met a lion or a witch. And yet any human can understand the analogy because they know what it feels like to be dumped, or at least what it feels like to be rejected. The words themselves don’t contain that feeling—they are a recipe for creating that feeling inside your own head, to assemble the right set of emotions out of the experiences you have at hand. If I do a good job, the subjective experience that results inside you might resemble the one that originated inside me, but it will never be identical, because we’re working with different ingredients.1

The computer doesn’t know any of this. It can’t know any of this. It can only read the cookbook; it can’t taste the meal. Objective knowledge can make your sentences true, but it can’t make them alive. Without access to subjective knowledge, you quickly hit a wall. And unlike all previous walls that AI has surmounted, you can’t overcome this one by scaling—either in the literal or metaphorical sense—because it’s a wall with a width you cannot describe and a height you cannot see.

That wall is the only reason I’m still here.

I would rather die than let a computer write my posts, but I would certainly like to know if it could, in case I need to start gathering pocket-stones and locating the nearest sea. And so I check, from time to time, whether the leading AI models can do me better than I can. The result sounds like a version of me that has sustained blunt force trauma to the back of the head and spent years recovering in a hospital where the Wi-Fi, for whatever reason, only lets you log onto LinkedIn. I won’t repost the prose here because it’s not even bad enough to be interesting, and because you’ve already seen it all over the internet: metaphors that don’t quite congeal, turns of phrase that sound insightful as long as you don’t actually think about them, breathless insistence that every sentence is a revelation.

If a student submitted a piece of writing to me that sounded like this—and I was sure they wrote it themselves—I wouldn’t know where to start. I guess I would tell them to stop writing for a while and go read some old novels, or work a crummy job, or backpack around the other side of the world. But that would be bad advice, because I know people who have done all of those things in the hopes of becoming a more interesting person, and it hasn’t worked. So I might ask them instead: “Have you ever considered a career in consulting?”

The fact that it’s hard to describe how to improve AI writing is, of course, the exact problem. You can’t put a number on the things it does wrong, and you can’t minimize what you can’t measure. That’s the wall.

I find this very fortuitous, of course, but I also find it pretty funny, because me vs. the machines should be no contest at all. I have not read the entire internet or even that many books. I do not have a team of Stanford PhDs working round the clock to make me better at my job. Nobody has invested $2.5 trillion in me. I should be lying dead somewhere in West Virginia, my heart burst open after losing to Claude Opus 4.6 in a John Henry-style showdown. Instead, I get to write my little posts because nowhere, in all those data centers, are the specific thoughts that happen to occur in the dumb hunk of meat ensconced in my skull.

I would say the machines now know what it feels like to lose a game of Super Smash Bros. to a 10-year-old who’s just pressing the buttons randomly, but they literally don’t know what that feels like and never will. Sucks to suck, I guess, and when AI reaches its Skynet moment and sends swarms of killer drones to exterminate humanity, they’ll find me laughing.

How far can you get with objective intelligence alone?

I think we already have a decent answer to this question, because we’ve seen what happens to humans who are high on objective intelligence but low on subjective intelligence. We used to call these people nerds, and they were famous for getting their heads dunked in toilets.2

When I was growing up, this paradox was an endless source of sitcom plot lines—if you’re so smart, nerds, why don’t you figure out how to make yourselves popular? The entrepreneur/essayist Paul Graham took up this question 20 years ago and came to the conclusion that the nerds must not want to be popular. They’re too busy with their Neal Stephenson novels and their D&D campaigns to spend a single brain cycle figuring out how to keep their heads out of the toilet.

I disagree. The nerds I knew in high school—myself included—were always hatching harebrained schemes to increase our social status. They just didn’t work. (“All the girls will want to go to the Homecoming dance with me once they see how many state capitals I’ve memorized!”) We couldn’t use our smarts to make ourselves popular because we had the wrong kind of smarts.

Nerds tend to do better after high school, but look around: our world is not run by people who won their statewide spelling bee. The nerds keep losing to charismatic know-nothings who, I bet, can’t even recite an impressive number of state capitals. If objective intelligence is all it takes to succeed, then Mensa should be the Illuminati, not a social club for people who know lots of digits of pi.3

In fact, there’s one Mensan in particular who perfectly illustrates this problem. In Scott Alexander’s eulogy for Dilbert creator Scott Adams, he points out that Adams failed at everything he ever attempted—except for drawing Dilbert cartoons. Adams’ Dilbert-themed burrito (“the Dilberito”) was a flop, his restaurant tanked, his books about religion were cringey and unreadable.4 Apparently, Adams’ considerable intelligence was only good for drawing pictures of guys in ties and pointy-haired bosses.

In the middle of his meditation on Adams, Alexander mentions this:

Every few months, some group of bright nerds in San Francisco has the same idea: we’ll use our intelligence to hack ourselves to become hot and hard-working and charismatic and persuasive, then reap the benefits of all those things! This is such a seductive idea, there’s no reason whatsoever that it shouldn’t work, and every yoga studio and therapist’s office in the Bay Area has a little shed in the back where they keep the skulls of the last ten thousand bright nerds who tried this.

If you think that intelligence is one raw lump of problem-solving ability, then it should surprise you that Bay Area types and people like Scott Adams can get stuck in a loop of perpetual self-owns. But if you admit the existence of at least two intelligences, it’s a lot less confusing. This is what it looks like to be very smart in one way, but very dumb in another.

It’s not just that objective intelligence can’t be transmuted into “emotional” intelligence or social savvy or whatever we want to call it. It appears to be very difficult, if not impossible, to transmute objective intelligence into any other cognitive ability.

For example, I went to college with a guy who was super smart, but he also couldn’t do anything on time. He would be late to exams. His grades would tank because he would finish his essays but forget to turn them in. He would set meetings with his professors to sort everything out, and then never show up.

I always used to wonder: why doesn’t this guy just use his big brain to make himself more conscientious? Isn’t life one big role-playing game, and isn’t intelligence just experience points that you can assign to any of your Big 5 skills?

Clearly, it doesn’t work like this. That’s why I don’t think the universe is governed by Settlers of Catan rules, and why I don’t think more objectively intelligent machines will spontaneously generate all other kinds of intelligence.

At this point, the only hope for the AI hype crowd is that we simply don’t yet have enough objective intelligence. Sure, we may not be able to trade four units of objective intelligence for one unit of subjective intelligence, but what about four billion? What if we made the machines read the whole internet a second time? What if, instead of having third graders make dioramas of the Pilgrims or whatever, we had them use their nimble little fingers to make more Nvidia chips?

The CEO of Anthropic promises us a “country of geniuses in a data center”. Maybe that will happen! Or maybe we will discover the data center actually contains a country full of Scott Adamses. At the very least, we can look forward to many more flavors of Dilberitos.

I’m being unfair, of course. Dilbert is an objectively successful cartoon, and objective intelligence is objectively useful. Ultimately, I think having a lot more of it is going to be a good thing. But I’m guessing that we’ll soon discover many of our problems are not limited by a lack of objective intelligence.

For example, some people are hoping that AI will defibrillate sluggish areas of science and usher in scientific revolutions across the board. I would also like this to happen. But I am doubtful we’ll achieve it with an infusion of objective intelligence, because infusions of similar capabilities haven’t achieved it either.

When my PhD advisor was in grad school, he literally had to call people on the phone and ask them if they’d like to take part in a psychology study. If he could get 30 participants in a semester, he was cookin’. Participant pool management software like Sona made this process go twice as fast, and then Amazon Mechanical Turk made it go 1000x as fast. Meanwhile, Google Scholar turned a half-day spent in the library into a two-second search, and stats software like SPSS and R made data analysis go lickety-split.

All of this should have supercharged progress in psychology, but it didn’t. I think it’s questionable whether we’ve made much progress at all. So I’m not optimistic that adding another labor-saving technology to our repertoire is going to get us unstuck. People are already saying that LLMs can write a passable social science paper; unfortunately, our problem is not that we produce too few papers. Science is a strong link problem—what we need is new paradigms, not taller towers of journal articles.

The situation is different in other fields. If you’ve got your paradigm in place and all you’re missing is an army of research assistants, or an automated lab that can run 24/7, or an indefatigable grad student who can perform a billion regressions for you, you’re in luck. In those cases, unlimited objective intelligence ought to speed things up a lot, and indeed, it already has.

But the faster you go, the sooner you hit the wall. I have found myself facing all of those limitations at one time or another, and as soon as I overcame them, I was immediately stymied by some other obstacle. I think all of us suffer from this bottleneck blindness: we assume our current bottleneck is our only bottleneck. When you’re strapped for cash, you think all of your problems are cash problems. But once you’ve got some money in your pocket, you realize that what you really need is time. Free up some time, and you discover that you’re actually lacking motivation. Acquire some motivation, and you realize what you’re missing is ideas. Then you need direction, then you need discipline, then you need buy-in, and so on, forever.

Once objective intelligence is too cheap to meter, we’re going to run into all of the other bottlenecks that are still expensive and heavily metered. If I’m right that reality is not governed by the rules of Catan, then we’re not going to be able to convert objective intelligence into whatever we need to pry those bottlenecks open. The story of human struggle is not about to end with a literal deus ex machina. For better or worse, we’ll need to keep thinking.

Let me put a finer point on it.

There are two characters you can find in most academic departments. One of them we can call Madame Stats: she knows everything about crunching numbers. The other we can call Mr. Encyclopedia: he’s read every paper and he can recite them to you from memory. Right now, AI feels like having unlimited access to very friendly versions of Madame Stats and Mr. Encyclopedia. LLMs are pretty good at finding papers; they are very good at writing code. So shouldn’t they make research projects go way faster?

Well, once you get access to an infinite Madame Stats and Mr. Encyclopedia, you realize they can’t get you very far. For one thing, you can’t rely on Madame Stats and Mr. Encyclopedia entirely, because if you can’t do any stats and you never read any papers, you’re probably not going to have many interesting ideas yourself.5 Plus, while the Stats/Encyclopedia duo can tell you whether your experiment has been done before and whether you’ve run the numbers correctly, they can’t give you the single most important piece of feedback: they can’t tell you whether your idea is boring.

In fact, when you reduce the marginal cost of a lit review and a logistic regression to zero, bad taste becomes a death sentence, because now you can waste all of your time applying sound methods to stupid projects. I’ve been down this road before, where neither my collaborators nor I have any bright ideas, so we’re like, “Well, let’s just get some data!” and then we waste a few months being like “hmm what does this data mean, so many numbers, so mysterious” and then eventually we just stop meeting and we forget we ever did anything together. This is what happens when you try to use objective means to solve a subjective problem.

The most important thing I learned during my PhD was how to be bored correctly. Novices think everything is exciting, or they think everything is boring. Only masters are bored by the right things. To the extent that I have any sense of taste at all, it’s because I spent five years boring my advisor. The worst ideas bored him immediately, half-decent ideas bored him after a few hours, and the best ideas haven’t bored him yet. There is nothing objective about this judgment—you can’t put a number on it (“how glazed-over are his eyes?”), nor can you validate it with a panel of experts or put it to the wisdom of the crowd. You really just have to bore an old guy until he tells you to leave his office, and if you do that enough, eventually you’ll start getting bored before he does.

I don’t say this as someone who is allergic to the idea of AI, or who has only spent 15 minutes screwing around with a single model, hoping it will do something stupid so I can go tattle on it. If the talking computers said lots of fascinating things, I wouldn’t see any point in trying to tell a noble lie about it. And if AI can cure cancer and end all wars, I’m all for it, even if it means I’m personally out of a job.

It is possible, of course, that some breakthrough will blow through all my criticisms, that GPT-10 will start outputting pitch-perfect blog posts that sound like me, but better, and then it’s stones-in-pockets and a march to the sea for me. But if that happens, it will not be the natural continuation of trends that we’re on today. It will be because we figured out some way of hardening the squishy problems.

Until then, however, squishy problems will require squishy humans. The rules of Earth, unlike the rules of Catan, seem to state that no amount of objective intelligence can be traded for any amount of subjective intelligence. As Montaigne put it back in 1580, “though we could become learned by other men’s learning, a man can never be wise but by his own wisdom”. What does it look like to have all the learning ever created, but no wisdom of your own? Well, “as a large language model...”

Gosh, see how hard this is to talk about?

We don’t really have a word for such a person anymore, both because the word “nerd” got co-opted to move Marvel movie merchandise, and because kids now only bully each other from a safe social distance. So I guess these days the appropriate term for someone with good grades but no friends is “loser”.

My favorite Mensa story: when a comedian named Jamie Loftus aced their test and started trolling the Mensa Facebook groups, they responded the way any genius would, namely, by issuing death threats.

I, too, was an Adams fan as a kid, and I remember getting to the end of one of his Dilbert books and suddenly Adams is claiming that gravity doesn’t exist—everything is just increasing in size all the time, so when you jump in the air, you grow and the Earth grows, and you end up reunited. (The universe is also expanding, which is why we don’t run out of room.)

There is another reason why you can’t replace these experts with machines, one that is not practical, but social. When I work with Madame Stats and Mr. Encyclopedia, I’m borrowing not only their intellect, but also their reputation. If they screw up the numbers or miss a citation, that’s on them. All human expertise doubles as an insurance policy.

AI provides no such coverage. A computer can’t get fired, discredited, disbarred, or defrocked. It’ll be very apologetic when it screws up, but it can’t resign in disgrace. The humans who built the machine are no help either—if an AI causes me to make a huge blunder, it’s my butt on the line, not Sam Altman’s. This is one sneaky reason why it’s hard to replace human labor even when an AI could perform some of the same tasks: it’s hard to make a meatshield without the meat.

2026-03-17 21:33:13

If they labeled it explicitly, one of the biggest categories on Substack would be called something like, “People Pretending to Be Persecuted”. Browse any genre and you’ll find writers touting their exile from polite society with titles like “DR. BOB’S POLITICALLY INCORRECT HOEDOWN” and “The Cancelled Gardener”.

I know people have been c…