2026-06-06 00:50:04

Google's AI app Pixel Studio launched less than two years ago, but is now being shut down.

2026-06-05 23:41:34

We're waiting to learn more about what happened aboard the International Space Station.

2026-06-05 22:39:57

It's the first instance of a vaccine antigen designed exclusively by artificial intelligence.

2026-06-05 21:38:08

This week on the Engadget Podcast, we dive into NVIDIA's RTX Spark chip and the many ways it could reshape the world of Windows PCs (or not).

2026-06-05 21:30:00

Microsoft has two livestreams planned for this Sunday.

2026-06-05 21:30:00

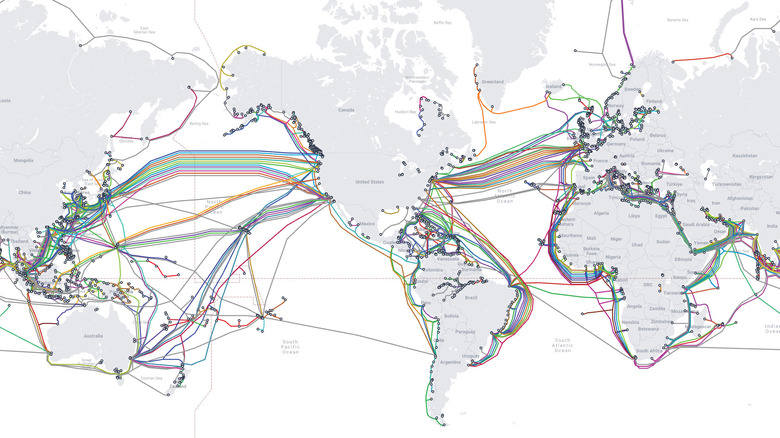

A closer look at how undersea cables connect the world.