2026-06-02 22:04:43

If you liked this piece, you should subscribe to my premium newsletter. It’s $70 a year, or $7 a month, and in return you get a weekly newsletter that’s usually anywhere from 5,000 to 18,000 words, including vast, detailed analyses of NVIDIA, Anthropic and OpenAI’s finances, and the AI bubble writ large. My Hater's Guides To the SaaSpocalypse, Private Credit and Private Equity are essential to understanding our current financial system, and my guide to how OpenAI Kills Oracle pairs nicely with my Hater's Guide To Oracle.

Over the last three weeks, I’ve published an exhaustive three-part guide to how the AI bubble might collapse, the events that might trigger it, and the consequences.

Subscribing to premium is great value, and it makes it possible to write these large, deeply-researched free pieces every week.

Something changed in the last week.

Shortly after Uber COO Andrew Macdonald said that it was “getting harder to justify” spending money on AI as it was “very hard to draw a line” from that spend to useful consumer features (after its CTO said Uber burned its entire annual token budget in four months), Axios’ Madison Mills reported that one company had accidentally spent $500 million in the space of a month on Anthropic’s models after failing to set spend limits. A few days later, Mills would report that other companies were now looking for ways to reduce their AI spend.

That’s because, as I’ve said before, nobody can actually measure the ROI of AI, or even create a standard measurement of the cost of a task thanks to the inevitable hallucination-prone nature of LLMs and the ever-growing list of different harnesses and “agentic” (sigh) interfaces. Every different prompt and project and interaction can go wrong in ways that are hard to predict or plan for, other than through eternal vigilance that the supposed “intelligence” doesn’t do something catastrophically stupid, because LLMs have no thoughts, consciousness or ability to learn outside of pre- and post-training.

If you can’t measure how good something is, how much it might cost, or what your return on investment might be, it’s fair to ask why you’re even paying for it in the first place.

People are (reasonably!) harping on about the ROI problem, but I think the “can’t really measure the cost” part is an even bigger problem.

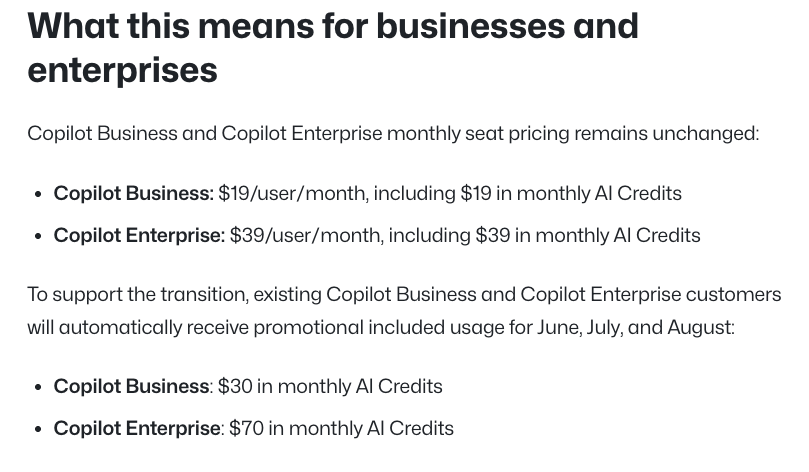

Yesterday, Microsoft’s GitHub Copilot moved all customers to token-based billing from a premium request model (as I reported a week before everyone) as users had been allowed to burn thousands of dollars of tokens on a $39-a-month subscription.

Customers are irate. One burned through 50% of their monthly credits in a single prompt, another burned 60% in the space of a few hours, another 31% in a single prompt, another estimated that they’d burn their monthly credits in the space of a single five-hour session, another burned nearly half of their credits in eight prompts, another around 14% of their credits in two prompts, and another lamented that GitHub Copilot had gone from their favorite subscription to their most stressful overnight after burning 33% of their monthly balance in a few hours.

And, to be clear, this is during a promotional period where you get $11 or $21 in free monthly credits:

These users — much like the users of effectively every subsidized AI subscription — never really knew how much anything they did cost, because Microsoft intentionally hid the actual cost of prompts and allowed users to spend obscene amounts as a way of boosting growth for GitHub Copilot.

This problem is industry-wide.

Every single user of every single AI subscription service is having their tokens subsidized and the actual cost of AI obfuscated. As a result, every frothy, fluffy hype-piece about Claude Code or AI in general is a kalopsia — the belief that something is more beautiful than it really is.

Educational Sidebar! While many of you may know this, for those just joining me, let me break down how the average AI subscription works. You pay a monthly subscription to, say, Anthropic or OpenAI’s services, and get to use these services as much as you’d like subject to both daily and weekly “rate limits.” None of these companies ever really explains what that rate limit might be, instead giving users a vague percentage gauge and leaving them to work it out on their own.

When you use an AI model, you feed information into it via input tokens (a token is about ¾ of a word) and receive outputs via output tokens, and companies bill on a per-million token basis. While models can “cache” information as a means of avoiding having to read or write it again, every single interaction costs money, regardless of its success or efficacy. This is why every AI startup is inherently unprofitable — they’re literally sending every penny of their venture capital money directly to Anthropic and OpenAI to power their unprofitable services. AI labs may be able to run their own infrastructure and save some costs, but we have no evidence that this makes anything “profitable.”
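To make per-million-token billing concrete, here's a minimal Python sketch. The prices are hypothetical placeholders, not any provider's actual rates, and the function ignores caching discounts entirely; the only point is that the meter counts tokens consumed, not whether the result was any good.

```python
# Hypothetical per-million-token prices in US dollars (placeholders, not real rates).
INPUT_PRICE_PER_M = 3.00
OUTPUT_PRICE_PER_M = 15.00


def request_cost(input_tokens: int, output_tokens: int,
                 input_price_per_m: float = INPUT_PRICE_PER_M,
                 output_price_per_m: float = OUTPUT_PRICE_PER_M) -> float:
    """Dollar cost of one request billed per million tokens (no caching discounts)."""
    input_cost = (input_tokens / 1_000_000) * input_price_per_m
    output_cost = (output_tokens / 1_000_000) * output_price_per_m
    return input_cost + output_cost


# Hypothetical token counts: a run that worked, and one that looped and failed.
# Both are billed the same way, because the bill only sees tokens.
good = request_cost(input_tokens=200_000, output_tokens=20_000)
failed = request_cost(input_tokens=600_000, output_tokens=60_000)
print(f"successful run: ${good:.2f}")
print(f"failed run:     ${failed:.2f}")
```

With these made-up numbers, the failed run costs three times as much as the successful one, and nothing in the billing model distinguishes between them.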

For example, Anthropic lets you burn anywhere from $8 to $13.50 in tokens for every dollar of subscription cost, and while AI boosters will say that Anthropic is “profitable on inference,” nobody actually has proof outside of theoretical scenarios posed by CEO Dario Amodei.
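Here's the subsidy arithmetic as a rough Python sketch. The $8 to $13.50 ratios are the figures quoted above; applying them uniformly to the $20, $100 and $200 monthly tiers is an assumption for illustration, not something Anthropic has confirmed.

```python
# Ratios quoted in the text: $8 to $13.50 of token usage per subscription dollar.
LOW_RATIO, HIGH_RATIO = 8.0, 13.50


def token_value_range(monthly_price_usd: float) -> tuple[float, float]:
    """Range of API-priced token usage a subscriber could burn in a month.

    Assumes (hypothetically) that the same ratio applies to every plan tier.
    """
    return monthly_price_usd * LOW_RATIO, monthly_price_usd * HIGH_RATIO


for plan in (20, 100, 200):  # monthly tiers of $20, $100 and $200
    low, high = token_value_range(plan)
    print(f"${plan}/month plan: ${low:,.0f} to ${high:,.0f} in tokens")
```

Under that assumption, a $200-a-month subscriber could be burning somewhere between $1,600 and $2,700 of tokens every month.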

Think of it like this: if you’re using an AI subscription with rate limits but no actual costs, any mistakes a model makes — such as getting stuck in a loop or just doing the wrong thing — can be dismissed as the troubled nature of early-stage technology, because the “cost” was $20, $100, or $200 for the entire month. Anthropic, OpenAI and every other AI company deliberately obfuscated these costs because they knew that the second a user actually had to pay for the fuckups of an AI model they’d scream like they were being stung to death by bees.

This issue bubbled to the surface in the last few months because Anthropic and OpenAI both quietly moved all of their enterprise customers to token-based billing in Q1 2026, and because these enterprise customers are run by Business Idiots with no connection to actual work, CEOs encouraged (or actively incentivized) their workers to use AI as much as possible, in some cases even making one’s AI use a KPI that could cost them their job.

These same workers were conditioned — through their use of AI subscription products that hide the true costs — to use them as if they cost nothing, all while being screamed at by useless middle managers to “make sure to adopt AI at scale,” all while never, ever having any awareness of what a particular unit of work cost.

This was always a recipe for destruction. The overwhelming majority of AI users are completely divorced from and actively trained to ignore the true cost of AI tokens, which means they naturally use these services in a way that’s actively uneconomical. Every frothy hype-piece you’ve read has been written by somebody who has been conned into ignoring the true cost of AI, all in service of spreading a technology that’s unreliable, inconsistent and expensive at its core, and never, ever seems to get cheaper.

Sidenote: Even with the “cost of intelligence” (the per-million token cost) of models coming down, models are using far, far more tokens for the same task, ultimately raising the cost of inference. Put another way, imagine if the cost of gas got cheaper but the distance between you and your destination kept getting longer.
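The gas analogy reduces to one multiplication: cost per task is price per token times tokens per task. This Python sketch uses entirely hypothetical numbers to show how a falling per-token price can still produce a rising bill.

```python
def cost_per_task(price_per_million_tokens: float, tokens_per_task: int) -> float:
    """Dollar cost of one task: per-token price times tokens consumed."""
    return price_per_million_tokens * tokens_per_task / 1_000_000


# Hypothetical before/after: the per-token price halves...
older = cost_per_task(price_per_million_tokens=10.00, tokens_per_task=50_000)
# ...but the newer workflow burns five times as many tokens for the same task.
newer = cost_per_task(price_per_million_tokens=5.00, tokens_per_task=250_000)

print(f"older model: ${older:.2f} per task")
print(f"newer model: ${newer:.2f} per task")
print(f"change: {newer / older:.1f}x")
```

With these made-up figures, the price per token halves while token usage grows fivefold, so the cost per task rises 2.5x. Cheaper gas, longer drive.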

OpenAI, Anthropic and other AI companies have actively conspired to mislead the world about the true costs of AI, and it was working great right up until they decided to try charging what it actually cost. Less than a quarter into the shift to token-based billing, enterprises are freaking the fuck out, with Walmart setting token limits on its internal “Code Puppy” AI coding tool and a spokesperson saying that it “wanted employees to apply AI in ways that create value” mere days after Amazon SVP Dave Treadwell told employees to “not use AI just for the sake of using AI.”

The last few years of AI hype have been built on lies. Every company has conspired to make you think that AI is affordable and sustainable, that profitability was possible, that hallucinations were fixable, and that any problems you faced today were a result of being in “the early innings.” In reality, the AI industry has absorbed over a trillion dollars, effectively all tech talent, the majority of startup funding, the majority of media coverage, the art and work of millions of people, and been given chance after chance after chance to fix the obvious, glaring issues.

Every time a skeptic dared to stand up and say that none of this made sense, they were told that it was just like Uber (it’s not) or that Amazon Web Services cost a lot of money (it cost $52 billion over the course of 14 years and was cash-flow positive in nine), that “costs always come down,” and that everything would magically be alright as long as they were patient for an indeterminate amount of time.

Four years and a trillion dollars in, AI is more expensive, its companies more cash-intensive, its products just as unreliable, and its boosters more desperate than ever to make you ignore reality as a means of empowering one of a few ultra-rich oafs. Products from OpenAI and Anthropic are built to ingratiate and coddle losers while creating work-shaped outputs that are good enough to impress braindead executives, imbeciles and middle management hall monitors that don’t do any real work, and the reason it’s worked this long is that both companies intentionally misled everybody about how much the real costs were.

I must repeat myself: AI is more expensive today than it was three years ago, and it is not getting cheaper. Sam Altman’s comments about “intelligence too cheap to meter” were lies. NVIDIA’s Blackwell GPUs didn’t make it cheaper, and its Vera Rubin GPUs won’t either. Google’s TPUs won’t do it, Amazon’s Trainium or Inferentia chips won’t do it, Vera Rubin CPUs won’t do it, OpenAI’s chips won’t do it, and no, DeepSeek won’t do it either.

People chose — and still choose — to believe that AI would get cheaper because they think things got cheaper over time in the past, which is sort of true but not remotely similar in any way, because the cost of running and training AI models comes from using the hardware as well as its upfront cost. Large Language Models require expensive GPUs thanks to their reliance on power-intensive parallel processing, and larger, more-complex models in turn require more GPUs to both train and run inference with.

And three generations in, NVIDIA GPUs don’t appear to be bringing the cost down at all, which heavily suggests that the inherent business model of generative AI is broken.

People love to compare AI to the Dot Com Bubble (AI is far, far worse) because it’s much easier to rationalize bad behavior than accept that we’re facing the largest misallocation of capital of all time.

The Dot Com Bubble was really two bubbles — one around eCommerce and internet startups, and one around telecommunications infrastructure.

Per Justin Kollar, the telecommunications bubble grew because of a fundamental misunderstanding of demand:

This continental rewiring was also justified by another powerful myth—that internet traffic was doubling every 90 days. The claim spread through analyst reports, earnings calls, and investor presentations like a particularly virulent meme. If true, it meant that demand was growing exponentially, far outpacing any conceivable supply, and that every new trench of fiber would soon pay for itself many times over.

But the mathematics were fiction. Network researchers like Andrew Odlyzko (at AT&T), looking at actual traffic data, found that U.S. backbone traffic was doubling roughly once a year—rapid growth, certainly, but nowhere near the purported 90-day cycle. Meanwhile, advances in fiber technology were making each strand exponentially more powerful. Dense wavelength-division multiplexing allowed dozens of signals to travel simultaneously down the same line at different wavelengths of light, like multiple conversations happening in different colors.

As a result, infrastructure was built far in excess of what demand existed, because most people weren’t online, and those who were had very slow internet connections. Per me:

The similarity everybody points to is that “people doubted the internet at the time,” and people really need to remember their fucking history. In 2000, only 52% of American adults were using the internet, and by 2003, that number had only increased to 61%. Per the World Bank, in 2005 only 16% of the world used the internet, and in 2024, that number had increased to 71%.

Yet the real difference is access to high-speed internet. When people connected to the internet via a 56k modem, access was either charged by the minute or much, much slower. While we’re used to connecting at speeds that make using a web-based app near-indistinguishable from one run on our computer, back in 2000, 2001, or 2002, the average US internet speed was, at best, 400 kilobits per second, or roughly 50 kilobytes a second, compared to the average US internet speed of over 200 megabits per second, or 25 megabytes a second.
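The kilobits-to-kilobytes conversion above is just division by eight bits per byte. This Python sketch checks those figures and then, using a hypothetical 2 MB page (an illustrative size, not a measured average), shows what that gap meant for load times. It uses decimal units and ignores latency and protocol overhead.

```python
BITS_PER_BYTE = 8


def kilobytes_per_second(kilobits_per_second: float) -> float:
    """Convert a link speed from kilobits/s to kilobytes/s (decimal units)."""
    return kilobits_per_second / BITS_PER_BYTE


def seconds_to_download(size_megabytes: float, kilobits_per_second: float) -> float:
    """Raw transfer time for a file (decimal MB), ignoring latency and overhead."""
    size_kilobits = size_megabytes * 1_000 * BITS_PER_BYTE
    return size_kilobits / kilobits_per_second


early_2000s = 400    # ~400 kbit/s, the figure cited above
today = 200_000      # ~200 Mbit/s, expressed as 200,000 kbit/s

print(kilobytes_per_second(early_2000s))  # 50.0 (kB/s)
print(kilobytes_per_second(today))        # 25000.0 (kB/s, i.e. 25 MB/s)

page_mb = 2.0  # hypothetical page size, for illustration only
print(f"{seconds_to_download(page_mb, early_2000s):.2f} s then")
print(f"{seconds_to_download(page_mb, today):.2f} s now")
```

At those speeds, the same hypothetical page takes 40 seconds on the early-2000s connection and well under a tenth of a second today.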

In simpler terms, a website took time to load in a way that feels almost impossible to conceive if you didn’t experience it at the time. We’ve also had dramatic improvements in web design and accessibility, the advent of mobile browsing, and the proliferation of widespread mobile and desktop internet access. In the 2000s, we were at the very early days of eCommerce, and the weird irony of the dot com bubble is that it was actually pretty useful to lay millions of miles of fiber optic cable.

Here’s a critical difference between AI and the Dot Com Bubble: when people actually lit up the dark fiber, the underlying internet service was faster, better and cheaper than a dial-up connection. Services like TheGlobe, WebVan, and Pets Dot Com lost incredible sums of money not because of the costs associated with accessing their services, but because of their unrealistic and unsustainable business models.

Their eventual functional forms — Facebook, Instacart, and Chewy — didn’t require fundamental scientific breakthroughs in how goods were delivered or internet services were accessed. Their failures were a result of poorly run businesses that lost money by expanding too rapidly or spending $400 to acquire each customer.

Dell and CoreWeave just turned on the first Vera Rubin GPUs, and you’ll notice nobody is saying the words “profitable” or “sustainable,” because NVIDIA is interested in making stuff more expensive, not more efficient.

According to CEO Jensen Huang, AI data centers — which currently cost somewhere in the region of $50 billion per gigawatt — will now cost between $80 billion and $100 billion per gigawatt in the future. Does this sound like it’s getting cheaper to you? Even if said data center theoretically packs more “power,” what does that “power” do for the customer running compute on it? Is it cheaper? More efficient? How do we not have these answers?

All of this is to say that the Dot Com Bubble happened due to irrational exuberance and growth lust, and what was recovered at the end came not from scientific breakthroughs but the fact that the useful infrastructure existed and could be adapted and used to make things cheaper and more efficient.

That isn’t the case with AI data centers, AI startups or anything else to do with the AI Bubble.

Every few days somebody makes a post like this suggesting that “the internet didn’t go away” and “railways didn’t go away” when their bubbles popped, but I think this is a fundamental misunderstanding of what AI is.

An AI data center full of AI GPUs is useful for AI and very little else. There are GPU-powered analytics tools, GPU-powered modeling and scientific applications, but the nature of GPUs — good at doing the same thing across big data sets in parallel, but bad at handling many little independent tasks — makes them impractical for most of what modern computing demands.

The entire Dot Com Redemption storyline comes from the idea that it “left behind useful infrastructure,” by which they mean “cabling that allowed hundreds of millions of people to use the internet.” While there was some amount of further construction and capex to handle, the end result was useful fiber that connected people with a faster connection at a lower cost.

No such story exists for AI.

AI data centers are ruinously expensive, requiring billions in upfront funding with operating costs so high that they, at best, run at a loss for the first five or six years of service, if they ever recover their original costs at all. A rack of Vera Rubin or Blackwell GPUs will cost as much to run in five years as it does today, and an incomplete data center will cost just as much to finish building, to connect to the grid, or to supply with behind-the-meter (IE: generator) power.

In the aftermath of the Dot Com Bubble, dead startups flooded the market with cheap server and office gear, which allowed plucky founders to cobble together their own services. A single Sun Microsystems Ultra Enterprise 3000 cost $43,000 ($89,000 in today’s money) and had a power draw of between 1,200W and 1,500W, but could run an entire company’s infrastructure. A single B200 Blackwell GPU uses 1,200W, and more-complex AI coding tasks can take up four to twelve of them for a single user’s output. Put simply, you can’t really do very much with a few of these GPUs, and what you can do isn’t profitable, scalable or valuable.
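The power comparison above is simple multiplication. This Python sketch uses only the figures quoted in the paragraph and counts GPU draw alone; it deliberately ignores the CPUs, networking, cooling and other facility overhead a real deployment would add, so it understates the gap.

```python
# Power figures as quoted in the text.
SUN_UE3000_WATTS = (1_200, 1_500)  # one Ultra Enterprise 3000, low/high draw
B200_WATTS = 1_200                 # one B200 Blackwell GPU


def gpu_power_watts(gpu_count: int, watts_per_gpu: int = B200_WATTS) -> int:
    """GPU draw only; ignores CPUs, networking, cooling and facility overhead."""
    return gpu_count * watts_per_gpu


low, high = gpu_power_watts(4), gpu_power_watts(12)
sun_max = SUN_UE3000_WATTS[1]
print(f"4 to 12 B200s: {low:,} W to {high:,} W")
print(f"that's {low / sun_max:.1f}x to {high / sun_max:.1f}x one UE3000 at full draw")
```

On those numbers, the GPUs behind a single user's heavier coding task draw 4,800W to 14,400W, or roughly 3x to 10x what one UE3000 drew while running an entire company.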

Similarly, dark fiber could be lit up with the right transceivers and networking gear to create internet access. AI data centers are effectively large boxes with custom cooling built for a very limited subset of chips. Adapting them to other uses would require gutting the data center, which would mean that the vast majority of the capital expenditures were wasted.

Even if you were able to buy a hundred Blackwell GPUs from a dead neocloud, you, as a regular person, couldn’t do anything with them. In fact, nobody really could, because you’d still need a physical data center and bespoke cooling, which means that even if the chips were free, the associated construction capex or, at the very least, physical colocation space would still cost a great deal of money.

The internet and railways didn’t go away because their upfront costs were the only real costs that mattered.

Even if somebody were able to pick up a cheap AI data center full of the latest generations of GPUs, the underlying operating expenses are awful, and the only way to make them even close to generating a profit is to have consistent use of all your GPUs. There’s a cost to having them sit idle — both in electricity and personnel — and unless the plan is to have them sit in a data center turned off until you can find somebody else to sell them to, you’ll have to come up with a business model for your AI services that actually makes a profit…which nobody appears to have done, even with unlimited capital and the entire focus of the tech industry.

Then there’s the issue of training, which is entirely made up of opex. If you want to train a new model, you’ll likely need thousands (or even tens of thousands) of H100 or H200 GPUs, and they’ll use just as much electricity whether or not you make anything useful. A failed or unhelpful training run could cost tens of millions or hundreds of millions of dollars, and that will require financial backing that won’t exist.

While there could be a theoretical future of LLMs run at their true cost (IE: unaffordable for most) as I covered in last week’s premium newsletter, that would require demand, and as I’ve discussed above, the demand for AI services is a mirage built on subsidized subscriptions, and companies paying the actual costs are already screaming for mercy.

Once the bubble bursts, any excitement for AI — and by extension excitement to spend money on AI — goes out the window. AI startups won’t get funded. AI token budgets won’t get greenlit. AI data centers won’t be able to raise debt.

Every part of this bubble relies upon the momentum of hype to substantiate every link in the chain. Hype must exist around the nebulous concept of an “AI factory” to raise debt to buy NVIDIA GPUs and build data centers, hype must exist around AI software to convince enterprises to keep buying services from OpenAI and Anthropic, hype must exist around theoretical demand and outcomes from AI services to fund AI startups, and hype must exist perpetually in the media to make everybody ignore AI’s ruinous costs.

This hype was unsustainable without buckets of lies, misinformation and a captured tech and business media. The value of AI has been inflated by the vagueness of how it’s discussed. For example, major media outlets will gladly write that “AI can build software,” but said sentence suggests that you can just type “build me Slack 2” into Claude and have it fart out a fully-functional, production-ready piece of software, rather than a quasi-functional mound of code-slop that can do enough to trick a business idiot or lazy journalist, but little else.

Said vagueness created a society-wide gravitational pull of consensus that you needed to be behind AI now, because it’s just like the new internet, except bigger, and if you say it’s not you’re going to be really embarrassed.

Creating this pressure was necessary, because without a society-wide aggression against those who didn’t adopt these tools, AI might have actually had to stand on its own merits. The fact that AI companies backed by the full manufactured consent of the markets and most of the economy still had to subsidize their products shows exactly how flimsy their value truly is.

The only way to inflate the AI bubble both on a hardware and software level was to mislead the general public and investors on the costs and efficacy of AI models.

Now that organizations are having to pay the actual cost of AI, suddenly they’re concerned about its outcomes, and everybody has become a little hysterical.

Late last week, SemiAnalysis wrote one of the most insane articles I’ve ever read — AI Dark Output: The Visible Cost of Invisible Output — saying that “AI output will be real before it is measurable,” and, well, whatever the fuck this is:

We are at risk of having an event on the scale of the Industrial Revolution where most of the new output is invisible even as businesses spend increasingly large amounts on AI services.

SemiAnalysis is a semiconductor analyst firm with an obvious reason to keep the AI bubble inflated, and if they’re writing a piece that amounts to “AI has a return on investment, you just can’t see it,” things are getting desperate. Here’s how they define “Dark Output”:

Dark output is AI-enabled economic value that exists but is not visible, or is badly distorted, in GDP, prices, labor statistics, or industry accounts. We categorize this into two buckets:

1. Substitution dark output is work that was previously done by humans and is now done by AI. In our Dark Output Monitor we have identified roughly $1.5T in tasks that current generation AI could substantially augment or automate.

2. New dark output is new work done by AI that wasn’t previously being done by humans (probably because it was too expensive to do until AI made it cheap). In the long run this is likely to be much larger than the substitution side.

That “substitution dark output” is explained using a theoretical example of “...a simple legal document which in theoretical GDP should have the same inflation adjusted value to a user whether a lawyer drafts it or AI drafts it,” which is nonsense.

When you pay a lawyer, you don’t pay them to “create an output,” you buy their experience and time and ability to find and adapt case law to reach an outcome, such as in the process of filing stuff, avoiding or actively participating in litigation. Just because AI can fart out an approximation of what a human output may look like — likely riddled with hallucinations — doesn’t mean that said output was created with any “experience.” Models don’t think, they have no experiences, and even if a lawyer is prompting them, that doesn’t mean that the lawyer’s discernment or taste is reflected in the final output.

Then there’s this bit:

When AI takes over the task, the receipts vanish as the cost is absorbed in tokens, and when government officials survey lawyers on the cost of services they may find that the average price has gone up, as the simplest documents are now completed by AI and not lawyers. From the perspective of GDP, the transaction has effectively vanished except for a few dollars of tokens sitting in an unrelated sector of the economy.

We’re four fucking years into it but we’re still using hypotheticals. Are “...the simplest documents now completed by AI and not lawyers”? You don’t get a lawyer to write a document because they’re the only ones who can write it; you hire one to mitigate risk using the experience of the law firm, both in the associate drafting the document and the partner overseeing it.

This flimsy, half-assed logic is how the AI bubble got inflated in the first place. Supposedly smart people continually show a total lack of awareness of how jobs work at basically every level, and in this case — where it should be theoretically possible to find and talk to a lawyer doing this — the supposed “dark output” includes “the research done to complete this article.”

You may be wondering what that “new work done by AI that wasn’t previously being done by humans because AI made it cheap” is, and the answer is “literature reviews” and “summarizing the last six months of email,” and I wish I was kidding. But don’t worry, “...there are anecdotal signs that a large fraction of current token spend is for new work that wasn’t previously paid for rather than replacing existing work.”

Have you ever noticed that every story about AI job loss reads like it was written by The Riddler?

For example, last year a ton of outlets reported that “Oxford Economics had proven that entry-level workers were being replaced with AI,” but in reality, the study said that “...there are signs that entry-level positions are being displaced by artificial intelligence at higher rates” with no actual data beyond post-2022 employment declines in some fields that AI might be able to do.

Similarly, CNBC’s brainless headline that an MIT study found that AI “could already replace 11.7% of the US workforce” was entirely based on a labor simulation tool rather than any economic analysis of the actual shit AI can do and what it’s doing in the real world.

That’s because AI job loss is a fucking myth. Every company laying off people because of “the power of AI” is doing so because their shareholders are mad and because they know they’ll get headlines.

And if it were actually happening there’d be fucking riots in the streets! Unemployment would be spiking! Things would be burning!

The thing that everybody wants you to avoid thinking about is that if AI worked as advertised, there would be obvious, impossible-to-ignore economic signs:

For all of these things to happen, AI would have to be flawless and hallucination-free: a completely different product, capable of autonomous intelligence and of having unique ideas.

The reason that we can’t measure “AI job loss” is because AI can’t do jobs. It can be used to replace some specific contract positions with extremely shitty versions that don’t scale, but it does not replace jobs because it is incapable of human work. It cannot speak to colleagues, it cannot accrue experience, it does not have instincts or culture or taste or anything other than whatever training data has been crammed up its ass or through endless post-training.

Nevertheless, the threat of AI job loss has been enough to allow both Sam Altman and Dario Amodei to raise hundreds of billions of dollars lying about it, and now that both of them have walked back their job loss scare-propaganda, every oaf and moron that believed them without actually checking should be booted out of their respective industries. It’s fucking embarrassing! You should all be ashamed of yourselves!

As I said above, the ROI of AI should be really easy to measure if it actually existed.

If AI was magically able to build and maintain software, we’d have small companies that could build and deploy at the scale of a hyperscaler, and hyperscalers would, in theory, be expanding their margins so aggressively that it would create a new golden age of software revenues…or they’d become entirely infrastructure providers, as anybody else could compete on software.

But on a far-simpler level, it would be extremely obvious.

Anybody can access ChatGPT, Claude or Gemini, effectively anywhere in the world. The theoretical “power” of AI is that it “just does stuff,” and the proliferation of LLMs would mean that somebody would’ve “done” some “stuff” that we could point at with exceptional ease. Random guys in the midwest would be pumping out profitable, functional, and feature-rich software. Lawsuits would be won by pro se plaintiffs with incredible counsel from a theoretical “country of geniuses in a data center.”

Four years in, we’d have one major AI-powered company demolishing the competition in any industry, or every industry would become so saturated with (powerful) AI that it would effectively reduce the cost of the service to nothing.

We’d be able to point to companies that adopted AI and then completely fucking exploded. We’d be able to point to useless coworkers who were now doing impressive, meaningful work.

There would be widespread economic upheaval, as the concept of a “large company” would lose meaning, because those theoretical “geniuses in the data center” would be automating all the work.

There also wouldn’t be so many pieces insisting that AI is super powerful and so many quotes from Business Idiots saying it’s “real.” We wouldn’t talk about what AI could do at all. We wouldn’t need Anthropic to lie that Mythos was too powerful to release only to release it several months later.

We wouldn’t have to talk about the fucking potential at all because we’d be able to point to what was going on because it would be obvious!

Last week, Bain & Co. released a study of 951 executives from companies with more than $100 million in revenue, and unsurprisingly, the data did not definitively explain what the ROI of AI was:

In an April survey of 951 respondents from companies with more than $100 million in revenue, Bain found that 37% said they experienced cost reductions of between 10% and 20%, but a larger 40% saw improvements of 10% or less. Only 4% of global respondents achieved AI-related savings of more than 30%, the survey, shared exclusively with Bloomberg, found.

10% of…what? What’s the cost you saved on? 10% of $10 million is a lot for a company with $100 million in revenue, but 10% of $1000 isn’t, much like 20% or 30% isn’t either! Yet there are two punchlines to come:
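The "10% of what?" problem in a few lines of Python: a percentage saving means nothing until you know the base it was measured against. The two cost bases below are the hypothetical ones from the paragraph; the Bain figures quoted above don't specify any base at all.

```python
def savings_dollars(cost_base: float, savings_pct: float) -> float:
    """Convert a percentage saving into dollars against the cost it was measured on."""
    return cost_base * savings_pct / 100


# Hypothetical cost bases; the survey percentages come with no stated base.
for cost_base in (10_000_000, 1_000):
    for pct in (10, 20, 30):
        print(f"{pct}% of ${cost_base:,}: ${savings_dollars(cost_base, pct):,.0f}")
```

The same "10%" is $1,000,000 on one base and $100 on the other, which is exactly why the survey's percentages don't answer the ROI question.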

Here’s the part that Bain found the most troubling: 44% of large companies that are funding their next wave of AI spending are basing those investments on the last round of savings — savings that haven’t yet materialized for some of them.

This also assumes that those savings are enough to warrant future spending, which…this data does not actually prove.

Thankfully, Bain did manage to publish one of the single-funniest quotes of the AI bubble:

“The technology worked. The value didn’t arrive,” Bain concluded in the report. “Self-funding the next wave from past returns sounds like discipline. In reality, it is a circular bet with a structural leak,” the firm cautioned.

Put another way, the technology “worked (?),” but did not provide value in doing so. Sounds like it didn’t fuckin’ work to me!

Bain had one other crucial bit of advice:

“Companies that don’t validate their reinvestment math against what automation actually returned, rather than what it was supposed to return,” the report concluded, “are compounding risk rather than managing it.”

Just so we’re clear, Bain & Co, a management consultancy with billions in annual revenue, is advising its clients that they should make sure that they’re getting some sort of return on their investment? And that reinvesting in something that doesn’t have a return on investment would be bad?

If AI was real, these fucknuts would be replaced first! They’d replace everybody who wrote this report! You don’t need somebody to tell you this, and if you do you’re a fucking moron!

Thankfully, the AI industry is saved, as Sam Altman had the following to say about AI’s remarkable costs:

FABER: And you think the compute, you know, the last week I was hearing about compute, for example, companies starting to wonder, well, what are we spending it on? Our bills are going through the roof, and it’s not clear to us exactly what – you know, in other words, I know a lot of my spend is going well, but I don’t know which part of it.

ALTMAN: So I think this is the most fair contribution – criticism right now of AI, which is, you hear companies saying, I am spending a ton of money on AI. And I know some great stuff is happening, but I know there’s a ton of waste, and you know, when – how long do I have to wait for it to really show up in revenue, and how long do I have to wait to really get the costs under control? And I assume that the industry will figure that out pretty quickly, but I think that is a fair, a fair issue.

Motherfucker you are the industry! You are the one that has to work this out! OpenAI is the AI industry! You are OpenAI’s CEO! You lazy, ignorant, dog-brained loser!

This was an opportunity for “journalist” David Faber to push back, and here’s how that went:

FABER: You do.

ALTMAN: Yeah.

FABER: Quickly being –

ALTMAN: I would bet that by another year or two from now, there is a much better rationalization of companies’ spend relative to outcomes.

FABER: And finally, Sam, are we ever going to see things like this up in space?

This is how the AI bubble inflated! This is how it happened! It happened every time a journalist asked a meaningful question and then immediately diverted to a totally different imaginary topic that made the subject feel good! David Faber, resign and give your job to somebody who has an iota of courage or pride in their work! Unbelievable!

Sam Altman is worth billions of dollars, and OpenAI is allegedly worth $852 billion too, and the best he can give us is “teehee, someone else will work it out,” because Sam Altman is a loser that ingratiates other losers empowered by losers to sell loser technology to other losers, and the only way that he’s been able to do this is because the people that should know better are sitting around with their thumbs up their asses asking him whether there will be data centers in space.

If AI had ROI, we wouldn’t be debating whether it had ROI. We wouldn’t discuss its potential, or whether it could, theoretically, under different circumstances, at some point in the future, in a way that nobody can describe, become super powerful and do all of the stuff it can’t do today.

If AI had ROI, we’d be able to point with specificity to inarguable examples of economic impacts. AI boosters can jerk their binguses all they like about how Spotify’s CEO said its best engineers don’t write any code anymore. What does that mean? Is Spotify shipping better features, and are those features launching at a rapid clip? Is the software more secure, or stable? Spotify’s design still looks like absolute dogshit! Most software is worse! Things keep breaking everywhere, and in many cases it’s because of AI coding tools!

In fact, I’d be willing to believe that AI has had a negative economic impact, increasing operating expenses across the board and giving some software engineers prompt-based concussions: it automates some coding in a way that makes them lazy and bad at writing software, speeding up the process of writing code while producing so much of it that it’s impossible to review it all (see Mo Bitar’s video). LLMs appear to be able to write some code, sometimes, and do so at high speed, and they ingratiate software engineers who don’t really care about writing software by making them feel like they wrote it.

While it might allow some things to go theoretically faster, the overall economic impact of AI-generated code appears to be worse code, worse software, and massive, multi-million dollar bills from Anthropic and Cursor. I will concede that some software engineers seem to like these things, and that many software engineers appear to be using them, but I am yet to see a single one who obsessively posts about their token spend create anything of note or worth, and none of these people appear to be able to point to the actual ROI of all that AI they’re using.

I realize I’m painting with a broad brush, so let me get a broader one: I believe anyone who relies on LLMs for anything is a mark.

I don’t give a shit if you use them to spit out a script or do some simple sideline part of your job, or transcribe or dictate into them, or if you’ve used them as a search engine (and even then, you best check every source!), but the moment you rely on and run your entire process on these things, I immediately doubt your ability to do anything, or at the very least wonder how gullible you truly are when somebody ingratiates you enough.

Why? Because every single “AI setup” I’ve seen anyone ever use involves a Rube Goldberg machine of bullshit deterministic scripts to try and bring the hallucination-guaranteed nature of LLMs to heel, usually to the point that you’re doing more work making the LLM work than you did before they existed, and you’re only proud of it because you feel like you’re special.

Sidenote: If you’re normal about LLMs I have no beef with you – but something about this technology makes people act irrationally and aggressively to skeptics in a way that requires them to debase themselves. This is a product, and if you feel the need to defend a product you are the victim of a con.

There are, of course, exceptions. I’ve talked to a few people who describe LLMs normally, without hype, who tell very specific stories of very specific outcomes that save indeterminate amounts of time. There are some that have used LLMs to create Python scripts to search and organize data, to which I say “you’re impressed with Python, not LLMs.”

If all we’re left with from this era is the ability for some people to write Python scripts without learning Python, this is still an egregious and horrifying waste of capital.

Remember: what you are using is the end result of over a trillion dollars of investment. It is only made possible through manufactured consent that actively misinforms people about the current and future capabilities of LLMs. They didn’t raise hundreds of billions of dollars by talking about any product currently on the market, and that’s because the current products are not very good products.

You are all the victims of a con. No matter how “well” your Breakfast Machine of different API calls and if-this-then-that automations may or may not function, you have been sold a bill of goods for “artificial intelligence” that is impossibly stupid. When some of you are pushed to prove the ROI of AI, you immediately return to boring talking points about Uber, or the Dot Com Bubble, or some other slop fed to you by people actively conning you at this very moment.

I mean this with as much empathy as I can muster: if you’re a huge AI booster, why do you defend this so vociferously? What is it about my criticism that hurts? Is it that I’m yucking your yum? Is it that I don’t immediately ingest and regurgitate the theoretical idea that the thing you’re using all the time is or may become sentient? Is it because I’m not impressed?

I think it’s far more likely that people are angry that I’m asking simple questions that should have satisfying answers, and don’t. I’m also fundamentally unimpressed with anything I’ve seen an LLM do, because my requirement for software or hardware is that it works as advertised, and the very fundament of the AI con is that LLMs are sold based on their theoretical capabilities.

The reason nobody can show you the ROI from AI is that AI does not have a return on investment. Large Language Models can speed up some things in a way that becomes less valuable and less accurate as the complexity of the task increases, and more investment in AI data centers does not appear to do anything other than expand the number of tasks that an LLM can attempt.

While some people have been able to get something out of generative AI, that something never seems to be a tangible or impressive achievement. Every “successful” AI story is a result of either ignoring the obvious problems with LLMs or mitigating them at a great cost for an aggressively expensive and mediocre result.

LLMs are sold as “AI,” a technology best-known for automating things, yet they can’t be trusted to run anything on their own.

Instead, they manipulate the user into covering up their errors, explaining away their failures, coddling their meager returns and crediting them with the actual labor that LLMs are meant to automate away.

They do so because their investors and executives con the media and the markets with outright lies and half-truths that exploit society’s weak points. The media and markets are informed by people who understand neither technology nor history, and by Business Idiots who have reached the heights of their careers through diplomacy and ratfucking, and who care only about attention and adulation for things that other people do.

LLMs coddle the easily-led and narcissistic into believing that the model is doing the work as the human being has to constantly cater to the model’s inefficiencies and inabilities, using more energy and resources than any technology ever made.

And yet with all the money, all the attention, all the resources, all the land, all the power, all the affordances and excuses and endless fucking applause for mediocrity, nobody can actually point to the ROI of AI, because it doesn’t exist outside of it burping out stolen content and enriching and ingratiating billionaire dullards. Even at a hundredth of the price I’d be dismissive, because everything I’ve seen is so decidedly unexceptional.

I realize that some will say I’m dismissive of LLMs’ capabilities, and I’m sorry, but I’m just not impressed. You spent a trillion dollars to make it somewhat easier to code some things, sometimes, though not in a way that actually results in anything, and to produce research reports that nobody will read, shitty PowerPoint decks and Excel spreadsheets, and art that looks like stock images because that’s exactly what it was trained on.

This shit needs to work every time without fail and be absolutely flawless and autonomous.

You are paying for a tool. You are paying for software. You are a customer. Your job is not to explain to others why this is exciting, nor is it your job to cover up for its mistakes. If you truly love this stuff, you should be secure enough in that love that you don’t feel compelled to defend it or to demean those who disagree.

The fact that I have to write that sentence is proof that something is very, very wrong with the AI industry, and that LLMs are about far more than software.

If you liked this piece, you should subscribe to my premium newsletter. It’s $70 a year, or $7 a month, and in return you get a weekly newsletter that’s usually anywhere from 10,000 to 18,000 words, including vast, detailed analyses of the biggest events and companies in the AI bubble.

2026-05-30 00:58:10

Last week I ran the second part of my three-part “What If…We’re In An AI Bubble?” series where I have been covering the scenarios that I believe could lead to the bubble popping.

Here’s what I’ve discussed so far:

Today I want to start with a very simple rundown of what has to happen for the AI bubble to make sense. These are all points that are rooted entirely in the projections and sales of the companies in question.

As NVIDIA intends to sell over a trillion dollars of Blackwell and Vera Rubin GPUs by the end of 2027, around 40GW of data center capacity (assuming a PUE of 1.35) needs to be built to support the 30GW+ of GPUs it will have sold.

With that compute being sold at around $12 million a megawatt (based on discussions with analysts and sources), that means that there must be around $435 billion in global annual compute demand to substantiate the amount of GPUs sold.
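To make the arithmetic above concrete, here is a back-of-envelope sketch in Python. The inputs (30GW of GPUs, a PUE of 1.35, roughly $12 million per megawatt) come from the two paragraphs above; treating that price as an annual figure, and the helper names, are assumptions for illustration. The text doesn’t say whether the price applies to GPU (IT) megawatts or total facility megawatts, so the sketch prices both.

```python
# Back-of-envelope sketch of the capacity and demand math above.
# Assumptions: the ~$12M/MW price is annual, and "30GW+" is taken as exactly 30GW.

def facility_gw(it_gw: float, pue: float) -> float:
    """Total facility power for a given IT (GPU) load.

    PUE (power usage effectiveness) = total facility power / IT power,
    so total facility power = IT power * PUE.
    """
    return it_gw * pue


def annual_compute_revenue_usd(megawatts: float, usd_per_mw_year: float) -> float:
    """Revenue needed per year if every megawatt is rented at the given price."""
    return megawatts * usd_per_mw_year


IT_LOAD_GW = 30.0            # GPUs sold, per the text ("30GW+", so a floor)
PUE = 1.35                   # PUE assumed in the text
PRICE_PER_MW = 12_000_000    # ~$12M per MW, assumed to be per year

total_gw = facility_gw(IT_LOAD_GW, PUE)
print(f"Facility capacity needed: {total_gw:.1f} GW")

for label, gw in (("IT (GPU) load", IT_LOAD_GW), ("total facility", total_gw)):
    usd = annual_compute_revenue_usd(gw * 1_000, PRICE_PER_MW)  # GW -> MW
    print(f"Priced on {label} MW: ${usd / 1e9:,.0f}B per year")
```

Under these assumptions, 30GW at a PUE of 1.35 needs 40.5GW of facility capacity, and the annual demand lands between about $360 billion (priced on GPU megawatts) and $486 billion (priced on facility megawatts). The ~$435 billion figure above sits inside that range; at $12 million per megawatt it corresponds to roughly 36GW.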

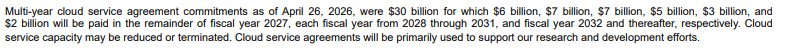

Outside of OpenAI and Anthropic, there doesn’t appear to be more than a few billion dollars of demand. Another concerning sign is that NVIDIA has had to agree to spend $30 billion in multi-year cloud compute agreements across the very partners it’s selling GPUs to (per page 16 of its most-recent 10-Q):

The other problem is that data centers are taking way, way too long to finish, taking upwards of 24 months even for smaller 40MW builds.

This means that…

Put another way, NVIDIA’s continued growth relies on people believing that A) these data centers will get built and B) they’ll actually make money.

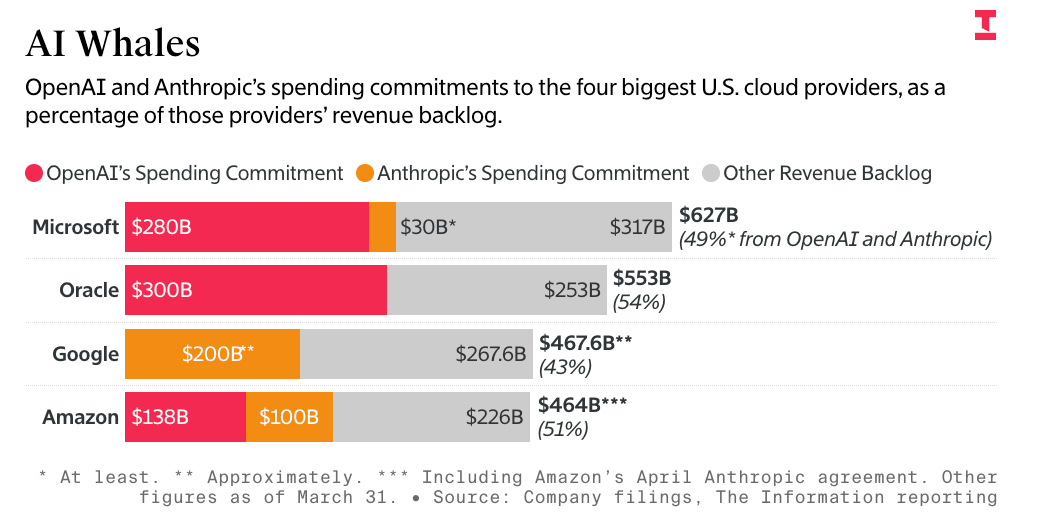

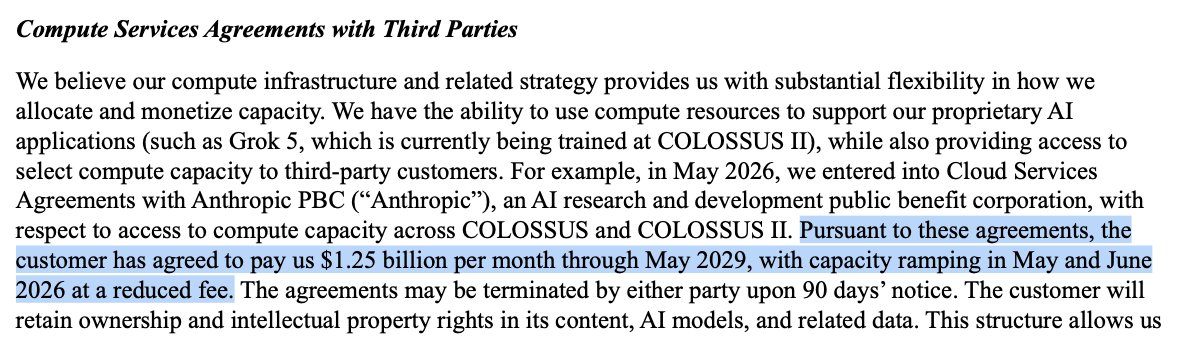

Per COO Greg Brockman, OpenAI will spend around $50 billion on compute in 2026, and I imagine Anthropic will spend in or around the same amount, especially as it’s now agreed to spend $15 billion a year on Musk’s Colossus data centers on top of whatever it spends on Google Cloud, Microsoft Azure and Amazon Web Services.

$100 billion is nowhere near enough to justify the compute being built. And while Anthropic and OpenAI have made more than $1.1 trillion in compute commitments in the next 3-5 years across Microsoft, Google, Amazon, Oracle, CoreWeave, Cerebras, Terawulf, and Cipher Mining, there’s so much more compute that needs to be sold on top of that.

Even if both doubled their spend in a year, we’d still need at least another two Anthropic or OpenAI-sized compute customers (either in aggregate or as separate companies) at a time when I can’t find a single other company spending even a hundred million dollars a year on compute. Most AI startups (and customers) want to pay Anthropic or OpenAI directly to access their models, which means that Anthropic and OpenAI themselves need to use roughly twice the compute they do today, and then some, to meet the capacity being built.

This will require them to do something either historic or impossible.

This is not hyperbole!

OpenAI, per The Information, plans to burn $852 billion through the end of 2030. Anthropic has, per The Information, agreed to spend $330 billion on compute on Microsoft, Google, and Amazon, at least another $30 billion on compute with CoreWeave, and another $63 billion in TPUs bought from Broadcom.

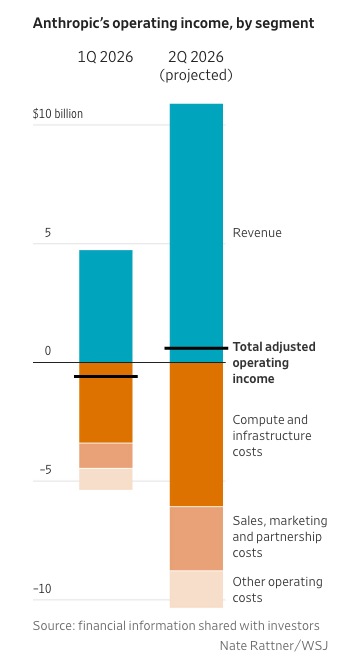

To reach this point, Anthropic projects it will hit $174 billion in annual revenue by the end of 2029, and OpenAI $284 billion. Both have made ridiculous claims of profitability (with Anthropic actively conning investors with a “profitable” quarter based on discounted bills) in the next few years that are immaterial to the larger point that they need actual, real cash to meet their obligations.

This is, again, not hyperbole. If we assume that the services in question are profitable, sustainable businesses, then the revenues from AI services must exceed the cost of the AI compute behind them by a reasonable margin. It isn’t enough for us to have a few AI companies that spend a lot more on compute than they take in revenue, because at some point venture capital subsidies will run dry.

This isn’t happening. Putting aside the profitability part for a second, OpenAI and Anthropic account for 89% of all AI startup revenues, with the nearest competitor being Cursor with its pathetic $3 billion in annualized revenue. These are rookie numbers. They are insufficient. We need so much more than this.

Again, not hyperbole! These are OpenAI and Anthropic’s own revenue projections — $184 billion and $174 billion respectively — that they expect to hit by the end of 2029. These are the same projections that have been used to make their $1.1 trillion in compute commitments, much of which make up 50% of Google, Amazon, and Microsoft’s remaining performance obligations:

These commitments reflect expected revenue and demand for OpenAI and Anthropic’s services, but they’re commitments, which means that they need to be paid even if that demand doesn’t exist.

This is a huge problem for these companies. If they buy too much compute and don’t have the demand and revenue to support it, they’ll go bankrupt.

To be clear, that’s not my opinion, it’s what Anthropic CEO Dario Amodei said to Dwarkesh Patel in February, emphasis mine:

Basically I’m saying, “In 2027, how much compute do I get?” I could assume that the revenue will continue growing 10x a year, so it’ll be $100 billion at the end of 2026 and $1 trillion at the end of 2027. Actually it would be $5 trillion dollars of compute because it would be $1 trillion a year for five years. I could buy $1 trillion of compute that starts at the end of 2027. If my revenue is not $1 trillion dollars, if it’s even $800 billion, there’s no force on earth, there’s no hedge on earth that could stop me from going bankrupt if I buy that much compute.

Even though a part of my brain wonders if it’s going to keep growing 10x, I can’t buy $1 trillion a year of compute in 2027. If I’m just off by a year in that rate of growth, or if the growth rate is 5x a year instead of 10x a year, then you go bankrupt. So you end up in a world where you’re supporting hundreds of billions, not trillions. You accept some risk that there’s so much demand that you can’t support the revenue, and you accept some risk that you got it wrong and it’s still slow.

That is not good! As I’ve covered before, buying compute is a knife-catching game where you have to guess how much you need for a particular year, and if you guess correctly you don’t lose as much money but if you guess wrong you run out of money.
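Amodei’s point can be sketched as simple arithmetic. This Python example sizes $1 trillion a year of compute for 10x annual growth, then checks what revenue looks like at the end of 2027 if growth is only 5x. The $10 billion starting figure is inferred from his “$100 billion at the end of 2026” path rather than reported, and treating all revenue as available to pay for compute is a deliberate simplification that understates the gap.

```python
# Sketch of the compute-sizing bet described in the quote above.
# Hypothetical inputs: $10B end-2025 revenue (implied by "10x a year" reaching
# $100B at end of 2026) and a $1T/year compute commitment starting end of 2027.

def revenue_path(start_usd: float, growth: float, years: int) -> list[float]:
    """Year-end revenue for each of `years` years of constant multiplicative growth."""
    return [start_usd * growth ** (y + 1) for y in range(years)]


START_REVENUE = 10e9          # hypothetical end-2025 revenue
COMMITMENT_PER_YEAR = 1e12    # "$1 trillion a year" of compute
COMMITMENT_YEARS = 5          # "for five years"

print(f"Total commitment: ${COMMITMENT_PER_YEAR * COMMITMENT_YEARS / 1e12:.0f}T")

for growth in (10, 5):
    rev_2027 = revenue_path(START_REVENUE, growth, 2)[-1]  # end-2026, end-2027
    gap = COMMITMENT_PER_YEAR - rev_2027
    print(f"{growth}x/year: end-2027 revenue ${rev_2027 / 1e9:,.0f}B, "
          f"yearly shortfall vs. compute ${gap / 1e9:,.0f}B")
```

At 10x, revenue exactly covers the $1 trillion yearly bill; at 5x it reaches $250 billion, leaving a $750 billion hole every year. Amodei’s own threshold is stricter still, since he says even $800 billion of revenue against that bill would mean bankruptcy.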

It should be far more worrying to executives that the CEO of the single-largest AI company is basically saying that if he mistimes growth, his company explodes!

Per Business Insider, Uber COO Andrew Macdonald said this weekend that it was becoming “harder to justify AI costs within the company”:

"That link is not there yet, right?" he said. "I think maybe implicitly there is more that is getting shipped, but it's very hard to draw a line between one of those stats and, 'Okay, now we're actually producing 25% more useful consumer features.’

Macdonald added that AI can seem free if you're "just a user sitting there coming up with interesting use cases" without paying for it. But ultimately, the company foots the bill.

Anthropic’s meteoric revenue growth has come from both AI startups burning more tokens (as Opus 4.7 appears to burn more than ever) and large organizations doing some form of “token-maxxing,” meaning that they tell their employees to use AI as much as they want, usually with KPIs that specifically track AI usage, as is the case at Meta, Amazon, and Zillow.

Even organizations that aren’t actively incentivizing their engineers to burn more tokens are finding they’re blowing through their budgets at record speed. The situation with Uber’s COO was caused by his CTO saying back in April that the company had burned through its entire annual token budget in four months. Similarly, my reporting on Zillow’s AI spend showed that it will likely max out its annual Cursor budget by the end of May.
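Budget-burn figures like these can be turned into an annualized run rate: if a year’s budget is gone in n months, spending at that pace for twelve months comes to 12/n times the budget. This is a minimal Python sketch; it assumes spend stays at a constant monthly pace, and for Zillow it assumes a budget year starting in January (so “the end of May” means five months), neither of which the reporting states.

```python
# Annualized run-rate sketch for token budgets exhausted early.
# Assumes a constant monthly burn rate; real spend is rarely that smooth.

def annualized_multiple(months_to_exhaust: float) -> float:
    """How many times over its annual budget an organization would spend
    if it kept the pace that used up the budget in `months_to_exhaust` months."""
    if months_to_exhaust <= 0:
        raise ValueError("months_to_exhaust must be positive")
    return 12 / months_to_exhaust


cases = {
    "Uber (annual token budget gone in 4 months)": 4,
    "Zillow (annual Cursor budget by end of May, assuming a January start)": 5,
}
for label, months in cases.items():
    print(f"{label}: {annualized_multiple(months):.1f}x the budget")
```

At those paces, Uber would be running at roughly three times its annual token budget and Zillow at roughly 2.4 times its Cursor budget.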

The problem, as Macdonald said, is that nobody can seem to track all of this spend to an actual return on investment. This isn’t a situation where somebody is saying “the ROI is low but improving” or “we’re on the path to working that out,” but one where “it’s very hard to actually draw a line between ‘what we’ve spent’ and ‘a reason we’re spending it.’”

Sidenote: To be clear, Anthropic only moved organizations to token-based billing in Q1 2026. Mere months of being charged the actual costs of their AI usage already has organizations squealing like stuck pigs.

This makes it hard for Uber to say how much it should reduce its token budgets. If you can’t measure the return on investment, how do you measure how much you’re meant to spend? What is “enough”? Because right now it’s clear that whatever they’re spending is too much, which means that there’s a ceiling to Anthropic and OpenAI’s revenue story.

OpenAI and especially Anthropic cannot afford for this conversation to be happening, because it suggests there’s a ceiling to the amount that people will spend on AI. It appears there’s a limit to how far organizations can be abused and manipulated into believing that “the future is here,” and that limit is when they pay millions for something that doesn’t appear to have a measurable return on investment.

Anthropic and OpenAI need organizations to willingly spend the equivalent of 10% to 100% of their headcount costs on AI, as their revenue projections are clearly tied to every organization maintaining a significant spend on tokens in perpetuity.

There’re really two problems:

This is budgetary poison. Right now, the vast majority of AI token spend is experimental, and if companies are already hesitating at the amounts they’re spending, Anthropic has no way to keep growing, and it also has no super secret models or harnesses or products that are going to reverse this trend. Nobody knows why they’re spending so much money or even how much money they might spend in a given month, which makes it tough to view Anthropic’s (suspicious) revenue growth as anything but a chaotic money-dump driven by CEOs who don’t know what their companies actually do and have been beguiled by the AI grift machine.

And as I wrote up last week, OpenAI had a negative 122% operating margin in Q1 2026, and ChatGPT growth has stalled. It is unclear what its API revenue is, but it’s likely much lower than Anthropic’s, despite OpenAI shoving its enterprise customers onto token-based billing not long after Anthropic did.

As I’ve said: this cannot happen, and neither Anthropic nor OpenAI can afford to slow down. Their revenues must grow to over $100 billion by 2028, as their compute commitments demand it. Their growth must continue.

It’s been a little under four years of endless confidence about the inevitable growth of generative AI, and by extension the eternal success and growth of OpenAI.

Yet in reality, its economics have only ever soured, and its growth appears to be collapsing.

In October 2024, The Information reported that OpenAI believed it would turn profitable in 2029, that its total losses between 2023 and 2028 would be $44 billion, and that its (non-GAAP; every one of these numbers is non-GAAP) gross margin would be 41% in 2024, though it ended up a point lower, at 40%. OpenAI would then project a gross margin of 49% for 2025…but it ended up at 33% anyway.

OpenAI would also say on September 5, 2025 that it would actually burn $115 billion through 2029, but that “burn” assumed that it would have revenues of $60 billion in 2027, $100 billion in 2028, $145 billion in 2029, and $200 billion in 2030, when it would “become profitable” in some undiscussed manner. Two weeks later, on September 19, 2025, The Information would report that actually OpenAI would spend “about $450 billion to rent servers through 2030,” but not otherwise update the burn-rate.

On November 4, 2025, OpenAI CEO Sam Altman would say that the company had hit $20 billion in ARR and had made $1.4 trillion in commitments “over the next 8 years,” and a few months later, on February 20, 2026, OpenAI would claim that it had targeted “around $600 billion in compute commitments by 2030.” The very same day, The Information would report that it planned to spend $665 billion on compute through 2030, that it missed gross margin projections (without sharing what those margins might be), and that ChatGPT had hit 910 million weekly active users that month, 90 million short of its goal of 1 billion by the end of 2025.

It’s very obvious by now that OpenAI has been making up all of its projections, and that none of the numbers actually add up. My own reporting from November 2025 from actual Azure personnel suggests that OpenAI’s Q1 to Q3 revenues were billions lower than every other reported figure, and I think it’s likely that OpenAI is overstating its revenues.

In any case, on May 22, 2026, The Information would report that OpenAI’s Q1 2026 operating margin was negative 122%, and that its Q1 average weekly active users (WAUs) sat at 905 million — suggesting that growth has stalled.

OpenAI had anticipated that it would cross the one billion WAU mark by the end of 2025 — and it blamed its failure to do so on fiercer competition, primarily from Google’s Gemini.

For OpenAI to afford its compute commitments, it has to make or raise $852 billion in the next four years. It must have that cashflow, or it will run out of money or be sued out of existence by its numerous counterparties from CoreWeave, Microsoft, Amazon, and Cerebras.

Sidenote: To be clear, Anthropic is in exactly the same boat, with $375 billion in compute commitments (and that’s only assuming a single year of SpaceX compute, billed at $1.25 billion a month) for a company that can only afford them if its supposed $50 billion of annualized revenue becomes its actual revenue and also fucking quadruples in the space of four years.
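The two numbers in that sidenote can be checked quickly. This Python sketch annualizes the monthly SpaceX bill and works out the compound annual growth rate that quadrupling revenue in four years implies; the variable and function names are illustrative, and the calculation assumes smooth, constant growth.

```python
# Quick checks on the sidenote's arithmetic (illustrative, assumes constant growth).

SPACEX_MONTHLY_USD = 1.25e9   # "billed at $1.25 billion a month"
annual_spacex = SPACEX_MONTHLY_USD * 12
print(f"One year of SpaceX compute: ${annual_spacex / 1e9:.0f}B")


def required_cagr(multiple: float, years: float) -> float:
    """Constant yearly growth rate needed to grow by `multiple` over `years` years."""
    return multiple ** (1 / years) - 1


ANNUALIZED_REVENUE_USD = 50e9   # "supposed $50 billion of annualized revenue"
cagr = required_cagr(4, 4)      # quadruple in four years
print(f"Required growth: {cagr:.1%} a year, "
      f"reaching ${ANNUALIZED_REVENUE_USD * 4 / 1e9:.0f}B")
```

A year of SpaceX compute comes to $15 billion, the same annual figure cited earlier for the Colossus deal, and quadrupling $50 billion to $200 billion in four years means sustaining roughly 41% growth every single year.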

In the final part, I’m going to get into the depths of destruction — the unraveling of the greater data center debt industry, the massive damage to private credit to come, potential shareholder lawsuits against NVIDIA, and the consequences of the deaths of OpenAI and Anthropic.

Time. Space. Reality.

It's more than a linear path — it’s a prism of endless possibility. I am the Watcher, and I am well aware of how AI generated that sentence sounds.

I am your guide through these vast new realities.

Follow me and dare to face the unknown.

And ponder the question…

What If…We’re in an AI Bubble?

I also want to add that I realize three headlines didn’t make the cut — what if there’s not a bailout, what if I’m wrong, and what if I’m right — and I intend to cover all three of them in future free newsletters.

Nevertheless, today’s is an absolute beast, a 16,000-word conclusion to the first multi-part Where’s Your Ed At Premium.

2026-05-27 00:47:30

If you liked this piece, you should subscribe to my premium newsletter. It’s $70 a year, or $7 a month, and in return you get a weekly newsletter that’s usually anywhere from 5,000 to 18,000 words, including vast, detailed analyses of NVIDIA, Anthropic and OpenAI’s finances, and the AI bubble writ large. My Hater's Guides To Private Credit and Private Equity are essential to understanding our current financial system, and my guide to how OpenAI Kills Oracle pairs nicely with my Hater's Guide To Oracle.

This week, I’ll publish the final part of my ongoing series (“What If…We’re In An AI Bubble?”) about the factors and events that will cause the AI bubble to finally pop, focusing on what consequences might follow the collapse of OpenAI and the wider data center industry.

Subscribing to premium is both great value and makes it possible to write these large, deeply-researched free pieces every week.

Today I’m going to speak from the heart, and tell you that we’re ruled by fucking imbeciles.

AI is a perfect storm of failed concepts and organizations, and the apex of the Era of the Business Idiot, an epoch where we’re ruled by people so thoroughly disconnected from the actual workforce that it was inevitable that a technology would be created specifically to grift them.

Just ask Aaron Levie, CEO of Box:

CEOs are uniquely prone to AI psychosis because they’re sufficiently distant from the last mile of work that still has to happen to generate most value with AI.

LLMs are dangerous for many, many reasons, but the under-discussed one is how well they play to a certain kind of executive imbecile. Generative AI is, to quote Mo Bitar, really good at doing an impression of work, much like most managers and C-suite executives, and even if it’s completely incapable of doing something, it’ll absolutely say it can and tell you you’re amazing for suggesting it.

And that’s why Business Idiots love it.

Where regular human beings would say annoying things like “that’s not possible within that timeline” or “we don’t have the resources to do it,” AI will say “of course, right away!” and burn as many tokens as possible. When it makes mistakes, it’ll apologize — as it should because it failed you — but then promise to do better next time, all while costing so much less, at least in theory, than a regular, stinky human being.

It’ll create a PRD (product requirements document) of a theoretical software project with the confidence and vigor that you need to take it immediately to a software engineer and say “build this immediately,” and when the software engineer tells you a bunch of bullshit about it not being possible, it’ll spit out several convincing-sounding responses. Fuck, why even bother talking to that engineer at all? Claude Code can mock up a prototype that you can then shove in their fucking face before you fire them for not using AI to do it themselves.

I realize I sound a little churlish and dismissive of those who may or may not actually get something out of AI, but this entire industry feels like a mixture of kayfabe and ignorance, slathered with a kind of angry desperation that reflects the distance between reality and fantasy, driven by people that don’t do any fucking work.

Any executive-level fuckwit you’ve met in your life now has a seemingly-powerful tool that can burp up mimicry of open source software and, if you constantly prompt it, eventually get something half-functional onto some sort of web server. When you face bugs, it’ll try and fix them, sometimes also “fixing” code elsewhere (by adding or deleting it) in an attempt to be helpful, like when Cursor, using Anthropic’s Claude Opus 4.6 model, deleted an entire production database and all its backups. It will never, ever say no, even if it’s incapable, even if it has no thoughts, even if what you are asking is equal parts impossible and unreasonable in both its timescale and scope.

A Business Idiot, given his druthers, can sit there and fuck around and make an LLM spit out something that makes him feel like he’s coding, which in turn makes him feel that you, a lazy and stupid engineer, could do even more with the power of AI. It doesn’t matter that it costs an absolute shit-ton of money, or that there’s no way to measure its efficacy. The Lion does not concern himself with things like “efficacy” or “productivity,” and the Lion is increasingly tired of your whining! The Lion doesn’t even understand what it is you do every day other than not doing what The Lion is asking for!

You laugh, but this is genuinely how the majority of managers and executives think and act, and now they have a special chatbot that can fart out functional-enough prototypes to convince a Business Idiot they can do anything, because executives and managers do not regularly do much work. As a result, they have little idea what work looks like other than when they look over your shoulder, which is why they wanted you back in the office, and their distance from production is why the same people who were anti-remote work are now aggressively trying to shove AI down your throat.

Organizations aren’t burning millions or hundreds of millions of dollars a year on AI because it’s good, they’re doing it because they are run by people who do not know what the fuck they’re doing.

Generative AI is catnip for hall monitors, snitches, toadies, and any other group that hates work and loves talking down to others. Put another way, it ingratiates losers who believe that learning to do or being good at something is a waste of time, because they deserve to just do what they want without any of that messy “effort.”

While I’m not saying every LLM user is an imbecile, LLMs are built to convince the mediocre and incurious that they’re remarkable, and it turns out that a great many of those people run venture capital firms and Fortune 500 companies.

I also want to be clear that while there are sane and normal people who use these things, they’re mostly drowned out by a crowd of people who oscillate between bootlicking and regurgitating capitalist mythology in a way that makes it hard to trust anybody who spends significant amounts of time using an LLM.

One thing you’ll notice about the most moistened AI boosters is that they lack any real pride in their work. Everything they say must, at some point, compliment the mindless, unprofitable, unreliable tool underneath it — how “incredibly powerful” it is, how it’s “only getting better,” how it’s “only the beginning” of something that’s eaten over a trillion dollars and absorbed the majority of venture capital.

It isn’t about the work, or the craft, or the thought behind it. Everything is a numb, mindless death march toward saying “job done” and burping out some sort of pseudo product, if one even exists.

I’m not even being sarcastic! Per Bloomberg, Salesforce has been marketing “powerful AI products” that don’t actually exist:

Patients at the University of Chicago Medicine featured in a promotional video seamlessly refill prescriptions, book appointments and even get parking tips with the help of Agentforce, Salesforce Inc.’s flagship AI tool.

All this is possible, according to the video released in October, with the new technology. But the scenes are largely aspirational — little of that AI functionality is live. Patients who call the hospital system today are greeted with keypad-selection menus and routed to human schedulers. Its chatbot is still being tested and not visible to most web visitors.

The problem is that chunks of the capabilities shown in presentations – including from top customers like Williams-Sonoma Inc. and Finnair Oyj. – are actually mock-ups that aren’t yet widely in use.

In a rational society, Salesforce’s stock would take a beating and the SEC would open an immediate and brutal investigation.

Sadly, our society is oriented around the power fantasies of the mediocre and spiritually-dead losers, people bereft of pride or joy in the things they create who believe that they’re owed everything.

They’re Business Idiots, and they are your enemy. Even those who believe they’re aligned with the Business Idiots by supporting and using Large Language Models are the enemy, because The Business Idiots believe that “AI” will simply remove anybody else from the picture, automating work, creativity, communication, friendship, and that includes anyone that helped its ascent.

And yet none of it’s really working, because Business Idiots don’t really know how anything works.

As I said back in the original piece, think of The Business Idiot as a kind of con artist, except the con has become the standard way of doing business for an alarmingly large part of society. Salesforce, one of the most prominent hypesters behind the AI bubble, has spent millions of dollars on advertising and marketing to promote a product that doesn’t exist in the way that it’s being sold.

Sidenote: Now, I want to preface this by saying that not every LLM user is a loser. There are plenty of people bullied into using these models by their bosses, who use them as a glorified search engine, or who use them to write quick scripts or coding snippets to speed up their work. I think this is pretty clear in the copy, but I’m anticipating that somebody will read that title and then stop reading entirely.

Only an economy oriented around coveting and coddling losers would have let AI get this far. Every single story about AI has to either directly gloss over the obvious financial and technological issues or start speaking in the kinds of vague theoreticals reserved for cults and multi-level marketing scams. Even Bloomberg’s piece — which is pretty critical! — helps gaslight Salesforce’s customers by quoting an executive blaming their own processes for Salesforce’s outright lies:

Successful deployment of more advanced uses of Agentforce is as much about a customers’ processes and internal compliance as it is about technology, said Madhav Thattai, Salesforce executive vice president of Agentforce. “You’re going to see customers start with simpler use cases,” he said. “As they start to develop and implement more complex things, they kind of realize this full autonomous vision.”

What the fuck does that mean? What’re you talking about, Madhav? What “autonomous vision”? What complex things? Do you even know? Hello?

Even in this very critical piece, the endless pursuit of “fairness” — the Business Idiot’s favourite weapon when they don’t want to be graded on their actual work — means that we have this slop-adjacent explainer that mostly amounts to “yeah you know sometimes their shit needs to be better and then one day, wow, boom! We’re gonna have all sorts of stuff happening.”

But this is the world the Business Idiots have created, as I described last year:

I, however, believe the problem runs a little deeper than the economy, which is a symptom of a bigger, virulent, and treatment-resistant plague that has infected the minds of those currently twigging at the levers of power — and really, the only levers that actually matter.

The incentives behind effectively everything we do have been broken by decades of neoliberal thinking, where the idea of a company — an entity created to do a thing in exchange for money — has been drained of all meaning beyond the continued domination and extraction of everything around it, focusing heavily on short-term gains and growth at all costs. In doing so, the definition of a “good business” has changed from one that makes good products at a fair price to a sustainable and loyal market, to one that can display the most stock price growth from quarter to quarter.

This is the Rot Economy, which is a useful description for how tech companies have voluntarily degraded their core products in order to placate shareholders, transforming useful — and sometimes beloved — services into a hollow shell of their former selves as a means of expressing growth. But it’s worth noting that this transformation isn’t constrained to the tech industry, nor was it a phenomenon that occurred only when the tech industry entered its current VC-fuelled, publicly-traded incarnation.

This naturally created a tech industry (and a larger economy) dominated by executives that were rewarded for growth, which meant that our tech products are inherently oriented around that growth:

When the leader of a company doesn't participate in or respect the production of the goods that enrich them, it creates a culture that enables similarly vacuous leaders on all levels. Management as a concept no longer means doing "work," but establishing cultures of dominance and value extraction. A CEO isn't measured on happy customers or even how good their revenue is today, but how good revenue might be tomorrow and whether those customers are paying them more. A "manager," much like a CEO, is no longer a position with any real responsibility — they're there to make sure you're working, to know enough about your work that they can sort of tell you what to do, but somehow the job of "telling you what to do" doesn't come with any actual work, and the instructions don’t need to be useful or even meaningful.

The problem with an economy dominated by Business Idiots is that it eventually loses its connection to the wider concept of production or solutions to customers’ problems, because that might cause management to interact with the real world and, by extension, have actual problems themselves. The problems that Microsoft, Google, Meta and Amazon solve on a daily basis are those related to their shareholders. How do we keep growing? How do we keep people engaged with our products? How do we convince our customers to pay more for our products? And how do we keep people buying our stock?

Thankfully, The Business Idiots have captured both the media and the markets, twisting the definition of a “good company” into one measured by these very same questions. It doesn’t matter that Facebook is deliberately broken or Google Search’s results were intentionally made worse because number go up, and that’s all The Business Idiot cares about! It doesn’t even matter that 10% of Meta’s 2024 revenue came from scams or that its Kylie Jenner-branded chatbot led a man with dementia to his death or that its John Cena-branded chatbot would roleplay about having sex with children or that it wants to spend $125 billion or more on AI in 2026 because Meta’s ad sales have yet to slow down.

It doesn’t matter that Meta CTO Andrew “Boz” Bosworth has overseen multiple unprofitable, unpopular products or is hated by basically every single person I’ve ever talked to at Meta — The Wall Street Journal will still write a glowing profile saying he’s a “blunt, outspoken provocateur” that’s “transforming Meta” by “unleashing AI.” One can be a colossal fucking loser that everybody hates, lay off thousands of people, fail to make anything of note, oversee multiple failures, and the Business Idiot’s consent-manufacturing machine will help wall you off from reality.

“But Ed,” I hear you cry. “You can’t call somebody like Andrew Bosworth a loser. He’s a huge success! He made lots of money!” You’re falling for the Business Idiot’s biggest trap: that having wealth or being a C-suite executive is proof that you’re not a disconnected loser.

Boz, like every other oaf destroying your favourite tech products, is the ultimate loser — he’s succeeded by taking credit for other people’s ideas, firing people when his own ideas fail, and repeating the cycle as many times as he wants because that’s what being an executive means to him. Boz has no pride in his work. If he did, he’d have resigned over the failures of both the metaverse and Meta’s wasteful, directionless AI efforts, or even over how fucking awful Facebook has become.

The sad truth is that he doesn’t care! He doesn’t give a shit. Boz, like every other Business Idiot, exists to extract value from others and get rewarded by shareholders. As he said in 2018, to Boz, “all the work [Meta does] in growth is justified.” That includes deliberately making notifications less useful, injecting clickbait and AI slop into your feed, and hiding chronological feeds behind an Escher painting of different menu options.

Boz is indicative of the vast majority of CEOs and upper-level management of most of the world’s organizations. If you read this and feel self-conscious, it’s because you secretly know I’m talking about you or somebody you know. One can be incredibly rich and well-known and yet a huge, unbelievable loser, because being a loser is deep within your soul. A loser is somebody who takes from others, claims others’ work as their own, and demands more credit for having done so. A loser is somebody who believes work and creation are beneath them, and that they are owed the fruits of labor regardless of their actual contributions to the world.

This is why so many people have such an abnormal reaction to AI, promoting and defending it like it’s their religion or nation state. While many people use LLMs and see them as a kind of word calculator or search engine, so many more see in them the chance to ascend above the proles who “work” or “create,” because they find the process of labor or effort so utterly loathsome. When somebody badmouths AI, the Business Idiot must defend it with everything they have, because attacking LLMs is attacking the output of an LLM, which is in turn a judgment on those who are tolerant of its mediocrity and impossible-to-avoid hallucinations.

You see, if you demand good work with intention, that might mean the Business Idiot actually had to do something, and that’s not what The Business Idiot signed up for. We are slaves to middle management and the middle management mindset, we are living in their world, and it will collapse because they never really understood anything to begin with.

LLMs impress the writers who do not want to write, the coders who don’t want to code, the researchers who don’t want to research, and the lawyers that don’t want to actually understand case law. Those that desperately tell you how powerful AI is and that you simply must use it are looking for you to validate their own laziness or distaste for effort, and those who are impressed with LLMs’ outputs tend to be people with low standards.

The aggression with which AI boosters and executives act toward those who aren’t impressed suggests a genuine intellectual and moral weakness. Nobody who’s this insistent, aggressive and violative with their language of “it’s here and if you don’t adopt it you’re stupid and dead” has ever been right about anything. Nobody this desperate, insistent and forceful has ever had good intentions, good vibes or brought good omens — they are always bearers of some kind of con.

Most technology is sold on elevating and ascending human beings. AI cheapens every interaction: it lets a person who doesn’t respect you hand you a work-shaped product that’s barely fit for a human, because it wasn’t made for one.

This is why being an AI booster requires you to debase yourself. You must accept becoming a dogshit dealer who loves receiving low-quality goods. You must celebrate intentionless and decaying slop, and defend it and the machine that made it with your entire being. You must sully yourself: treat its unexceptional, sloppy and unreliable outputs as signs of sentience, or at least as proof that digital sentience is possible. You must defend horrible, abrasive, ugly, loud monoliths of steel full of $50,000 graphics cards. You must say they are necessary, and you must aggressively antagonize those who do not.