2026-06-07 16:03:45

I was on the Lego website recently, and I enjoyed their animation on their age picker – rather than a plain text field, the numbers are made of Lego bricks that animate into view, accompanied by the sound of bricks snapping together:

I don’t know if this age picker is visible everywhere, or if it’s specifically to deal with online age verification laws in the UK; whatever the purpose, I thought it was cute.

I wanted to save a copy of it, and because it has animation and audio, a static screenshot wouldn’t be enough. It took me a couple of attempts to record it as a video, and in doing so I learnt several new web APIs.

On macOS, QuickTime Player can make a video recording of your screen. You can select the entire screen, a single window, or a specific area of the screen. I’ve used this a couple of times for bug reports and quick videos, and it works pretty well.

Unfortunately, QuickTime Player isn’t able to record the audio, so it only creates a silent version of the animation. The sound of bricks snapping together is half the fun!

A quick search suggests there are ways to record screen audio in QuickTime Player, but they all require installing third-party plugins to make my Mac’s audio available as a pseudo-microphone. I’m very picky about what I install, and making a fun video doesn’t justify a new app.

One tool I have installed already is Playwright, a framework for automating browsers. I use it to take screenshots and test my websites, and it turns out you can also use it to record videos.

To record a video, you create a new browser context which sets a video directory, interact with the page as normal, then close the context to save the video. Here’s an example using Playwright’s Python library that opens my list of articles, then scrolls down three times:

```python
from playwright.sync_api import sync_playwright
import time

with sync_playwright() as p:
    browser = p.chromium.launch()

    # Create a new context that sets a video directory
    context = browser.new_context(record_video_dir="videos/")

    # Open my list of articles, then scroll down the page three times
    page = context.new_page()
    page.goto("https://alexwlchan.net/articles/")

    for _ in range(3):
        time.sleep(0.5)
        page.mouse.wheel(0, 250)
        time.sleep(0.5)

    # Close the context, which causes the video to be saved
    context.close()
```

When you run this script, you get a video in the videos/ directory.

I can imagine this might be useful in a large test suite, especially in a complex multi-step test.

When a test fails, you can watch a screen recording of the browser during the test, which could be more informative than a textual log.

(Indeed, Playwright has a video=retain-on-failure option which only preserves videos created during failing tests, for precisely this use case.)

I ran into two problems with this approach: like QuickTime, you can’t record screen audio; and you can only record videos at 1× pixel density, which makes a very low-resolution and blurry-looking video on modern screens.

Once again I am reminded that modern web tech is amazing, and web browsers are incredibly capable.

There’s a Screen Capture API to record the screen. You can select a tab, a window, or the entire screen. The feature has limited browser support so I don’t think I’d use it in a big web app, but it’s fine for a one-off screen recording. (I wonder how browser-based video conference apps like Google Meet do screen sharing? Do they use this API, or do they use something with wider support?)

To record video, first we call getDisplayMedia() to get the contents of a tab as a MediaStream.

Using the example from the MDN docs:

async function startCapture(displayMediaOptions) {

let captureStream;

try {

captureStream =

await navigator.mediaDevices.getDisplayMedia(displayMediaOptions);

} catch (err) {

console.error(`Error: ${err}`);

}

return captureStream;

}

const displayMediaOptions = {

// Only allow the user to select a single browser tab

video: { displaySurface: 'browser' },

// Include the audio from the tab

audio: true,

// Offer the current tab as the default capture source

preferCurrentTab: true,

};

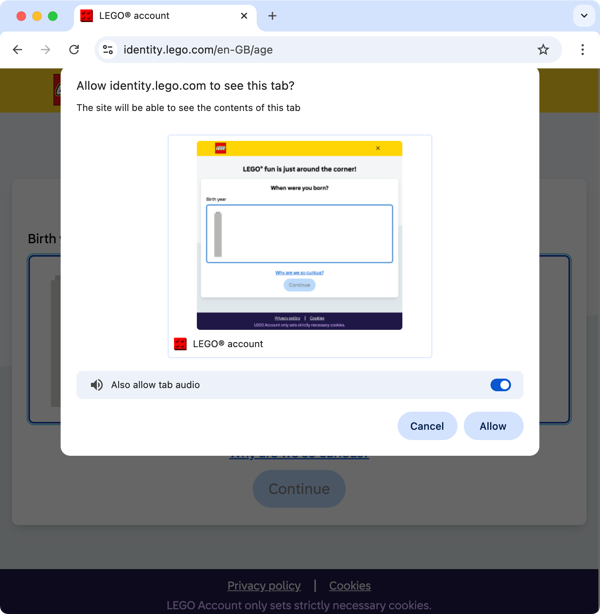

const stream = await startCapture(displayMediaOptions);When you run this in the DevTools console, it triggers a permissions dialog to confirm you want to start recording the contents of the tab. The JavaScript is running on the current page, so it’s theoretically able to see the stream you’re creating. You have to confirm you’re willing to share the website with itself:

If we didn’t set displaySurface: 'browser', this would offer other options like sharing an arbitrary window or the entire screen.

On my Mac, that delegates to an OS-level interface for choosing what to share.

Next, we have to pass the output of the stream to a MediaRecorder:

```javascript
const mediaRecorder = new MediaRecorder(stream, { mimeType: 'video/mp4' });
```

To store the video data, we create an array, and append to it as we receive dataavailable events:

```javascript
let videoChunks = [];

mediaRecorder.addEventListener("dataavailable", (ev) => {
  if (ev.data.size > 0) videoChunks.push(ev.data);
});
```

Now that the MediaRecorder is set up, we call the start() method to start writing data to videoChunks.

We click and scroll in the browser window to capture whatever it is we want to record.

When we’re done, we call stop() to finish the recording:

```javascript
mediaRecorder.start();

// Do stuff in the browser tab that we want to record

mediaRecorder.stop();
```

To extract the recorded video data, we can concatenate the video chunks with a Blob object, then use FileReader to output the result as a base64-encoded data URL:

```javascript
function printDataURL(chunks, mimeType) {
  const blob = new Blob(chunks, { type: mimeType });

  const reader = new FileReader();
  reader.readAsDataURL(blob);
  reader.addEventListener("loadend", () => {
    console.log(reader.result);
  });
}

printDataURL(videoChunks, mediaRecorder.mimeType);
// data:video/mp4;codecs=avc1,opus;base64,AAAAJGZ0eX…
```

I copy this base64-encoded string out of my DevTools console, save it to an MP4 file, and voila, I have a recording of this Lego age picker – complete with animation and audio.
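If you’d rather not decode the string by hand, here’s a minimal Python sketch of the “save it to an MP4 file” step. It assumes the whole data: URL has been copied into a string, and save_data_url() is a hypothetical helper name. The media type in the header can itself contain a comma (codecs=avc1,opus). For that reason, the sketch splits on the *last* comma. This is safe because the base64 alphabet never contains commas.

```python
import base64
from pathlib import Path


def save_data_url(data_url: str, out_path: str) -> Path:
    """
    Decode a base64-encoded data: URL and write the raw bytes to out_path.
    """
    # Split on the last comma: the media type may contain commas
    # (e.g. "codecs=avc1,opus"), but base64 text never does.
    header, sep, payload = data_url.rpartition(",")
    if not sep or not header.startswith("data:") or not header.endswith(";base64"):
        raise ValueError("expected a base64-encoded data: URL")

    path = Path(out_path)
    path.write_bytes(base64.b64decode(payload))
    return path
```

For example, save_data_url(copied_text, "recording.mp4") would write the decoded video to recording.mp4.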

As I was writing this post, I realised there’s an even smoother method that saves you copying and base64-decoding the data: URL.

Rather than reading the blob using a FileReader, we can create a blob URL that points to the object, then construct and click an `<a>` tag that downloads the blob:

```javascript
function downloadVideo(chunks, mimeType) {
  // Construct the blob
  const blob = new Blob(chunks, { type: mimeType });

  // Create a blob URL
  const url = URL.createObjectURL(blob);

  // Create an <a> tag that points to the blob
  const a = document.createElement("a");
  a.href = url;
  a.download = "recording.mp4";

  // Click the <a> tag
  a.click();

  // Wait a second for the download to complete, then release the blob URL
  setTimeout(() => URL.revokeObjectURL(url), 1000);
}

downloadVideo(videoChunks, mediaRecorder.mimeType);
```

When I run this code, the video gets downloaded as an MP4 file directly to my Downloads folder.

It’s worth noting that when you call MediaRecorder.stop(), it emits a final dataavailable event and then a stop event.

If you’re doing this interactively in the DevTools console, the delay between you typing mediaRecorder.stop() and downloadVideo() is plenty for the final chunk to be written to videoChunks.

If you’re doing it programmatically, you should only download the video when you see the stop event.

To create the video at the top of the post, I wrapped everything in a Python script that used Playwright to run JavaScript on the page, so I’d get consistent timing for the keystrokes. The recording isn’t perfect – in particular, there’s a subtle glitch in the appearance of the final “6” – but it’s plenty good enough for a quick video.

I’d also like to work out how this animation works, but that’s a question for another day.

[If the formatting of this post looks odd in your feed reader, visit the original article]

2026-06-05 16:14:37

A month ago, I wrote about my Playwright fixture for testing static websites in a browser. I’ve been copying that fixture from project to project, but recently I decided to add it to chives, the utility library I use for all my static websites (or tiny archives).

One of my rules for chives is that everything in it has to be tested – but how do you test a pytest fixture? Test code is just code, and it isn’t immune to bugs. Who tests the tests?

Enter Pytester, a tool designed for testing pytest plugins. Pytester allows you to run isolated test suites, make assertions about the outcomes, and verify the behaviour of custom fixtures. In your top-level test suite, you always want everything to be passing, but with Pytester you can write a mixture of passing and failing tests, and check the results are what you expect.

Pytester is disabled by default, so you first enable it in your top-level conftest.py file (the pytest configuration file where you configure plugins and fixtures):

```python
# conftest.py
pytest_plugins = ["pytester"]
```

Here’s an example of using Pytester where we create a test suite with two tests and check that one passes, one fails:

```python
from pytest import Pytester


def test_with_pytester(pytester: Pytester):
    """
    Run an isolated test suite with pytester.
    """
    # Make a temporary pytest test file
    pytester.makepyfile(
        """
        def test_arithmetic():
            assert 2 + 2 == 4

        def test_list_inclusion():
            assert "yellow" in ["red", "green", "blue"]
        """
    )

    # Run the isolated test suite with pytest
    result = pytester.runpytest()

    # Check that one test passed, one failed
    result.assert_outcomes(passed=1, failed=1)
```

I can imagine creating something similar with some complicated collection of nested functions, exec() and pytest.raises, but using Pytester is a cleaner interface than what I’d build.

Under the hood, Pytester creates a temporary directory, writes the specified files into it, then runs pytest against it. By default it runs in the same process; runpytest_subprocess() runs it in a fresh subprocess instead.

It has helper functions for writing files, including Python files (makepyfile), a conftest.py file (makeconftest), and plain text files (maketxtfile).

When we’re testing a fixture, we can create a conftest.py file that imports that fixture, then reference it in the tests.

Here’s a more complicated example, where we import one of my Playwright fixtures in my conftest.py, write an HTML file into the temporary directory, then use them both in the test:

```python
from pytest import Pytester


def test_browser_fixture(pytester: Pytester):
    """
    Try testing the browser fixture with pytester.
    """
    # Make a conftest.py file
    pytester.makeconftest("""
        from chives.browser_fixtures import browser
    """)

    # Make an HTML file
    (pytester.path / "greeting.html").write_text("""
        <p>Hello world!</p>
    """)

    # Make a temporary pytest test file
    pytester.makepyfile(
        """
        from chives.browser_fixtures import file_uri
        from playwright.sync_api import Browser, expect

        def test_browser_fixture(browser: Browser) -> None:
            uri = file_uri("greeting.html")
            p = browser.new_page()
            p.goto(uri)
            expect(p.get_by_text("Hello world!")).to_be_visible()
        """
    )

    # Run the isolated test suite with pytest
    result = pytester.runpytest()

    # Check that one test passed
    result.assert_outcomes(passed=1)
```

This pattern is sufficient for many fixtures, but it doesn’t work for Playwright – if you run this test, the isolated test suite gives an error rather than a passing test.

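Playwright needs you to install a web browser to work (for example, playwright install webkit), and Pytester runs in a sufficiently isolated environment that Playwright can’t find the browsers you already have installed. (In particular, Pytester points the HOME environment variable at its temporary directory, and Playwright’s default browser cache lives under your home directory.)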

We could run the install command inside the temporary directory, but that would be slow and inefficient – it would be better if we could tell Playwright to look for the already-installed browsers elsewhere.

If we set the PLAYWRIGHT_BROWSERS_PATH environment variable inside our isolated test suite, Playwright will look there for browsers.

First, we need to work out where browsers are installed – we could hard-code the location, or we could inspect the executable_path property on a browser type:

```python
from pathlib import Path

from playwright.sync_api import sync_playwright
import pytest


@pytest.fixture(scope="session")
def playwright_browsers_path() -> str:
    """
    Return the cache directory where Playwright browsers are installed.
    """
    with sync_playwright() as p:
        # In my local builds, this returns a path like:
        #
        #     ~/Library/Caches/ms-playwright/webkit-2272/pw_run.sh
        #
        # Unwrap two levels to get to the `ms-playwright` folder.
        return str(Path(p.webkit.executable_path).parent.parent)
```

Then we need to set this as an environment variable inside the Pytester test suite.

I couldn’t find an easy way to set an environment variable; the best approach I came up with was to modify os.environ inside the conftest.py file.

(Perhaps we could access the MonkeyPatch object and set more environment variables, but using private attributes is icky.)

Here’s how the new test starts:

```python
def test_browser_fixture(pytester: Pytester, playwright_browsers_path: str):
    """
    Test the browser fixture with pytester.
    """
    # Make a conftest.py file
    pytester.makeconftest(f"""
        from chives.browser_fixtures import browser
        import os

        os.environ["PLAYWRIGHT_BROWSERS_PATH"] = {playwright_browsers_path!r}
    """)
```

...and now the overall test passes. Here’s the complete code for the new test:

```python
# test_browser_fixture.py
from pathlib import Path

from playwright.sync_api import sync_playwright
import pytest
from pytest import Pytester


@pytest.fixture(scope="session")
def playwright_browsers_path() -> str:
    """
    Return the cache directory where Playwright browsers are installed.
    """
    with sync_playwright() as p:
        # In my local builds, this returns a path like:
        #
        #     ~/Library/Caches/ms-playwright/webkit-2272/pw_run.sh
        #
        # Unwrap two levels to get to the `ms-playwright` folder.
        return str(Path(p.webkit.executable_path).parent.parent)


def test_browser_fixture(pytester: Pytester, playwright_browsers_path: str):
    """
    Test the browser fixture with pytester.
    """
    # Make a conftest.py file
    pytester.makeconftest(f"""
        from chives.browser_fixtures import browser
        import os

        os.environ["PLAYWRIGHT_BROWSERS_PATH"] = {playwright_browsers_path!r}
    """)

    # Make an HTML file
    (pytester.path / "greeting.html").write_text("""
        <p>Hello world!</p>
    """)

    # Make a temporary pytest test file
    pytester.makepyfile(
        """
        from chives.browser_fixtures import file_uri
        from playwright.sync_api import Browser, expect

        def test_browser_fixture(browser: Browser) -> None:
            uri = file_uri("greeting.html")
            p = browser.new_page()
            p.goto(uri)
            expect(p.get_by_text("Hello world!")).to_be_visible()
        """
    )

    # Run the isolated test suite with pytest
    result = pytester.runpytest()

    # Check that one test passed
    result.assert_outcomes(passed=1)
```

The full test suite is more extensive, and checks that certain scenarios fail or error – will the fixtures spot the mistakes I expect them to?

For example, my Page fixture is meant to load a page and fail the test if there are any console warnings or errors; does it actually fail the test correctly?

I don’t expect to use Pytester very often, because it’s rare for me to write fixtures complex enough to need their own test suite – but sometimes I do, and it’s good to know how to create another layer of safety net.


2026-05-29 15:51:28

The first iteration of my scrapbook of social media only had posts from public or semi-public social media sites – Twitter, Instagram, Tumblr, and so on. Every post is saved as JSON metadata, and rendered as HTML in my web browser.

For a while now, I’ve wanted to add support for text conversations. I don’t care about saving my texts en masse, but there are moments which stand out and which I’d like to save. I thought this would be quite straightforward, but I ran into trouble rendering chat bubbles in CSS. I got it working once I walked through it carefully, and I wanted to explain how it works – so I understand it now, and so I remember it later.

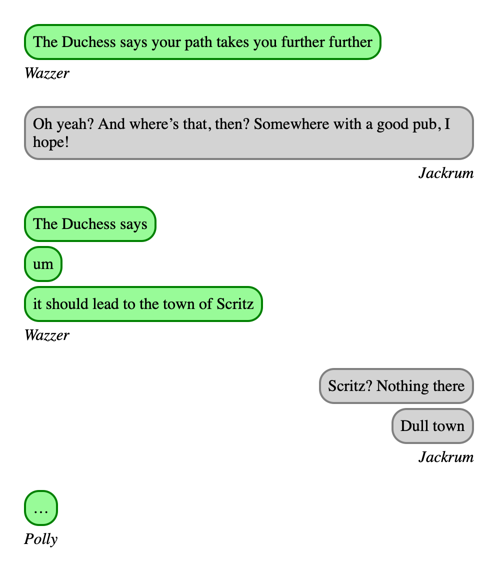

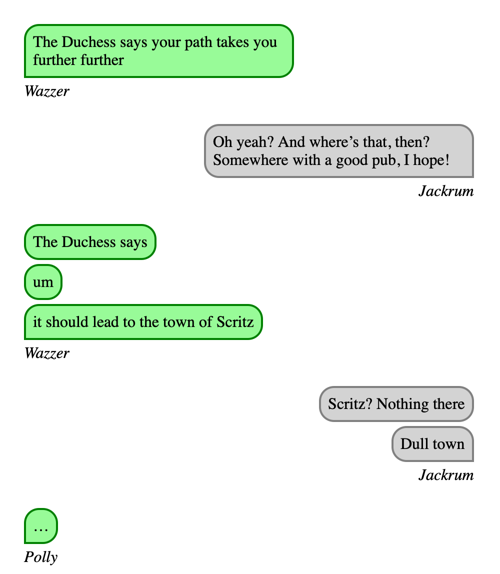

Let’s start with some semantic HTML which represents a group chat with three participants, based on characters from Terry Pratchett’s Monstrous Regiment:

```html
<div class="conversation">
  <div class="message-group" data-sender="Wazzer">
    <blockquote>The Duchess says your path takes you further</blockquote>
    <cite>Wazzer</cite>
  </div>

  <div class="message-group" data-sender="Jackrum">
    <blockquote>Oh yeah? And where’s that, then? Somewhere with a good pub, I hope!</blockquote>
    <cite>Jackrum</cite>
  </div>

  <div class="message-group" data-sender="Wazzer">
    <blockquote>The Duchess says</blockquote>
    <blockquote>um</blockquote>
    <blockquote>it should lead to the town of Scritz</blockquote>
    <cite>Wazzer</cite>
  </div>

  <div class="message-group" data-sender="Jackrum">
    <blockquote>Scritz? Nothing there</blockquote>
    <blockquote>Dull town</blockquote>
    <cite>Jackrum</cite>
  </div>

  <div class="message-group" data-sender="Polly">
    <blockquote>…</blockquote>
    <cite>Polly</cite>
  </div>
</div>
```

I’m using a `<div class="conversation">` for the conversation as a whole, then a `<div class="message-group">` to group consecutive messages from each sender.

Each message is a `<blockquote>`, and the sender is shown in a `<cite>` element.

The sender is also in a data-sender attribute on each message group – this will allow us to apply different styles based on the sender.

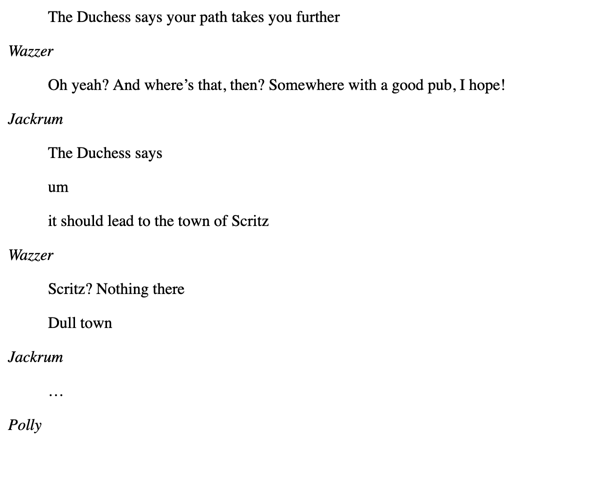

When rendered with the browser’s default stylesheet, this doesn’t look anything like a text message conversation, but it’s also not unreadable:

I always try to use semantic HTML when I can, and lean on the browser’s default styles – they’re a solid baseline and give me a bare minimum even if my CSS breaks. I dislike the approach of many CSS frameworks to reset the browser styles and rebuild them from scratch.

Let’s start by adding some CSS that makes the messages look more like chat bubbles, tweak the spacing so it’s clearer who sent which message, and clamp the width. Let’s suppose this conversation is told from Sergeant Jackrum’s perspective, so we’ll colour his messages differently:

```css
.conversation {
  width: 450px;
  padding: 1em;

  .message-group {
    &:not(:last-child) {
      margin-bottom: 1.5em;
    }

    blockquote {
      border: 2px solid green;
      background: palegreen;
      margin: 0;
      border-radius: 15px;
      margin-bottom: 4px;
      padding: 7px;
    }

    &[data-sender="Jackrum"] blockquote {
      border: 2px solid grey;
      background: lightgrey;
    }
  }
}
```

There are a couple of neat tricks in here: I’m using CSS nesting to keep the CSS tidy, along with the & nesting selector.

These are two features that were previously only available in preprocessors like Sass, but are now widely supported by vanilla CSS.

Then I’m using an attribute selector with the data-sender attribute to apply styles to messages sent by Jackrum.

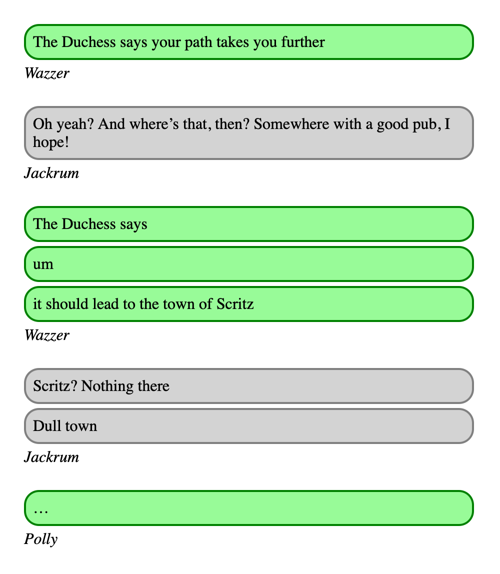

Here’s what it looks like:

This already looks a bit like a messaging app! So far, so easy – these are all CSS properties I’m comfortable with. The colours and spacing are all arbitrary; I just wanted enough to prove the basic idea before moving on to the tricky part – right-sizing and right-aligning the bubbles. In the real scrapbook, I match the colours to the messaging app where the conversation took place – light blue for iMessage, dark blue for Signal, green for SMS, and so on.

Messaging apps usually resize their text bubbles so they’re only as large as the text, rather than filling the width of the screen. We can do a first pass at this with three rules:

```css
blockquote {
  width: max-content;
  max-width: 100%;
  box-sizing: border-box;
}
```

The max-content keyword is a new one for me – it expands the element to the maximum size required to show its contents, without any soft wrapping.

For short messages, this shrinks the bubble so it’s only as wide as needed for the text.

For long messages, I need the max-width property to avoid the bubble expanding beyond the width of the conversation, and box-sizing: border-box ensures that width applies to the entire element (padding and borders included), not just the text inside.

Without them, the bubbles would expand beyond the right edge of the conversation.

Here’s what it looks like now:

The next change is to make Jackrum’s messages appear on the right-hand side of the screen.

Initially I tried adding margin-left: auto to the message group, but the message bubbles didn’t budge – the message group still takes up the full width of the screen, even if the individual messages don’t.

Then I tried playing with flexbox layouts, which I’m less familiar with but I did get working.

These flex properties achieve the desired effect:

```css
.message-group {
  display: flex;
  flex-direction: column;
  align-items: flex-start;

  &[data-sender="Jackrum"] {
    align-items: flex-end;
  }
}
```

But flexbox is an area of CSS I don’t know that well, and while I can understand it, I need to refer to the documentation every time.

After thinking some more, I realised that I could apply margin-left: auto to the blockquote elements rather than the message group.

This has a similar effect, but now it’s using CSS properties I understand:

```css
.message-group[data-sender="Jackrum"] {
  blockquote {
    margin-left: auto;
  }

  cite {
    display: block;
    text-align: right;
  }
}
```

I’m going to use the margin approach because I understand it better and this is only a personal project, but I can see reasons for preferring flex.

For example, if some of the messages are written right-to-left and you want to flip the order of the bubbles, the flexbox approach will automatically do the right thing if you set dir="rtl" – whereas the margins need to be manually specified.

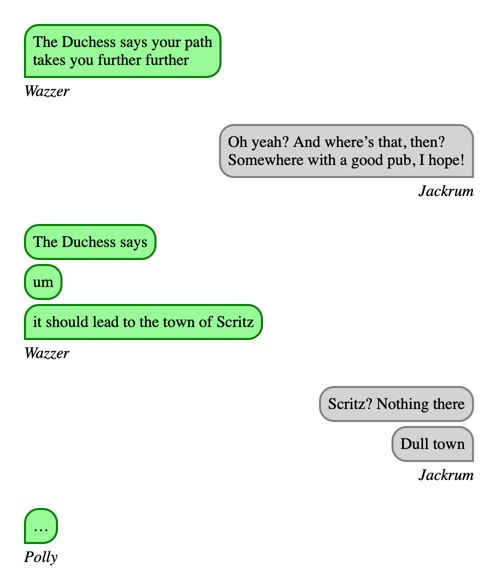

This looks pretty close to what I want:

Next, let’s square off the corner of the last message in each group, and limit the width of the message bubbles so they don’t fill the entire width of the screen. Here are the CSS rules:

```css
blockquote {
  max-width: 60%;
}

.message-group:not([data-sender="Jackrum"]) {
  blockquote:has(+ cite) {
    border-bottom-left-radius: 0;
  }
}

.message-group[data-sender="Jackrum"] {
  blockquote:has(+ cite) {
    border-bottom-right-radius: 0;
  }
}
```

The choice of max-width: 60% is arbitrary; I just want the bubbles to avoid filling the whole conversation.

To square off the corner of the last message bubble in each group, I’m targeting the final message by using the :has() pseudo-class to find a blockquote which is immediately followed by a cite element.

Then I set a custom border radius, depending on whether the message is on the left/right of the screen.

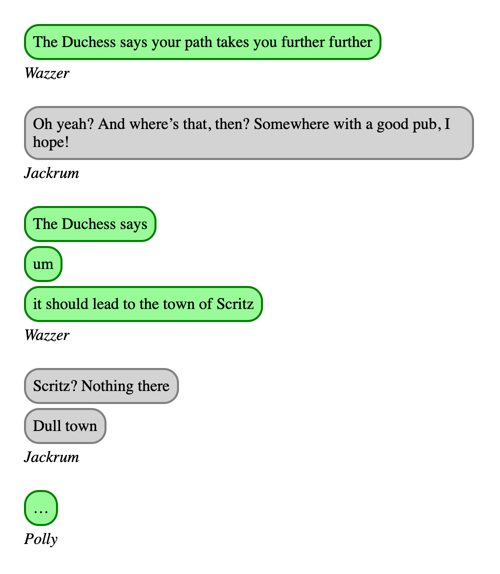

Here’s what the conversation looks like now:

The last issue is that sometimes the message bubbles have extra whitespace – notice how Jackrum’s first message has unnecessary padding on the right-hand side. The bubble could shrink slightly without affecting the text, and it would look better.

I don’t think you can do a “shrink-wrap” layout in pure CSS, but we can do it with a bit of JavaScript.

In particular, we can use a Range object to get all the text and nodes in each blockquote, use the getBoundingClientRect() method to work out how much space that takes up on the page, then resize the blockquote to fit:

```javascript
function shrinkWrap(elem) {
  const range = document.createRange();
  range.selectNodeContents(elem);
  const bbox = range.getBoundingClientRect();

  elem.style.width = `${bbox.width}px`;
  elem.style.boxSizing = "content-box";
}

window.addEventListener("load", () =>
  document
    .querySelectorAll(".message-group blockquote")
    .forEach(shrinkWrap)
);
```

We set the width to an exact pixel count, and reset box-sizing to the default content-box – this means the width is applied to the text in the bubble, and then the padding and borders are added after.

If we stuck to box-sizing: border-box, the content would be narrower than the width and be wrapped incorrectly.

This only runs on page load – after the blockquote elements have been drawn for the first time.

You could also run it when the window is resized to make the bubbles reflow when the screen width changes, but I haven’t missed that in my scrapbook.

We can complement this with text-wrap: balance on the blockquote elements, which balances the number of characters on each line.

This gives a tighter fit within the bubble, because each line is about the same length.
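To get a feel for why shrink-wrapping and balancing help, here’s a rough model in Python. It’s a simplification, not how browsers lay out text. It counts characters as if every glyph had the same width, and it uses the standard library’s textwrap for greedy line breaking.

Both function names are hypothetical:

- shrink_wrap_width() gives the width the wrapped text actually uses. Anything wider is the empty space the JavaScript trims.
- balanced_width() imitates the effect of text-wrap: balance. It finds the narrowest width that still fits the text into the same number of lines.

```python
import textwrap


def wrap(text: str, width: int) -> list[str]:
    # Greedy line breaking that never splits a word across lines
    return textwrap.wrap(text, width, break_long_words=False)


def shrink_wrap_width(text: str, max_width: int) -> int:
    """
    Width the text really needs once wrapped at max_width: the length of
    its longest line. Any extra width is empty space inside the bubble.
    """
    return max(len(line) for line in wrap(text, max_width))


def balanced_width(text: str, max_width: int) -> int:
    """
    Narrowest width that keeps the same number of lines as wrapping at
    max_width. Narrowing the box this way evens out the line lengths.
    """
    target_lines = len(wrap(text, max_width))
    longest_word = max(len(word) for word in text.split())
    width = shrink_wrap_width(text, max_width)

    # Keep narrowing while no word overflows and the line count holds
    while width - 1 >= longest_word and len(wrap(text, width - 1)) == target_lines:
        width -= 1
    return width
```

Take the toy message "aaa bbb ccc ddd e" with a 12-character limit. Greedy wrapping gives "aaa bbb ccc" / "ddd e", which needs 11 characters. The balanced width of 9 gives "aaa bbb" / "ccc ddd e".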

Here’s the final result:

Notice that Wazzer’s first message now fills the bubble better, and there’s no extra space on the right-hand side of Jackrum’s first message.

I’m happy with this as a visual representation of a text conversation.

The other thing I considered is some sort of visual flourish on the corner above the sender’s name.

The simple right angle is fine, but the new CSS corner-shape and border-shape properties look like they might allow more interesting shapes here.

Perhaps at some point I could add a nice-looking tail to the message bubbles?

It’s only experimental so I haven’t tried it yet, but I’ve got an eye on it for the future.

Putting it all together, here’s my final CSS:

```css
.conversation {
  width: 450px;
  padding: 1em;

  .message-group {
    &:not(:last-child) {
      margin-bottom: 1.5em;
    }

    blockquote {
      border: 2px solid green;
      background: palegreen;
      margin: 0;
      border-radius: 15px;
      margin-bottom: 4px;
      padding: 7px;
      width: max-content;
      max-width: 60%;
      box-sizing: border-box;
      text-wrap: balance;
    }

    &:not([data-sender="Jackrum"]) {
      blockquote:has(+ cite) {
        border-bottom-left-radius: 0;
      }
    }

    &[data-sender="Jackrum"] {
      blockquote {
        border: 2px solid grey;
        background: lightgrey;
        margin-left: auto;
      }

      blockquote:has(+ cite) {
        border-bottom-right-radius: 0;
      }

      cite {
        display: block;
        text-align: right;
      }
    }
  }
}
```

I’m really pleased with the final effect, and it’s a great addition to my scrapbook.

My mistake, as always, was trying to build this by editing the CSS for the existing project – rather than developing it as a standalone component first. CSS is tricky and difficult to reason about, and trying to rush a fix is never the optimal outcome.

This may not be a perfect chat UI, but it’s pretty close, and I understand exactly how it works. I’m happy with that.


2026-05-14 15:51:01

In my previous post, I explained how I use the FSEvents API to detect changed files on macOS. It’s part of my livereload mechanism for working on this site. I make a change to a source file, that triggers a rebuild of the site, and then the development site automatically refreshes in my web browser. I’m trying to build this all myself, with no third-party dependencies.

Once I’ve detected a changed file and rebuilt the site, how do I automatically refresh my open browser windows? In this post, I’ll explain how I use HTTP long polling to tell pages when it’s time to reload.

In most HTTP servers I’ve built, when the server sends a response to a client, I want it to return as quickly as possible. When I’m writing HTTP clients that fetch data from servers, I expect the server to respond quickly. The entire interaction happens within a few seconds of the request – but it doesn’t have to be that way.

HTTP long polling is a technique where a client makes a normal HTTP request, but the server doesn’t respond immediately. Rather than closing or timing out the connection, both sides hold it open, and the server can send more data to the client over time.

This mechanism can allow the server to tell the browser when it’s time to reload the page. When the browser loads a page, it opens a long-lived HTTP connection to the server. The server only sends data when something has changed, so the browser waits to receive data and then reloads the page.

This technique is used in Tailscale – clients use HTTP long polling to get network updates from the control plane.

When the tailscaled daemon starts, it opens a long-running connection to the control servers, and when something changes in the network, the servers send the updated network information (or “netmap”) down that connection.

Clients can hold open a connection for a long time, and receive many updates on the same connection.

All the scripts for my blog are written in Python, so I want to use Python to write a web server that serves a long-lived connection and tells the browser when to reload.

For production Python web servers I’d use a proper server framework like Gunicorn or uWSGI, but for a small and low-traffic local server, I can just use the standard library’s http.server module.

To create a server, I need to create a subclass of BaseHTTPRequestHandler that handles GET requests, then pass that to an instance of HTTPServer.

Here’s a server that receives a GET request, holds open the connection, and writes “waiting…” once every second:

```python
from http.server import HTTPServer, BaseHTTPRequestHandler
import time


class SlowHandler(BaseHTTPRequestHandler):
    """
    An HTTP handler that sends "waiting..." once a second on
    a long-running connection.
    """

    def do_GET(self) -> None:
        """
        Handle a new GET request from a client.
        """
        print("Client connected")

        # Send the initial HTTP headers.
        self.send_response(200)
        self.send_header("Content-type", "text/plain")
        self.end_headers()

        try:
            while True:
                self.wfile.write(b"waiting...\n")

                # Flush the output stream so the client receives the data
                # immediately, rather than it sitting in a buffer.
                self.wfile.flush()

                # Sleep until we're ready to write again.
                time.sleep(1)

        # If the client closes the connection, we'll get a BrokenPipeError
        # the next time we try to write data.
        except BrokenPipeError:
            print("Client disconnected")


server_address = ("localhost", 5555)
server = HTTPServer(server_address, SlowHandler)
server.serve_forever()
```

If you run this script and make a GET request with curl, you’ll see “waiting…” printed in a loop:

```console
$ curl http://localhost:5555/
waiting...
waiting...
waiting...
```

We could wire this up to check if there have been any changes in the last second, and write a different message to the connection – but that would introduce at least a second of latency into the browser getting updates. Ideally, we’d send a response as soon as an update is ready. How can we coordinate that within a single Python script?

The solution is another standard library feature I’ve not used before: threading.Event.

This allows us to track the value of a single true/false flag, and we can use the wait() method to block until the flag is set to true.
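Here’s a small standalone sketch of the wait()/set() behaviour. It uses plain threads in place of HTTP handlers, and the thread names are just for illustration. It also shows the set-then-clear pattern used in the rebuild loop. A call to set() wakes every thread that is already blocked in wait(), even though clear() resets the flag straight afterwards. A thread that only starts waiting after clear() would keep waiting for the next set().

```python
import threading
import time

rebuild_event = threading.Event()
woken = []  # names of the threads that were released


def simulated_client(name: str) -> None:
    # Blocks until another thread calls rebuild_event.set()
    rebuild_event.wait()
    woken.append(name)


threads = [
    threading.Thread(target=simulated_client, args=(f"window-{i}",), daemon=True)
    for i in range(3)
]
for t in threads:
    t.start()

# Give the threads a moment to reach wait() (good enough for a demo)
time.sleep(0.2)

rebuild_event.set()    # release every thread currently waiting...
rebuild_event.clear()  # ...and reset the flag, ready for the next rebuild

for t in threads:
    t.join(timeout=5)
```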

First I create the event:

```python
import threading

rebuild_event = threading.Event()
```

When the site is finished rebuilding, we set the flag to true, then clear it immediately (waiting for the next rebuild):

```python
for changeset in watch_for_changed_files():
    rebuild_site(based_on=changeset)
    rebuild_event.set()
    rebuild_event.clear()
```

Then in the HTTP handler, we wait for the flag to be set and only then send a response:

```python
class WaitForChangesHandler(BaseHTTPRequestHandler):
    """
    An HTTP handler that waits until the site is rebuilt to send a response.
    """

    def do_GET(self) -> None:
        """
        Handle a new GET request from a browser.
        """
        print("Client connected")

        try:
            rebuild_event.wait()

            self.send_response(200)
            self.send_header("Content-type", "text/plain")
            self.end_headers()
            self.wfile.write(b"reload\n")
            self.wfile.flush()
        except BrokenPipeError:
            print("Client disconnected")
```

We can also use threading to start the server in a background thread, and wait for file changes in the main thread:

```python
server_address = ("localhost", 5555)
server = HTTPServer(server_address, WaitForChangesHandler)
threading.Thread(target=server.serve_forever, daemon=True).start()

for changeset in watch_for_changed_files():
    rebuild_site(based_on=changeset)
    rebuild_event.set()
    rebuild_event.clear()
```

When we make an HTTP call to the web server, it now waits until something changes, and only then sends a response:

$ curl http://localhost:5555/
reload

This minimises the delay between rebuilding the site and refreshing the page, which allows for very fast reloads when I change a source file.

We can use the browser’s built-in fetch() function to make a request to the server, wait for a response, and then trigger a page reload.

This only requires a few lines of JavaScript:

async function waitForChanges() {
  await fetch('http://localhost:5555/wait-for-changes');
  window.location.reload();
}

window.addEventListener("DOMContentLoaded", waitForChanges);

This is only added to local builds, so the live site won’t fetch localhost:5555 on your computer.

If the livereload server is on a different origin to the server that’s serving this JavaScript (and a different port counts as a different origin), the browser won’t let the page read the response unless the livereload server sends an Access-Control-Allow-Origin header:

self.send_response(200)
self.send_header("Access-Control-Allow-Origin", "*")
self.send_header("Content-type", "text/plain")
self.end_headers()

For a live application we’d want to scope this more tightly than *, but for a server that’s only used for local development it’s fine.

The basic HTTPServer can only handle one connection at a time, so if I have two browser windows open, only one of them can receive the reload event.

This is sub-optimal – when something changes, every browser window should reload.

We can fix this by replacing HTTPServer with ThreadingHTTPServer, which creates a new thread for every request:

from http.server import ThreadingHTTPServer

server_address = ("localhost", 5555)
server = ThreadingHTTPServer(server_address, WaitForChangesHandler)
threading.Thread(target=server.serve_forever, daemon=True).start()

I’m not sure how this scales for a very large number of long-running requests, but I’ll only ever have a small, single-digit number of browser windows open, and it’s plenty for that.
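Here’s a self-contained way to check that two waiting requests really are handled at once, and that a single set() releases both. It’s a sketch for a test harness rather than my actual server. It binds to 127.0.0.1 on port 0 so the OS picks a free port, and it uses urllib.request as a stand-in for fetch(). The semaphore only exists so the test knows both requests are waiting at the same time.

```python
from http.server import BaseHTTPRequestHandler, ThreadingHTTPServer
import threading
import urllib.request

rebuild_event = threading.Event()
# Each handler releases this just before it blocks on the event, so the
# main thread can tell when both requests are being handled concurrently.
handlers_waiting = threading.Semaphore(0)


class WaitForChangesHandler(BaseHTTPRequestHandler):
    def do_GET(self) -> None:
        handlers_waiting.release()
        rebuild_event.wait()
        self.send_response(200)
        self.send_header("Content-type", "text/plain")
        self.end_headers()
        self.wfile.write(b"reload\n")

    def log_message(self, format: str, *args) -> None:
        pass  # silence the default per-request logging


# Port 0 asks the OS for any free port.
server = ThreadingHTTPServer(("127.0.0.1", 0), WaitForChangesHandler)
port = server.server_address[1]
threading.Thread(target=server.serve_forever, daemon=True).start()

bodies: list[bytes] = []
bodies_lock = threading.Lock()


def browser_window() -> None:
    """Stand-in for one open browser tab making the long-polling request."""
    url = f"http://127.0.0.1:{port}/wait-for-changes"
    with urllib.request.urlopen(url, timeout=10) as resp:
        with bodies_lock:
            bodies.append(resp.read())


windows = [threading.Thread(target=browser_window) for _ in range(2)]
for w in windows:
    w.start()

# With a plain HTTPServer the second request wouldn't be handled until the
# first one finished, so this would time out.
for _ in windows:
    if not handlers_waiting.acquire(timeout=5):
        raise RuntimeError("requests are not being handled concurrently")

# One "rebuild" wakes every waiting handler. Unlike the real loop, this demo
# doesn't clear() the flag afterwards, which keeps it free of timing races.
rebuild_event.set()
for w in windows:
    w.join()
server.shutdown()
server.server_close()

print(bodies)  # → [b'reload\n', b'reload\n']
```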

fetch() timeouts

Although we don’t set an explicit timeout in our fetch() call, browsers apply their own default timeout.

For example, a quick search suggests Firefox used to set a 30 second timeout (I couldn’t immediately find a reference about whether that’s still true).

If the server doesn’t respond in that time, the connection is closed and no more data is received.

That means that if I go longer than the default timeout without making changes, the browser will close the connection to the livereload server, and then it won’t be notified about further changes.

We can fix this by changing the server so it always responds within 20 seconds (or another timeout of our choice). It sends a 200 OK immediately if there’s a change, or a 204 No Content if it hits the timeout before a change happens. Then the on-page JavaScript can run in a loop, and only reload when it gets a 200 OK.

We can pass a timeout parameter to rebuild_event.wait(), which then blocks until either the flag is set or the timeout expires. It returns True if the flag was set, or False if it gave up waiting.
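The decision the handler has to make can be pulled out into a tiny function, which makes both outcomes easy to check without a browser. This is a sketch. response_status is a name I’ve made up, and it isn’t part of the server code. The short timeouts are only there to keep the demo quick.

```python
import threading


def response_status(event: threading.Event, timeout: float) -> int:
    """
    Wait for `event` for up to `timeout` seconds, then pick a status code:
    200 OK if the site was rebuilt, or 204 No Content if the wait timed out.
    """
    return 200 if event.wait(timeout=timeout) else 204


rebuild_event = threading.Event()
print(response_status(rebuild_event, timeout=0.05))  # → 204: nothing changed

# Simulate a rebuild that finishes shortly after the request arrives.
threading.Timer(0.05, rebuild_event.set).start()
print(response_status(rebuild_event, timeout=5))  # → 200
```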

Here’s the updated server:

class WaitForChangesHandler(BaseHTTPRequestHandler):
    """
    An HTTP handler that sends one of two responses:

    * 200 OK -- the site has changed, reload the page, or
    * 204 No Content -- nothing has changed recently, make a new GET request
    """

    def do_GET(self) -> None:
        """
        Handle a new GET request from a browser.
        """
        try:
            has_changes = rebuild_event.wait(timeout=20)

            if has_changes:
                self.send_response(200)
                self.send_header("Access-Control-Allow-Origin", "*")
                self.send_header("Content-type", "text/plain")
                self.end_headers()
                self.wfile.write(b"reload\n")
                self.wfile.flush()
            else:
                self.send_response(204)
                # The page needs this header on the 204 too, or fetch()
                # rejects the response as a CORS error.
                self.send_header("Access-Control-Allow-Origin", "*")
                self.end_headers()
        except BrokenPipeError:
            pass

Then in the JavaScript, we make requests in a loop, check the status code of each response, and only reload if we get a 200 OK:

async function waitForChanges() {
  while (true) {
    const response = await fetch('http://localhost:5555/wait-for-changes');

    if (response.status === 200) {
      window.location.reload();
      break;
    }
  }
}

If the fetch() call fails – for example, if the server gets restarted – the exception escapes the loop, and then the window will stop receiving updates.

We can fix this by wrapping the body of the loop in a try … catch, and in the catch block we tell the function to wait a second before trying again:

async function waitForChanges() {
  while (true) {
    try {
      const response = await fetch('http://localhost:5555/wait-for-changes');

      if (response.status === 200) {
        window.location.reload();
        break;
      }
    } catch {
      await new Promise(resolve => setTimeout(resolve, 1000));
    }
  }
}

This is the code I’ve been using for several weeks now, and it’s held up well.
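When I want to poke at the server without a browser, the same loop translates fairly directly to Python. This is a sketch using only the standard library, with a few assumptions:

- wait_for_reload is a made-up name.
- The 30-second client timeout is my own choice, picked to be longer than the server’s 20-second wait.
- The fake server at the bottom only exists to exercise the loop. It answers 204 twice and then 200.

```python
from http.server import BaseHTTPRequestHandler, ThreadingHTTPServer
import threading
import time
import urllib.request


def wait_for_reload(url: str = "http://localhost:5555/wait-for-changes",
                    retry_delay: float = 1.0) -> None:
    """
    Long-poll the livereload server, returning once it answers 200 OK.
    """
    while True:
        try:
            # Longer than the server's 20-second wait, so the server should
            # always answer (200 or 204) before the client gives up.
            with urllib.request.urlopen(url, timeout=30) as resp:
                if resp.status == 200:
                    return
                # Anything else (e.g. 204 No Content): ask again.
        except OSError:
            # Connection refused, timed out, server restarting: wait, retry.
            # (urllib.error.URLError is a subclass of OSError.)
            time.sleep(retry_delay)


# A fake livereload server for the demo: 204 twice, then 200.
calls: list[str] = []


class FakeLivereload(BaseHTTPRequestHandler):
    def do_GET(self) -> None:
        calls.append(self.path)
        self.send_response(200 if len(calls) >= 3 else 204)
        self.end_headers()

    def log_message(self, format: str, *args) -> None:
        pass


server = ThreadingHTTPServer(("127.0.0.1", 0), FakeLivereload)
threading.Thread(target=server.serve_forever, daemon=True).start()

wait_for_reload(f"http://127.0.0.1:{server.server_address[1]}/wait-for-changes")
server.shutdown()
server.server_close()
print(len(calls))  # → 3
```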

Using third-party libraries. Initially I was using the python-livereload library, which bundles a copy of livereload-js, but I wanted to write my own implementation – partly to avoid dependencies, partly to understand how it works.

Polling the client. I could write a timestamp to a file at the end of a build, serve that file as part of the site, and have JavaScript on the page continuously poll that file for changes. I prefer waiting for changes, because it avoids unnecessary work and CPU cycles.

Manipulate the browser directly. The server and my web browser are almost always on the same machine. Rather than have the browser trigger the reload, the rebuild script could use something like AppleScript to find matching browser windows and trigger a reload.

That would be the ultimate “only has to work on my machine” solution, but it might be more complicated, because I’d have to write new code for every browser I use. (I routinely test with Safari, Firefox, and Chrome.) It also wouldn’t work if I’m using a web browser on a different machine, for example when I’m testing how the site looks on a phone.

Use WebSockets to tell the web page about changes. That’s how livereload-js works, and WebSockets are a good tool for creating a persistent connection between a server and a client. They’re capable of two-way communication – for example, Slack uses WebSockets to maintain a connection between the Slack app and their servers.

I didn’t use WebSockets because they’re more complicated to implement on the server (there’s no WebSockets server in the Python standard library), and I don’t need their flexibility. My server–browser communication is strictly one-way, so HTTP long polling is fine.

Here’s a diagram which illustrates the code we’ve written: when the site is rebuilt, we call rebuild_event.set(), which unblocks a rebuild_event.wait() in the web server.

The web server sends an HTTP 200 OK to the web browser, which has been waiting for a response to GET /wait-for-changes.

The browser reloads the page, and the cycle starts again.


Here’s the final Python web server:

from http.server import BaseHTTPRequestHandler, ThreadingHTTPServer
import threading

rebuild_event = threading.Event()

class WaitForChangesHandler(BaseHTTPRequestHandler):
    """
    An HTTP handler that sends one of two responses:

    * 200 OK -- the site has changed, reload the page, or
    * 204 No Content -- nothing has changed recently, make a new GET request
    """

    def do_GET(self) -> None:
        """
        Handle a new GET request from a browser.
        """
        try:
            has_changes = rebuild_event.wait(timeout=20)

            if has_changes:
                self.send_response(200)
                self.send_header("Access-Control-Allow-Origin", "*")
                self.send_header("Content-type", "text/plain")
                self.end_headers()
                self.wfile.write(b"reload\n")
                self.wfile.flush()
            else:
                self.send_response(204)
                self.send_header("Access-Control-Allow-Origin", "*")
                self.end_headers()
        except BrokenPipeError:
            pass

server_address = ("localhost", 5555)
server = ThreadingHTTPServer(server_address, WaitForChangesHandler)
threading.Thread(target=server.serve_forever, daemon=True).start()

for changeset in watch_for_changed_files():
    rebuild_site(based_on=changeset)
    rebuild_event.set()
    rebuild_event.clear()

and here’s the JavaScript that gets embedded in the page:

async function waitForChanges() {
  while (true) {
    try {
      const response = await fetch('http://localhost:5555/wait-for-changes');

      if (response.status === 200) {
        window.location.reload();
        break;
      }
    } catch {
      await new Promise(resolve => setTimeout(resolve, 1000));
    }
  }
}

window.addEventListener("DOMContentLoaded", waitForChanges);

Combined with the previous post, whenever I make a change to a source file, the effect is reflected near-instantly in my web browser. Informal benchmarking shows there’s only about 150 milliseconds between saving a file in my text editor and my browser reloading with the changes, which is on par with the fastest human reaction times.

This makes working on the site feel incredibly smooth. As I’m working on complex layouts or editing a tricky sentence, I can save my work and see the changes. The rendered site looks different to monospaced code in my text editor, and I often spot new mistakes or issues that way.

For a long time I’d have reached for a third-party library to do this, and it’s pretty satisfying to have written my own. The whole thing is only 150 lines of code, and I understand exactly what it’s doing.


2026-05-11 14:41:24

When I’m working on this website, I want a local server with live reload. I want to be able to open the site in my web browser, make changes to the source files, and have my browser automatically refresh the page when the site is updated. I use this whenever I’m working on the site, and I find it helpful to see my writing in a different font/layout to my text editor; I spot lots of typos and mistakes that way.

When I was using Jekyll, I used the command jekyll serve --livereload.

Now I’ve written my own static site generator, I need to build my own version.

This was a fun challenge, because it touched a number of areas I’ve not worked in before – macOS filesystem events, non-blocking I/O, and HTTP long polling.

In this post I’ll explain how I detect changes to source files to trigger a rebuild; in my next post I’ll explain how that automatically refreshes any open pages in my browser. First we’re going to build a Swift script that detects changes using the FSEvents API, then we’ll get that information into a Python script.

There are several ways to detect changes to files on macOS; I’m going to use the File System Events API (also called “FSEvents” for short). This allows you to receive notifications about any changes to a directory tree, or files within it. One of the main purposes of this API is to allow backup software to detect incremental changes without continuously rescanning an entire tree, but we can use it for other things.

Apple has a File System Events Programming Guide which explains the FSEvents API in detail, and confirms it’s exactly what I need: “The file system events API is designed for passively monitoring a large tree of files for changes”. It mentions a couple of alternatives – kernel extensions for getting immediate notifications and pre-empting file changes, or kqueues for monitoring changes to a single file – but they’re not what I need, so I didn’t explore them further.

The guide is a little outdated, but the broad strokes are still correct. In Using the File System Events API, it explains the lifecycle of a file system events stream: create a stream, start listening, receive notifications, trigger a callback you provide, stop listening, release the stream.

Let’s start with a script that prints a static message whenever it sees a change. We don’t care which file it was yet; for now we just want to know when any file changed.

Here are the steps:

Create a file system events stream using FSEventStreamCreate.

This function takes a lot of arguments and you can’t use named arguments, so I found it helpful to define each argument as a variable, then pass those variables into the function.

I wrapped my FSEventStreamCreate call in another function:

import Foundation

/// Create a new file system events stream that watches for changes
/// in the given directories.
func createFSEventStream(_ pathsToWatch: [String]) -> FSEventStreamRef {
    let callback: FSEventStreamCallback = { (_, _, _, _, _, _) in
        print("Detected file change!")

        // Flush stdout to ensure it's printed immediately
        fflush(stdout)
    }

    let context: UnsafeMutablePointer<FSEventStreamContext>? = nil
    let sinceWhen = FSEventStreamEventId(kFSEventStreamEventIdSinceNow)
    let latency = 0.01
    let flags = FSEventStreamCreateFlags()

    guard let eventStream = FSEventStreamCreate(
        kCFAllocatorDefault, callback, context, pathsToWatch as CFArray, sinceWhen, latency, flags
    ) else {
        fatalError("Failed to create FSEventStream: check your paths or permissions.")
    }

    return eventStream
}

The callback function is an instance of FSEventStreamCallback, which will be called whenever a file changes.

The arguments contain information about the file which just changed.

For now we ignore all of that information, and just print a static message.

The context argument allows us to attach some context to the stream.

I’m not sure what it’s for – perhaps for applications that have multiple event streams, and need to distinguish between them in the callback?

I don’t think I need this, and the docs say I can pass NULL, so that’s what I’ve done.

The sinceWhen argument asks for events that happened after a given event ID.

I imagine this is useful for long-running applications like backup software – it means they can resume an event stream if the app is quit and relaunched, without rescanning the tree on every app launch.

I just need events from when the script started running, so I can use the kFSEventStreamEventIdSinceNow constant.

The latency argument is how long the OS will wait to coalesce rapid-fire events into a single event.

A shorter latency means you get notifications faster, but you’ll get more of them.

I’ll implement my own event coalescing later, so I set this quite low and accept a busier stream of events.

The flags modify the behaviour of the event stream.

We’re using the defaults for now; we’ll come back and add some more later.

Finally, we create the event stream by using FSEventStreamCreate.

This returns an Optional value which can be nil if the stream wasn’t created successfully; for example, if you try to watch a directory that doesn’t exist or which you don’t have permission to read.

Choose the folders you want to watch. For this initial script, we’ll use two folders that should be present on every Mac: the user’s Desktop and Documents folders.

let home = URL(fileURLWithPath: NSHomeDirectory())

let pathsToWatch = [
    home.appendingPathComponent("Desktop").path,
    home.appendingPathComponent("Documents").path
]

Schedule the event stream and start listening for changes.

The FS Events Guide tells you to use FSEventStreamScheduleWithRunLoop, but that function has been deprecated for several years.

The recommended replacement is FSEventStreamSetDispatchQueue:

let queue = DispatchQueue(label: "net.alexwlchan.watch_file_changes")
FSEventStreamSetDispatchQueue(eventStream, queue)
FSEventStreamStart(eventStream)

print("Listening for changes in \(pathsToWatch.joined(separator: ", "))")
dispatchMain()

Clean up the event stream when we’re done. In a simple script like mine that might not be necessary – the system probably cleans up an event stream if it’s not used for a while – but it’s good hygiene and ensures my Mac doesn’t start tracking dozens of redundant event streams.

First, here’s a function to call the FSEventStream methods that stop, invalidate, and release references to the stream:

/// Stop a file system events stream, invalidate it, and release our
/// reference to it.
func cleanupEventStream(_ eventStream: FSEventStreamRef) {
    FSEventStreamStop(eventStream)
    FSEventStreamInvalidate(eventStream)
    FSEventStreamRelease(eventStream)
}

Then, a function to create dispatch source objects that watch for a termination signal (SIGINT, SIGTERM, SIGHUP) and run our cleanup function.

We have to disable the default handlers, or they can terminate the script before we run our cleanup code:

/// Register cleanup handlers for SIGINT, SIGTERM and SIGHUP that
/// clean up the event stream when the script exits.
///
/// Returns an array of `DispatchSourceSignal`; the caller must hold
/// a reference to these in a global variable, or they will be cancelled.
func registerCleanup(_ eventStream: FSEventStreamRef) -> [DispatchSourceSignal] {
    let signals = [SIGINT, SIGTERM, SIGHUP]
    var sources: [DispatchSourceSignal] = []

    for sig in signals {
        let signalSource = DispatchSource.makeSignalSource(signal: sig, queue: .main)
        signalSource.setEventHandler {
            print("\nStopping listener...")
            cleanupEventStream(eventStream)
            exit(0)
        }

        signal(sig, SIG_IGN)
        signalSource.activate()
        sources.append(signalSource)
    }

    return sources
}

Finally, we call this function and hold a reference to the dispatch sources – if not, Swift will deallocate them as unused, and then our cleanup code won’t run.

let cleanup = registerCleanup(eventStream)

Here’s the complete script:

watch_for_changes.swift

#!/usr/bin/env swift
/// Watch for changed files in a directory, and print a message when
/// something changes.
///
/// Example:
///
/// $ swift watch_for_changes.swift
/// Listening for changes in /Users/alexwlchan/Desktop, /Users/alexwlchan/Documents
/// Detected file change!
/// Detected file change!
/// Detected file change!
///

import Foundation

/// Create a new file system events stream that watches for changes
/// in the given directories.
func createFSEventStream(_ pathsToWatch: [String]) -> FSEventStreamRef {
    let callback: FSEventStreamCallback = { (_, _, _, _, _, _) in
        print("Detected file change!")

        // Flush stdout to ensure it's printed immediately
        fflush(stdout)
    }

    let context: UnsafeMutablePointer<FSEventStreamContext>? = nil
    let sinceWhen = FSEventStreamEventId(kFSEventStreamEventIdSinceNow)
    let latency = 0.01
    let flags = FSEventStreamCreateFlags()

    guard let eventStream = FSEventStreamCreate(
        kCFAllocatorDefault, callback, context, pathsToWatch as CFArray, sinceWhen, latency, flags
    ) else {
        fatalError("Failed to create FSEventStream: check your paths or permissions.")
    }

    return eventStream
}

/// Stop a file system events stream, invalidate it, and release our
/// reference to it.
func cleanupEventStream(_ eventStream: FSEventStreamRef) {
    FSEventStreamStop(eventStream)
    FSEventStreamInvalidate(eventStream)
    FSEventStreamRelease(eventStream)
}

/// Register cleanup handlers for SIGINT, SIGTERM and SIGHUP that
/// clean up the event stream when the script exits.
///
/// Returns an array of `DispatchSourceSignal`; the caller must hold
/// a reference to these in a global variable, or they will be cancelled.
func registerCleanup(_ eventStream: FSEventStreamRef) -> [DispatchSourceSignal] {
    let signals = [SIGINT, SIGTERM, SIGHUP]
    var sources: [DispatchSourceSignal] = []

    for sig in signals {
        let signalSource = DispatchSource.makeSignalSource(signal: sig, queue: .main)
        signalSource.setEventHandler {
            print("\nStopping listener...")
            cleanupEventStream(eventStream)
            exit(0)
        }

        signal(sig, SIG_IGN)
        signalSource.activate()
        sources.append(signalSource)
    }

    return sources
}

// Choose which folders to watch.
let home = URL(fileURLWithPath: NSHomeDirectory())
let pathsToWatch = [
    home.appendingPathComponent("Desktop").path,
    home.appendingPathComponent("Documents").path
]

// Create the event stream.
let eventStream = createFSEventStream(pathsToWatch)

// Register cleanup handlers that will run when the script exits.
let cleanup = registerCleanup(eventStream)

// Schedule the event stream and start listening for changes.
let queue = DispatchQueue(label: "net.alexwlchan.watch_file_changes")
FSEventStreamSetDispatchQueue(eventStream, queue)
FSEventStreamStart(eventStream)

print("Listening for changes in \(pathsToWatch.joined(separator: ", "))")
dispatchMain()

When you run this script, you should see it print Detected file change! every time you change a file in your Desktop or Documents folder.

Stop the script with ^C.

$ swift watch_for_changes.swift
Listening for changes in /Users/alexwlchan/Desktop, /Users/alexwlchan/Documents
Detected file change!
Detected file change!
Detected file change!
^C
Stopping listener...

This alone is enough to know I should kick off a site rebuild, but a full rebuild takes 10–15s. If I know which file changed, I can do an incremental rebuild that would be much faster. Let’s tackle that next.

If we want to know which file changed, and not merely that a file changed, we need to customise the FSEventStreamCallback.

This callback takes six parameters, and the fourth parameter eventPaths is an array of paths where changes occurred.

The type is a bit gnarly: by default it’s a raw C array of raw C strings, or we can set the kFSEventStreamCreateFlagUseCFTypes flag to get a CFArrayRef of CFStringRef objects.

(Here CF stands for Core Foundation, one of Apple’s low-level frameworks.)
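To make “a raw C array of raw C strings” concrete: the callback gets a count as its third parameter (numEvents) and a pointer to the first of that many char * values. You then have to walk the array yourself. Here’s a rough Python analogy using ctypes that builds such an array by hand and converts it to a list of strings. It’s only an illustration of the data layout. FSEvents doesn’t hand anything to Python, and c_string_array_to_list is a hypothetical helper.

```python
import ctypes
import os


def c_string_array_to_list(paths_ptr, count: int) -> list[str]:
    """
    Convert a C `char **` holding `count` strings into a list of str.

    Indexing a POINTER(c_char_p) returns each element as bytes, and
    os.fsdecode decodes those bytes the way Python decodes file paths.
    """
    return [os.fsdecode(paths_ptr[i]) for i in range(count)]


# Build a C array of three C strings by hand, laid out like eventPaths
# would be when kFSEventStreamCreateFlagUseCFTypes is not set.
raw = (ctypes.c_char_p * 3)(
    b"/Users/alexwlchan/Desktop/greeting.txt",
    b"/Users/alexwlchan/Desktop/greeting.txt",
    b"/Users/alexwlchan/Documents/proposal.pdf",
)
paths_ptr = ctypes.cast(raw, ctypes.POINTER(ctypes.c_char_p))

# The callback would receive the count separately, as numEvents.
print(c_string_array_to_list(paths_ptr, 3))
```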

I started by setting the flag, and writing a function to convert the CFArrayRef into a vanilla Swift array:

let flags = FSEventStreamCreateFlags(kFSEventStreamCreateFlagUseCFTypes)

/// Convert a raw pointer from an FSEvent callback into an array of Swift strings.
///
/// FSEventStream must be created with 'kFSEventStreamCreateFlagUseCFTypes'
func convertFSEventPaths(_ eventPaths: UnsafeRawPointer) -> [String] {
    let cfArray = Unmanaged<CFArray>.fromOpaque(eventPaths)
    return cfArray.takeUnretainedValue() as! [String]
}

I can imagine that if you’re working in a very performance-sensitive application, you might skip this step and operate on the C types directly, but that’s not necessary for me.

Then I modified the callback to parse the event paths, and print them one-by-one:

let callback: FSEventStreamCallback = { (_, _, _, eventPaths, _, _) in
    for p in convertFSEventPaths(eventPaths) {
        print("Detected change in \(p)")
    }

    // Flush stdout to ensure it's printed immediately
    fflush(stdout)
}

If we add these modifications to our script, it now prints the folder in which a change occurred – for example, if I edit a file /Users/alexwlchan/Desktop/books/Reading List.txt, it prints the parent folder /Users/alexwlchan/Desktop/books/.

$ swift watch_for_changed_folders.swift
Listening for changes in /Users/alexwlchan/Desktop, /Users/alexwlchan/Documents
Detected change in /Users/alexwlchan/Desktop/
Detected change in /Users/alexwlchan/Desktop/books/
Detected change in /Users/alexwlchan/Documents/My notes/
^C
Stopping listener...

One thing I noticed is that a single operation can sometimes emit multiple filesystem events – for example, if I save a file in my text editor, that emits two events. I’m guessing that’s one event to write the contents of the file and another to update the metadata, but I’m not sure.
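Collapsing bursts like this is the idea behind the latency argument, and the event coalescing I said I’d handle myself. Here’s a minimal Python sketch of one way to do it, not the code from my site generator. It takes (timestamp, path) events in arrival order. It starts a new batch whenever the gap since the previous event is longer than window seconds. Within a batch, it drops duplicate paths while keeping first-seen order. The timestamps below are invented for illustration.

```python
from collections.abc import Iterable


def coalesce(events: Iterable[tuple[float, str]], window: float) -> list[list[str]]:
    """
    Group (timestamp, path) events into batches.

    A new batch starts whenever the gap since the previous event is longer
    than `window` seconds. Within a batch, duplicate paths are dropped but
    first-seen order is kept (dict keys preserve insertion order).
    """
    batches: list[dict[str, None]] = []
    last_time: float | None = None
    for timestamp, path in events:
        if last_time is None or timestamp - last_time > window:
            batches.append({})
        batches[-1][path] = None
        last_time = timestamp
    return [list(batch) for batch in batches]


events = [
    (0.000, "/Users/alexwlchan/Desktop/greeting.txt"),  # one save...
    (0.004, "/Users/alexwlchan/Desktop/greeting.txt"),  # ...two events
    (0.006, "/Users/alexwlchan/Desktop/.DS_Store"),
    (2.500, "/Users/alexwlchan/Documents/proposal.pdf"),
]
print(coalesce(events, window=0.1))
```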

Because I don’t need fine-grained resolution of filesystem events, I use a Set(…) to de-duplicate events:

let callback: FSEventStreamCallback = { (_, _, _, eventPaths, _, _) in
    for p in Set(convertFSEventPaths(eventPaths)) {
        print("Detected change in \(p)")
    }

    // Flush stdout to ensure it's printed immediately
    fflush(stdout)
}

If we’re interested in the individual files rather than the directories, we can use the kFSEventStreamCreateFlagFileEvents flag:

let flags = FSEventStreamCreateFlags(
    kFSEventStreamCreateFlagFileEvents | kFSEventStreamCreateFlagUseCFTypes
)

This shows us every file which is changing, and often reveals clues about how our computers work under the hood – for example, when I took a screenshot, you can see it was saved to a hidden file first (.Screenshot), before being moved into its final location.

$ swift watch_for_changed_files.swift
Listening for changes in /Users/alexwlchan/Desktop, /Users/alexwlchan/Documents
Detected change in /Users/alexwlchan/Desktop/greeting.txt
Detected change in /Users/alexwlchan/Desktop/greeting.txt
Detected change in /Users/alexwlchan/Desktop/.Screenshot 2026-05-09 at 10.45.42.png
Detected change in /Users/alexwlchan/Desktop/Screenshot 2026-05-09 at 10.45.42.png
Detected change in /Users/alexwlchan/Desktop/.DS_Store

Here’s the complete script:

watch_for_changed_files.swift

#!/usr/bin/env swift
/// Watch for changed files in a directory, and print the paths of
/// changed files.
///
/// Example:
///
/// $ swift watch_for_changed_files.swift
/// Listening for changes in /Users/alexwlchan/Desktop, /Users/alexwlchan/Documents
/// Detected change in /Users/alexwlchan/Desktop/greeting.txt
/// Detected change in /Users/alexwlchan/Desktop/booktracker/index.html
/// Detected change in /Users/alexwlchan/Documents/proposal.pdf
///

import Foundation

/// Convert a raw pointer from an FSEvent callback into an array of Swift strings.
///
/// FSEventStream must be created with 'kFSEventStreamCreateFlagUseCFTypes'
func convertFSEventPaths(_ eventPaths: UnsafeRawPointer) -> [String] {
    let cfArray = Unmanaged<CFArray>.fromOpaque(eventPaths)
    return cfArray.takeUnretainedValue() as! [String]
}

/// Create a new file system events stream that watches for changes
/// in the given directories.
func createFSEventStream(_ pathsToWatch: [String]) -> FSEventStreamRef {
    let callback: FSEventStreamCallback = { (_, _, _, eventPaths, _, _) in
        for p in Set(convertFSEventPaths(eventPaths)) {
            print("Detected change in \(p)")
        }
        fflush(stdout)
    }

    let context: UnsafeMutablePointer<FSEventStreamContext>? = nil
    let sinceWhen = FSEventStreamEventId(kFSEventStreamEventIdSinceNow)
    let latency = 0.01
    let flags = FSEventStreamCreateFlags(
        kFSEventStreamCreateFlagFileEvents | kFSEventStreamCreateFlagUseCFTypes
    )

    guard let eventStream = FSEventStreamCreate(
        kCFAllocatorDefault, callback, context, pathsToWatch as CFArray, sinceWhen, latency, flags
    ) else {
        fatalError("Failed to create FSEventStream: check your paths or permissions.")
    }

    return eventStream
}

/// Stop a file system events stream, invalidate it, and release our
/// reference to it.
func cleanupEventStream(_ eventStream: FSEventStreamRef) {
    FSEventStreamStop(eventStream)
    FSEventStreamInvalidate(eventStream)
    FSEventStreamRelease(eventStream)
}

/// Register cleanup handlers for SIGINT, SIGTERM and SIGHUP that
/// clean up the event stream when the script exits.
///
/// Returns an array of `DispatchSourceSignal`; the caller must hold
/// a reference to these in a global variable, or they will be cancelled.
func registerCleanup(_ eventStream: FSEventStreamRef) -> [DispatchSourceSignal] {
    let signals = [SIGINT, SIGTERM, SIGHUP]
    var sources: [DispatchSourceSignal] = []

    for sig in signals {
        let signalSource = DispatchSource.makeSignalSource(signal: sig, queue: .main)
        signalSource.setEventHandler {
            print("\nStopping listener...")
            cleanupEventStream(eventStream)
            exit(0)
        }

        signal(sig, SIG_IGN)
        signalSource.activate()
        sources.append(signalSource)
    }

    return sources
}

// Choose which folders to watch.
let home = URL(fileURLWithPath: NSHomeDirectory())
let pathsToWatch = [
    home.appendingPathComponent("Desktop").path,
    home.appendingPathComponent("Documents").path
]

// Create the event stream.
let eventStream = createFSEventStream(pathsToWatch)

// Register cleanup handlers that will run when the script exits.
let cleanup = registerCleanup(eventStream)

// Schedule the event stream and start listening for changes.
let queue = DispatchQueue(label: "net.alexwlchan.watch_file_changes")
FSEventStreamSetDispatchQueue(eventStream, queue)
FSEventStreamStart(eventStream)

print("Listening for changes in \(pathsToWatch.joined(separator: ", "))")
dispatchMain()

This is great if I’m writing a Swift app – but my static site generator is written in Python, so I’d really like to know about these changes in Python. How can I pass this information to a Python script?

I want my Python code to invoke the Swift script as a new process, specify what directories it wants to watch, and read lines from stdout to see which files changed.

This means I have to change the Swift script in three ways:

1. Read the directories to watch from its command-line arguments (defaulting to the current directory);
2. Send the Listening for changes and Stopping listener messages to stderr;
3. Change the Detected change message to print just the path of the changed file.

What’s nice is that there’s nothing Python-specific about this mechanism; you could use this to expose a stream of changed files in any language. (Although in practice I’ll only use it in Python, which I use for the majority of my recreational coding.)

I briefly considered trying to create some Python-Swift bridge, similar to what I did with clonefile() last year, but I couldn’t work out how to do it without bringing in more dependencies.

Plus, it would have been a Python-only solution.
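Because the contract is just “one path per line on stdout, everything else on stderr”, any program can play the Swift script’s role. Here’s a Python stand-in for the producer side, which is handy for testing a consumer without touching the filesystem. emit_changes and its status message are hypothetical. The two details that matter are keeping status messages off stdout, and flushing after every path.

```python
import io
import sys
from typing import TextIO


def emit_changes(paths: list[str], out: TextIO = sys.stdout,
                 err: TextIO = sys.stderr) -> None:
    """
    Act like the watcher script: a status message goes to `err`, and each
    changed path goes to `out` on its own line, flushed straight away.
    """
    err.write("Listening for changes...\n")
    # dict.fromkeys de-duplicates like Set(...) in the Swift callback,
    # but keeps the paths in first-seen order.
    for path in dict.fromkeys(paths):
        out.write(path + "\n")
        # Output to a pipe is usually block-buffered; flushing means the
        # reader sees each path immediately (like fflush(stdout) in Swift).
        out.flush()


out, err = io.StringIO(), io.StringIO()
emit_changes(["/tmp/a.txt", "/tmp/a.txt", "/tmp/b.txt"], out=out, err=err)
print(out.getvalue().splitlines())  # → ['/tmp/a.txt', '/tmp/b.txt']
```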

Here’s the updated Swift script:

watch_for_changed_files.swift [DIRS...]

#!/usr/bin/env swift
/// Watch for changed files in a directory, and print the paths of
/// changed files to stdout.
///
/// Example:
///
/// $ swift scripts/watch_for_changed_files.swift ~/Desktop/ ~/Documents/
/// Listening for changes in /Users/alexwlchan/Desktop/, /Users/alexwlchan/Documents/
/// /Users/alexwlchan/Desktop/greeting.txt
/// /Users/alexwlchan/Desktop/booktracker/index.html
/// /Users/alexwlchan/Documents/proposal.pdf
///

import Foundation

/// Convert a raw pointer from an FSEvent callback into an array of Swift strings.
///
/// FSEventStream must be created with 'kFSEventStreamCreateFlagUseCFTypes'
func convertFSEventPaths(_ eventPaths: UnsafeRawPointer) -> [String] {
    let cfArray = Unmanaged<CFArray>.fromOpaque(eventPaths)
    return cfArray.takeUnretainedValue() as! [String]
}

/// Create a new file system events stream that watches for changes
/// in the given directories.
func createFSEventStream(_ pathsToWatch: [String]) -> FSEventStreamRef {
    let callback: FSEventStreamCallback = { (_, _, _, eventPaths, _, _) in
        for p in Set(convertFSEventPaths(eventPaths)) {
            print(p)
        }
        fflush(stdout)
    }

    let context: UnsafeMutablePointer<FSEventStreamContext>? = nil
    let sinceWhen = FSEventStreamEventId(kFSEventStreamEventIdSinceNow)
    let latency = 0.01
    let flags = FSEventStreamCreateFlags(
        kFSEventStreamCreateFlagFileEvents | kFSEventStreamCreateFlagUseCFTypes
    )

    guard let eventStream = FSEventStreamCreate(
        kCFAllocatorDefault, callback, context, pathsToWatch as CFArray, sinceWhen, latency, flags
    ) else {
        fatalError("Failed to create FSEventStream: check your paths or permissions.")
    }

    return eventStream
}

/// Stop a file system events stream, invalidate it, and release our
/// reference to it.
func cleanupEventStream(_ eventStream: FSEventStreamRef) {
    FSEventStreamStop(eventStream)
    FSEventStreamInvalidate(eventStream)
    FSEventStreamRelease(eventStream)
}

/// Register cleanup handlers for SIGINT, SIGTERM and SIGHUP that
/// clean up the event stream when the script exits.
///
/// Returns an array of `DispatchSourceSignal`; the caller must hold
/// a reference to these in a global variable, or they will be cancelled.
func registerCleanup(_ eventStream: FSEventStreamRef) -> [DispatchSourceSignal] {
    let signals = [SIGINT, SIGTERM, SIGHUP]
    var sources: [DispatchSourceSignal] = []

    for sig in signals {
        let signalSource = DispatchSource.makeSignalSource(signal: sig, queue: .main)
        signalSource.setEventHandler {
            fputs("\nStopping listener...\n", stderr)
            cleanupEventStream(eventStream)
            exit(0)
        }

        signal(sig, SIG_IGN)
        signalSource.activate()
        sources.append(signalSource)
    }

    return sources
}

// Choose which folders to watch.
let pathsToWatch: [String]
if CommandLine.arguments.count > 1 {
    pathsToWatch = Array(CommandLine.arguments.dropFirst())
} else {
    pathsToWatch = ["."]
}

// Create the event stream.
let eventStream = createFSEventStream(pathsToWatch)

// Register cleanup handlers that will run when the script exits.
let cleanup = registerCleanup(eventStream)

// Schedule the event stream and start listening for changes.
let queue = DispatchQueue(label: "net.alexwlchan.watch_file_changes")
FSEventStreamSetDispatchQueue(eventStream, queue)
FSEventStreamStart(eventStream)

fputs("Listening for changes in \(pathsToWatch.joined(separator: ", "))\n", stderr)
dispatchMain()

And here’s the first Python function I came up with:

```python
from collections.abc import Iterator
from pathlib import Path
import subprocess


def watch_for_changed_files(*dirs: str | Path) -> Iterator[Path]:
    """
    Watch one or more directory trees for changes, and yield the paths of
    files when they change.
    """
    cmd = ["swift", "watch_for_changed_files.swift"] + [str(d) for d in dirs]
    proc = subprocess.Popen(cmd, stdout=subprocess.PIPE, text=True, bufsize=1)

    try:
        for line in proc.stdout:
            yield Path(line.strip())
    finally:
        proc.terminate()
        proc.wait()


for p in watch_for_changed_files("/Users/alexwlchan/Desktop"):
    print(p)
```

This function uses the subprocess module to start my Swift script in a new process, then it reads lines from proc.stdout and yields them to the caller.

The caller gets a stream of changed file paths, and doesn’t need to worry about the underlying process.

The function will keep iterating over proc.stdout while stdout stays open, which lasts as long as the Swift process is running.

It’s a long-running listener that only stops when I break out of the loop (whether with an explicit break, an exception, or stopping the whole Python script).

The text=True parameter means stdout will be opened in text mode rather than binary mode, and bufsize=1 requests line buffering, so each line can be read from proc.stdout as soon as the Swift script writes a newline.

(This pairs with fflush(stdout) in the Swift script to ensure there’s no buffering delay when I get a filesystem event.)

The try … finally construction ensures the process is stopped and cleaned up correctly when I’m done.
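To see the generator and its try … finally cleanup in action without the Swift script, here’s a minimal sketch that substitutes a hypothetical stand-in. The stand-in is a tiny Python child process that prints three fake paths, flushing after each one, and then sleeps. The name read_changed_paths and the fake paths are made up for illustration. Breaking out of the loop and closing the generator runs the finally block, which terminates the child.

```python
import subprocess
import sys
from collections.abc import Iterator
from pathlib import Path

# Hypothetical stand-in for the Swift watcher: print three fake paths,
# flushing after each one, then sleep as if waiting for more events.
FAKE_WATCHER = """
import time
for name in ["a.md", "b.md", "c.md"]:
    print(name, flush=True)
time.sleep(60)
"""


def read_changed_paths(proc: subprocess.Popen) -> Iterator[Path]:
    """Yield one path per line the child writes, then stop the child."""
    try:
        for line in proc.stdout:
            yield Path(line.strip())
    finally:
        # Runs when the generator is closed (e.g. after the caller breaks
        # out of the loop) or when an exception escapes the loop.
        proc.terminate()
        proc.wait()
        proc.stdout.close()


# The process is created outside the generator only so the example can
# inspect it afterwards; the function above starts it inside.
proc = subprocess.Popen(
    [sys.executable, "-c", FAKE_WATCHER],
    stdout=subprocess.PIPE,
    text=True,
    bufsize=1,
)

seen = []
changes = read_changed_paths(proc)
for path in changes:
    seen.append(path)
    if path.name == "b.md":
        break
changes.close()  # runs the finally block now, not at garbage collection

print(seen)             # the two paths read before breaking
print(proc.returncode)  # non-zero: the child was terminated
```

The explicit close() is needed here because a variable still references the generator after the loop. When you loop directly over the call, as in the example above, CPython closes the generator as soon as the loop exits and drops its last reference.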

In my live reload script, I can now do an incremental rebuild that only rebuilds parts of the site that have changed. If I’ve changed the base template? Rebuild the entire site. If I’ve edited an article? Only one page needs to change. This is more efficient and makes rebuilds much faster.

One problem with this function is that it doesn’t do debouncing. If I change a lot of files at once – say, a bulk find and replace – this function will emit every file separately, kicking off a bunch of redundant rebuilds. If I change ten files at once, I only need to do one rebuild, not ten.

What I’d like to do is coalesce all the changes that have happened since the last rebuild into a single event, then use them to inform the next rebuild.

You can do some of this coalescing in Swift by tweaking the latency parameter, but a fixed latency doesn’t work here because the time I need to wait is variable.

The length of a rebuild can vary from a hundred milliseconds to multiple seconds, depending on how much of the site is being rebuilt.

What I’d like to do is read everything that’s available in proc.stdout, emit that to the caller, then wait for something else to be written.
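The “take everything that’s ready, then wait again” pattern can be shown without pipes at all. This sketch uses a queue.Queue as a stand-in for proc.stdout; it’s an analogy, not what the real code does. It blocks for the first path, drains whatever else is already waiting without blocking, and returns the lot as one set. Five events touching three files become one changeset.

```python
import queue
from pathlib import Path


def next_changeset(events: queue.Queue[Path]) -> set[Path]:
    """Block until at least one path arrives, then drain the rest."""
    changeset = {events.get()}  # blocks until something is available
    while True:
        try:
            changeset.add(events.get_nowait())  # never blocks
        except queue.Empty:
            return changeset  # nothing else waiting: emit one changeset


events: queue.Queue[Path] = queue.Queue()

# Simulate a bulk find-and-replace that touches some files more than once.
for name in ["a.md", "b.md", "a.md", "c.md", "b.md"]:
    events.put(Path(name))

print(sorted(next_changeset(events)))  # one changeset, duplicates merged
```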

By default, reading from proc.stdout is a blocking operation – if we call read() and there’s nothing available, it waits until there’s something for us to read.

To debounce, we’ll need to change this behaviour.
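Here’s a small sketch of the difference. It uses a bare os.pipe() instead of a subprocess so it’s self-contained, and the name demo_nonblocking_read is made up. On a non-blocking file descriptor, os.read() on an empty pipe raises BlockingIOError immediately instead of waiting for data.

```python
import os


def demo_nonblocking_read() -> tuple[bool, bytes]:
    """Show that an empty non-blocking pipe raises instead of waiting."""
    read_fd, write_fd = os.pipe()
    try:
        # Without this call, os.read() on the empty pipe would wait forever.
        os.set_blocking(read_fd, False)

        try:
            os.read(read_fd, 1024)
            was_empty = False
        except BlockingIOError:
            was_empty = True  # nothing has been written yet

        os.write(write_fd, b"posts/hello.md\n")
        data = os.read(read_fd, 1024)  # data is ready, so this returns at once
        return was_empty, data
    finally:
        os.close(read_fd)
        os.close(write_fd)


print(demo_nonblocking_read())  # (True, b'posts/hello.md\n')
```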

First, we change proc.stdout to be non-blocking:

```python
import os

os.set_blocking(proc.stdout.fileno(), False)
```

Then, we need to know when anything has been written to stdout, so we can read all the available output and emit it to the caller.

We could poll proc.stdout repeatedly and look for non-empty output, but that would be very inefficient – a better approach would be to use the selectors module and get notified when something gets written.
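As a standalone illustration, this sketch registers the read end of an os.pipe() with a selector, standing in for proc.stdout. It passes a timeout to select() so the empty case returns an empty list rather than blocking forever. The code in this post calls select() with no timeout, which waits indefinitely.

```python
import os
import selectors


def demo_selector() -> tuple[int, int]:
    """Return how many ready events select() reports before and after a write."""
    read_fd, write_fd = os.pipe()
    sel = selectors.DefaultSelector()
    try:
        sel.register(read_fd, selectors.EVENT_READ)

        # Nothing written yet: select() waits up to 0.1s, then returns [].
        before = len(sel.select(timeout=0.1))

        os.write(write_fd, b"posts/hello.md\n")

        # Data is waiting, so select() returns immediately. Each event is a
        # (SelectorKey, mask) pair; key.fileobj is what was registered.
        events = sel.select(timeout=0.1)
        ready = [key.fileobj for key, mask in events if mask & selectors.EVENT_READ]
        return before, len(ready)
    finally:
        sel.close()
        os.close(read_fd)
        os.close(write_fd)


print(demo_selector())  # (0, 1)
```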

We create a selector that waits until there’s data waiting to be read from proc.stdout:

```python
import selectors

sel = selectors.DefaultSelector()
sel.register(proc.stdout, selectors.EVENT_READ)
```

Then, we call the select() method, which blocks until an event is ready.

At that point, we read everything that’s available in proc.stdout and deliver it as a single changeset to the caller.

For some use cases you might want to capture and inspect the event, but here it’s enough to know that an event was emitted, and start reading stdout:

```python
while True:
    sel.select()

    captured_paths = set()

    while True:
        line = proc.stdout.readline()
        if not line:
            break
        captured_paths.add(Path(line.strip()))

    yield captured_paths
```

This changes the signature of the overall function, because now we’re emitting changesets instead of single files. Here’s the updated function:

```python
from collections.abc import Iterator
import os
from pathlib import Path
import selectors
import subprocess


def watch_for_changed_files(*dirs: str | Path) -> Iterator[set[Path]]:
    """
    Watch one or more directory trees for changes, and yield the set of
    file paths that have changed since the previous yield.
    """
    cmd = ["swift", "scripts/watch_for_changed_files.swift"] + [str(d) for d in dirs]
    proc = subprocess.Popen(cmd, stdout=subprocess.PIPE, text=True, bufsize=1)

    # Make reads non-blocking, and get notified when there's data to read.
    os.set_blocking(proc.stdout.fileno(), False)

    sel = selectors.DefaultSelector()
    sel.register(proc.stdout, selectors.EVENT_READ)

    try:
        while True:
            # Wait until the Swift script has written something.
            sel.select()

            # Drain everything that's available into a single changeset.
            captured_paths = set()

            while True:
                line = proc.stdout.readline()
                if not line:
                    break
                captured_paths.add(Path(line.strip()))

            yield captured_paths
    finally:
        proc.terminate()
        proc.wait()
```

Using third-party libraries. Initially I was using the python-livereload library, but I wanted to replace it with my own implementation – partly to remove a dependency, partly to understand how this functionality works. There are other Python libraries that offer filesystem watching, including fswatch, inotify, and watchdog, but I didn’t want to use them for similar reasons.

I have an advantage over these library authors – while they aim to support cross-platform filesystem watching, I only have to get it working on macOS. Specifically, the exact versions of macOS that my Macs are running, and no others. This means I can write a smaller, more focused bit of code.

Polling the source files. This is easy to write, but I have enough source files that it’s surprisingly slow – about 90ms to scan 13,000 source files, and I’m worried about the effect on power consumption and the lifespan of my SSD if I polled in a hot loop. For comparison, my final code only takes 2–4ms to detect a change and trigger a new build, and it’s very judicious about CPU cycles and disk reads.
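For comparison, a polling approach might look something like this sketch: take a snapshot of every file’s modification time, take another, and diff them. It illustrates the technique rather than reproducing the code behind the 90ms figure. The point is that every scan has to stat every file, even when nothing has changed.

```python
import os
import tempfile
from pathlib import Path


def snapshot(root: Path) -> dict[Path, int]:
    """Map every file under root to its modification time in nanoseconds."""
    mtimes: dict[Path, int] = {}
    for dirpath, _dirnames, filenames in os.walk(root):
        for name in filenames:
            path = Path(dirpath) / name
            mtimes[path] = path.stat().st_mtime_ns  # one stat() per file, every scan
    return mtimes


def changed_paths(old: dict[Path, int], new: dict[Path, int]) -> set[Path]:
    """Files that were added, modified, or deleted between two snapshots."""
    added_or_modified = {p for p, mtime in new.items() if old.get(p) != mtime}
    deleted = old.keys() - new.keys()
    return added_or_modified | deleted


with tempfile.TemporaryDirectory() as tmp:
    root = Path(tmp)
    (root / "a.md").write_text("hello")
    before = snapshot(root)

    (root / "b.md").write_text("a new file")
    (root / "a.md").unlink()
    changes = changed_paths(before, snapshot(root))

print(sorted(p.name for p in changes))  # ['a.md', 'b.md']
```

A watcher built on this would call snapshot() in a loop with a sleep between scans. The shorter the sleep, the more disk reads and CPU time it burns.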

Here’s a diagram which illustrates the code we’ve written: the FSEvents API emits an event to our Swift script, which prints the file paths to stdout, where they get read by a Python script that kicks off a site rebuild.

In informal benchmarking, there’s about 2–4 milliseconds between the on-disk modified time of a file and it being picked up by this function. Given file changes only occur when I do something, this is plenty fast enough. (I could click the “save” button as fast as I could, and the code would still have time for a long nap between consecutive clicks.)

Both the Swift and the Python code pause until something interesting happens, so this is very efficient – no aggressive polling that could hurt my battery life or SSD longevity.

Before I started this script, the only way I knew how to track file changes was by polling, which is undesirable for a number of reasons. I wasn’t sure if I could write an alternative, but now it’s done, I’m proud of the result.

I learnt a lot about topics I only vaguely understood before, including the macOS FSEvents API, how blocking and non-blocking I/O works in Python, and using the selectors module.

Explaining it all for this article has cemented that learning, and I understand every line of this code.

I’m pleased I can do this without adding third-party dependencies, especially for something as low-level as filesystem access. Even if I eventually replace this code with a library, I’ll have a better mental model of how it works.

I’m surprised by how much this has improved my workflow. I was waiting 5 to 10 seconds with Jekyll; now, my browser reloads almost instantly with new changes. Everything feels a lot smoother, and it’s renewed my interest in working on the site.

In my next post, I’ll explain how I combine this watcher with HTTP long polling to trigger an automatic browser refresh the moment the rebuild finishes.


2026-05-02 14:08:53

I build a lot of static websites – including this site and all of my local media archives – and I want to test them. Most of my pages are static HTML and I can write automated tests that analyse the HTML, but for more complex sites I have JavaScript that runs in the browser and modifies the page. The only way to test that functionality is to open the page in a browser, click around, and see what happens. I could do that manually, but it quickly gets tedious.

To automate this process, I’ve been using a testing framework called Playwright, which is designed for this sort of end-to-end testing. It’s a tool that allows you to programmatically control a web browser, look at the contents of a page, and make assertions about what’s there. Playwright can be used to test or script any kind of web app; I’m using it for static sites because those are the only web apps I have.

Playwright is available as a CLI, or there are libraries to use it with TypeScript, Python, .NET, and Java. All my other tests are written in Python, so that’s what I’m using.

To set up Playwright with Python, you install the playwright library using pip or uv, then install a web browser for Playwright to control.

(You generally can’t use Playwright with the browser you use day-to-day; it needs its own browser builds with extra control hooks.)

I use Safari as my main browser, and Safari is based on WebKit, so let’s install that:

```console
$ uv pip install playwright
$ python3 -m playwright install webkit
```

Then we can start writing tests.

Here’s a basic test in which Playwright launches WebKit, opens example.com, and checks that the text “Example domain” is visible on the page:

from playwright.sync_api import expect, sync_playwright


def test_basic_playwright() -> None:
    """
    Run a basic test with Playwright: load a web page and check it
    contains the expected text.
    """